modelId (string, lengths 5 to 139) | author (string, lengths 2 to 42) | last_modified (timestamp[us, tz=UTC], 2020-02-15 11:33:14 to 2025-07-14 06:27:53) | downloads (int64, 0 to 223M) | likes (int64, 0 to 11.7k) | library_name (string, 519 classes) | tags (list, lengths 1 to 4.05k) | pipeline_tag (string, 55 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2025-07-14 06:27:45) | card (string, lengths 11 to 1.01M) |

|---|---|---|---|---|---|---|---|---|---|

teven/bi_all_bs192_hardneg_finetuned_WebNLG2020_data_coverage | teven | 2022-09-21T15:50:15Z | 3 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-09-21T15:50:08Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

---

# teven/bi_all_bs192_hardneg_finetuned_WebNLG2020_data_coverage

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('teven/bi_all_bs192_hardneg_finetuned_WebNLG2020_data_coverage')

embeddings = model.encode(sentences)

print(embeddings)

```
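For semantic search, embeddings such as those returned by `model.encode` are commonly compared with cosine similarity. The following is a minimal sketch of that ranking step. It uses small made-up vectors in place of real 768-dimensional embeddings, and the helper names `cosine_similarity` and `rank_by_cosine` are illustrative assumptions, not part of the library.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank_by_cosine(query, corpus):
    """Return corpus indices ordered from most to least similar to the query."""
    scores = [cosine_similarity(query, doc) for doc in corpus]
    return sorted(range(len(corpus)), key=lambda i: scores[i], reverse=True)


# Toy 3-dimensional stand-ins for real sentence embeddings (hypothetical values).
query_embedding = [1.0, 0.0, 0.0]
corpus_embeddings = [
    [0.0, 1.0, 0.0],   # orthogonal to the query
    [0.9, 0.1, 0.0],   # points almost the same way as the query
    [-1.0, 0.0, 0.0],  # points the opposite way
]

ranking = rank_by_cosine(query_embedding, corpus_embeddings)
print(ranking)
```

With real data, the rows of `embeddings` would take the place of the toy vectors.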

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=teven/bi_all_bs192_hardneg_finetuned_WebNLG2020_data_coverage)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 161 with parameters:

```

{'batch_size': 16, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`
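As a rough illustration of what this loss optimizes: in its default configuration, `CosineSimilarityLoss` compares the cosine similarity of the two sentence embeddings in a pair with a gold similarity score, using mean squared error. The sketch below reproduces that arithmetic in plain Python on made-up vectors. The function names and the toy data are assumptions for illustration, not the library's implementation.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def cosine_similarity_loss(pairs, labels):
    """Mean squared error between predicted cosine similarity and gold score."""
    errors = [
        (cosine_similarity(u, v) - gold) ** 2
        for (u, v), gold in zip(pairs, labels)
    ]
    return sum(errors) / len(errors)


# Two toy embedding pairs with hypothetical gold similarity scores.
pairs = [
    ([1.0, 0.0], [1.0, 0.0]),  # identical direction, cosine = 1
    ([1.0, 0.0], [0.0, 1.0]),  # orthogonal, cosine = 0
]
labels = [1.0, 0.5]

loss = cosine_similarity_loss(pairs, labels)
print(loss)
```

The first pair contributes no error and the second contributes (0 - 0.5)^2, so the mean over the two pairs is 0.125.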

Parameters of the fit()-Method:

```

{

"epochs": 50,

"evaluation_steps": 0,

"evaluator": "better_cross_encoder.PearsonCorrelationEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'transformers.optimization.AdamW'>",

"optimizer_params": {

"lr": 5e-05

},

"scheduler": "warmupcosine",

"steps_per_epoch": null,

"warmup_steps": 805,

"weight_decay": 0.01

}

```
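The numbers above fit together: 161 batches per epoch over 50 epochs gives 8050 optimizer steps, and `warmup_steps` of 805 is exactly 10% of that. The sketch below shows the usual shape of a warmup-then-cosine schedule, assuming that `warmupcosine` means linear warmup followed by a half-cosine decay to zero. The function `warmup_cosine_multiplier` is a hypothetical helper written for this illustration, not a library call.

```python
import math


def warmup_cosine_multiplier(step, warmup_steps, total_steps):
    """Learning-rate multiplier: linear warmup, then half-cosine decay to zero."""
    if step < warmup_steps:
        return step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


# Values taken from the card above.
dataloader_length = 161  # batches per epoch
epochs = 50
warmup_steps = 805
base_lr = 5e-05

# Assumption: one optimizer step per batch, no gradient accumulation.
total_steps = dataloader_length * epochs

for step in (0, 402, 805, 4000, 8050):
    lr = base_lr * warmup_cosine_multiplier(step, warmup_steps, total_steps)
    print(step, lr)
```

Under these assumptions the learning rate starts at zero, reaches its peak of 5e-05 at step 805, and returns to zero at step 8050.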

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 384, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Normalize()

)

```
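The final `Normalize()` module suggests that the output embeddings are scaled to unit length, in which case a plain dot product between two embeddings equals their cosine similarity. A minimal sketch of that property on toy two-dimensional vectors follows. The helper names are assumptions for illustration only.

```python
import math


def l2_normalize(vector):
    """Scale a vector so that its Euclidean length is 1."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def dot(u, v):
    """Plain dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


# Toy vectors standing in for pooled token embeddings (hypothetical values).
u = l2_normalize([3.0, 4.0])  # length 5 before normalization
v = l2_normalize([4.0, 3.0])

# For unit-length vectors the dot product is the cosine similarity.
similarity = dot(u, v)
print(similarity)
```

This is why normalized embeddings can be searched with fast dot-product indexes without a separate cosine computation.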

## Citing & Authors

<!--- Describe where people can find more information --> |

teven/bi_all_bs320_vanilla_finetuned_WebNLG2020_data_coverage | teven | 2022-09-21T15:49:40Z | 3 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-09-21T15:49:33Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

---

# teven/bi_all_bs320_vanilla_finetuned_WebNLG2020_data_coverage

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('teven/bi_all_bs320_vanilla_finetuned_WebNLG2020_data_coverage')

embeddings = model.encode(sentences)

print(embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=teven/bi_all_bs320_vanilla_finetuned_WebNLG2020_data_coverage)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 41 with parameters:

```

{'batch_size': 64, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Parameters of the fit()-Method:

```

{

"epochs": 50,

"evaluation_steps": 0,

"evaluator": "better_cross_encoder.PearsonCorrelationEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'transformers.optimization.AdamW'>",

"optimizer_params": {

"lr": 0.0001

},

"scheduler": "warmupcosine",

"steps_per_epoch": null,

"warmup_steps": 205,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 384, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Normalize()

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

tianchez/autotrain-line_clip_no_nut_boltline_clip_no_nut_bolt-1523955096 | tianchez | 2022-09-21T15:49:25Z | 196 | 0 | transformers | [

"transformers",

"pytorch",

"autotrain",

"vision",

"image-classification",

"dataset:tianchez/autotrain-data-line_clip_no_nut_boltline_clip_no_nut_bolt",

"co2_eq_emissions",

"endpoints_compatible",

"region:us"

]

| image-classification | 2022-09-21T15:42:51Z | ---

tags:

- autotrain

- vision

- image-classification

datasets:

- tianchez/autotrain-data-line_clip_no_nut_boltline_clip_no_nut_bolt

widget:
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/tiger.jpg
  example_title: Tiger
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/teapot.jpg
  example_title: Teapot
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/palace.jpg
  example_title: Palace
co2_eq_emissions:
  emissions: 10.423410288264847

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 1523955096

- CO2 Emissions (in grams): 10.4234

## Validation Metrics

- Loss: 0.580

- Accuracy: 0.798

- Macro F1: 0.542

- Micro F1: 0.798

- Weighted F1: 0.796

- Macro Precision: 0.548

- Micro Precision: 0.798

- Weighted Precision: 0.796

- Macro Recall: 0.537

- Micro Recall: 0.798

- Weighted Recall: 0.798 |

teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_data_coverage | teven | 2022-09-21T15:49:04Z | 3 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-09-21T15:48:57Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

---

# teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_data_coverage

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_data_coverage')

embeddings = model.encode(sentences)

print(embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_data_coverage)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 41 with parameters:

```

{'batch_size': 64, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Parameters of the fit()-Method:

```

{

"epochs": 50,

"evaluation_steps": 0,

"evaluator": "better_cross_encoder.PearsonCorrelationEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'transformers.optimization.AdamW'>",

"optimizer_params": {

"lr": 0.0002

},

"scheduler": "warmupcosine",

"steps_per_epoch": null,

"warmup_steps": 205,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 384, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Normalize()

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_relevance | teven | 2022-09-21T15:44:37Z | 3 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-09-21T15:44:30Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

---

# teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_relevance

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_relevance')

embeddings = model.encode(sentences)

print(embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_relevance)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 161 with parameters:

```

{'batch_size': 16, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Parameters of the fit()-Method:

```

{

"epochs": 50,

"evaluation_steps": 0,

"evaluator": "better_cross_encoder.PearsonCorrelationEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'transformers.optimization.AdamW'>",

"optimizer_params": {

"lr": 0.0005

},

"scheduler": "warmupcosine",

"steps_per_epoch": null,

"warmup_steps": 805,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 384, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Normalize()

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

teven/cross_all_bs320_vanilla_finetuned_WebNLG2020_correctness | teven | 2022-09-21T15:42:49Z | 4 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"transformers",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-09-21T15:42:41Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# teven/cross_all_bs320_vanilla_finetuned_WebNLG2020_correctness

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('teven/cross_all_bs320_vanilla_finetuned_WebNLG2020_correctness')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: first, pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

# Mean Pooling - Take attention mask into account for correct averaging
def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)


# Sentences we want sentence embeddings for
sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub
tokenizer = AutoTokenizer.from_pretrained('teven/cross_all_bs320_vanilla_finetuned_WebNLG2020_correctness')
model = AutoModel.from_pretrained('teven/cross_all_bs320_vanilla_finetuned_WebNLG2020_correctness')

# Tokenize sentences
encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings
with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.
sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")
print(sentence_embeddings)

```
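To see why the attention mask matters in the pooling step, the sketch below repeats the same averaging in plain Python for a single sentence with one padding position. The function `masked_mean_pooling` and the toy token vectors are assumptions made for illustration. The real code operates on batched tensors.

```python
def masked_mean_pooling(token_embeddings, attention_mask):
    """Average token vectors, counting only positions where the mask is 1."""
    dim = len(token_embeddings[0])
    summed = [0.0] * dim
    count = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            count += 1
            for i, value in enumerate(vector):
                summed[i] += value
    count = max(count, 1e-9)  # guard against division by zero, like the clamp above
    return [s / count for s in summed]


# Two real tokens followed by one padding position (hypothetical values).
tokens = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
mask = [1, 1, 0]

pooled = masked_mean_pooling(tokens, mask)
naive = [sum(column) / len(tokens) for column in zip(*tokens)]

print(pooled)  # average over the two real tokens only
print(naive)   # average that wrongly includes the padding vector
```

The masked average ignores the padding vector, while the naive average is pulled far away from the real tokens.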

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=teven/cross_all_bs320_vanilla_finetuned_WebNLG2020_correctness)

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

teven/cross_all_bs160_allneg_finetuned_WebNLG2020_correctness | teven | 2022-09-21T15:41:45Z | 3 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"transformers",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-09-21T15:41:37Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# teven/cross_all_bs160_allneg_finetuned_WebNLG2020_correctness

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('teven/cross_all_bs160_allneg_finetuned_WebNLG2020_correctness')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: first, pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

# Mean Pooling - Take attention mask into account for correct averaging
def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)


# Sentences we want sentence embeddings for
sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub
tokenizer = AutoTokenizer.from_pretrained('teven/cross_all_bs160_allneg_finetuned_WebNLG2020_correctness')
model = AutoModel.from_pretrained('teven/cross_all_bs160_allneg_finetuned_WebNLG2020_correctness')

# Tokenize sentences
encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings
with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.
sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")
print(sentence_embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=teven/cross_all_bs160_allneg_finetuned_WebNLG2020_correctness)

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

teven/bi_all_bs320_vanilla_finetuned_WebNLG2020_correctness | teven | 2022-09-21T15:40:30Z | 4 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-09-21T15:40:23Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

---

# teven/bi_all_bs320_vanilla_finetuned_WebNLG2020_correctness

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('teven/bi_all_bs320_vanilla_finetuned_WebNLG2020_correctness')

embeddings = model.encode(sentences)

print(embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=teven/bi_all_bs320_vanilla_finetuned_WebNLG2020_correctness)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 321 with parameters:

```

{'batch_size': 8, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```
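The card does not state the size of the training set, but the DataLoader length and batch size bound it. The sketch below assumes `drop_last=False` (the PyTorch default), so that the number of batches is the example count divided by the batch size, rounded up. Both helper functions are hypothetical and written only for this illustration.

```python
import math


def dataloader_length(num_examples, batch_size, drop_last=False):
    """Number of batches a DataLoader yields for a dataset of the given size."""
    if drop_last:
        return num_examples // batch_size
    return math.ceil(num_examples / batch_size)


def example_count_range(length, batch_size):
    """Smallest and largest dataset sizes that give `length` batches (drop_last=False)."""
    return (length - 1) * batch_size + 1, length * batch_size


# Values from the card above: 321 batches of size 8.
low, high = example_count_range(321, 8)
print(low, high)
```

Under that assumption, a length of 321 at batch size 8 corresponds to between 2561 and 2568 training examples.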

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Parameters of the fit()-Method:

```

{

"epochs": 50,

"evaluation_steps": 0,

"evaluator": "better_cross_encoder.PearsonCorrelationEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'transformers.optimization.AdamW'>",

"optimizer_params": {

"lr": 5e-05

},

"scheduler": "warmupcosine",

"steps_per_epoch": null,

"warmup_steps": 1605,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 384, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Normalize()

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_correctness | teven | 2022-09-21T15:37:39Z | 3 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-09-21T15:37:31Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

---

# teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_correctness

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_correctness')

embeddings = model.encode(sentences)

print(embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=teven/bi_all-mpnet-base-v2_finetuned_WebNLG2020_correctness)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 41 with parameters:

```

{'batch_size': 64, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Parameters of the fit()-Method:

```

{

"epochs": 50,

"evaluation_steps": 0,

"evaluator": "better_cross_encoder.PearsonCorrelationEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'transformers.optimization.AdamW'>",

"optimizer_params": {

"lr": 0.0002

},

"scheduler": "warmupcosine",

"steps_per_epoch": null,

"warmup_steps": 205,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 384, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Normalize()

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

teven/bi_all_bs160_allneg_finetuned_WebNLG2020_correctness | teven | 2022-09-21T15:37:00Z | 3 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-09-21T15:36:53Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

---

# teven/bi_all_bs160_allneg_finetuned_WebNLG2020_correctness

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('teven/bi_all_bs160_allneg_finetuned_WebNLG2020_correctness')

embeddings = model.encode(sentences)

print(embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=teven/bi_all_bs160_allneg_finetuned_WebNLG2020_correctness)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 81 with parameters:

```

{'batch_size': 32, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Parameters of the fit()-Method:

```

{

"epochs": 50,

"evaluation_steps": 0,

"evaluator": "better_cross_encoder.PearsonCorrelationEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'transformers.optimization.AdamW'>",

"optimizer_params": {

"lr": 0.0002

},

"scheduler": "warmupcosine",

"steps_per_epoch": null,

"warmup_steps": 405,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 384, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Normalize()

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

sd-concepts-library/kogatan-shiny | sd-concepts-library | 2022-09-21T15:11:22Z | 0 | 3 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T15:11:16Z | ---

license: mit

---

### kogatan_shiny on Stable Diffusion

This is the `kogatan` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

sd-concepts-library/jojo-bizzare-adventure-manga-lineart | sd-concepts-library | 2022-09-21T15:03:39Z | 0 | 1 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T15:03:33Z | ---

license: mit

---

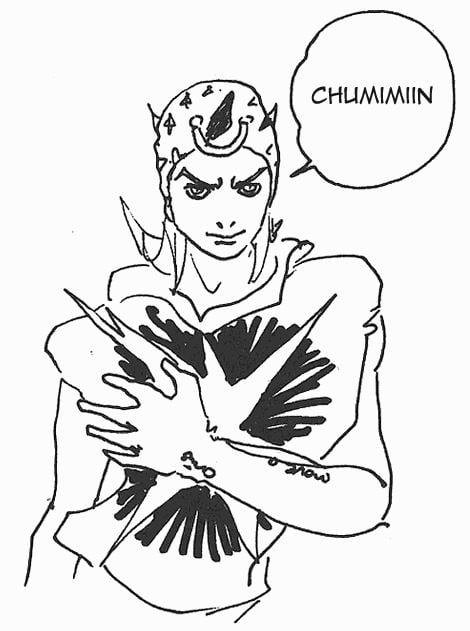

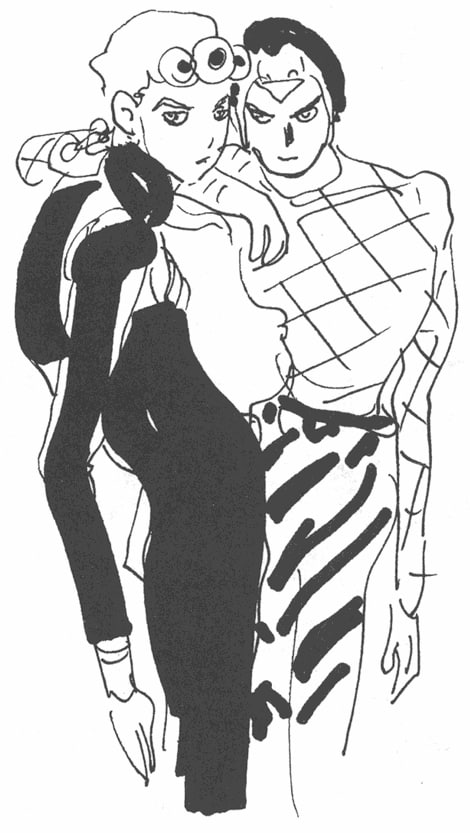

### JoJo Bizarre Adventure manga lineart on Stable Diffusion

This is the `<JoJo_lineart>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

csdeptsju/distilbert-base-uncased-finetuned-emotion | csdeptsju | 2022-09-21T15:00:30Z | 108 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2022-09-21T14:25:43Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

- f1

model-index:
- name: distilbert-base-uncased-finetuned-emotion
  results:
  - task:
      name: Text Classification
      type: text-classification
    dataset:
      name: emotion
      type: emotion
      config: default
      split: train
      args: default
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.918
    - name: F1
      type: f1
      value: 0.9179414471754404

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2255

- Accuracy: 0.918

- F1: 0.9179

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2
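To make the `linear` scheduler concrete, the sketch below computes the learning rate at a few steps. It takes the 250 steps per epoch reported in this card's training results table and assumes no warmup, since the card lists no warmup setting. The function `linear_lr` is a hypothetical helper that mirrors the usual linear-decay rule, not the Trainer's own code.

```python
def linear_lr(step, base_lr, total_steps, warmup_steps=0):
    """Linear warmup (optional) followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


base_lr = 2e-05        # learning_rate from the list above
steps_per_epoch = 250  # from the training results table in this card
num_epochs = 2
total_steps = steps_per_epoch * num_epochs

schedule = {step: linear_lr(step, base_lr, total_steps) for step in (0, 250, 500)}
for step, lr in schedule.items():
    print(step, lr)
```

Under these assumptions the rate has fallen to half its starting value at the end of the first epoch and reaches zero at step 500.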

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.8539 | 1.0 | 250 | 0.3348 | 0.896 | 0.8916 |

| 0.2589 | 2.0 | 500 | 0.2255 | 0.918 | 0.9179 |

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.5.0

- Tokenizers 0.12.1

|

sd-concepts-library/phan-s-collage | sd-concepts-library | 2022-09-21T14:44:10Z | 0 | 1 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T14:44:04Z | ---

license: mit

---

### Phan's Collage on Stable Diffusion

This is the `<pcollage>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

rugo/xlm-roberta-base-finetuned | rugo | 2022-09-21T14:07:10Z | 105 | 0 | transformers | [

"transformers",

"pytorch",

"xlm-roberta",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| fill-mask | 2022-09-21T13:43:38Z | xlm-roberta-base-finetuned

This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on a legal documents dataset. |

RandomLegend/Cyberpunk-Lucy | RandomLegend | 2022-09-21T14:03:17Z | 0 | 0 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T13:51:48Z | ---

license: mit

---

Cyberpunk-Lucy on Stable Diffusion

This is the <cyberpunk-lucy> concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the Stable

Conceptualizer notebook. You can also train your own concepts and load them into the concept libraries using this notebook.

Here is the new concept you will be able to use as an object: cyberpunk-lucy

Training Images: |

sd-concepts-library/david-martinez-cyberpunk | sd-concepts-library | 2022-09-21T14:03:07Z | 0 | 2 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T14:02:55Z | ---

license: mit

---

### david martinez cyberpunk on Stable Diffusion

This is the `<david-martinez-cyberpunk>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

Wanjiru/autotrain_gro_ner | Wanjiru | 2022-09-21T13:54:32Z | 106 | 1 | transformers | [

"transformers",

"pytorch",

"bert",

"token-classification",

"sequence-tagger-model",

"en",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| token-classification | 2022-07-18T12:54:37Z | ---

tags:

- bert

- token-classification

- sequence-tagger-model

language: en

widget:

- text: "Total exports of maize"

---

## Token Classification

Classifies Gro's items and metrics

| **tag** | **token** |

|---------------------------------|-----------|

|B-ITEM | BEGINNING ITEM|

|I-ITEM | INSIDE ITEM|

|B-METRIC |BEGINNING METRIC |

|I-METRIC | INSIDE METRIC|

|O | OUTSIDE |
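
As a rough illustration of how the BIO tags in this table combine into item and metric spans, here is a minimal sketch. The tag sequence in the example is hypothetical and is not actual output from this model; `bio_to_spans` is a helper name introduced only for this illustration.

```python
def bio_to_spans(tokens, tags):
    """Group BIO-tagged tokens into (label, text) spans."""
    spans = []
    current_label, current_tokens = None, []
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            # A B- tag always closes any open span and starts a new one.
            if current_tokens:
                spans.append((current_label, " ".join(current_tokens)))
            current_label, current_tokens = tag[2:], [token]
        elif tag.startswith("I-") and current_label == tag[2:]:
            # An I- tag extends the open span of the same type.
            current_tokens.append(token)
        else:
            # "O", or an I- tag that does not continue the open span.
            if current_tokens:
                spans.append((current_label, " ".join(current_tokens)))
            current_label, current_tokens = None, []
    if current_tokens:
        spans.append((current_label, " ".join(current_tokens)))
    return spans


tokens = ["Total", "exports", "of", "maize"]
tags = ["B-METRIC", "I-METRIC", "O", "B-ITEM"]  # hypothetical tags, not model output
spans = bio_to_spans(tokens, tags)
print(spans)
```

This sketch drops a stray `I-` tag that has no matching open span; a stricter decoder could instead treat it as the start of a new span.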

---

### Usage: Script to run this model

The following Transformers script loads the model and runs it as a token-classification (NER) pipeline:

```python

from transformers import AutoModelForTokenClassification, AutoTokenizer, pipeline

tokenizer = AutoTokenizer.from_pretrained("Wanjiru/autotrain_gro_ner")

model = AutoModelForTokenClassification.from_pretrained("Wanjiru/autotrain_gro_ner")

nlp = pipeline("ner", model=model, tokenizer=tokenizer)

example = "Wanjru"

ner_res = nlp(example)

```

---

|

sd-concepts-library/cyberpunk-lucy | sd-concepts-library | 2022-09-21T13:37:50Z | 0 | 9 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T13:37:44Z | ---

license: mit

---

### Cyberpunk-Lucy on Stable Diffusion

This is the `<cyberpunk-lucy>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

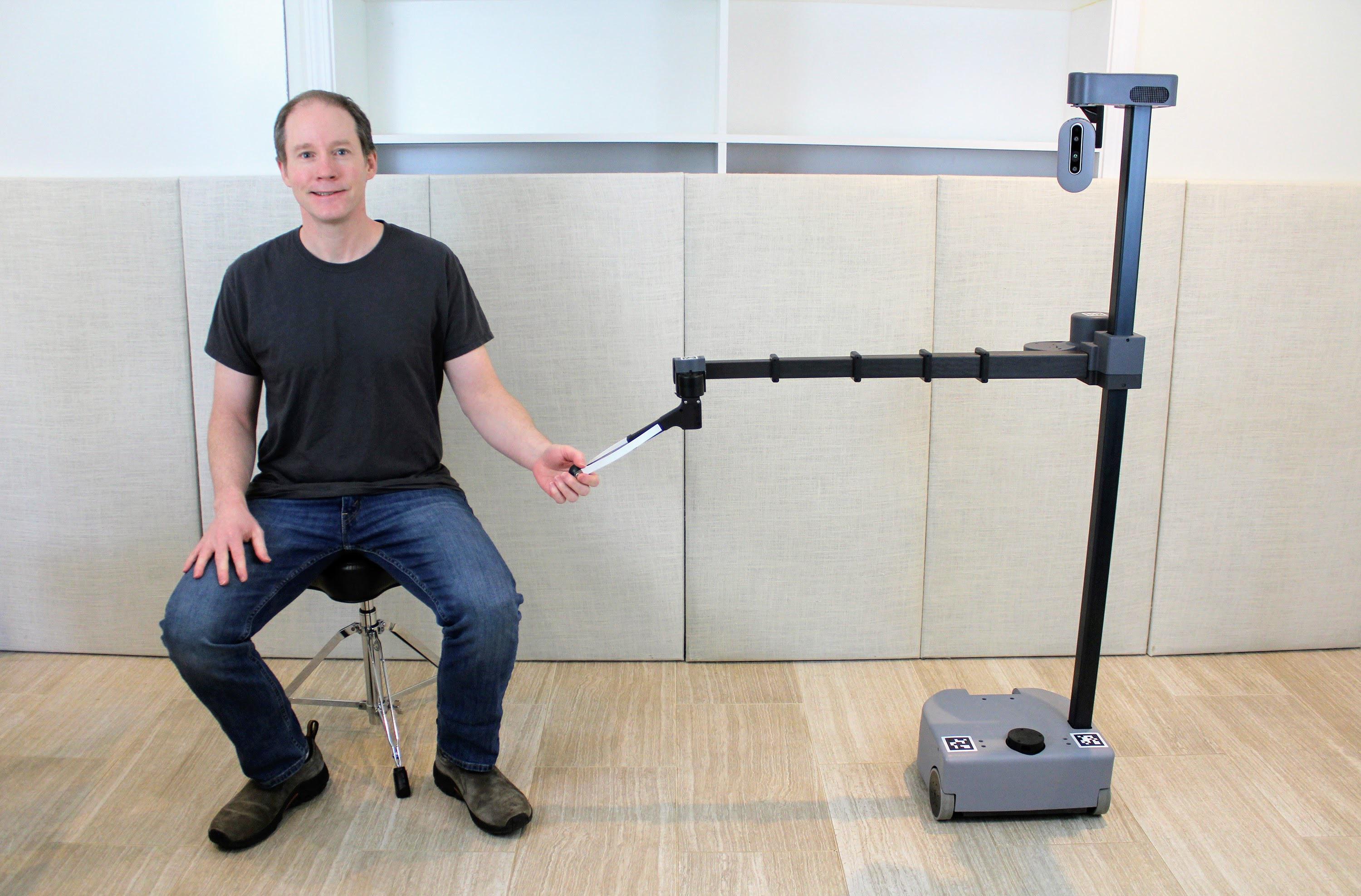

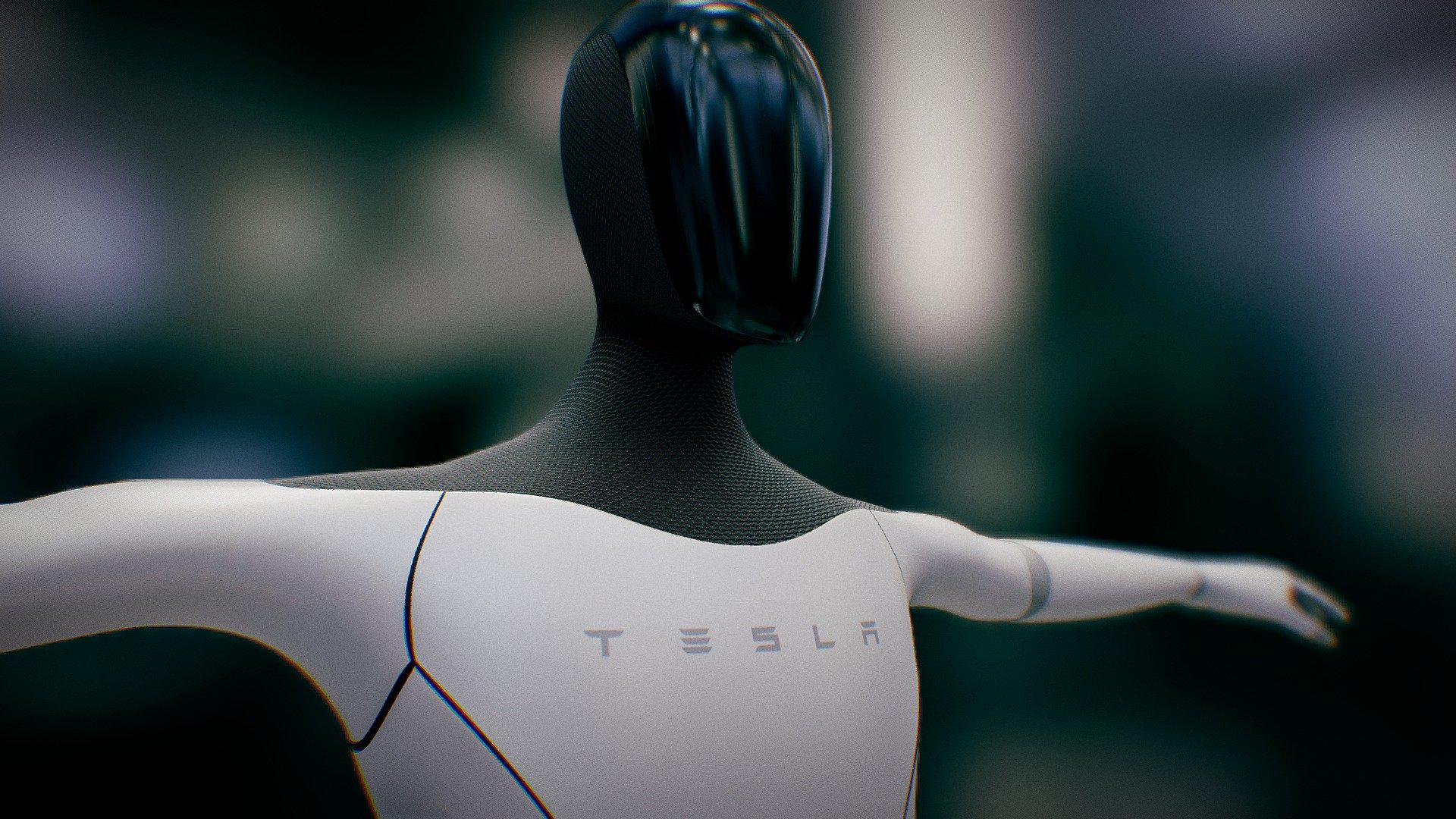

sd-concepts-library/titan-robot | sd-concepts-library | 2022-09-21T13:20:01Z | 0 | 0 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T13:19:47Z | ---

license: mit

---

### Titan Robot on Stable Diffusion

This is the `<titan>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

maretamasaeva/bert-nieuweorganisatie_meerdan100 | maretamasaeva | 2022-09-21T12:40:50Z | 160 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2022-09-21T07:56:39Z | ---

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: bert-nieuweorganisatie_meerdan100

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-nieuweorganisatie_meerdan100

This model is a fine-tuned version of [GroNLP/bert-base-dutch-cased](https://huggingface.co/GroNLP/bert-base-dutch-cased) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 1.1482

- Accuracy: 0.7584

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 6

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 1.0871 | 1.0 | 1886 | 0.9585 | 0.7355 |

| 0.8357 | 2.0 | 3772 | 0.9421 | 0.7377 |

| 0.6399 | 3.0 | 5658 | 0.9207 | 0.7531 |

| 0.4953 | 4.0 | 7544 | 0.9751 | 0.7568 |

| 0.3685 | 5.0 | 9430 | 1.0538 | 0.7475 |

| 0.2704 | 6.0 | 11316 | 1.1482 | 0.7584 |
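
The reported evaluation numbers come from the final epoch, but the table shows validation loss rising after epoch 3 while accuracy keeps fluctuating. A small sketch, using only the values in the table, shows how the choice of selection metric changes which epoch looks best:

```python
# (epoch, training_loss, validation_loss, accuracy) copied from the results table
history = [
    (1, 1.0871, 0.9585, 0.7355),
    (2, 0.8357, 0.9421, 0.7377),
    (3, 0.6399, 0.9207, 0.7531),
    (4, 0.4953, 0.9751, 0.7568),
    (5, 0.3685, 1.0538, 0.7475),
    (6, 0.2704, 1.1482, 0.7584),
]

best_by_loss = min(history, key=lambda row: row[2])
best_by_accuracy = max(history, key=lambda row: row[3])
print("lowest validation loss at epoch", best_by_loss[0])
print("highest accuracy at epoch", best_by_accuracy[0])
```

Whether the epoch with the lowest validation loss or the one with the highest accuracy is preferable depends on the downstream use; the card itself does not say which criterion was applied.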

### Framework versions

- Transformers 4.21.0

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

sd-concepts-library/child-zombie | sd-concepts-library | 2022-09-21T12:17:50Z | 0 | 0 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T12:17:38Z | ---

license: mit

---

### child zombie on Stable Diffusion

This is the `<child-zombie>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

mayorov-s/q-Taxi-v3 | mayorov-s | 2022-09-21T11:53:14Z | 0 | 0 | null | [

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

]

| reinforcement-learning | 2022-09-21T11:53:07Z | ---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-Taxi-v3

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

metrics:

- type: mean_reward

value: 7.56 +/- 2.71

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

```python

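import gym

# load_from_hub and evaluate_agent are helper functions that this card does not define;

# define or import them before running this snippet.

model = load_from_hub(repo_id="mayorov-s/q-Taxi-v3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])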

```
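
For readers unfamiliar with how a Q-table like `model["qtable"]` is built and used, the following is a minimal sketch of tabular Q-learning. The learning rate `alpha`, discount `gamma`, and the toy table are assumptions for illustration; the card does not report the values used to train this agent.

```python
def greedy_action(qtable, state):
    """Return the index of the action with the highest Q-value in a state."""
    row = qtable[state]
    return max(range(len(row)), key=lambda a: row[a])


def q_update(qtable, state, action, reward, next_state, alpha=0.5, gamma=0.9):
    """One tabular Q-learning step: Q(s, a) += alpha * (target - Q(s, a))."""
    target = reward + gamma * max(qtable[next_state])
    qtable[state][action] += alpha * (target - qtable[state][action])


# Toy table with 3 states and 2 actions; the values are illustrative only.
qtable = [[0.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
q_update(qtable, state=0, action=1, reward=-1.0, next_state=1)
print(qtable[0], greedy_action(qtable, 1))
```

At evaluation time only the greedy lookup is needed, which is why a saved Q-table is enough to reproduce the agent's behaviour.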

|

GItaf/roberta-base-roberta-base-TF-weight2-epoch5 | GItaf | 2022-09-21T11:19:37Z | 47 | 0 | transformers | [

"transformers",

"pytorch",

"roberta",

"text-generation",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-generation | 2022-09-21T08:55:59Z | ---

tags:

- generated_from_trainer

model-index:

- name: roberta-base-roberta-base-TF-weight2-epoch5

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# roberta-base-roberta-base-TF-weight2-epoch5

This model is a fine-tuned version of an unspecified base model on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 5.5174

- Cls loss: 0.6899

- Lm loss: 4.1376

- Cls Accuracy: 0.5401

- Cls F1: 0.3788

- Cls Precision: 0.2917

- Cls Recall: 0.5401

- Perplexity: 62.65

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Cls loss | Lm loss | Cls Accuracy | Cls F1 | Cls Precision | Cls Recall | Perplexity |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|:-------:|:------------:|:------:|:-------------:|:----------:|:----------:|

| 6.023 | 1.0 | 3470 | 5.6863 | 0.6910 | 4.3046 | 0.5401 | 0.3788 | 0.2917 | 0.5401 | 74.04 |

| 5.6871 | 2.0 | 6940 | 5.5897 | 0.6926 | 4.2045 | 0.5401 | 0.3788 | 0.2917 | 0.5401 | 66.99 |

| 5.5587 | 3.0 | 10410 | 5.5414 | 0.6905 | 4.1604 | 0.5401 | 0.3788 | 0.2917 | 0.5401 | 64.10 |

| 5.481 | 4.0 | 13880 | 5.5208 | 0.6900 | 4.1409 | 0.5401 | 0.3788 | 0.2917 | 0.5401 | 62.86 |

| 5.4338 | 5.0 | 17350 | 5.5174 | 0.6899 | 4.1376 | 0.5401 | 0.3788 | 0.2917 | 0.5401 | 62.65 |
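
The columns in this table appear to be related in two ways, which the sketch below checks against the reported values. The perplexity column matches the exponential of the LM loss. The validation loss is close to the LM loss plus twice the classification loss, which is an inference from the numbers and the "weight2" in the model name rather than something the card states.

```python
import math

# (epoch, validation_loss, cls_loss, lm_loss, perplexity) copied from the results table
rows = [
    (1, 5.6863, 0.6910, 4.3046, 74.04),
    (2, 5.5897, 0.6926, 4.2045, 66.99),
    (3, 5.5414, 0.6905, 4.1604, 64.10),
    (4, 5.5208, 0.6900, 4.1409, 62.86),
    (5, 5.5174, 0.6899, 4.1376, 62.65),
]
CLS_WEIGHT = 2  # assumption inferred from "weight2" in the model name

for epoch, val_loss, cls_loss, lm_loss, ppl in rows:
    ppl_from_loss = math.exp(lm_loss)            # perplexity = exp(LM loss)
    combined = lm_loss + CLS_WEIGHT * cls_loss   # assumed weighted sum of the two losses
    print(epoch, round(ppl_from_loss, 2), ppl, round(combined, 4), val_loss)
```

Small differences in the last digit are expected because the table values are already rounded.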

### Framework versions

- Transformers 4.21.2

- Pytorch 1.12.1

- Datasets 2.4.0

- Tokenizers 0.12.1

|

sd-concepts-library/raichu | sd-concepts-library | 2022-09-21T10:17:46Z | 0 | 3 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T10:17:41Z | ---

license: mit

---

### Raichu on Stable Diffusion

This is the `<raichu>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

sd-concepts-library/test | sd-concepts-library | 2022-09-21T09:30:47Z | 0 | 0 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T09:30:33Z | ---

license: mit

---

### TEST on Stable Diffusion

This is the `<AIO>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

Sphere-Fall2022/nima-test-bert-glue | Sphere-Fall2022 | 2022-09-21T08:12:31Z | 105 | 1 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2022-09-21T08:03:35Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

model-index:

- name: nima-test-bert-glue

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# nima-test-bert-glue

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| No log | 1.0 | 367 | 0.4436 | 0.8106 | 0.8597 |

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

AlbedoAI/DialoGPT-medium-Albedo | AlbedoAI | 2022-09-21T07:46:12Z | 112 | 2 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"conversational",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

]

| text-generation | 2022-08-14T04:44:07Z | ---

tags:

- conversational

---

# Albedo Medium DialoGPT Model Casual

This model does not do well with short greetings, but it can handle question-and-answer conversations most of the time.

It is trained on Albedo's dialogues from his story quests [Princeps Cretaceus Chapter](https://genshin-impact.fandom.com/wiki/Princeps_Cretaceus_Chapter) and [Shadows Amidst Snowstorms Event Story](https://genshin-impact.fandom.com/wiki/Shadows_Amidst_Snowstorms/Story).

Socials

- Twitter: [@tofuboy05](https://twitter.com/tofuboy05) (Creator)

- Tiktok: [@tofuboyart](https://www.tiktok.com/@tofuboyart)

- HoYoLAB: [TofuBoy](https://www.hoyolab.com/accountCenter/postList?id=78394798)

|

research-backup/roberta-large-semeval2012-average-prompt-c-loob-conceptnet-validated | research-backup | 2022-09-21T07:30:29Z | 103 | 0 | transformers | [

"transformers",

"pytorch",

"roberta",

"feature-extraction",

"dataset:relbert/semeval2012_relational_similarity",

"model-index",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

]

| feature-extraction | 2022-09-21T06:35:28Z | ---

datasets:

- relbert/semeval2012_relational_similarity

model-index:

- name: relbert/roberta-large-semeval2012-average-prompt-c-loob-conceptnet-validated

results:

- task:

name: Relation Mapping

type: sorting-task

dataset:

name: Relation Mapping

args: relbert/relation_mapping

type: relation-mapping

metrics:

- name: Accuracy

type: accuracy

value: 0.8907142857142857

- task:

name: Analogy Questions (SAT full)

type: multiple-choice-qa

dataset:

name: SAT full

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.6283422459893048

- task:

name: Analogy Questions (SAT)

type: multiple-choice-qa

dataset:

name: SAT

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.6201780415430267

- task:

name: Analogy Questions (BATS)

type: multiple-choice-qa

dataset:

name: BATS

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.8143413007226237

- task:

name: Analogy Questions (Google)

type: multiple-choice-qa

dataset:

name: Google

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.924

- task:

name: Analogy Questions (U2)

type: multiple-choice-qa

dataset:

name: U2

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.631578947368421

- task:

name: Analogy Questions (U4)

type: multiple-choice-qa

dataset:

name: U4

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.6574074074074074

- task:

name: Lexical Relation Classification (BLESS)

type: classification

dataset:

name: BLESS

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.9159258701220431

- name: F1 (macro)

type: f1_macro

value: 0.9095586987612104

- task:

name: Lexical Relation Classification (CogALexV)

type: classification

dataset:

name: CogALexV

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.8671361502347418

- name: F1 (macro)

type: f1_macro

value: 0.715166083502338

- task:

name: Lexical Relation Classification (EVALution)

type: classification

dataset:

name: EVALution

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.6847237269772481

- name: F1 (macro)

type: f1_macro

value: 0.6678741454455487

- task:

name: Lexical Relation Classification (K&H+N)

type: classification

dataset:

name: K&H+N

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.9613966752451832

- name: F1 (macro)

type: f1_macro

value: 0.8893979772488178

- task:

name: Lexical Relation Classification (ROOT09)

type: classification

dataset:

name: ROOT09

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.9088060169225948

- name: F1 (macro)

type: f1_macro

value: 0.9059327892815358

---

# relbert/roberta-large-semeval2012-average-prompt-c-loob-conceptnet-validated

RelBERT fine-tuned from [roberta-large](https://huggingface.co/roberta-large) on

[relbert/semeval2012_relational_similarity](https://huggingface.co/datasets/relbert/semeval2012_relational_similarity).

Fine-tuning is done via [RelBERT](https://github.com/asahi417/relbert) library (see the repository for more detail).

It achieves the following results on the relation understanding tasks:

- Analogy Question ([dataset](https://huggingface.co/datasets/relbert/analogy_questions), [full result](https://huggingface.co/relbert/roberta-large-semeval2012-average-prompt-c-loob-conceptnet-validated/raw/main/analogy.json)):

- Accuracy on SAT (full): 0.6283422459893048

- Accuracy on SAT: 0.6201780415430267

- Accuracy on BATS: 0.8143413007226237

- Accuracy on U2: 0.631578947368421

- Accuracy on U4: 0.6574074074074074

- Accuracy on Google: 0.924

- Lexical Relation Classification ([dataset](https://huggingface.co/datasets/relbert/lexical_relation_classification), [full result](https://huggingface.co/relbert/roberta-large-semeval2012-average-prompt-c-loob-conceptnet-validated/raw/main/classification.json)):

- Micro F1 score on BLESS: 0.9159258701220431

- Micro F1 score on CogALexV: 0.8671361502347418

- Micro F1 score on EVALution: 0.6847237269772481

- Micro F1 score on K&H+N: 0.9613966752451832

- Micro F1 score on ROOT09: 0.9088060169225948

- Relation Mapping ([dataset](https://huggingface.co/datasets/relbert/relation_mapping), [full result](https://huggingface.co/relbert/roberta-large-semeval2012-average-prompt-c-loob-conceptnet-validated/raw/main/relation_mapping.json)):

- Accuracy on Relation Mapping: 0.8907142857142857

### Usage

This model can be used through the [relbert library](https://github.com/asahi417/relbert). Install the library via pip

```shell

pip install relbert

```

and load the model as shown below.

```python

from relbert import RelBERT

model = RelBERT("relbert/roberta-large-semeval2012-average-prompt-c-loob-conceptnet-validated")

vector = model.get_embedding(['Tokyo', 'Japan']) # shape of (1024, )

```
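
Relation embeddings such as the one returned by `get_embedding` are typically compared with cosine similarity, for example to pick the candidate pair whose relation is closest to a query pair. The sketch below uses made-up 3-dimensional vectors as stand-ins, since real embeddings from this model have 1024 dimensions and require the library to compute.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Made-up toy vectors, not real model output.
query = [0.9, 0.1, 0.0]  # stand-in for the relation of ('Tokyo', 'Japan')
candidates = {
    "('Paris', 'France')": [0.8, 0.2, 0.1],
    "('apple', 'fruit')": [0.1, 0.9, 0.3],
}

best = max(candidates, key=lambda name: cosine_similarity(query, candidates[name]))
print(best)
```

With real embeddings, each candidate vector would come from a separate `get_embedding` call on the corresponding word pair.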

### Training hyperparameters

The following hyperparameters were used during training:

- model: roberta-large

- max_length: 64

- mode: average

- data: relbert/semeval2012_relational_similarity

- template_mode: manual

- template: Today, I finally discovered the relation between <subj> and <obj> : <mask>

- loss_function: info_loob

- temperature_nce_constant: 0.05

- temperature_nce_rank: {'min': 0.01, 'max': 0.05, 'type': 'linear'}

- epoch: 21

- batch: 128

- lr: 5e-06

- lr_decay: False

- lr_warmup: 1

- weight_decay: 0

- random_seed: 0

- exclude_relation: None

- n_sample: 640

- gradient_accumulation: 8
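
To make the `template` hyperparameter concrete, the sketch below fills the prompt with a word pair. It is only an illustration of the string substitution; the relbert library handles this internally, and `fill_template` is a hypothetical helper name, not part of its API.

```python
TEMPLATE = "Today, I finally discovered the relation between <subj> and <obj> : <mask>"


def fill_template(subj, obj, template=TEMPLATE, mask_token="<mask>"):
    """Substitute a word pair into the prompt, keeping a mask placeholder."""
    return (
        template.replace("<subj>", subj)
        .replace("<obj>", obj)
        .replace("<mask>", mask_token)
    )


prompt = fill_template("Tokyo", "Japan")
print(prompt)
```

With `mode: average`, the relation embedding would then be derived by averaging the encoder's token representations for this prompt, although the exact pooling details are in the library rather than in this card.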

The full configuration can be found at [fine-tuning parameter file](https://huggingface.co/relbert/roberta-large-semeval2012-average-prompt-c-loob-conceptnet-validated/raw/main/trainer_config.json).

### Reference

If you use any resource from RelBERT, please consider citing our [paper](https://aclanthology.org/2021.eacl-demos.7/).

```

@inproceedings{ushio-etal-2021-distilling-relation-embeddings,

title = "{D}istilling {R}elation {E}mbeddings from {P}re-trained {L}anguage {M}odels",

author = "Ushio, Asahi and

Schockaert, Steven and

Camacho-Collados, Jose",

booktitle = "EMNLP 2021",

year = "2021",

address = "Online",

publisher = "Association for Computational Linguistics",

}

```

|

sd-concepts-library/joemad | sd-concepts-library | 2022-09-21T07:30:18Z | 0 | 2 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T07:30:15Z | ---

license: mit

---

### JoeMad on Stable Diffusion

This is the `<joemad>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

Lemming/distilbert-base-uncased-finetuned-emotion | Lemming | 2022-09-21T06:36:30Z | 103 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2022-09-21T05:13:07Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

- f1

model-index:

- name: distilbert-base-uncased-finetuned-emotion

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

config: default

split: train

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.9215

- name: F1

type: f1

value: 0.9216499948953181

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2104

- Accuracy: 0.9215

- F1: 0.9216

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.8206 | 1.0 | 250 | 0.2908 | 0.92 | 0.9183 |

| 0.2399 | 2.0 | 500 | 0.2104 | 0.9215 | 0.9216 |
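
The `lr_scheduler_type: linear` setting means the learning rate decays linearly over training. A minimal sketch of such a schedule follows, assuming no warmup steps (the card does not list any) and a total of 500 steps as in the table:

```python
def linear_lr(step, total_steps, base_lr=2e-05, warmup_steps=0):
    """Optional linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


TOTAL_STEPS = 500  # final step in the training results table

for step in (0, 250, 500):
    print(step, linear_lr(step, TOTAL_STEPS))
```

Under these assumptions the rate starts at the configured 2e-05, is halved by the end of the first epoch, and reaches zero at the final step.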

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

sd-concepts-library/bamse-og-kylling | sd-concepts-library | 2022-09-21T06:23:26Z | 0 | 0 | null | [

"license:mit",

"region:us"

]

| null | 2022-09-21T06:23:17Z | ---

license: mit

---

### Bamse og kylling on Stable Diffusion

This is the `<bamse-kylling>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

huggingtweets/houstonhotwife-thongwife | huggingtweets | 2022-09-21T05:43:45Z | 109 | 0 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

]

| text-generation | 2022-09-21T05:38:58Z | ---

language: en

thumbnail: http://www.huggingtweets.com/houstonhotwife-thongwife/1663739021491/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1571839912202178561/tbXoqNM5_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/722128170808320000/YNGcYakC_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Houston Hotwife & Thongwife</div>

<div style="text-align: center; font-size: 14px;">@houstonhotwife-thongwife</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Houston Hotwife & Thongwife.

| Data | Houston Hotwife | Thongwife |

| --- | --- | --- |

| Tweets downloaded | 3173 | 3225 |

| Retweets | 1166 | 1469 |

| Short tweets | 524 | 1560 |

| Tweets kept | 1483 | 196 |
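
The rows of this table are related by simple subtraction: the tweets kept are the downloaded tweets minus retweets and short tweets. The following sketch checks that against the reported counts:

```python
# Counts copied from the training-data table.
stats = {
    "Houston Hotwife": {"downloaded": 3173, "retweets": 1166, "short": 524, "kept": 1483},
    "Thongwife": {"downloaded": 3225, "retweets": 1469, "short": 1560, "kept": 196},
}

for account, s in stats.items():
    derived = s["downloaded"] - s["retweets"] - s["short"]
    print(account, derived, derived == s["kept"])

total_kept = sum(s["kept"] for s in stats.values())
print("total tweets kept:", total_kept)
```

The two accounts contribute very unevenly to the kept tweets, so the generated text is likely to lean toward the first account's style.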

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/2g5af0zu/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @houstonhotwife-thongwife's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1uh4ivfz) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1uh4ivfz/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/houstonhotwife-thongwife')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[huggingtweets on GitHub](https://github.com/borisdayma/huggingtweets)

|

research-backup/roberta-large-semeval2012-average-prompt-a-loob-conceptnet-validated | research-backup | 2022-09-21T05:40:34Z | 103 | 0 | transformers | [

"transformers",

"pytorch",

"roberta",

"feature-extraction",

"dataset:relbert/semeval2012_relational_similarity",

"model-index",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

]

| feature-extraction | 2022-09-21T04:45:26Z | ---

datasets:

- relbert/semeval2012_relational_similarity

model-index:

- name: relbert/roberta-large-semeval2012-average-prompt-a-loob-conceptnet-validated

results:

- task:

name: Relation Mapping

type: sorting-task

dataset:

name: Relation Mapping

args: relbert/relation_mapping

type: relation-mapping

metrics:

- name: Accuracy

type: accuracy

value: 0.8358531746031747

- task:

name: Analogy Questions (SAT full)

type: multiple-choice-qa

dataset:

name: SAT full

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.6310160427807486

- task:

name: Analogy Questions (SAT)

type: multiple-choice-qa

dataset:

name: SAT

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.6320474777448071

- task:

name: Analogy Questions (BATS)

type: multiple-choice-qa

dataset:

name: BATS

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.7409672040022235

- task:

name: Analogy Questions (Google)

type: multiple-choice-qa

dataset:

name: Google

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.918

- task:

name: Analogy Questions (U2)

type: multiple-choice-qa

dataset:

name: U2

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.5745614035087719

- task:

name: Analogy Questions (U4)

type: multiple-choice-qa

dataset:

name: U4

args: relbert/analogy_questions

type: analogy-questions

metrics:

- name: Accuracy

type: accuracy

value: 0.6018518518518519

- task:

name: Lexical Relation Classification (BLESS)

type: classification

dataset:

name: BLESS

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.9133644718999548

- name: F1 (macro)

type: f1_macro

value: 0.9091653089166233

- task:

name: Lexical Relation Classification (CogALexV)

type: classification

dataset:

name: CogALexV

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.8523474178403756

- name: F1 (macro)

type: f1_macro

value: 0.6906026137184262

- task:

name: Lexical Relation Classification (EVALution)

type: classification

dataset:

name: EVALution

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.6700975081256771

- name: F1 (macro)

type: f1_macro

value: 0.6599264465141299

- task:

name: Lexical Relation Classification (K&H+N)

type: classification

dataset:

name: K&H+N

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.9501286777491827

- name: F1 (macro)

type: f1_macro

value: 0.8552943975279798

- task:

name: Lexical Relation Classification (ROOT09)

type: classification

dataset:

name: ROOT09

args: relbert/lexical_relation_classification

type: relation-classification

metrics:

- name: F1

type: f1

value: 0.8987778125979317

- name: F1 (macro)

type: f1_macro

value: 0.8958673797671589

---

# relbert/roberta-large-semeval2012-average-prompt-a-loob-conceptnet-validated

RelBERT fine-tuned from [roberta-large](https://huggingface.co/roberta-large) on

[relbert/semeval2012_relational_similarity](https://huggingface.co/datasets/relbert/semeval2012_relational_similarity).

Fine-tuning was done with the [RelBERT](https://github.com/asahi417/relbert) library (see the repository for more details).

It achieves the following results on the relation understanding tasks:

- Analogy Question ([dataset](https://huggingface.co/datasets/relbert/analogy_questions), [full result](https://huggingface.co/relbert/roberta-large-semeval2012-average-prompt-a-loob-conceptnet-validated/raw/main/analogy.json)):

- Accuracy on SAT (full): 0.6310160427807486

- Accuracy on SAT: 0.6320474777448071

- Accuracy on BATS: 0.7409672040022235

- Accuracy on U2: 0.5745614035087719

- Accuracy on U4: 0.6018518518518519

- Accuracy on Google: 0.918

- Lexical Relation Classification ([dataset](https://huggingface.co/datasets/relbert/lexical_relation_classification), [full result](https://huggingface.co/relbert/roberta-large-semeval2012-average-prompt-a-loob-conceptnet-validated/raw/main/classification.json)):

- Micro F1 score on BLESS: 0.9133644718999548

- Micro F1 score on CogALexV: 0.8523474178403756

- Micro F1 score on EVALution: 0.6700975081256771

- Micro F1 score on K&H+N: 0.9501286777491827

- Micro F1 score on ROOT09: 0.8987778125979317

- Relation Mapping ([dataset](https://huggingface.co/datasets/relbert/relation_mapping), [full result](https://huggingface.co/relbert/roberta-large-semeval2012-average-prompt-a-loob-conceptnet-validated/raw/main/relation_mapping.json)):

- Accuracy on Relation Mapping: 0.8358531746031747
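The classification scores listed above are micro-averaged, while the card's metadata also records a macro-averaged F1 for each dataset. As a rough illustration of why the two can differ (for example on CogALexV and K&H+N), here is a minimal sketch using the conventional definitions for single-label classification. It is not the evaluation code used by RelBERT, and the label names and predictions are made up for the example.

```python
from collections import Counter


def f1_scores(gold, pred):
    """Return (micro_f1, macro_f1) for single-label classification."""
    labels = sorted(set(gold) | set(pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for g, p in zip(gold, pred):
        if g == p:
            tp[g] += 1
        else:
            fp[p] += 1  # predicted label p, but it was wrong
            fn[g] += 1  # gold label g was missed

    def f1(t, f_pos, f_neg):
        denom = 2 * t + f_pos + f_neg
        return 2 * t / denom if denom else 0.0

    # Micro: pool the counts over all classes, then compute one F1.
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    # Macro: compute F1 per class, then take the unweighted mean.
    macro = sum(f1(tp[l], fp[l], fn[l]) for l in labels) / len(labels)
    return micro, macro


# Hypothetical toy data: the rare class "random" is never predicted correctly.
gold = ["hyper", "hyper", "hyper", "mero", "random"]
pred = ["hyper", "hyper", "mero", "mero", "hyper"]
micro, macro = f1_scores(gold, pred)
print(f"micro F1 = {micro:.3f}, macro F1 = {macro:.3f}")
```

Because macro averaging weights every class equally, a poorly handled rare class pulls it down much more than it pulls down the micro average.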

### Usage

This model can be used through the [relbert library](https://github.com/asahi417/relbert). Install the library via pip:

```shell

pip install relbert

```

and load the model as shown below:

```python

from relbert import RelBERT

model = RelBERT("relbert/roberta-large-semeval2012-average-prompt-a-loob-conceptnet-validated")

vector = model.get_embedding(['Tokyo', 'Japan']) # shape of (1024, )

```
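A common way to use relation embeddings like the one returned above is to compare two word pairs by cosine similarity. The sketch below is only an illustration: `cosine_similarity` is a helper written for this example (it is not part of the relbert library), and the short vectors are made-up stand-ins for the 1024-dimensional embeddings so that the snippet runs without downloading the model.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Hypothetical 4-dimensional stand-ins. With the real model, each vector
# would come from model.get_embedding([...]) as in the snippet above.
tokyo_japan = [0.9, 0.1, 0.3, 0.0]
paris_france = [0.8, 0.2, 0.4, 0.1]
apple_fruit = [0.0, 0.9, 0.1, 0.7]

print(cosine_similarity(tokyo_japan, paris_france))
print(cosine_similarity(tokyo_japan, apple_fruit))
```

With real embeddings, pairs that share a relation (such as capital-of) would be expected to score higher than unrelated pairs, which is the idea behind the analogy benchmarks reported in this card.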

### Training hyperparameters

The following hyperparameters were used during training:

- model: roberta-large

- max_length: 64

- mode: average

- data: relbert/semeval2012_relational_similarity

- template_mode: manual

- template: Today, I finally discovered the relation between <subj> and <obj> : <subj> is the <mask> of <obj>

- loss_function: info_loob

- temperature_nce_constant: 0.05

- temperature_nce_rank: {'min': 0.01, 'max': 0.05, 'type': 'linear'}

- epoch: 21

- batch: 128

- lr: 5e-06

- lr_decay: False

- lr_warmup: 1

- weight_decay: 0

- random_seed: 0

- exclude_relation: None

- n_sample: 640

- gradient_accumulation: 8

The full configuration can be found at [fine-tuning parameter file](https://huggingface.co/relbert/roberta-large-semeval2012-average-prompt-a-loob-conceptnet-validated/raw/main/trainer_config.json).
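To make the `template` hyperparameter concrete, the following sketch fills the manual template from the list above with a word pair. `fill_template` is a hypothetical helper written for this illustration. How the RelBERT library performs the substitution internally is not shown in this card, so treat this as an assumption about the general idea, not as the library's implementation.

```python
# Template copied from the hyperparameter list above.
TEMPLATE = (
    "Today, I finally discovered the relation between <subj> and <obj> : "
    "<subj> is the <mask> of <obj>"
)


def fill_template(template, subj, obj, mask_token="<mask>"):
    """Substitute a word pair into the prompt template.

    mask_token defaults to "<mask>", the mask token used by RoBERTa tokenizers.
    """
    return (
        template.replace("<subj>", subj)
        .replace("<obj>", obj)
        .replace("<mask>", mask_token)
    )


prompt = fill_template(TEMPLATE, "Tokyo", "Japan")
print(prompt)
```

The `mode: average` setting suggests that the relation embedding is obtained by averaging the encoder's output over such a prompt. See the RelBERT repository for the exact procedure.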

### Reference

If you use any resource from RelBERT, please consider citing our [paper](https://aclanthology.org/2021.eacl-demos.7/).

```bibtex

@inproceedings{ushio-etal-2021-distilling-relation-embeddings,

title = "{D}istilling {R}elation {E}mbeddings from {P}re-trained {L}anguage {M}odels",

author = "Ushio, Asahi and

Schockaert, Steven and

Camacho-Collados, Jose",

booktitle = "EMNLP 2021",

year = "2021",

address = "Online",

publisher = "Association for Computational Linguistics",

}

```

|

Najeen/bert-finetuned-ner | Najeen | 2022-09-21T03:51:04Z | 105 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"bert",

"token-classification",

"generated_from_trainer",

"dataset:conll2003",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| token-classification | 2022-09-19T13:52:02Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- conll2003

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: bert-finetuned-ner

results:

- task:

name: Token Classification

type: token-classification

dataset: