modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

Mark-Cooper/my_aime_gpt2_clm-model | Mark-Cooper | 2023-02-17T15:09:47Z | 4 | 0 | transformers | [

"transformers",

"tf",

"gpt2",

"text-generation",

"generated_from_keras_callback",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-generation | 2023-02-17T12:24:15Z | ---

license: mit

tags:

- generated_from_keras_callback

model-index:

- name: Mark-Cooper/my_aime_gpt2_clm-model

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Mark-Cooper/my_aime_gpt2_clm-model

This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 3.0541

- Validation Loss: 2.8548

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 2e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 4.3688 | 3.7021 | 0 |

| 3.5122 | 3.1472 | 1 |

| 3.0541 | 2.8548 | 2 |
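The losses in the table are easier to compare as perplexities. The sketch below assumes the reported values are mean per-token cross-entropy losses in nats, which is the usual convention for causal language modelling but is not stated in the card; `perplexity` and `history` are illustrative names.

```python
import math


def perplexity(cross_entropy_loss: float) -> float:
    """Convert a mean per-token cross-entropy loss (in nats) to perplexity."""
    return math.exp(cross_entropy_loss)


# (train loss, validation loss) per epoch, copied from the table above
history = [(4.3688, 3.7021), (3.5122, 3.1472), (3.0541, 2.8548)]

val_perplexities = [perplexity(val) for _, val in history]
for epoch, ppl in enumerate(val_perplexities):
    print(f"epoch {epoch}: validation perplexity = {ppl:.2f}")
```

If the loss were measured in another unit (for example bits), the base of the exponential would have to change accordingly.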

### Framework versions

- Transformers 4.26.1

- TensorFlow 2.11.0

- Datasets 2.9.0

- Tokenizers 0.13.2

|

jjanarek/sd-class-butterflies-32 | jjanarek | 2023-02-17T15:01:20Z | 0 | 0 | diffusers | [

"diffusers",

"pytorch",

"unconditional-image-generation",

"diffusion-models-class",

"license:mit",

"diffusers:DDPMPipeline",

"region:us"

]

| unconditional-image-generation | 2023-02-17T15:00:59Z | ---

license: mit

tags:

- pytorch

- diffusers

- unconditional-image-generation

- diffusion-models-class

---

# Model Card for Unit 1 of the [Diffusion Models Class 🧨](https://github.com/huggingface/diffusion-models-class)

This model is a diffusion model for unconditional image generation of cute 🦋.

## Usage

```python

from diffusers import DDPMPipeline

pipeline = DDPMPipeline.from_pretrained('jjanarek/sd-class-butterflies-32')

image = pipeline().images[0]

image

```

|

jannikskytt/ppo-implemented-LunarLander-v2 | jannikskytt | 2023-02-17T14:59:36Z | 0 | 0 | null | [

"tensorboard",

"LunarLander-v2",

"ppo",

"deep-reinforcement-learning",

"reinforcement-learning",

"custom-implementation",

"deep-rl-course",

"model-index",

"region:us"

]

| reinforcement-learning | 2023-02-17T10:41:59Z | ---

tags:

- LunarLander-v2

- ppo

- deep-reinforcement-learning

- reinforcement-learning

- custom-implementation

- deep-rl-course

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: -105.68 +/- 49.60

name: mean_reward

verified: false

---

# PPO Agent Playing LunarLander-v2

This is a trained model of a PPO agent playing LunarLander-v2.

# Hyperparameters

```python

{'exp_name': 'ppo2',
 'seed': 1,
 'torch_deterministic': True,
 'cuda': True,
 'track': False,
 'wandb_project_name': 'cleanRL',
 'wandb_entity': None,
 'capture_video': False,
 'env_id': 'LunarLander-v2',
 'total_timesteps': 3500000,
 'learning_rate': 0.00025,
 'num_envs': 16,
 'num_steps': 128,
 'anneal_lr': True,
 'gae': True,
 'gamma': 0.99,
 'gae_lambda': 0.95,
 'num_minibatches': 4,
 'update_epochs': 4,
 'norm_adv': True,
 'clip_coef': 0.2,
 'clip_vloss': True,
 'ent_coef': 0.01,
 'vf_coef': 0.5,
 'max_grad_norm': 0.5,
 'target_kl': None,
 'repo_id': 'jannikskytt/ppo-implemented-LunarLander-v2',
 'batch_size': 2048,
 'minibatch_size': 512}

```
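The `batch_size` and `minibatch_size` entries appear to be derived from the other values rather than set independently. The sketch below reproduces them, assuming the common PPO convention that one rollout gathers `num_steps` transitions from each of `num_envs` environments; the `num_updates` line is an additional assumption and is not reported in the card.

```python
# Values copied from the hyperparameter dictionary above
num_envs = 16
num_steps = 128
num_minibatches = 4
total_timesteps = 3_500_000

# One rollout collects num_steps transitions from each parallel environment
batch_size = num_envs * num_steps
# Each update epoch splits the rollout into equally sized minibatches
minibatch_size = batch_size // num_minibatches
# Number of policy updates (assumption: integer division, remainder dropped)
num_updates = total_timesteps // batch_size

print(batch_size, minibatch_size, num_updates)
```

The first two results match the `batch_size` and `minibatch_size` values listed in the card.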

|

MunSu/xlm-roberta-base-finetuned-panx-all | MunSu | 2023-02-17T14:55:36Z | 3 | 0 | transformers | [

"transformers",

"pytorch",

"xlm-roberta",

"token-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| token-classification | 2023-02-15T10:57:08Z | ---

license: mit

tags:

- generated_from_trainer

metrics:

- f1

model-index:

- name: xlm-roberta-base-finetuned-panx-all

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlm-roberta-base-finetuned-panx-all

This model is a fine-tuned version of [MunSu/xlm-roberta-base-finetuned-panx-de](https://huggingface.co/MunSu/xlm-roberta-base-finetuned-panx-de) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2643

- F1: 0.8601

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 0.1634 | 1.0 | 2503 | 0.2532 | 0.8289 |

| 0.1004 | 2.0 | 5006 | 0.2586 | 0.8541 |

| 0.0576 | 3.0 | 7509 | 0.2643 | 0.8601 |

### Framework versions

- Transformers 4.26.0

- Pytorch 1.8.0

- Datasets 2.9.0

- Tokenizers 0.13.2

|

Andrazp/multilingual-hate-speech-robacofi | Andrazp | 2023-02-17T14:52:29Z | 116 | 2 | transformers | [

"transformers",

"pytorch",

"xlm-roberta",

"text-classification",

"en",

"arxiv:2104.12250",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2022-10-14T09:16:05Z | ---

widget:

- text: "My name is Mark and I live in London. I am a postgraduate student at Queen Mary University."

language:

- en

license: mit

---

# Multilingual Hate Speech Classifier for Social Media Content

A multilingual model for hate speech classification of social media content. The model is based on pre-trained multilingual representations from the XLM-T model (https://arxiv.org/abs/2104.12250) and was jointly fine-tuned on five languages, namely Arabic, Croatian, English, German and Slovenian. The test results on these five languages in terms of F1 score are as follows:

| Language | F1 |

|-----------|:------:|

| Arabic | 0.8704 |

| Croatian | 0.7226 |

| English | 0.7851 |

| German | 0.7826 |

| Slovenian | 0.7596 |

## Tokenizer

During training, the text was preprocessed using the original XLM-T tokenizer. The pretrained tokenizer files are included in this repository. We suggest using the same tokenizer for inference.

## Model output

The model classifies each input into one of two distinct classes:

* 0 - not-offensive

* 1 - offensive
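As a rough illustration of how the two class ids can be turned into readable labels, the sketch below decodes a pair of logits. It assumes the classifier returns two logits ordered as class 0 then class 1; the logits shown are made up, and `ID2LABEL`, `softmax` and `decode` are illustrative names, not part of this repository.

```python
import math

# Label mapping taken from the list above
ID2LABEL = {0: "not-offensive", 1: "offensive"}


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decode(logits):
    """Return (label, probability) for one pair of class logits."""
    probs = softmax(logits)
    class_id = max(range(len(probs)), key=probs.__getitem__)
    return ID2LABEL[class_id], probs[class_id]


# Hypothetical logits, for illustration only
label, prob = decode([-1.2, 2.3])
print(label, round(prob, 3))
```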

## Acknowledgments

The authors acknowledge the financial support from the RobaCOFI project, which has indirectly received funding from the European Union’s Horizon 2020 research and innovation action programme via the AI4Media Open Call #1 issued and executed under the AI4Media project (Grant Agreement no. 951911), and from the Slovenian Research Agency for the project Hate speech in contemporary conceptualizations of nationalism, racism, gender and migration (J5-3102).

|

selvino/Reinforce-PixelcopterPLE-v0 | selvino | 2023-02-17T14:48:36Z | 0 | 0 | null | [

"Pixelcopter-PLE-v0",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

]

| reinforcement-learning | 2023-02-17T14:48:13Z | ---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-PixelcopterPLE-v0

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Pixelcopter-PLE-v0

type: Pixelcopter-PLE-v0

metrics:

- type: mean_reward

value: 17.90 +/- 14.69

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0** .

To learn how to use this model and train your own, check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

Muffins987/amazon-xlnet-large-2 | Muffins987 | 2023-02-17T14:45:33Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"xlnet",

"text-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2023-02-17T12:13:28Z | ---

license: mit

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: amazon-xlnet-large-2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# amazon-xlnet-large-2

This model is a fine-tuned version of [xlnet-large-cased](https://huggingface.co/xlnet-large-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5627

- Accuracy: 0.7763

- F1: 0.7702

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:|

| 0.5505 | 1.0 | 20000 | 0.5627 | 0.7763 | 0.7702 |
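The step count gives a rough idea of the training set size, which the card does not state. The estimate below assumes a single device and no gradient accumulation, neither of which is confirmed by the card; `example_count_bounds` is an illustrative helper.

```python
def example_count_bounds(steps_per_epoch, batch_size):
    """Return (lower, upper) bounds on examples seen in one epoch.

    The last batch may be partial, so the true count lies between
    one example in the final batch and a completely full final batch.
    """
    upper = steps_per_epoch * batch_size
    lower = (steps_per_epoch - 1) * batch_size + 1
    return lower, upper


# 20000 steps in epoch 1.0 and train_batch_size 4, both from the card
lower, upper = example_count_bounds(20_000, 4)
print(lower, upper)
```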

### Framework versions

- Transformers 4.20.1

- Pytorch 1.12.0

- Datasets 2.1.0

- Tokenizers 0.12.1

|

Dunkindont/Foto-Assisted-Diffusion-FAD_V0 | Dunkindont | 2023-02-17T14:22:20Z | 135 | 170 | diffusers | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"safetensors",

"artwork",

"HDR photography",

"photos",

"en",

"license:creativeml-openrail-m",

"region:us"

]

| text-to-image | 2023-02-10T23:22:33Z | ---

license: creativeml-openrail-m

language:

- en

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- safetensors

- diffusers

- artwork

- HDR photography

- safetensors

- photos

inference: true

---

# Foto Assisted Diffusion (FAD)_V0

This model is meant to mimic a modern HDR photography style.

It was trained on 600 HDR images on SD1.5 and works best at **768x768** resolution.

It was merged with one of my own models for illustrations and drawings to increase flexibility.

# Features:

* **No additional licensing**

* **Multi-resolution support**

* **HDR photographic outputs**

* **No Hi-Res fix required**

* [**Spreadsheet with supported resolutions, keywords for prompting and other useful hints/tips**](https://docs.google.com/spreadsheets/d/1RGRLZhgiFtLMm5Pg8qK0YMc6wr6uvj9-XdiFM877Pp0/edit#gid=364842308)

# Example Cards:

Below you will find some example cards that this model is capable of outputting.

You can acquire the images used here: [HF](https://huggingface.co/Dunkindont/Foto-Assisted-Diffusion-FAD_V0/tree/main/Model%20Examples) or

[Google Drive](https://docs.google.com/spreadsheets/d/1RGRLZhgiFtLMm5Pg8qK0YMc6wr6uvj9-XdiFM877Pp0/edit#gid=364842308).

Google Drive gives you all of them at once without cloning the repo, which is easier.

If you decide to clone it, set ``` GIT_LFS_SKIP_SMUDGE=1 ``` to skip downloading large files.

Place them into an EXIF viewer such as the built-in "PNG Info" tab in the popular Auto1111 repository to quickly copy the parameters and replicate them!
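For scripted setups, the clone tip above can be expressed as an environment variable passed to `git`. This is only a sketch: `build_clone_command` is an illustrative helper, and the block builds the command without running it, since an actual clone needs `git`, `git-lfs` and network access.

```python
import os

REPO_URL = "https://huggingface.co/Dunkindont/Foto-Assisted-Diffusion-FAD_V0"


def build_clone_command(repo_url, base_env=None):
    """Return (argv, env) for cloning without downloading Git LFS payloads."""
    env = dict(os.environ if base_env is None else base_env)
    env["GIT_LFS_SKIP_SMUDGE"] = "1"  # LFS files stay as small pointer files
    return ["git", "clone", repo_url], env


argv, env = build_clone_command(REPO_URL)
print(" ".join(argv))
# To actually clone (requires git and network access):
#   import subprocess; subprocess.run(argv, env=env, check=True)
```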

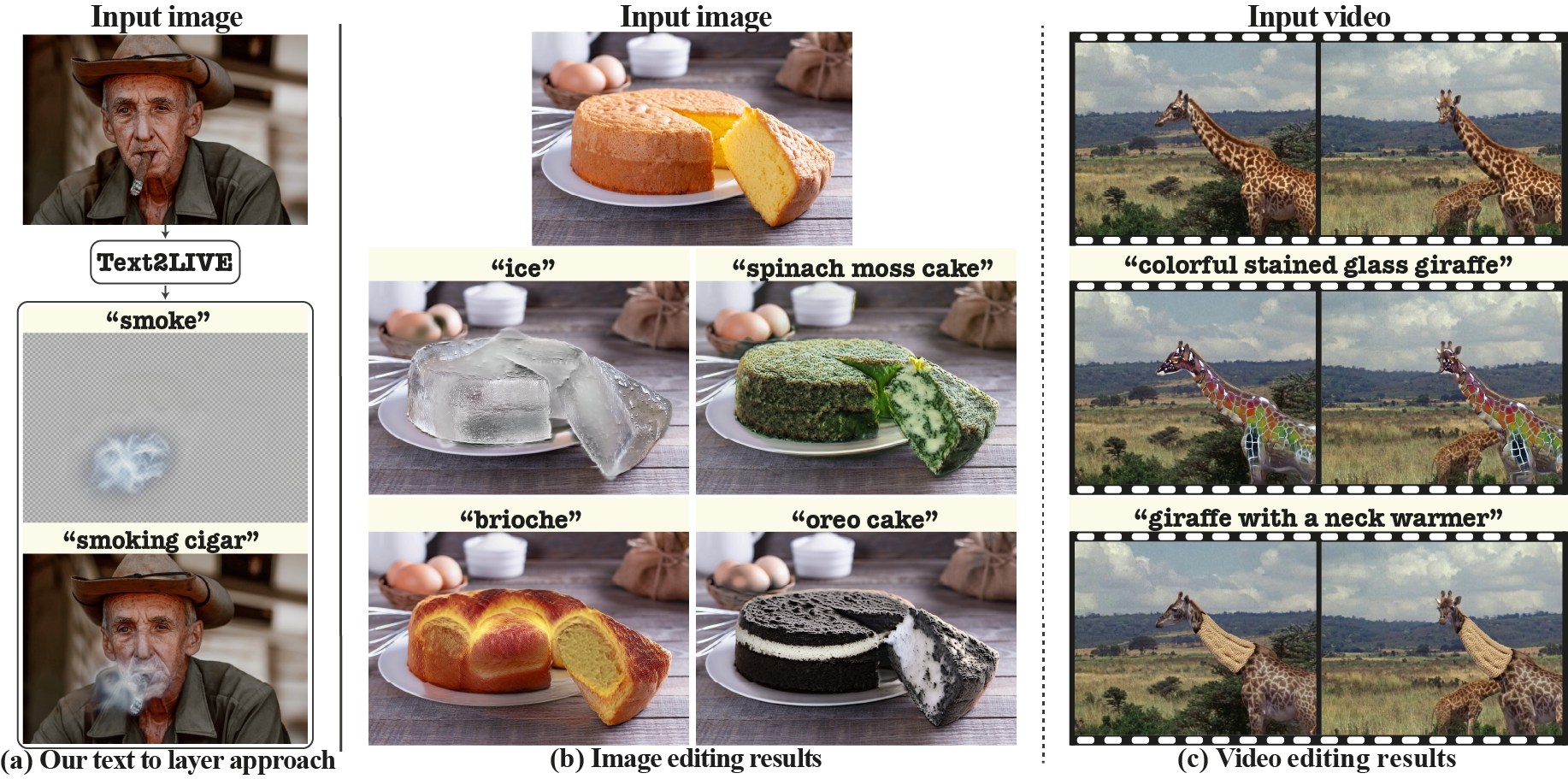

## 768x768 Food

<img src="https://huggingface.co/Dunkindont/Foto-Assisted-Diffusion-FAD_V0/resolve/main/768x768%20Food.jpg" style="max-width: 800px;" width="100%"/>

## 768x768 Landscapes

<img src="https://huggingface.co/Dunkindont/Foto-Assisted-Diffusion-FAD_V0/resolve/main/768x768%20Landscapes.jpg" style="max-width: 800px;" width="100%"/>

## 768x768 People

<img src="https://huggingface.co/Dunkindont/Foto-Assisted-Diffusion-FAD_V0/resolve/main/768x768%20People.jpg" style="max-width: 800px;" width="100%"/>

## 768x768 Random

<img src="https://huggingface.co/Dunkindont/Foto-Assisted-Diffusion-FAD_V0/resolve/main/768x768%20Random.jpg" style="max-width: 800px;" width="100%"/>

## 512x512 Artwork

<img src="https://huggingface.co/Dunkindont/Foto-Assisted-Diffusion-FAD_V0/resolve/main/512x512%20Artwork.jpg" style="max-width: 800px;" width="100%"/>

## 512x512 Photos

<img src="https://huggingface.co/Dunkindont/Foto-Assisted-Diffusion-FAD_V0/resolve/main/512x512%20Photo.jpg" style="max-width: 800px;" width="100%"/>

## Cloud Support

Sinkin kindly hosted our model. [Click here to run it on the cloud](https://sinkin.ai/m/V6vYoaL)!

## License

*My motivation for making this model was to have a free, non-restricted model for the community to use and for startups.*

*I noticed that the models people gravitated towards were merged models, which carried prior license requirements from the people who trained them.*

*This was just a fun project I put together for you guys.*

*My fun ended when I posted the results :D*

*Enjoy! Sharing is caring :)* |

Alex48/ppo-Huggy | Alex48 | 2023-02-17T14:18:04Z | 0 | 0 | ml-agents | [

"ml-agents",

"tensorboard",

"onnx",

"unity-ml-agents",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

]

| reinforcement-learning | 2023-02-17T14:17:57Z |

---

tags:

- unity-ml-agents

- ml-agents

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

library_name: ml-agents

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://github.com/huggingface/ml-agents#get-started

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

### Resume the training

```

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. Go to https://huggingface.co/spaces/unity/ML-Agents-Huggy

2. Step 1: Write your model_id: Alex48/ppo-Huggy

3. Step 2: Select your *.nn /*.onnx file

4. Click on Watch the agent play 👀

|

junnyu/demo_test_v2 | junnyu | 2023-02-17T14:11:41Z | 0 | 0 | null | [

"paddlepaddle",

"stable-diffusion",

"stable-diffusion-ppdiffusers",

"text-to-image",

"ppdiffusers",

"lora",

"base_model:runwayml/stable-diffusion-v1-5",

"base_model:adapter:runwayml/stable-diffusion-v1-5",

"license:creativeml-openrail-m",

"region:us"

]

| text-to-image | 2023-02-17T09:31:42Z |

---

license: creativeml-openrail-m

base_model: runwayml/stable-diffusion-v1-5

instance_prompt: a photo of sks dog

tags:

- stable-diffusion

- stable-diffusion-ppdiffusers

- text-to-image

- ppdiffusers

- lora

inference: false

---

# LoRA DreamBooth - junnyu/demo_test_v2

These are LoRA adaptation weights for runwayml/stable-diffusion-v1-5. The weights were trained on a photo of sks dog using [DreamBooth](https://dreambooth.github.io/). You can find some example images in the following.

|

Belethor/mt5-small-finetuned-amazon-en-fr | Belethor | 2023-02-17T14:07:32Z | 3 | 0 | transformers | [

"transformers",

"tf",

"mt5",

"text2text-generation",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text2text-generation | 2023-02-17T09:36:54Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Belethor/mt5-small-finetuned-amazon-en-fr

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Belethor/mt5-small-finetuned-amazon-en-fr

This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 9.0466

- Validation Loss: 4.0067

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 5.6e-05, 'decay_steps': 20496, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: mixed_float16
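With `power` set to 1.0 and `cycle` set to False, the `PolynomialDecay` configuration above amounts to a linear ramp from the initial learning rate down to zero. The sketch below re-implements that formula in plain Python for illustration; it is not the Keras class itself, and `polynomial_decay` is an illustrative name.

```python
def polynomial_decay(step, initial_lr=5.6e-05, decay_steps=20496,
                     end_lr=0.0, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    step = min(step, decay_steps)  # hold end_lr once decay_steps is reached
    remaining = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * remaining ** power + end_lr


# Start, midpoint and end of the schedule configured in the card
for step in (0, 10248, 20496):
    print(step, polynomial_decay(step))
```

Since the card reports only epoch 0, the run presumably stopped well before the full 20496 decay steps, though the card does not say so.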

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 9.0466 | 4.0067 | 0 |

### Framework versions

- Transformers 4.26.1

- TensorFlow 2.11.0

- Datasets 2.9.0

- Tokenizers 0.13.2

|

Glac1er/Glac1loraRest | Glac1er | 2023-02-17T14:04:51Z | 0 | 0 | null | [

"anime",

"region:us"

]

| null | 2023-02-17T14:03:31Z | ---

tags:

- anime

---

NOTICE: My LoRAs require a large number of tags to look good. I will fix this later on and update all of my LoRAs if everything works out.

# General Information

- [Overview](#overview)

- [Installation](#installation)

- [Usage](#usage)

- [SocialMedia](#socialmedia)

- [Plans for the future](#plans-for-the-future)

# Overview

Welcome to the place where I host my LoRAs. In short, a LoRA is just a checkpoint trained on a specific artstyle or subject that you load into your WebUI and use together with other models.

Although you can use it with any model, the effects of LoRA will vary between them.

Most of the previews use models that come from [WarriorMama777](https://huggingface.co/WarriorMama777/OrangeMixs) .

For more information about them, you can visit the original LoRA repository: https://github.com/cloneofsimo/lora

Every image posted here or on the other sites has metadata in it that you can open in the PNG Info tab of your WebUI to get the prompt of the image.
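Once the metadata has been copied out of the PNG Info tab, its last line is a comma-separated list of settings, as in the sample prompts further down this card. A minimal sketch of turning that line into a dictionary follows; `parse_generation_settings` is an illustrative helper, and the naive split would break if a value itself contained a comma followed by a space.

```python
def parse_generation_settings(line):
    """Split a 'Key: value, Key: value' settings line into a dict of strings."""
    settings = {}
    for item in line.split(", "):
        key, _, value = item.partition(": ")
        settings[key.strip()] = value.strip()
    return settings


# Settings line copied from one of the sample prompts in this card
sample = ("Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, "
          "Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, "
          "Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, "
          "Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)")

settings = parse_generation_settings(sample)
width, height = (int(n) for n in settings["Size"].split("x"))
print(settings["Sampler"], width, height)
```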

Everything I do here is free of charge!

I don't guarantee that my LoRAs will give you good results. If you think they are bad, don't use them.

# Installation

To use them in your WebUI, please install the extension linked below, following the installation guide:

https://github.com/kohya-ss/sd-webui-additional-networks#installation

# Usage

All of my LoRAs are to be used with their original danbooru tag. For example:

```

asuna \(blue archive\)

```
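The backslashes in the tag above matter because the WebUI prompt syntax treats plain parentheses as emphasis markers, so literal parentheses have to be escaped. A small sketch for converting a raw danbooru tag follows; `escape_tag` is an illustrative helper, not part of any extension.

```python
def escape_tag(tag):
    """Backslash-escape parentheses so they are read as literal characters."""
    return tag.replace("(", r"\(").replace(")", r"\)")


print(escape_tag("asuna (blue archive)"))
```

Apply it only once per tag, since running it on an already escaped tag would add a second backslash.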

My LoRAs have suffixes that tell you how much they were trained, using words like "soft" and "hard",

where soft stands for a lower amount of training and hard for a higher amount of training.

A more heavily trained LoRA is harder to modify but provides higher consistency in details and original outfits,

while a less trained one is more flexible but may get details wrong.

All the LoRAs that aren't marked with PRUNED require tagging everything about the character to get its likeness.

You have to tag every part of the character, such as eyes, hair, breasts, accessories, special features, etc.

In theory, this should make the LoRAs more flexible, but you always have to prompt those things, because the character tag doesn't have those features baked into it.

From 1/16 I will test releasing pruned versions, which will not require prompting those things.

The usage of them is also explained in this guide:

https://github.com/kohya-ss/sd-webui-additional-networks#how-to-use

# SocialMedia

Here are some places where you can find my other stuff that I post, or if you feel like buying me a coffee:

[Twitter](https://twitter.com/Trauter8)

[Pixiv](https://www.pixiv.net/en/users/88153216)

[Buymeacoffee](https://www.buymeacoffee.com/Trauter)

# Plans for the future

- Remake all of my LoRAs into pruned versions, which will be more user-friendly and easier to use, training them at 768x768 resolution with a better learning rate.

- After finishing all the LoRAs that I want to make, go over the old ones and try to make them better.

- Accept suggestions for almost every character.

- Maybe get motivation to actually tag outfits.

# LoRAs

- [Genshin Impact](#genshin-impact)

- [Eula](#eula)

- [Barbara](#barbara)

- [Diluc](#diluc)

- [Mona](#mona)

- [Rosaria](#rosaria)

- [Yae Miko](#yae-miko)

- [Raiden Shogun](#raiden-shogun)

- [Kujou Sara](#kujou-sara)

- [Shenhe](#shenhe)

- [Yelan](#yelan)

- [Jean](#jean)

- [Lisa](#lisa)

- [Zhongli](#zhongli)

- [Yoimiya](#yoimiya)

- [Blue Archive](#blue-archive)

- [Rikuhachima Aru](#rikuhachima-aru)

- [Ichinose Asuna](#ichinose-asuna)

- [Fate Grand Order](#fate-grand-order)

- [Minamoto-no-Raikou](#minamoto-no-raikou)

- [Misc. Characters](#misc.-characters)

- [Aponia](#aponia)

- [Reisalin Stout](#reisalin-stout)

- [Artstyles](#artstyles)

- [Pozer](#pozer)

# Genshin Impact

- # Eula

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/1.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/1.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305293076)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Eula)

- # Barbara

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/bar.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/bar.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305435137)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Barbara)

- # Diluc

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/dil.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/dil.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305427945)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Diluc)

- # Mona

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/mon.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/mon.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305428050)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Mona)

- # Rosaria

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ros.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ros.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305428015)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Rosaria)

- # Yae Miko

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/yae.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/yae.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305448948)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/yae%20miko)

- # Raiden Shogun

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ra.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ra.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, raiden shogun, 1girl, breasts, solo, cleavage, kimono, bangs, sash, mole, obi, tassel, blush, large breasts, purple eyes, japanese clothes, long hair, looking at viewer, hand on own chest, hair ornament, purple hair, bridal gauntlets, closed mouth, purple kimono, blue hair, mole under eye, shoulder armor, long sleeves, wide sleeves, mitsudomoe (shape), tomoe (symbol), cowboy shot

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, from behind

Steps: 30, Sampler: DPM++ 2M Karras, CFG scale: 4.5, Seed: 2544310848, Size: 704x384, Model hash: 2bba3136, Denoising strength: 0.5, Clip skip: 2, ENSD: 31337, Hires upscale: 2.05, Hires upscaler: 4x_foolhardy_Remacri

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305313633)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Raiden%20Shogun)

- # Kujou Sara

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ku.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ku.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, kujou sara, 1girl, solo, mask, gloves, bangs, bodysuit, gradient, sidelocks, signature, yellow eyes, bird mask, mask on head, looking at viewer, short hair, black hair, detached sleeves, simple background, japanese clothes, black gloves, black bodysuit, wide sleeves, white background, upper body, gradient background, closed mouth, hair ornament, artist name, elbow gloves

Negative prompt: (worst quality, low quality:1.4)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 3966121353, Size: 512x768, Model hash: 931f9552, Denoising strength: 0.5, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires steps: 20, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305311498)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Kujou%20Sara)

- # Shenhe

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/sh.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/sh.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, shenhe \(genshin impact\), 1girl, solo, breasts, bodysuit, tassel, gloves, bangs, braid, outdoors, bird, jewelry, earrings, sky, breast curtain, long hair, hair over one eye, covered navel, blue eyes, looking at viewer, hair ornament, large breasts, shoulder cutout, clothing cutout, very long hair, hip vent, braided ponytail, partially fingerless gloves, black bodysuit, tassel earrings, black gloves, gold trim, cowboy shot, white hair

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 573332187, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 2, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305307599)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Shenhe)

- # Yelan

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/10.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/10.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, yelan \(genshin impact\), 1girl, breasts, solo, bangs, armpits, smile, sky, cleavage, jewelry, gloves, jacket, dice, mole, cloud, grin, dress, blush, earrings, thighs, tassel, sleeveless, day, outdoors, large breasts, looking at viewer, green eyes, arms up, short hair, blue hair, vision (genshin impact), fur trim, white jacket, blue sky, mole on breast, arms behind head, bob cut, multicolored hair, black hair, fur-trimmed jacket, elbow gloves, bare shoulders, blue dress, parted lips, diagonal bangs, clothing cutout, pelvic curtain, asymmetrical gloves

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name

Steps: 23, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 575500509, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.58, Clip skip: 2, ENSD: 31337, Hires upscale: 2.4, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305296897)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Yelan)

- # Jean

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/333.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/333.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, jean \(genshin impact\), 1girl, breasts, solo, cleavage, strapless, smile, ponytail, bangs, jewelry, earrings, bow, capelet, signature, sidelocks, cape, corset, shiny, blonde hair, long hair, upper body, detached sleeves, purple eyes, hair between eyes, hair bow, parted lips, looking to the side, large breasts, detached collar, medium breasts, blue capelet, white background, black bow, blue eyes, bare shoulders, simple background

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 7.5, Seed: 32930253, Size: 512x768, Model hash: ffa7b160, Denoising strength: 0.59, Clip skip: 2, ENSD: 31337, Hires upscale: 1.85, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305307594)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Jean)

- # Lisa

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/lis.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/lis.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, lisa \(genshin impact\), 1girl, solo, hat, breasts, gloves, cleavage, flower, smile, bangs, dress, rose, jewelry, witch, capelet, green eyes, witch hat, brown hair, purple headwear, looking at viewer, white background, large breasts, long hair, simple background, black gloves, purple flower, hair between eyes, upper body, purple rose, parted lips, purple capelet, hat flower, multicolored dress, hair ornament, multicolored clothes, vision (genshin impact)

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, worst quality, low quality, extra digits, loli, loli face

Steps: 23, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 350134479, Size: 512x768, Model hash: ffa7b160, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.85, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305290865)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Lisa)

- # Zhongli

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/zho.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/zho.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, zhongli \(genshin impact\), solo, 1boy, bangs, jewelry, tassel, earrings, ponytail, low ponytail, gloves, necktie, jacket, shirt, formal, petals, suit, makeup, eyeliner, eyeshadow, male focus, long hair, brown hair, multicolored hair, long sleeves, tassel earrings, single earring, collared shirt, hair between eyes, black gloves, closed mouth, yellow eyes, gradient hair, orange hair, simple background

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, worst quality, low quality, extra digits, loli, loli face

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 7, Seed: 88418604, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.58, Clip skip: 2, ENSD: 31337, Hires upscale: 2, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305311423)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Zhongli)

- # Yoimiya

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/Yoi.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/Yoi.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305448498)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Yoimiya)
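Each sample prompt above ends with a settings line of the form `Steps: ..., Sampler: ..., Size: ...`. The following is a minimal sketch, not part of the original card, of how such a line could be split into key/value pairs and how the nominal high-res output size follows from `Size` and `Hires upscale`. The helper names `parse_generation_params` and `hires_target_size` are hypothetical, and the sketch assumes that no value contains `", "`; the image UI may also round the final size, which is not modelled here.

```python
def parse_generation_params(line: str) -> dict[str, str]:
    """Split a 'Key: value, Key: value' settings line into a dictionary."""
    params = {}
    for field in line.split(", "):
        # Split each field at the first ': ' into a key and its value.
        key, _, value = field.partition(": ")
        params[key.strip()] = value.strip()
    return params


def hires_target_size(params: dict[str, str]) -> tuple[float, float]:
    """Multiply the base Size by the Hires upscale factor (nominal, unrounded)."""
    width, height = (int(v) for v in params["Size"].split("x"))
    # Entries without a hires pass have no 'Hires upscale' key; treat that as 1x.
    scale = float(params.get("Hires upscale", "1"))
    return width * scale, height * scale


# Settings line copied from the last sample prompt above.
settings_line = (
    "Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, "
    "Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, "
    "Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, "
    "Hires upscaler: Latent (nearest-exact)"
)

params = parse_generation_params(settings_line)
target_w, target_h = hires_target_size(params)
print(params["Sampler"], params["CFG scale"], params["Seed"])
print(f"nominal hires size: {target_w:.1f} x {target_h:.1f}")
```

A parsed dictionary like this makes it easier to compare settings across entries, for example to see which previews share a model hash.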

# Blue Archive

- # Rikuhachima Aru

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/22.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/22.png)

<details>

<summary>Sample Prompt</summary>

<pre>

aru \(blue archive\), masterpiece, best quality, 1girl, solo, horns, skirt, gloves, shirt, halo, window, breasts, blush, sweatdrop, ribbon, coat, bangs, :d, smile, indoors, standing, plant, thighs, sweat, jacket, day, sunlight, long hair, white shirt, white gloves, black skirt, looking at viewer, open mouth, long sleeves, red ribbon, fur trim, neck ribbon, red hair, fur-trimmed coat, collared shirt, orange eyes, medium breasts, brown coat, hands up, side slit, coat on shoulders, v-shaped eyebrows, yellow eyes, potted plant, fur collar, shirt tucked in, demon horns, high-waist skirt, dress shirt

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 1190296645, Size: 512x768, Model hash: ffa7b160, Denoising strength: 0.58, Clip skip: 2, ENSD: 31337, Hires upscale: 1.85, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305293051)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Blue-Archive/Rikuhachima%20Aru)

- # Ichinose Asuna

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/asu.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/asu.png)

<details>

<summary>Sample Prompt</summary>

<pre>

photorealistic, (hyperrealistic:1.2), (extremely detailed CG unity 8k wallpaper), (ultra-detailed), (mature female:1.2), masterpiece, best quality, asuna \(blue archive\), 1girl, breasts, solo, gloves, pantyhose, ass, leotard, smile, tail, halo, grin, blush, bangs, sideboob, highleg, standing, mole, strapless, ribbon, thighs, animal ears, playboy bunny, rabbit ears, long hair, white gloves, very long hair, large breasts, high heels, blue leotard, hair over one eye, fake animal ears, blue eyes, looking at viewer, white footwear, rabbit tail, official alternate costume, full body, elbow gloves, simple background, white background, absurdly long hair, bare shoulders, detached collar, thighband pantyhose, leaning forward, highleg leotard, strapless leotard, hair ribbon, brown pantyhose, black pantyhose, mole on breast, light brown hair, brown hair, looking back, fake tail

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 2052579935, Size: 512x768, Model hash: ffa7b160, Clip skip: 2, ENSD: 31337

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305292996)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Blue-Archive/Ichinose%20Asuna)

# Fate Grand Order

- # Minamoto-no-Raikou

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/3.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/3.png)

<details>

<summary>Sample Prompt</summary>

<pre>

mature female, masterpiece, best quality, minamoto no raikou \(fate\), 1girl, breasts, solo, bodysuit, gloves, bangs, smile, rope, heart, blush, thighs, armor, kote, long hair, purple hair, fingerless gloves, purple eyes, large breasts, very long hair, looking at viewer, parted bangs, ribbed sleeves, black gloves, arm guards, covered navel, low-tied long hair, purple bodysuit, japanese armor

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 7.5, Seed: 3383453781, Size: 512x768, Model hash: ffa7b160, Denoising strength: 0.59, Clip skip: 2, ENSD: 31337, Hires upscale: 2, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305290900)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Fate-Grand-Order/Minamoto-no-Raikou)

# Misc. Characters

- # Aponia

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/apo.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/apo.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305445819)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Misc.%20Characters/Aponia)

- # Reisalin Stout

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ryza.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ryza.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305448553)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Misc.%20Characters/reisalin%20stout)

# Artstyles

- # Pozer

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/art.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/art.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305445399)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Artstyles/Pozer) |

lizziedearden/my_aime_gpt2_clm-model | lizziedearden | 2023-02-17T13:58:08Z | 4 | 0 | transformers | [

"transformers",

"tf",

"gpt2",

"text-generation",

"generated_from_keras_callback",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-generation | 2023-02-17T12:09:15Z | ---

license: mit

tags:

- generated_from_keras_callback

model-index:

- name: lizziedearden/my_aime_gpt2_clm-model

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# lizziedearden/my_aime_gpt2_clm-model

This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 2.9895

- Validation Loss: 2.9968

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 2e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 4.3162 | 3.7395 | 0 |

| 3.4696 | 3.2608 | 1 |

| 2.9895 | 2.9968 | 2 |
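The losses in the table can be read as perplexities, which are often easier to compare across language models. The sketch below is not part of the generated card. It assumes the reported values are mean per-token cross-entropy in nats, which is the usual convention for causal language modelling but is not stated by the card.

```python
import math

# Final-epoch values copied from the training results table.
train_loss = 2.9895
validation_loss = 2.9968

# Assumption: losses are mean per-token cross-entropy in nats,
# so perplexity is simply exp(loss).
train_perplexity = math.exp(train_loss)
validation_perplexity = math.exp(validation_loss)

print(f"train perplexity:      {train_perplexity:.2f}")
print(f"validation perplexity: {validation_perplexity:.2f}")
```

Under that assumption the final validation perplexity is roughly 20, down from about 42 after the first epoch (`exp(3.7395)`).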

### Framework versions

- Transformers 4.26.1

- TensorFlow 2.9.2

- Datasets 2.9.0

- Tokenizers 0.13.2

|

Tritkoman/EnglishtoAncientGreekV5 | Tritkoman | 2023-02-17T13:56:46Z | 3 | 0 | transformers | [

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"autotrain",

"translation",

"unk",

"dataset:Tritkoman/autotrain-data-apapaqjajq",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| translation | 2023-02-17T13:35:19Z | ---

tags:

- autotrain

- translation

language:

- unk

- unk

datasets:

- Tritkoman/autotrain-data-apapaqjajq

co2_eq_emissions:

emissions: 0.10700184364056661

---

# Model Trained Using AutoTrain

- Problem type: Translation

- Model ID: 3548795734

- CO2 Emissions (in grams): 0.1070

## Validation Metrics

- Loss: 1.703

- SacreBLEU: 7.516

- Gen len: 25.710 |

Ithai/Reinforce-Pixelcopter-PLE-v0 | Ithai | 2023-02-17T13:49:21Z | 0 | 0 | null | [

"Pixelcopter-PLE-v0",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

]

| reinforcement-learning | 2023-02-16T15:53:28Z | ---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-Pixelcopter-PLE-v0

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Pixelcopter-PLE-v0

type: Pixelcopter-PLE-v0

metrics:

- type: mean_reward

value: 39.05 +/- 37.14

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0**.

To learn how to use this model and train your own, check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction
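The `mean_reward` entry in the metadata has the form `mean +/- standard deviation` over a set of evaluation episodes. The sketch below shows how such a figure could be produced. The episode rewards are hypothetical values for illustration, not this agent's actual results, and the use of the population standard deviation (NumPy's default) is an assumption.

```python
from statistics import mean, pstdev


def summarize_rewards(episode_rewards):
    """Return (mean, population standard deviation) of per-episode rewards."""
    return mean(episode_rewards), pstdev(episode_rewards)


# Hypothetical per-episode returns, for illustration only.
episode_rewards = [5.0, 12.0, 80.0, 33.0, 61.0]

mean_reward, std_reward = summarize_rewards(episode_rewards)
print(f"mean_reward: {mean_reward:.2f} +/- {std_reward:.2f}")
```

A standard deviation close to the mean, as in the reported `39.05 +/- 37.14`, indicates that per-episode scores vary widely.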

|

YoaneBailiang/STL | YoaneBailiang | 2023-02-17T13:29:08Z | 0 | 0 | null | [

"license:creativeml-openrail-m",

"region:us"

]

| null | 2023-02-17T13:29:08Z | ---

license: creativeml-openrail-m

---

|

katkha/whisper-small-ka | katkha | 2023-02-17T12:22:05Z | 9 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"whisper",

"automatic-speech-recognition",

"whisper-event",

"generated_from_trainer",

"ka",

"dataset:mozilla-foundation/common_voice_11_0",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

]

| automatic-speech-recognition | 2023-02-13T11:25:36Z | ---

language:

- ka

license: apache-2.0

tags:

- whisper-event

- generated_from_trainer

datasets:

- mozilla-foundation/common_voice_11_0

model-index:

- name: Whisper Small ka - Davit Barbakadze

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Whisper Small ka - Davit Barbakadze

This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 dataset.

It achieves the following results on the evaluation set:

- eval_loss: 0.1652

- eval_wer: 47.0800

- eval_runtime: 1493.5786

- eval_samples_per_second: 1.673

- eval_steps_per_second: 0.21

- epoch: 13.01

- step: 1000

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 32

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- training_steps: 5000

- mixed_precision_training: Native AMP
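Two of the values above follow from the others. The sketch below, which is not part of the generated card, reproduces the total train batch size and the shape of a linear schedule with warmup. It assumes a single device and the common rule of a linear ramp from 0 to the base rate during warmup, then a linear decay to 0 at the last step; the function name `linear_schedule_with_warmup` is hypothetical.

```python
def linear_schedule_with_warmup(step, base_lr=1e-05, warmup_steps=500, total_steps=5000):
    """Learning rate at a given optimizer step for a linear warmup/decay schedule."""
    if step < warmup_steps:
        # Ramp up linearly from 0 to base_lr.
        return base_lr * step / warmup_steps
    # Decay linearly from base_lr at the end of warmup to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / (total_steps - warmup_steps)


# Assumption: one device, so the total batch is per-device batch x accumulation steps.
train_batch_size = 32
gradient_accumulation_steps = 2
total_train_batch_size = train_batch_size * gradient_accumulation_steps

print("total_train_batch_size:", total_train_batch_size)
for step in (0, 250, 500, 1000, 5000):
    print(step, linear_schedule_with_warmup(step))
```

Under these assumptions, the learning rate at step 1000, where the evaluation results above were recorded, would be about 8.9e-06.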

### Framework versions

- Transformers 4.27.0.dev0

- Pytorch 1.13.1+cu116

- Datasets 2.9.1.dev0

- Tokenizers 0.13.2

|

MunSu/xlm-roberta-base-finetuned-panx-de | MunSu | 2023-02-17T12:18:59Z | 9 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"xlm-roberta",

"token-classification",

"generated_from_trainer",

"dataset:xtreme",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| token-classification | 2023-02-14T23:58:31Z | ---

license: mit

tags:

- generated_from_trainer

datasets:

- xtreme

metrics:

- f1

model-index:

- name: xlm-roberta-base-finetuned-panx-de

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: xtreme

type: xtreme

config: PAN-X.fr

split: validation

args: PAN-X.fr

metrics:

- name: F1

type: f1

value: 0.8525033829499323

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlm-roberta-base-finetuned-panx-de

This model is a fine-tuned version of [MunSu/xlm-roberta-base-finetuned-panx-de](https://huggingface.co/MunSu/xlm-roberta-base-finetuned-panx-de) on the xtreme dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4005

- F1: 0.8525

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| No log | 1.0 | 500 | 0.3080 | 0.8254 |

| No log | 2.0 | 1000 | 0.3795 | 0.8448 |

| No log | 3.0 | 1500 | 0.4005 | 0.8525 |

### Framework versions

- Transformers 4.26.0

- Pytorch 1.8.0

- Datasets 2.9.0

- Tokenizers 0.13.2

|

ZhihongDeng/Reinforce-CartPole-v1 | ZhihongDeng | 2023-02-17T12:07:05Z | 0 | 0 | null | [

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

]

| reinforcement-learning | 2023-02-17T12:06:55Z | ---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-CartPole-v1

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: CartPole-v1

type: CartPole-v1

metrics:

- type: mean_reward

value: 500.00 +/- 0.00

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1**.

To learn how to use this model and train your own, check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

jiaoqsh/mbart-large-50-finetuned-stocks-event-1 | jiaoqsh | 2023-02-17T12:02:11Z | 10 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"mbart",

"text2text-generation",

"summarization",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| summarization | 2023-02-17T11:42:33Z | ---

license: mit

tags:

- summarization

- generated_from_trainer

metrics:

- rouge

model-index:

- name: mbart-large-50-finetuned-stocks-event-1

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# mbart-large-50-finetuned-stocks-event-1

This model is a fine-tuned version of [jiaoqsh/mbart-large-50-finetuned-stock-dividend](https://huggingface.co/jiaoqsh/mbart-large-50-finetuned-stock-dividend) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1419

- Rouge1: 0.9120

- Rouge2: 0.8056

- Rougel: 0.9120

- Rougelsum: 0.9120

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5.6e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 8

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum |

|:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:|

| 0.3179 | 1.0 | 20 | 0.1784 | 0.8727 | 0.7639 | 0.8704 | 0.8727 |

| 0.0569 | 2.0 | 40 | 0.0822 | 0.9167 | 0.8333 | 0.9167 | 0.9144 |

| 0.0284 | 3.0 | 60 | 0.1842 | 0.9120 | 0.8194 | 0.9144 | 0.9120 |

| 0.0153 | 4.0 | 80 | 0.1448 | 0.9236 | 0.8472 | 0.9213 | 0.9236 |

| 0.0066 | 5.0 | 100 | 0.1271 | 0.9444 | 0.875 | 0.9421 | 0.9444 |

| 0.0013 | 6.0 | 120 | 0.1381 | 0.9190 | 0.8194 | 0.9213 | 0.9213 |

| 0.0083 | 7.0 | 140 | 0.1414 | 0.9190 | 0.8194 | 0.9213 | 0.9213 |

| 0.0002 | 8.0 | 160 | 0.1419 | 0.9120 | 0.8056 | 0.9120 | 0.9120 |
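The reported evaluation results correspond to the final epoch, which is not the best row in the table. The sketch below, not part of the generated card, picks out the best epoch by validation loss and by Rouge1 from the values above.

```python
# (epoch, validation_loss, rouge1, rouge2) copied from the training results table.
results = [
    (1, 0.1784, 0.8727, 0.7639),
    (2, 0.0822, 0.9167, 0.8333),
    (3, 0.1842, 0.9120, 0.8194),
    (4, 0.1448, 0.9236, 0.8472),
    (5, 0.1271, 0.9444, 0.8750),
    (6, 0.1381, 0.9190, 0.8194),
    (7, 0.1414, 0.9190, 0.8194),
    (8, 0.1419, 0.9120, 0.8056),
]

# Lower is better for loss, higher is better for ROUGE.
best_by_loss = min(results, key=lambda row: row[1])
best_by_rouge1 = max(results, key=lambda row: row[2])

print("lowest validation loss: epoch", best_by_loss[0], "loss", best_by_loss[1])
print("highest Rouge1:         epoch", best_by_rouge1[0], "Rouge1", best_by_rouge1[2])
```

With the Hugging Face `Trainer`, keeping the best checkpoint rather than the last one is typically done through the `load_best_model_at_end` and `metric_for_best_model` training arguments. Whether they were used for this run is not stated in the card.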

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu116

- Datasets 2.9.0

- Tokenizers 0.13.2

|

alexrink/t5-small-finetuned-xsum | alexrink | 2023-02-17T11:44:18Z | 7 | 0 | transformers | [

"transformers",

"tf",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text2text-generation | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: alexrink/t5-small-finetuned-xsum

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# alexrink/t5-small-finetuned-xsum

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 5.6399

- Validation Loss: 6.0028

- Epoch: 19

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 0.2, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 11.4991 | 6.9902 | 0 |

| 6.5958 | 6.2502 | 1 |

| 6.1443 | 6.1638 | 2 |

| 5.9379 | 6.0765 | 3 |

| 5.7739 | 5.9393 | 4 |

| 5.7033 | 6.0061 | 5 |

| 5.7070 | 5.9305 | 6 |

| 5.7000 | 5.9698 | 7 |

| 5.6888 | 5.9223 | 8 |

| 5.6657 | 5.9773 | 9 |

| 5.6827 | 5.9734 | 10 |

| 5.6380 | 5.9428 | 11 |

| 5.6532 | 5.9799 | 12 |

| 5.6617 | 5.9974 | 13 |

| 5.6402 | 5.9563 | 14 |

| 5.6710 | 5.9926 | 15 |

| 5.6999 | 5.9764 | 16 |

| 5.6573 | 5.9557 | 17 |

| 5.6297 | 5.9678 | 18 |

| 5.6399 | 6.0028 | 19 |

### Framework versions

- Transformers 4.26.1

- TensorFlow 2.11.0

- Datasets 2.9.0

- Tokenizers 0.13.2

|

Tincando/my_awesome_neo_gpt-model | Tincando | 2023-02-17T11:33:12Z | 12 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"gpt_neo",

"text-generation",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-generation | 2023-02-16T19:00:41Z | ---

license: mit

tags:

- generated_from_trainer

model-index:

- name: my_awesome_neo_gpt-model

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# my_awesome_neo_gpt-model

This model is a fine-tuned version of [EleutherAI/gpt-neo-125M](https://huggingface.co/EleutherAI/gpt-neo-125M) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 3.4912

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:----:|:---------------:|
| 3.3957 | 1.0 | 1109 | 3.4908 |
| 3.2523 | 2.0 | 2218 | 3.4871 |
| 3.1771 | 3.0 | 3327 | 3.4912 |
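
The `linear` scheduler listed in the hyperparameters decays the learning rate from its initial value to zero over the whole run. The sketch below reproduces that shape in plain Python using the card's values (2e-05 over 3327 steps). It assumes no warmup, since the card lists none, and `linear_lr` is a hypothetical helper rather than a Transformers function.

```python
def linear_lr(step, base_lr=2e-05, total_steps=3327, warmup_steps=0):
    """Learning rate at `step` for a linear warmup/decay schedule."""
    if step < warmup_steps:
        # Ramp up linearly during warmup (unused here: warmup_steps is assumed 0).
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly to zero by the final step.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Learning rate at the start and at the end of each epoch in the table above.
for step in (0, 1109, 2218, 3327):
    print(step, linear_lr(step))
```

With these values the rate has fallen to two thirds of its initial value after the first epoch and reaches zero at step 3327.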

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu116

- Datasets 2.9.0

- Tokenizers 0.13.2

|

danilyef/dqn-SpaceInvadersNoFrameskip-v4 | danilyef | 2023-02-17T11:32:00Z | 0 | 0 | stable-baselines3 | [

"stable-baselines3",

"SpaceInvadersNoFrameskip-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

]

| reinforcement-learning | 2023-02-17T11:31:13Z | ---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: DQN

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: SpaceInvadersNoFrameskip-v4

type: SpaceInvadersNoFrameskip-v4

metrics:

- type: mean_reward

value: 717.50 +/- 348.83

name: mean_reward

verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

Download the model into the `logs/` folder and run the agent. With the pip installation above (`pip install rl_zoo3`), these commands work from any directory:

```bash

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga danilyef -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```bash

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga danilyef

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 1000000.0),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
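
The `exploration_fraction` and `exploration_final_eps` entries describe a linear epsilon-greedy schedule. The sketch below shows how epsilon would evolve over the 1,000,000 timesteps under these settings. It assumes an initial epsilon of 1.0, which is not listed in the card, and `exploration_rate` is a hypothetical helper rather than a Stable-Baselines3 function.

```python
def exploration_rate(step, total_timesteps=1_000_000, initial_eps=1.0,
                     final_eps=0.01, exploration_fraction=0.1):
    """Epsilon for epsilon-greedy action selection at a given timestep."""
    progress = step / total_timesteps
    if progress >= exploration_fraction:
        # After the exploration phase, epsilon stays at its final value.
        return final_eps
    # During the exploration phase, interpolate linearly from initial to final.
    return initial_eps + progress * (final_eps - initial_eps) / exploration_fraction


for step in (0, 50_000, 100_000, 500_000):
    print(step, round(exploration_rate(step), 4))
```

Under these assumptions epsilon reaches 0.01 at step 100,000, which coincides with `learning_starts`, so gradient updates begin just as the exploration rate settles at its final value.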

|

Al3ksandra/distilbert-base-uncased-finetuned-emotion | Al3ksandra | 2023-02-17T11:10:51Z | 3 | 0 | transformers | [

"transformers",

"pytorch",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2023-02-14T12:21:06Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

model-index:

- name: distilbert-base-uncased-finetuned-emotion

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- eval_loss: 0.3158

- eval_accuracy: 0.902

- eval_f1: 0.8997

- eval_runtime: 102.1735

- eval_samples_per_second: 19.575

- eval_steps_per_second: 0.313

- epoch: 1.0

- step: 250

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2
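
The evaluation throughput figures and the batch sizes above can be cross-checked with a little arithmetic. The sketch below uses only values copied from this card; it assumes a single device and no gradient accumulation, neither of which is stated, and the variable names are illustrative.

```python
import math

# Values copied from the card.
eval_runtime = 102.1735            # seconds
eval_samples_per_second = 19.575
eval_steps_per_second = 0.313
eval_batch_size = 64
train_batch_size = 64
train_steps = 250                  # step count reported at epoch 1.0

# Throughput times runtime gives the approximate evaluation set size
# and the number of evaluation batches.
eval_samples = round(eval_runtime * eval_samples_per_second)
eval_steps = round(eval_runtime * eval_steps_per_second)

# Upper bound on training examples seen in one epoch
# (the last batch may be partial).
train_examples_per_epoch = train_steps * train_batch_size

print(eval_samples, eval_steps, math.ceil(eval_samples / eval_batch_size))
print(train_examples_per_epoch)
```

The figures are mutually consistent: about 2,000 evaluation samples in 32 batches of 64, and at most 16,000 training examples per epoch.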

### Framework versions

- Transformers 4.24.0

- Pytorch 1.13.0+cpu

- Datasets 2.9.0

- Tokenizers 0.13.2

|

akoshel/Reinforce-PixelCopter | akoshel | 2023-02-17T11:06:34Z | 0 | 0 | null | [

"Pixelcopter-PLE-v0",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

]

| reinforcement-learning | 2023-02-17T10:38:59Z | ---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-PixelCopter

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Pixelcopter-PLE-v0

type: Pixelcopter-PLE-v0

metrics:

- type: mean_reward

value: 25.30 +/- 17.07

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0**.

To learn how to use this model and train your own, see Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

akadhim-ai/dilbert-comic-model-v1-2_2k | akadhim-ai | 2023-02-17T10:58:58Z | 3 | 0 | diffusers | [

"diffusers",

"text-to-image",

"en",

"dataset:Ali-fb/dilbert-comic-sample-dataset",

"license:openrail",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

]

| text-to-image | 2023-02-15T01:53:27Z | ---

license: openrail

datasets:

- Ali-fb/dilbert-comic-sample-dataset

language:

- en

metrics:

- accuracy

library_name: diffusers

pipeline_tag: text-to-image

---

# DreamBooth model for the Dilbert concept trained on the Ali-fb/dilbert-comic-sample-dataset dataset.

This is a Stable Diffusion model fine-tuned on the Dilbert concept. It can be used by modifying the `instance_prompt`: **dilbert**

## Description

A DilbertDiffusion model

## Usage

```python

from diffusers import StableDiffusionPipeline

pipeline = StableDiffusionPipeline.from_pretrained('Ali-fb/dilbert-comic-model-v1-2_2k')

image = pipeline("dilbert").images[0]  # the pipeline needs a prompt; "dilbert" is the instance prompt

image

``` |

ybelkada/gpt-neo-125m-detoxified-long-context | ybelkada | 2023-02-17T10:58:33Z | 14 | 0 | transformers | [

"transformers",

"pytorch",

"gpt_neo",

"text-generation",

"trl",

"reinforcement-learning",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| reinforcement-learning | 2023-02-13T19:45:08Z | ---

license: apache-2.0

tags:

- trl

- transformers

- reinforcement-learning

---

# TRL Model

This is a [TRL language model](https://github.com/lvwerra/trl) that has been fine-tuned with reinforcement learning to

guide the model outputs according to a value function or human feedback. The model can be used for text generation.