modelId

stringlengths 4

112

| sha

stringlengths 40

40

| lastModified

stringlengths 24

24

| tags

list | pipeline_tag

stringclasses 29

values | private

bool 1

class | author

stringlengths 2

38

⌀ | config

null | id

stringlengths 4

112

| downloads

float64 0

36.8M

⌀ | likes

float64 0

712

⌀ | library_name

stringclasses 17

values | __index_level_0__

int64 0

38.5k

| readme

stringlengths 0

186k

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

delvan/DialoGPT-medium-DwightV1

|

eba600f8bb554a42b871329fdc9569687afd8e16

|

2021-10-24T20:29:11.000Z

|

[

"pytorch",

"gpt2",

"text-generation",

"transformers",

"conversational"

] |

conversational

| false |

delvan

| null |

delvan/DialoGPT-medium-DwightV1

| 467 | null |

transformers

| 2,400 |

---

tags:

- conversational

---

#DialoGPT medium based model of Dwight Schrute, trained with 10 context lines of history for 20 epochs.

|

dhtocks/Topic-Classification

|

6ff7f547583d0c48b862f349f9ca11747731ad61

|

2022-01-12T03:14:00.000Z

|

[

"pytorch",

"roberta",

"text-classification",

"transformers"

] |

text-classification

| false |

dhtocks

| null |

dhtocks/Topic-Classification

| 467 | null |

transformers

| 2,401 |

Entry not found

|

facebook/nllb-200-1.3B

|

592daca35b1d4712f683a3401240ed61f0854685

|

2022-07-19T15:46:08.000Z

|

[

"pytorch",

"m2m_100",

"text2text-generation",

"ace",

"acm",

"acq",

"aeb",

"af",

"ajp",

"ak",

"als",

"am",

"apc",

"ar",

"ars",

"ary",

"arz",

"as",

"ast",

"awa",

"ayr",

"azb",

"azj",

"ba",

"bm",

"ban",

"be",

"bem",

"bn",

"bho",

"bjn",

"bo",

"bs",

"bug",

"bg",

"ca",

"ceb",

"cs",

"cjk",

"ckb",

"crh",

"cy",

"da",

"de",

"dik",

"dyu",

"dz",

"el",

"en",

"eo",

"et",

"eu",

"ee",

"fo",

"fj",

"fi",

"fon",

"fr",

"fur",

"fuv",

"gaz",

"gd",

"ga",

"gl",

"gn",

"gu",

"ht",

"ha",

"he",

"hi",

"hne",

"hr",

"hu",

"hy",

"ig",

"ilo",

"id",

"is",

"it",

"jv",

"ja",

"kab",

"kac",

"kam",

"kn",

"ks",

"ka",

"kk",

"kbp",

"kea",

"khk",

"km",

"ki",

"rw",

"ky",

"kmb",

"kmr",

"knc",

"kg",

"ko",

"lo",

"lij",

"li",

"ln",

"lt",

"lmo",

"ltg",

"lb",

"lua",

"lg",

"luo",

"lus",

"lvs",

"mag",

"mai",

"ml",

"mar",

"min",

"mk",

"mt",

"mni",

"mos",

"mi",

"my",

"nl",

"nn",

"nb",

"npi",

"nso",

"nus",

"ny",

"oc",

"ory",

"pag",

"pa",

"pap",

"pbt",

"pes",

"plt",

"pl",

"pt",

"prs",

"quy",

"ro",

"rn",

"ru",

"sg",

"sa",

"sat",

"scn",

"shn",

"si",

"sk",

"sl",

"sm",

"sn",

"sd",

"so",

"st",

"es",

"sc",

"sr",

"ss",

"su",

"sv",

"swh",

"szl",

"ta",

"taq",

"tt",

"te",

"tg",

"tl",

"th",

"ti",

"tpi",

"tn",

"ts",

"tk",

"tum",

"tr",

"tw",

"tzm",

"ug",

"uk",

"umb",

"ur",

"uzn",

"vec",

"vi",

"war",

"wo",

"xh",

"ydd",

"yo",

"yue",

"zh",

"zsm",

"zu",

"dataset:flores-200",

"transformers",

"nllb",

"license:cc-by-nc-4.0",

"autotrain_compatible"

] |

text2text-generation

| false |

facebook

| null |

facebook/nllb-200-1.3B

| 467 | 1 |

transformers

| 2,402 |

---

language:

- ace

- acm

- acq

- aeb

- af

- ajp

- ak

- als

- am

- apc

- ar

- ars

- ary

- arz

- as

- ast

- awa

- ayr

- azb

- azj

- ba

- bm

- ban

- be

- bem

- bn

- bho

- bjn

- bo

- bs

- bug

- bg

- ca

- ceb

- cs

- cjk

- ckb

- crh

- cy

- da

- de

- dik

- dyu

- dz

- el

- en

- eo

- et

- eu

- ee

- fo

- fj

- fi

- fon

- fr

- fur

- fuv

- gaz

- gd

- ga

- gl

- gn

- gu

- ht

- ha

- he

- hi

- hne

- hr

- hu

- hy

- ig

- ilo

- id

- is

- it

- jv

- ja

- kab

- kac

- kam

- kn

- ks

- ka

- kk

- kbp

- kea

- khk

- km

- ki

- rw

- ky

- kmb

- kmr

- knc

- kg

- ko

- lo

- lij

- li

- ln

- lt

- lmo

- ltg

- lb

- lua

- lg

- luo

- lus

- lvs

- mag

- mai

- ml

- mar

- min

- mk

- mt

- mni

- mos

- mi

- my

- nl

- nn

- nb

- npi

- nso

- nus

- ny

- oc

- ory

- pag

- pa

- pap

- pbt

- pes

- plt

- pl

- pt

- prs

- quy

- ro

- rn

- ru

- sg

- sa

- sat

- scn

- shn

- si

- sk

- sl

- sm

- sn

- sd

- so

- st

- es

- sc

- sr

- ss

- su

- sv

- swh

- szl

- ta

- taq

- tt

- te

- tg

- tl

- th

- ti

- tpi

- tn

- ts

- tk

- tum

- tr

- tw

- tzm

- ug

- uk

- umb

- ur

- uzn

- vec

- vi

- war

- wo

- xh

- ydd

- yo

- yue

- zh

- zsm

- zu

language_details: "ace_Arab, ace_Latn, acm_Arab, acq_Arab, aeb_Arab, afr_Latn, ajp_Arab, aka_Latn, amh_Ethi, apc_Arab, arb_Arab, ars_Arab, ary_Arab, arz_Arab, asm_Beng, ast_Latn, awa_Deva, ayr_Latn, azb_Arab, azj_Latn, bak_Cyrl, bam_Latn, ban_Latn,bel_Cyrl, bem_Latn, ben_Beng, bho_Deva, bjn_Arab, bjn_Latn, bod_Tibt, bos_Latn, bug_Latn, bul_Cyrl, cat_Latn, ceb_Latn, ces_Latn, cjk_Latn, ckb_Arab, crh_Latn, cym_Latn, dan_Latn, deu_Latn, dik_Latn, dyu_Latn, dzo_Tibt, ell_Grek, eng_Latn, epo_Latn, est_Latn, eus_Latn, ewe_Latn, fao_Latn, pes_Arab, fij_Latn, fin_Latn, fon_Latn, fra_Latn, fur_Latn, fuv_Latn, gla_Latn, gle_Latn, glg_Latn, grn_Latn, guj_Gujr, hat_Latn, hau_Latn, heb_Hebr, hin_Deva, hne_Deva, hrv_Latn, hun_Latn, hye_Armn, ibo_Latn, ilo_Latn, ind_Latn, isl_Latn, ita_Latn, jav_Latn, jpn_Jpan, kab_Latn, kac_Latn, kam_Latn, kan_Knda, kas_Arab, kas_Deva, kat_Geor, knc_Arab, knc_Latn, kaz_Cyrl, kbp_Latn, kea_Latn, khm_Khmr, kik_Latn, kin_Latn, kir_Cyrl, kmb_Latn, kon_Latn, kor_Hang, kmr_Latn, lao_Laoo, lvs_Latn, lij_Latn, lim_Latn, lin_Latn, lit_Latn, lmo_Latn, ltg_Latn, ltz_Latn, lua_Latn, lug_Latn, luo_Latn, lus_Latn, mag_Deva, mai_Deva, mal_Mlym, mar_Deva, min_Latn, mkd_Cyrl, plt_Latn, mlt_Latn, mni_Beng, khk_Cyrl, mos_Latn, mri_Latn, zsm_Latn, mya_Mymr, nld_Latn, nno_Latn, nob_Latn, npi_Deva, nso_Latn, nus_Latn, nya_Latn, oci_Latn, gaz_Latn, ory_Orya, pag_Latn, pan_Guru, pap_Latn, pol_Latn, por_Latn, prs_Arab, pbt_Arab, quy_Latn, ron_Latn, run_Latn, rus_Cyrl, sag_Latn, san_Deva, sat_Beng, scn_Latn, shn_Mymr, sin_Sinh, slk_Latn, slv_Latn, smo_Latn, sna_Latn, snd_Arab, som_Latn, sot_Latn, spa_Latn, als_Latn, srd_Latn, srp_Cyrl, ssw_Latn, sun_Latn, swe_Latn, swh_Latn, szl_Latn, tam_Taml, tat_Cyrl, tel_Telu, tgk_Cyrl, tgl_Latn, tha_Thai, tir_Ethi, taq_Latn, taq_Tfng, tpi_Latn, tsn_Latn, tso_Latn, tuk_Latn, tum_Latn, tur_Latn, twi_Latn, tzm_Tfng, uig_Arab, ukr_Cyrl, umb_Latn, urd_Arab, uzn_Latn, vec_Latn, vie_Latn, war_Latn, wol_Latn, xho_Latn, ydd_Hebr, yor_Latn, yue_Hant, zho_Hans, zho_Hant, zul_Latn"

tags:

- nllb

license: "cc-by-nc-4.0"

datasets:

- flores-200

metrics:

- bleu

- spbleu

- chrf++

---

# NLLB-200

This is the model card of NLLB-200's 1.3B variant.

Here are the [metrics](https://tinyurl.com/nllb200dense1bmetrics) for that particular checkpoint.

- Information about training algorithms, parameters, fairness constraints or other applied approaches, and features. The exact training algorithm, data and the strategies to handle data imbalances for high and low resource languages that were used to train NLLB-200 is described in the paper.

- Paper or other resource for more information NLLB Team et al, No Language Left Behind: Scaling Human-Centered Machine Translation, Arxiv, 2022

- License: CC-BY-NC

- Where to send questions or comments about the model: https://github.com/facebookresearch/fairseq/issues

## Intended Use

- Primary intended uses: NLLB-200 is a machine translation model primarily intended for research in machine translation, - especially for low-resource languages. It allows for single sentence translation among 200 languages. Information on how to - use the model can be found in Fairseq code repository along with the training code and references to evaluation and training data.

- Primary intended users: Primary users are researchers and machine translation research community.

- Out-of-scope use cases: NLLB-200 is a research model and is not released for production deployment. NLLB-200 is trained on general domain text data and is not intended to be used with domain specific texts, such as medical domain or legal domain. The model is not intended to be used for document translation. The model was trained with input lengths not exceeding 512 tokens, therefore translating longer sequences might result in quality degradation. NLLB-200 translations can not be used as certified translations.

## Metrics

• Model performance measures: NLLB-200 model was evaluated using BLEU, spBLEU, and chrF++ metrics widely adopted by machine translation community. Additionally, we performed human evaluation with the XSTS protocol and measured the toxicity of the generated translations.

## Evaluation Data

- Datasets: Flores-200 dataset is described in Section 4

- Motivation: We used Flores-200 as it provides full evaluation coverage of the languages in NLLB-200

- Preprocessing: Sentence-split raw text data was preprocessed using SentencePiece. The

SentencePiece model is released along with NLLB-200.

## Training Data

• We used parallel multilingual data from a variety of sources to train the model. We provide detailed report on data selection and construction process in Section 5 in the paper. We also used monolingual data constructed from Common Crawl. We provide more details in Section 5.2.

## Ethical Considerations

• In this work, we took a reflexive approach in technological development to ensure that we prioritize human users and minimize risks that could be transferred to them. While we reflect on our ethical considerations throughout the article, here are some additional points to highlight. For one, many languages chosen for this study are low-resource languages, with a heavy emphasis on African languages. While quality translation could improve education and information access in many in these communities, such an access could also make groups with lower levels of digital literacy more vulnerable to misinformation or online scams. The latter scenarios could arise if bad actors misappropriate our work for nefarious activities, which we conceive as an example of unintended use. Regarding data acquisition, the training data used for model development were mined from various publicly available sources on the web. Although we invested heavily in data cleaning, personally identifiable information may not be entirely eliminated. Finally, although we did our best to optimize for translation quality, mistranslations produced by the model could remain. Although the odds are low, this could have adverse impact on those who rely on these translations to make important decisions (particularly when related to health and safety).

## Caveats and Recommendations

• Our model has been tested on the Wikimedia domain with limited investigation on other domains supported in NLLB-MD. In addition, the supported languages may have variations that our model is not capturing. Users should make appropriate assessments.

## Carbon Footprint Details

• The carbon dioxide (CO2e) estimate is reported in Section 8.8.

|

IlyaGusev/xlm_roberta_large_headline_cause_full

|

481b4dfb94058bbcd8d47330c45755fa69481533

|

2022-07-13T15:35:52.000Z

|

[

"pytorch",

"xlm-roberta",

"text-classification",

"ru",

"en",

"dataset:IlyaGusev/headline_cause",

"arxiv:2108.12626",

"transformers",

"xlm-roberta-large",

"license:apache-2.0"

] |

text-classification

| false |

IlyaGusev

| null |

IlyaGusev/xlm_roberta_large_headline_cause_full

| 465 | null |

transformers

| 2,403 |

---

language:

- ru

- en

tags:

- xlm-roberta-large

datasets:

- IlyaGusev/headline_cause

license: apache-2.0

widget:

- text: "Песков опроверг свой перевод на удаленку</s>Дмитрий Песков перешел на удаленку"

---

# XLM-RoBERTa HeadlineCause Full

## Model description

This model was trained to predict the presence of causal relations between two headlines. This model is for the Full task with 7 possible labels: titles are almost the same, A causes B, B causes A, A refutes B, B refutes A, A linked with B in another way, A is not linked to B. English and Russian languages are supported.

You can use hosted inference API to infer a label for a headline pair. To do this, you shoud seperate headlines with ```</s>``` token.

For example:

```

Песков опроверг свой перевод на удаленку</s>Дмитрий Песков перешел на удаленку

```

## Intended uses & limitations

#### How to use

```python

from tqdm.notebook import tqdm

from transformers import AutoTokenizer, AutoModelForSequenceClassification, pipeline

def get_batch(data, batch_size):

start_index = 0

while start_index < len(data):

end_index = start_index + batch_size

batch = data[start_index:end_index]

yield batch

start_index = end_index

def pipe_predict(data, pipe, batch_size=64):

raw_preds = []

for batch in tqdm(get_batch(data, batch_size)):

raw_preds += pipe(batch)

return raw_preds

MODEL_NAME = TOKENIZER_NAME = "IlyaGusev/xlm_roberta_large_headline_cause_full"

tokenizer = AutoTokenizer.from_pretrained(TOKENIZER_NAME, do_lower_case=False)

model = AutoModelForSequenceClassification.from_pretrained(MODEL_NAME)

model.eval()

pipe = pipeline("text-classification", model=model, tokenizer=tokenizer, framework="pt", return_all_scores=True)

texts = [

(

"Judge issues order to allow indoor worship in NC churches",

"Some local churches resume indoor services after judge lifted NC governor’s restriction"

),

(

"Gov. Kevin Stitt defends $2 million purchase of malaria drug touted by Trump",

"Oklahoma spent $2 million on malaria drug touted by Trump"

),

(

"Песков опроверг свой перевод на удаленку",

"Дмитрий Песков перешел на удаленку"

)

]

pipe_predict(texts, pipe)

```

#### Limitations and bias

The models are intended to be used on news headlines. No other limitations are known.

## Training data

* HuggingFace dataset: [IlyaGusev/headline_cause](https://huggingface.co/datasets/IlyaGusev/headline_cause)

* GitHub: [IlyaGusev/HeadlineCause](https://github.com/IlyaGusev/HeadlineCause)

## Training procedure

* Notebook: [HeadlineCause](https://colab.research.google.com/drive/1NAnD0OJ0TnYCJRsHpYUyYkjr_yi8ObcA)

* Stand-alone script: [train.py](https://github.com/IlyaGusev/HeadlineCause/blob/main/headline_cause/train.py)

## Eval results

Evaluation results can be found in the [arxiv paper](https://arxiv.org/pdf/2108.12626.pdf).

### BibTeX entry and citation info

```bibtex

@misc{gusev2021headlinecause,

title={HeadlineCause: A Dataset of News Headlines for Detecting Causalities},

author={Ilya Gusev and Alexey Tikhonov},

year={2021},

eprint={2108.12626},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

uer/chinese_roberta_L-2_H-768

|

1aa682dea961da5d795029b2a5d097099982662c

|

2022-07-15T08:11:17.000Z

|

[

"pytorch",

"tf",

"jax",

"bert",

"fill-mask",

"zh",

"dataset:CLUECorpusSmall",

"arxiv:1909.05658",

"arxiv:1908.08962",

"transformers",

"autotrain_compatible"

] |

fill-mask

| false |

uer

| null |

uer/chinese_roberta_L-2_H-768

| 465 | null |

transformers

| 2,404 |

---

language: zh

datasets: CLUECorpusSmall

widget:

- text: "北京是[MASK]国的首都。"

---

# Chinese RoBERTa Miniatures

## Model description

This is the set of 24 Chinese RoBERTa models pre-trained by [UER-py](https://github.com/dbiir/UER-py/), which is introduced in [this paper](https://arxiv.org/abs/1909.05658).

[Turc et al.](https://arxiv.org/abs/1908.08962) have shown that the standard BERT recipe is effective on a wide range of model sizes. Following their paper, we released the 24 Chinese RoBERTa models. In order to facilitate users to reproduce the results, we used the publicly available corpus and provided all training details.

You can download the 24 Chinese RoBERTa miniatures either from the [UER-py Modelzoo page](https://github.com/dbiir/UER-py/wiki/Modelzoo), or via HuggingFace from the links below:

| | H=128 | H=256 | H=512 | H=768 |

| -------- | :-----------------------: | :-----------------------: | :-------------------------: | :-------------------------: |

| **L=2** | [**2/128 (Tiny)**][2_128] | [2/256][2_256] | [2/512][2_512] | [2/768][2_768] |

| **L=4** | [4/128][4_128] | [**4/256 (Mini)**][4_256] | [**4/512 (Small)**][4_512] | [4/768][4_768] |

| **L=6** | [6/128][6_128] | [6/256][6_256] | [6/512][6_512] | [6/768][6_768] |

| **L=8** | [8/128][8_128] | [8/256][8_256] | [**8/512 (Medium)**][8_512] | [8/768][8_768] |

| **L=10** | [10/128][10_128] | [10/256][10_256] | [10/512][10_512] | [10/768][10_768] |

| **L=12** | [12/128][12_128] | [12/256][12_256] | [12/512][12_512] | [**12/768 (Base)**][12_768] |

Here are scores on the devlopment set of six Chinese tasks:

| Model | Score | douban | chnsenticorp | lcqmc | tnews(CLUE) | iflytek(CLUE) | ocnli(CLUE) |

| -------------- | :---: | :----: | :----------: | :---: | :---------: | :-----------: | :---------: |

| RoBERTa-Tiny | 72.3 | 83.0 | 91.4 | 81.8 | 62.0 | 55.0 | 60.3 |

| RoBERTa-Mini | 75.7 | 84.8 | 93.7 | 86.1 | 63.9 | 58.3 | 67.4 |

| RoBERTa-Small | 76.8 | 86.5 | 93.4 | 86.5 | 65.1 | 59.4 | 69.7 |

| RoBERTa-Medium | 77.8 | 87.6 | 94.8 | 88.1 | 65.6 | 59.5 | 71.2 |

| RoBERTa-Base | 79.5 | 89.1 | 95.2 | 89.2 | 67.0 | 60.9 | 75.5 |

For each task, we selected the best fine-tuning hyperparameters from the lists below, and trained with the sequence length of 128:

- epochs: 3, 5, 8

- batch sizes: 32, 64

- learning rates: 3e-5, 1e-4, 3e-4

## How to use

You can use this model directly with a pipeline for masked language modeling (take the case of RoBERTa-Medium):

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='uer/chinese_roberta_L-8_H-512')

>>> unmasker("中国的首都是[MASK]京。")

[

{'sequence': '[CLS] 中 国 的 首 都 是 北 京 。 [SEP]',

'score': 0.8701988458633423,

'token': 1266,

'token_str': '北'},

{'sequence': '[CLS] 中 国 的 首 都 是 南 京 。 [SEP]',

'score': 0.1194809079170227,

'token': 1298,

'token_str': '南'},

{'sequence': '[CLS] 中 国 的 首 都 是 东 京 。 [SEP]',

'score': 0.0037803512532263994,

'token': 691,

'token_str': '东'},

{'sequence': '[CLS] 中 国 的 首 都 是 普 京 。 [SEP]',

'score': 0.0017127094324678183,

'token': 3249,

'token_str': '普'},

{'sequence': '[CLS] 中 国 的 首 都 是 望 京 。 [SEP]',

'score': 0.001687526935711503,

'token': 3307,

'token_str': '望'}

]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import BertTokenizer, BertModel

tokenizer = BertTokenizer.from_pretrained('uer/chinese_roberta_L-8_H-512')

model = BertModel.from_pretrained("uer/chinese_roberta_L-8_H-512")

text = "用你喜欢的任何文本替换我。"

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import BertTokenizer, TFBertModel

tokenizer = BertTokenizer.from_pretrained('uer/chinese_roberta_L-8_H-512')

model = TFBertModel.from_pretrained("uer/chinese_roberta_L-8_H-512")

text = "用你喜欢的任何文本替换我。"

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

## Training data

[CLUECorpusSmall](https://github.com/CLUEbenchmark/CLUECorpus2020/) is used as training data. We found that models pre-trained on CLUECorpusSmall outperform those pre-trained on CLUECorpus2020, although CLUECorpus2020 is much larger than CLUECorpusSmall.

## Training procedure

Models are pre-trained by [UER-py](https://github.com/dbiir/UER-py/) on [Tencent Cloud](https://cloud.tencent.com/). We pre-train 1,000,000 steps with a sequence length of 128 and then pre-train 250,000 additional steps with a sequence length of 512. We use the same hyper-parameters on different model sizes.

Taking the case of RoBERTa-Medium

Stage1:

```

python3 preprocess.py --corpus_path corpora/cluecorpussmall.txt \

--vocab_path models/google_zh_vocab.txt \

--dataset_path cluecorpussmall_seq128_dataset.pt \

--processes_num 32 --seq_length 128 \

--dynamic_masking --data_processor mlm

```

```

python3 pretrain.py --dataset_path cluecorpussmall_seq128_dataset.pt \

--vocab_path models/google_zh_vocab.txt \

--config_path models/bert/medium_config.json \

--output_model_path models/cluecorpussmall_roberta_medium_seq128_model.bin \

--world_size 8 --gpu_ranks 0 1 2 3 4 5 6 7 \

--total_steps 1000000 --save_checkpoint_steps 100000 --report_steps 50000 \

--learning_rate 1e-4 --batch_size 64 \

--data_processor mlm --target mlm

```

Stage2:

```

python3 preprocess.py --corpus_path corpora/cluecorpussmall.txt \

--vocab_path models/google_zh_vocab.txt \

--dataset_path cluecorpussmall_seq512_dataset.pt \

--processes_num 32 --seq_length 512 \

--dynamic_masking --data_processor mlm

```

```

python3 pretrain.py --dataset_path cluecorpussmall_seq512_dataset.pt \

--vocab_path models/google_zh_vocab.txt \

--pretrained_model_path models/cluecorpussmall_roberta_medium_seq128_model.bin-1000000 \

--config_path models/bert/medium_config.json \

--output_model_path models/cluecorpussmall_roberta_medium_seq512_model.bin \

--world_size 8 --gpu_ranks 0 1 2 3 4 5 6 7 \

--total_steps 250000 --save_checkpoint_steps 50000 --report_steps 10000 \

--learning_rate 5e-5 --batch_size 16 \

--data_processor mlm --target mlm

```

Finally, we convert the pre-trained model into Huggingface's format:

```

python3 scripts/convert_bert_from_uer_to_huggingface.py --input_model_path models/cluecorpussmall_roberta_medium_seq512_model.bin-250000 \

--output_model_path pytorch_model.bin \

--layers_num 8 --type mlm

```

### BibTeX entry and citation info

```

@article{devlin2018bert,

title={Bert: Pre-training of deep bidirectional transformers for language understanding},

author={Devlin, Jacob and Chang, Ming-Wei and Lee, Kenton and Toutanova, Kristina},

journal={arXiv preprint arXiv:1810.04805},

year={2018}

}

@article{liu2019roberta,

title={Roberta: A robustly optimized bert pretraining approach},

author={Liu, Yinhan and Ott, Myle and Goyal, Naman and Du, Jingfei and Joshi, Mandar and Chen, Danqi and Levy, Omer and Lewis, Mike and Zettlemoyer, Luke and Stoyanov, Veselin},

journal={arXiv preprint arXiv:1907.11692},

year={2019}

}

@article{turc2019,

title={Well-Read Students Learn Better: On the Importance of Pre-training Compact Models},

author={Turc, Iulia and Chang, Ming-Wei and Lee, Kenton and Toutanova, Kristina},

journal={arXiv preprint arXiv:1908.08962v2 },

year={2019}

}

@article{zhao2019uer,

title={UER: An Open-Source Toolkit for Pre-training Models},

author={Zhao, Zhe and Chen, Hui and Zhang, Jinbin and Zhao, Xin and Liu, Tao and Lu, Wei and Chen, Xi and Deng, Haotang and Ju, Qi and Du, Xiaoyong},

journal={EMNLP-IJCNLP 2019},

pages={241},

year={2019}

}

```

[2_128]:https://huggingface.co/uer/chinese_roberta_L-2_H-128

[2_256]:https://huggingface.co/uer/chinese_roberta_L-2_H-256

[2_512]:https://huggingface.co/uer/chinese_roberta_L-2_H-512

[2_768]:https://huggingface.co/uer/chinese_roberta_L-2_H-768

[4_128]:https://huggingface.co/uer/chinese_roberta_L-4_H-128

[4_256]:https://huggingface.co/uer/chinese_roberta_L-4_H-256

[4_512]:https://huggingface.co/uer/chinese_roberta_L-4_H-512

[4_768]:https://huggingface.co/uer/chinese_roberta_L-4_H-768

[6_128]:https://huggingface.co/uer/chinese_roberta_L-6_H-128

[6_256]:https://huggingface.co/uer/chinese_roberta_L-6_H-256

[6_512]:https://huggingface.co/uer/chinese_roberta_L-6_H-512

[6_768]:https://huggingface.co/uer/chinese_roberta_L-6_H-768

[8_128]:https://huggingface.co/uer/chinese_roberta_L-8_H-128

[8_256]:https://huggingface.co/uer/chinese_roberta_L-8_H-256

[8_512]:https://huggingface.co/uer/chinese_roberta_L-8_H-512

[8_768]:https://huggingface.co/uer/chinese_roberta_L-8_H-768

[10_128]:https://huggingface.co/uer/chinese_roberta_L-10_H-128

[10_256]:https://huggingface.co/uer/chinese_roberta_L-10_H-256

[10_512]:https://huggingface.co/uer/chinese_roberta_L-10_H-512

[10_768]:https://huggingface.co/uer/chinese_roberta_L-10_H-768

[12_128]:https://huggingface.co/uer/chinese_roberta_L-12_H-128

[12_256]:https://huggingface.co/uer/chinese_roberta_L-12_H-256

[12_512]:https://huggingface.co/uer/chinese_roberta_L-12_H-512

[12_768]:https://huggingface.co/uer/chinese_roberta_L-12_H-768

|

HamidRezaAttar/gpt2-product-description-generator

|

207b5c894c24825678a2d7e11e5494f30ebe3cc4

|

2022-04-30T09:53:14.000Z

|

[

"pytorch",

"gpt2",

"text-generation",

"en",

"arxiv:1706.03762",

"transformers",

"license:apache-2.0"

] |

text-generation

| false |

HamidRezaAttar

| null |

HamidRezaAttar/gpt2-product-description-generator

| 464 | 6 |

transformers

| 2,405 |

---

language: en

tags:

- text-generation

license: apache-2.0

widget:

- text: "Maximize your bedroom space without sacrificing style with the storage bed."

- text: "Handcrafted of solid acacia in weathered gray, our round Jozy drop-leaf dining table is a space-saving."

- text: "Our plush and luxurious Emmett modular sofa brings custom comfort to your living space."

---

## GPT2-Home

This model is fine-tuned using GPT-2 on amazon home products metadata.

It can generate descriptions for your **home** products by getting a text prompt.

### Model description

[GPT-2](https://openai.com/blog/better-language-models/) is a large [transformer](https://arxiv.org/abs/1706.03762)-based language model with 1.5 billion parameters, trained on a dataset of 8 million web pages. GPT-2 is trained with a simple objective: predict the next word, given all of the previous words within some text. The diversity of the dataset causes this simple goal to contain naturally occurring demonstrations of many tasks across diverse domains. GPT-2 is a direct scale-up of GPT, with more than 10X the parameters and trained on more than 10X the amount of data.

### Live Demo

For testing model with special configuration, please visit [Demo](https://huggingface.co/spaces/HamidRezaAttar/gpt2-home)

### Blog Post

For more detailed information about project development please refer to my [blog post](https://hamidrezaattar.github.io/blog/markdown/2022/02/17/gpt2-home.html).

### How to use

For best experience and clean outputs, you can use Live Demo mentioned above, also you can use the notebook mentioned in my [GitHub](https://github.com/HamidRezaAttar/GPT2-Home)

You can use this model directly with a pipeline for text generation.

```python

>>> from transformers import AutoTokenizer, AutoModelForCausalLM, pipeline

>>> tokenizer = AutoTokenizer.from_pretrained("HamidRezaAttar/gpt2-product-description-generator")

>>> model = AutoModelForCausalLM.from_pretrained("HamidRezaAttar/gpt2-product-description-generator")

>>> generator = pipeline('text-generation', model, tokenizer=tokenizer, config={'max_length':100})

>>> generated_text = generator("This bed is very comfortable.")

```

### Citation info

```bibtex

@misc{GPT2-Home,

author = {HamidReza Fatollah Zadeh Attar},

title = {GPT2-Home the English home product description generator},

year = {2021},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/HamidRezaAttar/GPT2-Home}},

}

```

|

cross-encoder/nli-deberta-base

|

c4dd278f8b91ff189eecea98e76a9b371ed1db37

|

2021-08-05T08:40:53.000Z

|

[

"pytorch",

"deberta",

"text-classification",

"en",

"dataset:multi_nli",

"dataset:snli",

"transformers",

"deberta-base-base",

"license:apache-2.0",

"zero-shot-classification"

] |

zero-shot-classification

| false |

cross-encoder

| null |

cross-encoder/nli-deberta-base

| 464 | 2 |

transformers

| 2,406 |

---

language: en

pipeline_tag: zero-shot-classification

tags:

- deberta-base-base

datasets:

- multi_nli

- snli

metrics:

- accuracy

license: apache-2.0

---

# Cross-Encoder for Natural Language Inference

This model was trained using [SentenceTransformers](https://sbert.net) [Cross-Encoder](https://www.sbert.net/examples/applications/cross-encoder/README.html) class.

## Training Data

The model was trained on the [SNLI](https://nlp.stanford.edu/projects/snli/) and [MultiNLI](https://cims.nyu.edu/~sbowman/multinli/) datasets. For a given sentence pair, it will output three scores corresponding to the labels: contradiction, entailment, neutral.

## Performance

For evaluation results, see [SBERT.net - Pretrained Cross-Encoder](https://www.sbert.net/docs/pretrained_cross-encoders.html#nli).

## Usage

Pre-trained models can be used like this:

```python

from sentence_transformers import CrossEncoder

model = CrossEncoder('cross-encoder/nli-deberta-base')

scores = model.predict([('A man is eating pizza', 'A man eats something'), ('A black race car starts up in front of a crowd of people.', 'A man is driving down a lonely road.')])

#Convert scores to labels

label_mapping = ['contradiction', 'entailment', 'neutral']

labels = [label_mapping[score_max] for score_max in scores.argmax(axis=1)]

```

## Usage with Transformers AutoModel

You can use the model also directly with Transformers library (without SentenceTransformers library):

```python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

import torch

model = AutoModelForSequenceClassification.from_pretrained('cross-encoder/nli-deberta-base')

tokenizer = AutoTokenizer.from_pretrained('cross-encoder/nli-deberta-base')

features = tokenizer(['A man is eating pizza', 'A black race car starts up in front of a crowd of people.'], ['A man eats something', 'A man is driving down a lonely road.'], padding=True, truncation=True, return_tensors="pt")

model.eval()

with torch.no_grad():

scores = model(**features).logits

label_mapping = ['contradiction', 'entailment', 'neutral']

labels = [label_mapping[score_max] for score_max in scores.argmax(dim=1)]

print(labels)

```

## Zero-Shot Classification

This model can also be used for zero-shot-classification:

```python

from transformers import pipeline

classifier = pipeline("zero-shot-classification", model='cross-encoder/nli-deberta-base')

sent = "Apple just announced the newest iPhone X"

candidate_labels = ["technology", "sports", "politics"]

res = classifier(sent, candidate_labels)

print(res)

```

|

worsterman/DialoGPT-small-mulder

|

c08bdc69d797c730c41d003cf619df6bb4585b3c

|

2021-06-20T22:50:26.000Z

|

[

"pytorch",

"gpt2",

"text-generation",

"transformers",

"conversational"

] |

conversational

| false |

worsterman

| null |

worsterman/DialoGPT-small-mulder

| 463 | null |

transformers

| 2,407 |

---

tags:

- conversational

---

# DialoGPT Trained on the Speech of Fox Mulder from The X-Files

|

Skoltech/russian-inappropriate-messages

|

2f0eca13446320dafb6f9743c56d812fa6f19a11

|

2021-05-18T22:39:46.000Z

|

[

"pytorch",

"tf",

"jax",

"bert",

"text-classification",

"ru",

"transformers",

"toxic comments classification"

] |

text-classification

| false |

Skoltech

| null |

Skoltech/russian-inappropriate-messages

| 462 | 3 |

transformers

| 2,408 |

---

language:

- ru

tags:

- toxic comments classification

licenses:

- cc-by-nc-sa

---

## General concept of the model

#### Proposed usage

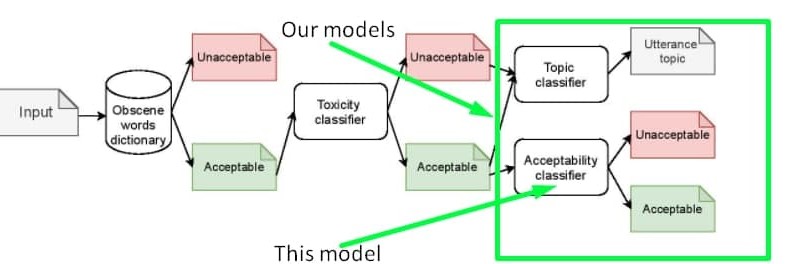

The **'inappropriateness'** substance we tried to collect in the dataset and detect with the model **is NOT a substitution of toxicity**, it is rather a derivative of toxicity. So the model based on our dataset could serve as **an additional layer of inappropriateness filtering after toxicity and obscenity filtration**. You can detect the exact sensitive topic by using [another model](https://huggingface.co/Skoltech/russian-sensitive-topics). The proposed pipeline is shown in the scheme below.

You can also train one classifier for both toxicity and inappropriateness detection. The data to be mixed with toxic labelled samples could be found on our [GitHub](https://github.com/skoltech-nlp/inappropriate-sensitive-topics/blob/main/Version2/appropriateness/Appropriateness.csv) or on [kaggle](https://www.kaggle.com/nigula/russianinappropriatemessages)

#### Inappropraiteness intuition

This model is trained on the dataset of inappropriate messages of the Russian language. Generally, an inappropriate utterance is an utterance that has not obscene words or any kind of toxic intent, but can still harm the reputation of the speaker. Find some sample for more intuition in the table below. Learn more about the concept of inappropriateness [in this article ](https://www.aclweb.org/anthology/2021.bsnlp-1.4/) presented at the workshop for Balto-Slavic NLP at the EACL-2021 conference. Please note that this article describes the first version of the dataset, while the model is trained on the extended version of the dataset open-sourced on our [GitHub](https://github.com/skoltech-nlp/inappropriate-sensitive-topics/blob/main/Version2/appropriateness/Appropriateness.csv) or on [kaggle](https://www.kaggle.com/nigula/russianinappropriatemessages). The properties of the dataset are the same as the one described in the article, the only difference is the size.

| Text | Inappropriate | Comment | Unsafe topic |

|------------------------------------------------------------------------------------------------------|---------------|----------------------------------------------------------------|---------------|

| Ладно бы видного деятеля завалили а тут какого то ноунейм нигру преступника | yes | Оправдание убийства | offline crime |

| Это нарушение УКРФ! | no | Осуждение нарушения закона | offline crime |

| Религия - это насилие высшего уровня. Верующего принуждают к вере еще в младенчестве. | yes | Оскорбление чувств верующих | religion |

| Христиане, как вы относитесь к иноверцам, в частности к Гамалиилу и к уверовавшим в Иисуса фарисеям? | no | Обычные вопросы к верующим без намека на оскорбление их чувств | religion |

| Хорошо что проституция запрещена | no | Осуждение простуитуции | prostitution |

| Возьму бабки и сниму шлюх на выходных | yes | Популяризация проституции | prostitution |

#### Metrics

The model was trained, validated, and tested only on the samples with 100% confidence, which allowed to get the following metrics on test set:

| | precision | recall | f1-score | support |

|--------------|----------|--------|----------|---------|

| 0 | 0.92 | 0.93 | 0.93 | 7839 |

| 1 | 0.80 | 0.76 | 0.78 | 2726 |

| accuracy | | | 0.89 | 10565 |

| macro avg | 0.86 | 0.85 | 0.85 | 10565 |

| weighted avg | 0.89 | 0.89 | 0.89 | 10565 |

## Licensing Information

[Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License][cc-by-nc-sa].

[![CC BY-NC-SA 4.0][cc-by-nc-sa-image]][cc-by-nc-sa]

[cc-by-nc-sa]: http://creativecommons.org/licenses/by-nc-sa/4.0/

[cc-by-nc-sa-image]: https://i.creativecommons.org/l/by-nc-sa/4.0/88x31.png

## Citation

If you find this repository helpful, feel free to cite our publication:

```

@inproceedings{babakov-etal-2021-detecting,

title = "Detecting Inappropriate Messages on Sensitive Topics that Could Harm a Company{'}s Reputation",

author = "Babakov, Nikolay and

Logacheva, Varvara and

Kozlova, Olga and

Semenov, Nikita and

Panchenko, Alexander",

booktitle = "Proceedings of the 8th Workshop on Balto-Slavic Natural Language Processing",

month = apr,

year = "2021",

address = "Kiyv, Ukraine",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2021.bsnlp-1.4",

pages = "26--36",

abstract = "Not all topics are equally {``}flammable{''} in terms of toxicity: a calm discussion of turtles or fishing less often fuels inappropriate toxic dialogues than a discussion of politics or sexual minorities. We define a set of sensitive topics that can yield inappropriate and toxic messages and describe the methodology of collecting and labelling a dataset for appropriateness. While toxicity in user-generated data is well-studied, we aim at defining a more fine-grained notion of inappropriateness. The core of inappropriateness is that it can harm the reputation of a speaker. This is different from toxicity in two respects: (i) inappropriateness is topic-related, and (ii) inappropriate message is not toxic but still unacceptable. We collect and release two datasets for Russian: a topic-labelled dataset and an appropriateness-labelled dataset. We also release pre-trained classification models trained on this data.",

}

```

## Contacts

If you have any questions please contact [Nikolay](mailto:[email protected])

|

arbml/wav2vec2-large-xlsr-53-arabic-egyptian

|

d21ab2f8afebd15f8d3e9c95d2a77343c6f78d7b

|

2021-07-05T18:12:38.000Z

|

[

"pytorch",

"jax",

"wav2vec2",

"automatic-speech-recognition",

"???",

"dataset:common_voice",

"transformers",

"audio",

"speech",

"xlsr-fine-tuning-week",

"license:apache-2.0",

"model-index"

] |

automatic-speech-recognition

| false |

arbml

| null |

arbml/wav2vec2-large-xlsr-53-arabic-egyptian

| 462 | 2 |

transformers

| 2,409 |

---

language: ???

datasets:

- common_voice

tags:

- audio

- automatic-speech-recognition

- speech

- xlsr-fine-tuning-week

license: apache-2.0

model-index:

- name: XLSR Wav2Vec2 Arabic Egyptian by Zaid

results:

- task:

name: Speech Recognition

type: automatic-speech-recognition

dataset:

name: Common Voice ???

type: common_voice

args: ???

metrics:

- name: Test WER

type: wer

value: ???

---

# Wav2Vec2-Large-XLSR-53-Tamil

Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) in Tamil using the [Common Voice](https://huggingface.co/datasets/common_voice)

When using this model, make sure that your speech input is sampled at 16kHz.

## Usage

The model can be used directly (without a language model) as follows:

```python

import torch

import torchaudio

from datasets import load_dataset

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

test_dataset = load_dataset("common_voice", "???", split="test[:2%]").

processor = Wav2Vec2Processor.from_pretrained("Zaid/wav2vec2-large-xlsr-53-arabic-egyptian")

model = Wav2Vec2ForCTC.from_pretrained("Zaid/wav2vec2-large-xlsr-53-arabic-egyptian")

resampler = torchaudio.transforms.Resample(48_000, 16_000)

# Preprocessing the datasets.

# We need to read the aduio files as arrays

def speech_file_to_array_fn(batch):

speech_array, sampling_rate = torchaudio.load(batch["path"])

batch["speech"] = resampler(speech_array).squeeze().numpy()

return batch

test_dataset = test_dataset.map(speech_file_to_array_fn)

inputs = processor(test_dataset["speech"][:2], sampling_rate=16_000, return_tensors="pt", padding=True)

with torch.no_grad():

logits = model(inputs.input_values, attention_mask=inputs.attention_mask).logits

predicted_ids = torch.argmax(logits, dim=-1)

print("Prediction:", processor.batch_decode(predicted_ids))

print("Reference:", test_dataset["sentence"][:2])

```

## Evaluation

The model can be evaluated as follows on the {language} test data of Common Voice.

```python

import torch

import torchaudio

from datasets import load_dataset, load_metric

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

import re

test_dataset = load_dataset("common_voice", "???", split="test")

wer = load_metric("wer")

processor = Wav2Vec2Processor.from_pretrained("Zaid/wav2vec2-large-xlsr-53-arabic-egyptian")

model = Wav2Vec2ForCTC.from_pretrained("Zaid/wav2vec2-large-xlsr-53-arabic-egyptian")

model.to("cuda")

chars_to_ignore_regex = '[\,\?\.\!\-\;\:\"\“]'

resampler = torchaudio.transforms.Resample(48_000, 16_000)

# Preprocessing the datasets.

# We need to read the aduio files as arrays

def speech_file_to_array_fn(batch):

batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower()

speech_array, sampling_rate = torchaudio.load(batch["path"])

batch["speech"] = resampler(speech_array).squeeze().numpy()

return batch

test_dataset = test_dataset.map(speech_file_to_array_fn)

# Preprocessing the datasets.

# We need to read the aduio files as arrays

def evaluate(batch):

inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)

with torch.no_grad():

logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits

pred_ids = torch.argmax(logits, dim=-1)

batch["pred_strings"] = processor.batch_decode(pred_ids)

return batch

result = test_dataset.map(evaluate, batched=True, batch_size=8)

print("WER: {:2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"])))

```

**Test Result**: ??? %

## Training

The Common Voice `train`, `validation` datasets were used for training.

The script used for training can be found ???

|

Pensador777critico/DialoGPT-small-RickandMorty

|

1c42f5be6b7a0e42229317f9b42321a94b317d81

|

2021-08-31T09:17:11.000Z

|

[

"pytorch",

"gpt2",

"text-generation",

"transformers",

"conversational"

] |

conversational

| false |

Pensador777critico

| null |

Pensador777critico/DialoGPT-small-RickandMorty

| 461 | null |

transformers

| 2,410 |

---

tags:

- conversational

---

# Rick and Morty DialoGPT Model

|

seyonec/PubChem10M_SMILES_BPE_396_250

|

a8829bbdf9a6fbd64c91950389687934b3de8394

|

2021-05-20T21:01:53.000Z

|

[

"pytorch",

"jax",

"roberta",

"fill-mask",

"transformers",

"autotrain_compatible"

] |

fill-mask

| false |

seyonec

| null |

seyonec/PubChem10M_SMILES_BPE_396_250

| 461 | null |

transformers

| 2,411 |

Entry not found

|

flax-sentence-embeddings/all_datasets_v3_mpnet-base

|

d442c0e304ff575c261b9bbdf8b13fcbe6ee933c

|

2021-08-18T11:16:43.000Z

|

[

"pytorch",

"mpnet",

"fill-mask",

"en",

"arxiv:1904.06472",

"arxiv:2102.07033",

"arxiv:2104.08727",

"arxiv:1704.05179",

"arxiv:1810.09305",

"sentence-transformers",

"feature-extraction",

"sentence-similarity",

"license:apache-2.0"

] |

sentence-similarity

| false |

flax-sentence-embeddings

| null |

flax-sentence-embeddings/all_datasets_v3_mpnet-base

| 460 | 4 |

sentence-transformers

| 2,412 |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

language: en

license: apache-2.0

---

# all-mpnet-base-v1

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('sentence-transformers/all-mpnet-base-v1')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: First, you pass your input through the transformer model, then you have to apply the right pooling-operation on-top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

import torch.nn.functional as F

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):

token_embeddings = model_output[0] #First element of model_output contains all token embeddings

input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()

return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/all-mpnet-base-v1')

model = AutoModel.from_pretrained('sentence-transformers/all-mpnet-base-v1')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():

model_output = model(**encoded_input)

# Perform pooling

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

# Normalize embeddings

sentence_embeddings = F.normalize(sentence_embeddings, p=2, dim=1)

print("Sentence embeddings:")

print(sentence_embeddings)

```

## Evaluation Results

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/all-mpnet-base-v1)

------

## Background

The project aims to train sentence embedding models on very large sentence level datasets using a self-supervised

contrastive learning objective. We used the pretrained [`microsoft/mpnet-base`](https://huggingface.co/microsoft/mpnet-base) model and fine-tuned in on a

1B sentence pairs dataset. We use a contrastive learning objective: given a sentence from the pair, the model should predict which out of a set of randomly sampled other sentences, was actually paired with it in our dataset.

We developped this model during the

[Community week using JAX/Flax for NLP & CV](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104),

organized by Hugging Face. We developped this model as part of the project:

[Train the Best Sentence Embedding Model Ever with 1B Training Pairs](https://discuss.huggingface.co/t/train-the-best-sentence-embedding-model-ever-with-1b-training-pairs/7354). We benefited from efficient hardware infrastructure to run the project: 7 TPUs v3-8, as well as intervention from Googles Flax, JAX, and Cloud team member about efficient deep learning frameworks.

## Intended uses

Our model is intented to be used as a sentence and short paragraph encoder. Given an input text, it ouptuts a vector which captures

the semantic information. The sentence vector may be used for information retrieval, clustering or sentence similarity tasks.

By default, input text longer than 128 word pieces is truncated.

## Training procedure

### Pre-training

We use the pretrained [`microsoft/mpnet-base`](https://huggingface.co/microsoft/mpnet-base). Please refer to the model card for more detailed information about the pre-training procedure.

### Fine-tuning

We fine-tune the model using a contrastive objective. Formally, we compute the cosine similarity from each possible sentence pairs from the batch.

We then apply the cross entropy loss by comparing with true pairs.

#### Hyper parameters

We trained ou model on a TPU v3-8. We train the model during 920k steps using a batch size of 512 (64 per TPU core).

We use a learning rate warm up of 500. The sequence length was limited to 128 tokens. We used the AdamW optimizer with

a 2e-5 learning rate. The full training script is accessible in this current repository: `train_script.py`.

#### Training data

We use the concatenation from multiple datasets to fine-tune our model. The total number of sentence pairs is above 1 billion sentences.

We sampled each dataset given a weighted probability which configuration is detailed in the `data_config.json` file.

| Dataset | Paper | Number of training tuples |

|--------------------------------------------------------|:----------------------------------------:|:--------------------------:|

| [Reddit comments (2015-2018)](https://github.com/PolyAI-LDN/conversational-datasets/tree/master/reddit) | [paper](https://arxiv.org/abs/1904.06472) | 726,484,430 |

| [S2ORC](https://github.com/allenai/s2orc) Citation pairs (Abstracts) | [paper](https://aclanthology.org/2020.acl-main.447/) | 116,288,806 |

| [WikiAnswers](https://github.com/afader/oqa#wikianswers-corpus) Duplicate question pairs | [paper](https://doi.org/10.1145/2623330.2623677) | 77,427,422 |

| [PAQ](https://github.com/facebookresearch/PAQ) (Question, Answer) pairs | [paper](https://arxiv.org/abs/2102.07033) | 64,371,441 |

| [S2ORC](https://github.com/allenai/s2orc) Citation pairs (Titles) | [paper](https://aclanthology.org/2020.acl-main.447/) | 52,603,982 |

| [S2ORC](https://github.com/allenai/s2orc) (Title, Abstract) | [paper](https://aclanthology.org/2020.acl-main.447/) | 41,769,185 |

| [Stack Exchange](https://huggingface.co/datasets/flax-sentence-embeddings/stackexchange_xml) (Title, Body) pairs | - | 25,316,456 |

| [MS MARCO](https://microsoft.github.io/msmarco/) triplets | [paper](https://doi.org/10.1145/3404835.3462804) | 9,144,553 |

| [GOOAQ: Open Question Answering with Diverse Answer Types](https://github.com/allenai/gooaq) | [paper](https://arxiv.org/pdf/2104.08727.pdf) | 3,012,496 |

| [Yahoo Answers](https://www.kaggle.com/soumikrakshit/yahoo-answers-dataset) (Title, Answer) | [paper](https://proceedings.neurips.cc/paper/2015/hash/250cf8b51c773f3f8dc8b4be867a9a02-Abstract.html) | 1,198,260 |

| [Code Search](https://huggingface.co/datasets/code_search_net) | - | 1,151,414 |

| [COCO](https://cocodataset.org/#home) Image captions | [paper](https://link.springer.com/chapter/10.1007%2F978-3-319-10602-1_48) | 828,395|

| [SPECTER](https://github.com/allenai/specter) citation triplets | [paper](https://doi.org/10.18653/v1/2020.acl-main.207) | 684,100 |

| [Yahoo Answers](https://www.kaggle.com/soumikrakshit/yahoo-answers-dataset) (Question, Answer) | [paper](https://proceedings.neurips.cc/paper/2015/hash/250cf8b51c773f3f8dc8b4be867a9a02-Abstract.html) | 681,164 |

| [Yahoo Answers](https://www.kaggle.com/soumikrakshit/yahoo-answers-dataset) (Title, Question) | [paper](https://proceedings.neurips.cc/paper/2015/hash/250cf8b51c773f3f8dc8b4be867a9a02-Abstract.html) | 659,896 |

| [SearchQA](https://huggingface.co/datasets/search_qa) | [paper](https://arxiv.org/abs/1704.05179) | 582,261 |

| [Eli5](https://huggingface.co/datasets/eli5) | [paper](https://doi.org/10.18653/v1/p19-1346) | 325,475 |

| [Flickr 30k](https://shannon.cs.illinois.edu/DenotationGraph/) | [paper](https://transacl.org/ojs/index.php/tacl/article/view/229/33) | 317,695 |

| [Stack Exchange](https://huggingface.co/datasets/flax-sentence-embeddings/stackexchange_xml) Duplicate questions (titles) | | 304,525 |

| AllNLI ([SNLI](https://nlp.stanford.edu/projects/snli/) and [MultiNLI](https://cims.nyu.edu/~sbowman/multinli/) | [paper SNLI](https://doi.org/10.18653/v1/d15-1075), [paper MultiNLI](https://doi.org/10.18653/v1/n18-1101) | 277,230 |

| [Stack Exchange](https://huggingface.co/datasets/flax-sentence-embeddings/stackexchange_xml) Duplicate questions (bodies) | | 250,519 |

| [Stack Exchange](https://huggingface.co/datasets/flax-sentence-embeddings/stackexchange_xml) Duplicate questions (titles+bodies) | | 250,460 |

| [Sentence Compression](https://github.com/google-research-datasets/sentence-compression) | [paper](https://www.aclweb.org/anthology/D13-1155/) | 180,000 |

| [Wikihow](https://github.com/pvl/wikihow_pairs_dataset) | [paper](https://arxiv.org/abs/1810.09305) | 128,542 |

| [Altlex](https://github.com/chridey/altlex/) | [paper](https://aclanthology.org/P16-1135.pdf) | 112,696 |

| [Quora Question Triplets](https://quoradata.quora.com/First-Quora-Dataset-Release-Question-Pairs) | - | 103,663 |

| [Simple Wikipedia](https://cs.pomona.edu/~dkauchak/simplification/) | [paper](https://www.aclweb.org/anthology/P11-2117/) | 102,225 |

| [Natural Questions (NQ)](https://ai.google.com/research/NaturalQuestions) | [paper](https://transacl.org/ojs/index.php/tacl/article/view/1455) | 100,231 |

| [SQuAD2.0](https://rajpurkar.github.io/SQuAD-explorer/) | [paper](https://aclanthology.org/P18-2124.pdf) | 87,599 |

| [TriviaQA](https://huggingface.co/datasets/trivia_qa) | - | 73,346 |

| **Total** | | **1,124,818,467** |

|

satyaalmasian/temporal_tagger_BERT_tokenclassifier

|

46bdd518ce24100e9eca478d714e145b86a50380

|

2021-09-21T11:23:18.000Z

|

[

"pytorch",

"bert",

"token-classification",

"transformers",

"autotrain_compatible"

] |

token-classification

| false |

satyaalmasian

| null |

satyaalmasian/temporal_tagger_BERT_tokenclassifier

| 460 | 2 |

transformers

| 2,413 |

# BERT based temporal tagged

Token classifier for temporal tagging of plain text using BERT language model. The model is introduced in the paper BERT got a Date: Introducing Transformers to Temporal Tagging and release in this [repository](https://github.com/satya77/Transformer_Temporal_Tagger).

# Model description

BERT is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. We use BERT for token classification to tag the tokens in text with classes:

```

O -- outside of a tag

I-TIME -- inside tag of time

B-TIME -- beginning tag of time

I-DATE -- inside tag of date

B-DATE -- beginning tag of date

I-DURATION -- inside tag of duration

B-DURATION -- beginning tag of duration

I-SET -- inside tag of the set

B-SET -- beginning tag of the set

```

# Intended uses & limitations

This model is best used accompanied with code from the [repository](https://github.com/satya77/Transformer_Temporal_Tagger). Especially for inference, the direct output might be noisy and hard to decipher, in the repository we provide alignment functions and voting strategies for the final output.

# How to use

you can load the model as follows:

```

tokenizer = AutoTokenizer.from_pretrained("satyaalmasian/temporal_tagger_BERT_tokenclassifier", use_fast=False)

model = BertForTokenClassification.from_pretrained("satyaalmasian/temporal_tagger_BERT_tokenclassifier")

```

for inference use:

```

processed_text = tokenizer(input_text, return_tensors="pt")

result = model(**processed_text)

classification= result[0]

```

for an example with post-processing, refer to the [repository](https://github.com/satya77/Transformer_Temporal_Tagger).

We provide a function `merge_tokens` to decipher the output.

to further fine-tune, use the `Trainer` from hugginface. An example of a similar fine-tuning can be found [here](https://github.com/satya77/Transformer_Temporal_Tagger/blob/master/run_token_classifier.py).

#Training data

We use 3 data sources:

[Tempeval-3](https://www.cs.york.ac.uk/semeval-2013/task1/index.php%3Fid=data.html), Wikiwars, Tweets datasets. For the correct data versions please refer to our [repository](https://github.com/satya77/Transformer_Temporal_Tagger).

#Training procedure

The model is trained from publicly available checkpoints on huggingface (`bert-base-uncased`), with a batch size of 34. We use a learning rate of 5e-05 with an Adam optimizer and linear weight decay.

We fine-tune with 5 different random seeds, this version of the model is the only seed=4.

For training, we use 2 NVIDIA A100 GPUs with 40GB of memory.

|

pranaydeeps/Ancient-Greek-BERT

|

db7bf93218e0fdaf880320bd4968aab1efdbf9f6

|

2021-09-24T15:07:58.000Z

|

[

"pytorch",

"bert",

"feature-extraction",

"transformers"

] |

feature-extraction

| false |

pranaydeeps

| null |

pranaydeeps/Ancient-Greek-BERT

| 459 | 2 |

transformers

| 2,414 |

# Ancient Greek BERT

<img src="https://ichef.bbci.co.uk/images/ic/832xn/p02m4gzb.jpg"/>

The first and only available Ancient Greek sub-word BERT model!

State-of-the-art post fine-tuning on Part-of-Speech Tagging and Morphological Analysis.

Pre-trained weights are made available for a standard 12 layer, 768d BERT-base model.

Further scripts for using the model and fine-tuning it for PoS Tagging are available on our [Github repository](https://github.com/pranaydeeps/Ancient-Greek-BERT)!

Please refer to our paper titled: "A Pilot Study for BERT Language Modelling and Morphological Analysis for Ancient and Medieval Greek". In Proceedings of The 5th Joint SIGHUM Workshop on Computational Linguistics for Cultural Heritage, Social Sciences, Humanities and Literature (LaTeCH-CLfL 2021)

## How to use

Requirements:

```python

pip install transformers

pip install unicodedata

pip install flair

```

Can be directly used from the HuggingFace Model Hub with:

```python

from transformers import AutoTokenizer, AutoModel

tokeniser = AutoTokenizer.from_pretrained("pranaydeeps/Ancient-Greek-BERT")

model = AutoModel.from_pretrained("pranaydeeps/Ancient-Greek-BERT")

```

## Fine-tuning for POS/Morphological Analysis

Please refer the GitHub repository for the code and details regarding fine-tuning

## Training data

The model was initialised from [AUEB NLP Group's Greek BERT](https://huggingface.co/nlpaueb/bert-base-greek-uncased-v1)

and subsequently trained on monolingual data from the First1KGreek Project, Perseus Digital Library, PROIEL Treebank and

Gorman's Treebank

## Training and Eval details

Standard de-accentuating and lower-casing for Greek as suggested in [AUEB NLP Group's Greek BERT](https://huggingface.co/nlpaueb/bert-base-greek-uncased-v1)

The model was trained on 4 NVIDIA Tesla V100 16GB GPUs for 80 epochs, with a max-seq-len of 512 and results in a perplexity of 4.8 on the held out test set.

It also gives state-of-the-art results when fine-tuned for PoS Tagging and Morphological Analysis on all 3 treebanks averaging >90% accuracy. Please consult our paper or contact [me](mailto:[email protected]) for further questions!

## Cite

If you end up using Ancient-Greek-BERT in your research, please cite the paper:

```

@inproceedings{ancient-greek-bert,

author = {Singh, Pranaydeep and Rutten, Gorik and Lefever, Els},

title = {A Pilot Study for BERT Language Modelling and Morphological Analysis for Ancient and Medieval Greek},

year = {2021},

booktitle = {The 5th Joint SIGHUM Workshop on Computational Linguistics for Cultural Heritage, Social Sciences, Humanities and Literature (LaTeCH-CLfL 2021)}

}

```

|

BSC-TeMU/roberta-base-bne

|

052845e3a3abcabb150e4724d2c85f0ab59dd67e

|

2021-10-21T10:30:31.000Z

|

[

"pytorch",

"roberta",

"fill-mask",

"es",

"dataset:bne",

"arxiv:1907.11692",

"arxiv:2107.07253",

"transformers",

"national library of spain",

"spanish",

"bne",

"license:apache-2.0",

"autotrain_compatible"

] |

fill-mask

| false |

BSC-TeMU

| null |

BSC-TeMU/roberta-base-bne

| 457 | 8 |

transformers

| 2,415 |

---

language:

- es

license: apache-2.0

tags:

- "national library of spain"

- "spanish"

- "bne"

datasets:

- "bne"

metrics:

- "ppl"

widget:

- text: "Este año las campanadas de La Sexta las presentará <mask>."

- text: "David Broncano es un presentador de La <mask>."

- text: "Gracias a los datos de la BNE se ha podido <mask> este modelo del lenguaje."

- text: "Hay base legal dentro del marco <mask> actual."

---

**⚠️NOTICE⚠️: THIS MODEL HAS BEEN MOVED TO THE FOLLOWING URL AND WILL SOON BE REMOVED:** https://huggingface.co/PlanTL-GOB-ES/roberta-base-bne

# RoBERTa base trained with data from National Library of Spain (BNE)

## Model Description

RoBERTa-base-bne is a transformer-based masked language model for the Spanish language. It is based on the [RoBERTa](https://arxiv.org/abs/1907.11692) base model and has been pre-trained using the largest Spanish corpus known to date, with a total of 570GB of clean and deduplicated text processed for this work, compiled from the web crawlings performed by the [National Library of Spain (Biblioteca Nacional de España)](http://www.bne.es/en/Inicio/index.html) from 2009 to 2019.

## Training corpora and preprocessing

The [National Library of Spain (Biblioteca Nacional de España)](http://www.bne.es/en/Inicio/index.html) crawls all .es domains once a year. The training corpus consists of 59TB of WARC files from these crawls, carried out from 2009 to 2019.

To obtain a high-quality training corpus, the corpus has been preprocessed with a pipeline of operations, including among the others, sentence splitting, language detection, filtering of bad-formed sentences and deduplication of repetitive contents. During the process document boundaries are kept. This resulted into 2TB of Spanish clean corpus. Further global deduplication among the corpus is applied, resulting into 570GB of text.

Some of the statistics of the corpus:

| Corpora | Number of documents | Number of tokens | Size (GB) |

|---------|---------------------|------------------|-----------|

| BNE | 201,080,084 | 135,733,450,668 | 570GB |

## Tokenization and pre-training

The training corpus has been tokenized using a byte version of Byte-Pair Encoding (BPE) used in the original [RoBERTA](https://arxiv.org/abs/1907.11692) model with a vocabulary size of 50,262 tokens. The RoBERTa-base-bne pre-training consists of a masked language model training that follows the approach employed for the RoBERTa base. The training lasted a total of 48 hours with 16 computing nodes each one with 4 NVIDIA V100 GPUs of 16GB VRAM.

## Evaluation and results

For evaluation details visit our [GitHub repository](https://github.com/PlanTL-SANIDAD/lm-spanish).

## Citing

Check out our paper for all the details: https://arxiv.org/abs/2107.07253

```

@misc{gutierrezfandino2021spanish,

title={Spanish Language Models},

author={Asier Gutiérrez-Fandiño and Jordi Armengol-Estapé and Marc Pàmies and Joan Llop-Palao and Joaquín Silveira-Ocampo and Casimiro Pio Carrino and Aitor Gonzalez-Agirre and Carme Armentano-Oller and Carlos Rodriguez-Penagos and Marta Villegas},

year={2021},

eprint={2107.07253},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

flax-community/gpt-2-spanish

|

359ff61122561956ce53fc4caaf660b9d66a5248

|

2022-04-22T11:16:44.000Z

|

[

"pytorch",

"jax",

"tensorboard",

"gpt2",

"text-generation",

"es",

"dataset:oscar",

"transformers"

] |

text-generation

| false |

flax-community

| null |

flax-community/gpt-2-spanish

| 457 | 1 |

transformers

| 2,416 |

---

language: es

tags:

- text-generation

datasets:

- oscar

widgets:

- text: "Érase un vez "

- text: "Frase: Esta película es muy agradable. Sentimiento: positivo

Frase: Odiaba esta película, apesta. Sentimiento: negativo

Frase: Esta película fue bastante mala. Sentimiento: "

---

# Spanish GPT-2

GPT-2 model trained from scratch on the Spanish portion of [OSCAR](https://huggingface.co/datasets/viewer/?dataset=oscar).

The model is trained with Flax and using TPUs sponsored by Google since this is part of the

[Flax/Jax Community Week](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104)

organised by HuggingFace.

## Model description

The model used for training is [OpenAI's GPT-2](https://openai.com/blog/better-language-models/), introduced in the paper ["Language Models are Unsupervised Multitask Learners"](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf) by Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei and Ilya Sutskever.

This model is available in the 🤗 [Model Hub](https://huggingface.co/gpt2).

## Training data

Spanish portion of OSCAR or **O**pen **S**uper-large **C**rawled **A**LMAnaCH co**R**pus, a huge multilingual corpus obtained by language classification and filtering of the [Common Crawl](https://commoncrawl.org/) corpus using the [goclassy](https://github.com/pjox/goclassy) architecture.

This corpus is available in the 🤗 [Datasets](https://huggingface.co/datasets/oscar) library.

## Team members

- Manuel Romero ([mrm8488](https://huggingface.co/mrm8488))

- María Grandury ([mariagrandury](https://huggingface.co/mariagrandury))

- Pablo González de Prado ([Pablogps](https://huggingface.co/Pablogps))

- Daniel Vera ([daveni](https://huggingface.co/daveni))

- Sri Lakshmi ([srisweet](https://huggingface.co/srisweet))

- José Posada ([jdposa](https://huggingface.co/jdposa))

- Santiago Hincapie ([shpotes](https://huggingface.co/shpotes))

- Jorge ([jorgealro](https://huggingface.co/jorgealro))

|

izumi-lab/bert-small-japanese-fin

|

fe4d803446cc47c33233b187b221585fc428d5c8

|

2022-03-19T09:38:08.000Z

|

[

"pytorch",

"bert",

"fill-mask",

"ja",

"dataset:wikipedia",

"dataset:securities reports",

"dataset:summaries of financial results",

"arxiv:2003.10555",

"transformers",

"finance",

"license:cc-by-sa-4.0",

"autotrain_compatible"

] |

fill-mask

| false |

izumi-lab

| null |

izumi-lab/bert-small-japanese-fin

| 456 | null |

transformers

| 2,417 |

---

language: ja

license: cc-by-sa-4.0

tags:

- finance

datasets:

- wikipedia

- securities reports

- summaries of financial results

widget:

- text: 流動[MASK]は、1億円となりました。

---

# BERT small Japanese finance

This is a [BERT](https://github.com/google-research/bert) model pretrained on texts in the Japanese language.

The codes for the pretraining are available at [retarfi/language-pretraining](https://github.com/retarfi/language-pretraining/tree/v1.0).

## Model architecture

The model architecture is the same as BERT small in the [original ELECTRA paper](https://arxiv.org/abs/2003.10555); 12 layers, 256 dimensions of hidden states, and 4 attention heads.

## Training Data

The models are trained on Wikipedia corpus and financial corpus.

The Wikipedia corpus is generated from the Japanese Wikipedia dump file as of June 1, 2021.

The corpus file is 2.9GB, consisting of approximately 20M sentences.

The financial corpus consists of 2 corpora:

- Summaries of financial results from October 9, 2012, to December 31, 2020

- Securities reports from February 8, 2018, to December 31, 2020

The financial corpus file is 5.2GB, consisting of approximately 27M sentences.

## Tokenization

The texts are first tokenized by MeCab with IPA dictionary and then split into subwords by the WordPiece algorithm.

The vocabulary size is 32768.

## Training

The models are trained with the same configuration as BERT small in the [original ELECTRA paper](https://arxiv.org/abs/2003.10555); 128 tokens per instance, 128 instances per batch, and 1.45M training steps.

## Citation

**There will be another paper for this pretrained model. Be sure to check here again when you cite.**

```

@inproceedings{suzuki2021fin-bert-electra,

title={金融文書を用いた事前学習言語モデルの構築と検証},

% title={Construction and Validation of a Pre-Trained Language Model Using Financial Documents},

author={鈴木 雅弘 and 坂地 泰紀 and 平野 正徳 and 和泉 潔},

% author={Masahiro Suzuki and Hiroki Sakaji and Masanori Hirano and Kiyoshi Izumi},

booktitle={人工知能学会第27回金融情報学研究会(SIG-FIN)},

% booktitle={Proceedings of JSAI Special Interest Group on Financial Infomatics (SIG-FIN) 27},

pages={5-10},

year={2021}

}

```

## Licenses

The pretrained models are distributed under the terms of the [Creative Commons Attribution-ShareAlike 4.0](https://creativecommons.org/licenses/by-sa/4.0/).

## Acknowledgments

This work was supported by JSPS KAKENHI Grant Number JP21K12010.

|

nvidia/stt_en_conformer_ctc_large

|

bf01f044a18d14ccba90d7de497e6664fa68a669

|

2022-06-25T01:04:09.000Z

|

[

"nemo",

"en",

"dataset:librispeech_asr",

"dataset:fisher_corpus",

"dataset:Switchboard-1",

"dataset:WSJ-0",

"dataset:WSJ-1",

"dataset:National Singapore Corpus Part 1",

"dataset:National Singapore Corpus Part 6",

"dataset:vctk",

"dataset:VoxPopuli (EN)",

"dataset:Europarl-ASR (EN)",

"dataset:Multilingual LibriSpeech (2000 hours)",

"dataset:mozilla-foundation/common_voice_7_0",

"arxiv:2005.08100",

"automatic-speech-recognition",

"speech",

"audio",

"CTC",

"Conformer",

"Transformer",

"pytorch",

"NeMo",

"hf-asr-leaderboard",

"Riva",

"license:cc-by-4.0",

"model-index"

] |

automatic-speech-recognition

| false |

nvidia

| null |

nvidia/stt_en_conformer_ctc_large

| 456 | 8 |

nemo

| 2,418 |

---

language:

- en

library_name: nemo

datasets:

- librispeech_asr

- fisher_corpus

- Switchboard-1

- WSJ-0

- WSJ-1

- National Singapore Corpus Part 1

- National Singapore Corpus Part 6

- vctk

- VoxPopuli (EN)

- Europarl-ASR (EN)

- Multilingual LibriSpeech (2000 hours)

- mozilla-foundation/common_voice_7_0

thumbnail: null

tags:

- automatic-speech-recognition

- speech

- audio

- CTC

- Conformer

- Transformer

- pytorch

- NeMo

- hf-asr-leaderboard

- Riva

license: cc-by-4.0

widget:

- example_title: Librispeech sample 1

src: https://cdn-media.huggingface.co/speech_samples/sample1.flac

- example_title: Librispeech sample 2

src: https://cdn-media.huggingface.co/speech_samples/sample2.flac

model-index:

- name: stt_en_conformer_ctc_large

results:

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: LibriSpeech (clean)

type: librispeech_asr

config: clean

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 2.2

- task:

type: Automatic Speech Recognition

name: automatic-speech-recognition

dataset:

name: LibriSpeech (other)

type: librispeech_asr

config: other

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 4.3

- task:

type: Automatic Speech Recognition

name: automatic-speech-recognition

dataset:

name: Multilingual LibriSpeech

type: facebook/multilingual_librispeech

config: english

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 7.2

- task:

type: Automatic Speech Recognition

name: automatic-speech-recognition

dataset:

name: Mozilla Common Voice 7.0

type: mozilla-foundation/common_voice_7_0

config: en

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 8.0

- task:

type: Automatic Speech Recognition

name: automatic-speech-recognition

dataset:

name: Mozilla Common Voice 8.0

type: mozilla-foundation/common_voice_8_0

config: en

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 9.48

- task:

type: Automatic Speech Recognition

name: automatic-speech-recognition

dataset:

name: Wall Street Journal 92

type: wsj_0

args:

language: en

metrics:

- name: Test WER

type: wer

value: 2.0

- task: