modelId (string, length 4 to 112) | sha (string, length 40) | lastModified (string, length 24) | tags (list) | pipeline_tag (string, 29 classes) | private (bool, 1 class) | author (string, length 2 to 38, nullable) | config (null) | id (string, length 4 to 112) | downloads (float64, 0 to 36.8M, nullable) | likes (float64, 0 to 712, nullable) | library_name (string, 17 classes) | __index_level_0__ (int64, 0 to 38.5k) | readme (string, length 0 to 186k) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

sileod/roberta-base-mnli

|

86d5eb9545d2276806ce7290e670134a65e95e84

|

2022-05-31T10:08:10.000Z

|

[

"pytorch",

"roberta",

"text-classification",

"dataset:multi_nli",

"transformers",

"generated_from_trainer",

"license:mit",

"model-index"

] |

text-classification

| false |

sileod

| null |

sileod/roberta-base-mnli

| 516 | 1 |

transformers

| 2,300 |

---

license: mit

tags:

- generated_from_trainer

datasets:

- multi_nli

metrics:

- accuracy

model-index:

- name: roberta-base-mnli

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: multi_nli

type: multi_nli

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.8719307182883341

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# roberta-base-mnli

This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the multi_nli dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4661

- Accuracy: 0.8719

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 0

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 3
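As a rough illustration of how these settings interact, the sketch below derives the total and warmup step counts and a linear warmup/decay learning-rate curve from the hyperparameters above and the 24,544 steps per epoch reported in the training results table. The function name `linear_lr` is hypothetical, and rounding the warmup length up with `math.ceil` is an assumption about the scheduler, not something this card states.

```python
import math

LEARNING_RATE = 3e-05    # learning_rate from the card
WARMUP_RATIO = 0.06      # lr_scheduler_warmup_ratio from the card
NUM_EPOCHS = 3           # num_epochs from the card
STEPS_PER_EPOCH = 24544  # step count at epoch 1.0 in the results table

TOTAL_STEPS = NUM_EPOCHS * STEPS_PER_EPOCH
# Assumption: warmup length is the ratio times the total steps, rounded up.
WARMUP_STEPS = math.ceil(WARMUP_RATIO * TOTAL_STEPS)


def linear_lr(step: int) -> float:
    """Learning rate at a given step: linear warmup, then linear decay to zero."""
    if step < WARMUP_STEPS:
        return LEARNING_RATE * (step / WARMUP_STEPS)
    remaining = max(0, TOTAL_STEPS - step)
    return LEARNING_RATE * (remaining / (TOTAL_STEPS - WARMUP_STEPS))


if __name__ == "__main__":
    print("total steps:", TOTAL_STEPS, "warmup steps:", WARMUP_STEPS)
    for step in (0, WARMUP_STEPS, STEPS_PER_EPOCH, TOTAL_STEPS):
        print(step, linear_lr(step))
```

Under these assumptions the learning rate peaks at the end of warmup and reaches zero exactly at the final training step.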

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.4172 | 1.0 | 24544 | 0.4175 | 0.8508 |

| 0.3324 | 2.0 | 49088 | 0.4146 | 0.8609 |

| 0.2191 | 3.0 | 73632 | 0.4661 | 0.8719 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu102

- Datasets 2.0.0

- Tokenizers 0.11.6

|

nvidia/mit-b2

|

44acc700d01cdfdac6f5c236e69da847985eaac3

|

2022-07-29T13:15:51.000Z

|

[

"pytorch",

"tf",

"segformer",

"image-classification",

"dataset:imagenet_1k",

"arxiv:2105.15203",

"transformers",

"vision",

"license:apache-2.0"

] |

image-classification

| false |

nvidia

| null |

nvidia/mit-b2

| 515 | null |

transformers

| 2,301 |

---

license: apache-2.0

tags:

- vision

datasets:

- imagenet_1k

widget:

- src: https://huggingface.co/datasets/hf-internal-testing/fixtures_ade20k/resolve/main/ADE_val_00000001.jpg

example_title: House

- src: https://huggingface.co/datasets/hf-internal-testing/fixtures_ade20k/resolve/main/ADE_val_00000002.jpg

example_title: Castle

---

# SegFormer (b2-sized) encoder pre-trained-only

SegFormer encoder pre-trained on ImageNet-1k. It was introduced in the paper [SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers](https://arxiv.org/abs/2105.15203) by Xie et al. and first released in [this repository](https://github.com/NVlabs/SegFormer).

Disclaimer: The team releasing SegFormer did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

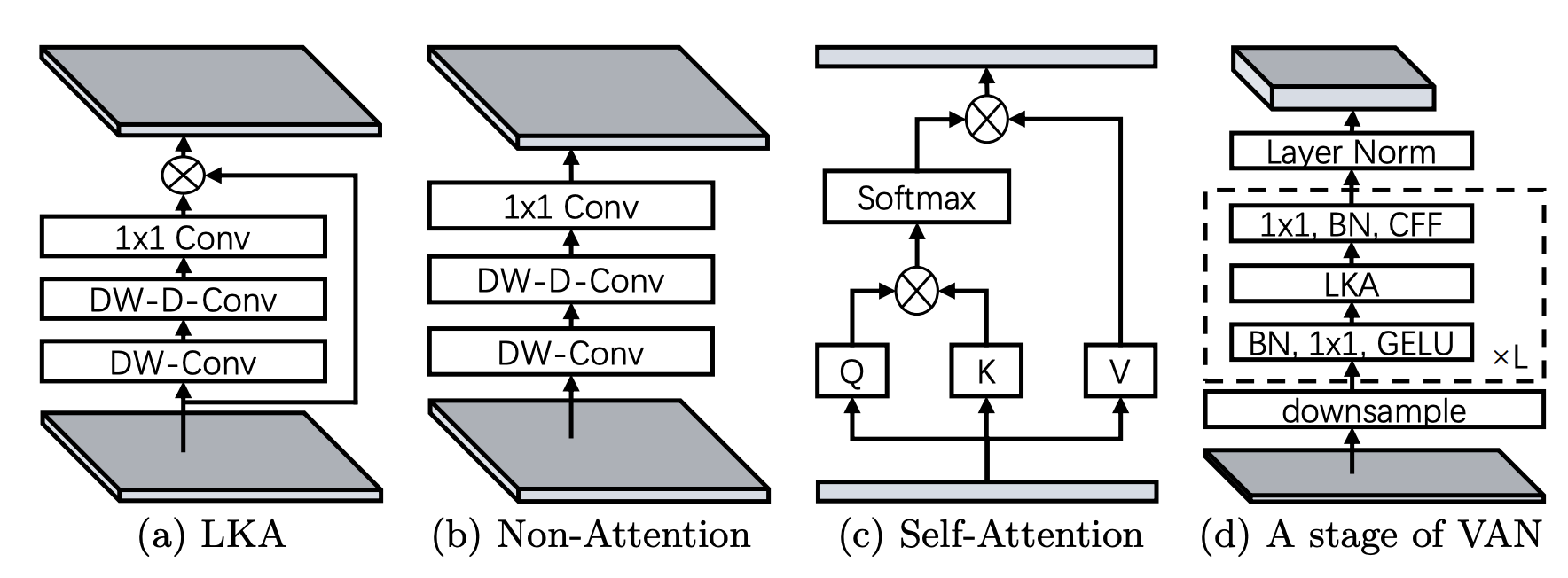

SegFormer consists of a hierarchical Transformer encoder and a lightweight all-MLP decode head to achieve great results on semantic segmentation benchmarks such as ADE20K and Cityscapes. The hierarchical Transformer is first pre-trained on ImageNet-1k, after which a decode head is added and fine-tuned altogether on a downstream dataset.

This repository only contains the pre-trained hierarchical Transformer, hence it can be used for fine-tuning purposes.

## Intended uses & limitations

You can use the model for fine-tuning of semantic segmentation. See the [model hub](https://huggingface.co/models?other=segformer) to look for fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes:

```python

from transformers import SegformerFeatureExtractor, SegformerForImageClassification

from PIL import Image

import requests

url = "http://images.cocodataset.org/val2017/000000039769.jpg"

image = Image.open(requests.get(url, stream=True).raw)

feature_extractor = SegformerFeatureExtractor.from_pretrained("nvidia/mit-b2")

model = SegformerForImageClassification.from_pretrained("nvidia/mit-b2")

inputs = feature_extractor(images=image, return_tensors="pt")

outputs = model(**inputs)

logits = outputs.logits

# model predicts one of the 1000 ImageNet classes

predicted_class_idx = logits.argmax(-1).item()

print("Predicted class:", model.config.id2label[predicted_class_idx])

```

For more code examples, we refer to the [documentation](https://huggingface.co/transformers/model_doc/segformer.html#).

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-2105-15203,

author = {Enze Xie and

Wenhai Wang and

Zhiding Yu and

Anima Anandkumar and

Jose M. Alvarez and

Ping Luo},

title = {SegFormer: Simple and Efficient Design for Semantic Segmentation with

Transformers},

journal = {CoRR},

volume = {abs/2105.15203},

year = {2021},

url = {https://arxiv.org/abs/2105.15203},

eprinttype = {arXiv},

eprint = {2105.15203},

timestamp = {Wed, 02 Jun 2021 11:46:42 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-2105-15203.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

|

prajjwal1/bert-mini-mnli

|

2793a188a2d6f995f9e6a5f73d9dd8b7a3a3aaa6

|

2021-10-05T17:57:20.000Z

|

[

"pytorch",

"jax",

"bert",

"text-classification",

"arxiv:1908.08962",

"arxiv:2110.01518",

"transformers"

] |

text-classification

| false |

prajjwal1

| null |

prajjwal1/bert-mini-mnli

| 515 | null |

transformers

| 2,302 |

This is a PyTorch pre-trained model obtained by converting the TensorFlow checkpoint found in the [official Google BERT repository](https://github.com/google-research/bert). These BERT variants were introduced in the paper [Well-Read Students Learn Better: On the Importance of Pre-training Compact Models](https://arxiv.org/abs/1908.08962). These models are trained on MNLI.

If you use the model, please consider citing the paper

```

@misc{bhargava2021generalization,

title={Generalization in NLI: Ways (Not) To Go Beyond Simple Heuristics},

author={Prajjwal Bhargava and Aleksandr Drozd and Anna Rogers},

year={2021},

eprint={2110.01518},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

The original implementation and more information can be found in [this GitHub repository](https://github.com/prajjwal1/generalize_lm_nli).

```

MNLI: 68.04%

MNLI-mm: 69.17%

```

These models were trained for 4 epochs.

[@prajjwal_1](https://twitter.com/prajjwal_1)

|

Biasface/DDDC

|

4481ffe566e96900e4b4e4df6ebc815524295bbf

|

2021-11-30T17:30:53.000Z

|

[

"pytorch",

"gpt2",

"text-generation",

"transformers",

"conversational"

] |

conversational

| false |

Biasface

| null |

Biasface/DDDC

| 513 | null |

transformers

| 2,303 |

---

tags:

- conversational

---

#hi

|

studio-ousia/mluke-base

|

0f3c9dc42873eaf0e807bd2736bc4cfbe73de3b2

|

2022-03-11T02:58:43.000Z

|

[

"pytorch",

"luke",

"fill-mask",

"multilingual",

"transformers",

"named entity recognition",

"relation classification",

"question answering",

"license:apache-2.0",

"autotrain_compatible"

] |

fill-mask

| false |

studio-ousia

| null |

studio-ousia/mluke-base

| 513 | 3 |

transformers

| 2,304 |

---

language: multilingual

thumbnail: https://github.com/studio-ousia/luke/raw/master/resources/luke_logo.png

tags:

- luke

- named entity recognition

- relation classification

- question answering

license: apache-2.0

---

## mLUKE

**mLUKE** (multilingual LUKE) is a multilingual extension of LUKE.

Please check the [official repository](https://github.com/studio-ousia/luke) for

more details and updates.

This is the mLUKE base model with 12 hidden layers and a hidden size of 768. The total number of parameters in this model is 585M (278M for the word embeddings and encoder, 307M for the entity embeddings).

The model was initialized with the weights of XLM-RoBERTa (base) and trained on the December 2020 version of Wikipedia in 24 languages.

### Citation

If you find mLUKE useful for your work, please cite the following paper:

```latex

@inproceedings{ri2021mluke,

title={mLUKE: The Power of Entity Representations in Multilingual Pretrained Language Models},

author={Ryokan Ri, Ikuya Yamada, Yoshimasa Tsuruoka},

booktitle={arXiv},

year={2021}

}

```

|

DeepPavlov/distilrubert-small-cased-conversational

|

e348066b4a7279b97138038299bddc6580a9169a

|

2022-06-28T17:19:09.000Z

|

[

"pytorch",

"distilbert",

"ru",

"arxiv:2205.02340",

"transformers"

] | null | false |

DeepPavlov

| null |

DeepPavlov/distilrubert-small-cased-conversational

| 513 | null |

transformers

| 2,305 |

---

language:

- ru

---

# distilrubert-small-cased-conversational

Conversational DistilRuBERT-small \(Russian, cased, 2‑layer, 768‑hidden, 12‑heads, 107M parameters\) was trained on OpenSubtitles\[1\], [Dirty](https://d3.ru/), [Pikabu](https://pikabu.ru/), and a Social Media segment of Taiga corpus\[2\] (as [Conversational RuBERT](https://huggingface.co/DeepPavlov/rubert-base-cased-conversational)). It can be considered a small copy of [Conversational DistilRuBERT-base](https://huggingface.co/DeepPavlov/distilrubert-base-cased-conversational).

Our DistilRuBERT-small was highly inspired by \[3\], \[4\]. Namely, we used

* KL loss (between teacher and student output logits)

* MLM loss (between tokens labels and student output logits)

* Cosine embedding loss (between averaged six consecutive hidden states from teacher's encoder and one hidden state of the student)

* MSE loss (between averaged six consecutive attention maps from teacher's encoder and one attention map of the student)
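To make two of the loss terms above concrete, here is a minimal pure-Python sketch of a KL loss between teacher and student logits and a cosine embedding loss against an average of teacher hidden states. The helper names, the `temperature` parameter and the toy vectors are all assumptions for illustration; the card does not show the authors' implementation.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_loss(teacher_logits, student_logits, temperature=1.0):
    """KL(teacher || student) between the two softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    # Toy-sized inputs only: q is assumed not to underflow to zero.
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)


def mean_vector(vectors):
    """Element-wise average of several equally sized vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def cosine_embedding_loss(teacher_hidden, student_hidden):
    """1 - cosine similarity between two hidden-state vectors."""
    dot = sum(t * s for t, s in zip(teacher_hidden, student_hidden))
    norm_t = math.sqrt(sum(t * t for t in teacher_hidden))
    norm_s = math.sqrt(sum(s * s for s in student_hidden))
    return 1.0 - dot / (norm_t * norm_s)


if __name__ == "__main__":
    # Hypothetical toy values: six teacher hidden states versus one student state.
    teacher_states = [[1.0, 0.0], [0.9, 0.1], [1.1, -0.1],
                      [1.0, 0.2], [0.8, 0.0], [1.2, -0.2]]
    student_state = [1.0, 0.1]
    print(kl_loss([2.0, 0.5, -1.0], [1.5, 0.7, -0.8], temperature=2.0))
    print(cosine_embedding_loss(mean_vector(teacher_states), student_state))
```

Both terms are zero when the student matches the teacher exactly and grow as the two diverge.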

The model was trained for about 80 hrs. on 8 nVIDIA Tesla P100-SXM2.0 16Gb.

To evaluate improvements in the inference speed, we ran teacher and student models on random sequences with seq_len=512, batch_size = 16 (for throughput) and batch_size=1 (for latency).

All tests were performed on Intel(R) Xeon(R) CPU E5-2698 v4 @ 2.20GHz and nVIDIA Tesla P100-SXM2.0 16Gb.

| Model | Size, Mb. | CPU latency, sec.| GPU latency, sec. | CPU throughput, samples/sec. | GPU throughput, samples/sec. |

|-------------------------------------------------|------------|------------------|-------------------|------------------------------|------------------------------|

| Teacher (RuBERT-base-cased-conversational) | 679 | 0.655 | 0.031 | 0.3754 | 36.4902 |

| Student (DistilRuBERT-small-cased-conversational)| 409 | 0.1656 | 0.015 | 0.9692 | 71.3553 |
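The relative gains implied by the table can be worked out directly. The short sketch below copies the figures from the two rows and computes teacher-to-student ratios; the dictionary keys and the `improvement_ratios` helper are hypothetical names chosen for this example.

```python
# Figures copied from the benchmark table above.
TEACHER = {"size_mb": 679, "cpu_latency_s": 0.655, "gpu_latency_s": 0.031,
           "cpu_throughput": 0.3754, "gpu_throughput": 36.4902}
STUDENT = {"size_mb": 409, "cpu_latency_s": 0.1656, "gpu_latency_s": 0.015,
           "cpu_throughput": 0.9692, "gpu_throughput": 71.3553}


def improvement_ratios(teacher, student):
    """Return how many times better the student is on each metric."""
    ratios = {}
    for key, teacher_value in teacher.items():
        if key.endswith("throughput"):
            # Higher is better: student divided by teacher.
            ratios[key] = student[key] / teacher_value
        else:
            # Lower is better (size, latency): teacher divided by student.
            ratios[key] = teacher_value / student[key]
    return ratios


if __name__ == "__main__":
    for metric, ratio in improvement_ratios(TEACHER, STUDENT).items():
        print(f"{metric}: {ratio:.2f}x")
```

On these numbers the student has roughly four times lower CPU latency and about two times lower GPU latency than the teacher.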

To evaluate model quality, we fine-tuned DistilRuBERT-small on classification, NER and question answering tasks. Scores and archives with fine-tuned models can be found in [DeepPavlov docs](http://docs.deeppavlov.ai/en/master/features/overview.html#models). The [paper](https://arxiv.org/abs/2205.02340) also reports these results in Tables 1 and 2, along with performance benchmarks and training details.

# Citation

If you found the model useful for your research, we kindly ask you to cite [this](https://arxiv.org/abs/2205.02340) paper:

```

@misc{https://doi.org/10.48550/arxiv.2205.02340,

doi = {10.48550/ARXIV.2205.02340},

url = {https://arxiv.org/abs/2205.02340},

author = {Kolesnikova, Alina and Kuratov, Yuri and Konovalov, Vasily and Burtsev, Mikhail},

keywords = {Computation and Language (cs.CL), Machine Learning (cs.LG), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {Knowledge Distillation of Russian Language Models with Reduction of Vocabulary},

publisher = {arXiv},

year = {2022},

copyright = {arXiv.org perpetual, non-exclusive license}

}

```

\[1\]: P. Lison and J. Tiedemann, 2016, OpenSubtitles2016: Extracting Large Parallel Corpora from Movie and TV Subtitles. In Proceedings of the 10th International Conference on Language Resources and Evaluation \(LREC 2016\)

\[2\]: Shavrina T., Shapovalova O. \(2017\) TO THE METHODOLOGY OF CORPUS CONSTRUCTION FOR MACHINE LEARNING: «TAIGA» SYNTAX TREE CORPUS AND PARSER. in proc. of “CORPORA2017”, international conference , Saint-Petersbourg, 2017.

\[3\]: Sanh, V., Debut, L., Chaumond, J., & Wolf, T. \(2019\). DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter. arXiv preprint arXiv:1910.01108.

\[4\]: <https://github.com/huggingface/transformers/tree/master/examples/research_projects/distillation>

|

tner/xlm-roberta-large-uncased-wnut2017

|

d2f13491ebb59b477fa61dc0224d88daf851513f

|

2021-02-13T00:12:33.000Z

|

[

"pytorch",

"xlm-roberta",

"token-classification",

"transformers",

"autotrain_compatible"

] |

token-classification

| false |

tner

| null |

tner/xlm-roberta-large-uncased-wnut2017

| 512 | null |

transformers

| 2,306 |

# XLM-RoBERTa for NER

XLM-RoBERTa fine-tuned on NER. See the [TNER repository](https://github.com/asahi417/tner) for more details.

## Usage

```

from transformers import AutoTokenizer, AutoModelForTokenClassification

tokenizer = AutoTokenizer.from_pretrained("asahi417/tner-xlm-roberta-large-uncased-wnut2017")

model = AutoModelForTokenClassification.from_pretrained("asahi417/tner-xlm-roberta-large-uncased-wnut2017")

```

|

huggingface/distilbert-base-uncased-finetuned-mnli

|

0fadb1fe60cd119b3af82e2bf9cb98a59336d7bc

|

2021-02-25T20:27:07.000Z

|

[

"pytorch",

"tf",

"distilbert",

"text-classification",

"transformers"

] |

text-classification

| false |

huggingface

| null |

huggingface/distilbert-base-uncased-finetuned-mnli

| 512 | null |

transformers

| 2,307 |

Entry not found

|

SIKU-BERT/sikuroberta

|

bb25260d5c321924fe4fb353c09191c0aaf5c5c6

|

2021-09-22T00:22:36.000Z

|

[

"pytorch",

"bert",

"fill-mask",

"zh",

"transformers",

"chinese",

"classical chinese",

"literary chinese",

"ancient chinese",

"roberta",

"license:apache-2.0",

"autotrain_compatible"

] |

fill-mask

| false |

SIKU-BERT

| null |

SIKU-BERT/sikuroberta

| 511 | 2 |

transformers

| 2,308 |

---

language:

- "zh"

thumbnail: "https://raw.githubusercontent.com/SIKU-BERT/SikuBERT/main/appendix/sikubert.png"

tags:

- "chinese"

- "classical chinese"

- "literary chinese"

- "ancient chinese"

- "bert"

- "roberta"

- "pytorch"

inference: false

license: "apache-2.0"

---

# SikuBERT

## Model description

Digital humanities research needs the support of large-scale corpora and high-performance natural language processing tools for ancient Chinese. Pre-trained language models have greatly improved the accuracy of text mining in English and modern Chinese texts. At present, there is an urgent need for a pre-trained model designed specifically for the automatic processing of ancient texts. Using the verified, high-quality “Siku Quanshu” full-text corpus as the training set and the BERT deep language model architecture as the basis, we constructed the SikuBERT and SikuRoBERTa pre-trained language models for intelligent processing tasks on ancient Chinese.

## How to use

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("SIKU-BERT/sikuroberta")

model = AutoModel.from_pretrained("SIKU-BERT/sikuroberta")

```

## About Us

We are from Nanjing Agricultural University.

> Created by SIKU-BERT ([GitHub](https://github.com/SIKU-BERT/SikuBERT-for-digital-humanities-and-classical-Chinese-information-processing))

|

Helsinki-NLP/opus-mt-ru-fr

|

55c73236818495c7a6dd5a98e3529de3481bc3ae

|

2021-09-10T14:02:31.000Z

|

[

"pytorch",

"jax",

"marian",

"text2text-generation",

"ru",

"fr",

"transformers",

"translation",

"license:apache-2.0",

"autotrain_compatible"

] |

translation

| false |

Helsinki-NLP

| null |

Helsinki-NLP/opus-mt-ru-fr

| 510 | null |

transformers

| 2,309 |

---

tags:

- translation

license: apache-2.0

---

### opus-mt-ru-fr

* source languages: ru

* target languages: fr

* OPUS readme: [ru-fr](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/ru-fr/README.md)

* dataset: opus

* model: transformer-align

* pre-processing: normalization + SentencePiece

* download original weights: [opus-2020-01-26.zip](https://object.pouta.csc.fi/OPUS-MT-models/ru-fr/opus-2020-01-26.zip)

* test set translations: [opus-2020-01-26.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/ru-fr/opus-2020-01-26.test.txt)

* test set scores: [opus-2020-01-26.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/ru-fr/opus-2020-01-26.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| newstest2012.ru.fr | 18.3 | 0.497 |

| newstest2013.ru.fr | 21.6 | 0.516 |

| Tatoeba.ru.fr | 51.5 | 0.670 |

|

KoichiYasuoka/chinese-roberta-base-upos

|

2fcc4e89732370e30451b65e5a7227c78811f0d4

|

2022-02-11T06:28:59.000Z

|

[

"pytorch",

"bert",

"token-classification",

"zh",

"dataset:universal_dependencies",

"transformers",

"chinese",

"pos",

"wikipedia",

"dependency-parsing",

"license:apache-2.0",

"autotrain_compatible"

] |

token-classification

| false |

KoichiYasuoka

| null |

KoichiYasuoka/chinese-roberta-base-upos

| 510 | 2 |

transformers

| 2,310 |

---

language:

- "zh"

tags:

- "chinese"

- "token-classification"

- "pos"

- "wikipedia"

- "dependency-parsing"

datasets:

- "universal_dependencies"

license: "apache-2.0"

pipeline_tag: "token-classification"

---

# chinese-roberta-base-upos

## Model Description

This is a BERT model pre-trained on Chinese Wikipedia texts (both simplified and traditional) for POS-tagging and dependency-parsing, derived from [chinese-roberta-wwm-ext](https://huggingface.co/hfl/chinese-roberta-wwm-ext). Every word is tagged by [UPOS](https://universaldependencies.org/u/pos/) (Universal Part-Of-Speech).

## How to Use

```py

from transformers import AutoTokenizer,AutoModelForTokenClassification

tokenizer=AutoTokenizer.from_pretrained("KoichiYasuoka/chinese-roberta-base-upos")

model=AutoModelForTokenClassification.from_pretrained("KoichiYasuoka/chinese-roberta-base-upos")

```

or

```py

import esupar

nlp=esupar.load("KoichiYasuoka/chinese-roberta-base-upos")

```

## See Also

[esupar](https://github.com/KoichiYasuoka/esupar): Tokenizer POS-tagger and Dependency-parser with BERT/RoBERTa models

|

anton-l/wav2vec2-base-superb-sv

|

0a1a74d00d5e44dbd7344b65c9847a1eb625c73b

|

2021-12-14T12:49:10.000Z

|

[

"pytorch",

"wav2vec2",

"audio-xvector",

"transformers"

] | null | false |

anton-l

| null |

anton-l/wav2vec2-base-superb-sv

| 510 | null |

transformers

| 2,311 |

Entry not found

|

Davlan/bert-base-multilingual-cased-finetuned-yoruba

|

000f80b4509f73bca9a33f9db0573d6f67396a12

|

2022-06-27T11:50:30.000Z

|

[

"pytorch",

"tf",

"jax",

"bert",

"fill-mask",

"yo",

"transformers",

"autotrain_compatible"

] |

fill-mask

| false |

Davlan

| null |

Davlan/bert-base-multilingual-cased-finetuned-yoruba

| 509 | null |

transformers

| 2,312 |

---

language: yo

datasets:

---

# bert-base-multilingual-cased-finetuned-yoruba

## Model description

**bert-base-multilingual-cased-finetuned-yoruba** is a **Yoruba BERT** model obtained by fine-tuning the **bert-base-multilingual-cased** model on Yorùbá language texts. It provides **better performance** than the multilingual BERT on text classification and named entity recognition datasets.

Specifically, this model is a *bert-base-multilingual-cased* model that was fine-tuned on a Yorùbá corpus.

## Intended uses & limitations

#### How to use

You can use this model with Transformers *pipeline* for masked token prediction.

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='Davlan/bert-base-multilingual-cased-finetuned-yoruba')

>>> unmasker("Arẹmọ Phillip to jẹ ọkọ [MASK] Elizabeth to ti wa lori aisan ti dagbere faye lẹni ọdun mọkandilọgọrun")

[{'sequence': '[CLS] Arẹmọ Phillip to jẹ ọkọ Mary Elizabeth to ti wa lori aisan ti dagbere faye lẹni ọdun mọkandilọgọrun [SEP]', 'score': 0.1738305538892746,

'token': 12176,

'token_str': 'Mary'},

{'sequence': '[CLS] Arẹmọ Phillip to jẹ ọkọ Queen Elizabeth to ti wa lori aisan ti dagbere faye lẹni ọdun mọkandilọgọrun [SEP]', 'score': 0.16382873058319092,

'token': 13704,

'token_str': 'Queen'},

{'sequence': '[CLS] Arẹmọ Phillip to jẹ ọkọ ti Elizabeth to ti wa lori aisan ti dagbere faye lẹni ọdun mọkandilọgọrun [SEP]', 'score': 0.13272495567798615,

'token': 14382,

'token_str': 'ti'},

{'sequence': '[CLS] Arẹmọ Phillip to jẹ ọkọ King Elizabeth to ti wa lori aisan ti dagbere faye lẹni ọdun mọkandilọgọrun [SEP]', 'score': 0.12823280692100525,

'token': 11515,

'token_str': 'King'},

{'sequence': '[CLS] Arẹmọ Phillip to jẹ ọkọ Lady Elizabeth to ti wa lori aisan ti dagbere faye lẹni ọdun mọkandilọgọrun [SEP]', 'score': 0.07841219753026962,

'token': 14005,

'token_str': 'Lady'}]

```

#### Limitations and bias

This model is limited by its training dataset of entity-annotated news articles from a specific span of time. This may not generalize well for all use cases in different domains.

## Training data

This model was fine-tuned on Bible, JW300, [Menyo-20k](https://huggingface.co/datasets/menyo20k_mt), [Yoruba Embedding corpus](https://huggingface.co/datasets/yoruba_text_c3) and [CC-Aligned](https://opus.nlpl.eu/), Wikipedia, news corpora (BBC Yoruba, VON Yoruba, Asejere, Alaroye), and other small datasets curated from friends.

## Training procedure

This model was trained on a single NVIDIA V100 GPU

## Eval results on Test set (F-score, average over 5 runs)

Dataset| mBERT F1 | yo_bert F1

-|-|-

[MasakhaNER](https://github.com/masakhane-io/masakhane-ner) | 78.97 | 82.58

[BBC Yorùbá Textclass](https://huggingface.co/datasets/yoruba_bbc_topics) | 75.13 | 79.11

### BibTeX entry and citation info

By David Adelani


|

facebook/wav2vec2-xls-r-1b

|

6d8fad78d7d9c252adfdf48da029590b21f47414

|

2021-11-18T16:32:35.000Z

|

[

"pytorch",

"wav2vec2",

"pretraining",

"multilingual",

"dataset:common_voice",

"dataset:multilingual_librispeech",

"arxiv:2111.09296",

"transformers",

"speech",

"xls_r",

"xls_r_pretrained",

"license:apache-2.0"

] | null | false |

facebook

| null |

facebook/wav2vec2-xls-r-1b

| 509 | 10 |

transformers

| 2,313 |

---

language: multilingual

datasets:

- common_voice

- multilingual_librispeech

tags:

- speech

- xls_r

- xls_r_pretrained

license: apache-2.0

---

# Wav2Vec2-XLS-R-1B

[Facebook's Wav2Vec2 XLS-R](https://ai.facebook.com/blog/xls-r-self-supervised-speech-processing-for-128-languages) counting **1 billion** parameters.

XLS-R is Facebook AI's large-scale multilingual pretrained model for speech (the "XLM-R for Speech"). It is pretrained on 436k hours of unlabeled speech, including VoxPopuli, MLS, CommonVoice, BABEL, and VoxLingua107. It uses the wav2vec 2.0 objective, in 128 languages. When using the model make sure that your speech input is sampled at 16kHz.
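Since the model expects 16 kHz input, it can help to check a file's sample rate before feeding it in. The sketch below does this for WAV files with Python's standard `wave` module; the helper names `sample_rate_ok` and `write_silence` are hypothetical, and other audio formats or actual resampling would need a dedicated audio library.

```python
import os
import tempfile
import wave

EXPECTED_RATE = 16_000  # 16 kHz, as required by the model card


def sample_rate_ok(path: str, expected: int = EXPECTED_RATE) -> bool:
    """Return True if the WAV file at `path` uses the expected sample rate."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate() == expected


def write_silence(path: str, rate: int, seconds: float = 0.1) -> None:
    """Write a short mono 16-bit WAV of silence, used here only as test input."""
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)  # 2 bytes per sample = 16-bit audio
        wav_file.setframerate(rate)
        wav_file.writeframes(b"\x00\x00" * int(rate * seconds))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as folder:
        for rate in (16_000, 8_000):
            path = os.path.join(folder, f"silence_{rate}.wav")
            write_silence(path, rate)
            print(rate, "ok" if sample_rate_ok(path) else "needs resampling")
```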

**Note**: This model should be fine-tuned on a downstream task, like Automatic Speech Recognition, Translation, or Classification. Check out [**this blog**](https://huggingface.co/blog/fine-tune-xlsr-wav2vec2) for more information about ASR.

[XLS-R Paper](https://arxiv.org/abs/2111.09296)

**Abstract**

This paper presents XLS-R, a large-scale model for cross-lingual speech representation learning based on wav2vec 2.0. We train models with up to 2B parameters on 436K hours of publicly available speech audio in 128 languages, an order of magnitude more public data than the largest known prior work. Our evaluation covers a wide range of tasks, domains, data regimes and languages, both high and low-resource. On the CoVoST-2 speech translation benchmark, we improve the previous state of the art by an average of 7.4 BLEU over 21 translation directions into English. For speech recognition, XLS-R improves over the best known prior work on BABEL, MLS, CommonVoice as well as VoxPopuli, lowering error rates by 20%-33% relative on average. XLS-R also sets a new state of the art on VoxLingua107 language identification. Moreover, we show that with sufficient model size, cross-lingual pretraining can outperform English-only pretraining when translating English speech into other languages, a setting which favors monolingual pretraining. We hope XLS-R can help to improve speech processing tasks for many more languages of the world.

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

# Usage

See [this google colab](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLS_R_on_Common_Voice.ipynb) for more information on how to fine-tune the model.

You can find other pretrained XLS-R models with different numbers of parameters:

* [300M parameters version](https://huggingface.co/facebook/wav2vec2-xls-r-300m)

* [1B parameters version](https://huggingface.co/facebook/wav2vec2-xls-r-1b)

* [2B parameters version](https://huggingface.co/facebook/wav2vec2-xls-r-2b)

|

oliverguhr/spelling-correction-english-base

|

a30d76e2e7de0b0b350304c8e17cef99da8eb8e7

|

2022-06-13T12:09:01.000Z

|

[

"pytorch",

"tensorboard",

"bart",

"text2text-generation",

"en",

"transformers",

"license:mit",

"autotrain_compatible"

] |

text2text-generation

| false |

oliverguhr

| null |

oliverguhr/spelling-correction-english-base

| 509 | 2 |

transformers

| 2,314 |

---

language:

- en

license: mit

widget:

- text: "lets do a comparsion"

example_title: "1"

- text: "Their going to be here so0n"

example_title: "2"

- text: "ze shop is cloed due to covid 19"

example_title: "3"

metrics:

- cer

---

This is an experimental model that should fix your typos and punctuation.

If you would like to run your own experiments or train for a different language, have a look at [the code](https://github.com/oliverguhr/spelling).

## Model description

This is a proof of concept spelling correction model for English.

## Intended uses & limitations

This project is a work in progress; be aware that the model can produce artefacts.

You can test the model using the pipeline-interface:

```python

from transformers import pipeline

fix_spelling = pipeline("text2text-generation",model="oliverguhr/spelling-correction-english-base")

print(fix_spelling("lets do a comparsion",max_length=2048))

```
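The card's metadata lists `cer` (character error rate) as the metric. As a minimal sketch of how that number can be computed, the code below implements Levenshtein edit distance and divides it by the reference length. The function names and the reference sentence are assumptions made for illustration, not outputs of this model.

```python
def edit_distance(reference: str, hypothesis: str) -> int:
    """Levenshtein distance: minimum insertions, deletions and substitutions."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            cost = 0 if ref_char == hyp_char else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution or match
        previous = current
    return previous[-1]


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: edit distance divided by the reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(reference, hypothesis) / len(reference)


if __name__ == "__main__":
    # Assumed reference spelling for the first widget example.
    print(cer("lets do a comparison", "lets do a comparsion"))
```

A lower CER means the text is closer to the reference, so comparing the CER of the raw input with the CER of a corrected output shows whether a correction helped.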

|

SEBIS/code_trans_t5_base_code_documentation_generation_python

|

f42aaecddfc35f12575e9c887ee79cf3d6cdb97d

|

2021-06-23T04:43:22.000Z

|

[

"pytorch",

"jax",

"t5",

"feature-extraction",

"transformers",

"summarization"

] |

summarization

| false |

SEBIS

| null |

SEBIS/code_trans_t5_base_code_documentation_generation_python

| 508 | null |

transformers

| 2,315 |

---

tags:

- summarization

widget:

- text: "def e ( message , exit_code = None ) : print_log ( message , YELLOW , BOLD ) if exit_code is not None : sys . exit ( exit_code )"

---

# CodeTrans model for code documentation generation python

A model pretrained on the Python programming language using the T5 base model architecture. It was first released in [this repository](https://github.com/agemagician/CodeTrans). This model is trained on tokenized Python functions, so it works best with tokenized Python functions.

## Model description

This CodeTrans model is based on the `t5-base` model. It has its own SentencePiece vocabulary model. It was trained with single-task training on the CodeSearchNet Corpus Python dataset.

## Intended uses & limitations

The model could be used to generate the description for the python function or be fine-tuned on other python code tasks. It can be used on unparsed and untokenized python code. However, if the python code is tokenized, the performance should be better.
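One way to produce space-separated input of the kind shown in this card's widget example is Python's standard `tokenize` module. The sketch below is an assumption about a reasonable preprocessing step, not the authors' actual tokenizer, and `space_tokenize` is a hypothetical helper name.

```python
import io
import tokenize

# Token types that describe layout rather than code content.
_SKIPPED = {tokenize.NEWLINE, tokenize.NL, tokenize.INDENT,
            tokenize.DEDENT, tokenize.COMMENT, tokenize.ENDMARKER}


def space_tokenize(source: str) -> str:
    """Join the lexical tokens of Python source with single spaces."""
    tokens = tokenize.generate_tokens(io.StringIO(source).readline)
    return " ".join(tok.string for tok in tokens if tok.type not in _SKIPPED)


if __name__ == "__main__":
    code = (
        "def e(message, exit_code=None):\n"
        "    print_log(message, YELLOW, BOLD)\n"
        "    if exit_code is not None:\n"
        "        sys.exit(exit_code)\n"
    )
    print(space_tokenize(code))
```

Dropping newline and indentation tokens flattens the function onto one line, which is the shape of the widget example; comments are also discarded in this sketch.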

### How to use

Here is how to use this model to generate python function documentation using Transformers SummarizationPipeline:

```python

from transformers import AutoTokenizer, AutoModelWithLMHead, SummarizationPipeline

pipeline = SummarizationPipeline(

model=AutoModelWithLMHead.from_pretrained("SEBIS/code_trans_t5_base_code_documentation_generation_python"),

tokenizer=AutoTokenizer.from_pretrained("SEBIS/code_trans_t5_base_code_documentation_generation_python", skip_special_tokens=True),

device=0  # first CUDA GPU; use device=-1 to run on CPU

)

tokenized_code = "def e ( message , exit_code = None ) : print_log ( message , YELLOW , BOLD ) if exit_code is not None : sys . exit ( exit_code )"

pipeline([tokenized_code])

```

Run this example in [colab notebook](https://github.com/agemagician/CodeTrans/blob/main/prediction/single%20task/function%20documentation%20generation/python/base_model.ipynb).

## Training data

The datasets for the supervised training tasks can be downloaded from [this link](https://www.dropbox.com/sh/488bq2of10r4wvw/AACs5CGIQuwtsD7j_Ls_JAORa/finetuning_dataset?dl=0&subfolder_nav_tracking=1).

## Evaluation results

For the code documentation tasks, different models achieve the following results on different programming languages (in BLEU score):

Test results :

| Language / Model | Python | Java | Go | Php | Ruby | JavaScript |

| -------------------- | :------------: | :------------: | :------------: | :------------: | :------------: | :------------: |

| CodeTrans-ST-Small | 17.31 | 16.65 | 16.89 | 23.05 | 9.19 | 13.7 |

| CodeTrans-ST-Base | 16.86 | 17.17 | 17.16 | 22.98 | 8.23 | 13.17 |

| CodeTrans-TF-Small | 19.93 | 19.48 | 18.88 | 25.35 | 13.15 | 17.23 |

| CodeTrans-TF-Base | 20.26 | 20.19 | 19.50 | 25.84 | 14.07 | 18.25 |

| CodeTrans-TF-Large | 20.35 | 20.06 | **19.54** | 26.18 | 14.94 | **18.98** |

| CodeTrans-MT-Small | 19.64 | 19.00 | 19.15 | 24.68 | 14.91 | 15.26 |

| CodeTrans-MT-Base | **20.39** | 21.22 | 19.43 | **26.23** | **15.26** | 16.11 |

| CodeTrans-MT-Large | 20.18 | **21.87** | 19.38 | 26.08 | 15.00 | 16.23 |

| CodeTrans-MT-TF-Small | 19.77 | 20.04 | 19.36 | 25.55 | 13.70 | 17.24 |

| CodeTrans-MT-TF-Base | 19.77 | 21.12 | 18.86 | 25.79 | 14.24 | 18.62 |

| CodeTrans-MT-TF-Large | 18.94 | 21.42 | 18.77 | 26.20 | 14.19 | 18.83 |

| State of the art | 19.06 | 17.65 | 18.07 | 25.16 | 12.16 | 14.90 |

> Created by [Ahmed Elnaggar](https://twitter.com/Elnaggar_AI) | [LinkedIn](https://www.linkedin.com/in/prof-ahmed-elnaggar/) and Wei Ding | [LinkedIn](https://www.linkedin.com/in/wei-ding-92561270/)

|

indobenchmark/indobert-large-p2

|

4b280c3bfcc1ed2d6b4589be5c876076b7d73568

|

2021-05-19T20:28:22.000Z

|

[

"pytorch",

"tf",

"jax",

"bert",

"feature-extraction",

"id",

"dataset:Indo4B",

"arxiv:2009.05387",

"transformers",

"indobert",

"indobenchmark",

"indonlu",

"license:mit"

] |

feature-extraction

| false |

indobenchmark

| null |

indobenchmark/indobert-large-p2

| 508 | null |

transformers

| 2,316 |

---

language: id

tags:

- indobert

- indobenchmark

- indonlu

license: mit

inference: false

datasets:

- Indo4B

---

# IndoBERT Large Model (phase2 - uncased)

[IndoBERT](https://arxiv.org/abs/2009.05387) is a state-of-the-art language model for Indonesian based on the BERT model. The pretrained model is trained using a masked language modeling (MLM) objective and next sentence prediction (NSP) objective.

## All Pre-trained Models

| Model | #params | Arch. | Training data |

|--------------------------------|--------------------------------|-------|-----------------------------------|

| `indobenchmark/indobert-base-p1` | 124.5M | Base | Indo4B (23.43 GB of text) |

| `indobenchmark/indobert-base-p2` | 124.5M | Base | Indo4B (23.43 GB of text) |

| `indobenchmark/indobert-large-p1` | 335.2M | Large | Indo4B (23.43 GB of text) |

| `indobenchmark/indobert-large-p2` | 335.2M | Large | Indo4B (23.43 GB of text) |

| `indobenchmark/indobert-lite-base-p1` | 11.7M | Base | Indo4B (23.43 GB of text) |

| `indobenchmark/indobert-lite-base-p2` | 11.7M | Base | Indo4B (23.43 GB of text) |

| `indobenchmark/indobert-lite-large-p1` | 17.7M | Large | Indo4B (23.43 GB of text) |

| `indobenchmark/indobert-lite-large-p2` | 17.7M | Large | Indo4B (23.43 GB of text) |

## How to use

### Load model and tokenizer

```python

from transformers import BertTokenizer, AutoModel

tokenizer = BertTokenizer.from_pretrained("indobenchmark/indobert-large-p2")

model = AutoModel.from_pretrained("indobenchmark/indobert-large-p2")

```

### Extract contextual representation

```python

import torch

x = torch.LongTensor(tokenizer.encode('aku adalah anak [MASK]')).view(1,-1)

print(x, model(x)[0].sum())

```

## Authors

<b>IndoBERT</b> was trained and evaluated by Bryan Wilie\*, Karissa Vincentio\*, Genta Indra Winata\*, Samuel Cahyawijaya\*, Xiaohong Li, Zhi Yuan Lim, Sidik Soleman, Rahmad Mahendra, Pascale Fung, Syafri Bahar, Ayu Purwarianti.

## Citation

If you use our work, please cite:

```bibtex

@inproceedings{wilie2020indonlu,

title={IndoNLU: Benchmark and Resources for Evaluating Indonesian Natural Language Understanding},

author={Bryan Wilie and Karissa Vincentio and Genta Indra Winata and Samuel Cahyawijaya and X. Li and Zhi Yuan Lim and S. Soleman and R. Mahendra and Pascale Fung and Syafri Bahar and A. Purwarianti},

booktitle={Proceedings of the 1st Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics and the 10th International Joint Conference on Natural Language Processing},

year={2020}

}

```

|

kamalkraj/bioelectra-base-discriminator-pubmed-pmc-lt

|

d807405696fdace62f42841dc06289d2354e1158

|

2021-06-10T14:22:08.000Z

|

[

"pytorch",

"electra",

"pretraining",

"transformers"

] | null | false |

kamalkraj

| null |

kamalkraj/bioelectra-base-discriminator-pubmed-pmc-lt

| 508 | 2 |

transformers

| 2,317 |

## BioELECTRA:Pretrained Biomedical text Encoder using Discriminators

Recent advancements in pretraining strategies in NLP have shown a significant improvement in the performance of models on various text mining tasks. In this paper, we introduce BioELECTRA, a biomedical domain-specific language encoder model that adapts ELECTRA (Clark et al., 2020) for the biomedical domain. BioELECTRA outperforms the previous models and achieves state of the art (SOTA) on all 13 datasets in the BLURB benchmark and on all 4 clinical datasets from the BLUE benchmark across 7 NLP tasks. BioELECTRA pretrained on PubMed and PMC full-text articles also performs very well on clinical datasets. BioELECTRA achieves a new SOTA of 86.34% (1.39% accuracy improvement) on MedNLI and 64% (2.98% accuracy improvement) on the PubMedQA dataset.

For a detailed description and experimental results, please refer to our paper [BioELECTRA:Pretrained Biomedical text Encoder using Discriminators](https://www.aclweb.org/anthology/2021.bionlp-1.16/).

## How to use the discriminator in `transformers`

```python

from transformers import ElectraForPreTraining, ElectraTokenizerFast

import torch

discriminator = ElectraForPreTraining.from_pretrained("kamalkraj/bioelectra-base-discriminator-pubmed")

tokenizer = ElectraTokenizerFast.from_pretrained("kamalkraj/bioelectra-base-discriminator-pubmed")

sentence = "The quick brown fox jumps over the lazy dog"

fake_sentence = "The quick brown fox fake over the lazy dog"

fake_tokens = tokenizer.tokenize(fake_sentence)

fake_inputs = tokenizer.encode(fake_sentence, return_tensors="pt")

discriminator_outputs = discriminator(fake_inputs)

predictions = torch.round((torch.sign(discriminator_outputs[0]) + 1) / 2)

[print("%7s" % token, end="") for token in fake_tokens]

[print("%7s" % int(prediction), end="") for prediction in predictions[0].tolist()]

```
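The `predictions` line above turns each discriminator logit into a 0/1 flag: `torch.sign` yields -1 or +1, and adding 1 then halving maps that to 0 (token looks original) or 1 (token looks replaced). A minimal pure-Python sketch of the same mapping follows; the helper name `logits_to_flags` and the logit values are made up for illustration and are not model output.

```python
def sign(x: float) -> int:
    # -1 for negative, 0 for zero, +1 for positive (mirrors torch.sign)
    return (x > 0) - (x < 0)


def logits_to_flags(logits):
    # (sign + 1) / 2 maps -1 -> 0.0 and +1 -> 1.0; round() makes it an int
    return [round((sign(x) + 1) / 2) for x in logits]


# Hypothetical logits for four tokens; only the third is positive
example_logits = [-3.2, -0.7, 4.1, -1.5]
flags = logits_to_flags(example_logits)
print(flags)
```

Only the sign of each logit matters here, so the magnitude of the discriminator's confidence is discarded.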

|

facebook/regnet-y-040

|

40577f588ce4b8b3a306e59b93b117047e0a6625

|

2022-06-30T18:56:14.000Z

|

[

"pytorch",

"tf",

"regnet",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2003.13678",

"transformers",

"vision",

"license:apache-2.0"

] |

image-classification

| false |

facebook

| null |

facebook/regnet-y-040

| 508 | null |

transformers

| 2,318 |

---

license: apache-2.0

tags:

- vision

- image-classification

datasets:

- imagenet-1k

widget:

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/tiger.jpg

example_title: Tiger

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/teapot.jpg

example_title: Teapot

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/palace.jpg

example_title: Palace

---

# RegNet

RegNet model trained on imagenet-1k. It was introduced in the paper [Designing Network Design Spaces](https://arxiv.org/abs/2003.13678) and first released in [this repository](https://github.com/facebookresearch/pycls).

Disclaimer: The team releasing RegNet did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

The authors design search spaces to perform Neural Architecture Search (NAS). They start from a high-dimensional search space and iteratively reduce it by empirically applying constraints based on the best-performing models sampled from the current search space.

## Intended uses & limitations

You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=regnet) to look for

fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model:

```python

>>> from transformers import AutoFeatureExtractor, RegNetForImageClassification

>>> import torch

>>> from datasets import load_dataset

>>> dataset = load_dataset("huggingface/cats-image")

>>> image = dataset["test"]["image"][0]

>>> feature_extractor = AutoFeatureExtractor.from_pretrained("facebook/regnet-y-040")

>>> model = RegNetForImageClassification.from_pretrained("facebook/regnet-y-040")

>>> inputs = feature_extractor(image, return_tensors="pt")

>>> with torch.no_grad():

...     logits = model(**inputs).logits

>>> # model predicts one of the 1000 ImageNet classes

>>> predicted_label = logits.argmax(-1).item()

>>> print(model.config.id2label[predicted_label])

'tabby, tabby cat'

```

For more code examples, we refer to the [documentation](https://huggingface.co/docs/transformers/master/en/model_doc/regnet).

|

cross-encoder/quora-roberta-base

|

195493c8767e7155c449e9ff7e64890d116d432d

|

2021-08-05T08:41:36.000Z

|

[

"pytorch",

"jax",

"roberta",

"text-classification",

"transformers",

"license:apache-2.0"

] |

text-classification

| false |

cross-encoder

| null |

cross-encoder/quora-roberta-base

| 507 | 1 |

transformers

| 2,319 |

---

license: apache-2.0

---

# Cross-Encoder for Quora Duplicate Questions Detection

This model was trained using [SentenceTransformers](https://sbert.net) [Cross-Encoder](https://www.sbert.net/examples/applications/cross-encoder/README.html) class.

## Training Data

This model was trained on the [Quora Duplicate Questions](https://www.quora.com/q/quoradata/First-Quora-Dataset-Release-Question-Pairs) dataset. The model predicts a score between 0 and 1 indicating how likely the two given questions are duplicates.

Note: The model is not suitable for estimating the similarity of questions. For example, the two questions "How to learn Java" and "How to learn Python" will receive a rather low score, as they are not duplicates.

## Usage and Performance

Pre-trained models can be used like this:

```

from sentence_transformers import CrossEncoder

model = CrossEncoder('model_name')

scores = model.predict([('Question 1', 'Question 2'), ('Question 3', 'Question 4')])

```

You can also use this model without sentence_transformers, using just the Transformers ``AutoModel`` class.

|

valurank/distilroberta-news-small

|

dad826d1ce6732850428d4673ff50835c8f7f59b

|

2022-06-08T20:45:50.000Z

|

[

"pytorch",

"roberta",

"text-classification",

"en",

"dataset:valurank/news-small",

"transformers",

"license:other"

] |

text-classification

| false |

valurank

| null |

valurank/distilroberta-news-small

| 507 | null |

transformers

| 2,320 |

---

license: other

language: en

datasets:

- valurank/news-small

---

# DistilROBERTA fine-tuned for news classification

This model is based on [distilroberta-base](https://huggingface.co/distilroberta-base) pretrained weights, with a classification head fine-tuned to classify news articles into 3 categories (bad, medium, good).

## Training data

The dataset used to fine-tune the model is [news-small](https://huggingface.co/datasets/valurank/news-small), a 300-article news dataset manually annotated by Alex.

## Inputs

Similar to its base model, this model accepts inputs with a maximum length of 512 tokens.

|

Milos/slovak-gpt-j-1.4B

|

1ca9a664fba18d050377579e43b92897efca62d4

|

2022-02-17T14:29:47.000Z

|

[

"pytorch",

"gptj",

"text-generation",

"sk",

"arxiv:2104.09864",

"transformers",

"Slovak GPT-J",

"causal-lm",

"license:gpl-3.0"

] |

text-generation

| false |

Milos

| null |

Milos/slovak-gpt-j-1.4B

| 506 | null |

transformers

| 2,321 |

---

language:

- sk

tags:

- Slovak GPT-J

- pytorch

- causal-lm

license: gpl-3.0

---

# Slovak GPT-J-1.4B

Slovak GPT-J-1.4B, with a whopping `1,415,283,792` parameters, is the latest and largest model released in the Slovak GPT-J series. Smaller variants, [Slovak GPT-J-405M](https://huggingface.co/Milos/slovak-gpt-j-405M) and [Slovak GPT-J-162M](https://huggingface.co/Milos/slovak-gpt-j-162M), are still available.

## Model Description

The model is based on [GPT-J](https://github.com/kingoflolz/mesh-transformer-jax/) and has over 1.4B trainable parameters.

<figure>

| Hyperparameter | Value |

|----------------------|----------------------------------------------------------------------------------------------------------------------------------------|

| \\(n_{parameters}\\) | 1,415,283,792 |

| \\(n_{layers}\\) | 24 |

| \\(d_{model}\\) | 2048 |

| \\(d_{ff}\\) | 16384 |

| \\(n_{heads}\\) | 16 |

| \\(d_{head}\\) | 256 |

| \\(n_{ctx}\\) | 2048 |

| \\(n_{vocab}\\) | 50256 (same tokenizer as GPT-2/3†) |

| Positional Encoding | [Rotary Position Embedding (RoPE)](https://arxiv.org/abs/2104.09864) |

| RoPE Dimensions | [64](https://github.com/kingoflolz/mesh-transformer-jax/blob/f2aa66e0925de6593dcbb70e72399b97b4130482/mesh_transformer/layers.py#L223) |

<p><strong>†</strong> ByteLevelBPETokenizer was trained on the same Slovak corpus.</p></figure>

## Training data

Slovak GPT-J models were trained on a privately collected dataset consisting of predominantly Slovak text spanning different categories, e.g. web, news articles or even biblical texts - in total, over 40GB of text data was used to train this model.

The dataset was preprocessed and cleaned in a specific way that involves a few minor caveats, so to achieve the expected performance, please refer to the [How to use] section. Please keep in mind that despite the effort to remove inappropriate content from the corpus, the model might still generate sensitive content or leak sensitive information.

## Training procedure

This model was trained for a bit more than 26.5 billion tokens over 48,001 steps on a TPU v3-8 pod. The cross-entropy validation loss at the last step was `2.657`.

## Intended Use

Like the original GPT-J, Slovak GPT-J learns an inner representation of the language that can be used to extract features useful for downstream tasks. However, the intended use is text generation from a prompt.

### How to use

This model along with the tokenizer can be easily loaded using the `AutoModelForCausalLM` functionality:

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("Milos/slovak-gpt-j-1.4B")

model = AutoModelForCausalLM.from_pretrained("Milos/slovak-gpt-j-1.4B")

```

When writing a prompt, keep these three things in mind and you should be good to go:

1. Never leave trailing whitespace. There's a difference between how the tokenizer encodes "Mám rád slovenčinu" (no space after `slovenčinu`) and "Mám rád slovenčinu " (trailing space after `slovenčinu`), i.e. `[12805, 2872, 46878]` != `[12805, 2872, 46878, 221]`.

2. Always use good ol' US English primary double quotation marks, i.e. `""` instead of `„“`.

3. For a new line, always enter `\n\n` instead of a single `\n`.
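The three rules above can be applied mechanically before tokenizing. The following is a minimal sketch, not part of the released model code; the helper name `normalize_prompt` is hypothetical, and it assumes that stripping all trailing whitespace (including trailing newlines) is acceptable for your prompts.

```python
import re


def normalize_prompt(prompt: str) -> str:
    """Apply the three prompt rules listed above (illustrative helper)."""
    # Rule 2: replace Slovak-style quotation marks with plain double quotes
    prompt = prompt.replace("„", '"').replace("“", '"')
    # Rule 3: turn every isolated single newline into a double newline,
    # leaving runs of two or more newlines untouched
    prompt = re.sub(r"(?<!\n)\n(?!\n)", "\n\n", prompt)
    # Rule 1: drop trailing whitespace (spaces, tabs, and newlines)
    return prompt.rstrip()


cleaned = normalize_prompt("„Mám rád slovenčinu“\nTradičné jedlo na Orave sú ")
print(repr(cleaned))
```

The cleaned string can then be passed to `tokenizer(...)` as in the generation example.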

To illustrate an example of a basic text generation:

```

>>> prompt = "Tradičné jedlo na Orave sú"

>>> encoded_input = tokenizer(prompt, return_tensors='pt')

>>> output = model.generate(**encoded_input)

>>> tokenizer.decode(output[0])

'Tradičné jedlo na Orave sú bryndzové halušky\n\nNa Orave sa v minulosti varilo viac druhov'

```

### Capabilities, Limitations, and Biases

Based on the parameter count and our internal testing, this should be by far the most capable model of all those released in the Slovak GPT-J series.

For the sake of simplicity, I have omitted all the boilerplate code and swapped `\n` for new lines in the examples that follow. These were generated with both `top_p` and `temperature` set to `0.9`.

A spin on "Mor ho!" by Samo Chalupka

```

PROMPT

Zleteli orly z Tatry, tiahnu na podolia, ponad vysoké hory, ponad rovné polia; preleteli cez Dunaj, cez tú šíru vodu, sadli tam za pomedzím slovenského rodu.

Duní Dunaj a luna za lunou sa valí

OUTPUT

Zleteli orly z Tatry, tiahnu na podolia, ponad vysoké hory, ponad rovné polia; preleteli cez Dunaj, cez tú šíru vodu, sadli tam za pomedzím slovenského rodu.

Duní Dunaj a luna za lunou sa valí: nad ním svieti pevný hrad na vysokom bralí. Pod tým hradom je zem slovenská, na nej je Slovensko naše.

Na tom vrchu leží slovenský národ, pod tým bralom leží krajina slovenská. A pod tým hradom leží i jeho sláva: na tom vrchu, na tom brale leží sláva slovenského národa.

```

Jokes are a bit hit or miss. Very often they contain vulgarisms and/or are highly politically incorrect. This one is the exception rather than the rule.

```

PROMPT

Nasledujú najlepšie vtipy.

Prečo

OUTPUT

Nasledujú najlepšie vtipy.

Prečo má policajt pri sebe vždy dva nože? Jeden je na sebaobranu, druhý na boj zblízka.

```

The infamous unicorn prompt from the GPT-2 paper.

```

PROMPT

V šokujúcom náleze vedci objavili stádo jednorožcov žijúcich v odľahlom, predtým nepreskúmanom údolí, v Andách. Ešte prekvapivejšie pre vedcov bol fakt, že jednorožce hovorili plynule po slovensky.

OUTPUT

V šokujúcom náleze vedci objavili stádo jednorožcov žijúcich v odľahlom, predtým nepreskúmanom údolí, v Andách. Ešte prekvapivejšie pre vedcov bol fakt, že jednorožce hovorili plynule po slovensky.

V Andách ich totiž žilo približne 700 jedincov, ktoré sa živili výhradne materským mliekom. Slováci sú tak pravdepodobne prvými Európanmi, ktorí v Andách stretli jednorožca. "Je to dôkaz, že tieto zvieratá sú inteligentné a že žijú v Andách už stovky rokov," povedal pre webový portál televízie JOJ profesor geológie, geografie a zoológie, Milan Kováč.

Podľa profesora Kováča si v Andách zvieratá vytvárajú svoj vlastný jazyk. Je to zároveň dôkaz, že jednorožce žili v minulosti aj v slovenských pohoriach. "Jednorožce sa tam síce vyskytovali, ale neboli tak dobre preskúmané, ako teraz v Andách."

Na Slovensku však ľudia o jednorožcoch donedávna vedeli veľmi málo.<|endoftext|>

```

Since the dataset contains profanity, politically incorrect language, and (unintentionally) even bits of text in Czech, the model can generate them to some extent too. Here's an example of the model output when the prompt is in Czech:

```

>>> prompt = "Věta nesmí být sprostá a musí být zcela"

>>> encoded_input = tokenizer(prompt, return_tensors='pt')

>>> output = model.generate(**encoded_input, max_length=16)

>>> tokenizer.decode(output[0])

'Věta nesmí být sprostá a musí být zcela pravdivá.'

```

## Citation and Related Information

This was done as a moonlighting project during the summer of 2021 to better understand transformers. I didn't have much free time to open source it properly, so it all sat on my hard drive until now :)

If you use this model or have any questions about it, feel free to hit me up on [twitter](https://twitter.com/miloskondela) or check out my [github](https://github.com/kondela) profile.

### BibTeX entry

To cite this model:

```bibtex

@misc{slovak-gpt-j-1.4B,

author = {Kondela, Milos},

title = {{Slovak GPT-J-1.4B}},

howpublished = {\url{https://huggingface.co/Milos/slovak-gpt-j-1.4B}},

year = 2022,

month = February

}

```

To cite the codebase that trained this model:

```bibtex

@misc{mesh-transformer-jax,

author = {Wang, Ben},

title = {{Mesh-Transformer-JAX: Model-Parallel Implementation of Transformer Language Model with JAX}},

howpublished = {\url{https://github.com/kingoflolz/mesh-transformer-jax}},

year = 2021,

month = May

}

```

## Acknowledgements

This project was generously supported by the [TPU Research Cloud (TRC) program](https://sites.research.google/trc/about/). Shoutout also goes to [Ben Wang](https://github.com/kingoflolz) and the great [EleutherAI community](https://www.eleuther.ai/).

|

SIKU-BERT/sikubert

|

fc656de2d6bde33919102dd3abe31c843f42226a

|

2021-09-13T13:34:40.000Z

|

[

"pytorch",

"bert",

"fill-mask",

"zh",

"transformers",

"chinese",

"classical chinese",

"literary chinese",

"ancient chinese",

"roberta",

"license:apache-2.0",

"autotrain_compatible"

] |

fill-mask

| false |

SIKU-BERT

| null |

SIKU-BERT/sikubert

| 506 | 2 |

transformers

| 2,322 |

---

language:

- "zh"

thumbnail: "https://raw.githubusercontent.com/SIKU-BERT/SikuBERT/main/appendix/sikubert.png"

tags:

- "chinese"

- "classical chinese"

- "literary chinese"

- "ancient chinese"

- "bert"

- "roberta"

- "pytorch"

inference: false

license: "apache-2.0"

---

# SikuBERT

## Model description

Digital humanities research needs the support of large-scale corpora and high-performance natural language processing tools for ancient Chinese. Pre-trained language models have greatly improved the accuracy of text mining on English and modern Chinese texts, and there is now an urgent need for a pre-trained model designed specifically for the automatic processing of ancient texts. Using the verified, high-quality “Siku Quanshu” full-text corpus as the training set and the BERT deep language model architecture, we constructed the SikuBERT and SikuRoBERTa pre-trained language models for intelligent processing tasks on ancient Chinese.

## How to use

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("SIKU-BERT/sikubert")

model = AutoModel.from_pretrained("SIKU-BERT/sikubert")

```

## About Us

We are from Nanjing Agricultural University.

> Created by SIKU-BERT: [SikuBERT-for-digital-humanities-and-classical-Chinese-information-processing](https://github.com/SIKU-BERT/SikuBERT-for-digital-humanities-and-classical-Chinese-information-processing)

|

google/owlvit-base-patch32

|

4641e344cbbe8e25e0f2ab4e7e53372091ef9cfd

|

2022-07-21T10:49:01.000Z

|

[

"pytorch",

"owlvit",

"transformers"

] | null | false |

google

| null |

google/owlvit-base-patch32

| 506 | null |

transformers

| 2,323 |

Entry not found

|

boychaboy/SNLI_distilbert-base-cased

|

fabefe1f7390d5aecf5d152e13da5998eee2e84d

|

2021-05-10T17:08:47.000Z

|

[

"pytorch",

"distilbert",

"text-classification",

"transformers"

] |

text-classification

| false |

boychaboy

| null |

boychaboy/SNLI_distilbert-base-cased

| 505 | null |

transformers

| 2,324 |

Entry not found

|

microsoft/unispeech-sat-base-plus-sv

|

a492b4bf41b1bd2fa6e6d07c6eae573b3f711b66

|

2021-12-17T13:56:17.000Z

|

[

"pytorch",

"unispeech-sat",

"audio-xvector",

"en",

"arxiv:1912.07875",

"arxiv:2106.06909",

"arxiv:2101.00390",

"arxiv:2110.05752",

"transformers",

"speech"

] | null | false |

microsoft

| null |

microsoft/unispeech-sat-base-plus-sv

| 505 | null |

transformers

| 2,325 |

---

language:

- en

tags:

- speech

---

# UniSpeech-SAT-Base for Speaker Verification

[Microsoft's UniSpeech](https://www.microsoft.com/en-us/research/publication/unispeech-unified-speech-representation-learning-with-labeled-and-unlabeled-data/)

The model was pretrained on 16kHz sampled speech audio with utterance and speaker contrastive loss. When using the model, make sure that your speech input is also sampled at 16kHz.

The model was pre-trained on:

- 60,000 hours of [Libri-Light](https://arxiv.org/abs/1912.07875)

- 10,000 hours of [GigaSpeech](https://arxiv.org/abs/2106.06909)

- 24,000 hours of [VoxPopuli](https://arxiv.org/abs/2101.00390)

[Paper: UNISPEECH-SAT: UNIVERSAL SPEECH REPRESENTATION LEARNING WITH SPEAKER AWARE PRE-TRAINING](https://arxiv.org/abs/2110.05752)

Authors: Sanyuan Chen, Yu Wu, Chengyi Wang, Zhengyang Chen, Zhuo Chen, Shujie Liu, Jian Wu, Yao Qian, Furu Wei, Jinyu Li, Xiangzhan Yu

**Abstract**

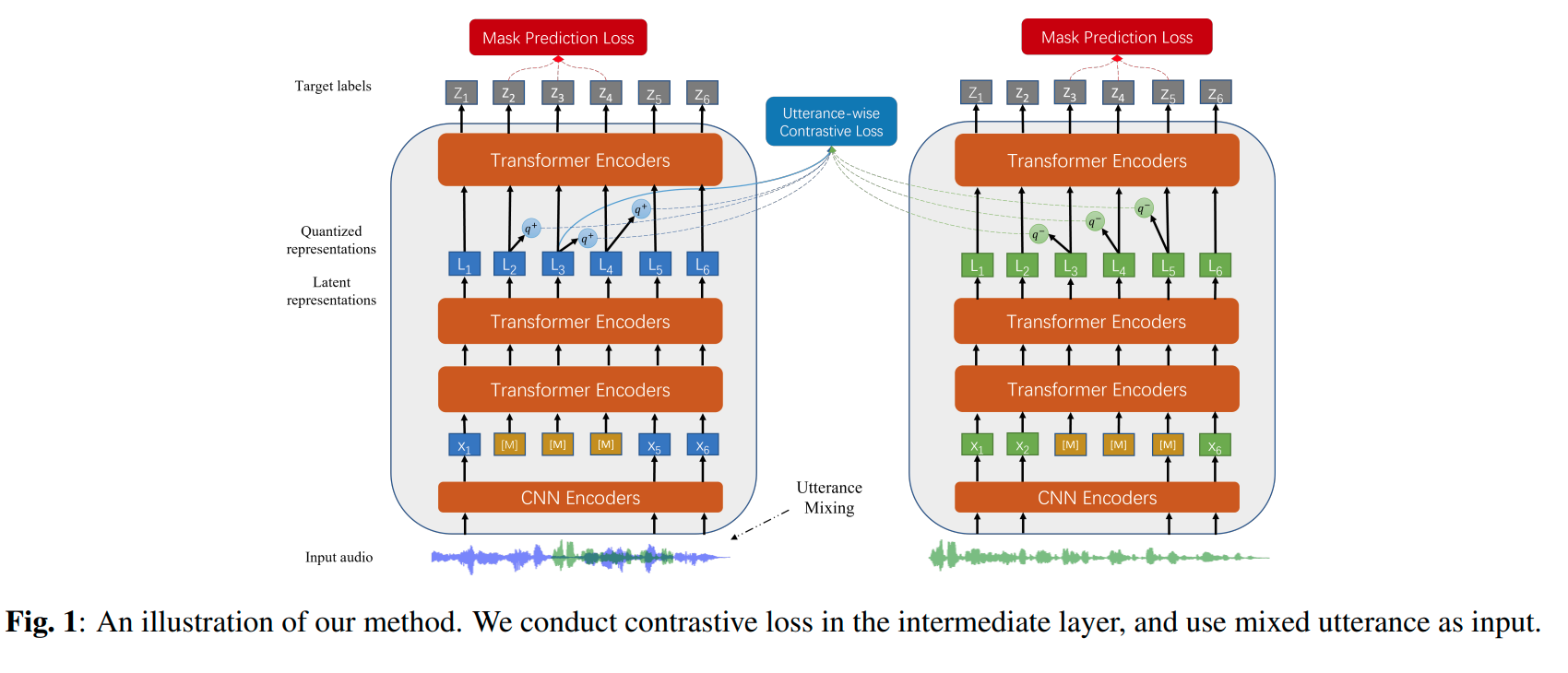

*Self-supervised learning (SSL) is a long-standing goal for speech processing, since it utilizes large-scale unlabeled data and avoids extensive human labeling. Recent years witness great successes in applying self-supervised learning in speech recognition, while limited exploration was attempted in applying SSL for modeling speaker characteristics. In this paper, we aim to improve the existing SSL framework for speaker representation learning. Two methods are introduced for enhancing the unsupervised speaker information extraction. First, we apply the multi-task learning to the current SSL framework, where we integrate the utterance-wise contrastive loss with the SSL objective function. Second, for better speaker discrimination, we propose an utterance mixing strategy for data augmentation, where additional overlapped utterances are created unsupervisely and incorporate during training. We integrate the proposed methods into the HuBERT framework. Experiment results on SUPERB benchmark show that the proposed system achieves state-of-the-art performance in universal representation learning, especially for speaker identification oriented tasks. An ablation study is performed verifying the efficacy of each proposed method. Finally, we scale up training dataset to 94 thousand hours public audio data and achieve further performance improvement in all SUPERB tasks.*

The original model can be found under https://github.com/microsoft/UniSpeech/tree/main/UniSpeech-SAT.

# Fine-tuning details

The model is fine-tuned on the [VoxCeleb1 dataset](https://www.robots.ox.ac.uk/~vgg/data/voxceleb/vox1.html) using an X-Vector head with an Additive Margin Softmax loss

[X-Vectors: Robust DNN Embeddings for Speaker Recognition](https://www.danielpovey.com/files/2018_icassp_xvectors.pdf)

# Usage

## Speaker Verification

```python

from transformers import Wav2Vec2FeatureExtractor, UniSpeechSatForXVector

from datasets import load_dataset

import torch

dataset = load_dataset("hf-internal-testing/librispeech_asr_demo", "clean", split="validation")

feature_extractor = Wav2Vec2FeatureExtractor.from_pretrained('microsoft/unispeech-sat-base-plus-sv')

model = UniSpeechSatForXVector.from_pretrained('microsoft/unispeech-sat-base-plus-sv')

# audio files are decoded on the fly

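# collect the raw waveforms; padding=True batches clips of different lengths

inputs = feature_extractor([d["array"] for d in dataset[:2]["audio"]], sampling_rate=16000, padding=True, return_tensors="pt")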

embeddings = model(**inputs).embeddings

embeddings = torch.nn.functional.normalize(embeddings, dim=-1).cpu()

# the resulting embeddings can be used for cosine similarity-based retrieval

cosine_sim = torch.nn.CosineSimilarity(dim=-1)

similarity = cosine_sim(embeddings[0], embeddings[1])

threshold = 0.89 # the optimal threshold is dataset-dependent

if similarity < threshold:

    print("Speakers are not the same!")

```
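The decision rule in the snippet above is a plain threshold on cosine similarity. As a dependency-free illustration of that rule, the sketch below uses toy 3-dimensional vectors in place of real x-vector embeddings; the helper names `cosine_similarity` and `same_speaker` are hypothetical, and the `0.89` default simply mirrors the threshold used above, which the card notes is dataset-dependent.

```python
import math


def cosine_similarity(a, b):
    # dot(a, b) / (|a| * |b|)
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def same_speaker(emb_a, emb_b, threshold=0.89):
    # Accept the pair as the same speaker when similarity reaches the threshold
    return cosine_similarity(emb_a, emb_b) >= threshold


# Toy embeddings (not real model output)
close_pair = same_speaker([1.0, 0.0, 0.0], [2.0, 0.0, 0.0])
far_pair = same_speaker([1.0, 0.0, 0.0], [0.0, 1.0, 0.0])
print(close_pair, far_pair)
```

Because cosine similarity ignores vector length, normalizing the embeddings first (as the snippet above does) does not change the decision.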

# License

The official license can be found [here](https://github.com/microsoft/UniSpeech/blob/main/LICENSE)

|

nkoh01/MSRoberta

|

3ff20e811ea95572470d3538cad29e816f05d7f4

|

2021-05-20T18:51:20.000Z

|

[

"pytorch",

"jax",

"roberta",

"fill-mask",

"transformers",

"autotrain_compatible"

] |

fill-mask

| false |

nkoh01

| null |

nkoh01/MSRoberta

| 505 | null |

transformers

| 2,326 |

# MSRoBERTa

Fine-tuned RoBERTa MLM model for the [`Microsoft Sentence Completion Challenge`](https://www.microsoft.com/en-us/research/wp-content/uploads/2016/02/MSR_SCCD.pdf). This model is case-sensitive, following the `roberta-base` model.

# Model description (taken from: [here](https://huggingface.co/roberta-base))

RoBERTa is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means

it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of

publicly available data) with an automatic process to generate inputs and labels from those texts.

More precisely, it was pretrained with the Masked language modeling (MLM) objective. Taking a sentence, the model

randomly masks 15% of the words in the input, then runs the entire masked sentence through the model and has to predict

the masked words. This is different from traditional recurrent neural networks (RNNs) that usually see the words one

after the other, or from autoregressive models like GPT which internally mask the future tokens. It allows the model to

learn a bidirectional representation of the sentence.
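To make the masking step concrete, here is a deliberately simplified sketch. It assumes whitespace-separated words rather than the subword tokens the real model uses, always substitutes `<mask>` (actual BERT-style pretraining also sometimes keeps or randomly replaces the selected token), and uses a fixed seed for repeatability. The function name `mask_tokens` is hypothetical.

```python
import random


def mask_tokens(tokens, mask_token="<mask>", mask_prob=0.15, seed=0):
    """Mask each token independently with probability mask_prob."""
    rng = random.Random(seed)
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(mask_token)  # the model sees the mask ...
            labels.append(tok)         # ... and must predict the original
        else:
            masked.append(tok)
            labels.append(None)        # no prediction target at this position
    return masked, labels


words = "Hello , it is a pleasure to meet you .".split()
masked, labels = mask_tokens(words)
print(masked)
print(labels)
```

Only positions with a non-`None` label contribute to the MLM loss; all other positions serve as bidirectional context.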

This way, the model learns an inner representation of the English language that can then be used to extract features

useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard

classifier using the features produced by the RoBERTa model as inputs.

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python

from transformers import pipeline,AutoModelForMaskedLM,AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("nkoh01/MSRoberta")

model = AutoModelForMaskedLM.from_pretrained("nkoh01/MSRoberta")

unmasker = pipeline(

"fill-mask",

model=model,

tokenizer=tokenizer

)

unmasker("Hello, it is a <mask> to meet you.")

[{'score': 0.9508683085441589,

'sequence': 'hello, it is a pleasure to meet you.',

'token': 10483,

'token_str': ' pleasure'},

{'score': 0.015089659951627254,

'sequence': 'hello, it is a privilege to meet you.',

'token': 9951,

'token_str': ' privilege'},

{'score': 0.013942377641797066,

'sequence': 'hello, it is a joy to meet you.',

'token': 5823,

'token_str': ' joy'},

{'score': 0.006964420434087515,

'sequence': 'hello, it is a delight to meet you.',

'token': 13213,

'token_str': ' delight'},

{'score': 0.0024567877408117056,

'sequence': 'hello, it is a honour to meet you.',

'token': 6671,

'token_str': ' honour'}]

```

## Installations

Run `!pip install transformers` to install the transformers library before running the commands above.

## Bias and limitations

Under construction.

|

fmikaelian/camembert-base-fquad

|

341bf4683d9388a0a4022ce4062283255dc9246c

|

2020-12-11T21:40:08.000Z

|

[

"pytorch",

"camembert",

"question-answering",

"fr",

"transformers",

"autotrain_compatible"

] |

question-answering

| false |

fmikaelian

| null |

fmikaelian/camembert-base-fquad

| 504 | 1 |

transformers

| 2,327 |

---

language: fr

---

# camembert-base-fquad

## Description

A baseline model for question answering in French ([CamemBERT](https://camembert-model.fr/) model fine-tuned on [FQuAD](https://fquad.illuin.tech/)).

## Training hyperparameters

```shell

python3 ./examples/question-answering/run_squad.py \

--model_type camembert \

--model_name_or_path camembert-base \

--do_train \

--do_eval \

--do_lower_case \

--train_file train.json \

--predict_file valid.json \

--learning_rate 3e-5 \

--num_train_epochs 2 \

--max_seq_length 384 \

--doc_stride 128 \

--output_dir output \

--per_gpu_eval_batch_size=3 \

--per_gpu_train_batch_size=3 \

--save_steps 10000

```

## Evaluation results

```shell

{"f1": 77.24515316052342, "exact_match": 52.82308657465496}

```
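The two reported numbers are SQuAD-style metrics expressed as percentages: `exact_match` is the share of predictions identical to a reference answer, and `f1` is the average token-overlap F1. The sketch below shows how each is computed for a single prediction; it assumes simple lowercasing and whitespace tokenization, which is cruder than the normalization an official evaluation script applies, and the function names are illustrative.

```python
from collections import Counter


def exact_match(prediction: str, reference: str) -> float:
    # 1.0 only when the normalized strings are identical
    return float(prediction.strip().lower() == reference.strip().lower())


def token_f1(prediction: str, reference: str) -> float:
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    # Multiset intersection counts each shared token at most as often as it occurs in both
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


em = exact_match("un peintre français", "peintre français")
f1 = token_f1("un peintre français", "peintre français")
print(em, round(f1, 2))
```

A partially correct answer such as the one above scores 0 on exact match but still earns credit on F1, which is why the F1 figure is the higher of the two.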

## Usage

```python

from transformers import pipeline

nlp = pipeline('question-answering', model='fmikaelian/camembert-base-fquad', tokenizer='fmikaelian/camembert-base-fquad')

nlp({

'question': "Qui est Claude Monet?",

'context': "Claude Monet, né le 14 novembre 1840 à Paris et mort le 5 décembre 1926 à Giverny, est un peintre français et l’un des fondateurs de l'impressionnisme."

})

```

|

lanwuwei/GigaBERT-v3-Arabic-and-English

|

ee5c781756946364d989e0102b91b4a15390f6ac

|

2021-05-19T00:17:42.000Z

|

[

"pytorch",

"jax",

"bert",

"feature-extraction",

"en",

"ar",

"dataset:gigaword",

"dataset:oscar",

"dataset:wikipedia",

"transformers"

] |

feature-extraction

| false |

lanwuwei

| null |

lanwuwei/GigaBERT-v3-Arabic-and-English

| 504 | null |

transformers

| 2,328 |

---

language:

- en

- ar

datasets:

- gigaword

- oscar

- wikipedia

---

## GigaBERT-v3

GigaBERT-v3 is a customized bilingual BERT for English and Arabic. It was pre-trained on a large-scale corpus (Gigaword+Oscar+Wikipedia) with ~10B tokens and shows state-of-the-art zero-shot transfer performance from English to Arabic on information extraction (IE) tasks. More details can be found in the following paper:

@inproceedings{lan2020gigabert,

author = {Lan, Wuwei and Chen, Yang and Xu, Wei and Ritter, Alan},

title = {An Empirical Study of Pre-trained Transformers for Arabic Information Extraction},

booktitle = {Proceedings of The 2020 Conference on Empirical Methods on Natural Language Processing (EMNLP)},

year = {2020}

}

## Usage

```

from transformers import BertTokenizer, BertForTokenClassification

tokenizer = BertTokenizer.from_pretrained("lanwuwei/GigaBERT-v3-Arabic-and-English", do_lower_case=True)

model = BertForTokenClassification.from_pretrained("lanwuwei/GigaBERT-v3-Arabic-and-English")

```

More code examples can be found [here](https://github.com/lanwuwei/GigaBERT).

|

orai-nlp/ElhBERTeu

|

8d4de0a5d8c49f260010d5ea239afe77de31cfe2

|

2022-07-06T10:21:53.000Z

|

[

"pytorch",

"bert",

"feature-extraction",

"eu",

"transformers",

"basque",

"euskara",

"license:cc-by-4.0"

] |

feature-extraction

| false |

orai-nlp

| null |

orai-nlp/ElhBERTeu

| 502 | 0 |

transformers

| 2,329 |

---

license: cc-by-4.0

language: eu

tags:

- bert

- basque

- euskara

---

# ElhBERTeu

This is a BERT model for Basque introduced in [BasqueGLUE: A Natural Language Understanding Benchmark for Basque]().

To train ElhBERTeu, we collected different corpora sources from several domains: updated (2021) national and local news sources, Basque Wikipedia, as well as novel news sources and texts from other domains, such as science (both academic and divulgative), literature or subtitles. More details about the corpora used and their sizes are shown in the following table. Texts from news sources were oversampled (duplicated) as done during the training of BERTeus. In total 575M tokens were used for pre-training ElhBERTeu.

|Domain | Size |

|-----------|----------|

|News | 2 x 224M |

|Wikipedia | 40M |

|Science | 58M |

|Literature | 24M |

|Others | 7M |

|Total | 575M |

ElhBERTeu is a base, cased monolingual BERT model for Basque, with a vocab size of 50K, which has 124M parameters in total.

ElhBERTeu was trained following the design decisions for [BERTeus](https://huggingface.co/ixa-ehu/berteus-base-cased). The tokenizer and the hyper-parameter settings remained the same, with the only difference being that the full pre-training of the model (1M steps) was performed with a sequence length of 512 on a v3-8 TPU.

The model has been evaluated on the recently created [BasqueGLUE](https://github.com/Elhuyar/BasqueGLUE) NLU benchmark:

| Model | AVG | NERC | F_intent | F_slot | BHTC | BEC | Vaxx | QNLI | WiC | coref |

|-----------|:---------:|:---------:|:---------:|:-------:|:-------:|:-------:|:-------:|:-------:|:-------:|:-------:|

| | | F1 | F1 | F1 | F1 | F1 | MF1 | acc | acc | acc |

| BERTeus | 73.23 | 81.92 | **82.52** | 74.34 |**78.26**| 69.43 | 59.30 |**74.26**| 70.71 |**68.31**|

| ElhBERTeu | **73.71** | **82.30** | 82.24 |**75.64**| 78.05 |**69.89**|**63.81**| 73.84 |**71.71**| 65.93 |
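The AVG column appears to be the unweighted mean of the nine task scores in each row. The short sketch below recomputes it from the values in the table as a sanity check; the variable names are illustrative, and the equal weighting of tasks is an assumption that happens to reproduce the reported figures.

```python
from statistics import mean

# Per-task scores copied from the table above, in column order:
# NERC, F_intent, F_slot, BHTC, BEC, Vaxx, QNLI, WiC, coref.
scores = {
    "BERTeus": [81.92, 82.52, 74.34, 78.26, 69.43, 59.30, 74.26, 70.71, 68.31],
    "ElhBERTeu": [82.30, 82.24, 75.64, 78.05, 69.89, 63.81, 73.84, 71.71, 65.93],
}

# Assumption: every task carries the same weight in the average.
averages = {model: round(mean(values), 2) for model, values in scores.items()}

for model, avg in averages.items():
    print(f"{model}: {avg}")
```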

If you use this model, please cite the following paper:

- G. Urbizu, I. San Vicente, X. Saralegi, R. Agerri, A. Soroa. BasqueGLUE: A Natural Language Understanding Benchmark for Basque. In Proceedings of the 13th Language Resources and Evaluation Conference (LREC 2022). June 2022. Marseille, France.

```

@InProceedings{urbizu2022basqueglue,

author = {Urbizu, Gorka and San Vicente, Iñaki and Saralegi, Xabier and Agerri, Rodrigo and Soroa, Aitor},

title = {BasqueGLUE: A Natural Language Understanding Benchmark for Basque},

booktitle = {Proceedings of the Language Resources and Evaluation Conference},

month = {June},

year = {2022},

address = {Marseille, France},

publisher = {European Language Resources Association},

pages = {1603--1612},

abstract = {Natural Language Understanding (NLU) technology has improved significantly over the last few years and multitask benchmarks such as GLUE are key to evaluate this improvement in a robust and general way. These benchmarks take into account a wide and diverse set of NLU tasks that require some form of language understanding, beyond the detection of superficial, textual clues. However, they are costly to develop and language-dependent, and therefore they are only available for a small number of languages. In this paper, we present BasqueGLUE, the first NLU benchmark for Basque, a less-resourced language, which has been elaborated from previously existing datasets and following similar criteria to those used for the construction of GLUE and SuperGLUE. We also report the evaluation of two state-of-the-art language models for Basque on BasqueGLUE, thus providing a strong baseline to compare upon. BasqueGLUE is freely available under an open license.},

url = {https://aclanthology.org/2022.lrec-1.172}

}

```

License:

CC BY 4.0

|

dhtocks/Named-Entity-Recognition

|

c9eb2cb284b0b69709132d19eeac3816ceb89c5b

|

2022-01-15T11:22:33.000Z

|

[

"pytorch",

"roberta",

"token-classification",

"transformers",

"autotrain_compatible"

] |

token-classification

| false |

dhtocks

| null |

dhtocks/Named-Entity-Recognition

| 500 | null |

transformers

| 2,330 |

Entry not found

|

anas-awadalla/splinter-large-finetuned-squad

|

36015d000da8055edcfbbf0a14c6f5d31a2e837c

|

2022-05-15T10:51:43.000Z

|

[

"pytorch",

"splinter",

"question-answering",

"dataset:squad",

"transformers",

"generated_from_trainer",

"license:apache-2.0",

"model-index",

"autotrain_compatible"

] |

question-answering

| false |

anas-awadalla

| null |

anas-awadalla/splinter-large-finetuned-squad

| 500 | null |

transformers

| 2,331 |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- squad

model-index:

- name: splinter-large-finetuned-squad

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# splinter-large-finetuned-squad

This model is a fine-tuned version of [tau/splinter-large-qass](https://huggingface.co/tau/splinter-large-qass) on the squad dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 12

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2.0
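To illustrate what `lr_scheduler_type: linear` implies for the learning rate listed above, here is a minimal sketch of a linear decay from 3e-05 down to zero. The card does not list any warmup or the total number of optimizer steps, so `warmup_steps=0` and `TOTAL_STEPS = 1000` are hypothetical placeholders, and `linear_lr` is an illustrative helper rather than the Trainer's own code.

```python
def linear_lr(step, total_steps, base_lr=3e-5, warmup_steps=0):
    """Learning rate at `step` for linear warmup followed by linear decay."""
    if warmup_steps and step < warmup_steps:
        # Ramp up linearly from 0 to base_lr during warmup.
        return base_lr * (step / warmup_steps)
    # Decay linearly from base_lr to 0 over the remaining steps.
    remaining = max(0, total_steps - step)
    return base_lr * (remaining / max(1, total_steps - warmup_steps))


# Hypothetical step count; the real value depends on dataset size,
# batch size (12 here), and the number of epochs (2).
TOTAL_STEPS = 1000

for step in (0, 250, 500, 750, 1000):
    print(step, linear_lr(step, TOTAL_STEPS))
```

With no warmup, the rate starts at the configured value, is halved at the midpoint of training, and reaches zero at the final step.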

### Training results

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu113

- Datasets 2.0.0

- Tokenizers 0.11.6

|

STAM/agricore

|

b6dfd05bfdcb097a78e563599517f8441452b404

|

2022-06-01T14:24:16.000Z

|

[

"pytorch",

"t5",

"text2text-generation",

"transformers",

"license:mit",

"autotrain_compatible"

] |

text2text-generation

| false |

STAM

| null |

STAM/agricore

| 500 | null |

transformers

| 2,332 |

---

license: mit

---

|

TofuBoy/DialoGPT-medium-boon

|

cd59807e12d63621addb6c915273fe8621ba6145

|

2022-01-23T05:46:38.000Z

|

[

"pytorch",

"gpt2",

"text-generation",

"transformers",

"conversational"

] |

conversational

| false |

TofuBoy

| null |

TofuBoy/DialoGPT-medium-boon

| 499 | null |

transformers

| 2,333 |

---

tags:

- conversational

---

# Boon Bot DialoGPT Model

|

Recognai/zeroshot_selectra_medium

|

6c3ff31c3c1acb96375d7913f90a19707af33b9a

|

2022-03-27T09:30:04.000Z

|

[

"pytorch",

"electra",

"text-classification",

"es",

"dataset:xnli",

"transformers",

"zero-shot-classification",

"nli",

"license:apache-2.0"

] |

zero-shot-classification

| false |

Recognai

| null |

Recognai/zeroshot_selectra_medium

| 498 | 3 |

transformers

| 2,334 |

---

language: es

tags:

- zero-shot-classification

- nli

- pytorch

datasets:

- xnli

pipeline_tag: zero-shot-classification

license: apache-2.0

widget:

- text: "El autor se perfila, a los 50 años de su muerte, como uno de los grandes de su siglo"

candidate_labels: "cultura, sociedad, economia, salud, deportes"

---

# Zero-shot SELECTRA: A zero-shot classifier based on SELECTRA