| modelId | sha | lastModified | tags | pipeline_tag | private | author | config | id | downloads | likes | library_name | __index_level_0__ | readme |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

TransQuest/monotransquest-da-multilingual

|

cd947f301588992a749d22fc867e535bc9cb1703

|

2021-06-03T19:06:25.000Z

|

[

"pytorch",

"xlm-roberta",

"text-classification",

"multilingual-multilingual",

"transformers",

"Quality Estimation",

"monotransquest",

"DA",

"license:apache-2.0"

] |

text-classification

| false |

TransQuest

| null |

TransQuest/monotransquest-da-multilingual

| 3,818 | null |

transformers

| 1,000 |

---

language: multilingual-multilingual

tags:

- Quality Estimation

- monotransquest

- DA

license: apache-2.0

---

# TransQuest: Translation Quality Estimation with Cross-lingual Transformers

The goal of quality estimation (QE) is to evaluate the quality of a translation without having access to a reference translation. High-accuracy QE that can be easily deployed for a number of language pairs is the missing piece in many commercial translation workflows, where it has numerous potential uses. QE systems can be employed to select the best translation when several translation engines are available, or to inform the end user about the reliability of automatically translated content. In addition, they can be used to decide whether a translation can be published as it is in a given context, whether it requires human post-editing before publishing, or whether it should be translated from scratch by a human. Quality estimation can be done at different levels: document level, sentence level and word level.

With TransQuest, we have open-sourced our research in translation quality estimation, which also won the sentence-level direct assessment quality estimation shared task at [WMT 2020](http://www.statmt.org/wmt20/quality-estimation-task.html). TransQuest outperforms current open-source quality estimation frameworks such as [OpenKiwi](https://github.com/Unbabel/OpenKiwi) and [DeepQuest](https://github.com/sheffieldnlp/deepQuest).

## Features

- Sentence-level translation quality estimation on both aspects: predicting post-editing effort and direct assessment.

- Word-level translation quality estimation capable of predicting the quality of source words, target words and target gaps.

- Outperforms current state-of-the-art quality estimation methods like DeepQuest and OpenKiwi in all the languages experimented with.

- Pre-trained quality estimation models for fifteen language pairs are available on [HuggingFace](https://huggingface.co/TransQuest).

## Installation

### From pip

```bash

pip install transquest

```

### From Source

```bash

git clone https://github.com/TharinduDR/TransQuest.git

cd TransQuest

pip install -r requirements.txt

```

## Using Pre-trained Models

```python

import torch

from transquest.algo.sentence_level.monotransquest.run_model import MonoTransQuestModel

model = MonoTransQuestModel("xlmroberta", "TransQuest/monotransquest-da-multilingual", num_labels=1, use_cuda=torch.cuda.is_available())

predictions, raw_outputs = model.predict([["Reducerea acestor conflicte este importantă pentru conservare.", "Reducing these conflicts is not important for preservation."]])

print(predictions)

```
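Once scores are available, the uses described in the introduction (picking the best of several engine outputs, or deciding between publishing, post-editing and retranslating) reduce to simple decision logic. The following is a minimal sketch only: the candidate scores, the `pick_best` and `route` helpers and both thresholds are hypothetical and would need to be calibrated on your own data.

```python
# Hypothetical QE scores for three engine outputs of the same source sentence.
candidates = [
    ("engine_a output", 0.62),
    ("engine_b output", -0.10),
    ("engine_c output", -1.30),
]


def pick_best(scored_translations):
    """Return the (translation, score) pair with the highest QE score."""
    return max(scored_translations, key=lambda pair: pair[1])


def route(score, publish_at=0.5, post_edit_at=-0.5):
    """Map a QE score to a workflow decision (thresholds are illustrative)."""
    if score >= publish_at:
        return "publish"
    if score >= post_edit_at:
        return "post-edit"
    return "retranslate"


best_text, best_score = pick_best(candidates)
print(best_text, route(best_score))
for text, score in candidates:
    print(text, score, route(score))
```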

## Documentation

For more details, see the documentation.

1. **[Installation](https://tharindudr.github.io/TransQuest/install/)** - Install TransQuest locally using pip.

2. **Architectures** - Check out the architectures implemented in TransQuest.

    1. [Sentence-level Architectures](https://tharindudr.github.io/TransQuest/architectures/sentence_level_architectures/) - We have released two architectures, MonoTransQuest and SiameseTransQuest, for sentence-level quality estimation.

    2. [Word-level Architecture](https://tharindudr.github.io/TransQuest/architectures/word_level_architecture/) - We have released MicroTransQuest for word-level quality estimation.

3. **Examples** - We have provided several examples of how to use TransQuest in recent WMT quality estimation shared tasks.

    1. [Sentence-level Examples](https://tharindudr.github.io/TransQuest/examples/sentence_level_examples/)

    2. [Word-level Examples](https://tharindudr.github.io/TransQuest/examples/word_level_examples/)

4. **Pre-trained Models** - We have provided pre-trained quality estimation models for fifteen language pairs, covering both sentence level and word level.

    1. [Sentence-level Models](https://tharindudr.github.io/TransQuest/models/sentence_level_pretrained/)

    2. [Word-level Models](https://tharindudr.github.io/TransQuest/models/word_level_pretrained/)

5. **[Contact](https://tharindudr.github.io/TransQuest/contact/)** - Contact us about any issues with TransQuest.

## Citations

If you are using the word-level architecture, please consider citing this paper, which was accepted to [ACL 2021](https://2021.aclweb.org/).

```bibtex

@InProceedings{ranasinghe2021,

author = {Ranasinghe, Tharindu and Orasan, Constantin and Mitkov, Ruslan},

title = {An Exploratory Analysis of Multilingual Word Level Quality Estimation with Cross-Lingual Transformers},

booktitle = {Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics},

year = {2021}

}

```

If you are using the sentence-level architectures, please consider citing these papers, which were presented at [COLING 2020](https://coling2020.org/) and at [WMT 2020](http://www.statmt.org/wmt20/) at EMNLP 2020.

```bibtex

@InProceedings{transquest:2020a,

author = {Ranasinghe, Tharindu and Orasan, Constantin and Mitkov, Ruslan},

title = {TransQuest: Translation Quality Estimation with Cross-lingual Transformers},

booktitle = {Proceedings of the 28th International Conference on Computational Linguistics},

year = {2020}

}

```

```bibtex

@InProceedings{transquest:2020b,

author = {Ranasinghe, Tharindu and Orasan, Constantin and Mitkov, Ruslan},

title = {TransQuest at WMT2020: Sentence-Level Direct Assessment},

booktitle = {Proceedings of the Fifth Conference on Machine Translation},

year = {2020}

}

```

|

eugenesiow/bart-paraphrase

|

561b9d9631d608b8c63c01ecb64b5f030cabdd73

|

2021-09-13T10:02:50.000Z

|

[

"pytorch",

"bart",

"text2text-generation",

"en",

"dataset:quora",

"dataset:paws",

"arxiv:1910.13461",

"transformers",

"paraphrase",

"seq2seq",

"license:apache-2.0",

"autotrain_compatible"

] |

text2text-generation

| false |

eugenesiow

| null |

eugenesiow/bart-paraphrase

| 3,805 | 3 |

transformers

| 1,001 |

---

language: en

license: apache-2.0

tags:

- transformers

- bart

- paraphrase

- seq2seq

datasets:

- quora

- paws

---

# BART Paraphrase Model (Large)

A large BART seq2seq (text2text generation) model fine-tuned on 3 paraphrase datasets.

## Model description

The BART model was proposed in [BART: Denoising Sequence-to-Sequence Pre-training for Natural Language Generation, Translation, and Comprehension](https://arxiv.org/abs/1910.13461) by Lewis et al. (2019).

- BART uses a standard seq2seq/machine translation architecture with a bidirectional encoder (like BERT) and a left-to-right decoder (like GPT).

- The pretraining task involves randomly shuffling the order of the original sentences and a novel in-filling scheme, where spans of text are replaced with a single mask token.

- BART is particularly effective when fine-tuned for text generation. This model is fine-tuned on 3 paraphrase datasets (Quora, PAWS and MSR paraphrase corpus).
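The two noising steps in the pretraining task described above can be illustrated with a toy sketch. This is not the fairseq implementation: the helper names, the fixed span position and the whitespace tokenization are assumptions made for illustration (the BART paper samples span lengths randomly, which is omitted here for determinism).

```python
import random

MASK = "<mask>"


def permute_sentences(sentences, rng):
    """Return the sentences of a document in a random order."""
    shuffled = list(sentences)
    rng.shuffle(shuffled)
    return shuffled


def infill_span(tokens, start, length):
    """Replace tokens[start:start + length] with a single mask token."""
    return tokens[:start] + [MASK] + tokens[start + length:]


rng = random.Random(0)
document = ["The cat sat .", "It was warm .", "Then it slept ."]

shuffled = permute_sentences(document, rng)
tokens = " ".join(shuffled).split()
noised = infill_span(tokens, start=2, length=3)  # three tokens become one mask

print(shuffled)
print(noised)
```

During pretraining, the model sees the noised sequence as encoder input and is trained to reconstruct the original document.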

The original BART code is from this [repository](https://github.com/pytorch/fairseq/tree/master/examples/bart).

## Intended uses & limitations

You can use the pre-trained model for paraphrasing an input sentence.

### How to use

```python

import torch

from transformers import BartForConditionalGeneration, BartTokenizer

input_sentence = "They were there to enjoy us and they were there to pray for us."

model = BartForConditionalGeneration.from_pretrained('eugenesiow/bart-paraphrase')

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

model = model.to(device)

tokenizer = BartTokenizer.from_pretrained('eugenesiow/bart-paraphrase')

batch = tokenizer(input_sentence, return_tensors='pt').to(device)  # keep inputs on the same device as the model

generated_ids = model.generate(batch['input_ids'])

generated_sentence = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)

print(generated_sentence)

```

### Output

```

['They were there to enjoy us and to pray for us.']

```

## Training data

The model was fine-tuned on a pretrained [`facebook/bart-large`](https://huggingface.co/facebook/bart-large), using the [Quora](https://huggingface.co/datasets/quora), [PAWS](https://huggingface.co/datasets/paws) and [MSR paraphrase corpus](https://www.microsoft.com/en-us/download/details.aspx?id=52398).

## Training procedure

We follow the training procedure provided in the [simpletransformers](https://github.com/ThilinaRajapakse/simpletransformers) seq2seq [example](https://github.com/ThilinaRajapakse/simpletransformers/blob/master/examples/seq2seq/paraphrasing/train.py).

## BibTeX entry and citation info

```bibtex

@misc{lewis2019bart,

title={BART: Denoising Sequence-to-Sequence Pre-training for Natural Language Generation, Translation, and Comprehension},

author={Mike Lewis and Yinhan Liu and Naman Goyal and Marjan Ghazvininejad and Abdelrahman Mohamed and Omer Levy and Ves Stoyanov and Luke Zettlemoyer},

year={2019},

eprint={1910.13461},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

camembert/camembert-large

|

df7dbf53dd70551faa6b4ec45deb4a566445c7cc

|

2020-12-11T21:35:25.000Z

|

[

"pytorch",

"camembert",

"fr",

"arxiv:1911.03894",

"transformers"

] | null | false |

camembert

| null |

camembert/camembert-large

| 3,801 | 4 |

transformers

| 1,002 |

---

language: fr

---

# CamemBERT: a Tasty French Language Model

## Introduction

[CamemBERT](https://arxiv.org/abs/1911.03894) is a state-of-the-art language model for French based on the RoBERTa model.

It is now available on Hugging Face in 6 different versions, which vary in the number of parameters, the amount of pretraining data and the source domains of the pretraining data.

For further information or requests, please go to the [CamemBERT website](https://camembert-model.fr/).

## Pre-trained models

| Model | #params | Arch. | Training data |

|--------------------------------|--------------------------------|-------|-----------------------------------|

| `camembert-base` | 110M | Base | OSCAR (138 GB of text) |

| `camembert/camembert-large` | 335M | Large | CCNet (135 GB of text) |

| `camembert/camembert-base-ccnet` | 110M | Base | CCNet (135 GB of text) |

| `camembert/camembert-base-wikipedia-4gb` | 110M | Base | Wikipedia (4 GB of text) |

| `camembert/camembert-base-oscar-4gb` | 110M | Base | Subsample of OSCAR (4 GB of text) |

| `camembert/camembert-base-ccnet-4gb` | 110M | Base | Subsample of CCNet (4 GB of text) |

## How to use CamemBERT with HuggingFace

##### Load CamemBERT and its sub-word tokenizer

```python

from transformers import CamembertModel, CamembertTokenizer

# You can replace "camembert/camembert-large" with any other model from the table, e.g. "camembert-base".

tokenizer = CamembertTokenizer.from_pretrained("camembert/camembert-large")

camembert = CamembertModel.from_pretrained("camembert/camembert-large")

camembert.eval() # disable dropout (or leave in train mode to finetune)

```

##### Filling masks using pipeline

```python

from transformers import pipeline

camembert_fill_mask = pipeline("fill-mask", model="camembert/camembert-large", tokenizer="camembert/camembert-large")

results = camembert_fill_mask("Le camembert est <mask> :)")

# results

#[{'sequence': '<s> Le camembert est bon :)</s>', 'score': 0.15560828149318695, 'token': 305},

#{'sequence': '<s> Le camembert est excellent :)</s>', 'score': 0.06821336597204208, 'token': 3497},

#{'sequence': '<s> Le camembert est délicieux :)</s>', 'score': 0.060438305139541626, 'token': 11661},

#{'sequence': '<s> Le camembert est ici :)</s>', 'score': 0.02023460529744625, 'token': 373},

#{'sequence': '<s> Le camembert est meilleur :)</s>', 'score': 0.01778135634958744, 'token': 876}]

```
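The `score` values in the results above are probabilities over the vocabulary for the masked position. As a rough illustration of how such a ranked list can be derived from raw model outputs, the sketch below applies a softmax to made-up logits and keeps the top candidates. The five-word vocabulary and the logit values are hypothetical, not taken from CamemBERT.

```python
import math


def softmax(logits):
    """Convert raw scores to probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


def top_k(vocab, logits, k):
    """Return the k most probable (token, probability) pairs."""
    probs = softmax(logits)
    ranked = sorted(zip(vocab, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Hypothetical vocabulary and logits for the masked position.
vocab = ["bon", "excellent", "ici", "bleu", "rapide"]
logits = [4.0, 3.2, 2.0, 0.5, 0.1]

for token, prob in top_k(vocab, logits, k=3):
    print(f"{token}: {prob:.3f}")
```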

##### Extract contextual embedding features from Camembert output

```python

import torch

# Tokenize in sub-words with SentencePiece

tokenized_sentence = tokenizer.tokenize("J'aime le camembert !")

# ['▁J', "'", 'aime', '▁le', '▁cam', 'ember', 't', '▁!']

# Map sub-word tokens to vocabulary ids and add special start and end tokens

encoded_sentence = tokenizer.encode(tokenized_sentence)

# [5, 133, 22, 1250, 16, 12034, 14324, 81, 76, 6]

# NB: Can be done in one step: tokenizer.encode("J'aime le camembert !")

# Feed tokens to CamemBERT as a torch tensor (batch dim 1)

encoded_sentence = torch.tensor(encoded_sentence).unsqueeze(0)

# Index 0 is the last hidden state; integer indexing works for both tuple and ModelOutput returns

embeddings = camembert(encoded_sentence)[0]

# embeddings.detach()

# torch.Size([1, 10, 1024])

#tensor([[[-0.1284, 0.2643, 0.4374, ..., 0.1627, 0.1308, -0.2305],

# [ 0.4576, -0.6345, -0.2029, ..., -0.1359, -0.2290, -0.6318],

# [ 0.0381, 0.0429, 0.5111, ..., -0.1177, -0.1913, -0.1121],

# ...,

```

##### Extract contextual embedding features from all Camembert layers

```python

from transformers import CamembertConfig

# (Need to reload the model with new config)

config = CamembertConfig.from_pretrained("camembert/camembert-large", output_hidden_states=True)

camembert = CamembertModel.from_pretrained("camembert/camembert-large", config=config)

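outputs = camembert(encoded_sentence)

# Index 0 is the last hidden state, index 2 the tuple of per-layer hidden states

embeddings, all_layer_embeddings = outputs[0], outputs[2]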

# all_layer_embeddings list of len(all_layer_embeddings) == 25 (input embedding layer + 24 self attention layers)

all_layer_embeddings[5]

# layer 5 contextual embedding : size torch.Size([1, 10, 1024])

#tensor([[[-0.0600, 0.0742, 0.0332, ..., -0.0525, -0.0637, -0.0287],

# [ 0.0950, 0.2840, 0.1985, ..., 0.2073, -0.2172, -0.6321],

# [ 0.1381, 0.1872, 0.1614, ..., -0.0339, -0.2530, -0.1182],

# ...,

```

## Authors

CamemBERT was trained and evaluated by Louis Martin\*, Benjamin Muller\*, Pedro Javier Ortiz Suárez\*, Yoann Dupont, Laurent Romary, Éric Villemonte de la Clergerie, Djamé Seddah and Benoît Sagot.

## Citation

If you use our work, please cite:

```bibtex

@inproceedings{martin2020camembert,

title={CamemBERT: a Tasty French Language Model},

author={Martin, Louis and Muller, Benjamin and Su{\'a}rez, Pedro Javier Ortiz and Dupont, Yoann and Romary, Laurent and de la Clergerie, {\'E}ric Villemonte and Seddah, Djam{\'e} and Sagot, Beno{\^\i}t},

booktitle={Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics},

year={2020}

}

```

|

sentence-transformers/msmarco-MiniLM-L-6-v3

|

195276c0c8647b99dfe128bd8bc4ecd1a66d41f8

|

2022-06-15T21:52:00.000Z

|

[

"pytorch",

"tf",

"jax",

"bert",

"feature-extraction",

"arxiv:1908.10084",

"sentence-transformers",

"sentence-similarity",

"transformers",

"license:apache-2.0"

] |

sentence-similarity

| false |

sentence-transformers

| null |

sentence-transformers/msmarco-MiniLM-L-6-v3

| 3,781 | 3 |

sentence-transformers

| 1,003 |

---

pipeline_tag: sentence-similarity

license: apache-2.0

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# sentence-transformers/msmarco-MiniLM-L-6-v3

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 384 dimensional dense vector space and can be used for tasks like clustering or semantic search.

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('sentence-transformers/msmarco-MiniLM-L-6-v3')

embeddings = model.encode(sentences)

print(embeddings)

```
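For semantic search, the embeddings are typically compared with a similarity function. The sketch below ranks documents by cosine similarity to a query; it is a plain-Python illustration, and the three-dimensional vectors and document names are made up (real embeddings from this model have 384 dimensions).

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank(query_vec, corpus):
    """Return (doc_id, similarity) pairs, most similar first."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in corpus.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical toy embeddings.
corpus = {
    "doc_a": [0.9, 0.1, 0.0],
    "doc_b": [0.0, 1.0, 0.0],
    "doc_c": [0.5, 0.5, 0.5],
}
query = [1.0, 0.0, 0.0]

for doc_id, score in rank(query, corpus):
    print(doc_id, round(score, 3))
```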

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: First, you pass your input through the transformer model, then you have to apply the right pooling-operation on-top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/msmarco-MiniLM-L-6-v3')

model = AutoModel.from_pretrained('sentence-transformers/msmarco-MiniLM-L-6-v3')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```
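To make the pooling step concrete, here is the same idea on plain Python lists for a single sentence: padded positions (mask value 0) are excluded from the average. The `mean_pool` helper and the toy values are illustrative only and are not part of the library.

```python
def mean_pool(token_embeddings, attention_mask):
    """Average the token vectors whose attention-mask entry is 1."""
    dim = len(token_embeddings[0])
    sums = [0.0] * dim
    count = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            count += 1
            for i, value in enumerate(vector):
                sums[i] += value
    count = max(count, 1e-9)  # avoid division by zero for an all-padding input
    return [total / count for total in sums]


# Two real tokens followed by one padding token.
tokens = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
mask = [1, 1, 0]
print(mean_pool(tokens, mask))
```

The padding vector does not affect the result, which is why the attention mask has to be taken into account.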

## Evaluation Results

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/msmarco-MiniLM-L-6-v3)

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: BertModel

(1): Pooling({'word_embedding_dimension': 384, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

This model was trained by [sentence-transformers](https://www.sbert.net/).

If you find this model helpful, feel free to cite our publication [Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks](https://arxiv.org/abs/1908.10084):

```bibtex

@inproceedings{reimers-2019-sentence-bert,

title = "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks",

author = "Reimers, Nils and Gurevych, Iryna",

booktitle = "Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing",

month = "11",

year = "2019",

publisher = "Association for Computational Linguistics",

url = "http://arxiv.org/abs/1908.10084",

}

```

|

microsoft/wavlm-base-plus

|

4c66d4806a428f2e922ccfa1a962776e232d487b

|

2021-12-22T17:23:24.000Z

|

[

"pytorch",

"wavlm",

"feature-extraction",

"en",

"arxiv:1912.07875",

"arxiv:2106.06909",

"arxiv:2101.00390",

"arxiv:2110.13900",

"transformers",

"speech"

] |

feature-extraction

| false |

microsoft

| null |

microsoft/wavlm-base-plus

| 3,775 | 2 |

transformers

| 1,004 |

---

language:

- en

datasets:

tags:

- speech

inference: false

---

# WavLM-Base-Plus

[Microsoft's WavLM](https://github.com/microsoft/unilm/tree/master/wavlm)

The base model pretrained on 16kHz sampled speech audio. When using the model, make sure that your speech input is also sampled at 16kHz.
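As a quick sanity check for the 16kHz requirement, the sample rate of a WAV file can be read with the Python standard library before any audio is passed to the model. This is a minimal sketch: `make_silent_wav` only builds an in-memory test file, and both helper names are hypothetical.

```python
import io
import wave

EXPECTED_RATE = 16_000  # the model expects 16 kHz input


def make_silent_wav(rate, seconds=0.1):
    """Build a short mono 16-bit WAV file in memory (test data only)."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(rate * seconds))
    return buffer.getvalue()


def wav_sample_rate(data):
    """Return the sample rate stored in the WAV header."""
    with wave.open(io.BytesIO(data), "rb") as wav:
        return wav.getframerate()


for rate in (16_000, 8_000):
    found = wav_sample_rate(make_silent_wav(rate))
    status = "ok" if found == EXPECTED_RATE else "needs resampling"
    print(found, status)
```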

**Note**: This model does not have a tokenizer as it was pretrained on audio alone. To use this model for **speech recognition**, a tokenizer should be created and the model should be fine-tuned on labeled text data. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for a more detailed explanation of how to fine-tune the model.

The model was pre-trained on:

- 60,000 hours of [Libri-Light](https://arxiv.org/abs/1912.07875)

- 10,000 hours of [GigaSpeech](https://arxiv.org/abs/2106.06909)

- 24,000 hours of [VoxPopuli](https://arxiv.org/abs/2101.00390)

[Paper: WavLM: Large-Scale Self-Supervised Pre-Training for Full Stack Speech Processing](https://arxiv.org/abs/2110.13900)

Authors: Sanyuan Chen, Chengyi Wang, Zhengyang Chen, Yu Wu, Shujie Liu, Zhuo Chen, Jinyu Li, Naoyuki Kanda, Takuya Yoshioka, Xiong Xiao, Jian Wu, Long Zhou, Shuo Ren, Yanmin Qian, Yao Qian, Jian Wu, Michael Zeng, Furu Wei

**Abstract**

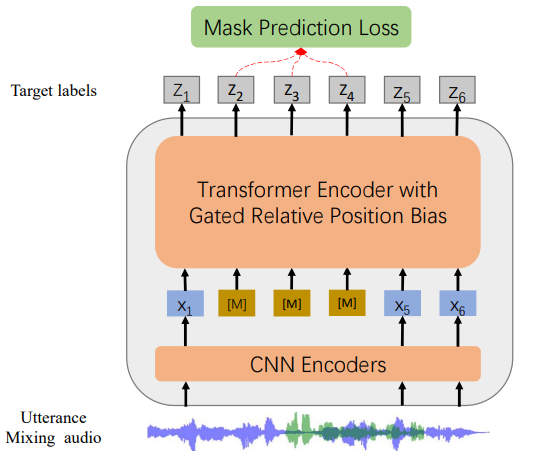

*Self-supervised learning (SSL) achieves great success in speech recognition, while limited exploration has been attempted for other speech processing tasks. As speech signal contains multi-faceted information including speaker identity, paralinguistics, spoken content, etc., learning universal representations for all speech tasks is challenging. In this paper, we propose a new pre-trained model, WavLM, to solve full-stack downstream speech tasks. WavLM is built based on the HuBERT framework, with an emphasis on both spoken content modeling and speaker identity preservation. We first equip the Transformer structure with gated relative position bias to improve its capability on recognition tasks. For better speaker discrimination, we propose an utterance mixing training strategy, where additional overlapped utterances are created unsupervisely and incorporated during model training. Lastly, we scale up the training dataset from 60k hours to 94k hours. WavLM Large achieves state-of-the-art performance on the SUPERB benchmark, and brings significant improvements for various speech processing tasks on their representative benchmarks.*

The original model can be found under https://github.com/microsoft/unilm/tree/master/wavlm.

# Usage

This is an English pre-trained speech model that has to be fine-tuned on a downstream task like speech recognition or audio classification before it can be used in inference. The model was pre-trained in English and should therefore perform well only in English. The model has been shown to work well on the [SUPERB benchmark](https://superbbenchmark.org/).

**Note**: The model was pre-trained on phonemes rather than characters. This means that one should make sure that the input text is converted to a sequence of phonemes before fine-tuning.

## Speech Recognition

To fine-tune the model for speech recognition, see [the official speech recognition example](https://github.com/huggingface/transformers/tree/master/examples/pytorch/speech-recognition).

## Speech Classification

To fine-tune the model for speech classification, see [the official audio classification example](https://github.com/huggingface/transformers/tree/master/examples/pytorch/audio-classification).

## Speaker Verification

TODO

## Speaker Diarization

TODO

# Contribution

The model was contributed by [cywang](https://huggingface.co/cywang) and [patrickvonplaten](https://huggingface.co/patrickvonplaten).

# License

The official license can be found [here](https://github.com/microsoft/UniSpeech/blob/main/LICENSE)

|

KoboldAI/GPT-J-6B-Adventure

|

e2c00dc99f986f2430f5d34c0214969cee786755

|

2021-12-24T19:32:09.000Z

|

[

"pytorch",

"gptj",

"text-generation",

"transformers"

] |

text-generation

| false |

KoboldAI

| null |

KoboldAI/GPT-J-6B-Adventure

| 3,772 | 2 |

transformers

| 1,005 |

Entry not found

|

flair/ner-dutch

|

16f9e2a2e2c6b739c723b81a8d72a923f4e46b0a

|

2021-03-02T22:03:57.000Z

|

[

"pytorch",

"nl",

"dataset:conll2003",

"flair",

"token-classification",

"sequence-tagger-model"

] |

token-classification

| false |

flair

| null |

flair/ner-dutch

| 3,769 | null |

flair

| 1,006 |

---

tags:

- flair

- token-classification

- sequence-tagger-model

language: nl

datasets:

- conll2003

widget:

- text: "George Washington ging naar Washington."

---

# Dutch NER in Flair (default model)

This is the standard 4-class NER model for Dutch that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **92.58** (CoNLL-03)

Predicts 4 tags:

| **tag** | **meaning** |

|---------------------------------|-----------|

| PER | person name |

| LOC | location name |

| ORG | organization name |

| MISC | other name |

Based on Transformer embeddings and LSTM-CRF.

---

# Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("flair/ner-dutch")

# make example sentence

sentence = Sentence("George Washington ging naar Washington")

# predict NER tags

tagger.predict(sentence)

# print sentence

print(sentence)

# print predicted NER spans

print('The following NER tags are found:')

# iterate over entities and print

for entity in sentence.get_spans('ner'):
    print(entity)

```

This yields the following output:

```

Span [1,2]: "George Washington" [− Labels: PER (0.997)]

Span [5]: "Washington" [− Labels: LOC (0.9996)]

```

So, the entities "*George Washington*" (labeled as a **person**) and "*Washington*" (labeled as a **location**) are found in the sentence "*George Washington ging naar Washington*".
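To show how per-token tags relate to the spans printed above, the sketch below groups tags into entity spans with 1-based token positions. It assumes a simple BIO tagging scheme, which this card does not state, and `bio_to_spans` is a hypothetical helper rather than a Flair function.

```python
def bio_to_spans(tokens, tags):
    """Group BIO tags into (label, token_positions, text) spans."""
    spans = []
    current = None  # (label, positions, words) of the span being built
    for position, (token, tag) in enumerate(zip(tokens, tags), start=1):
        label = tag[2:]
        starts_new = tag.startswith("B-") or (
            tag.startswith("I-") and (current is None or current[0] != label)
        )
        if starts_new:
            if current:
                spans.append(current)
            current = (label, [position], [token])
        elif tag.startswith("I-"):
            current[1].append(position)
            current[2].append(token)
        else:  # "O" closes any open span
            if current:
                spans.append(current)
            current = None
    if current:
        spans.append(current)
    return [(label, positions, " ".join(words)) for label, positions, words in spans]


tokens = ["George", "Washington", "ging", "naar", "Washington"]
tags = ["B-PER", "I-PER", "O", "O", "B-LOC"]  # assumed tags for illustration
for span in bio_to_spans(tokens, tags):
    print(span)
```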

---

### Training: Script to train this model

The following Flair script was used to train this model:

```python

from flair.data import Corpus
from flair.datasets import CONLL_03_DUTCH
from flair.embeddings import TransformerWordEmbeddings
from flair.models import SequenceTagger
from flair.trainers import ModelTrainer

# 1. get the corpus

corpus: Corpus = CONLL_03_DUTCH()

# 2. what tag do we want to predict?

tag_type = 'ner'

# 3. make the tag dictionary from the corpus

tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize embeddings

embeddings = TransformerWordEmbeddings('wietsedv/bert-base-dutch-cased')

# 5. initialize sequence tagger

tagger: SequenceTagger = SequenceTagger(hidden_size=256,

embeddings=embeddings,

tag_dictionary=tag_dictionary,

tag_type=tag_type)

# 6. initialize trainer

trainer: ModelTrainer = ModelTrainer(tagger, corpus)

# 7. run training

trainer.train('resources/taggers/ner-dutch',

train_with_dev=True,

max_epochs=150)

```

---

### Cite

Please cite the following paper when using this model.

```

@inproceedings{akbik-etal-2019-flair,

title = "{FLAIR}: An Easy-to-Use Framework for State-of-the-Art {NLP}",

author = "Akbik, Alan and

Bergmann, Tanja and

Blythe, Duncan and

Rasul, Kashif and

Schweter, Stefan and

Vollgraf, Roland",

booktitle = "Proceedings of the 2019 Conference of the North {A}merican Chapter of the Association for Computational Linguistics (Demonstrations)",

year = "2019",

url = "https://www.aclweb.org/anthology/N19-4010",

pages = "54--59",

}

```

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

PlanTL-GOB-ES/roberta-large-bne-sqac

|

49f9afb2bf305084e1c8c61046369123a60bd0c5

|

2022-04-06T14:43:56.000Z

|

[

"pytorch",

"roberta",

"question-answering",

"es",

"dataset:PlanTL-GOB-ES/SQAC",

"arxiv:1907.11692",

"arxiv:2107.07253",

"transformers",

"national library of spain",

"spanish",

"bne",

"qa",

"question answering",

"license:apache-2.0",

"autotrain_compatible"

] |

question-answering

| false |

PlanTL-GOB-ES

| null |

PlanTL-GOB-ES/roberta-large-bne-sqac

| 3,764 | 2 |

transformers

| 1,007 |

---

language:

- es

license: apache-2.0

tags:

- "national library of spain"

- "spanish"

- "bne"

- "qa"

- "question answering"

datasets:

- "PlanTL-GOB-ES/SQAC"

metrics:

- "f1"

---

# Spanish RoBERTa-large trained on BNE, fine-tuned on the Spanish Question Answering Corpus (SQAC) dataset

RoBERTa-large-bne is a transformer-based masked language model for the Spanish language. It is based on the [RoBERTa](https://arxiv.org/abs/1907.11692) large model and has been pre-trained using the largest Spanish corpus known to date, with a total of 570GB of clean and deduplicated text processed for this work, compiled from the web crawlings performed by the [National Library of Spain (Biblioteca Nacional de España)](http://www.bne.es/en/Inicio/index.html) from 2009 to 2019.

The original pre-trained model can be found here: https://huggingface.co/BSC-TeMU/roberta-large-bne

## Dataset

The dataset used is the [SQAC corpus](https://huggingface.co/datasets/PlanTL-GOB-ES/SQAC).

## Evaluation and results

F1 Score: 0.7993 (average of 5 runs).

For evaluation details visit our [GitHub repository](https://github.com/PlanTL-GOB-ES/lm-spanish).
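For readers unfamiliar with the metric, question-answering F1 is commonly computed from the token overlap between the predicted and reference answers, then averaged over examples. The sketch below assumes that SQuAD-style definition with simple lowercasing and whitespace tokenization. The evaluation script in the linked repository may normalize text differently, and the example answers are made up.

```python
from collections import Counter


def token_f1(prediction, reference):
    """Token-overlap F1 between a predicted and a reference answer."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


# Hypothetical (prediction, reference) pairs.
pairs = [
    ("en Madrid", "Madrid"),
    ("Miguel de Cervantes", "Miguel de Cervantes"),
    ("1605", "1615"),
]
scores = [token_f1(pred, ref) for pred, ref in pairs]
print(scores, sum(scores) / len(scores))
```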

## Citing

Check out our paper for all the details: https://arxiv.org/abs/2107.07253

```

@article{gutierrezfandino2022,

author = {Asier Gutiérrez-Fandiño and Jordi Armengol-Estapé and Marc Pàmies and Joan Llop-Palao and Joaquin Silveira-Ocampo and Casimiro Pio Carrino and Carme Armentano-Oller and Carlos Rodriguez-Penagos and Aitor Gonzalez-Agirre and Marta Villegas},

title = {MarIA: Spanish Language Models},

journal = {Procesamiento del Lenguaje Natural},

volume = {68},

number = {0},

year = {2022},

issn = {1989-7553},

url = {http://journal.sepln.org/sepln/ojs/ojs/index.php/pln/article/view/6405},

pages = {39--60}

}

```

## Funding

This work was partially funded by the Spanish State Secretariat for Digitalization and Artificial Intelligence (SEDIA) within the framework of the Plan-TL, and the Future of Computing Center, a Barcelona Supercomputing Center and IBM initiative (2020).

## Disclaimer

The models published in this repository are intended for a generalist purpose and are available to third parties. These models may have bias and/or any other undesirable distortions.

When third parties deploy or provide systems and/or services to other parties using any of these models (or using systems based on these models) or become users of the models, they should note that it is their responsibility to mitigate the risks arising from their use and, in any event, to comply with applicable regulations, including regulations regarding the use of artificial intelligence.

In no event shall the owner of the models (SEDIA – State Secretariat for digitalization and artificial intelligence) nor the creator (BSC – Barcelona Supercomputing Center) be liable for any results arising from the use made by third parties of these models.


|

mrm8488/bert-base-spanish-wwm-cased-finetuned-spa-squad2-es

|

99818221720ac345078458b0b0489d61b21fe137

|

2021-05-20T00:22:53.000Z

|

[

"pytorch",

"jax",

"bert",

"question-answering",

"es",

"transformers",

"autotrain_compatible"

] |

question-answering

| false |

mrm8488

| null |

mrm8488/bert-base-spanish-wwm-cased-finetuned-spa-squad2-es

| 3,764 | 1 |

transformers

| 1,008 |

---

language: es

thumbnail: https://i.imgur.com/jgBdimh.png

---

# BETO (Spanish BERT) + Spanish SQuAD2.0

This model is provided by the [BETO team](https://github.com/dccuchile/beto) and fine-tuned on [SQuAD-es-v2.0](https://github.com/ccasimiro88/TranslateAlignRetrieve) for the **Q&A** downstream task.

## Details of the language model ('dccuchile/bert-base-spanish-wwm-cased')

Language model ([**'dccuchile/bert-base-spanish-wwm-cased'**](https://github.com/dccuchile/beto/blob/master/README.md)):

BETO is a [BERT model](https://github.com/google-research/bert) trained on a [big Spanish corpus](https://github.com/josecannete/spanish-corpora). BETO is similar in size to BERT-Base and was trained with the Whole Word Masking technique. The BETO repository provides TensorFlow and PyTorch checkpoints for the uncased and cased versions, as well as results on Spanish benchmarks comparing BETO with [Multilingual BERT](https://github.com/google-research/bert/blob/master/multilingual.md) and other (non-BERT-based) models.

## Details of the downstream task (Q&A) - Dataset

[SQuAD-es-v2.0](https://github.com/ccasimiro88/TranslateAlignRetrieve)

| Dataset | # Q&A |

| ---------------------- | ----- |

| SQuAD2.0 Train | 130 K |

| SQuAD2.0-es-v2.0 | 111 K |

| SQuAD2.0 Dev | 12 K |

| SQuAD-es-v2.0-small Dev| 69 K |

## Model training

The model was trained on a Tesla P100 GPU with 25GB of RAM using the following command:

```bash
export SQUAD_DIR=path/to/nl_squad
python transformers/examples/question-answering/run_squad.py \
  --model_type bert \
  --model_name_or_path dccuchile/bert-base-spanish-wwm-cased \
  --do_train \
  --do_eval \
  --do_lower_case \
  --train_file $SQUAD_DIR/train_nl-v2.0.json \
  --predict_file $SQUAD_DIR/dev_nl-v2.0.json \
  --per_gpu_train_batch_size 12 \
  --learning_rate 3e-5 \
  --num_train_epochs 2.0 \
  --max_seq_length 384 \
  --doc_stride 128 \
  --output_dir /content/model_output \
  --save_steps 5000 \
  --threads 4 \
  --version_2_with_negative
```

## Results:

| Metric    | Value       |
| --------- | ----------- |
| **Exact** | **76.5050** |
| **F1**    | **86.0781** |

```json

{

"exact": 76.50501430594491,

"f1": 86.07818773108252,

"total": 69202,

"HasAns_exact": 67.93020719738277,

"HasAns_f1": 82.37912207996466,

"HasAns_total": 45850,

"NoAns_exact": 93.34104145255225,

"NoAns_f1": 93.34104145255225,

"NoAns_total": 23352,

"best_exact": 76.51223953064941,

"best_exact_thresh": 0.0,

"best_f1": 86.08541295578848,

"best_f1_thresh": 0.0

}

```
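The overall `exact` and `f1` values are the question-count-weighted averages of the `HasAns_*` and `NoAns_*` subsets. The short sketch below recomputes them from the numbers reported above; the helper name `overall_score` is introduced here only for illustration.

```python
def overall_score(has_ans_score, has_ans_total, no_ans_score, no_ans_total):
    """Question-count-weighted average of the two subset scores."""
    total = has_ans_total + no_ans_total
    return (has_ans_score * has_ans_total + no_ans_score * no_ans_total) / total


# Values copied from the evaluation output above.
exact = overall_score(67.93020719738277, 45850, 93.34104145255225, 23352)
f1 = overall_score(82.37912207996466, 45850, 93.34104145255225, 23352)

print(round(exact, 4), round(f1, 4))
```

This also makes the gap visible: unanswerable questions (about a third of the dev set) score much higher than answerable ones, which lifts the overall figures.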

### Model in action (in a Colab Notebook)

<details>

1. Set the context and ask some questions:

2. Run predictions:

</details>
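The two steps above can be sketched with the `transformers` question-answering pipeline. This is a minimal sketch, not the contents of the original notebook: the helper name `answer_question` is hypothetical, and the model weights are downloaded on first use.

```python
def answer_question(question, context,
                    model_name="mrm8488/bert-base-spanish-wwm-cased-finetuned-spa-squad2-es"):
    """Return the pipeline's answer dict ('answer', 'score', 'start', 'end')."""
    # Imported lazily so that defining this helper does not require transformers.
    from transformers import pipeline

    qa = pipeline("question-answering", model=model_name, tokenizer=model_name)
    return qa(question=question, context=context)
```

A call such as `answer_question("¿Dónde vive Manuel?", "Manuel vive en España y trabaja en NLP.")` (an invented context, used only as an example) returns a dictionary whose `answer` field holds the extracted span.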

> Created by [Manuel Romero/@mrm8488](https://twitter.com/mrm8488)

> Made with <span style="color: #e25555;">♥</span> in Spain

|

Salesforce/codet5-base-multi-sum

|

4c34d0047a64ff95973d49d2cc0e61ae37fc2cd0

|

2021-11-23T09:54:43.000Z

|

[

"pytorch",

"t5",

"text2text-generation",

"dataset:code_search_net",

"arxiv:2109.00859",

"arxiv:1909.09436",

"arxiv:1907.11692",

"arxiv:2002.08155",

"transformers",

"codet5",

"license:bsd-3",

"autotrain_compatible"

] |

text2text-generation

| false |

Salesforce

| null |

Salesforce/codet5-base-multi-sum

| 3,753 | 6 |

transformers

| 1,009 |

---

license: BSD-3

tags:

- codet5

datasets:

- code_search_net

inference: true

---

# CodeT5-base for Code Summarization

[CodeT5-base](https://huggingface.co/Salesforce/codet5-base) model fine-tuned on CodeSearchNet data in a multi-lingual training setting (Ruby/JavaScript/Go/Python/Java/PHP) for code summarization. It was introduced in the EMNLP 2021 paper [CodeT5: Identifier-aware Unified Pre-trained Encoder-Decoder Models for Code Understanding and Generation](https://arxiv.org/abs/2109.00859) by Yue Wang, Weishi Wang, Shafiq Joty, and Steven C.H. Hoi. Please check out more at [this repository](https://github.com/salesforce/CodeT5).

## How to use

Here is how to use this model:

```python
from transformers import RobertaTokenizer, T5ForConditionalGeneration

if __name__ == '__main__':
    tokenizer = RobertaTokenizer.from_pretrained('Salesforce/codet5-base-multi-sum')
    model = T5ForConditionalGeneration.from_pretrained('Salesforce/codet5-base-multi-sum')

    # The code snippet to summarize, passed to the model as plain text.
    text = """def svg_to_image(string, size=None):
    if isinstance(string, unicode):
        string = string.encode('utf-8')
    renderer = QtSvg.QSvgRenderer(QtCore.QByteArray(string))
    if not renderer.isValid():
        raise ValueError('Invalid SVG data.')
    if size is None:
        size = renderer.defaultSize()
    image = QtGui.QImage(size, QtGui.QImage.Format_ARGB32)
    painter = QtGui.QPainter(image)
    renderer.render(painter)
    return image"""

    input_ids = tokenizer(text, return_tensors="pt").input_ids
    generated_ids = model.generate(input_ids, max_length=20)
    print(tokenizer.decode(generated_ids[0], skip_special_tokens=True))
    # reported output: "Convert a SVG string to a QImage."
```

## Fine-tuning data

We employ the filtered version of CodeSearchNet data [[Husain et al., 2019](https://arxiv.org/abs/1909.09436)] from the [CodeXGLUE](https://github.com/microsoft/CodeXGLUE/tree/main/Code-Text/code-to-text) benchmark for fine-tuning on code summarization. The data is tokenized with our pre-trained code-specific BPE (Byte-Pair Encoding) tokenizer. One can prepare text (or code) for the model using RobertaTokenizer with the vocab files from [codet5-base](https://huggingface.co/Salesforce/codet5-base).

### Data statistics

| Programming Language | Training | Dev | Test |

| :------------------- | :------: | :----: | :----: |

| Python | 251,820 | 13,914 | 14,918 |

| PHP | 241,241 | 12,982 | 14,014 |

| Go | 167,288 | 7,325 | 8,122 |

| Java | 164,923 | 5,183 | 10,955 |

| JavaScript | 58,025 | 3,885 | 3,291 |

| Ruby | 24,927 | 1,400 | 1,261 |

## Training procedure

We fine-tune codet5-base on these six programming languages (Ruby/JavaScript/Go/Python/Java/PHP) in a multi-task learning setting. We employ balanced sampling to avoid biasing the model towards high-resource tasks. Please refer to the [paper](https://arxiv.org/abs/2109.00859) for more details.
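This card does not spell out the sampling scheme. One common way to balance tasks of very different sizes is to raise each task's share of the data to a power `alpha < 1` and renormalize. The sketch below applies that idea to the training-set sizes listed above; the exponent `alpha=0.7` and the helper name are assumptions made for illustration, so consult the paper for the actual setting.

```python
# Training-set sizes from the data statistics table above.
TRAIN_SIZES = {
    "Python": 251_820, "PHP": 241_241, "Go": 167_288,
    "Java": 164_923, "JavaScript": 58_025, "Ruby": 24_927,
}


def sampling_probabilities(sizes, alpha=0.7):
    """Smooth the raw data proportions with exponent alpha (assumed value)."""
    total = sum(sizes.values())
    weights = {lang: (n / total) ** alpha for lang, n in sizes.items()}
    norm = sum(weights.values())
    return {lang: w / norm for lang, w in weights.items()}


probs = sampling_probabilities(TRAIN_SIZES)
for lang, p in sorted(probs.items(), key=lambda kv: -kv[1]):
    raw = TRAIN_SIZES[lang] / sum(TRAIN_SIZES.values())
    print(f"{lang:<11} raw={raw:.3f} smoothed={p:.3f}")
```

With `alpha < 1`, low-resource languages such as Ruby are sampled more often than their raw share of the data, and high-resource languages such as Python less often.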

## Evaluation results

Unlike the paper, which selects a separate best checkpoint for each programming language (PL), here we use a single checkpoint for all PLs. We also remove the task control prefix that specifies the PL during training and inference. The results on the test set are shown below:

| Model | Ruby | JavaScript | Go | Python | Java | PHP | Overall |
| ----------- | :-------: | :--------: | :-------: | :-------: | :-------: | :-------: | :-------: |
| Seq2Seq | 9.64 | 10.21 | 13.98 | 15.93 | 15.09 | 21.08 | 14.32 |
| Transformer | 11.18 | 11.59 | 16.38 | 15.81 | 16.26 | 22.12 | 15.56 |
| [RoBERTa](https://arxiv.org/pdf/1907.11692.pdf) | 11.17 | 11.90 | 17.72 | 18.14 | 16.47 | 24.02 | 16.57 |
| [CodeBERT](https://arxiv.org/pdf/2002.08155.pdf) | 12.16 | 14.90 | 18.07 | 19.06 | 17.65 | 25.16 | 17.83 |
| [PLBART](https://aclanthology.org/2021.naacl-main.211.pdf) | 14.11 | 15.56 | 18.91 | 19.30 | 18.45 | 23.58 | 18.32 |
| [CodeT5-small](https://arxiv.org/abs/2109.00859) | 14.87 | 15.32 | 19.25 | 20.04 | 19.92 | 25.46 | 19.14 |
| [CodeT5-base](https://arxiv.org/abs/2109.00859) | **15.24** | 16.16 | 19.56 | 20.01 | **20.31** | 26.03 | 19.55 |
| [CodeT5-base-multi-sum](https://arxiv.org/abs/2109.00859) | **15.24** | **16.18** | **19.95** | **20.42** | 20.26 | **26.10** | **19.69** |

## Citation

```bibtex

@inproceedings{

wang2021codet5,

title={CodeT5: Identifier-aware Unified Pre-trained Encoder-Decoder Models for Code Understanding and Generation},

author={Wang, Yue and Wang, Weishi and Joty, Shafiq and Hoi, Steven C.H.},

booktitle={Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, EMNLP 2021},

year={2021},

}

```

|

UBC-NLP/MARBERT

|

ef5bf8d54e104731fc045d5c76e72af8a23988cf

|

2022-01-19T20:37:55.000Z

|

[

"pytorch",

"tf",

"jax",

"bert",

"fill-mask",

"ar",

"transformers",

"Arabic BERT",

"MSA",

"Twitter",

"Masked Langauge Model",

"autotrain_compatible"

] |

fill-mask

| false |

UBC-NLP

| null |

UBC-NLP/MARBERT

| 3,747 | 6 |

transformers

| 1,010 |

---

language:

- ar

tags:

- Arabic BERT

- MSA

- Twitter

- Masked Langauge Model

widget:

- text: "اللغة العربية هي لغة [MASK]."

---

<img src="https://raw.githubusercontent.com/UBC-NLP/marbert/main/ARBERT_MARBERT.jpg" alt="drawing" width="200" height="200" align="right"/>

**MARBERT** is one of three models described in our **ACL 2021 paper** **["ARBERT & MARBERT: Deep Bidirectional Transformers for Arabic"](https://aclanthology.org/2021.acl-long.551.pdf)**. MARBERT is a large-scale pre-trained masked language model focused on both Dialectal Arabic (DA) and MSA. Arabic has multiple varieties. To train MARBERT, we randomly sample 1B Arabic tweets from a large in-house dataset of about 6B tweets. We only include tweets with at least 3 Arabic words, based on character string matching, regardless of whether the tweet contains non-Arabic strings. That is, we do not remove non-Arabic content so long as the tweet meets the 3-Arabic-word criterion. The dataset makes up **128GB of text** (**15.6B tokens**). We use the same network architecture as ARBERT (BERT-base), but without the next sentence prediction (NSP) objective since tweets are short. See our [repo](https://github.com/UBC-NLP/LMBERT) for modifying BERT code to remove NSP. For more information about MARBERT, please visit our GitHub [repo](https://github.com/UBC-NLP/marbert).
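The 3-Arabic-word criterion can be illustrated with a short character-matching filter. This is a sketch of the idea only, not the authors' preprocessing code: it assumes that an "Arabic word" is a run of characters from the basic Arabic Unicode block (U+0600 to U+06FF), and the function name is hypothetical.

```python
import re

# Assumption: "Arabic" means the basic Arabic Unicode block (U+0600-U+06FF).
ARABIC_WORD = re.compile(r"[\u0600-\u06FF]+")


def has_min_arabic_words(tweet, min_words=3):
    """True if the tweet contains at least `min_words` runs of Arabic characters."""
    return len(ARABIC_WORD.findall(tweet)) >= min_words


tweets = [
    "اللغة العربية هي لغة جميلة #arabic http://example.com",  # 5 Arabic words
    "good morning صباح الخير",  # only 2 Arabic words
]

# Non-Arabic content is left in place; only the Arabic word count decides.
kept = [t for t in tweets if has_min_arabic_words(t)]
```

The first tweet is kept with its hashtag and URL intact, while the second is dropped because it has only two Arabic words.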

# BibTex

If you use our models (ARBERT, MARBERT, or MARBERTv2) for your scientific publication, or if you find the resources in this repository useful, please cite our paper as follows (to be updated):

```bibtex

@inproceedings{abdul-mageed-etal-2021-arbert,

title = "{ARBERT} {\&} {MARBERT}: Deep Bidirectional Transformers for {A}rabic",

author = "Abdul-Mageed, Muhammad and

Elmadany, AbdelRahim and

Nagoudi, El Moatez Billah",

booktitle = "Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers)",

month = aug,

year = "2021",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2021.acl-long.551",

doi = "10.18653/v1/2021.acl-long.551",

pages = "7088--7105",

abstract = "Pre-trained language models (LMs) are currently integral to many natural language processing systems. Although multilingual LMs were also introduced to serve many languages, these have limitations such as being costly at inference time and the size and diversity of non-English data involved in their pre-training. We remedy these issues for a collection of diverse Arabic varieties by introducing two powerful deep bidirectional transformer-based models, ARBERT and MARBERT. To evaluate our models, we also introduce ARLUE, a new benchmark for multi-dialectal Arabic language understanding evaluation. ARLUE is built using 42 datasets targeting six different task clusters, allowing us to offer a series of standardized experiments under rich conditions. When fine-tuned on ARLUE, our models collectively achieve new state-of-the-art results across the majority of tasks (37 out of 48 classification tasks, on the 42 datasets). Our best model acquires the highest ARLUE score (77.40) across all six task clusters, outperforming all other models including XLM-R Large ( 3.4x larger size). Our models are publicly available at https://github.com/UBC-NLP/marbert and ARLUE will be released through the same repository.",

}

```

## Acknowledgments

We gratefully acknowledge support from the Natural Sciences and Engineering Research Council of Canada, the Social Sciences and Humanities Research Council of Canada, Canadian Foundation for Innovation, [ComputeCanada](www.computecanada.ca) and [UBC ARC-Sockeye](https://doi.org/10.14288/SOCKEYE). We also thank the [Google TensorFlow Research Cloud (TFRC)](https://www.tensorflow.org/tfrc) program for providing us with free TPU access.

|

unicamp-dl/translation-en-pt-t5

|

8418d7e9b1837687137af06624cb3596b45c9343

|

2021-10-11T03:47:21.000Z

|

[

"pytorch",

"t5",

"text2text-generation",

"en",

"pt",

"dataset:EMEA",

"dataset:ParaCrawl 99k",

"dataset:CAPES",

"dataset:Scielo",

"dataset:JRC-Acquis",

"dataset:Biomedical Domain Corpora",

"transformers",

"translation",

"autotrain_compatible"

] |

translation

| false |

unicamp-dl

| null |

unicamp-dl/translation-en-pt-t5

| 3,743 | 5 |

transformers

| 1,011 |

---

language:

- en

- pt

datasets:

- EMEA

- ParaCrawl 99k

- CAPES

- Scielo

- JRC-Acquis

- Biomedical Domain Corpora

tags:

- translation

metrics:

- bleu

---

# Introduction

This repository provides an implementation of T5 for EN-PT translation using a modest hardware setup. We propose changes to the tokenizer and post-processing that improve the results, and we use a Portuguese pretrained model for the translation. You can find more information in [our repository](https://github.com/unicamp-dl/Lite-T5-Translation). Also, check [our paper](https://aclanthology.org/2020.wmt-1.90.pdf)!

# Usage

Just follow the "Use in Transformers" instructions. A short task prefix must be added before the input text to tell T5 which task to perform.

You can also create a pipeline for it. An example with the phrase "I like to eat rice" is:

```python

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM, pipeline

tokenizer = AutoTokenizer.from_pretrained("unicamp-dl/translation-en-pt-t5")

model = AutoModelForSeq2SeqLM.from_pretrained("unicamp-dl/translation-en-pt-t5")

enpt_pipeline = pipeline('text2text-generation', model=model, tokenizer=tokenizer)

enpt_pipeline("translate English to Portuguese: I like to eat rice.")

```
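Since every input needs the same task prefix, it can be convenient to add it in one place. The helper below is a small sketch of that step; `build_inputs` and `TASK_PREFIX` are names introduced here for illustration, and the prefix string is the one used in the example above.

```python
TASK_PREFIX = "translate English to Portuguese: "  # prefix used in the example above


def build_inputs(sentences, prefix=TASK_PREFIX):
    """Prepend the task prefix to each stripped, non-empty sentence."""
    return [prefix + s.strip() for s in sentences if s.strip()]


inputs = build_inputs(["I like to eat rice.", "  The weather is nice today. ", ""])
```

The resulting list can then be passed to the pipeline in a single call, for example `enpt_pipeline(inputs)`.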

# Citation

```bibtex

@inproceedings{lopes-etal-2020-lite,

title = "Lite Training Strategies for {P}ortuguese-{E}nglish and {E}nglish-{P}ortuguese Translation",

author = "Lopes, Alexandre and

Nogueira, Rodrigo and

Lotufo, Roberto and

Pedrini, Helio",

booktitle = "Proceedings of the Fifth Conference on Machine Translation",

month = nov,

year = "2020",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2020.wmt-1.90",

pages = "833--840",

}

```

|

londogard/flair-swe-ner

|

f7ec252c72488deafa3cec6e27d9d1e18a3376ca

|

2021-03-29T08:06:38.000Z

|

[

"pytorch",

"sv",

"dataset:SUC 3.0",

"flair",

"token-classification",

"sequence-tagger-model"

] |

token-classification

| false |

londogard

| null |

londogard/flair-swe-ner

| 3,742 | null |

flair

| 1,012 |

---

tags:

- flair

- token-classification

- sequence-tagger-model

language: sv

datasets:

- SUC 3.0

widget:

- text: "Hampus bor i Skåne och har levererat denna model idag."

---

Published with ❤️ from [londogard](https://londogard.com).

## Swedish NER in Flair (SUC 3.0)

F1-Score: **85.6** (SUC 3.0)

Predicts 8 tags:

| **Tag** | **Meaning** |
|---|---|
| PRS | person name |
| ORG | organisation name |
| TME | time unit |
| WRK | work name (e.g. a work of art) |
| LOC | location name |
| EVN | event name |
| MSR | measurement unit |
| OBJ | object (e.g. "Rolls-Royce" is an object in the form of a special car) |

Based on [Flair embeddings](https://www.aclweb.org/anthology/C18-1139/) and LSTM-CRF.

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python
from flair.data import Sentence
from flair.models import SequenceTagger

# load tagger
tagger = SequenceTagger.load("londogard/flair-swe-ner")

# make example sentence
sentence = Sentence("Hampus bor i Skåne och har levererat denna model idag.")

# predict NER tags
tagger.predict(sentence)

# print sentence
print(sentence)

# print predicted NER spans
print('The following NER tags are found:')

# iterate over entities and print
for entity in sentence.get_spans('ner'):
    print(entity)
```

This yields the following output:

```

Span [0]: "Hampus" [− Labels: PRS (1.0)]

Span [3]: "Skåne" [− Labels: LOC (1.0)]

Span [9]: "idag" [− Labels: TME(1.0)]

```

So, the entities "_Hampus_" (labeled as a **PRS**), "_Skåne_" (labeled as a **LOC**), "_idag_" (labeled as a **TME**) are found in the sentence "_Hampus bor i Skåne och har levererat denna model idag._".

---

**Please mention londogard when using this model.**

|

flair/ner-english-ontonotes

|

4e50d09d85d60fd36e2c78175d4e405b1e3caa8c

|

2021-03-02T22:07:31.000Z

|

[

"pytorch",

"en",

"dataset:ontonotes",

"flair",

"token-classification",

"sequence-tagger-model"

] |

token-classification

| false |

flair

| null |

flair/ner-english-ontonotes

| 3,728 | 1 |

flair

| 1,013 |

---

tags:

- flair

- token-classification

- sequence-tagger-model

language: en

datasets:

- ontonotes

widget:

- text: "On September 1st George Washington won 1 dollar."

---

## English NER in Flair (Ontonotes default model)

This is the 18-class NER model for English that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **89.27** (Ontonotes)

Predicts 18 tags:

| **tag** | **meaning** |

|---------------------------------|-----------|

| CARDINAL | cardinal value |

| DATE | date value |

| EVENT | event name |

| FAC | building name |

| GPE | geo-political entity |

| LANGUAGE | language name |

| LAW | law name |

| LOC | location name |

| MONEY | money name |

| NORP | affiliation |

| ORDINAL | ordinal value |

| ORG | organization name |

| PERCENT | percent value |

| PERSON | person name |

| PRODUCT | product name |

| QUANTITY | quantity value |

| TIME | time value |

| WORK_OF_ART | name of work of art |

Based on [Flair embeddings](https://www.aclweb.org/anthology/C18-1139/) and LSTM-CRF.

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python
from flair.data import Sentence
from flair.models import SequenceTagger

# load tagger
tagger = SequenceTagger.load("flair/ner-english-ontonotes")

# make example sentence
sentence = Sentence("On September 1st George Washington won 1 dollar.")

# predict NER tags
tagger.predict(sentence)

# print sentence
print(sentence)

# print predicted NER spans
print('The following NER tags are found:')

# iterate over entities and print
for entity in sentence.get_spans('ner'):
    print(entity)
```

This yields the following output:

```

Span [2,3]: "September 1st" [− Labels: DATE (0.8824)]

Span [4,5]: "George Washington" [− Labels: PERSON (0.9604)]

Span [7,8]: "1 dollar" [− Labels: MONEY (0.9837)]

```

So, the entities "*September 1st*" (labeled as a **date**), "*George Washington*" (labeled as a **person**) and "*1 dollar*" (labeled as a **money**) are found in the sentence "*On September 1st George Washington won 1 dollar*".

---

### Training: Script to train this model

The following Flair script was used to train this model:

```python
from flair.data import Corpus
from flair.datasets import ColumnCorpus
from flair.embeddings import WordEmbeddings, StackedEmbeddings, FlairEmbeddings

# 1. load the corpus (Ontonotes does not ship with Flair, you need to download and reformat into a column format yourself)
corpus: Corpus = ColumnCorpus(
    "resources/tasks/onto-ner",
    column_format={0: "text", 1: "pos", 2: "upos", 3: "ner"},
    tag_to_bioes="ner",
)

# 2. what tag do we want to predict?
tag_type = 'ner'

# 3. make the tag dictionary from the corpus
tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize each embedding we use
embedding_types = [
    # FastText word embeddings trained on web crawl data
    WordEmbeddings('en-crawl'),

    # contextual string embeddings, forward
    FlairEmbeddings('news-forward'),

    # contextual string embeddings, backward
    FlairEmbeddings('news-backward'),
]

# embedding stack consists of Flair and FastText word embeddings
embeddings = StackedEmbeddings(embeddings=embedding_types)

# 5. initialize sequence tagger
from flair.models import SequenceTagger

tagger = SequenceTagger(hidden_size=256,
                        embeddings=embeddings,
                        tag_dictionary=tag_dictionary,
                        tag_type=tag_type)

# 6. initialize trainer
from flair.trainers import ModelTrainer

trainer = ModelTrainer(tagger, corpus)

# 7. run training
trainer.train('resources/taggers/ner-english-ontonotes',
              train_with_dev=True,
              max_epochs=150)
```
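The `tag_to_bioes="ner"` argument in step 1 asks for the NER column to be converted to the BIOES tagging scheme, which marks single-token entities (`S-`) and entity ends (`E-`) explicitly. The sketch below illustrates that conversion on the demo sentence; it is not Flair's implementation, it assumes well-formed BIO input, and the function name is hypothetical.

```python
def bio_to_bioes(tags):
    """Convert a well-formed BIO tag sequence to BIOES."""
    converted = []
    for i, tag in enumerate(tags):
        if tag == "O":
            converted.append(tag)
            continue
        prefix, label = tag.split("-", 1)
        next_tag = tags[i + 1] if i + 1 < len(tags) else "O"
        continues = next_tag == f"I-{label}"  # does the same entity go on?
        if prefix == "B":
            converted.append(f"B-{label}" if continues else f"S-{label}")
        else:  # prefix == "I"
            converted.append(f"I-{label}" if continues else f"E-{label}")
    return converted


# "On September 1st George Washington won 1 dollar ."
bio = ["O", "B-DATE", "I-DATE", "B-PERSON", "I-PERSON", "O", "B-MONEY", "I-MONEY", "O"]
bioes = bio_to_bioes(bio)
```

Here each two-token entity becomes a `B-`/`E-` pair, and a one-token entity such as `["B-GPE"]` would become `["S-GPE"]`.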

---

### Cite

Please cite the following paper when using this model.

```

@inproceedings{akbik2018coling,

title={Contextual String Embeddings for Sequence Labeling},

author={Akbik, Alan and Blythe, Duncan and Vollgraf, Roland},

booktitle = {{COLING} 2018, 27th International Conference on Computational Linguistics},

pages = {1638--1649},

year = {2018}

}

```

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

flair/upos-multi

|

236615d6d0770325a1870c2659899e098cf71953

|

2021-03-02T22:16:39.000Z

|

[

"pytorch",

"en",

"de",

"fr",

"it",

"nl",

"pl",

"es",

"sv",

"da",

"no",

"fi",

"cs",

"dataset:ontonotes",

"flair",

"token-classification",

"sequence-tagger-model"

] |

token-classification

| false |

flair

| null |

flair/upos-multi

| 3,707 | 3 |

flair

| 1,014 |

---

tags:

- flair

- token-classification

- sequence-tagger-model

language:

- en

- de

- fr

- it

- nl

- pl

- es

- sv

- da

- no

- fi

- cs

datasets:

- ontonotes

widget:

- text: "Ich liebe Berlin, as they say"

---

## Multilingual Universal Part-of-Speech Tagging in Flair (default model)

This is the default multilingual universal part-of-speech tagging model that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **98.47** (12 UD Treebanks covering English, German, French, Italian, Dutch, Polish, Spanish, Swedish, Danish, Norwegian, Finnish and Czech)

Predicts universal POS tags:

| **tag** | **meaning** |

|---------------------------------|-----------|

|ADJ | adjective |

| ADP | adposition |

| ADV | adverb |

| AUX | auxiliary |

| CCONJ | coordinating conjunction |

| DET | determiner |

| INTJ | interjection |

| NOUN | noun |

| NUM | numeral |

| PART | particle |

| PRON | pronoun |

| PROPN | proper noun |

| PUNCT | punctuation |

| SCONJ | subordinating conjunction |

| SYM | symbol |

| VERB | verb |

| X | other |

Based on [Flair embeddings](https://www.aclweb.org/anthology/C18-1139/) and LSTM-CRF.

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python
from flair.data import Sentence
from flair.models import SequenceTagger

# load tagger
tagger = SequenceTagger.load("flair/upos-multi")

# make example sentence
sentence = Sentence("Ich liebe Berlin, as they say. ")

# predict POS tags
tagger.predict(sentence)

# print sentence
print(sentence)

# print predicted POS tags
print('The following POS tags are found:')

# iterate over tagged tokens and print
for entity in sentence.get_spans('pos'):
    print(entity)
```

This yields the following output:

```

Span [1]: "Ich" [− Labels: PRON (0.9999)]

Span [2]: "liebe" [− Labels: VERB (0.9999)]

Span [3]: "Berlin" [− Labels: PROPN (0.9997)]

Span [4]: "," [− Labels: PUNCT (1.0)]

Span [5]: "as" [− Labels: SCONJ (0.9991)]

Span [6]: "they" [− Labels: PRON (0.9998)]

Span [7]: "say" [− Labels: VERB (0.9998)]

Span [8]: "." [− Labels: PUNCT (1.0)]

```

So, the words "*Ich*" and "*they*" are labeled as **pronouns** (PRON), while "*liebe*" and "*say*" are labeled as **verbs** (VERB) in the multilingual sentence "*Ich liebe Berlin, as they say*".

---

### Training: Script to train this model

The following Flair script was used to train this model:

```python
from flair.data import MultiCorpus
from flair.datasets import UD_ENGLISH, UD_GERMAN, UD_FRENCH, UD_ITALIAN, UD_POLISH, UD_DUTCH, UD_CZECH, \
    UD_DANISH, UD_SPANISH, UD_SWEDISH, UD_NORWEGIAN, UD_FINNISH
from flair.embeddings import StackedEmbeddings, FlairEmbeddings

# 1. make a multi corpus consisting of 12 UD treebanks (in_memory=False here because this corpus becomes large)
corpus = MultiCorpus([
    UD_ENGLISH(in_memory=False),
    UD_GERMAN(in_memory=False),
    UD_DUTCH(in_memory=False),
    UD_FRENCH(in_memory=False),
    UD_ITALIAN(in_memory=False),
    UD_SPANISH(in_memory=False),
    UD_POLISH(in_memory=False),
    UD_CZECH(in_memory=False),
    UD_DANISH(in_memory=False),
    UD_SWEDISH(in_memory=False),
    UD_NORWEGIAN(in_memory=False),
    UD_FINNISH(in_memory=False),
])

# 2. what tag do we want to predict?
tag_type = 'upos'

# 3. make the tag dictionary from the corpus
tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize each embedding we use
embedding_types = [
    # contextual string embeddings, forward
    FlairEmbeddings('multi-forward'),

    # contextual string embeddings, backward
    FlairEmbeddings('multi-backward'),
]

# embedding stack consists of forward and backward Flair embeddings
embeddings = StackedEmbeddings(embeddings=embedding_types)

# 5. initialize sequence tagger
from flair.models import SequenceTagger

tagger = SequenceTagger(hidden_size=256,
                        embeddings=embeddings,
                        tag_dictionary=tag_dictionary,
                        tag_type=tag_type,
                        use_crf=False)

# 6. initialize trainer
from flair.trainers import ModelTrainer

trainer = ModelTrainer(tagger, corpus)

# 7. run training
trainer.train('resources/taggers/upos-multi',
              train_with_dev=True,
              max_epochs=150)
```

---

### Cite

Please cite the following paper when using this model.

```

@inproceedings{akbik2018coling,

title={Contextual String Embeddings for Sequence Labeling},

author={Akbik, Alan and Blythe, Duncan and Vollgraf, Roland},

booktitle = {{COLING} 2018, 27th International Conference on Computational Linguistics},

pages = {1638--1649},

year = {2018}

}

```

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

dumitrescustefan/bert-base-romanian-cased-v1

|

9718c77b8a4f402f3d2a9202e9c918f7fdcdcceb

|

2021-11-02T15:25:55.000Z

|

[

"pytorch",

"jax",

"bert",

"ro",

"transformers"

] | null | false |

dumitrescustefan

| null |

dumitrescustefan/bert-base-romanian-cased-v1

| 3,685 | 4 |

transformers

| 1,015 |

---

language: ro

---

# bert-base-romanian-cased-v1

The BERT **base**, **cased** model for Romanian, trained on a 15GB corpus, version 1.0.

### How to use

```python

from transformers import AutoTokenizer, AutoModel

import torch

# load tokenizer and model

tokenizer = AutoTokenizer.from_pretrained("dumitrescustefan/bert-base-romanian-cased-v1")

model = AutoModel.from_pretrained("dumitrescustefan/bert-base-romanian-cased-v1")

# tokenize a sentence and run through the model

input_ids = torch.tensor(tokenizer.encode("Acesta este un test.", add_special_tokens=True)).unsqueeze(0) # Batch size 1

outputs = model(input_ids)

# get encoding

last_hidden_states = outputs[0] # The last hidden-state is the first element of the output tuple

```

Remember to always sanitize your text! Replace the cedilla variants of ``s`` and ``t`` with their comma-below counterparts:

```

text = text.replace("ţ", "ț").replace("ş", "ș").replace("Ţ", "Ț").replace("Ş", "Ș")

```

This matters because the model was **NOT** trained on the cedilla forms of ``s`` and ``t``. If you skip this step, performance will drop because of `<UNK>` tokens and a higher number of tokens per word.

### Evaluation

Evaluation is performed on Universal Dependencies [Romanian RRT](https://universaldependencies.org/treebanks/ro_rrt/index.html) UPOS, XPOS and LAS, and on a NER task based on [RONEC](https://github.com/dumitrescustefan/ronec). Details, as well as more in-depth tests not shown here, are given in the dedicated [evaluation page](https://github.com/dumitrescustefan/Romanian-Transformers/tree/master/evaluation/README.md).

The baseline is the [Multilingual BERT](https://github.com/google-research/bert/blob/master/multilingual.md) model ``bert-base-multilingual-(un)cased``, as at the time of writing it was the only available BERT model that works on Romanian.

| Model | UPOS | XPOS | NER | LAS |

|--------------------------------|:-----:|:------:|:-----:|:-----:|

| bert-base-multilingual-cased | 97.87 | 96.16 | 84.13 | 88.04 |

| bert-base-romanian-cased-v1 | **98.00** | **96.46** | **85.88** | **89.69** |

### Corpus

The model is trained on the following corpora (stats in the table below are after cleaning):

| Corpus | Lines(M) | Words(M) | Chars(B) | Size(GB) |

|----------- |:--------: |:--------: |:--------: |:--------: |

| OPUS | 55.05 | 635.04 | 4.045 | 3.8 |

| OSCAR | 33.56 | 1725.82 | 11.411 | 11 |

| Wikipedia | 1.54 | 60.47 | 0.411 | 0.4 |

| **Total** | **90.15** | **2421.33** | **15.867** | **15.2** |

#### Acknowledgements

- We'd like to thank [Sampo Pyysalo](https://github.com/spyysalo) from TurkuNLP for helping us out with the compute needed to pretrain the v1.0 BERT models. He's awesome!

|

Norod78/hebrew-bad_wiki-gpt_neo-tiny

|

a71dae1355352449475f8cb3066e85533197603e

|

2022-07-19T18:11:08.000Z

|

[

"pytorch",

"gpt_neo",

"text-generation",

"he",

"arxiv:1910.09700",

"arxiv:2105.09680",

"transformers",

"license:mit"

] |

text-generation

| false |

Norod78

| null |

Norod78/hebrew-bad_wiki-gpt_neo-tiny

| 3,683 | null |

transformers

| 1,016 |

---

language: he

thumbnail: https://avatars1.githubusercontent.com/u/3617152?norod.jpg

widget:

- text: "מתמטיקה:"

- text: "עליית המכונות"

- text: "ויקיפדיה העברית"

- text: "האירוויזיון הוא"

- text: "דוד בן-גוריון היה"

license: mit

---

# hebrew-bad_wiki-gpt_neo-tiny

## Table of Contents

- [Model Details](#model-details)

- [Uses](#uses)

- [Risks, Limitations and Biases](#risks-limitations-and-biases)

- [Training](#training)

- [Evaluation](#evaluation)

- [Environmental Impact](#environmental-impact)

- [How to Get Started With the Model](#how-to-get-started-with-the-model)

## Model Details

**Model Description:**

The model developer notes that the model is

> Hebrew nonsense generation model which produces really bad wiki-abstract text.

- **Developed by:** [Doron Adler](https://github.com/Norod)

- **Model Type:** Text Generation

- **Language(s):** Hebrew

- **License:** MIT

- **Resources for more information:**

- [GitHub Repo](https://github.com/Norod/hebrew-gpt_neo)

- [HuggingFace Space](https://huggingface.co/spaces/Norod78/Hebrew-GPT-Neo-Small)

## Uses

#### Direct Use

This model can be used for text generation.

#### Misuse and Out-of-scope Use

## Risks, Limitations and Biases

**CONTENT WARNING: Readers should be aware this section contains content that is disturbing, offensive, and can propagate historical and current stereotypes.**

Significant research has explored bias and fairness issues with language models (see, e.g., [Sheng et al. (2021)](https://aclanthology.org/2021.acl-long.330.pdf) and [Bender et al. (2021)](https://dl.acm.org/doi/pdf/10.1145/3442188.3445922)).

## Training

#### Training Data

[Hebrew Wikipedia Dump](https://dumps.wikimedia.org/hewiki/latest/) (hewiki abstract) from May 2020

#### Training Procedure

This model was fine-tuned from [hebrew-gpt_neo-tiny](https://huggingface.co/Norod78/hebrew-gpt_neo-tiny), which was previously trained using [EleutherAI's gpt-neo](https://github.com/EleutherAI/gpt-neo).

Fine-tuning on the wiki-abstract text was done using [@minimaxir](https://twitter.com/minimaxir)'s [aitextgen](https://github.com/minimaxir/aitextgen).

## Evaluation

#### Configs

Model configs for hebrew-gpt_neo-tiny are available on the [hebrew-gpt_neo model github](https://github.com/Norod/hebrew-gpt_neo/tree/main/hebrew-gpt_neo-tiny/configs)

* **Activation Function:** gelu

* **Number_Head:** 12

* **Number_Vocab:** 50257

* **Train batch size:** 250

* **Eval batch size:** 64

* **Predict batch size:** 1

## Environmental Impact

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). We present the hardware type based on the [associated paper](https://arxiv.org/pdf/2105.09680.pdf).

- **Hardware Type:** [More information needed]

- **Hours used:** Unknown

- **Cloud Provider:** GCP tpu-v8s

- **Compute Region:** europe-west4

- **Carbon Emitted:** [More information needed]

## How to Get Started With the Model

A Google Colab Notebook is also available [here](https://colab.research.google.com/github/Norod/hebrew-gpt_neo/blob/main/hebrew-gpt_neo-tiny/Norod78_hebrew_gpt_neo_tiny_Colab.ipynb)

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("Norod78/hebrew-bad_wiki-gpt_neo-tiny")

model = AutoModelForCausalLM.from_pretrained("Norod78/hebrew-bad_wiki-gpt_neo-tiny")

```

|

rabindralamsal/BERTsent

|

9514b1314be823ab18e320b361247ffcd94e8d83

|

2022-07-01T03:51:37.000Z

|

[

"pytorch",

"tf",

"roberta",

"text-classification",

"arxiv:2206.10471",

"transformers"

] |

text-classification

| false |

rabindralamsal

| null |

rabindralamsal/BERTsent

| 3,681 | 2 |

transformers

| 1,017 |

# Sentiment Analysis of English Tweets (including COVID-19-specific tweets) with BERTsent

**BERTsent**: A finetuned **BERT** based **sent**iment classifier for English language tweets.

BERTsent is trained on the SemEval 2017 corpus (more than 39k tweets) and is based on [bertweet-base](https://github.com/VinAIResearch/BERTweet), which was trained on 850M English tweets (cased) and an additional 23M COVID-19 English tweets (cased). The base model used the [RoBERTa](https://github.com/pytorch/fairseq/blob/master/examples/roberta/README.md) pre-training procedure.

Output labels:

- 0 represents "negative" sentiment

- 1 represents "neutral" sentiment

- 2 represents "positive" sentiment
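The label list above can be captured as a small lookup table. This is a minimal sketch; the names `ID2LABEL` and `label_name` are hypothetical helpers introduced for illustration and are not part of the model or the transformers library.

```python
# Hypothetical lookup table mirroring the output labels listed above.
ID2LABEL = {0: "negative", 1: "neutral", 2: "positive"}


def label_name(class_index: int) -> str:
    """Return the sentiment name for a predicted class index (0, 1 or 2)."""
    index = int(class_index)
    if index not in ID2LABEL:
        raise ValueError(f"unknown class index: {class_index!r}")
    return ID2LABEL[index]


print(label_name(0))
print(label_name(2))
```

Converting with `int(...)` first means the helper also accepts a NumPy integer such as the value returned by `np.argmax`.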

## COVID-19 tweets specific task

For example, the model distinguishes "covid" (neutral sentiment) from "I have covid" (negative sentiment).

## Cite

If you use BERTsent in your project/research, please cite the following article:

Lamsal, R., Harwood, A., & Read, M. R. (2022). [Twitter conversations predict the daily confirmed COVID-19 cases](https://arxiv.org/abs/2206.10471). arXiv preprint arXiv:2206.10471.

@article{lamsal2022twitter,

title={Twitter conversations predict the daily confirmed COVID-19 cases},

author={Lamsal, Rabindra and Harwood, Aaron and Read, Maria Rodriguez},

journal={arXiv preprint arXiv:2206.10471},

year={2022}

}

## Using the model

Install transformers and emoji, if not already installed:

terminal:

pip install transformers

pip install emoji  # for converting emoticons or emojis into text

notebooks (Colab, Kaggle):

!pip install transformers

!pip install emoji
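Because the base model is BERTweet, tweets are commonly normalized before tokenization. The sketch below assumes the BERTweet convention of replacing user mentions with `@USER` and links with `HTTPURL`; whether BERTsent needs this exact preprocessing is an assumption, the function name `normalize_tweet` is hypothetical, and emoji-to-text conversion (the job of the `emoji` package) is deliberately left out so the example needs only the standard library.

```python
def normalize_tweet(text: str) -> str:
    """Replace user mentions and links with BERTweet-style placeholder tokens.

    Assumption: tokens are separated by whitespace; emoji conversion is not done here.
    """
    normalized = []
    for token in text.split():
        lowered = token.lower()
        if token.startswith("@") and len(token) > 1:
            normalized.append("@USER")      # user mention
        elif lowered.startswith(("http://", "https://", "www.")):
            normalized.append("HTTPURL")    # web link
        else:
            normalized.append(token)
    return " ".join(normalized)


print(normalize_tweet("@who update: see https://example.org now"))
```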

Import BERTsent from the transformers library:

from transformers import AutoTokenizer, TFAutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("rabindralamsal/finetuned-bertweet-sentiment-analysis")

model = TFAutoModelForSequenceClassification.from_pretrained("rabindralamsal/finetuned-bertweet-sentiment-analysis")

Import TensorFlow and numpy:

import tensorflow as tf

import numpy as np

We have installed and imported everything that's needed for the sentiment analysis. Let's predict the sentiment of an example tweet:

example_tweet = "The NEET exams show our Govt in a poor light: unresponsiveness to genuine concerns; admit cards not delivered to aspirants in time; failure to provide centres in towns they reside, thus requiring unnecessary & risky travels. What a disgrace to treat our #Covid warriors like this!"

# This tweet is available on Twitter with the identifier 1435793872588738560

# Named input_ids to avoid shadowing Python's built-in input()
input_ids = tokenizer.encode(example_tweet, return_tensors="tf")

output = model.predict(input_ids)[0]

prediction = tf.nn.softmax(output, axis=1).numpy()

sentiment = np.argmax(prediction)

print(prediction)

print(sentiment)

Output:

[[0.972672164440155 0.023684727028012276 0.003643065458163619]]

0
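To make the last two steps concrete, here is a standard-library sketch of what the softmax and argmax calls compute. The `reported` values are copied from the output above; `example_logits` are hypothetical numbers chosen only to illustrate the softmax step, not the model's actual logits.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a flat list of logits."""
    shift = max(logits)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Probabilities from the output above, in the order negative, neutral, positive.
reported = [0.972672164440155, 0.023684727028012276, 0.003643065458163619]

# argmax: the index of the largest probability is the predicted class.
predicted_index = max(range(len(reported)), key=reported.__getitem__)
print(predicted_index)

# Hypothetical logits, only to show that softmax yields a probability distribution.
example_logits = [2.0, 0.0, -1.0]
example_probs = softmax(example_logits)
print(abs(sum(example_probs) - 1.0) < 1e-9)
```

The predicted index is 0, which corresponds to the "negative" label.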

|

Langboat/mengzi-bert-base

|

a685cb1101fb1ea116e8432b2e14042194e4738b

|

2021-10-14T09:01:34.000Z

|

[

"pytorch",

"bert",

"fill-mask",

"zh",

"arxiv:2110.06696",

"transformers",

"license:apache-2.0",

"autotrain_compatible"

] |

fill-mask

| false |

Langboat

| null |

Langboat/mengzi-bert-base

| 3,670 | 15 |

transformers

| 1,018 |

---

language:

- zh

license: apache-2.0

widget:

- text: "生活的真谛是[MASK]。"

---

# Mengzi-BERT base model (Chinese)

Model pretrained on a 300G Chinese corpus. Masked language modeling (MLM), part-of-speech (POS) tagging and sentence order prediction (SOP) are used as training tasks.

[Mengzi: Towards Lightweight yet Ingenious Pre-trained Models for Chinese](https://arxiv.org/abs/2110.06696)

## Usage

```python

from transformers import BertTokenizer, BertModel

tokenizer = BertTokenizer.from_pretrained("Langboat/mengzi-bert-base")

model = BertModel.from_pretrained("Langboat/mengzi-bert-base")

```

## Scores on nine Chinese tasks (without any data augmentation)

| Model | AFQMC | TNEWS | IFLYTEK | CMNLI | WSC | CSL | CMRC2018 | C3 | CHID |

|-|-|-|-|-|-|-|-|-|-|

|RoBERTa-wwm-ext| 74.30 | 57.51 | 60.80 | 80.70 | 67.20 | 80.67 | 77.59 | 67.06 | 83.78 |

|Mengzi-BERT-base| 74.58 | 57.97 | 60.68 | 82.12 | 87.50 | 85.40 | 78.54 | 71.70 | 84.16 |

RoBERTa-wwm-ext scores are from the CLUE baseline.
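The per-task differences in the table can be checked with a few lines of arithmetic. This sketch only re-uses the numbers from the table above; the variable names are illustrative.

```python
TASKS = ["AFQMC", "TNEWS", "IFLYTEK", "CMNLI", "WSC", "CSL", "CMRC2018", "C3", "CHID"]
ROBERTA_WWM_EXT = [74.30, 57.51, 60.80, 80.70, 67.20, 80.67, 77.59, 67.06, 83.78]
MENGZI_BERT_BASE = [74.58, 57.97, 60.68, 82.12, 87.50, 85.40, 78.54, 71.70, 84.16]

# Per-task gap (Mengzi minus RoBERTa-wwm-ext), rounded to two decimals.
gaps = {
    task: round(mengzi - roberta, 2)
    for task, roberta, mengzi in zip(TASKS, ROBERTA_WWM_EXT, MENGZI_BERT_BASE)
}

# Difference between the unweighted means over the nine tasks.
mean_gap = round(
    sum(MENGZI_BERT_BASE) / len(TASKS) - sum(ROBERTA_WWM_EXT) / len(TASKS), 2
)

# Tasks where the baseline scores higher.
regressions = [task for task, gap in gaps.items() if gap < 0]

print(gaps)
print(mean_gap)
print(regressions)
```

Mengzi-BERT-base is ahead on eight of the nine tasks, with the largest gap on WSC and a small deficit on IFLYTEK.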

## Citation

If you find the technical report or this resource useful, please cite the following technical report in your paper.

```

@misc{zhang2021mengzi,

title={Mengzi: Towards Lightweight yet Ingenious Pre-trained Models for Chinese},

author={Zhuosheng Zhang and Hanqing Zhang and Keming Chen and Yuhang Guo and Jingyun Hua and Yulong Wang and Ming Zhou},

year={2021},

eprint={2110.06696},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

valhalla/t5-base-qg-hl

|

6b9bc6f65b1df793cd1d08674b149263b0b88515

|

2021-06-23T14:40:47.000Z

|

[

"pytorch",

"t5",

"text2text-generation",

"dataset:squad",

"arxiv:1910.10683",

"transformers",

"question-generation",

"license:mit",

"autotrain_compatible"

] |

text2text-generation

| false |

valhalla

| null |

valhalla/t5-base-qg-hl

| 3,665 | 1 |

transformers

| 1,019 |

---

datasets:

- squad

tags:

- question-generation

widget:

- text: "<hl> 42 <hl> is the answer to life, the universe and everything. </s>"

- text: "Python is a programming language. It is developed by <hl> Guido Van Rossum <hl>. </s>"

- text: "Although <hl> practicality <hl> beats purity </s>"

license: mit

---

## T5 for question-generation

This is a [t5-base](https://arxiv.org/abs/1910.10683) model trained for the answer-aware question generation task. The answer spans are highlighted within the text with special highlight tokens.
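The input format can be built programmatically. The following is a minimal sketch that follows the widget examples in the metadata above (answer wrapped in `<hl>` tokens, text terminated by `</s>`); the helper name `highlight_answer` is hypothetical, and it assumes the answer occurs verbatim in the context and highlights only the first occurrence.

```python
def highlight_answer(context: str, answer: str) -> str:
    """Wrap the first occurrence of `answer` in <hl> tokens and append </s>."""
    start = context.find(answer)
    if start == -1:
        raise ValueError("answer span not found in context")
    end = start + len(answer)
    return f"{context[:start]}<hl> {answer} <hl>{context[end:]} </s>"


print(highlight_answer("42 is the answer to life, the universe and everything.", "42"))
```

The resulting string has the same shape as the widget inputs and can be passed to the model's tokenizer as-is.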

You can try the model using the inference API: highlight the answer spans with `<hl>` tokens and end the text with `</s>`. For example