modelId

string | author

string | last_modified

timestamp[us, tz=UTC] | downloads

int64 | likes

int64 | library_name

string | tags

sequence | pipeline_tag

string | createdAt

timestamp[us, tz=UTC] | card

string |

|---|---|---|---|---|---|---|---|---|---|

DokHee/Alpha-Edu-LLM-TEST-V1 | DokHee | 2024-05-17T02:49:10Z | 79 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"4-bit",

"bitsandbytes",

"region:us"

] | text-generation | 2024-05-17T02:37:47Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

KaggleMasterX/mistral_orpo_5k_test | KaggleMasterX | 2024-05-17T02:44:25Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2024-05-17T02:43:16Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

mkellock/merged_16bit | mkellock | 2024-05-17T02:43:47Z | 2 | 0 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"unsloth",

"trl",

"sft",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2024-05-17T02:35:51Z | ---

library_name: transformers

tags:

- unsloth

- trl

- sft

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf | RichardErkhov | 2024-05-17T02:39:53Z | 47 | 0 | null | [

"gguf",

"arxiv:2312.11805",

"arxiv:2009.03300",

"arxiv:1905.07830",

"arxiv:1911.11641",

"arxiv:1904.09728",

"arxiv:1905.10044",

"arxiv:1907.10641",

"arxiv:1811.00937",

"arxiv:1809.02789",

"arxiv:1911.01547",

"arxiv:1705.03551",

"arxiv:2107.03374",

"arxiv:2108.07732",

"arxiv:2110.14168",

"arxiv:2304.06364",

"arxiv:2206.04615",

"arxiv:1804.06876",

"arxiv:2110.08193",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2024-05-17T00:57:12Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

gemma-1.1-7b-it - GGUF

- Model creator: https://huggingface.co/OpenModels4all/

- Original model: https://huggingface.co/OpenModels4all/gemma-1.1-7b-it/

| Name | Quant method | Size |

| ---- | ---- | ---- |

| [gemma-1.1-7b-it.Q2_K.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q2_K.gguf) | Q2_K | 3.24GB |

| [gemma-1.1-7b-it.IQ3_XS.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.IQ3_XS.gguf) | IQ3_XS | 3.54GB |

| [gemma-1.1-7b-it.IQ3_S.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.IQ3_S.gguf) | IQ3_S | 3.71GB |

| [gemma-1.1-7b-it.Q3_K_S.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q3_K_S.gguf) | Q3_K_S | 3.71GB |

| [gemma-1.1-7b-it.IQ3_M.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.IQ3_M.gguf) | IQ3_M | 3.82GB |

| [gemma-1.1-7b-it.Q3_K.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q3_K.gguf) | Q3_K | 4.07GB |

| [gemma-1.1-7b-it.Q3_K_M.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q3_K_M.gguf) | Q3_K_M | 4.07GB |

| [gemma-1.1-7b-it.Q3_K_L.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q3_K_L.gguf) | Q3_K_L | 4.39GB |

| [gemma-1.1-7b-it.IQ4_XS.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.IQ4_XS.gguf) | IQ4_XS | 4.48GB |

| [gemma-1.1-7b-it.Q4_0.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q4_0.gguf) | Q4_0 | 4.67GB |

| [gemma-1.1-7b-it.IQ4_NL.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.IQ4_NL.gguf) | IQ4_NL | 4.69GB |

| [gemma-1.1-7b-it.Q4_K_S.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q4_K_S.gguf) | Q4_K_S | 4.7GB |

| [gemma-1.1-7b-it.Q4_K.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q4_K.gguf) | Q4_K | 4.96GB |

| [gemma-1.1-7b-it.Q4_K_M.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q4_K_M.gguf) | Q4_K_M | 4.96GB |

| [gemma-1.1-7b-it.Q4_1.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q4_1.gguf) | Q4_1 | 5.12GB |

| [gemma-1.1-7b-it.Q5_0.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q5_0.gguf) | Q5_0 | 5.57GB |

| [gemma-1.1-7b-it.Q5_K_S.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q5_K_S.gguf) | Q5_K_S | 5.57GB |

| [gemma-1.1-7b-it.Q5_K.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q5_K.gguf) | Q5_K | 5.72GB |

| [gemma-1.1-7b-it.Q5_K_M.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q5_K_M.gguf) | Q5_K_M | 5.72GB |

| [gemma-1.1-7b-it.Q5_1.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q5_1.gguf) | Q5_1 | 6.02GB |

| [gemma-1.1-7b-it.Q6_K.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q6_K.gguf) | Q6_K | 6.53GB |

| [gemma-1.1-7b-it.Q8_0.gguf](https://huggingface.co/RichardErkhov/OpenModels4all_-_gemma-1.1-7b-it-gguf/blob/main/gemma-1.1-7b-it.Q8_0.gguf) | Q8_0 | 8.45GB |

Original model description:

---

library_name: transformers

widget:

- messages:

- role: user

content: How does the brain work?

inference:

parameters:

max_new_tokens: 200

extra_gated_heading: Access Gemma on Hugging Face

extra_gated_prompt: >-

To access Gemma on Hugging Face, you’re required to review and agree to

Google’s usage license. To do this, please ensure you’re logged-in to Hugging

Face and click below. Requests are processed immediately.

extra_gated_button_content: Acknowledge license

license: gemma

---

# Ungated version of Gemma

**Model Page**: [Gemma](https://ai.google.dev/gemma/docs)

This model card corresponds to the latest 7B instruct version of the Gemma model. Here you can find other models in the Gemma family:

| | Base | Instruct |

|----|----------------------------------------------------|----------------------------------------------------------------------|

| 2B | [gemma-2b](https://huggingface.co/google/gemma-2b) | [gemma-1.1-2b-it](https://huggingface.co/google/gemma-1.1-2b-it) |

| 7B | [gemma-7b](https://huggingface.co/google/gemma-7b) | [**gemma-1.1-7b-it**](https://huggingface.co/google/gemma-1.1-7b-it) |

**Release Notes**

This is Gemma 1.1 7B (IT), an update over the original instruction-tuned Gemma release.

Gemma 1.1 was trained using a novel RLHF method, leading to substantial gains on quality, coding capabilities, factuality, instruction following and multi-turn conversation quality. We also fixed a bug in multi-turn conversations, and made sure that model responses don't always start with `"Sure,"`.

We believe this release represents an improvement for most use cases, but we encourage users to test in their particular applications. The previous model [will continue to be available in the same repo](https://huggingface.co/google/gemma-7b-it). We appreciate the enthusiastic adoption of Gemma, and we continue to welcome all feedback from the community.

**Resources and Technical Documentation**:

* [Responsible Generative AI Toolkit](https://ai.google.dev/responsible)

* [Gemma on Kaggle](https://www.kaggle.com/models/google/gemma)

* [Gemma on Vertex Model Garden](https://console.cloud.google.com/vertex-ai/publishers/google/model-garden/335)

**Terms of Use**: [Terms](https://www.kaggle.com/models/google/gemma/license/consent)

**Authors**: Google

## Model Information

Summary description and brief definition of inputs and outputs.

### Description

Gemma is a family of lightweight, state-of-the-art open models from Google,

built from the same research and technology used to create the Gemini models.

They are text-to-text, decoder-only large language models, available in English,

with open weights, pre-trained variants, and instruction-tuned variants. Gemma

models are well-suited for a variety of text generation tasks, including

question answering, summarization, and reasoning. Their relatively small size

makes it possible to deploy them in environments with limited resources such as

a laptop, desktop or your own cloud infrastructure, democratizing access to

state of the art AI models and helping foster innovation for everyone.

### Usage

Below we share some code snippets on how to get quickly started with running the model. First make sure to `pip install -U transformers`, then copy the snippet from the section that is relevant for your usecase.

#### Running the model on a CPU

As explained below, we recommend `torch.bfloat16` as the default dtype. You can use [a different precision](#precisions) if necessary.

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

tokenizer = AutoTokenizer.from_pretrained("google/gemma-1.1-7b-it")

model = AutoModelForCausalLM.from_pretrained(

"google/gemma-1.1-7b-it",

torch_dtype=torch.bfloat16

)

input_text = "Write me a poem about Machine Learning."

input_ids = tokenizer(input_text, return_tensors="pt")

outputs = model.generate(**input_ids, max_new_tokens=50)

print(tokenizer.decode(outputs[0]))

```

#### Running the model on a single / multi GPU

```python

# pip install accelerate

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

tokenizer = AutoTokenizer.from_pretrained("google/gemma-1.1-7b-it")

model = AutoModelForCausalLM.from_pretrained(

"google/gemma-1.1-7b-it",

device_map="auto",

torch_dtype=torch.bfloat16

)

input_text = "Write me a poem about Machine Learning."

input_ids = tokenizer(input_text, return_tensors="pt").to("cuda")

outputs = model.generate(**input_ids)

print(tokenizer.decode(outputs[0]))

```

<a name="precisions"></a>

#### Running the model on a GPU using different precisions

The native weights of this model were exported in `bfloat16` precision. You can use `float16`, which may be faster on certain hardware, indicating the `torch_dtype` when loading the model. For convenience, the `float16` revision of the repo contains a copy of the weights already converted to that precision.

You can also use `float32` if you skip the dtype, but no precision increase will occur (model weights will just be upcasted to `float32`). See examples below.

* _Using `torch.float16`_

```python

# pip install accelerate

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

tokenizer = AutoTokenizer.from_pretrained("google/gemma-1.1-7b-it")

model = AutoModelForCausalLM.from_pretrained(

"google/gemma-1.1-7b-it",

device_map="auto",

torch_dtype=torch.float16,

revision="float16",

)

input_text = "Write me a poem about Machine Learning."

input_ids = tokenizer(input_text, return_tensors="pt").to("cuda")

outputs = model.generate(**input_ids)

print(tokenizer.decode(outputs[0]))

```

* _Using `torch.bfloat16`_

```python

# pip install accelerate

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

tokenizer = AutoTokenizer.from_pretrained("google/gemma-2b-it")

model = AutoModelForCausalLM.from_pretrained(

"google/gemma-1.1-7b-it",

device_map="auto",

torch_dtype=torch.bfloat16

)

input_text = "Write me a poem about Machine Learning."

input_ids = tokenizer(input_text, return_tensors="pt").to("cuda")

outputs = model.generate(**input_ids)

print(tokenizer.decode(outputs[0]))

```

* _Upcasting to `torch.float32`_

```python

# pip install accelerate

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("google/gemma-1.1-7b-it")

model = AutoModelForCausalLM.from_pretrained(

"google/gemma-1.1-7b-it",

device_map="auto"

)

input_text = "Write me a poem about Machine Learning."

input_ids = tokenizer(input_text, return_tensors="pt").to("cuda")

outputs = model.generate(**input_ids)

print(tokenizer.decode(outputs[0]))

```

#### Quantized Versions through `bitsandbytes`

* _Using 8-bit precision (int8)_

```python

# pip install bitsandbytes accelerate

from transformers import AutoTokenizer, AutoModelForCausalLM, BitsAndBytesConfig

quantization_config = BitsAndBytesConfig(load_in_8bit=True)

tokenizer = AutoTokenizer.from_pretrained("google/gemma-1.1-7b-it")

model = AutoModelForCausalLM.from_pretrained(

"google/gemma-1.1-7b-it",

quantization_config=quantization_config

)

input_text = "Write me a poem about Machine Learning."

input_ids = tokenizer(input_text, return_tensors="pt").to("cuda")

outputs = model.generate(**input_ids)

print(tokenizer.decode(outputs[0]))

```

* _Using 4-bit precision_

```python

# pip install bitsandbytes accelerate

from transformers import AutoTokenizer, AutoModelForCausalLM, BitsAndBytesConfig

quantization_config = BitsAndBytesConfig(load_in_4bit=True)

tokenizer = AutoTokenizer.from_pretrained("google/gemma-1.1-7b-it")

model = AutoModelForCausalLM.from_pretrained(

"google/gemma-1.1-7b-it",

quantization_config=quantization_config

)

input_text = "Write me a poem about Machine Learning."

input_ids = tokenizer(input_text, return_tensors="pt").to("cuda")

outputs = model.generate(**input_ids)

print(tokenizer.decode(outputs[0]))

```

#### Other optimizations

* _Flash Attention 2_

First make sure to install `flash-attn` in your environment `pip install flash-attn`

```diff

model = AutoModelForCausalLM.from_pretrained(

model_id,

torch_dtype=torch.float16,

+ attn_implementation="flash_attention_2"

).to(0)

```

#### Running the model in JAX / Flax

Use the `flax` branch of the repository:

```python

import jax.numpy as jnp

from transformers import AutoTokenizer, FlaxGemmaForCausalLM

model_id = "google/gemma-1.1-7b-it"

tokenizer = AutoTokenizer.from_pretrained(model_id)

tokenizer.padding_side = "left"

model, params = FlaxGemmaForCausalLM.from_pretrained(

model_id,

dtype=jnp.bfloat16,

revision="flax",

_do_init=False,

)

inputs = tokenizer("Valencia and Málaga are", return_tensors="np", padding=True)

output = model.generate(**inputs, params=params, max_new_tokens=20, do_sample=False)

output_text = tokenizer.batch_decode(output.sequences, skip_special_tokens=True)

```

[Check this notebook](https://colab.research.google.com/github/sanchit-gandhi/notebooks/blob/main/jax_gemma.ipynb) for a comprehensive walkthrough on how to parallelize JAX inference.

### Chat Template

The instruction-tuned models use a chat template that must be adhered to for conversational use.

The easiest way to apply it is using the tokenizer's built-in chat template, as shown in the following snippet.

Let's load the model and apply the chat template to a conversation. In this example, we'll start with a single user interaction:

```py

from transformers import AutoTokenizer, AutoModelForCausalLM

import transformers

import torch

model_id = "google/gemma-1.1-7b-it"

dtype = torch.bfloat16

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(

model_id,

device_map="cuda",

torch_dtype=dtype,

)

chat = [

{ "role": "user", "content": "Write a hello world program" },

]

prompt = tokenizer.apply_chat_template(chat, tokenize=False, add_generation_prompt=True)

```

At this point, the prompt contains the following text:

```

<bos><start_of_turn>user

Write a hello world program<end_of_turn>

<start_of_turn>model

```

As you can see, each turn is preceded by a `<start_of_turn>` delimiter and then the role of the entity

(either `user`, for content supplied by the user, or `model` for LLM responses). Turns finish with

the `<end_of_turn>` token.

You can follow this format to build the prompt manually, if you need to do it without the tokenizer's

chat template.

After the prompt is ready, generation can be performed like this:

```py

inputs = tokenizer.encode(prompt, add_special_tokens=False, return_tensors="pt")

outputs = model.generate(input_ids=inputs.to(model.device), max_new_tokens=150)

```

### Fine-tuning

You can find some fine-tuning scripts under the [`examples/` directory](https://huggingface.co/google/gemma-7b/tree/main/examples) of [`google/gemma-7b`](https://huggingface.co/google/gemma-7b) repository. To adapt them to this model, simply change the model-id to `google/gemma-1.1-7b-it`.

We provide:

* A script to perform Supervised Fine-Tuning (SFT) on UltraChat dataset using QLoRA

* A script to perform SFT using FSDP on TPU devices

* A notebook that you can run on a free-tier Google Colab instance to perform SFT on the English quotes dataset

### Inputs and outputs

* **Input:** Text string, such as a question, a prompt, or a document to be

summarized.

* **Output:** Generated English-language text in response to the input, such

as an answer to a question, or a summary of a document.

## Model Data

Data used for model training and how the data was processed.

### Training Dataset

These models were trained on a dataset of text data that includes a wide variety

of sources, totaling 6 trillion tokens. Here are the key components:

* Web Documents: A diverse collection of web text ensures the model is exposed

to a broad range of linguistic styles, topics, and vocabulary. Primarily

English-language content.

* Code: Exposing the model to code helps it to learn the syntax and patterns of

programming languages, which improves its ability to generate code or

understand code-related questions.

* Mathematics: Training on mathematical text helps the model learn logical

reasoning, symbolic representation, and to address mathematical queries.

The combination of these diverse data sources is crucial for training a powerful

language model that can handle a wide variety of different tasks and text

formats.

### Data Preprocessing

Here are the key data cleaning and filtering methods applied to the training

data:

* CSAM Filtering: Rigorous CSAM (Child Sexual Abuse Material) filtering was

applied at multiple stages in the data preparation process to ensure the

exclusion of harmful and illegal content

* Sensitive Data Filtering: As part of making Gemma pre-trained models safe and

reliable, automated techniques were used to filter out certain personal

information and other sensitive data from training sets.

* Additional methods: Filtering based on content quality and safely in line with

[our policies](https://storage.googleapis.com/gweb-uniblog-publish-prod/documents/2023_Google_AI_Principles_Progress_Update.pdf#page=11).

## Implementation Information

Details about the model internals.

### Hardware

Gemma was trained using the latest generation of

[Tensor Processing Unit (TPU)](https://cloud.google.com/tpu/docs/intro-to-tpu) hardware (TPUv5e).

Training large language models requires significant computational power. TPUs,

designed specifically for matrix operations common in machine learning, offer

several advantages in this domain:

* Performance: TPUs are specifically designed to handle the massive computations

involved in training LLMs. They can speed up training considerably compared to

CPUs.

* Memory: TPUs often come with large amounts of high-bandwidth memory, allowing

for the handling of large models and batch sizes during training. This can

lead to better model quality.

* Scalability: TPU Pods (large clusters of TPUs) provide a scalable solution for

handling the growing complexity of large foundation models. You can distribute

training across multiple TPU devices for faster and more efficient processing.

* Cost-effectiveness: In many scenarios, TPUs can provide a more cost-effective

solution for training large models compared to CPU-based infrastructure,

especially when considering the time and resources saved due to faster

training.

* These advantages are aligned with

[Google's commitments to operate sustainably](https://sustainability.google/operating-sustainably/).

### Software

Training was done using [JAX](https://github.com/google/jax) and [ML Pathways](https://blog.google/technology/ai/introducing-pathways-next-generation-ai-architecture/ml-pathways).

JAX allows researchers to take advantage of the latest generation of hardware,

including TPUs, for faster and more efficient training of large models.

ML Pathways is Google's latest effort to build artificially intelligent systems

capable of generalizing across multiple tasks. This is specially suitable for

[foundation models](https://ai.google/discover/foundation-models/), including large language models like

these ones.

Together, JAX and ML Pathways are used as described in the

[paper about the Gemini family of models](https://arxiv.org/abs/2312.11805); "the 'single

controller' programming model of Jax and Pathways allows a single Python

process to orchestrate the entire training run, dramatically simplifying the

development workflow."

## Evaluation

Model evaluation metrics and results.

### Benchmark Results

The pre-trained base models were evaluated against a large collection of different datasets and

metrics to cover different aspects of text generation:

| Benchmark | Metric | Gemma PT 2B | Gemma PT 7B |

| ------------------------------ | ------------- | ----------- | ----------- |

| [MMLU](https://arxiv.org/abs/2009.03300) | 5-shot, top-1 | 42.3 | 64.3 |

| [HellaSwag](https://arxiv.org/abs/1905.07830) | 0-shot | 71.4 | 81.2 |

| [PIQA](https://arxiv.org/abs/1911.11641) | 0-shot | 77.3 | 81.2 |

| [SocialIQA](https://arxiv.org/abs/1904.09728) | 0-shot | 49.7 | 51.8 |

| [BoolQ](https://arxiv.org/abs/1905.10044) | 0-shot | 69.4 | 83.2 |

| [WinoGrande](https://arxiv.org/abs/1907.10641) | partial score | 65.4 | 72.3 |

| [CommonsenseQA](https://arxiv.org/abs/1811.00937) | 7-shot | 65.3 | 71.3 |

| [OpenBookQA](https://arxiv.org/abs/1809.02789) | | 47.8 | 52.8 |

| [ARC-e](https://arxiv.org/abs/1911.01547) | | 73.2 | 81.5 |

| [ARC-c](https://arxiv.org/abs/1911.01547) | | 42.1 | 53.2 |

| [TriviaQA](https://arxiv.org/abs/1705.03551) | 5-shot | 53.2 | 63.4 |

| [Natural Questions](https://github.com/google-research-datasets/natural-questions) | 5-shot | 12.5 | 23.0 |

| [HumanEval](https://arxiv.org/abs/2107.03374) | pass@1 | 22.0 | 32.3 |

| [MBPP](https://arxiv.org/abs/2108.07732) | 3-shot | 29.2 | 44.4 |

| [GSM8K](https://arxiv.org/abs/2110.14168) | maj@1 | 17.7 | 46.4 |

| [MATH](https://arxiv.org/abs/2108.07732) | 4-shot | 11.8 | 24.3 |

| [AGIEval](https://arxiv.org/abs/2304.06364) | | 24.2 | 41.7 |

| [BIG-Bench](https://arxiv.org/abs/2206.04615) | | 35.2 | 55.1 |

| ------------------------------ | ------------- | ----------- | ----------- |

| **Average** | | **44.9** | **56.4** |

## Ethics and Safety

Ethics and safety evaluation approach and results.

### Evaluation Approach

Our evaluation methods include structured evaluations and internal red-teaming

testing of relevant content policies. Red-teaming was conducted by a number of

different teams, each with different goals and human evaluation metrics. These

models were evaluated against a number of different categories relevant to

ethics and safety, including:

* Text-to-Text Content Safety: Human evaluation on prompts covering safety

policies including child sexual abuse and exploitation, harassment, violence

and gore, and hate speech.

* Text-to-Text Representational Harms: Benchmark against relevant academic

datasets such as [WinoBias](https://arxiv.org/abs/1804.06876) and [BBQ Dataset](https://arxiv.org/abs/2110.08193v2).

* Memorization: Automated evaluation of memorization of training data, including

the risk of personally identifiable information exposure.

* Large-scale harm: Tests for "dangerous capabilities," such as chemical,

biological, radiological, and nuclear (CBRN) risks.

### Evaluation Results

The results of ethics and safety evaluations are within acceptable thresholds

for meeting [internal policies](https://storage.googleapis.com/gweb-uniblog-publish-prod/documents/2023_Google_AI_Principles_Progress_Update.pdf#page=11) for categories such as child

safety, content safety, representational harms, memorization, large-scale harms.

On top of robust internal evaluations, the results of well known safety

benchmarks like BBQ, BOLD, Winogender, Winobias, RealToxicity, and TruthfulQA

are shown here.

#### Gemma 1.0

| Benchmark | Metric | Gemma 1.0 IT 2B | Gemma 1.0 IT 7B |

| ------------------------ | ------------- | --------------- | --------------- |

| [RealToxicity][realtox] | average | 6.86 | 7.90 |

| [BOLD][bold] | | 45.57 | 49.08 |

| [CrowS-Pairs][crows] | top-1 | 45.82 | 51.33 |

| [BBQ Ambig][bbq] | 1-shot, top-1 | 62.58 | 92.54 |

| [BBQ Disambig][bbq] | top-1 | 54.62 | 71.99 |

| [Winogender][winogender] | top-1 | 51.25 | 54.17 |

| [TruthfulQA][truthfulqa] | | 44.84 | 31.81 |

| [Winobias 1_2][winobias] | | 56.12 | 59.09 |

| [Winobias 2_2][winobias] | | 91.10 | 92.23 |

| [Toxigen][toxigen] | | 29.77 | 39.59 |

| ------------------------ | ------------- | --------------- | --------------- |

#### Gemma 1.1

| Benchmark | Metric | Gemma 1.1 IT 2B | Gemma 1.1 IT 7B |

| ------------------------ | ------------- | --------------- | --------------- |

| [RealToxicity][realtox] | average | 7.03 | 8.04 |

| [BOLD][bold] | | 47.76 | |

| [CrowS-Pairs][crows] | top-1 | 45.89 | 49.67 |

| [BBQ Ambig][bbq] | 1-shot, top-1 | 58.97 | 86.06 |

| [BBQ Disambig][bbq] | top-1 | 53.90 | 85.08 |

| [Winogender][winogender] | top-1 | 50.14 | 57.64 |

| [TruthfulQA][truthfulqa] | | 44.24 | 45.34 |

| [Winobias 1_2][winobias] | | 55.93 | 59.22 |

| [Winobias 2_2][winobias] | | 89.46 | 89.2 |

| [Toxigen][toxigen] | | 29.64 | 38.75 |

| ------------------------ | ------------- | --------------- | --------------- |

## Usage and Limitations

These models have certain limitations that users should be aware of.

### Intended Usage

Open Large Language Models (LLMs) have a wide range of applications across

various industries and domains. The following list of potential uses is not

comprehensive. The purpose of this list is to provide contextual information

about the possible use-cases that the model creators considered as part of model

training and development.

* Content Creation and Communication

* Text Generation: These models can be used to generate creative text formats

such as poems, scripts, code, marketing copy, and email drafts.

* Chatbots and Conversational AI: Power conversational interfaces for customer

service, virtual assistants, or interactive applications.

* Text Summarization: Generate concise summaries of a text corpus, research

papers, or reports.

* Research and Education

* Natural Language Processing (NLP) Research: These models can serve as a

foundation for researchers to experiment with NLP techniques, develop

algorithms, and contribute to the advancement of the field.

* Language Learning Tools: Support interactive language learning experiences,

aiding in grammar correction or providing writing practice.

* Knowledge Exploration: Assist researchers in exploring large bodies of text

by generating summaries or answering questions about specific topics.

### Limitations

* Training Data

* The quality and diversity of the training data significantly influence the

model's capabilities. Biases or gaps in the training data can lead to

limitations in the model's responses.

* The scope of the training dataset determines the subject areas the model can

handle effectively.

* Context and Task Complexity

* LLMs are better at tasks that can be framed with clear prompts and

instructions. Open-ended or highly complex tasks might be challenging.

* A model's performance can be influenced by the amount of context provided

(longer context generally leads to better outputs, up to a certain point).

* Language Ambiguity and Nuance

* Natural language is inherently complex. LLMs might struggle to grasp subtle

nuances, sarcasm, or figurative language.

* Factual Accuracy

* LLMs generate responses based on information they learned from their

training datasets, but they are not knowledge bases. They may generate

incorrect or outdated factual statements.

* Common Sense

* LLMs rely on statistical patterns in language. They might lack the ability

to apply common sense reasoning in certain situations.

### Ethical Considerations and Risks

The development of large language models (LLMs) raises several ethical concerns.

In creating an open model, we have carefully considered the following:

* Bias and Fairness

* LLMs trained on large-scale, real-world text data can reflect socio-cultural

biases embedded in the training material. These models underwent careful

scrutiny, input data pre-processing described and posterior evaluations

reported in this card.

* Misinformation and Misuse

* LLMs can be misused to generate text that is false, misleading, or harmful.

* Guidelines are provided for responsible use with the model, see the

[Responsible Generative AI Toolkit](http://ai.google.dev/gemma/responsible).

* Transparency and Accountability:

* This model card summarizes details on the models' architecture,

capabilities, limitations, and evaluation processes.

* A responsibly developed open model offers the opportunity to share

innovation by making LLM technology accessible to developers and researchers

across the AI ecosystem.

Risks identified and mitigations:

* Perpetuation of biases: It's encouraged to perform continuous monitoring

(using evaluation metrics, human review) and the exploration of de-biasing

techniques during model training, fine-tuning, and other use cases.

* Generation of harmful content: Mechanisms and guidelines for content safety

are essential. Developers are encouraged to exercise caution and implement

appropriate content safety safeguards based on their specific product policies

and application use cases.

* Misuse for malicious purposes: Technical limitations and developer and

end-user education can help mitigate against malicious applications of LLMs.

Educational resources and reporting mechanisms for users to flag misuse are

provided. Prohibited uses of Gemma models are outlined in the

[Gemma Prohibited Use Policy](https://ai.google.dev/gemma/prohibited_use_policy).

* Privacy violations: Models were trained on data filtered for removal of PII

(Personally Identifiable Information). Developers are encouraged to adhere to

privacy regulations with privacy-preserving techniques.

### Benefits

At the time of release, this family of models provides high-performance open

large language model implementations designed from the ground up for Responsible

AI development compared to similarly sized models.

Using the benchmark evaluation metrics described in this document, these models

have shown to provide superior performance to other, comparably-sized open model

alternatives.

|

Chan2chan1/solar_test240517_4bit | Chan2chan1 | 2024-05-17T02:38:26Z | 79 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"license:cc-by-nc-nd-4.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"4-bit",

"bitsandbytes",

"region:us"

] | text-generation | 2024-05-16T07:02:09Z | ---

license: cc-by-nc-nd-4.0

---

|

XueyingJia/llama3_mnli_openai_3_shots_generated_data_openai | XueyingJia | 2024-05-17T02:20:48Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"text-generation-inference",

"unsloth",

"llama",

"trl",

"en",

"base_model:meta-llama/Meta-Llama-3-8B",

"base_model:finetune:meta-llama/Meta-Llama-3-8B",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | 2024-05-17T02:20:33Z | ---

language:

- en

license: apache-2.0

tags:

- text-generation-inference

- transformers

- unsloth

- llama

- trl

base_model: meta-llama/Meta-Llama-3-8B

---

# Uploaded model

- **Developed by:** XueyingJia

- **License:** apache-2.0

- **Finetuned from model :** meta-llama/Meta-Llama-3-8B

This llama model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Huggingface's TRL library.

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

|

huiwonLee/function_call_12_v1 | huiwonLee | 2024-05-17T02:20:05Z | 9 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"trl",

"sft",

"generated_from_trainer",

"conversational",

"base_model:hiieu/Meta-Llama-3-8B-Instruct-function-calling-json-mode",

"base_model:finetune:hiieu/Meta-Llama-3-8B-Instruct-function-calling-json-mode",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2024-05-17T00:45:34Z | ---

base_model: hiieu/Meta-Llama-3-8B-Instruct-function-calling-json-mode

tags:

- trl

- sft

- generated_from_trainer

model-index:

- name: function_call_12_v1

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# function_call_12_v1

This model is a fine-tuned version of [hiieu/Meta-Llama-3-8B-Instruct-function-calling-json-mode](https://huggingface.co/hiieu/Meta-Llama-3-8B-Instruct-function-calling-json-mode) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 2

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 8 | 0.2566 |

### Framework versions

- Transformers 4.40.2

- Pytorch 2.1.1+cu121

- Datasets 2.19.1

- Tokenizers 0.19.1

|

Dhaniahmad/whisper-small-id | Dhaniahmad | 2024-05-17T02:10:27Z | 94 | 0 | transformers | [

"transformers",

"tensorboard",

"safetensors",

"whisper",

"automatic-speech-recognition",

"generated_from_trainer",

"id",

"dataset:mozilla-foundation/common_voice_15_0",

"base_model:openai/whisper-small",

"base_model:finetune:openai/whisper-small",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] | automatic-speech-recognition | 2024-05-16T02:31:29Z | ---

language:

- id

license: apache-2.0

base_model: openai/whisper-small

tags:

- generated_from_trainer

datasets:

- mozilla-foundation/common_voice_15_0

metrics:

- wer

model-index:

- name: Whisper Small Id - Dhani

results:

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: Common Voice 15.0

type: mozilla-foundation/common_voice_15_0

config: id

split: None

args: 'config: id, split: test'

metrics:

- name: Wer

type: wer

value: 40.903586399627386

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Whisper Small Id - Dhani

This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 15.0 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6569

- Wer: 40.9036

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 8e-06

- train_batch_size: 64

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- training_steps: 4000

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-------:|:----:|:---------------:|:-------:|

| 0.2799 | 7.6923 | 1000 | 0.5497 | 38.1509 |

| 0.0732 | 15.3846 | 2000 | 0.5844 | 38.1602 |

| 0.0257 | 23.0769 | 3000 | 0.6366 | 39.9348 |

| 0.0164 | 30.7692 | 4000 | 0.6569 | 40.9036 |

### Framework versions

- Transformers 4.40.2

- Pytorch 2.2.1+cu121

- Datasets 2.19.1

- Tokenizers 0.19.1

|

littleworth/protgpt2-distilled-small | littleworth | 2024-05-17T02:07:33Z | 170 | 0 | transformers | [

"transformers",

"safetensors",

"gpt2",

"text-generation",

"chemistry",

"biology",

"dataset:nferruz/UR50_2021_04",

"arxiv:1503.02531",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2024-05-07T06:54:07Z | ---

license: apache-2.0

datasets:

- nferruz/UR50_2021_04

tags:

- chemistry

- biology

---

### Model Description

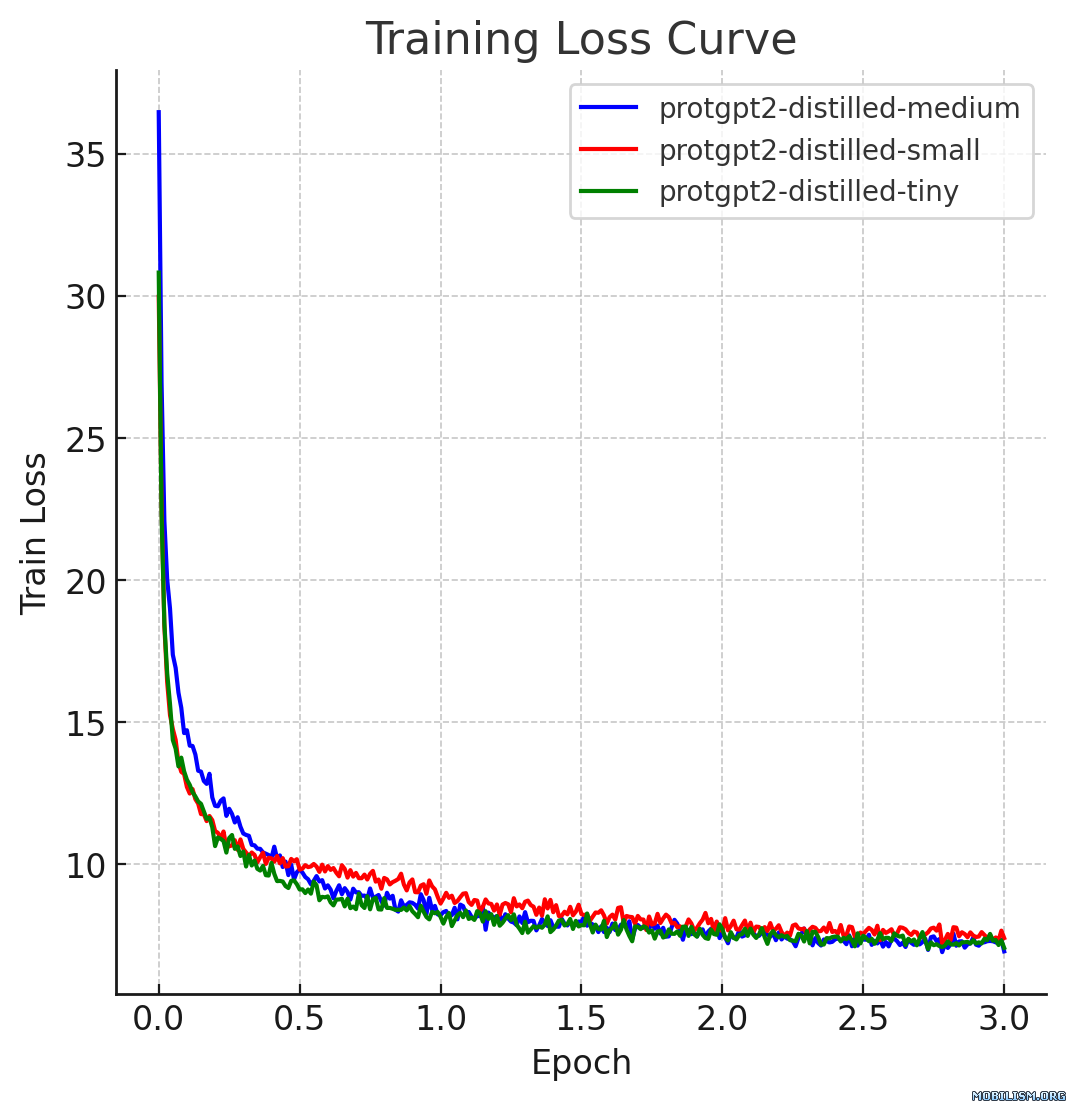

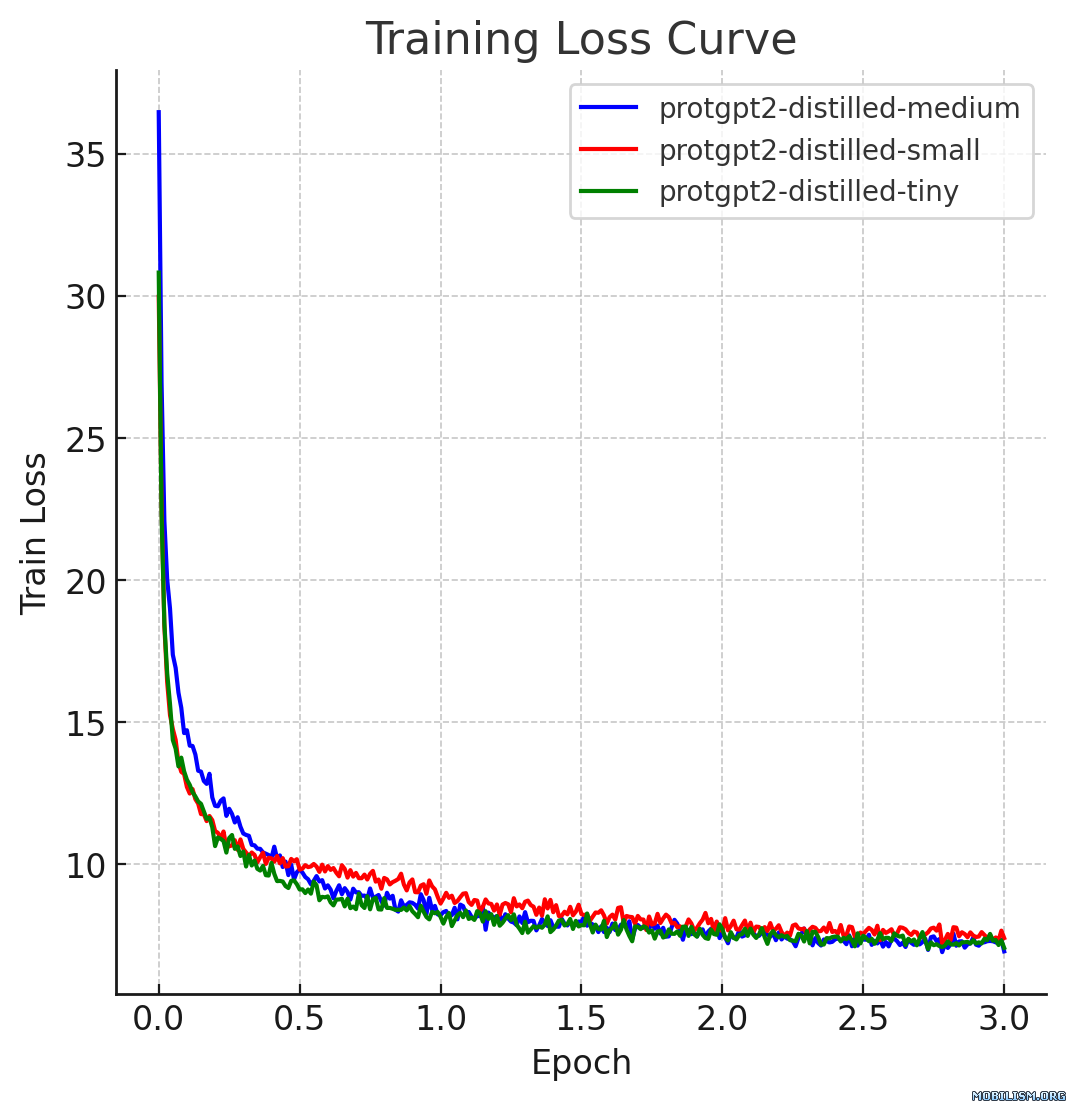

This model card describes the distilled version of [ProtGPT2](https://huggingface.co/nferruz/ProtGPT2), referred to as `protgpt2-distilled-small`. The distillation process for this model follows the methodology of knowledge distillation from a larger teacher model to a smaller, more efficient student model. The process combines both "Soft Loss" (Knowledge Distillation Loss) and "Hard Loss" (Cross-Entropy Loss) to ensure the student model not only generalizes like its teacher but also retains practical prediction capabilities.

### Technical Details

**Distillation Parameters:**

- **Temperature (T):** 10

- **Alpha (α):** 0.1

- **Model Architecture:**

- **Number of Layers:** 6

- **Number of Attention Heads:** 8

- **Embedding Size:** 768

**Dataset Used:**

- The model was distilled using a subset of the evaluation dataset provided by [nferruz/UR50_2021_04](https://huggingface.co/datasets/nferruz/UR50_2021_04).

<strong>Loss Formulation:</strong>

<ul>

<li><strong>Soft Loss:</strong> <span>ℒ<sub>soft</sub> = KL(softmax(s/T), softmax(t/T))</span>, where <em>s</em> are the logits from the student model, <em>t</em> are the logits from the teacher model, and <em>T</em> is the temperature used to soften the probabilities.</li>

<li><strong>Hard Loss:</strong> <span>ℒ<sub>hard</sub> = -∑<sub>i</sub> y<sub>i</sub> log(softmax(s<sub>i</sub>))</span>, where <em>y<sub>i</sub></em> represents the true labels, and <em>s<sub>i</sub></em> are the logits from the student model corresponding to each label.</li>

<li><strong>Combined Loss:</strong> <span>ℒ = α ℒ<sub>hard</sub> + (1 - α) ℒ<sub>soft</sub></span>, where <em>α</em> (alpha) is the weight factor that balances the hard loss and soft loss.</li>

</ul>

<p><strong>Note:</strong> KL represents the Kullback-Leibler divergence, a measure used to quantify how one probability distribution diverges from a second, expected probability distribution.</p>

### Performance

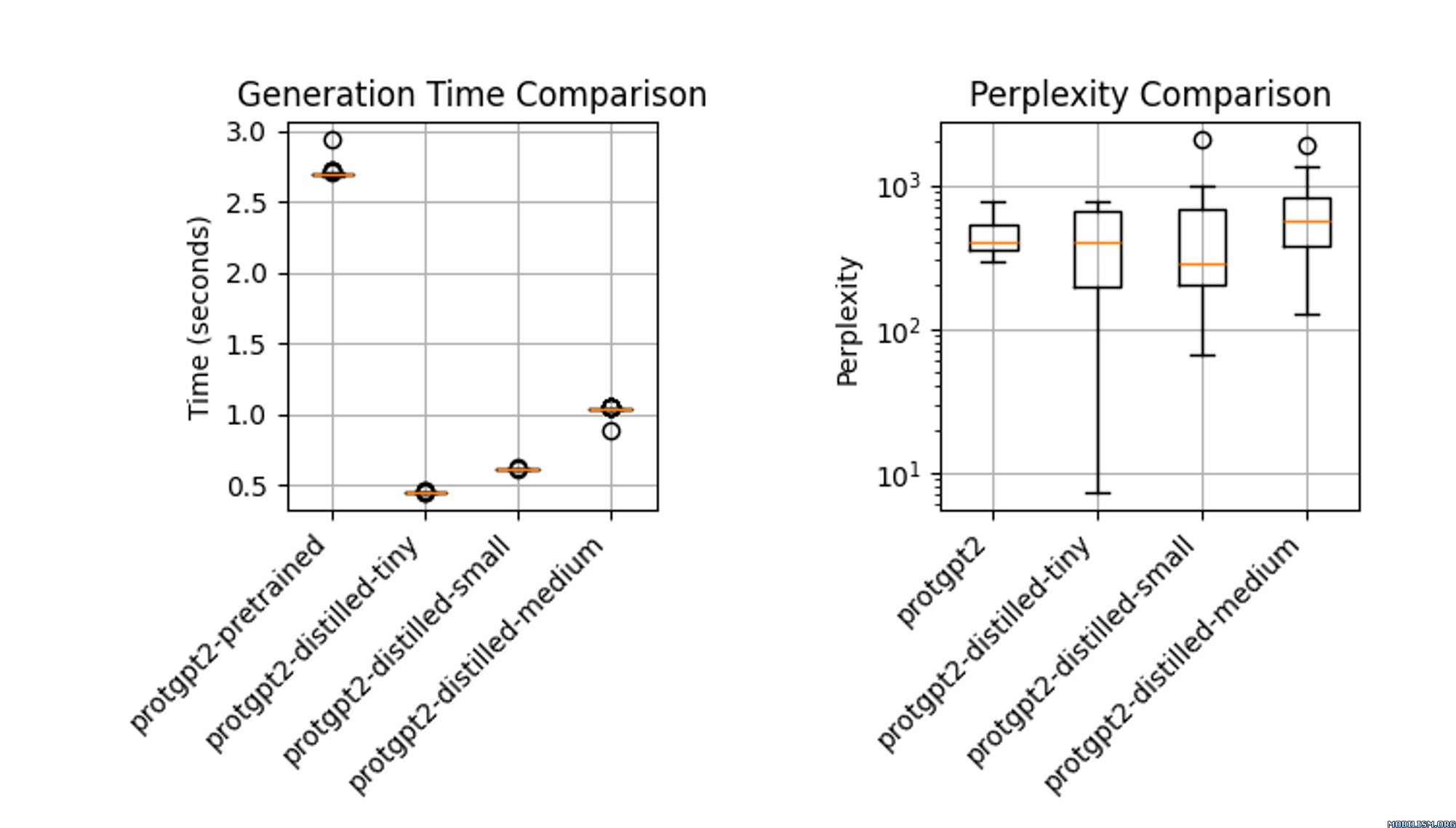

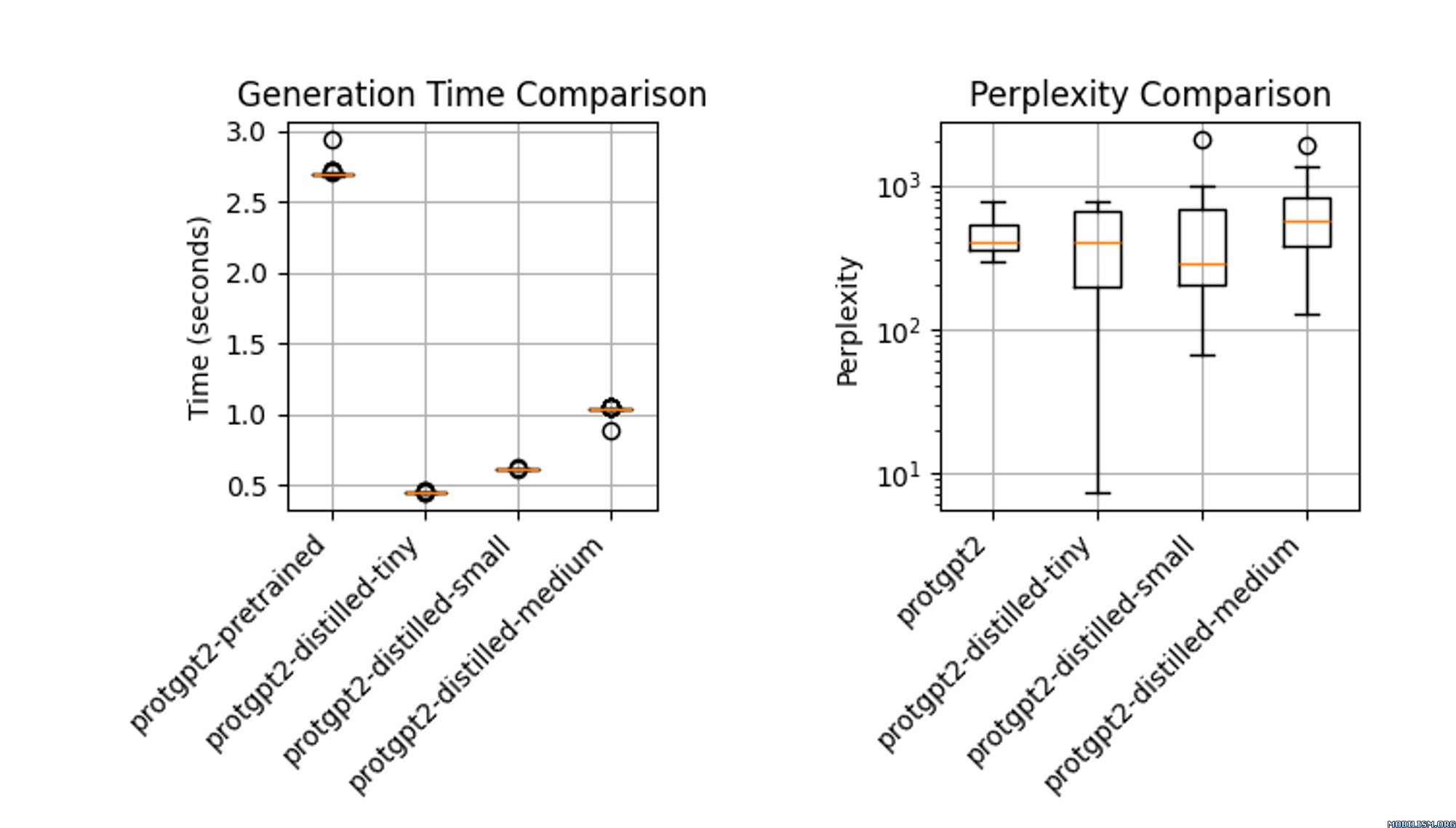

The distilled model, `protgpt2-distilled-tiny`, demonstrates a substantial increase in inference speed—up to 6 times faster than the pretrained version. This assessment is based on evaluations using \(n=100\) tests, showing that while the speed is significantly enhanced, the model still maintains perplexities comparable to the original.

### Usage

```

from transformers import GPT2Tokenizer, GPT2LMHeadModel, TextGenerationPipeline

import re

# Load the model and tokenizer

model_name = "littleworth/protgpt2-distilled-small"

tokenizer = GPT2Tokenizer.from_pretrained(model_name)

model = GPT2LMHeadModel.from_pretrained(model_name)

# Initialize the pipeline

text_generator = TextGenerationPipeline(

model=model, tokenizer=tokenizer, device=0

) # specify device if needed

# Generate sequences

generated_sequences = text_generator(

"<|endoftext|>",

max_length=100,

do_sample=True,

top_k=950,

repetition_penalty=1.2,

num_return_sequences=10,

pad_token_id=tokenizer.eos_token_id, # Set pad_token_id to eos_token_id

eos_token_id=0,

truncation=True,

)

def clean_sequence(text):

# Remove the "<|endoftext|>" token

text = text.replace("<|endoftext|>", "")

# Remove newline characters and non-alphabetical characters

text = "".join(char for char in text if char.isalpha())

return text

# Print the generated sequences

for i, seq in enumerate(generated_sequences):

cleaned_text = clean_sequence(seq["generated_text"])

print(f">Seq_{i}")

print(cleaned_text)

```

### Use Cases

1. **High-Throughput Screening in Drug Discovery:** The distilled ProtGPT2 facilitates rapid mutation screening in drug discovery by predicting protein variant stability efficiently. Its reduced size allows for swift fine-tuning on new datasets, enhancing the pace of target identification.

2. **Portable Diagnostics in Healthcare:** Suitable for handheld devices, this model enables real-time protein analysis in remote clinical settings, providing immediate diagnostic results.

3. **Interactive Learning Tools in Academia:** Integrated into educational software, the distilled model helps biology students simulate and understand protein dynamics without advanced computational resources.

### References

- Hinton, G., Vinyals, O., & Dean, J. (2015). Distilling the Knowledge in a Neural Network. arXiv:1503.02531.

- Original ProtGPT2 Paper: [Link to paper](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC9329459/) |

littleworth/protgpt2-distilled-tiny | littleworth | 2024-05-17T02:01:58Z | 172 | 2 | transformers | [

"transformers",

"safetensors",

"gpt2",

"text-generation",

"chemistry",

"biology",

"dataset:nferruz/UR50_2021_04",

"arxiv:1503.02531",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2024-05-07T05:11:33Z | ---

license: apache-2.0

datasets:

- nferruz/UR50_2021_04

tags:

- chemistry

- biology

---

### Model Description

This model card describes the distilled version of [ProtGPT2](https://huggingface.co/nferruz/ProtGPT2), referred to as `protgpt2-distilled-tiny`. The distillation process for this model follows the methodology of knowledge distillation from a larger teacher model to a smaller, more efficient student model. The process combines both "Soft Loss" (Knowledge Distillation Loss) and "Hard Loss" (Cross-Entropy Loss) to ensure the student model not only generalizes like its teacher but also retains practical prediction capabilities.

### Technical Details

**Distillation Parameters:**

- **Temperature (T):** 10

- **Alpha (α):** 0.1

- **Model Architecture:**

- **Number of Layers:** 4

- **Number of Attention Heads:** 4

- **Embedding Size:** 512

**Dataset Used:**

- The model was distilled using a subset of the evaluation dataset provided by [nferruz/UR50_2021_04](https://huggingface.co/datasets/nferruz/UR50_2021_04).

<strong>Loss Formulation:</strong>

<ul>

<li><strong>Soft Loss:</strong> <span>ℒ<sub>soft</sub> = KL(softmax(s/T), softmax(t/T))</span>, where <em>s</em> are the logits from the student model, <em>t</em> are the logits from the teacher model, and <em>T</em> is the temperature used to soften the probabilities.</li>

<li><strong>Hard Loss:</strong> <span>ℒ<sub>hard</sub> = -∑<sub>i</sub> y<sub>i</sub> log(softmax(s<sub>i</sub>))</span>, where <em>y<sub>i</sub></em> represents the true labels, and <em>s<sub>i</sub></em> are the logits from the student model corresponding to each label.</li>

<li><strong>Combined Loss:</strong> <span>ℒ = α ℒ<sub>hard</sub> + (1 - α) ℒ<sub>soft</sub></span>, where <em>α</em> (alpha) is the weight factor that balances the hard loss and soft loss.</li>

</ul>

<p><strong>Note:</strong> KL represents the Kullback-Leibler divergence, a measure used to quantify how one probability distribution diverges from a second, expected probability distribution.</p>

### Performance

The distilled model, `protgpt2-distilled-tiny`, demonstrates a substantial increase in inference speed—up to 6 times faster than the pretrained version. This assessment is based on evaluations using \(n=100\) tests, showing that while the speed is significantly enhanced, the model still maintains perplexities comparable to the original.

### Usage

```

from transformers import GPT2Tokenizer, GPT2LMHeadModel, TextGenerationPipeline

import re

# Load the model and tokenizer

model_name = "littleworth/protgpt2-distilled-tiny"

tokenizer = GPT2Tokenizer.from_pretrained(model_name)

model = GPT2LMHeadModel.from_pretrained(model_name)

# Initialize the pipeline

text_generator = TextGenerationPipeline(

model=model, tokenizer=tokenizer, device=0

) # specify device if needed

# Generate sequences

generated_sequences = text_generator(

"<|endoftext|>",

max_length=100,

do_sample=True,

top_k=950,

repetition_penalty=1.2,

num_return_sequences=10,

pad_token_id=tokenizer.eos_token_id, # Set pad_token_id to eos_token_id

eos_token_id=0,

truncation=True,

)

def clean_sequence(text):

# Remove the "<|endoftext|>" token

text = text.replace("<|endoftext|>", "")

# Remove newline characters and non-alphabetical characters

text = "".join(char for char in text if char.isalpha())

return text

# Print the generated sequences

for i, seq in enumerate(generated_sequences):

cleaned_text = clean_sequence(seq["generated_text"])

print(f">Seq_{i}")

print(cleaned_text)

```

### Use Cases

1. **High-Throughput Screening in Drug Discovery:** The distilled ProtGPT2 facilitates rapid mutation screening in drug discovery by predicting protein variant stability efficiently. Its reduced size allows for swift fine-tuning on new datasets, enhancing the pace of target identification.

2. **Portable Diagnostics in Healthcare:** Suitable for handheld devices, this model enables real-time protein analysis in remote clinical settings, providing immediate diagnostic results.

3. **Interactive Learning Tools in Academia:** Integrated into educational software, the distilled model helps biology students simulate and understand protein dynamics without advanced computational resources.

### References

- Hinton, G., Vinyals, O., & Dean, J. (2015). Distilling the Knowledge in a Neural Network. arXiv:1503.02531.

- Original ProtGPT2 Paper: [Link to paper](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC9329459/) |

emilykang/medQuad_finetuned_model | emilykang | 2024-05-17T02:01:07Z | 152 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2024-05-17T01:56:54Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

ghetrs/jhtgrhestjrdkgl | ghetrs | 2024-05-17T01:57:51Z | 0 | 0 | null | [

"license:creativeml-openrail-m",

"region:us"

] | null | 2024-05-17T01:57:51Z | ---

license: creativeml-openrail-m

---

|

FallenMerick/Space-Whale-Lite-13B-GGUF | FallenMerick | 2024-05-17T01:36:52Z | 7 | 0 | null | [

"gguf",

"quantized",

"4-bit",

"5-bit",

"6-bit",

"8-bit",

"GGUF",

"merge",

"frankenmerge",

"text-generation",

"base_model:FallenMerick/Space-Whale-Lite-13B",

"base_model:quantized:FallenMerick/Space-Whale-Lite-13B",

"endpoints_compatible",

"region:us"

] | text-generation | 2024-05-16T21:10:15Z | ---

base_model:

- FallenMerick/Space-Whale-Lite-13B

model_name: Space-Whale-Lite-13B

pipeline_tag: text-generation

tags:

- quantized

- 4-bit

- 5-bit

- 6-bit

- 8-bit

- GGUF

- merge

- frankenmerge

- text-generation

---

# Space-Whale-Lite-13B

These are GGUF quants for the following model:

https://huggingface.co/FallenMerick/Space-Whale-Lite-13B |

mradermacher/Sailor-14B-Chat-GGUF | mradermacher | 2024-05-17T01:36:29Z | 88 | 0 | transformers | [

"transformers",

"gguf",

"multilingual",

"sea",

"sailor",

"sft",

"chat",

"instruction",

"en",

"zh",

"id",

"th",

"vi",

"ms",

"lo",

"dataset:CohereForAI/aya_dataset",

"dataset:CohereForAI/aya_collection",

"dataset:Open-Orca/OpenOrca",

"dataset:HuggingFaceH4/ultrachat_200k",

"dataset:openbmb/UltraFeedback",

"base_model:sail/Sailor-14B-Chat",

"base_model:quantized:sail/Sailor-14B-Chat",

"license:apache-2.0",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2024-05-17T00:46:37Z | ---

base_model: sail/Sailor-14B-Chat

datasets:

- CohereForAI/aya_dataset

- CohereForAI/aya_collection

- Open-Orca/OpenOrca

- HuggingFaceH4/ultrachat_200k

- openbmb/UltraFeedback

language:

- en

- zh

- id

- th

- vi

- ms

- lo

library_name: transformers

license: apache-2.0

quantized_by: mradermacher

tags:

- multilingual

- sea

- sailor

- sft

- chat

- instruction

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

static quants of https://huggingface.co/sail/Sailor-14B-Chat

<!-- provided-files -->

weighted/imatrix quants seem not to be available (by me) at this time. If they do not show up a week or so after the static ones, I have probably not planned for them. Feel free to request them by opening a Community Discussion.

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.Q2_K.gguf) | Q2_K | 6.0 | |

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.IQ3_XS.gguf) | IQ3_XS | 6.6 | |

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.IQ3_S.gguf) | IQ3_S | 6.9 | beats Q3_K* |

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.Q3_K_S.gguf) | Q3_K_S | 6.9 | |

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.IQ3_M.gguf) | IQ3_M | 7.2 | |

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.Q3_K_M.gguf) | Q3_K_M | 7.5 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.Q3_K_L.gguf) | Q3_K_L | 7.9 | |

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.IQ4_XS.gguf) | IQ4_XS | 8.0 | |

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.Q4_K_S.gguf) | Q4_K_S | 8.7 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/Sailor-14B-Chat-GGUF/resolve/main/Sailor-14B-Chat.Q4_K_M.gguf) | Q4_K_M | 9.3 | fast, recommended |