| problem_id (string, length 18 to 22) | source (string, 1 class) | task_type (string, 1 class) | in_source_id (string, length 13 to 58) | prompt (string, length 1.71k to 18.9k) | golden_diff (string, length 145 to 5.13k) | verification_info (string, length 465 to 23.6k) | num_tokens_prompt (int64, 556 to 4.1k) | num_tokens_diff (int64, 47 to 1.02k) |
|---|---|---|---|---|---|---|---|---|

gh_patches_debug_11555

|

rasdani/github-patches

|

git_diff

|

pypa__setuptools-753

|

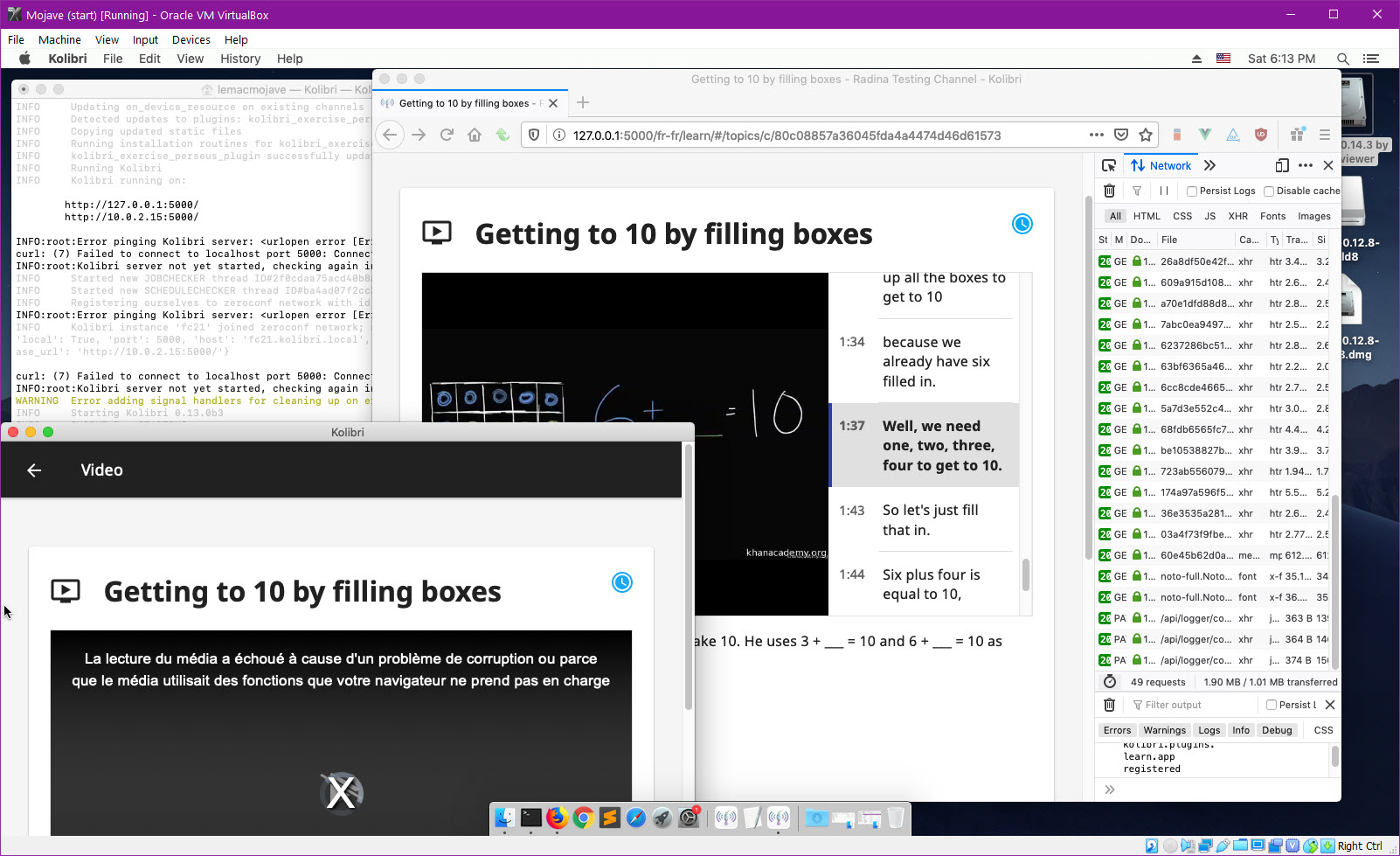

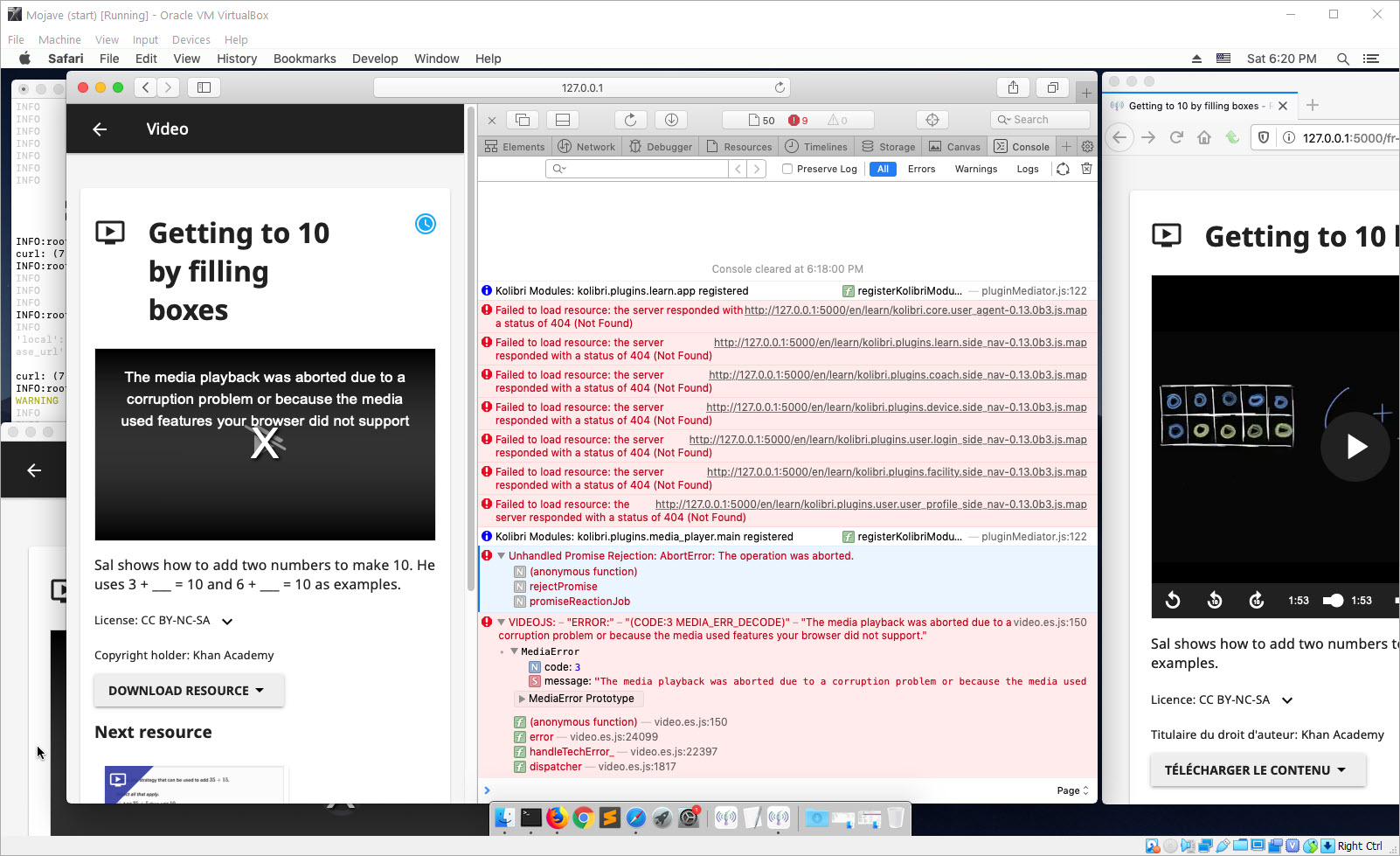

You will be provided with a partial code base and an issue statement explaining a problem to resolve.

<issue>

Setuptools doesn't play nice with Numpy

See: https://github.com/numpy/numpy/blob/master/numpy/distutils/extension.py#L42-L52

That functionality was broken by:

https://github.com/pypa/setuptools/blob/master/setuptools/extension.py#L39-L41

In this pr: https://github.com/pypa/setuptools/pull/718

Here's the pdb session where I debugged this:

```

(Pdb) old_Extension.__module__

'setuptools.extension'

```

</issue>

<code>

[start of setuptools/extension.py]

1 import sys

2 import re

3 import functools

4 import distutils.core

5 import distutils.errors

6 import distutils.extension

7

8 from setuptools.extern.six.moves import map

9

10 from .dist import _get_unpatched

11 from . import msvc

12

13 _Extension = _get_unpatched(distutils.core.Extension)

14

15 msvc.patch_for_specialized_compiler()

16

17

18 def _have_cython():

19 """

20 Return True if Cython can be imported.

21 """

22 cython_impl = 'Cython.Distutils.build_ext'

23 try:

24 # from (cython_impl) import build_ext

25 __import__(cython_impl, fromlist=['build_ext']).build_ext

26 return True

27 except Exception:

28 pass

29 return False

30

31

32 # for compatibility

33 have_pyrex = _have_cython

34

35

36 class Extension(_Extension):

37 """Extension that uses '.c' files in place of '.pyx' files"""

38

39 def __init__(self, name, sources, py_limited_api=False, **kw):

40 self.py_limited_api = py_limited_api

41 _Extension.__init__(self, name, sources, **kw)

42

43 def _convert_pyx_sources_to_lang(self):

44 """

45 Replace sources with .pyx extensions to sources with the target

46 language extension. This mechanism allows language authors to supply

47 pre-converted sources but to prefer the .pyx sources.

48 """

49 if _have_cython():

50 # the build has Cython, so allow it to compile the .pyx files

51 return

52 lang = self.language or ''

53 target_ext = '.cpp' if lang.lower() == 'c++' else '.c'

54 sub = functools.partial(re.sub, '.pyx$', target_ext)

55 self.sources = list(map(sub, self.sources))

56

57

58 class Library(Extension):

59 """Just like a regular Extension, but built as a library instead"""

60

61

62 distutils.core.Extension = Extension

63 distutils.extension.Extension = Extension

64 if 'distutils.command.build_ext' in sys.modules:

65 sys.modules['distutils.command.build_ext'].Extension = Extension

66

[end of setuptools/extension.py]

</code>

I need you to solve this issue by generating a single patch file that I can apply directly to this repository using git apply. Please respond with a single patch file in the following format.

<patch>

diff --git a/file.py b/file.py

--- a/file.py

+++ b/file.py

@@ -1,27 +1,35 @@

def euclidean(a, b):

- while b:

- a, b = b, a % b

- return a

+ if b == 0:

+ return a

+ return euclidean(b, a % b)

def bresenham(x0, y0, x1, y1):

points = []

dx = abs(x1 - x0)

dy = abs(y1 - y0)

- sx = 1 if x0 < x1 else -1

- sy = 1 if y0 < y1 else -1

- err = dx - dy

+ x, y = x0, y0

+ sx = -1 if x0 > x1 else 1

+ sy = -1 if y0 > y1 else 1

- while True:

- points.append((x0, y0))

- if x0 == x1 and y0 == y1:

- break

- e2 = 2 * err

- if e2 > -dy:

- err -= dy

- x0 += sx

- if e2 < dx:

- err += dx

- y0 += sy

+ if dx > dy:

+ err = dx / 2.0

+ while x != x1:

+ points.append((x, y))

+ err -= dy

+ if err < 0:

+ y += sy

+ err += dx

+ x += sx

+ else:

+ err = dy / 2.0

+ while y != y1:

+ points.append((x, y))

+ err -= dx

+ if err < 0:

+ x += sx

+ err += dy

+ y += sy

+

+ points.append((x, y))

return points

</patch>

|

diff --git a/setuptools/extension.py b/setuptools/extension.py
--- a/setuptools/extension.py
+++ b/setuptools/extension.py
@@ -36,9 +36,11 @@
 class Extension(_Extension):
     """Extension that uses '.c' files in place of '.pyx' files"""
 
-    def __init__(self, name, sources, py_limited_api=False, **kw):
-        self.py_limited_api = py_limited_api
-        _Extension.__init__(self, name, sources, **kw)
+    def __init__(self, name, sources, *args, **kw):
+        # The *args is needed for compatibility as calls may use positional
+        # arguments. py_limited_api may be set only via keyword.
+        self.py_limited_api = kw.pop("py_limited_api", False)
+        _Extension.__init__(self, name, sources, *args, **kw)
 
     def _convert_pyx_sources_to_lang(self):
         """

|

{"golden_diff": "diff --git a/setuptools/extension.py b/setuptools/extension.py\n--- a/setuptools/extension.py\n+++ b/setuptools/extension.py\n@@ -36,9 +36,11 @@\n class Extension(_Extension):\n \"\"\"Extension that uses '.c' files in place of '.pyx' files\"\"\"\n \n- def __init__(self, name, sources, py_limited_api=False, **kw):\n- self.py_limited_api = py_limited_api\n- _Extension.__init__(self, name, sources, **kw)\n+ def __init__(self, name, sources, *args, **kw):\n+ # The *args is needed for compatibility as calls may use positional\n+ # arguments. py_limited_api may be set only via keyword.\n+ self.py_limited_api = kw.pop(\"py_limited_api\", False)\n+ _Extension.__init__(self, name, sources, *args, **kw)\n \n def _convert_pyx_sources_to_lang(self):\n \"\"\"\n", "issue": "Setuptools doesn't play nice with Numpy\nSee: https://github.com/numpy/numpy/blob/master/numpy/distutils/extension.py#L42-L52\n\nThat functionality was broken by:\nhttps://github.com/pypa/setuptools/blob/master/setuptools/extension.py#L39-L41\n\nIn this pr: https://github.com/pypa/setuptools/pull/718\n\nHere's the the pdb session where I debugged this:\n\n```\n(Pdb) old_Extension.__module__\n'setuptools.extension'\n```\n\n", "before_files": [{"content": "import sys\nimport re\nimport functools\nimport distutils.core\nimport distutils.errors\nimport distutils.extension\n\nfrom setuptools.extern.six.moves import map\n\nfrom .dist import _get_unpatched\nfrom . 
import msvc\n\n_Extension = _get_unpatched(distutils.core.Extension)\n\nmsvc.patch_for_specialized_compiler()\n\n\ndef _have_cython():\n \"\"\"\n Return True if Cython can be imported.\n \"\"\"\n cython_impl = 'Cython.Distutils.build_ext'\n try:\n # from (cython_impl) import build_ext\n __import__(cython_impl, fromlist=['build_ext']).build_ext\n return True\n except Exception:\n pass\n return False\n\n\n# for compatibility\nhave_pyrex = _have_cython\n\n\nclass Extension(_Extension):\n \"\"\"Extension that uses '.c' files in place of '.pyx' files\"\"\"\n\n def __init__(self, name, sources, py_limited_api=False, **kw):\n self.py_limited_api = py_limited_api\n _Extension.__init__(self, name, sources, **kw)\n\n def _convert_pyx_sources_to_lang(self):\n \"\"\"\n Replace sources with .pyx extensions to sources with the target\n language extension. This mechanism allows language authors to supply\n pre-converted sources but to prefer the .pyx sources.\n \"\"\"\n if _have_cython():\n # the build has Cython, so allow it to compile the .pyx files\n return\n lang = self.language or ''\n target_ext = '.cpp' if lang.lower() == 'c++' else '.c'\n sub = functools.partial(re.sub, '.pyx$', target_ext)\n self.sources = list(map(sub, self.sources))\n\n\nclass Library(Extension):\n \"\"\"Just like a regular Extension, but built as a library instead\"\"\"\n\n\ndistutils.core.Extension = Extension\ndistutils.extension.Extension = Extension\nif 'distutils.command.build_ext' in sys.modules:\n sys.modules['distutils.command.build_ext'].Extension = Extension\n", "path": "setuptools/extension.py"}]}

| 1,215 | 219 |
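The change recorded in the row above can be illustrated with a minimal sketch. `Base`, `BrokenExtension`, and `FixedExtension` are hypothetical stand-ins, not the setuptools or distutils classes; the sketch only assumes, as the comment in the diff states, that some callers pass extra arguments positionally.

```python
class Base:
    """Hypothetical stand-in for the unpatched Extension base class."""

    def __init__(self, name, sources, include_dirs=None, **kw):
        self.name = name
        self.sources = sources
        self.include_dirs = include_dirs or []


class BrokenExtension(Base):
    # An explicit py_limited_api parameter captures the third positional
    # argument, which the caller meant as include_dirs.
    def __init__(self, name, sources, py_limited_api=False, **kw):
        self.py_limited_api = py_limited_api
        Base.__init__(self, name, sources, **kw)


class FixedExtension(Base):
    # Positional arguments pass through untouched; py_limited_api is
    # accepted by keyword only and removed before delegating.
    def __init__(self, name, sources, *args, **kw):
        self.py_limited_api = kw.pop("py_limited_api", False)
        Base.__init__(self, name, sources, *args, **kw)


# A caller that passes include_dirs positionally, as a subclass might.
broken = BrokenExtension("demo", ["demo.c"], ["include"])
fixed = FixedExtension("demo", ["demo.c"], ["include"])
```

In this sketch the broken variant silently stores the include directories in `py_limited_api`, while the fixed variant forwards them to the base class and still honours `py_limited_api=True` when given by keyword.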

gh_patches_debug_22728

|

rasdani/github-patches

|

git_diff

|

holoviz__holoviews-5502

|

You will be provided with a partial code base and an issue statement explaining a problem to resolve.

<issue>

holoviews 1.15.1 doesn't work in Jupyterlite

#### ALL software version info

holoviews: 1.15.1

jupyterlite: Version 0.1.0-beta.13

#### Description of expected behavior and the observed behavior

```python

import piplite

await piplite.install('holoviews==1.15.1')

import holoviews as hv

hv.extension('bokeh')

hv.Curve([1, 2, 3])

```

raises the following exception:

```

ImportError: cannot import name 'document' from 'js' (unknown location)

```

Here is the reason:

https://github.com/jupyterlite/pyodide-kernel/issues/94

</issue>

<code>

[start of holoviews/pyodide.py]

1 import asyncio

2 import sys

3

4

5 from bokeh.document import Document

6 from bokeh.embed.elements import script_for_render_items

7 from bokeh.embed.util import standalone_docs_json_and_render_items

8 from bokeh.embed.wrappers import wrap_in_script_tag

9 from panel.io.pyodide import _link_docs

10 from panel.pane import panel as as_panel

11

12 from .core.dimension import LabelledData

13 from .core.options import Store

14 from .util import extension as _extension

15

16

17 #-----------------------------------------------------------------------------

18 # Private API

19 #-----------------------------------------------------------------------------

20

21 async def _link(ref, doc):

22 from js import Bokeh

23 rendered = Bokeh.index.object_keys()

24 if ref not in rendered:

25 await asyncio.sleep(0.1)

26 await _link(ref, doc)

27 return

28 views = Bokeh.index.object_values()

29 view = views[rendered.indexOf(ref)]

30 _link_docs(doc, view.model.document)

31

32 def render_html(obj):

33 from js import document

34 if hasattr(sys.stdout, '_out'):

35 target = sys.stdout._out # type: ignore

36 else:

37 raise ValueError("Could not determine target node to write to.")

38 doc = Document()

39 as_panel(obj).server_doc(doc, location=False)

40 docs_json, [render_item,] = standalone_docs_json_and_render_items(

41 doc.roots, suppress_callback_warning=True

42 )

43 for root in doc.roots:

44 render_item.roots._roots[root] = target

45 document.getElementById(target).classList.add('bk-root')

46 script = script_for_render_items(docs_json, [render_item])

47 asyncio.create_task(_link(doc.roots[0].ref['id'], doc))

48 return {'text/html': wrap_in_script_tag(script)}, {}

49

50 def render_image(element, fmt):

51 """

52 Used to render elements to an image format (svg or png) if requested

53 in the display formats.

54 """

55 if fmt not in Store.display_formats:

56 return None

57

58 backend = Store.current_backend

59 if type(element) not in Store.registry[backend]:

60 return None

61 renderer = Store.renderers[backend]

62 plot = renderer.get_plot(element)

63

64 # Current renderer does not support the image format

65 if fmt not in renderer.param.objects('existing')['fig'].objects:

66 return None

67

68 data, info = renderer(plot, fmt=fmt)

69 return {info['mime_type']: data}, {}

70

71 def render_png(element):

72 return render_image(element, 'png')

73

74 def render_svg(element):

75 return render_image(element, 'svg')

76

77 #-----------------------------------------------------------------------------

78 # Public API

79 #-----------------------------------------------------------------------------

80

81 class pyodide_extension(_extension):

82

83 _loaded = False

84

85 def __call__(self, *args, **params):

86 super().__call__(*args, **params)

87 if not self._loaded:

88 Store.output_settings.initialize(list(Store.renderers.keys()))

89 Store.set_display_hook('html+js', LabelledData, render_html)

90 Store.set_display_hook('png', LabelledData, render_png)

91 Store.set_display_hook('svg', LabelledData, render_svg)

92 pyodide_extension._loaded = True

93

[end of holoviews/pyodide.py]

[start of holoviews/__init__.py]

1 """

2 HoloViews makes data analysis and visualization simple

3 ======================================================

4

5 HoloViews lets you focus on what you are trying to explore and convey, not on

6 the process of plotting.

7

8 HoloViews

9

10 - supports a wide range of data sources including Pandas, Dask, XArray

11 Rapids cuDF, Streamz, Intake, Geopandas, NetworkX and Ibis.

12 - supports the plotting backends Bokeh (default), Matplotlib and Plotly.

13 - allows you to drop into the rest of the

14 HoloViz ecosystem when more power or flexibility is needed.

15

16 For basic data exploration we recommend using the higher level hvPlot package,

17 which provides the familiar Pandas `.plot` api. You can drop into HoloViews

18 when needed.

19

20 To learn more check out https://holoviews.org/. To report issues or contribute

21 go to https://github.com/holoviz/holoviews. To join the community go to

22 https://discourse.holoviz.org/.

23

24 How to use HoloViews in 3 simple steps

25 --------------------------------------

26

27 Work with the data source you already know and ❤️

28

29 >>> import pandas as pd

30 >>> station_info = pd.read_csv('https://raw.githubusercontent.com/holoviz/holoviews/master/examples/assets/station_info.csv')

31

32 Import HoloViews and configure your plotting backend

33

34 >>> import holoviews as hv

35 >>> hv.extension('bokeh')

36

37 Annotate your data

38

39 >>> scatter = (

40 ... hv.Scatter(station_info, kdims='services', vdims='ridership')

41 ... .redim(

42 ... services=hv.Dimension("services", label='Services'),

43 ... ridership=hv.Dimension("ridership", label='Ridership'),

44 ... )

45 ... .opts(size=10, color="red", responsive=True)

46 ... )

47 >>> scatter

48

49 In a notebook this will display a nice scatter plot.

50

51 Note that the `kdims` (The key dimension(s)) represents the independent

52 variable(s) and the `vdims` (value dimension(s)) the dependent variable(s).

53

54 For more check out https://holoviews.org/getting_started/Introduction.html

55

56 How to get help

57 ---------------

58

59 You can understand the structure of your objects by printing them.

60

61 >>> print(scatter)

62 :Scatter [services] (ridership)

63

64 You can get extensive documentation using `hv.help`.

65

66 >>> hv.help(scatter)

67

68 In a notebook or ipython environment the usual

69

70 - `help` and `?` will provide you with documentation.

71 - `TAB` and `SHIFT+TAB` completion will help you navigate.

72

73 To ask the community go to https://discourse.holoviz.org/.

74 To report issues go to https://github.com/holoviz/holoviews.

75 """

76 import io, os, sys

77

78 import numpy as np # noqa (API import)

79 import param

80

81 __version__ = str(param.version.Version(fpath=__file__, archive_commit="$Format:%h$",

82 reponame="holoviews"))

83

84 from . import util # noqa (API import)

85 from .annotators import annotate # noqa (API import)

86 from .core import archive, config # noqa (API import)

87 from .core.boundingregion import BoundingBox # noqa (API import)

88 from .core.dimension import OrderedDict, Dimension # noqa (API import)

89 from .core.element import Element, Collator # noqa (API import)

90 from .core.layout import (Layout, NdLayout, Empty, # noqa (API import)

91 AdjointLayout)

92 from .core.ndmapping import NdMapping # noqa (API import)

93 from .core.options import (Options, Store, Cycle, # noqa (API import)

94 Palette, StoreOptions)

95 from .core.overlay import Overlay, NdOverlay # noqa (API import)

96 from .core.spaces import (HoloMap, Callable, DynamicMap, # noqa (API import)

97 GridSpace, GridMatrix)

98

99 from .operation import Operation # noqa (API import)

100 from .element import * # noqa (API import)

101 from .element import __all__ as elements_list

102 from .selection import link_selections # noqa (API import)

103 from .util import (extension, renderer, output, opts, # noqa (API import)

104 render, save)

105 from .util.transform import dim # noqa (API import)

106

107 # Suppress warnings generated by NumPy in matplotlib

108 # Expected to be fixed in next matplotlib release

109 import warnings

110 warnings.filterwarnings("ignore",

111 message="elementwise comparison failed; returning scalar instead")

112

113 try:

114 import IPython # noqa (API import)

115 from .ipython import notebook_extension

116 extension = notebook_extension # noqa (name remapping)

117 except ImportError:

118 class notebook_extension(param.ParameterizedFunction):

119 def __call__(self, *args, **opts): # noqa (dummy signature)

120 raise Exception("IPython notebook not available: use hv.extension instead.")

121

122 if '_pyodide' in sys.modules:

123 from .pyodide import pyodide_extension as extension # noqa (API import)

124

125 # A single holoviews.rc file may be executed if found.

126 for rcfile in [os.environ.get("HOLOVIEWSRC", ''),

127 os.path.abspath(os.path.join(os.path.split(__file__)[0],

128 '..', 'holoviews.rc')),

129 "~/.holoviews.rc",

130 "~/.config/holoviews/holoviews.rc"]:

131 filename = os.path.expanduser(rcfile)

132 if os.path.isfile(filename):

133 with io.open(filename, encoding='utf8') as f:

134 code = compile(f.read(), filename, 'exec')

135 try:

136 exec(code)

137 except Exception as e:

138 print("Warning: Could not load %r [%r]" % (filename, str(e)))

139 del f, code

140 break

141 del filename

142

143 def help(obj, visualization=True, ansi=True, backend=None,

144 recursive=False, pattern=None):

145 """

146 Extended version of the built-in help that supports parameterized

147 functions and objects. A pattern (regular expression) may be used to

148 filter the output and if recursive is set to True, documentation for

149 the supplied object is shown. Note that the recursive option will

150 only work with an object instance and not a class.

151

152 If ansi is set to False, all ANSI color

153 codes are stripped out.

154 """

155 backend = backend if backend else Store.current_backend

156 info = Store.info(obj, ansi=ansi, backend=backend, visualization=visualization,

157 recursive=recursive, pattern=pattern, elements=elements_list)

158

159 msg = ("\nTo view the visualization options applicable to this "

160 "object or class, use:\n\n"

161 " holoviews.help(obj, visualization=True)\n\n")

162 if info:

163 print((msg if visualization is False else '') + info)

164 else:

165 import pydoc

166 pydoc.help(obj)

167

168

169 del io, np, os, rcfile, warnings

170

[end of holoviews/__init__.py]

</code>

I need you to solve this issue by generating a single patch file that I can apply directly to this repository using git apply. Please respond with a single patch file in the following format.

<patch>

diff --git a/file.py b/file.py

--- a/file.py

+++ b/file.py

@@ -1,27 +1,35 @@

def euclidean(a, b):

- while b:

- a, b = b, a % b

- return a

+ if b == 0:

+ return a

+ return euclidean(b, a % b)

def bresenham(x0, y0, x1, y1):

points = []

dx = abs(x1 - x0)

dy = abs(y1 - y0)

- sx = 1 if x0 < x1 else -1

- sy = 1 if y0 < y1 else -1

- err = dx - dy

+ x, y = x0, y0

+ sx = -1 if x0 > x1 else 1

+ sy = -1 if y0 > y1 else 1

- while True:

- points.append((x0, y0))

- if x0 == x1 and y0 == y1:

- break

- e2 = 2 * err

- if e2 > -dy:

- err -= dy

- x0 += sx

- if e2 < dx:

- err += dx

- y0 += sy

+ if dx > dy:

+ err = dx / 2.0

+ while x != x1:

+ points.append((x, y))

+ err -= dy

+ if err < 0:

+ y += sy

+ err += dx

+ x += sx

+ else:

+ err = dy / 2.0

+ while y != y1:

+ points.append((x, y))

+ err -= dx

+ if err < 0:

+ x += sx

+ err += dy

+ y += sy

+

+ points.append((x, y))

return points

</patch>

|

diff --git a/holoviews/__init__.py b/holoviews/__init__.py
--- a/holoviews/__init__.py
+++ b/holoviews/__init__.py
@@ -120,7 +120,12 @@
             raise Exception("IPython notebook not available: use hv.extension instead.")
 
 if '_pyodide' in sys.modules:
-    from .pyodide import pyodide_extension as extension # noqa (API import)
+    from .pyodide import pyodide_extension, in_jupyterlite
+    # The notebook_extension is needed inside jupyterlite,
+    # so the override is only done if we are not inside jupyterlite.
+    if not in_jupyterlite():
+        extension = pyodide_extension
+    del pyodide_extension, in_jupyterlite
 
 # A single holoviews.rc file may be executed if found.
 for rcfile in [os.environ.get("HOLOVIEWSRC", ''),
diff --git a/holoviews/pyodide.py b/holoviews/pyodide.py
--- a/holoviews/pyodide.py
+++ b/holoviews/pyodide.py
@@ -74,6 +74,10 @@
 def render_svg(element):
     return render_image(element, 'svg')
 
+def in_jupyterlite():
+    import js
+    return hasattr(js, "_JUPYTERLAB")
+
 #-----------------------------------------------------------------------------
 # Public API
 #-----------------------------------------------------------------------------

|

{"golden_diff": "diff --git a/holoviews/__init__.py b/holoviews/__init__.py\n--- a/holoviews/__init__.py\n+++ b/holoviews/__init__.py\n@@ -120,7 +120,12 @@\n raise Exception(\"IPython notebook not available: use hv.extension instead.\")\n \n if '_pyodide' in sys.modules:\n- from .pyodide import pyodide_extension as extension # noqa (API import)\n+ from .pyodide import pyodide_extension, in_jupyterlite\n+ # The notebook_extension is needed inside jupyterlite,\n+ # so the override is only done if we are not inside jupyterlite.\n+ if not in_jupyterlite():\n+ extension = pyodide_extension\n+ del pyodide_extension, in_jupyterlite\n \n # A single holoviews.rc file may be executed if found.\n for rcfile in [os.environ.get(\"HOLOVIEWSRC\", ''),\ndiff --git a/holoviews/pyodide.py b/holoviews/pyodide.py\n--- a/holoviews/pyodide.py\n+++ b/holoviews/pyodide.py\n@@ -74,6 +74,10 @@\n def render_svg(element):\n return render_image(element, 'svg')\n \n+def in_jupyterlite():\n+ import js\n+ return hasattr(js, \"_JUPYTERLAB\")\n+\n #-----------------------------------------------------------------------------\n # Public API\n #-----------------------------------------------------------------------------\n", "issue": "holoviews 1.15.1 doesn't work in Jupyterlite\n#### ALL software version info\r\n\r\nholoviews: 1.15.1\r\njupyterlite: Version 0.1.0-beta.13\r\n\r\n#### Description of expected behavior and the observed behavior\r\n\r\n```python\r\nimport piplite\r\nawait piplite.install('holoviews==1.15.1')\r\n\r\nimport holoviews as hv\r\nhv.extension('bokeh')\r\n\r\nhv.Curve([1, 2, 3])\r\n```\r\n\r\nraise following exception:\r\n\r\n```\r\nImportError: cannot import name 'document' from 'js' (unknown location)\r\n```\r\n\r\nHere is the reason:\r\n\r\nhttps://github.com/jupyterlite/pyodide-kernel/issues/94\n", "before_files": [{"content": "import asyncio\nimport sys\n\n\nfrom bokeh.document import Document\nfrom bokeh.embed.elements import script_for_render_items\nfrom bokeh.embed.util 
import standalone_docs_json_and_render_items\nfrom bokeh.embed.wrappers import wrap_in_script_tag\nfrom panel.io.pyodide import _link_docs\nfrom panel.pane import panel as as_panel\n\nfrom .core.dimension import LabelledData\nfrom .core.options import Store\nfrom .util import extension as _extension\n\n\n#-----------------------------------------------------------------------------\n# Private API\n#-----------------------------------------------------------------------------\n\nasync def _link(ref, doc):\n from js import Bokeh\n rendered = Bokeh.index.object_keys()\n if ref not in rendered:\n await asyncio.sleep(0.1)\n await _link(ref, doc)\n return\n views = Bokeh.index.object_values()\n view = views[rendered.indexOf(ref)]\n _link_docs(doc, view.model.document)\n\ndef render_html(obj):\n from js import document\n if hasattr(sys.stdout, '_out'):\n target = sys.stdout._out # type: ignore\n else:\n raise ValueError(\"Could not determine target node to write to.\")\n doc = Document()\n as_panel(obj).server_doc(doc, location=False)\n docs_json, [render_item,] = standalone_docs_json_and_render_items(\n doc.roots, suppress_callback_warning=True\n )\n for root in doc.roots:\n render_item.roots._roots[root] = target\n document.getElementById(target).classList.add('bk-root')\n script = script_for_render_items(docs_json, [render_item])\n asyncio.create_task(_link(doc.roots[0].ref['id'], doc))\n return {'text/html': wrap_in_script_tag(script)}, {}\n\ndef render_image(element, fmt):\n \"\"\"\n Used to render elements to an image format (svg or png) if requested\n in the display formats.\n \"\"\"\n if fmt not in Store.display_formats:\n return None\n\n backend = Store.current_backend\n if type(element) not in Store.registry[backend]:\n return None\n renderer = Store.renderers[backend]\n plot = renderer.get_plot(element)\n\n # Current renderer does not support the image format\n if fmt not in renderer.param.objects('existing')['fig'].objects:\n return None\n\n data, info = 
renderer(plot, fmt=fmt)\n return {info['mime_type']: data}, {}\n\ndef render_png(element):\n return render_image(element, 'png')\n\ndef render_svg(element):\n return render_image(element, 'svg')\n\n#-----------------------------------------------------------------------------\n# Public API\n#-----------------------------------------------------------------------------\n\nclass pyodide_extension(_extension):\n\n _loaded = False\n\n def __call__(self, *args, **params):\n super().__call__(*args, **params)\n if not self._loaded:\n Store.output_settings.initialize(list(Store.renderers.keys()))\n Store.set_display_hook('html+js', LabelledData, render_html)\n Store.set_display_hook('png', LabelledData, render_png)\n Store.set_display_hook('svg', LabelledData, render_svg)\n pyodide_extension._loaded = True\n", "path": "holoviews/pyodide.py"}, {"content": "\"\"\"\nHoloViews makes data analysis and visualization simple\n======================================================\n\nHoloViews lets you focus on what you are trying to explore and convey, not on\nthe process of plotting.\n\nHoloViews\n\n- supports a wide range of data sources including Pandas, Dask, XArray\nRapids cuDF, Streamz, Intake, Geopandas, NetworkX and Ibis.\n- supports the plotting backends Bokeh (default), Matplotlib and Plotly.\n- allows you to drop into the rest of the\nHoloViz ecosystem when more power or flexibility is needed.\n\nFor basic data exploration we recommend using the higher level hvPlot package,\nwhich provides the familiar Pandas `.plot` api. You can drop into HoloViews\nwhen needed.\n\nTo learn more check out https://holoviews.org/. To report issues or contribute\ngo to https://github.com/holoviz/holoviews. 
To join the community go to\nhttps://discourse.holoviz.org/.\n\nHow to use HoloViews in 3 simple steps\n--------------------------------------\n\nWork with the data source you already know and \u2764\ufe0f\n\n>>> import pandas as pd\n>>> station_info = pd.read_csv('https://raw.githubusercontent.com/holoviz/holoviews/master/examples/assets/station_info.csv')\n\nImport HoloViews and configure your plotting backend\n\n>>> import holoviews as hv\n>>> hv.extension('bokeh')\n\nAnnotate your data\n\n>>> scatter = (\n... hv.Scatter(station_info, kdims='services', vdims='ridership')\n... .redim(\n... services=hv.Dimension(\"services\", label='Services'),\n... ridership=hv.Dimension(\"ridership\", label='Ridership'),\n... )\n... .opts(size=10, color=\"red\", responsive=True)\n... )\n>>> scatter\n\nIn a notebook this will display a nice scatter plot.\n\nNote that the `kdims` (The key dimension(s)) represents the independent\nvariable(s) and the `vdims` (value dimension(s)) the dependent variable(s).\n\nFor more check out https://holoviews.org/getting_started/Introduction.html\n\nHow to get help\n---------------\n\nYou can understand the structure of your objects by printing them.\n\n>>> print(scatter)\n:Scatter [services] (ridership)\n\nYou can get extensive documentation using `hv.help`.\n\n>>> hv.help(scatter)\n\nIn a notebook or ipython environment the usual\n\n- `help` and `?` will provide you with documentation.\n- `TAB` and `SHIFT+TAB` completion will help you navigate.\n\nTo ask the community go to https://discourse.holoviz.org/.\nTo report issues go to https://github.com/holoviz/holoviews.\n\"\"\"\nimport io, os, sys\n\nimport numpy as np # noqa (API import)\nimport param\n\n__version__ = str(param.version.Version(fpath=__file__, archive_commit=\"$Format:%h$\",\n reponame=\"holoviews\"))\n\nfrom . 
import util # noqa (API import)\nfrom .annotators import annotate # noqa (API import)\nfrom .core import archive, config # noqa (API import)\nfrom .core.boundingregion import BoundingBox # noqa (API import)\nfrom .core.dimension import OrderedDict, Dimension # noqa (API import)\nfrom .core.element import Element, Collator # noqa (API import)\nfrom .core.layout import (Layout, NdLayout, Empty, # noqa (API import)\n AdjointLayout)\nfrom .core.ndmapping import NdMapping # noqa (API import)\nfrom .core.options import (Options, Store, Cycle, # noqa (API import)\n Palette, StoreOptions)\nfrom .core.overlay import Overlay, NdOverlay # noqa (API import)\nfrom .core.spaces import (HoloMap, Callable, DynamicMap, # noqa (API import)\n GridSpace, GridMatrix)\n\nfrom .operation import Operation # noqa (API import)\nfrom .element import * # noqa (API import)\nfrom .element import __all__ as elements_list\nfrom .selection import link_selections # noqa (API import)\nfrom .util import (extension, renderer, output, opts, # noqa (API import)\n render, save)\nfrom .util.transform import dim # noqa (API import)\n\n# Suppress warnings generated by NumPy in matplotlib\n# Expected to be fixed in next matplotlib release\nimport warnings\nwarnings.filterwarnings(\"ignore\",\n message=\"elementwise comparison failed; returning scalar instead\")\n\ntry:\n import IPython # noqa (API import)\n from .ipython import notebook_extension\n extension = notebook_extension # noqa (name remapping)\nexcept ImportError:\n class notebook_extension(param.ParameterizedFunction):\n def __call__(self, *args, **opts): # noqa (dummy signature)\n raise Exception(\"IPython notebook not available: use hv.extension instead.\")\n\nif '_pyodide' in sys.modules:\n from .pyodide import pyodide_extension as extension # noqa (API import)\n\n# A single holoviews.rc file may be executed if found.\nfor rcfile in [os.environ.get(\"HOLOVIEWSRC\", ''),\n os.path.abspath(os.path.join(os.path.split(__file__)[0],\n '..', 
'holoviews.rc')),\n \"~/.holoviews.rc\",\n \"~/.config/holoviews/holoviews.rc\"]:\n filename = os.path.expanduser(rcfile)\n if os.path.isfile(filename):\n with io.open(filename, encoding='utf8') as f:\n code = compile(f.read(), filename, 'exec')\n try:\n exec(code)\n except Exception as e:\n print(\"Warning: Could not load %r [%r]\" % (filename, str(e)))\n del f, code\n break\n del filename\n\ndef help(obj, visualization=True, ansi=True, backend=None,\n recursive=False, pattern=None):\n \"\"\"\n Extended version of the built-in help that supports parameterized\n functions and objects. A pattern (regular expression) may be used to\n filter the output and if recursive is set to True, documentation for\n the supplied object is shown. Note that the recursive option will\n only work with an object instance and not a class.\n\n If ansi is set to False, all ANSI color\n codes are stripped out.\n \"\"\"\n backend = backend if backend else Store.current_backend\n info = Store.info(obj, ansi=ansi, backend=backend, visualization=visualization,\n recursive=recursive, pattern=pattern, elements=elements_list)\n\n msg = (\"\\nTo view the visualization options applicable to this \"\n \"object or class, use:\\n\\n\"\n \" holoviews.help(obj, visualization=True)\\n\\n\")\n if info:\n print((msg if visualization is False else '') + info)\n else:\n import pydoc\n pydoc.help(obj)\n\n\ndel io, np, os, rcfile, warnings\n", "path": "holoviews/__init__.py"}]}

| 3,484 | 332 |
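The environment check in the row above can be sketched without a browser. The fake `js` namespaces and the `choose_extension` helper are hypothetical and exist only for illustration; the `_JUPYTERLAB` attribute name is taken from the diff, and the real `in_jupyterlite` takes no argument and imports `js` itself.

```python
from types import SimpleNamespace


def in_jupyterlite(js_module):
    """Return True if the given js namespace exposes _JUPYTERLAB."""
    return hasattr(js_module, "_JUPYTERLAB")


def choose_extension(js_module, notebook_extension, pyodide_extension):
    # Inside JupyterLite keep the notebook extension; in any other
    # Pyodide environment, override it with the Pyodide extension.
    if in_jupyterlite(js_module):
        return notebook_extension
    return pyodide_extension


# Stand-ins for the `js` module seen in each environment.
jupyterlite_js = SimpleNamespace(_JUPYTERLAB=object())
plain_pyodide_js = SimpleNamespace()
```

Passing the namespace in as a parameter is a choice made here so the logic can be exercised outside Pyodide.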

gh_patches_debug_4250

|

rasdani/github-patches

|

git_diff

|

scoutapp__scout_apm_python-495

|

You will be provided with a partial code base and an issue statement explaining a problem to resolve.

<issue>

Default app name

Use a default app name like "Python App" rather than the empty string, so that if users forget to set it, it still appears on the console.

</issue>
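
The request above relies on the layered lookup shown in the file below: the first layer that knows a key wins, so a non-empty default only shows up when nothing else sets `name`. The following is a minimal, self-contained sketch of that idea, not the agent's actual code. The `Overrides` class and the `lookup` helper are hypothetical names introduced here for illustration; only the `has_config`/`value` layer interface and the "Python App" default come from the issue and the listing.

```python
class Overrides:
    """Hypothetical stand-in for a higher-priority layer (e.g. env or user settings)."""

    def __init__(self, values):
        self.values = dict(values)

    def has_config(self, key):
        return key in self.values

    def value(self, key):
        return self.values[key]


class Defaults:
    """Fallback layer; 'name' defaults to a visible placeholder instead of ''."""

    def __init__(self):
        self.defaults = {"name": "Python App", "monitor": False}

    def has_config(self, key):
        return key in self.defaults

    def value(self, key):
        return self.defaults[key]


def lookup(layers, key):
    """Return the value from the first layer that knows the key, else None."""
    for layer in layers:
        if layer.has_config(key):
            return layer.value(key)
    return None


# With no override, the default name is used; an explicit name still wins.
unset = lookup([Overrides({}), Defaults()], "name")
explicit = lookup([Overrides({"name": "My App"}), Defaults()], "name")
```

Because the default sits in the lowest-priority layer, changing it should not affect users who already set a name through a higher layer.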

<code>

[start of src/scout_apm/core/config.py]

1 # coding=utf-8

2 from __future__ import absolute_import, division, print_function, unicode_literals

3

4 import logging

5 import os

6 import warnings

7

8 from scout_apm.compat import string_type

9 from scout_apm.core import platform_detection

10

11 logger = logging.getLogger(__name__)

12

13

14 class ScoutConfig(object):

15 """

16 Configuration object for the ScoutApm agent.

17

18 Contains a list of configuration "layers". When a configuration key is

19 looked up, each layer is asked in turn if it knows the value. The first one

20 to answer affirmatively returns the value.

21 """

22

23 def __init__(self):

24 self.layers = [

25 Env(),

26 Python(),

27 Derived(self),

28 Defaults(),

29 Null(),

30 ]

31

32 def value(self, key):

33 value = self.locate_layer_for_key(key).value(key)

34 if key in CONVERSIONS:

35 return CONVERSIONS[key](value)

36 return value

37

38 def locate_layer_for_key(self, key):

39 for layer in self.layers:

40 if layer.has_config(key):

41 return layer

42

43 # Should be unreachable because Null returns None for all keys.

44 raise ValueError("key {!r} not found in any layer".format(key))

45

46 def log(self):

47 logger.debug("Configuration Loaded:")

48 for key in self.known_keys():

49 layer = self.locate_layer_for_key(key)

50 logger.debug(

51 "%-9s: %s = %s", layer.__class__.__name__, key, layer.value(key)

52 )

53

54 def known_keys(self):

55 return [

56 "app_server",

57 "application_root",

58 "core_agent_dir",

59 "core_agent_download",

60 "core_agent_launch",

61 "core_agent_log_level",

62 "core_agent_permissions",

63 "core_agent_version",

64 "disabled_instruments",

65 "download_url",

66 "framework",

67 "framework_version",

68 "hostname",

69 "ignore",

70 "key",

71 "log_level",

72 "monitor",

73 "name",

74 "revision_sha",

75 "scm_subdirectory",

76 "shutdown_timeout_seconds",

77 "socket_path",

78 ]

79

80 def core_agent_permissions(self):

81 try:

82 return int(str(self.value("core_agent_permissions")), 8)

83 except ValueError:

84 logger.exception(

85 "Invalid core_agent_permissions value, using default of 0o700"

86 )

87 return 0o700

88

89 @classmethod

90 def set(cls, **kwargs):

91 """

92 Sets a configuration value for the Scout agent. Values set here will

93 not override values set in ENV.

94 """

95 for key, value in kwargs.items():

96 SCOUT_PYTHON_VALUES[key] = value

97

98 @classmethod

99 def unset(cls, *keys):

100 """

101 Removes a configuration value for the Scout agent.

102 """

103 for key in keys:

104 SCOUT_PYTHON_VALUES.pop(key, None)

105

106 @classmethod

107 def reset_all(cls):

108 """

109 Remove all configuration settings set via `ScoutConfig.set(...)`.

110

111 This is meant for use in testing.

112 """

113 SCOUT_PYTHON_VALUES.clear()

114

115

116 # Module-level data, the ScoutConfig.set(key="value") adds to this

117 SCOUT_PYTHON_VALUES = {}

118

119

120 class Python(object):

121 """

122 A configuration overlay that lets other parts of python set values.

123 """

124

125 def has_config(self, key):

126 return key in SCOUT_PYTHON_VALUES

127

128 def value(self, key):

129 return SCOUT_PYTHON_VALUES[key]

130

131

132 class Env(object):

133 """

134 Reads configuration from environment by prefixing the key

135 requested with "SCOUT_"

136

137 Example: the `key` config looks for SCOUT_KEY

138 environment variable

139 """

140

141 def has_config(self, key):

142 env_key = self.modify_key(key)

143 return env_key in os.environ

144

145 def value(self, key):

146 env_key = self.modify_key(key)

147 return os.environ[env_key]

148

149 def modify_key(self, key):

150 env_key = ("SCOUT_" + key).upper()

151 return env_key

152

153

154 class Derived(object):

155 """

156 A configuration overlay that calculates from other values.

157 """

158

159 def __init__(self, config):

160 """

161 config argument is the overall ScoutConfig var, so we can lookup the

162 components of the derived info.

163 """

164 self.config = config

165

166 def has_config(self, key):

167 return self.lookup_func(key) is not None

168

169 def value(self, key):

170 return self.lookup_func(key)()

171

172 def lookup_func(self, key):

173 """

174 Returns the derive_#{key} function, or None if it isn't defined

175 """

176 func_name = "derive_" + key

177 return getattr(self, func_name, None)

178

179 def derive_socket_path(self):

180 return "{}/{}/scout-agent.sock".format(

181 self.config.value("core_agent_dir"),

182 self.config.value("core_agent_full_name"),

183 )

184

185 def derive_core_agent_full_name(self):

186 triple = self.config.value("core_agent_triple")

187 if not platform_detection.is_valid_triple(triple):

188 warnings.warn("Invalid value for core_agent_triple: {}".format(triple))

189 return "{name}-{version}-{triple}".format(

190 name="scout_apm_core",

191 version=self.config.value("core_agent_version"),

192 triple=triple,

193 )

194

195 def derive_core_agent_triple(self):

196 return platform_detection.get_triple()

197

198

199 class Defaults(object):

200 """

201 Provides default values for important configurations

202 """

203

204 def __init__(self):

205 self.defaults = {

206 "app_server": "",

207 "application_root": "",

208 "core_agent_dir": "/tmp/scout_apm_core",

209 "core_agent_download": True,

210 "core_agent_launch": True,

211 "core_agent_log_level": "info",

212 "core_agent_permissions": 700,

213 "core_agent_version": "v1.2.6", # can be an exact tag name, or 'latest'

214 "disabled_instruments": [],

215 "download_url": "https://s3-us-west-1.amazonaws.com/scout-public-downloads/apm_core_agent/release", # noqa: E501

216 "framework": "",

217 "framework_version": "",

218 "hostname": None,

219 "key": "",

220 "monitor": False,

221 "name": "",

222 "revision_sha": self._git_revision_sha(),

223 "scm_subdirectory": "",

224 "shutdown_timeout_seconds": 2.0,

225 "uri_reporting": "filtered_params",

226 }

227

228 def _git_revision_sha(self):

229 # N.B. The environment variable SCOUT_REVISION_SHA may also be used,

230 # but that will be picked up by Env

231 return os.environ.get("HEROKU_SLUG_COMMIT", "")

232

233 def has_config(self, key):

234 return key in self.defaults

235

236 def value(self, key):

237 return self.defaults[key]

238

239

240 class Null(object):

241 """

242 Always answers that a key is present, but the value is None

243

244 Used as the last step of the layered configuration.

245 """

246

247 def has_config(self, key):

248 return True

249

250 def value(self, key):

251 return None

252

253

254 def convert_to_bool(value):

255 if isinstance(value, bool):

256 return value

257 if isinstance(value, string_type):

258 return value.lower() in ("yes", "true", "t", "1")

259 # Unknown type - default to false?

260 return False

261

262

263 def convert_to_float(value):

264 try:

265 return float(value)

266 except ValueError:

267 return 0.0

268

269

270 def convert_to_list(value):

271 if isinstance(value, list):

272 return value

273 if isinstance(value, tuple):

274 return list(value)

275 if isinstance(value, string_type):

276 # Split on commas

277 return [item.strip() for item in value.split(",") if item]

278 # Unknown type - default to empty?

279 return []

280

281

282 CONVERSIONS = {

283 "core_agent_download": convert_to_bool,

284 "core_agent_launch": convert_to_bool,

285 "disabled_instruments": convert_to_list,

286 "ignore": convert_to_list,

287 "monitor": convert_to_bool,

288 "shutdown_timeout_seconds": convert_to_float,

289 }

290

291

292 scout_config = ScoutConfig()

293

[end of src/scout_apm/core/config.py]

</code>

I need you to solve this issue by generating a single patch file that I can apply directly to this repository using git apply. Please respond with a single patch file in the following format.

<patch>

diff --git a/file.py b/file.py

--- a/file.py

+++ b/file.py

@@ -1,27 +1,35 @@

def euclidean(a, b):

- while b:

- a, b = b, a % b

- return a

+ if b == 0:

+ return a

+ return euclidean(b, a % b)

def bresenham(x0, y0, x1, y1):

points = []

dx = abs(x1 - x0)

dy = abs(y1 - y0)

- sx = 1 if x0 < x1 else -1

- sy = 1 if y0 < y1 else -1

- err = dx - dy

+ x, y = x0, y0

+ sx = -1 if x0 > x1 else 1

+ sy = -1 if y0 > y1 else 1

- while True:

- points.append((x0, y0))

- if x0 == x1 and y0 == y1:

- break

- e2 = 2 * err

- if e2 > -dy:

- err -= dy

- x0 += sx

- if e2 < dx:

- err += dx

- y0 += sy

+ if dx > dy:

+ err = dx / 2.0

+ while x != x1:

+ points.append((x, y))

+ err -= dy

+ if err < 0:

+ y += sy

+ err += dx

+ x += sx

+ else:

+ err = dy / 2.0

+ while y != y1:

+ points.append((x, y))

+ err -= dx

+ if err < 0:

+ x += sx

+ err += dy

+ y += sy

+

+ points.append((x, y))

return points

</patch>

|

diff --git a/src/scout_apm/core/config.py b/src/scout_apm/core/config.py

--- a/src/scout_apm/core/config.py

+++ b/src/scout_apm/core/config.py

@@ -218,7 +218,7 @@

"hostname": None,

"key": "",

"monitor": False,

- "name": "",

+ "name": "Python App",

"revision_sha": self._git_revision_sha(),

"scm_subdirectory": "",

"shutdown_timeout_seconds": 2.0,

|

{"golden_diff": "diff --git a/src/scout_apm/core/config.py b/src/scout_apm/core/config.py\n--- a/src/scout_apm/core/config.py\n+++ b/src/scout_apm/core/config.py\n@@ -218,7 +218,7 @@\n \"hostname\": None,\n \"key\": \"\",\n \"monitor\": False,\n- \"name\": \"\",\n+ \"name\": \"Python App\",\n \"revision_sha\": self._git_revision_sha(),\n \"scm_subdirectory\": \"\",\n \"shutdown_timeout_seconds\": 2.0,\n", "issue": "Default app name\nUse a default app name like \"Python App\" rather than the empty string, so if users forget to set it it still appears on the consle.\n", "before_files": [{"content": "# coding=utf-8\nfrom __future__ import absolute_import, division, print_function, unicode_literals\n\nimport logging\nimport os\nimport warnings\n\nfrom scout_apm.compat import string_type\nfrom scout_apm.core import platform_detection\n\nlogger = logging.getLogger(__name__)\n\n\nclass ScoutConfig(object):\n \"\"\"\n Configuration object for the ScoutApm agent.\n\n Contains a list of configuration \"layers\". When a configuration key is\n looked up, each layer is asked in turn if it knows the value. 
The first one\n to answer affirmatively returns the value.\n \"\"\"\n\n def __init__(self):\n self.layers = [\n Env(),\n Python(),\n Derived(self),\n Defaults(),\n Null(),\n ]\n\n def value(self, key):\n value = self.locate_layer_for_key(key).value(key)\n if key in CONVERSIONS:\n return CONVERSIONS[key](value)\n return value\n\n def locate_layer_for_key(self, key):\n for layer in self.layers:\n if layer.has_config(key):\n return layer\n\n # Should be unreachable because Null returns None for all keys.\n raise ValueError(\"key {!r} not found in any layer\".format(key))\n\n def log(self):\n logger.debug(\"Configuration Loaded:\")\n for key in self.known_keys():\n layer = self.locate_layer_for_key(key)\n logger.debug(\n \"%-9s: %s = %s\", layer.__class__.__name__, key, layer.value(key)\n )\n\n def known_keys(self):\n return [\n \"app_server\",\n \"application_root\",\n \"core_agent_dir\",\n \"core_agent_download\",\n \"core_agent_launch\",\n \"core_agent_log_level\",\n \"core_agent_permissions\",\n \"core_agent_version\",\n \"disabled_instruments\",\n \"download_url\",\n \"framework\",\n \"framework_version\",\n \"hostname\",\n \"ignore\",\n \"key\",\n \"log_level\",\n \"monitor\",\n \"name\",\n \"revision_sha\",\n \"scm_subdirectory\",\n \"shutdown_timeout_seconds\",\n \"socket_path\",\n ]\n\n def core_agent_permissions(self):\n try:\n return int(str(self.value(\"core_agent_permissions\")), 8)\n except ValueError:\n logger.exception(\n \"Invalid core_agent_permissions value, using default of 0o700\"\n )\n return 0o700\n\n @classmethod\n def set(cls, **kwargs):\n \"\"\"\n Sets a configuration value for the Scout agent. 
Values set here will\n not override values set in ENV.\n \"\"\"\n for key, value in kwargs.items():\n SCOUT_PYTHON_VALUES[key] = value\n\n @classmethod\n def unset(cls, *keys):\n \"\"\"\n Removes a configuration value for the Scout agent.\n \"\"\"\n for key in keys:\n SCOUT_PYTHON_VALUES.pop(key, None)\n\n @classmethod\n def reset_all(cls):\n \"\"\"\n Remove all configuration settings set via `ScoutConfig.set(...)`.\n\n This is meant for use in testing.\n \"\"\"\n SCOUT_PYTHON_VALUES.clear()\n\n\n# Module-level data, the ScoutConfig.set(key=\"value\") adds to this\nSCOUT_PYTHON_VALUES = {}\n\n\nclass Python(object):\n \"\"\"\n A configuration overlay that lets other parts of python set values.\n \"\"\"\n\n def has_config(self, key):\n return key in SCOUT_PYTHON_VALUES\n\n def value(self, key):\n return SCOUT_PYTHON_VALUES[key]\n\n\nclass Env(object):\n \"\"\"\n Reads configuration from environment by prefixing the key\n requested with \"SCOUT_\"\n\n Example: the `key` config looks for SCOUT_KEY\n environment variable\n \"\"\"\n\n def has_config(self, key):\n env_key = self.modify_key(key)\n return env_key in os.environ\n\n def value(self, key):\n env_key = self.modify_key(key)\n return os.environ[env_key]\n\n def modify_key(self, key):\n env_key = (\"SCOUT_\" + key).upper()\n return env_key\n\n\nclass Derived(object):\n \"\"\"\n A configuration overlay that calculates from other values.\n \"\"\"\n\n def __init__(self, config):\n \"\"\"\n config argument is the overall ScoutConfig var, so we can lookup the\n components of the derived info.\n \"\"\"\n self.config = config\n\n def has_config(self, key):\n return self.lookup_func(key) is not None\n\n def value(self, key):\n return self.lookup_func(key)()\n\n def lookup_func(self, key):\n \"\"\"\n Returns the derive_#{key} function, or None if it isn't defined\n \"\"\"\n func_name = \"derive_\" + key\n return getattr(self, func_name, None)\n\n def derive_socket_path(self):\n return \"{}/{}/scout-agent.sock\".format(\n 
self.config.value(\"core_agent_dir\"),\n self.config.value(\"core_agent_full_name\"),\n )\n\n def derive_core_agent_full_name(self):\n triple = self.config.value(\"core_agent_triple\")\n if not platform_detection.is_valid_triple(triple):\n warnings.warn(\"Invalid value for core_agent_triple: {}\".format(triple))\n return \"{name}-{version}-{triple}\".format(\n name=\"scout_apm_core\",\n version=self.config.value(\"core_agent_version\"),\n triple=triple,\n )\n\n def derive_core_agent_triple(self):\n return platform_detection.get_triple()\n\n\nclass Defaults(object):\n \"\"\"\n Provides default values for important configurations\n \"\"\"\n\n def __init__(self):\n self.defaults = {\n \"app_server\": \"\",\n \"application_root\": \"\",\n \"core_agent_dir\": \"/tmp/scout_apm_core\",\n \"core_agent_download\": True,\n \"core_agent_launch\": True,\n \"core_agent_log_level\": \"info\",\n \"core_agent_permissions\": 700,\n \"core_agent_version\": \"v1.2.6\", # can be an exact tag name, or 'latest'\n \"disabled_instruments\": [],\n \"download_url\": \"https://s3-us-west-1.amazonaws.com/scout-public-downloads/apm_core_agent/release\", # noqa: E501\n \"framework\": \"\",\n \"framework_version\": \"\",\n \"hostname\": None,\n \"key\": \"\",\n \"monitor\": False,\n \"name\": \"\",\n \"revision_sha\": self._git_revision_sha(),\n \"scm_subdirectory\": \"\",\n \"shutdown_timeout_seconds\": 2.0,\n \"uri_reporting\": \"filtered_params\",\n }\n\n def _git_revision_sha(self):\n # N.B. 
The environment variable SCOUT_REVISION_SHA may also be used,\n # but that will be picked up by Env\n return os.environ.get(\"HEROKU_SLUG_COMMIT\", \"\")\n\n def has_config(self, key):\n return key in self.defaults\n\n def value(self, key):\n return self.defaults[key]\n\n\nclass Null(object):\n \"\"\"\n Always answers that a key is present, but the value is None\n\n Used as the last step of the layered configuration.\n \"\"\"\n\n def has_config(self, key):\n return True\n\n def value(self, key):\n return None\n\n\ndef convert_to_bool(value):\n if isinstance(value, bool):\n return value\n if isinstance(value, string_type):\n return value.lower() in (\"yes\", \"true\", \"t\", \"1\")\n # Unknown type - default to false?\n return False\n\n\ndef convert_to_float(value):\n try:\n return float(value)\n except ValueError:\n return 0.0\n\n\ndef convert_to_list(value):\n if isinstance(value, list):\n return value\n if isinstance(value, tuple):\n return list(value)\n if isinstance(value, string_type):\n # Split on commas\n return [item.strip() for item in value.split(\",\") if item]\n # Unknown type - default to empty?\n return []\n\n\nCONVERSIONS = {\n \"core_agent_download\": convert_to_bool,\n \"core_agent_launch\": convert_to_bool,\n \"disabled_instruments\": convert_to_list,\n \"ignore\": convert_to_list,\n \"monitor\": convert_to_bool,\n \"shutdown_timeout_seconds\": convert_to_float,\n}\n\n\nscout_config = ScoutConfig()\n", "path": "src/scout_apm/core/config.py"}]}

| 3,130 | 120 |

gh_patches_debug_23602

|

rasdani/github-patches

|

git_diff

|

apluslms__a-plus-655

|

You will be provided with a partial code base and an issue statement explaining a problem to resolve.

<issue>

Course points API endpoint should contain points for all submissions

This comes from the IntelliJ project: https://github.com/Aalto-LeTech/intellij-plugin/issues/302

The `/api/v2/courses/<course-id>/points/me/` endpoint should be able to provide points for all submissions (for one student in all exercises of one course). We need to still consider if all points are always included in the output or only when some parameter is given in the request GET query parameters. All points should already be available in the points cache: https://github.com/apluslms/a-plus/blob/9e595a0a902d19bcadeeaff8b3160873b0265f43/exercise/cache/points.py#L98

Let's not modify the existing submissions URL list in order to preserve backwards-compatibility. A new key shall be added to the output.

Example snippet for the output (the existing submissions list only contains the URLs of the submissions):

```

"submissions_and_points": [

{

"url": "https://plus.cs.aalto.fi/api/v2/submissions/123/",

"points": 10

},

{

"url": "https://plus.cs.aalto.fi/api/v2/submissions/456/",

"points": 5

}

]

```

Jaakko says that it could be best to add the grade field to the existing `SubmissionBriefSerializer` class. It could be more uniform with the rest of the API.

</issue>
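
The output shape requested above can be sketched as follows: keep the existing list of submission URLs untouched and add a second key carrying one object per submission. This is only an illustration under stated assumptions: `build_exercise_data` and `url_for` are hypothetical names, and the sketch assumes each cached submission is a dict with `id` and `points` keys, as the issue's reference to the points cache suggests.

```python
def build_exercise_data(entry, url_for):
    """Return the old 'submissions' URL list plus a new list with points.

    entry   -- dict with a 'submissions' list of {'id': ..., 'points': ...} (assumed shape)
    url_for -- callable mapping a submission id to its API URL (hypothetical helper)
    """
    with_points = [
        {"url": url_for(sub["id"]), "points": sub["points"]}
        for sub in entry["submissions"]
    ]
    return {
        # Unchanged key: plain URLs, so existing API clients keep working.
        "submissions": [item["url"] for item in with_points],
        # New key, named as in the issue's example output.
        "submissions_and_points": with_points,
    }


def example_url(submission_id):
    # Illustrative URL pattern copied from the issue's example snippet.
    return "https://plus.cs.aalto.fi/api/v2/submissions/%d/" % submission_id


cached_entry = {"submissions": [{"id": 123, "points": 10}, {"id": 456, "points": 5}]}
data = build_exercise_data(cached_entry, example_url)
```

Deriving the URL list from the same objects keeps the two keys in the same order, which makes it easy for a client to match a URL to its points.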

<code>

[start of exercise/api/custom_serializers.py]

1 from rest_framework import serializers

2 from rest_framework.reverse import reverse

3 from course.api.serializers import CourseUsertagBriefSerializer

4 from lib.api.serializers import AlwaysListSerializer

5 from userprofile.api.serializers import UserBriefSerializer, UserListField

6 from ..cache.points import CachedPoints

7 from .full_serializers import SubmissionSerializer

8

9

10 class UserToTagSerializer(AlwaysListSerializer, CourseUsertagBriefSerializer):

11

12 class Meta(CourseUsertagBriefSerializer.Meta):

13 fields = CourseUsertagBriefSerializer.Meta.fields + (

14 'name',

15 )

16

17

18 class UserWithTagsSerializer(UserBriefSerializer):

19 tags = serializers.SerializerMethodField()

20

21 class Meta(UserBriefSerializer.Meta):

22 fields = UserBriefSerializer.Meta.fields + (

23 'tags',

24 )

25

26 def get_tags(self, obj):

27 view = self.context['view']

28 ser = UserToTagSerializer(

29 obj.taggings.tags_for_instance(view.instance),

30 context=self.context

31 )

32 return ser.data

33

34

35 class ExercisePointsSerializer(serializers.Serializer):

36

37 def to_representation(self, entry):

38 request = self.context['request']

39

40 def exercise_url(exercise_id):

41 return reverse('api:exercise-detail', kwargs={

42 'exercise_id': exercise_id,

43 }, request=request)

44

45 def submission_url(submission_id):

46 if submission_id is None:

47 return None

48 return reverse('api:submission-detail', kwargs={

49 'submission_id': submission_id

50 }, request=request)

51

52 exercise_data = {

53 'url': exercise_url(entry['id']),

54 'best_submission': submission_url(entry['best_submission']),

55 'submissions': [submission_url(s['id']) for s in entry['submissions']],

56 }

57 for key in [

58 # exercise

59 'id',

60 'name',

61 'difficulty',

62 'max_points',

63 'points_to_pass',

64 'submission_count',

65 # best submission

66 'points',

67 'passed',

68 # 'official',

69 ]:

70 exercise_data[key] = entry[key]

71 exercise_data['official'] = (entry['graded'] and

72 not entry.get('unconfirmed', False))

73 return exercise_data

74

75

76 class UserPointsSerializer(UserWithTagsSerializer):

77

78 def to_representation(self, obj):

79 rep = super().to_representation(obj)

80 view = self.context['view']

81 points = CachedPoints(view.instance, obj.user, view.content)

82 modules = []

83 for module in points.modules_flatted():

84 module_data = {}

85 for key in [

86 'id', 'name',

87 'max_points', 'points_to_pass', 'submission_count',

88 'points', 'points_by_difficulty', 'passed',

89 ]:

90 module_data[key] = module[key]

91

92 exercises = []

93 for entry in module['flatted']:

94 if entry['type'] == 'exercise' and entry['submittable']:

95 exercises.append(

96 ExercisePointsSerializer(entry, context=self.context).data

97 )

98 module_data['exercises'] = exercises

99 modules.append(module_data)

100

101 total = points.total()

102 for key in ['submission_count', 'points', 'points_by_difficulty']:

103 rep[key] = total[key]

104 rep['modules'] = modules

105

106 return rep

107

108

109 class SubmitterStatsSerializer(UserWithTagsSerializer):

110

111 def to_representation(self, obj):

112 rep = super().to_representation(obj)

113 view = self.context['view']

114 points = CachedPoints(view.instance, obj.user, view.content)

115 entry,_,_,_ = points.find(view.exercise)

116 data = ExercisePointsSerializer(entry, context=self.context).data

117 for key,value in data.items():

118 rep[key] = value

119 return rep

120

[end of exercise/api/custom_serializers.py]

[start of exercise/api/serializers.py]

1 from rest_framework import serializers

2 from rest_framework.reverse import reverse

3

4 from lib.api.fields import NestedHyperlinkedIdentityField

5 from lib.api.serializers import AplusModelSerializer, HtmlViewField

6 from userprofile.api.serializers import UserBriefSerializer

7 from ..models import Submission, SubmittedFile, BaseExercise

8

9

10 __all__ = [

11 'ExerciseBriefSerializer',

12 'SubmissionBriefSerializer',

13 'SubmittedFileBriefSerializer',

14 'SubmitterStatsBriefSerializer',

15 ]

16

17

18 class ExerciseBriefSerializer(AplusModelSerializer):

19 url = NestedHyperlinkedIdentityField(

20 view_name='api:exercise-detail',

21 lookup_map='exercise.api.views.ExerciseViewSet',

22 )

23 display_name = serializers.CharField(source='__str__')

24

25 class Meta(AplusModelSerializer.Meta):

26 model = BaseExercise

27 fields = (

28 'url',

29 'html_url',

30 'display_name',

31 'max_points',

32 'max_submissions',

33 )

34

35

36 class SubmissionBriefSerializer(AplusModelSerializer):

37 #display_name = serializers.CharField(source='__str__')

38

39 class Meta(AplusModelSerializer.Meta):

40 model = Submission

41 fields = (

42 'submission_time',

43 )

44 extra_kwargs = {

45 'url': {

46 'view_name': 'api:submission-detail',

47 'lookup_map': 'exercise.api.views.SubmissionViewSet',

48 }

49 }

50

51

52 class SubmittedFileBriefSerializer(AplusModelSerializer):

53 #url = HtmlViewField()

54 url = NestedHyperlinkedIdentityField(

55 view_name='api:submission-files-detail',

56 lookup_map='exercise.api.views.SubmissionFileViewSet',

57 )

58

59 class Meta(AplusModelSerializer.Meta):

60 model = SubmittedFile

61 fields = (

62 'url',

63 'filename',

64 'param_name',

65 )

66

67

68 class SubmitterStatsBriefSerializer(UserBriefSerializer):

69 stats = serializers.SerializerMethodField()

70

71 def get_stats(self, profile):

72 return reverse(

73 'api:exercise-submitter_stats-detail',

74 kwargs={

75 'exercise_id': self.context['view'].exercise.id,

76 'user_id': profile.user.id,

77 },

78 request=self.context['request']

79 )

80

81 class Meta(UserBriefSerializer.Meta):

82 fields = UserBriefSerializer.Meta.fields + (

83 'stats',

84 )

85

[end of exercise/api/serializers.py]

</code>

I need you to solve this issue by generating a single patch file that I can apply directly to this repository using git apply. Please respond with a single patch file in the following format.

<patch>

diff --git a/file.py b/file.py

--- a/file.py

+++ b/file.py

@@ -1,27 +1,35 @@

def euclidean(a, b):

- while b:

- a, b = b, a % b

- return a

+ if b == 0:

+ return a

+ return euclidean(b, a % b)

def bresenham(x0, y0, x1, y1):

points = []

dx = abs(x1 - x0)

dy = abs(y1 - y0)

- sx = 1 if x0 < x1 else -1

- sy = 1 if y0 < y1 else -1

- err = dx - dy

+ x, y = x0, y0

+ sx = -1 if x0 > x1 else 1

+ sy = -1 if y0 > y1 else 1

- while True:

- points.append((x0, y0))

- if x0 == x1 and y0 == y1:

- break

- e2 = 2 * err

- if e2 > -dy:

- err -= dy

- x0 += sx

- if e2 < dx:

- err += dx

- y0 += sy

+ if dx > dy:

+ err = dx / 2.0

+ while x != x1:

+ points.append((x, y))

+ err -= dy

+ if err < 0:

+ y += sy

+ err += dx

+ x += sx

+ else:

+ err = dy / 2.0

+ while y != y1:

+ points.append((x, y))

+ err -= dx

+ if err < 0:

+ x += sx

+ err += dy

+ y += sy

+

+ points.append((x, y))

return points

</patch>

|

diff --git a/exercise/api/custom_serializers.py b/exercise/api/custom_serializers.py

--- a/exercise/api/custom_serializers.py

+++ b/exercise/api/custom_serializers.py

@@ -49,10 +49,21 @@

'submission_id': submission_id

}, request=request)

+ def submission_obj(submission_cached):

+ id_ = submission_cached['id']

+ return {

+ 'id': id_,

+ 'url': submission_url(id_),

+ 'submission_time': submission_cached['date'],

+ 'grade': submission_cached['points'],

+ }

+

+ submissions = [submission_obj(s) for s in entry['submissions']]

exercise_data = {

'url': exercise_url(entry['id']),

'best_submission': submission_url(entry['best_submission']),

- 'submissions': [submission_url(s['id']) for s in entry['submissions']],

+ 'submissions': [s['url'] for s in submissions],

+ 'submissions_with_points': submissions,

}

for key in [

# exercise

diff --git a/exercise/api/serializers.py b/exercise/api/serializers.py

--- a/exercise/api/serializers.py

+++ b/exercise/api/serializers.py

@@ -40,6 +40,7 @@

model = Submission

fields = (

'submission_time',

+ 'grade',

)

extra_kwargs = {

'url': {

|

{"golden_diff": "diff --git a/exercise/api/custom_serializers.py b/exercise/api/custom_serializers.py\n--- a/exercise/api/custom_serializers.py\n+++ b/exercise/api/custom_serializers.py\n@@ -49,10 +49,21 @@\n 'submission_id': submission_id\n }, request=request)\n \n+ def submission_obj(submission_cached):\n+ id_ = submission_cached['id']\n+ return {\n+ 'id': id_,\n+ 'url': submission_url(id_),\n+ 'submission_time': submission_cached['date'],\n+ 'grade': submission_cached['points'],\n+ }\n+\n+ submissions = [submission_obj(s) for s in entry['submissions']]\n exercise_data = {\n 'url': exercise_url(entry['id']),\n 'best_submission': submission_url(entry['best_submission']),\n- 'submissions': [submission_url(s['id']) for s in entry['submissions']],\n+ 'submissions': [s['url'] for s in submissions],\n+ 'submissions_with_points': submissions,\n }\n for key in [\n # exercise\ndiff --git a/exercise/api/serializers.py b/exercise/api/serializers.py\n--- a/exercise/api/serializers.py\n+++ b/exercise/api/serializers.py\n@@ -40,6 +40,7 @@\n model = Submission\n fields = (\n 'submission_time',\n+ 'grade',\n )\n extra_kwargs = {\n 'url': {\n", "issue": "Course points API endpoint should contain points for all submissions\nThis comes from the IntelliJ project: https://github.com/Aalto-LeTech/intellij-plugin/issues/302\r\n\r\nThe `/api/v2/courses/<course-id>/points/me/` endpoint should be able to provide points for all submissions (for one student in all exercises of one course). We need to still consider if all points are always included in the output or only when some parameter is given in the request GET query parameters. All points should already be available in the points cache: https://github.com/apluslms/a-plus/blob/9e595a0a902d19bcadeeaff8b3160873b0265f43/exercise/cache/points.py#L98\r\n\r\nLet's not modify the existing submissions URL list in order to preserve backwards-compatibility. 
A new key shall be added to the output.\r\n\r\nExample snippet for the output (the existing submissions list only contains the URLs of the submissions):\r\n\r\n```\r\n\"submissions_and_points\": [\r\n {\r\n \"url\": \"https://plus.cs.aalto.fi/api/v2/submissions/123/\",\r\n \"points\": 10\r\n },\r\n {\r\n \"url\": \"https://plus.cs.aalto.fi/api/v2/submissions/456/\",\r\n \"points\": 5\r\n }\r\n]\r\n```\r\n\r\nJaakko says that it could be best to add the grade field to the existing `SubmissionBriefSerializer` class. It could be more uniform with the rest of the API.\r\n\n", "before_files": [{"content": "from rest_framework import serializers\nfrom rest_framework.reverse import reverse\nfrom course.api.serializers import CourseUsertagBriefSerializer\nfrom lib.api.serializers import AlwaysListSerializer\nfrom userprofile.api.serializers import UserBriefSerializer, UserListField\nfrom ..cache.points import CachedPoints\nfrom .full_serializers import SubmissionSerializer\n\n\nclass UserToTagSerializer(AlwaysListSerializer, CourseUsertagBriefSerializer):\n\n class Meta(CourseUsertagBriefSerializer.Meta):\n fields = CourseUsertagBriefSerializer.Meta.fields + (\n 'name',\n )\n\n\nclass UserWithTagsSerializer(UserBriefSerializer):\n tags = serializers.SerializerMethodField()\n\n class Meta(UserBriefSerializer.Meta):\n fields = UserBriefSerializer.Meta.fields + (\n 'tags',\n )\n\n def get_tags(self, obj):\n view = self.context['view']\n ser = UserToTagSerializer(\n obj.taggings.tags_for_instance(view.instance),\n context=self.context\n )\n return ser.data\n\n\nclass ExercisePointsSerializer(serializers.Serializer):\n\n def to_representation(self, entry):\n request = self.context['request']\n\n def exercise_url(exercise_id):\n return reverse('api:exercise-detail', kwargs={\n 'exercise_id': exercise_id,\n }, request=request)\n\n def submission_url(submission_id):\n if submission_id is None:\n return None\n return reverse('api:submission-detail', kwargs={\n 'submission_id': 
submission_id\n }, request=request)\n\n exercise_data = {\n 'url': exercise_url(entry['id']),\n 'best_submission': submission_url(entry['best_submission']),\n 'submissions': [submission_url(s['id']) for s in entry['submissions']],\n }\n for key in [\n # exercise\n 'id',\n 'name',\n 'difficulty',\n 'max_points',\n 'points_to_pass',\n 'submission_count',\n # best submission\n 'points',\n 'passed',\n # 'official',\n ]:\n exercise_data[key] = entry[key]\n exercise_data['official'] = (entry['graded'] and\n not entry.get('unconfirmed', False))\n return exercise_data\n\n\nclass UserPointsSerializer(UserWithTagsSerializer):\n\n def to_representation(self, obj):\n rep = super().to_representation(obj)\n view = self.context['view']\n points = CachedPoints(view.instance, obj.user, view.content)\n modules = []\n for module in points.modules_flatted():\n module_data = {}\n for key in [\n 'id', 'name',\n 'max_points', 'points_to_pass', 'submission_count',\n 'points', 'points_by_difficulty', 'passed',\n ]:\n module_data[key] = module[key]\n\n exercises = []\n for entry in module['flatted']:\n if entry['type'] == 'exercise' and entry['submittable']:\n exercises.append(\n ExercisePointsSerializer(entry, context=self.context).data\n )\n module_data['exercises'] = exercises\n modules.append(module_data)\n\n total = points.total()\n for key in ['submission_count', 'points', 'points_by_difficulty']:\n rep[key] = total[key]\n rep['modules'] = modules\n\n return rep\n\n\nclass SubmitterStatsSerializer(UserWithTagsSerializer):\n\n def to_representation(self, obj):\n rep = super().to_representation(obj)\n view = self.context['view']\n points = CachedPoints(view.instance, obj.user, view.content)\n entry,_,_,_ = points.find(view.exercise)\n data = ExercisePointsSerializer(entry, context=self.context).data\n for key,value in data.items():\n rep[key] = value\n return rep\n", "path": "exercise/api/custom_serializers.py"}, {"content": "from rest_framework import serializers\nfrom 
rest_framework.reverse import reverse\n\nfrom lib.api.fields import NestedHyperlinkedIdentityField\nfrom lib.api.serializers import AplusModelSerializer, HtmlViewField\nfrom userprofile.api.serializers import UserBriefSerializer\nfrom ..models import Submission, SubmittedFile, BaseExercise\n\n\n__all__ = [\n 'ExerciseBriefSerializer',\n 'SubmissionBriefSerializer',\n 'SubmittedFileBriefSerializer',\n 'SubmitterStatsBriefSerializer',\n]\n\n\nclass ExerciseBriefSerializer(AplusModelSerializer):\n url = NestedHyperlinkedIdentityField(\n view_name='api:exercise-detail',\n lookup_map='exercise.api.views.ExerciseViewSet',\n )\n display_name = serializers.CharField(source='__str__')\n\n class Meta(AplusModelSerializer.Meta):\n model = BaseExercise\n fields = (\n 'url',\n 'html_url',\n 'display_name',\n 'max_points',\n 'max_submissions',\n )\n\n\nclass SubmissionBriefSerializer(AplusModelSerializer):\n #display_name = serializers.CharField(source='__str__')\n\n class Meta(AplusModelSerializer.Meta):\n model = Submission\n fields = (\n 'submission_time',\n )\n extra_kwargs = {\n 'url': {\n 'view_name': 'api:submission-detail',\n 'lookup_map': 'exercise.api.views.SubmissionViewSet',\n }\n }\n\n\nclass SubmittedFileBriefSerializer(AplusModelSerializer):\n #url = HtmlViewField()\n url = NestedHyperlinkedIdentityField(\n view_name='api:submission-files-detail',\n lookup_map='exercise.api.views.SubmissionFileViewSet',\n )\n\n class Meta(AplusModelSerializer.Meta):\n model = SubmittedFile\n fields = (\n 'url',\n 'filename',\n 'param_name',\n )\n\n\nclass SubmitterStatsBriefSerializer(UserBriefSerializer):\n stats = serializers.SerializerMethodField()\n\n def get_stats(self, profile):\n return reverse(\n 'api:exercise-submitter_stats-detail',\n kwargs={\n 'exercise_id': self.context['view'].exercise.id,\n 'user_id': profile.user.id,\n },\n request=self.context['request']\n )\n\n class Meta(UserBriefSerializer.Meta):\n fields = UserBriefSerializer.Meta.fields + (\n 'stats',\n )\n", 
"path": "exercise/api/serializers.py"}]}

| 2,567 | 318 |

gh_patches_debug_35131

|

rasdani/github-patches

|

git_diff

|

tensorflow__addons-1230

|

You will be provided with a partial code base and an issue statement explaining a problem to resolve.

<issue>

RSquare TypeError: tf__update_state() got an unexpected keyword argument 'sample_weight'

**System information**

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04): ubuntu 18.04

- TensorFlow version and how it was installed (source or binary): 2.1.0 binary (conda)

- TensorFlow-Addons version and how it was installed (source or binary): 0.8.3 binary(pip)

- Python version: 3.7.6

- Is GPU used? (yes/no): yes

**Describe the bug**

The code goes wrong when I add "tfa.metrics.RSquare(dtype=tf.float32)" to model metrics.

The exception is "TypeError: tf__update_state() got an unexpected keyword argument 'sample_weight'"

I also don't see the "sample_weight" parameter, which was added as shown in #564, in the update_state() function of class RSquare in addons version 0.8.3.

Is there something wrong with my installed tensorflow and addons packages?

**Code to reproduce the issue**

Usage in my code:

``` python

model.compile(

loss='mse',

optimizer=optimizer,

metrics=['mae', 'mse', tfa.metrics.RSquare(dtype=tf.float32)]

)

```

**Other info / logs**

Include any logs or source code that would be helpful to diagnose the problem. If including tracebacks, please include the full traceback. Large logs and files should be attached.

</issue>
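
The failure mode reported in the issue can be illustrated without TensorFlow. The sketch below is an assumption-laden stand-in, not the library's code: `MeanAbsErrorNoWeights`, `MeanAbsErrorWithWeights`, and `framework_update` are hypothetical names, and `framework_update` only mimics a caller that always forwards `sample_weight` as a keyword argument, which is what the traceback in the issue suggests the training loop does. A metric whose `update_state` does not declare that parameter raises `TypeError`; one that declares `sample_weight=None` does not.

```python
class MeanAbsErrorNoWeights:
    """Hypothetical metric whose update_state lacks a sample_weight parameter."""

    def __init__(self):
        self.total = 0.0
        self.count = 0.0

    def update_state(self, y_true, y_pred):
        # Accumulate the unweighted absolute error.
        for t, p in zip(y_true, y_pred):
            self.total += abs(t - p)
            self.count += 1.0

    def result(self):
        return self.total / self.count if self.count else 0.0


class MeanAbsErrorWithWeights(MeanAbsErrorNoWeights):
    """Same metric, but update_state accepts an optional sample_weight."""

    def update_state(self, y_true, y_pred, sample_weight=None):
        # Default to a weight of 1.0 per sample when none is given.
        if sample_weight is None:
            sample_weight = [1.0] * len(y_true)
        for t, p, w in zip(y_true, y_pred, sample_weight):
            self.total += w * abs(t - p)
            self.count += w


def framework_update(metric, y_true, y_pred, sample_weight=None):
    # Assumed caller behaviour: sample_weight is always passed by keyword.
    metric.update_state(y_true, y_pred, sample_weight=sample_weight)


if __name__ == "__main__":
    ok = MeanAbsErrorWithWeights()
    framework_update(ok, [1.0, 2.0], [1.0, 4.0])
    print("weighted-capable metric:", ok.result())

    try:
        framework_update(MeanAbsErrorNoWeights(), [1.0], [1.0])
    except TypeError as exc:
        print("metric without sample_weight fails:", exc)
```

Under these assumptions, declaring `sample_weight=None` on `update_state` is enough to make the call succeed, even for a metric that treats every sample equally.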

<code>

[start of tensorflow_addons/metrics/multilabel_confusion_matrix.py]

1 # Copyright 2019 The TensorFlow Authors. All Rights Reserved.

2 #

3 # Licensed under the Apache License, Version 2.0 (the "License");

4 # you may not use this file except in compliance with the License.

5 # You may obtain a copy of the License at

6 #

7 # http://www.apache.org/licenses/LICENSE-2.0

8 #

9 # Unless required by applicable law or agreed to in writing, software

10 # distributed under the License is distributed on an "AS IS" BASIS,

11 # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 # See the License for the specific language governing permissions and

13 # limitations under the License.

14 # ==============================================================================

15 """Implements Multi-label confusion matrix scores."""

16

17 import tensorflow as tf

18 from tensorflow.keras.metrics import Metric

19 import numpy as np

20

21 from typeguard import typechecked

22 from tensorflow_addons.utils.types import AcceptableDTypes, FloatTensorLike

23

24

25 class MultiLabelConfusionMatrix(Metric):

26 """Computes Multi-label confusion matrix.

27

28 Class-wise confusion matrix is computed for the

29 evaluation of classification.

30

31 If multi-class input is provided, it will be treated

32 as multilabel data.

33

34 Consider classification problem with two classes

35 (i.e num_classes=2).

36

37 Resultant matrix `M` will be in the shape of (num_classes, 2, 2).

38

39 Every class `i` has a dedicated 2*2 matrix that contains:

40

41 - true negatives for class i in M(0,0)

42 - false positives for class i in M(0,1)

43 - false negatives for class i in M(1,0)

44 - true positives for class i in M(1,1)

45

46 ```python

47 # multilabel confusion matrix

48 y_true = tf.constant([[1, 0, 1], [0, 1, 0]],

49 dtype=tf.int32)

50 y_pred = tf.constant([[1, 0, 0],[0, 1, 1]],

51 dtype=tf.int32)

52 output = MultiLabelConfusionMatrix(num_classes=3)

53 output.update_state(y_true, y_pred)

54 print('Confusion matrix:', output.result().numpy())

55

56 # Confusion matrix: [[[1 0] [0 1]] [[1 0] [0 1]]

57 [[0 1] [1 0]]]

58

59 # if multiclass input is provided

60 y_true = tf.constant([[1, 0, 0], [0, 1, 0]],

61 dtype=tf.int32)

62 y_pred = tf.constant([[1, 0, 0],[0, 0, 1]],

63 dtype=tf.int32)

64 output = MultiLabelConfusionMatrix(num_classes=3)

65 output.update_state(y_true, y_pred)

66 print('Confusion matrix:', output.result().numpy())

67

68 # Confusion matrix: [[[1 0] [0 1]] [[1 0] [1 0]] [[1 1] [0 0]]]

69 ```

70 """

71

72 @typechecked

73 def __init__(

74 self,

75 num_classes: FloatTensorLike,

76 name: str = "Multilabel_confusion_matrix",

77 dtype: AcceptableDTypes = None,

78 **kwargs

79 ):

80 super().__init__(name=name, dtype=dtype)

81 self.num_classes = num_classes

82 self.true_positives = self.add_weight(

83 "true_positives",

84 shape=[self.num_classes],

85 initializer="zeros",

86 dtype=self.dtype,

87 )

88 self.false_positives = self.add_weight(

89 "false_positives",

90 shape=[self.num_classes],

91 initializer="zeros",

92 dtype=self.dtype,

93 )

94 self.false_negatives = self.add_weight(

95 "false_negatives",

96 shape=[self.num_classes],

97 initializer="zeros",

98 dtype=self.dtype,

99 )

100 self.true_negatives = self.add_weight(

101 "true_negatives",

102 shape=[self.num_classes],

103 initializer="zeros",

104 dtype=self.dtype,

105 )

106

107 def update_state(self, y_true, y_pred):

108 y_true = tf.cast(y_true, tf.int32)

109 y_pred = tf.cast(y_pred, tf.int32)

110 # true positive

111 true_positive = tf.math.count_nonzero(y_true * y_pred, 0)

112 # predictions sum

113 pred_sum = tf.math.count_nonzero(y_pred, 0)

114 # true labels sum

115 true_sum = tf.math.count_nonzero(y_true, 0)

116 false_positive = pred_sum - true_positive

117 false_negative = true_sum - true_positive

118 y_true_negative = tf.math.not_equal(y_true, 1)

119 y_pred_negative = tf.math.not_equal(y_pred, 1)

120 true_negative = tf.math.count_nonzero(

121 tf.math.logical_and(y_true_negative, y_pred_negative), axis=0

122 )