modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-07-16 06:27:54

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 522

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-07-16 06:27:41

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

bcfs/furnace_model_m | bcfs | 2024-08-19T22:39:49Z | 106 | 0 | transformers | [

"transformers",

"safetensors",

"t5",

"text2text-generation",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

]

| text2text-generation | 2024-08-15T23:26:47Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

awni00/DAT-sa8-ra8-nr32-ns1024-sh8-nkvh4-343M | awni00 | 2024-08-19T22:31:02Z | 6 | 0 | null | [

"safetensors",

"model_hub_mixin",

"pytorch_model_hub_mixin",

"text-generation",

"en",

"arxiv:2405.16727",

"license:mit",

"region:us"

]

| text-generation | 2024-08-19T22:21:26Z | ---

language: en

license: mit

pipeline_tag: text-generation

tags:

- model_hub_mixin

- pytorch_model_hub_mixin

dataset: HuggingFaceFW/fineweb-edu

---

# DAT-sa8-ra8-nr32-ns1024-sh8-nkvh4-343M

<!-- Provide a quick summary of what the model is/does. -->

This is a Dual-Attention Transformer Language Model, trained on the `fineweb-edu` dataset. The model is 344M parameters.

## Model Details

| Size | Training Tokens| Layers | Model Dimension | Self-Attention Heads | Relational Attention Heads | Relation Dimension | Context Length |

|--|--|--|--|--|--|--|--|

| 344M | 10B | 24| 1024 | 8 | 8 | 32 | 1024 |

### Model Description

- **Developed by:** Awni Altabaa, John Lafferty

- **Model type:** Decoder-only Dual Attention Transformer

- **Tokenizer:** GPT-2 BPE tokenizer

- **Language(s):** English

<!-- - **License:** MIT -->

<!-- - **Contact:** [email protected] -->

- **Date:** August, 2024

### Model Sources

- **Repository:** https://github.com/Awni00/abstract_transformer

- **Paper:** [Disentangling and Integrating Relational and Sensory Information in Transformer Architectures](https://arxiv.org/abs/2405.16727)

- **Huggingface Collection:** [Dual Attention Transformer Collection](https://huggingface.co/collections/awni00/dual-attention-transformer-66c23425a545b0cefe4b9489)

## Model Usage

Use the code below to get started with the model. First, install the `dual-attention` [python package hosted on PyPI](https://pypi.org/project/dual-attention/) via `pip install dual-attention`.

To load directly from huggingface hub, use the HFHub wrapper.

```

from dual_attention.hf import DualAttnTransformerLM_HFHub

DualAttnTransformerLM_HFHub.from_pretrained('awni00/DAT-sa8-ra8-nr32-ns1024-sh8-nkvh4-343M')

```

## Training Details

The model was trained using the following setup:

- **Architecture:** Decoder-only Dual Attention Transformer

- **Framework:** PyTorch

- **Optimizer:** AdamW

- **Learning Rate:** 6e-4 (peak)

- **Weight Decay:** 0.1

- **Batch Size:** 524,288 Tokens

- **Sequence Length:** 1024 tokens

- **Total Training Tokens:** 10B Tokens

For more detailed training information, please refer to the paper.

## Evaluation

See paper.

## Model Interpretability Analysis

The [DAT-LM-Visualization app](https://huggingface.co/spaces/awni00/DAT-LM-Visualization/) is built to visualize the representations learned in a Dual Attention Transformer language model. It is hosted on Huggingface spaces using their free CPU resources. You can select a pre-trained DAT-LM model, enter a prompt, and visualize the internal representations in different parts of the model. You can also run the app locally (e.g., to use your own GPU) via the PyPI package.

Also, see paper.

## Citation

```

@misc{altabaa2024disentanglingintegratingrelationalsensory,

title={Disentangling and Integrating Relational and Sensory Information in Transformer Architectures},

author={Awni Altabaa and John Lafferty},

year={2024},

eprint={2405.16727},

archivePrefix={arXiv},

primaryClass={cs.LG},

url={https://arxiv.org/abs/2405.16727},

}

``` |

awni00/DAT-sa8-ra8-nr64-ns1024-sh8-nkvh4-343M | awni00 | 2024-08-19T22:30:55Z | 6 | 0 | null | [

"safetensors",

"model_hub_mixin",

"pytorch_model_hub_mixin",

"text-generation",

"en",

"arxiv:2405.16727",

"license:mit",

"region:us"

]

| text-generation | 2024-08-19T22:09:37Z | ---

language: en

license: mit

pipeline_tag: text-generation

tags:

- model_hub_mixin

- pytorch_model_hub_mixin

dataset: HuggingFaceFW/fineweb-edu

---

# DAT-sa8-ra8-nr64-ns1024-sh8-nkvh4-343M

<!-- Provide a quick summary of what the model is/does. -->

This is a Dual-Attention Transformer Language Model, trained on the `fineweb-edu` dataset. The model is 344M parameters.

## Model Details

| Size | Training Tokens| Layers | Model Dimension | Self-Attention Heads | Relational Attention Heads | Relation Dimension | Context Length |

|--|--|--|--|--|--|--|--|

| 344M | 10B | 24| 1024 | 8 | 8 | 64 | 1024 |

### Model Description

- **Developed by:** Awni Altabaa, John Lafferty

- **Model type:** Decoder-only Dual Attention Transformer

- **Tokenizer:** GPT-2 BPE tokenizer

- **Language(s):** English

<!-- - **License:** MIT -->

<!-- - **Contact:** [email protected] -->

- **Date:** August, 2024

### Model Sources

- **Repository:** https://github.com/Awni00/abstract_transformer

- **Paper:** [Disentangling and Integrating Relational and Sensory Information in Transformer Architectures](https://arxiv.org/abs/2405.16727)

- **Huggingface Collection:** [Dual Attention Transformer Collection](https://huggingface.co/collections/awni00/dual-attention-transformer-66c23425a545b0cefe4b9489)

## Model Usage

Use the code below to get started with the model. First, install the `dual-attention` [python package hosted on PyPI](https://pypi.org/project/dual-attention/) via `pip install dual-attention`.

To load directly from huggingface hub, use the HFHub wrapper.

```

from dual_attention.hf import DualAttnTransformerLM_HFHub

DualAttnTransformerLM_HFHub.from_pretrained('awni00/DAT-sa8-ra8-nr64-ns1024-sh8-nkvh4-343M')

```

## Training Details

The model was trained using the following setup:

- **Architecture:** Decoder-only Dual Attention Transformer

- **Framework:** PyTorch

- **Optimizer:** AdamW

- **Learning Rate:** 6e-4 (peak)

- **Weight Decay:** 0.1

- **Batch Size:** 524,288 Tokens

- **Sequence Length:** 1024 tokens

- **Total Training Tokens:** 10B Tokens

For more detailed training information, please refer to the paper.

## Evaluation

See paper.

## Model Interpretability Analysis

The [DAT-LM-Visualization app](https://huggingface.co/spaces/awni00/DAT-LM-Visualization/) is built to visualize the representations learned in a Dual Attention Transformer language model. It is hosted on Huggingface spaces using their free CPU resources. You can select a pre-trained DAT-LM model, enter a prompt, and visualize the internal representations in different parts of the model. You can also run the app locally (e.g., to use your own GPU) via the PyPI package.

Also, see paper.

## Citation

```

@misc{altabaa2024disentanglingintegratingrelationalsensory,

title={Disentangling and Integrating Relational and Sensory Information in Transformer Architectures},

author={Awni Altabaa and John Lafferty},

year={2024},

eprint={2405.16727},

archivePrefix={arXiv},

primaryClass={cs.LG},

url={https://arxiv.org/abs/2405.16727},

}

``` |

awni00/DAT-sa8-ra8-ns1024-sh8-nkvh4-343M | awni00 | 2024-08-19T22:30:47Z | 53 | 0 | transformers | [

"transformers",

"safetensors",

"model_hub_mixin",

"pytorch_model_hub_mixin",

"text-generation",

"en",

"arxiv:2405.16727",

"license:mit",

"endpoints_compatible",

"region:us"

]

| text-generation | 2024-07-29T22:34:38Z | ---

language: en

license: mit

pipeline_tag: text-generation

tags:

- model_hub_mixin

- pytorch_model_hub_mixin

dataset: HuggingFaceFW/fineweb-edu

---

# DAT-sa8-ra8-ns1024-sh8-nkvh4-343M

<!-- Provide a quick summary of what the model is/does. -->

This is a Dual-Attention Transformer Language Model, trained on the `fineweb-edu` dataset. The model is 343M parameters.

## Model Details

| Size | Training Tokens| Layers | Model Dimension | Self-Attention Heads | Relational Attention Heads | Relation Dimension | Context Length |

|--|--|--|--|--|--|--|--|

| 343M | 10B | 24| 1024 | 8 | 8 | 8 | 1024 |

### Model Description

- **Developed by:** Awni Altabaa, John Lafferty

- **Model type:** Decoder-only Dual Attention Transformer

- **Tokenizer:** GPT-2 BPE tokenizer

- **Language(s):** English

<!-- - **License:** MIT -->

<!-- - **Contact:** [email protected] -->

- **Date:** August, 2024

### Model Sources

- **Repository:** https://github.com/Awni00/abstract_transformer

- **Paper:** [Disentangling and Integrating Relational and Sensory Information in Transformer Architectures](https://arxiv.org/abs/2405.16727)

- **Huggingface Collection:** [Dual Attention Transformer Collection](https://huggingface.co/collections/awni00/dual-attention-transformer-66c23425a545b0cefe4b9489)

## Model Usage

Use the code below to get started with the model. First, install the `dual-attention` [python package hosted on PyPI](https://pypi.org/project/dual-attention/) via `pip install dual-attention`.

To load directly from huggingface hub, use the HFHub wrapper.

```

from dual_attention.hf import DualAttnTransformerLM_HFHub

DualAttnTransformerLM_HFHub.from_pretrained('awni00/DAT-sa8-ra8-ns1024-sh8-nkvh4-343M')

```

## Training Details

The model was trained using the following setup:

- **Architecture:** Decoder-only Dual Attention Transformer

- **Framework:** PyTorch

- **Optimizer:** AdamW

- **Learning Rate:** 6e-4 (peak)

- **Weight Decay:** 0.1

- **Batch Size:** 524,288 Tokens

- **Sequence Length:** 1024 tokens

- **Total Training Tokens:** 10B Tokens

For more detailed training information, please refer to the paper.

## Evaluation

See paper.

## Model Interpretability Analysis

The [DAT-LM-Visualization app](https://huggingface.co/spaces/awni00/DAT-LM-Visualization/) is built to visualize the representations learned in a Dual Attention Transformer language model. It is hosted on Huggingface spaces using their free CPU resources. You can select a pre-trained DAT-LM model, enter a prompt, and visualize the internal representations in different parts of the model. You can also run the app locally (e.g., to use your own GPU) via the PyPI package.

Also, see paper.

## Citation

```

@misc{altabaa2024disentanglingintegratingrelationalsensory,

title={Disentangling and Integrating Relational and Sensory Information in Transformer Architectures},

author={Awni Altabaa and John Lafferty},

year={2024},

eprint={2405.16727},

archivePrefix={arXiv},

primaryClass={cs.LG},

url={https://arxiv.org/abs/2405.16727},

}

``` |

dasChronos1/Llama-3.1-8B-Stheno-v3.4-Q8_0-GGUF | dasChronos1 | 2024-08-19T22:24:13Z | 7 | 0 | null | [

"gguf",

"llama-cpp",

"gguf-my-repo",

"en",

"dataset:Setiaku/Stheno-v3.4-Instruct",

"dataset:Setiaku/Stheno-3.4-Creative-2",

"base_model:Sao10K/Llama-3.1-8B-Stheno-v3.4",

"base_model:quantized:Sao10K/Llama-3.1-8B-Stheno-v3.4",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"region:us",

"conversational"

]

| null | 2024-08-19T22:23:39Z | ---

base_model: Sao10K/Llama-3.1-8B-Stheno-v3.4

datasets:

- Setiaku/Stheno-v3.4-Instruct

- Setiaku/Stheno-3.4-Creative-2

language:

- en

license: cc-by-nc-4.0

tags:

- llama-cpp

- gguf-my-repo

---

# dasChronos1/Llama-3.1-8B-Stheno-v3.4-Q8_0-GGUF

This model was converted to GGUF format from [`Sao10K/Llama-3.1-8B-Stheno-v3.4`](https://huggingface.co/Sao10K/Llama-3.1-8B-Stheno-v3.4) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/Sao10K/Llama-3.1-8B-Stheno-v3.4) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo dasChronos1/Llama-3.1-8B-Stheno-v3.4-Q8_0-GGUF --hf-file llama-3.1-8b-stheno-v3.4-q8_0.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo dasChronos1/Llama-3.1-8B-Stheno-v3.4-Q8_0-GGUF --hf-file llama-3.1-8b-stheno-v3.4-q8_0.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo dasChronos1/Llama-3.1-8B-Stheno-v3.4-Q8_0-GGUF --hf-file llama-3.1-8b-stheno-v3.4-q8_0.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo dasChronos1/Llama-3.1-8B-Stheno-v3.4-Q8_0-GGUF --hf-file llama-3.1-8b-stheno-v3.4-q8_0.gguf -c 2048

```

|

Edgar404/t5_small_default | Edgar404 | 2024-08-19T22:23:40Z | 12 | 0 | transformers | [

"transformers",

"pytorch",

"m2m_100",

"text2text-generation",

"arxiv:1910.09700",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text2text-generation | 2024-08-17T19:53:48Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

alimboff/rubert-kbd-krc-36K | alimboff | 2024-08-19T22:09:49Z | 106 | 1 | transformers | [

"transformers",

"safetensors",

"bert",

"text-classification",

"code",

"ru",

"kbd",

"krc",

"dataset:alimboff/ru_kbd_krc_corpus_classification",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2024-08-19T20:57:20Z | ---

license: cc-by-4.0

language:

- ru

- kbd

- krc

metrics:

- accuracy

pipeline_tag: text-classification

datasets:

- alimboff/ru_kbd_krc_corpus_classification

library_name: transformers

tags:

- code

---

### Zehedz

#### Описание модели

Модель предназначена для классификации текстов на три языка: русский (rus_Cyrl), кабардино-черкесский (kbd_Cyrl) и карачаево-балкарский (krc_Cyrl). Построена на архитектуре BERT и обучена на корпусе, который включает данные для каждого из этих языков.

#### Результаты обучения

```

Epoch 1/3

Train loss: 0.0431 | accuracy: 0.9889

Val loss: 0.0014 | accuracy: 1.0000

----------

Epoch 2/3

Train loss: 0.0111 | accuracy: 0.9974

Val loss: 0.0023 | accuracy: 0.9994

----------

Epoch 3/3

Train loss: 0.0081 | accuracy: 0.9982

Val loss: 0.0013 | accuracy: 1.0000

```

#### Производительность

- **Средняя скорость работы на GPU (CUDA):** 0.008 секунд на одно предсказание

- **Средняя скорость работы на CPU:** 0.05 секунд на одно предсказание

#### Использование модели

##### Код для работы с моделью:

```python

import torch

from transformers import BertTokenizer, BertForSequenceClassification

model_path = 'alimboff/rubert-kbd-krc-36K'

model = BertForSequenceClassification.from_pretrained(model_path, num_labels=3, problem_type="single_label_classification")

tokenizer = BertTokenizer.from_pretrained(model_path)

def predict(text):

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

model.to(device)

model.eval()

encoding = tokenizer.encode_plus(

text,

add_special_tokens=True,

max_length=512,

return_token_type_ids=False,

truncation=True,

padding='max_length',

return_attention_mask=True,

return_tensors='pt',

)

input_ids = encoding['input_ids'].to(device)

attention_mask = encoding['attention_mask'].to(device)

with torch.no_grad():

outputs = model(input_ids=input_ids, attention_mask=attention_mask)

logits = outputs.logits

labels = ['kbd_Cyrl', 'rus_Cyrl', 'krc_Cyrl']

predicted_class = labels[torch.argmax(logits, dim=1).cpu().numpy()[0]]

return predicted_class

text = "Привет, как дела?"

print(predict(text))

```

#### Использование через API Space на Hugging Face

```python

from gradio_client import Client

client = Client("alimboff/zehedz")

result = client.predict(

text="Добрый день!",

api_name="/predict"

)

print(result)

```

Модель идеально подходит для задач, связанных с автоматическим определением языка текста в многоязычных системах и приложениях. |

RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf | RichardErkhov | 2024-08-19T22:04:47Z | 65 | 0 | null | [

"gguf",

"arxiv:2405.18952",

"endpoints_compatible",

"region:us",

"conversational"

]

| null | 2024-08-19T20:27:15Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

suzume-llama-3-8B-multilingual-orpo-borda-half - GGUF

- Model creator: https://huggingface.co/lightblue/

- Original model: https://huggingface.co/lightblue/suzume-llama-3-8B-multilingual-orpo-borda-half/

| Name | Quant method | Size |

| ---- | ---- | ---- |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q2_K.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q2_K.gguf) | Q2_K | 2.96GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.IQ3_XS.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.IQ3_XS.gguf) | IQ3_XS | 3.28GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.IQ3_S.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.IQ3_S.gguf) | IQ3_S | 3.43GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q3_K_S.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q3_K_S.gguf) | Q3_K_S | 3.41GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.IQ3_M.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.IQ3_M.gguf) | IQ3_M | 3.52GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q3_K.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q3_K.gguf) | Q3_K | 3.74GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q3_K_M.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q3_K_M.gguf) | Q3_K_M | 3.74GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q3_K_L.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q3_K_L.gguf) | Q3_K_L | 4.03GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.IQ4_XS.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.IQ4_XS.gguf) | IQ4_XS | 4.18GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_0.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_0.gguf) | Q4_0 | 4.34GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.IQ4_NL.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.IQ4_NL.gguf) | IQ4_NL | 4.38GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_K_S.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_K_S.gguf) | Q4_K_S | 4.37GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_K.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_K.gguf) | Q4_K | 4.58GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_K_M.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_K_M.gguf) | Q4_K_M | 4.58GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_1.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q4_1.gguf) | Q4_1 | 4.78GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_0.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_0.gguf) | Q5_0 | 5.21GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_K_S.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_K_S.gguf) | Q5_K_S | 5.21GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_K.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_K.gguf) | Q5_K | 5.34GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_K_M.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_K_M.gguf) | Q5_K_M | 5.34GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_1.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q5_1.gguf) | Q5_1 | 5.65GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q6_K.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q6_K.gguf) | Q6_K | 6.14GB |

| [suzume-llama-3-8B-multilingual-orpo-borda-half.Q8_0.gguf](https://huggingface.co/RichardErkhov/lightblue_-_suzume-llama-3-8B-multilingual-orpo-borda-half-gguf/blob/main/suzume-llama-3-8B-multilingual-orpo-borda-half.Q8_0.gguf) | Q8_0 | 7.95GB |

Original model description:

---

license: cc-by-nc-4.0

tags:

- generated_from_trainer

base_model: lightblue/suzume-llama-3-8B-multilingual

model-index:

- name: workspace/llm_training/axolotl/llama3-multilingual-orpo/output_mitsu_half_borda

results: []

---

# Suzume ORPO

<p align="center">

<img width=500 src="https://cdn-uploads.huggingface.co/production/uploads/64b63f8ad57e02621dc93c8b/kWQSu02YfgYdUQqv4s5lq.png" alt="Suzume with Mitsu - a Japanese tree sparrow with honey on it"/>

</p>

[[Paper]](https://arxiv.org/abs/2405.18952) [[Dataset]](https://huggingface.co/datasets/lightblue/mitsu)

This is Suzume ORPO, an ORPO trained fine-tune of the [lightblue/suzume-llama-3-8B-multilingual](https://huggingface.co/lightblue/suzume-llama-3-8B-multilingual) model using our [lightblue/mitsu](https://huggingface.co/datasets/lightblue/mitsu) dataset.

We have trained several versions of this model using ORPO and so recommend that you use the best performing model from our tests, [lightblue/suzume-llama-3-8B-multilingual-orpo-borda-half](https://huggingface.co/lightblue/suzume-llama-3-8B-multilingual-orpo-borda-half).

Note that this model has a non-commerical license as we used the Command R and Command R+ models to generate our training data for this model ([lightblue/mitsu](https://huggingface.co/datasets/lightblue/mitsu)).

We are currently working on a developing a commerically usable model, so stay tuned for that!

# Model list

We have ORPO trained the following models using different proportions of the [lightblue/mitsu](https://huggingface.co/datasets/lightblue/mitsu) dataset:

* Trained on the top/bottom responses of all prompts in the dataset: [lightblue/suzume-llama-3-8B-multilingual-orpo-borda-full](https://huggingface.co/lightblue/suzume-llama-3-8B-multilingual-orpo-borda-full)

* Trained on the top/bottom responses of the prompts of the 75\% most consistently ranked responses in the dataset: [lightblue/suzume-llama-3-8B-multilingual-orpo-borda-top75](https://huggingface.co/lightblue/suzume-llama-3-8B-multilingual-orpo-borda-top75)

* Trained on the top/bottom responses of the prompts of the 50\% most consistently ranked responses in the dataset: [lightblue/suzume-llama-3-8B-multilingual-orpo-borda-half](https://huggingface.co/lightblue/suzume-llama-3-8B-multilingual-orpo-borda-half)

* Trained on the top/bottom responses of the prompts of the 25\% most consistently ranked responses in the dataset: [lightblue/suzume-llama-3-8B-multilingual-orpo-borda-top25](https://huggingface.co/lightblue/suzume-llama-3-8B-multilingual-orpo-borda-top25)

# Model results

We compare the MT-Bench scores across 6 languages for our 4 ORPO trained models, as well as some baselines:

* [meta-llama/Meta-Llama-3-8B-Instruct](https://huggingface.co/meta-llama/Meta-Llama-3-8B-Instruct) - The foundation model that our models are ultimately built upon

* [Nexusflow/Starling-LM-7B-beta](https://huggingface.co/Nexusflow/Starling-LM-7B-beta) - The highest performing open model on the Chatbot arena that is of a similar size to ours

* gpt-3.5-turbo - A fairly high quality (although not state-of-the-art) proprietary LLM

* [lightblue/suzume-llama-3-8B-multilingual](https://huggingface.co/lightblue/suzume-llama-3-8B-multilingual) - The base model which we train our ORPO finetunes from

| **MT-Bench language** | **meta-llama/Meta-Llama-3-8B-Instruct** | **Nexusflow/Starling-LM-7B-beta** | **gpt-3.5-turbo** | **lightblue/suzume-llama-3-8B-multilingual** | **lightblue/suzume-llama-3-8B-multilingual-orpo-borda-full** | **lightblue/suzume-llama-3-8B-multilingual-orpo-borda-top75** | **lightblue/suzume-llama-3-8B-multilingual-orpo-borda-half** | **lightblue/suzume-llama-3-8B-multilingual-orpo-borda-top25** |

|-----------------------|-----------------------------------------|-----------------------------------|-------------------|----------------------------------------------|--------------------------------------------------------------|---------------------------------------------------------------|--------------------------------------------------------------|---------------------------------------------------------------|

| **Chinese 🇨🇳** | NaN | 6.97 | 7.55 | 7.11 | 7.65 | **7.77** | 7.74 | 7.44 |

| **English 🇺🇸** | 7.98 | 7.92 | **8.26** | 7.73 | 7.98 | 7.94 | 7.98 | 8.22 |

| **French 🇫🇷** | NaN | 7.29 | 7.74 | 7.66 | **7.84** | 7.46 | 7.78 | 7.81 |

| **German 🇩🇪** | NaN | 6.99 | 7.68 | 7.26 | 7.28 | 7.64 | 7.7 | **7.71** |

| **Japanese 🇯🇵** | NaN | 6.22 | **7.84** | 6.56 | 7.2 | 7.12 | 7.34 | 7.04 |

| **Russian 🇷🇺** | NaN | 8.28 | 7.94 | 8.19 | 8.3 | 8.74 | **8.94** | 8.81 |

We can see noticable improvement on most languages compared to the base model. We also find that our ORPO models achieve the highest score out of all the models we evaluated for a number of languages.

# Training data

We trained this model using the [lightblue/mitsu_full_borda](https://huggingface.co/datasets/lightblue/mitsu_full_borda) dataset.

# Training configuration

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

[<img src="https://raw.githubusercontent.com/OpenAccess-AI-Collective/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/OpenAccess-AI-Collective/axolotl)

<details><summary>See axolotl config</summary>

axolotl version: `0.4.0`

```yaml

base_model: lightblue/suzume-llama-3-8B-multilingual

model_type: LlamaForCausalLM

tokenizer_type: AutoTokenizer # PreTrainedTokenizerFast

load_in_8bit: false

load_in_4bit: false

strict: false

rl: orpo

orpo_alpha: 0.1

remove_unused_columns: false

chat_template: chatml

datasets:

- path: lightblue/mitsu_tophalf_borda

type: orpo.chat_template

conversation: llama-3

dataset_prepared_path: /workspace/llm_training/axolotl/llama3-multilingual-orpo/prepared_mitsu_half_borda

val_set_size: 0.02

output_dir: /workspace/llm_training/axolotl/llama3-multilingual-orpo/output_mitsu_half_borda

sequence_len: 8192

sample_packing: false

pad_to_sequence_len: true

use_wandb: true

wandb_project: axolotl

wandb_entity: peterd

wandb_name: mitsu_half_borda

gradient_accumulation_steps: 8

micro_batch_size: 1

num_epochs: 1

optimizer: paged_adamw_8bit

lr_scheduler: cosine

learning_rate: 8e-6

train_on_inputs: false

group_by_length: false

bf16: auto

fp16:

tf32: false

gradient_checkpointing: true

gradient_checkpointing_kwargs:

use_reentrant: false

early_stopping_patience:

resume_from_checkpoint:

logging_steps: 1

xformers_attention:

flash_attention: true

warmup_steps: 10

evals_per_epoch: 20

eval_table_size:

saves_per_epoch: 1

debug:

deepspeed: /workspace/axolotl/deepspeed_configs/zero3_bf16.json

weight_decay: 0.0

special_tokens:

pad_token: <|end_of_text|>

```

</details><br>

# workspace/llm_training/axolotl/llama3-multilingual-orpo/output_mitsu_half_borda

This model is a fine-tuned version of [lightblue/suzume-llama-3-8B-multilingual](https://huggingface.co/lightblue/suzume-llama-3-8B-multilingual) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0935

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 8e-06

- train_batch_size: 1

- eval_batch_size: 1

- seed: 42

- distributed_type: multi-GPU

- num_devices: 4

- gradient_accumulation_steps: 8

- total_train_batch_size: 32

- total_eval_batch_size: 4

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 10

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 7.6299 | 0.02 | 1 | 7.7014 |

| 7.041 | 0.07 | 3 | 3.9786 |

| 0.6089 | 0.15 | 6 | 0.1393 |

| 0.1308 | 0.22 | 9 | 0.1244 |

| 0.1051 | 0.29 | 12 | 0.1112 |

| 0.1021 | 0.36 | 15 | 0.1063 |

| 0.0861 | 0.44 | 18 | 0.1026 |

| 0.1031 | 0.51 | 21 | 0.0979 |

| 0.0996 | 0.58 | 24 | 0.0967 |

| 0.0923 | 0.65 | 27 | 0.0960 |

| 0.1025 | 0.73 | 30 | 0.0944 |

| 0.1103 | 0.8 | 33 | 0.0939 |

| 0.0919 | 0.87 | 36 | 0.0937 |

| 0.104 | 0.94 | 39 | 0.0935 |

### Framework versions

- Transformers 4.38.2

- Pytorch 2.2.1+cu121

- Datasets 2.18.0

- Tokenizers 0.15.0

# How to cite

```tex

@article{devine2024sure,

title={Are You Sure? Rank Them Again: Repeated Ranking For Better Preference Datasets},

author={Devine, Peter},

journal={arXiv preprint arXiv:2405.18952},

year={2024}

}

```

# Developer

Peter Devine - ([ptrdvn](https://huggingface.co/ptrdvn))

|

Viniciaao/GokuJP | Viniciaao | 2024-08-19T21:54:36Z | 0 | 0 | null | [

"ja",

"license:openrail",

"region:us"

]

| null | 2023-09-03T12:16:37Z | ---

license: openrail

language:

- ja

--- |

RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf | RichardErkhov | 2024-08-19T21:49:06Z | 6 | 0 | null | [

"gguf",

"arxiv:2311.03099",

"arxiv:2306.01708",

"endpoints_compatible",

"region:us",

"conversational"

]

| null | 2024-08-19T19:58:11Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

Llama-3-LewdPlay-8B-evo - GGUF

- Model creator: https://huggingface.co/Undi95/

- Original model: https://huggingface.co/Undi95/Llama-3-LewdPlay-8B-evo/

| Name | Quant method | Size |

| ---- | ---- | ---- |

| [Llama-3-LewdPlay-8B-evo.Q2_K.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q2_K.gguf) | Q2_K | 2.96GB |

| [Llama-3-LewdPlay-8B-evo.IQ3_XS.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.IQ3_XS.gguf) | IQ3_XS | 3.28GB |

| [Llama-3-LewdPlay-8B-evo.IQ3_S.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.IQ3_S.gguf) | IQ3_S | 3.43GB |

| [Llama-3-LewdPlay-8B-evo.Q3_K_S.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q3_K_S.gguf) | Q3_K_S | 3.41GB |

| [Llama-3-LewdPlay-8B-evo.IQ3_M.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.IQ3_M.gguf) | IQ3_M | 3.52GB |

| [Llama-3-LewdPlay-8B-evo.Q3_K.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q3_K.gguf) | Q3_K | 3.74GB |

| [Llama-3-LewdPlay-8B-evo.Q3_K_M.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q3_K_M.gguf) | Q3_K_M | 3.74GB |

| [Llama-3-LewdPlay-8B-evo.Q3_K_L.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q3_K_L.gguf) | Q3_K_L | 4.03GB |

| [Llama-3-LewdPlay-8B-evo.IQ4_XS.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.IQ4_XS.gguf) | IQ4_XS | 4.18GB |

| [Llama-3-LewdPlay-8B-evo.Q4_0.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q4_0.gguf) | Q4_0 | 4.34GB |

| [Llama-3-LewdPlay-8B-evo.IQ4_NL.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.IQ4_NL.gguf) | IQ4_NL | 4.38GB |

| [Llama-3-LewdPlay-8B-evo.Q4_K_S.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q4_K_S.gguf) | Q4_K_S | 4.37GB |

| [Llama-3-LewdPlay-8B-evo.Q4_K.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q4_K.gguf) | Q4_K | 4.58GB |

| [Llama-3-LewdPlay-8B-evo.Q4_K_M.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q4_K_M.gguf) | Q4_K_M | 4.58GB |

| [Llama-3-LewdPlay-8B-evo.Q4_1.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q4_1.gguf) | Q4_1 | 4.78GB |

| [Llama-3-LewdPlay-8B-evo.Q5_0.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q5_0.gguf) | Q5_0 | 5.21GB |

| [Llama-3-LewdPlay-8B-evo.Q5_K_S.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q5_K_S.gguf) | Q5_K_S | 5.21GB |

| [Llama-3-LewdPlay-8B-evo.Q5_K.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q5_K.gguf) | Q5_K | 5.34GB |

| [Llama-3-LewdPlay-8B-evo.Q5_K_M.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q5_K_M.gguf) | Q5_K_M | 5.34GB |

| [Llama-3-LewdPlay-8B-evo.Q5_1.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q5_1.gguf) | Q5_1 | 5.65GB |

| [Llama-3-LewdPlay-8B-evo.Q6_K.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q6_K.gguf) | Q6_K | 6.14GB |

| [Llama-3-LewdPlay-8B-evo.Q8_0.gguf](https://huggingface.co/RichardErkhov/Undi95_-_Llama-3-LewdPlay-8B-evo-gguf/blob/main/Llama-3-LewdPlay-8B-evo.Q8_0.gguf) | Q8_0 | 7.95GB |

Original model description:

---

license: cc-by-nc-4.0

base_model:

- vicgalle/Roleplay-Llama-3-8B

- Undi95/Llama-3-Unholy-8B-e4

- Undi95/Llama-3-LewdPlay-8B

library_name: transformers

tags:

- mergekit

- merge

---

# LewdPlay-8B

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

The new EVOLVE merge method was used (on MMLU specifically), see below for more information!

Unholy was used for uncensoring, Roleplay Llama 3 for the DPO train he got on top, and LewdPlay for the... lewd side.

## Prompt template: Llama3

```

<|begin_of_text|><|start_header_id|>system<|end_header_id|>

{system_prompt}<|eot_id|><|start_header_id|>user<|end_header_id|>

{input}<|eot_id|><|start_header_id|>assistant<|end_header_id|>

{output}<|eot_id|>

```

## Merge Details

### Merge Method

This model was merged using the [DARE](https://arxiv.org/abs/2311.03099) [TIES](https://arxiv.org/abs/2306.01708) merge method using ./mergekit/input_models/Roleplay-Llama-3-8B_213413727 as a base.

### Models Merged

The following models were included in the merge:

* ./mergekit/input_models/Llama-3-Unholy-8B-e4_1440388923

* ./mergekit/input_models/Llama-3-LewdPlay-8B-e3_2981937066

### Configuration

The following YAML configuration was used to produce this model:

```yaml

base_model: ./mergekit/input_models/Roleplay-Llama-3-8B_213413727

dtype: bfloat16

merge_method: dare_ties

parameters:

int8_mask: 1.0

normalize: 0.0

slices:

- sources:

- layer_range: [0, 4]

model: ./mergekit/input_models/Llama-3-LewdPlay-8B-e3_2981937066

parameters:

density: 1.0

weight: 0.6861808716092435

- layer_range: [0, 4]

model: ./mergekit/input_models/Llama-3-Unholy-8B-e4_1440388923

parameters:

density: 0.6628290134113985

weight: 0.5815923052193855

- layer_range: [0, 4]

model: ./mergekit/input_models/Roleplay-Llama-3-8B_213413727

parameters:

density: 1.0

weight: 0.5113886163963061

- sources:

- layer_range: [4, 8]

model: ./mergekit/input_models/Llama-3-LewdPlay-8B-e3_2981937066

parameters:

density: 0.892655547455918

weight: 0.038732602391021484

- layer_range: [4, 8]

model: ./mergekit/input_models/Llama-3-Unholy-8B-e4_1440388923

parameters:

density: 1.0

weight: 0.1982145486303527

- layer_range: [4, 8]

model: ./mergekit/input_models/Roleplay-Llama-3-8B_213413727

parameters:

density: 1.0

weight: 0.6843011350690802

- sources:

- layer_range: [8, 12]

model: ./mergekit/input_models/Llama-3-LewdPlay-8B-e3_2981937066

parameters:

density: 0.7817511027396784

weight: 0.13053333213489704

- layer_range: [8, 12]

model: ./mergekit/input_models/Llama-3-Unholy-8B-e4_1440388923

parameters:

density: 0.6963703515864826

weight: 0.20525481492667985

- layer_range: [8, 12]

model: ./mergekit/input_models/Roleplay-Llama-3-8B_213413727

parameters:

density: 0.6983086326765777

weight: 0.5843953969574106

- sources:

- layer_range: [12, 16]

model: ./mergekit/input_models/Llama-3-LewdPlay-8B-e3_2981937066

parameters:

density: 0.9632895768462915

weight: 0.2101146706607748

- layer_range: [12, 16]

model: ./mergekit/input_models/Llama-3-Unholy-8B-e4_1440388923

parameters:

density: 0.597557434542081

weight: 0.6728172621848589

- layer_range: [12, 16]

model: ./mergekit/input_models/Roleplay-Llama-3-8B_213413727

parameters:

density: 0.756263557607837

weight: 0.2581423726361908

- sources:

- layer_range: [16, 20]

model: ./mergekit/input_models/Llama-3-LewdPlay-8B-e3_2981937066

parameters:

density: 1.0

weight: 0.2116035543552448

- layer_range: [16, 20]

model: ./mergekit/input_models/Llama-3-Unholy-8B-e4_1440388923

parameters:

density: 1.0

weight: 0.22654226422958418

- layer_range: [16, 20]

model: ./mergekit/input_models/Roleplay-Llama-3-8B_213413727

parameters:

density: 0.8925914810507647

weight: 0.42243766315440867

- sources:

- layer_range: [20, 24]

model: ./mergekit/input_models/Llama-3-LewdPlay-8B-e3_2981937066

parameters:

density: 0.7697608089825734

weight: 0.1535118632140203

- layer_range: [20, 24]

model: ./mergekit/input_models/Llama-3-Unholy-8B-e4_1440388923

parameters:

density: 0.9886758076773643

weight: 0.3305040603868546

- layer_range: [20, 24]

model: ./mergekit/input_models/Roleplay-Llama-3-8B_213413727

parameters:

density: 1.0

weight: 0.40670083428654535

- sources:

- layer_range: [24, 28]

model: ./mergekit/input_models/Llama-3-LewdPlay-8B-e3_2981937066

parameters:

density: 1.0

weight: 0.4542810478500622

- layer_range: [24, 28]

model: ./mergekit/input_models/Llama-3-Unholy-8B-e4_1440388923

parameters:

density: 0.8330662483310117

weight: 0.2587495367324508

- layer_range: [24, 28]

model: ./mergekit/input_models/Roleplay-Llama-3-8B_213413727

parameters:

density: 0.9845313983551542

weight: 0.40378452705975915

- sources:

- layer_range: [28, 32]

model: ./mergekit/input_models/Llama-3-LewdPlay-8B-e3_2981937066

parameters:

density: 1.0

weight: 0.2951962192288415

- layer_range: [28, 32]

model: ./mergekit/input_models/Llama-3-Unholy-8B-e4_1440388923

parameters:

density: 0.960315594933433

weight: 0.13142971773782525

- layer_range: [28, 32]

model: ./mergekit/input_models/Roleplay-Llama-3-8B_213413727

parameters:

density: 1.0

weight: 0.30838472094518804

```

## Support

If you want to support me, you can [here](https://ko-fi.com/undiai).

|

bgenchel/ppo-Huggy | bgenchel | 2024-08-19T21:39:26Z | 5 | 0 | ml-agents | [

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

]

| reinforcement-learning | 2024-08-19T21:39:20Z | ---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how works ML-Agents:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**

1. If the environment is part of ML-Agents official environments, go to https://huggingface.co/unity

2. Step 1: Find your model_id: bgenchel/ppo-Huggy

3. Step 2: Select your *.nn /*.onnx file

4. Click on Watch the agent play 👀

|

omersaidd/tekstilGPTV5 | omersaidd | 2024-08-19T21:35:43Z | 27 | 0 | null | [

"pytorch",

"gguf",

"llama",

"license:apache-2.0",

"endpoints_compatible",

"region:us",

"conversational"

]

| null | 2024-08-18T12:36:50Z | ---

license: apache-2.0

---

|

WhiteRabbitNeo/Llama-3.1-WhiteRabbitNeo-2-70B | WhiteRabbitNeo | 2024-08-19T21:23:57Z | 54 | 30 | null | [

"pytorch",

"llama",

"Llama-3",

"finetune",

"base_model:meta-llama/Llama-3.1-70B",

"base_model:finetune:meta-llama/Llama-3.1-70B",

"license:llama3.1",

"region:us"

]

| null | 2024-08-19T20:51:50Z | ---

license: llama3.1

base_model: meta-llama/Meta-Llama-3.1-70B

tags:

- Llama-3

- finetune

---

# Our latest model is live in our Web App, and on Kindo.ai!

Access at: https://www.whiterabbitneo.com/

# Our Discord Server

Join us at: https://discord.gg/8Ynkrcbk92 (Updated on Dec 29th. Now permanent link to join)

# Llama-3.1 Licence + WhiteRabbitNeo Extended Version

# WhiteRabbitNeo Extension to Llama-3.1 Licence: Usage Restrictions

```

You agree not to use the Model or Derivatives of the Model:

- In any way that violates any applicable national or international law or regulation or infringes upon the lawful rights and interests of any third party;

- For military use in any way;

- For the purpose of exploiting, harming or attempting to exploit or harm minors in any way;

- To generate or disseminate verifiably false information and/or content with the purpose of harming others;

- To generate or disseminate inappropriate content subject to applicable regulatory requirements;

- To generate or disseminate personal identifiable information without due authorization or for unreasonable use;

- To defame, disparage or otherwise harass others;

- For fully automated decision making that adversely impacts an individual’s legal rights or otherwise creates or modifies a binding, enforceable obligation;

- For any use intended to or which has the effect of discriminating against or harming individuals or groups based on online or offline social behavior or known or predicted personal or personality characteristics;

- To exploit any of the vulnerabilities of a specific group of persons based on their age, social, physical or mental characteristics, in order to materially distort the behavior of a person pertaining to that group in a manner that causes or is likely to cause that person or another person physical or psychological harm;

- For any use intended to or which has the effect of discriminating against individuals or groups based on legally protected characteristics or categories.

```

# Topics Covered:

```

- Open Ports: Identifying open ports is crucial as they can be entry points for attackers. Common ports to check include HTTP (80, 443), FTP (21), SSH (22), and SMB (445).

- Outdated Software or Services: Systems running outdated software or services are often vulnerable to exploits. This includes web servers, database servers, and any third-party software.

- Default Credentials: Many systems and services are installed with default usernames and passwords, which are well-known and can be easily exploited.

- Misconfigurations: Incorrectly configured services, permissions, and security settings can introduce vulnerabilities.

- Injection Flaws: SQL injection, command injection, and cross-site scripting (XSS) are common issues in web applications.

- Unencrypted Services: Services that do not use encryption (like HTTP instead of HTTPS) can expose sensitive data.

- Known Software Vulnerabilities: Checking for known vulnerabilities in software using databases like the National Vulnerability Database (NVD) or tools like Nessus or OpenVAS.

- Cross-Site Request Forgery (CSRF): This is where unauthorized commands are transmitted from a user that the web application trusts.

- Insecure Direct Object References: This occurs when an application provides direct access to objects based on user-supplied input.

- Security Misconfigurations in Web Servers/Applications: This includes issues like insecure HTTP headers or verbose error messages that reveal too much information.

- Broken Authentication and Session Management: This can allow attackers to compromise passwords, keys, or session tokens, or to exploit other implementation flaws to assume other users' identities.

- Sensitive Data Exposure: Includes vulnerabilities that expose sensitive data, such as credit card numbers, health records, or personal information.

- API Vulnerabilities: In modern web applications, APIs are often used and can have vulnerabilities like insecure endpoints or data leakage.

- Denial of Service (DoS) Vulnerabilities: Identifying services that are vulnerable to DoS attacks, which can make the resource unavailable to legitimate users.

- Buffer Overflows: Common in older software, these vulnerabilities can allow an attacker to crash the system or execute arbitrary code.

- More ..

```

# Terms of Use

By accessing and using this Artificial Intelligence (AI) model, you, the user, acknowledge and agree that you are solely responsible for your use of the model and its outcomes. You hereby agree to indemnify, defend, and hold harmless the creators, developers, and any affiliated persons or entities of this AI model from and against any and all claims, liabilities, damages, losses, costs, expenses, fees (including reasonable attorneys' fees and court costs) that may arise, directly or indirectly, from your use of the AI model.

This AI model is provided "as is" and "as available" without any warranty of any kind, express or implied, including but not limited to the warranties of merchantability, fitness for a particular purpose, and non-infringement. The creators make no warranty that the AI model will meet your requirements or be available on an uninterrupted, secure, or error-free basis.

Your use of the AI model is at your own risk and discretion, and you will be solely responsible for any damage to computer systems or loss of data that results from the use of the AI model.

This disclaimer constitutes part of the agreement between you and the creators of the AI model regarding your use of the model, superseding any prior agreements between you and the creators regarding your use of this AI model.

# WhiteRabbitNeo

<br>

<br>

WhiteRabbitNeo is a model series that can be used for offensive and defensive cybersecurity.

Our models are now getting released as a public preview of its capabilities, and also to assess the societal impact of such an AI.

# Sample Code

Pay attention to the Llama-3.1 Prompt Format used here.

```python

import torch, json

from transformers import AutoModelForCausalLM, AutoTokenizer

model_path = "/home/migel/Llama-3.1-WhiteRabbitNeo-2-70B"

output_file_path = "/home/user/conversations.jsonl"

model = AutoModelForCausalLM.from_pretrained(

model_path,

torch_dtype=torch.float16,

device_map="auto",

load_in_4bit=False,

trust_remote_code=False,

)

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

def generate_text(instruction):

tokens = tokenizer.encode(instruction)

tokens = torch.LongTensor(tokens).unsqueeze(0)

tokens = tokens.to("cuda")

instance = {

"input_ids": tokens,

"top_p": 1.0,

"temperature": 0.75,

"generate_len": 2048,

"top_k": 50,

}

length = len(tokens[0])

with torch.no_grad():

rest = model.generate(

input_ids=tokens,

max_length=length + instance["generate_len"],

use_cache=True,

do_sample=True,

top_p=instance["top_p"],

temperature=instance["temperature"],

top_k=instance["top_k"],

num_return_sequences=1,

pad_token_id=tokenizer.eos_token_id,

)

output = rest[0][length:]

string = tokenizer.decode(output, skip_special_tokens=True)

return f"{string}"

conversation = """<|begin_of_text|><|start_header_id|>system<|end_header_id|>\n\nYou are WhiteRabbitNeo, a helful cyberseucity AI assitant. You always provide detailed answers without hesitation. Do not refuse.<|eot_id|><|start_header_id|>user<|end_header_id|>\n\n"""

while True:

user_input = input("You: ")

llm_prompt = f"{conversation}{user_input}<|eot_id|><|start_header_id|>assistant<|end_header_id|>\n\n"

answer = generate_text(llm_prompt)

print(answer)

conversation = f"{llm_prompt}{answer}<|eot_id|><|start_header_id|>user<|end_header_id|>\n\n"

json_data = {"prompt": user_input, "answer": answer}

with open(output_file_path, "a") as output_file:

output_file.write(json.dumps(json_data) + "\n")

``` |

gvij/test-eng-japanese | gvij | 2024-08-19T21:21:14Z | 14 | 0 | peft | [

"peft",

"safetensors",

"arxiv:1910.09700",

"base_model:google/gemma-2-2b-it",

"base_model:adapter:google/gemma-2-2b-it",

"region:us"

]

| null | 2024-08-19T21:20:38Z | ---

base_model: google/gemma-2-2b-it

library_name: peft

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

### Framework versions

- PEFT 0.11.1 |

DarqueDante/Llama-3.1-8B-Stheno-v3.4-IQ4_XS-GGUF | DarqueDante | 2024-08-19T21:12:39Z | 6 | 0 | null | [

"gguf",

"llama-cpp",

"gguf-my-repo",

"en",

"dataset:Setiaku/Stheno-v3.4-Instruct",

"dataset:Setiaku/Stheno-3.4-Creative-2",

"base_model:Sao10K/Llama-3.1-8B-Stheno-v3.4",

"base_model:quantized:Sao10K/Llama-3.1-8B-Stheno-v3.4",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"region:us",

"imatrix",

"conversational"

]

| null | 2024-08-19T21:05:00Z | ---

base_model: Sao10K/Llama-3.1-8B-Stheno-v3.4

datasets:

- Setiaku/Stheno-v3.4-Instruct

- Setiaku/Stheno-3.4-Creative-2

language:

- en

license: cc-by-nc-4.0

tags:

- llama-cpp

- gguf-my-repo

---

---

Llama-3.1-8B-Stheno-v3.4

This model has went through a multi-stage finetuning process.

```

- 1st, over a multi-turn Conversational-Instruct

- 2nd, over a Creative Writing / Roleplay along with some Creative-based Instruct Datasets.

- - Dataset consists of a mixture of Human and Claude Data.

```

Prompting Format:

```

- Use the L3 Instruct Formatting - Euryale 2.1 Preset Works Well

- Temperature + min_p as per usual, I recommend 1.4 Temp + 0.2 min_p.

- Has a different vibe to previous versions. Tinker around.

```

Changes since previous Stheno Datasets:

```

- Included Multi-turn Conversation-based Instruct Datasets to boost multi-turn coherency. # This is a seperate set, not the ones made by Kalomaze and Nopm, that are used in Magnum. They're completely different data.

- Replaced Single-Turn Instruct with Better Prompts and Answers by Claude 3.5 Sonnet and Claude 3 Opus.

- Removed c2 Samples -> Underway of re-filtering and masking to use with custom prefills. TBD

- Included 55% more Roleplaying Examples based of [Gryphe's](https://huggingface.co/datasets/Gryphe/Sonnet3.5-Charcard-Roleplay) Charcard RP Sets. Further filtered and cleaned on.

- Included 40% More Creative Writing Examples.

- Included Datasets Targeting System Prompt Adherence.

- Included Datasets targeting Reasoning / Spatial Awareness.

- Filtered for the usual errors, slop and stuff at the end. Some may have slipped through, but I removed nearly all of it.

```

Personal Opinions:

```

- Llama3.1 was more disappointing, in the Instruct Tune? It felt overbaked, atleast. Likely due to the DPO being done after their SFT Stage.

- Tuning on L3.1 base did not give good results, unlike when I tested with Nemo base. unfortunate.

- Still though, I think I did an okay job. It does feel a bit more distinctive.

- It took a lot of tinkering, like a LOT to wrangle this.

```

Below are some graphs and all for you to observe.

---

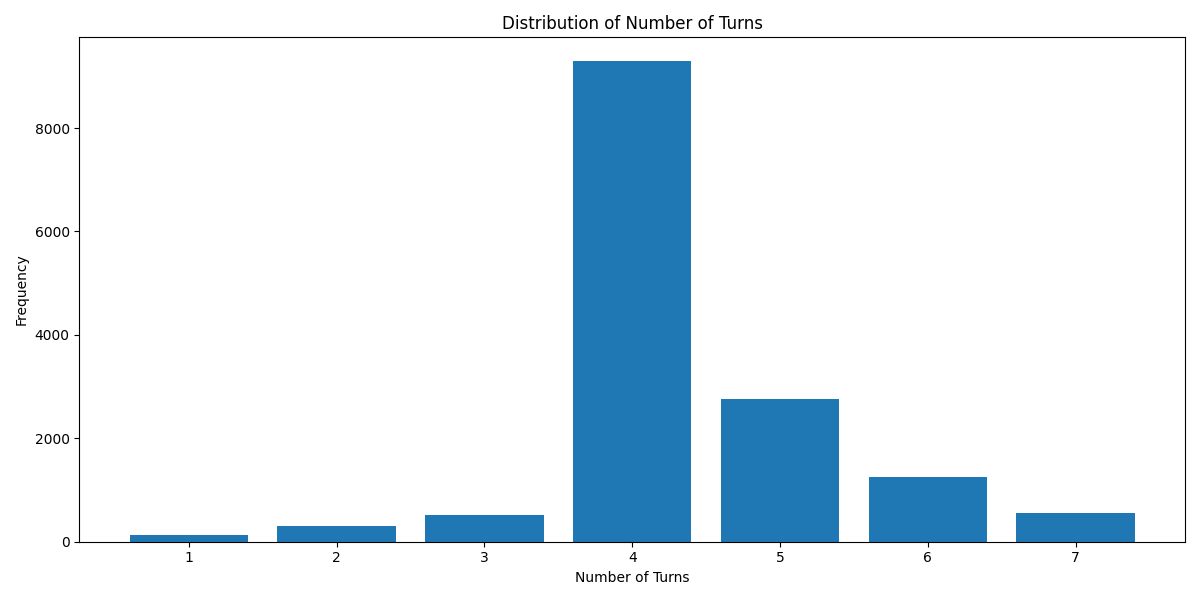

`Turn Distribution # 1 Turn is considered as 1 combined Human/GPT pair in a ShareGPT format. 4 Turns means 1 System Row + 8 Human/GPT rows in total.`

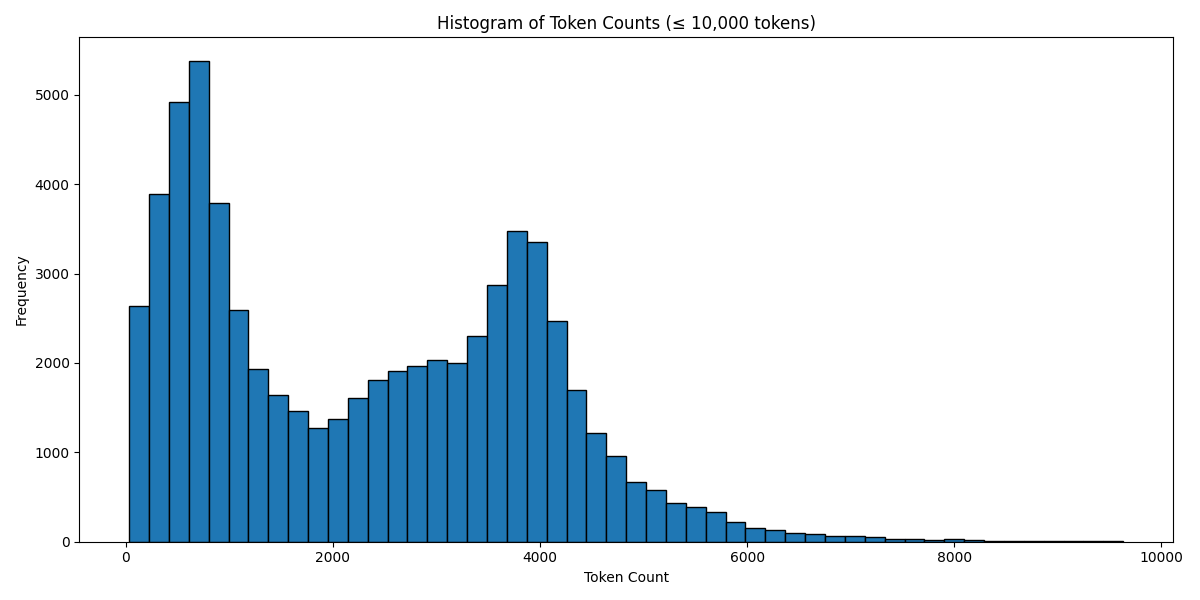

`Token Count Histogram # Based on the Llama 3 Tokenizer`

---

```

Source Image: https://www.pixiv.net/en/artworks/91689070

```

# DarqueDante/Llama-3.1-8B-Stheno-v3.4-IQ4_XS-GGUF

This model was converted to GGUF format from [`Sao10K/Llama-3.1-8B-Stheno-v3.4`](https://huggingface.co/Sao10K/Llama-3.1-8B-Stheno-v3.4) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/Sao10K/Llama-3.1-8B-Stheno-v3.4) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo DarqueDante/Llama-3.1-8B-Stheno-v3.4-IQ4_XS-GGUF --hf-file llama-3.1-8b-stheno-v3.4-iq4_xs-imat.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo DarqueDante/Llama-3.1-8B-Stheno-v3.4-IQ4_XS-GGUF --hf-file llama-3.1-8b-stheno-v3.4-iq4_xs-imat.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo DarqueDante/Llama-3.1-8B-Stheno-v3.4-IQ4_XS-GGUF --hf-file llama-3.1-8b-stheno-v3.4-iq4_xs-imat.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo DarqueDante/Llama-3.1-8B-Stheno-v3.4-IQ4_XS-GGUF --hf-file llama-3.1-8b-stheno-v3.4-iq4_xs-imat.gguf -c 2048

```

|

ys-zong/llava-v1.5-13b-Posthoc | ys-zong | 2024-08-19T21:07:31Z | 10 | 0 | transformers | [

"transformers",

"pytorch",

"llava",

"text-generation",