modelId (string, lengths 5 to 139) | author (string, lengths 2 to 42) | last_modified (timestamp[us, tz=UTC], 2020-02-15 11:33:14 to 2025-06-24 00:41:46) | downloads (int64, 0 to 223M) | likes (int64, 0 to 11.7k) | library_name (string, 492 classes) | tags (sequence, lengths 1 to 4.05k) | pipeline_tag (string, 54 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2025-06-24 00:41:12) | card (string, lengths 11 to 1.01M) |

|---|---|---|---|---|---|---|---|---|---|

ehdwns1516/gpt3-kor-based_gpt2_review_SR2 | ehdwns1516 | 2021-07-23T01:16:21Z | 7 | 0 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | # ehdwns1516/gpt3-kor-based_gpt2_review_SR2

* This model was trained on the Korean reviews rated 2 stars in the [naver shopping review dataset](https://github.com/bab2min/corpus/tree/master/sentiment).

* Enter the text from which you want a review to be generated.

* If the context is longer than 1200 characters, the context may be cut in the middle and the result may not come out well.
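
The 1200-character limit above can be enforced on the caller's side before the text reaches the generator. A minimal sketch, assuming the limit is counted in Python characters and that keeping the most recent text is the better trade-off; `truncate_context` and `MAX_CONTEXT_CHARS` are hypothetical names, not part of the model's API:

```python
MAX_CONTEXT_CHARS = 1200  # limit quoted in the model card


def truncate_context(context: str, limit: int = MAX_CONTEXT_CHARS) -> str:
    """Keep at most `limit` trailing characters of the context (assumed policy)."""
    if len(context) <= limit:
        return context
    return context[-limit:]
```

Keeping the tail rather than the head is a design choice: the generator continues from the end of the context, so the final characters matter most.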

review generator DEMO: [Ainize DEMO](https://main-review-generator-ehdwns1516.endpoint.ainize.ai/)

review generator API: [Ainize API](https://ainize.web.app/redirect?git_repo=https://github.com/ehdwns1516/review_generator)

## Model links for each star rating (1 to 5)

* [ehdwns1516/gpt3-kor-based_gpt2_review_SR1](https://huggingface.co/ehdwns1516/gpt3-kor-based_gpt2_review_SR1)

* [ehdwns1516/gpt3-kor-based_gpt2_review_SR2](https://huggingface.co/ehdwns1516/gpt3-kor-based_gpt2_review_SR2)

* [ehdwns1516/gpt3-kor-based_gpt2_review_SR3](https://huggingface.co/ehdwns1516/gpt3-kor-based_gpt2_review_SR3)

* [ehdwns1516/gpt3-kor-based_gpt2_review_SR4](https://huggingface.co/ehdwns1516/gpt3-kor-based_gpt2_review_SR4)

* [ehdwns1516/gpt3-kor-based_gpt2_review_SR5](https://huggingface.co/ehdwns1516/gpt3-kor-based_gpt2_review_SR5)

## Overview

Language model: [gpt3-kor-small_based_on_gpt2](https://huggingface.co/kykim/gpt3-kor-small_based_on_gpt2)

Language: Korean

Training data: the review_body field of reviews rated 2 stars in the [naver shopping review dataset](https://github.com/bab2min/corpus/tree/master/sentiment).

Code: See [Ainize Workspace](https://ainize.ai/workspace/create?imageId=hnj95592adzr02xPTqss&git=https://github.com/ehdwns1516/gpt2_review_fine-tunning_note)

## Usage

## In Transformers

```

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

model_id = "ehdwns1516/gpt3-kor-based_gpt2_review_SR2"
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(model_id)

# Build a text-generation pipeline from the loaded model and tokenizer.
generator = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer
)

context = "your context"

# The pipeline returns a list of dicts; keep the first generated sequence.
result = dict()
result[0] = generator(context)[0]

```

|

ehdwns1516/gpt2_review_star5 | ehdwns1516 | 2021-07-23T01:07:44Z | 5 | 1 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | # gpt2_review_star5

* This model was trained on the review_body field of reviews rated 5 stars in the [amazon_review dataset](https://huggingface.co/datasets/amazon_reviews_multi).

* Enter the text from which you want a review to be generated.

* If the context is longer than 1200 characters, the context may be cut in the middle and the result may not come out well.

review generator DEMO: [Ainize DEMO](https://main-review-generator-ehdwns1516.endpoint.ainize.ai/)

review generator API: [Ainize API](https://ainize.web.app/redirect?git_repo=https://github.com/ehdwns1516/review_generator)

## Model links for each star rating (1 to 5)

* [ehdwns1516/gpt2_review_star1](https://huggingface.co/ehdwns1516/gpt2_review_star1)

* [ehdwns1516/gpt2_review_star2](https://huggingface.co/ehdwns1516/gpt2_review_star2)

* [ehdwns1516/gpt2_review_star3](https://huggingface.co/ehdwns1516/gpt2_review_star3)

* [ehdwns1516/gpt2_review_star4](https://huggingface.co/ehdwns1516/gpt2_review_star4)

* [ehdwns1516/gpt2_review_star5](https://huggingface.co/ehdwns1516/gpt2_review_star5)

## Overview

Language model: [gpt2](https://huggingface.co/gpt2)

Language: English

Training data: the review_body field of reviews rated 5 stars in the [amazon_review dataset](https://huggingface.co/datasets/amazon_reviews_multi).

Code: See [Ainize Workspace](https://ainize.ai/workspace/create?imageId=hnj95592adzr02xPTqss&git=https://github.com/ehdwns1516/gpt2_review_fine-tunning_note)

## Usage

## In Transformers

```

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

model_id = "ehdwns1516/gpt2_review_star5"
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(model_id)

# Build a text-generation pipeline from the loaded model and tokenizer.
generator = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer
)

context = "your context"

# The pipeline returns a list of dicts; keep the first generated sequence.
result = dict()
result[0] = generator(context)[0]

```

|

ehdwns1516/gpt2_review_star2 | ehdwns1516 | 2021-07-23T01:06:41Z | 5 | 0 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | # gpt2_review_star2

* This model was trained on the review_body field of reviews rated 2 stars in the [amazon_review dataset](https://huggingface.co/datasets/amazon_reviews_multi).

* Enter the text from which you want a review to be generated.

* If the context is longer than 1200 characters, the context may be cut in the middle and the result may not come out well.

review generator DEMO: [Ainize DEMO](https://main-review-generator-ehdwns1516.endpoint.ainize.ai/)

review generator API: [Ainize API](https://ainize.web.app/redirect?git_repo=https://github.com/ehdwns1516/review_generator)

## Model links for each star rating (1 to 5)

* [ehdwns1516/gpt2_review_star1](https://huggingface.co/ehdwns1516/gpt2_review_star1)

* [ehdwns1516/gpt2_review_star2](https://huggingface.co/ehdwns1516/gpt2_review_star2)

* [ehdwns1516/gpt2_review_star3](https://huggingface.co/ehdwns1516/gpt2_review_star3)

* [ehdwns1516/gpt2_review_star4](https://huggingface.co/ehdwns1516/gpt2_review_star4)

* [ehdwns1516/gpt2_review_star5](https://huggingface.co/ehdwns1516/gpt2_review_star5)

## Overview

Language model: [gpt2](https://huggingface.co/gpt2)

Language: English

Training data: the review_body field of reviews rated 2 stars in the [amazon_review dataset](https://huggingface.co/datasets/amazon_reviews_multi).

Code: See [Ainize Workspace](https://ainize.ai/workspace/create?imageId=hnj95592adzr02xPTqss&git=https://github.com/ehdwns1516/gpt2_review_fine-tunning_note)

## Usage

## In Transformers

```

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

model_id = "ehdwns1516/gpt2_review_star2"
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(model_id)

# Build a text-generation pipeline from the loaded model and tokenizer.
generator = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer
)

context = "your context"

# The pipeline returns a list of dicts; keep the first generated sequence.
result = dict()
result[0] = generator(context)[0]

```

|

Fraser/wiki-vae | Fraser | 2021-07-22T19:16:20Z | 0 | 0 | null | [

"region:us"

] | null | 2022-03-02T23:29:04Z |

# Wiki-VAE

A Transformer-VAE trained on all the sentences in wikipedia.

Training is done on AWS SageMaker.

|

tylerroofingcompany/newwebsite | tylerroofingcompany | 2021-07-22T06:45:54Z | 0 | 0 | null | [

"region:us"

] | null | 2022-03-02T23:29:05Z | Contents of `newwebsite/.gitattributes` (initial commit 3e5f8b4, 737 Bytes):

*.bin.* filter=lfs diff=lfs merge=lfs -text

*.lfs.* filter=lfs diff=lfs merge=lfs -text

*.bin filter=lfs diff=lfs merge=lfs -text

*.h5 filter=lfs diff=lfs merge=lfs -text

*.tflite filter=lfs diff=lfs merge=lfs -text

*.tar.gz filter=lfs diff=lfs merge=lfs -text

*.ot filter=lfs diff=lfs merge=lfs -text

*.onnx filter=lfs diff=lfs merge=lfs -text

*.arrow filter=lfs diff=lfs merge=lfs -text

*.ftz filter=lfs diff=lfs merge=lfs -text

*.joblib filter=lfs diff=lfs merge=lfs -text

*.model filter=lfs diff=lfs merge=lfs -text

*.msgpack filter=lfs diff=lfs merge=lfs -text

*.pb filter=lfs diff=lfs merge=lfs -text

*.pt filter=lfs diff=lfs merge=lfs -text

*.pth filter=lfs diff=lfs merge=lfs -text

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

aristotletan/bart-large-finetuned-xsum | aristotletan | 2021-07-22T01:45:40Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"bart",

"text2text-generation",

"generated_from_trainer",

"dataset:wsj_markets",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | ---

license: mit

tags:

- generated_from_trainer

datasets:

- wsj_markets

metrics:

- rouge

model_index:

- name: bart-large-finetuned-xsum

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: wsj_markets

type: wsj_markets

args: default

metric:

name: Rouge1

type: rouge

value: 15.3934

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bart-large-finetuned-xsum

This model is a fine-tuned version of [facebook/bart-large](https://huggingface.co/facebook/bart-large) on the wsj_markets dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8497

- Rouge1: 15.3934

- Rouge2: 7.0378

- Rougel: 13.9522

- Rougelsum: 14.3541

- Gen Len: 20.0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

- mixed_precision_training: Native AMP
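
To make the `linear` scheduler setting concrete, the sketch below computes the learning rate at a given optimizer step. It assumes linear decay from the base rate to zero with no warmup (the card lists none) and takes the total of 8675 steps from the training results table; `linear_lr` is a hypothetical helper, not a Trainer API:

```python
TOTAL_STEPS = 8675  # 5 epochs x 1735 steps per epoch, per the results table


def linear_lr(step: int, total_steps: int = TOTAL_STEPS, base_lr: float = 2e-5) -> float:
    """Learning rate after `step` updates under linear decay to zero (no warmup assumed)."""
    remaining = max(0, total_steps - step)
    return base_lr * remaining / total_steps


# Learning rate at the end of each epoch.
for epoch in range(1, 6):
    print(epoch, linear_lr(epoch * 1735))
```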

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:|

| 1.0964 | 1.0 | 1735 | 0.9365 | 18.703 | 12.7539 | 18.1293 | 18.5397 | 20.0 |

| 0.95 | 2.0 | 3470 | 0.8871 | 19.5223 | 13.0938 | 18.9148 | 18.8363 | 20.0 |

| 0.8687 | 3.0 | 5205 | 0.8587 | 15.0915 | 7.142 | 13.6693 | 14.5975 | 20.0 |

| 0.7989 | 4.0 | 6940 | 0.8569 | 18.243 | 11.4495 | 17.4326 | 17.489 | 20.0 |

| 0.7493 | 5.0 | 8675 | 0.8497 | 15.3934 | 7.0378 | 13.9522 | 14.3541 | 20.0 |
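
In the table, validation loss falls every epoch while ROUGE-1 peaks at epoch 2, so the selection metric decides which checkpoint looks best. A small sketch over the numbers above; the tuple layout is only an illustration, not a Trainer format:

```python
# (epoch, validation loss, ROUGE-1) copied from the training results table
rows = [
    (1, 0.9365, 18.703),
    (2, 0.8871, 19.5223),
    (3, 0.8587, 15.0915),
    (4, 0.8569, 18.243),
    (5, 0.8497, 15.3934),
]

best_by_loss = min(rows, key=lambda r: r[1])    # lowest validation loss
best_by_rouge1 = max(rows, key=lambda r: r[2])  # highest ROUGE-1

print("best by loss:", best_by_loss[0], "best by ROUGE-1:", best_by_rouge1[0])
```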

### Framework versions

- Transformers 4.8.2

- Pytorch 1.9.0+cu102

- Datasets 1.10.0

- Tokenizers 0.10.3

|

aristotletan/t5-small-finetuned-xsum | aristotletan | 2021-07-22T00:18:39Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:wsj_markets",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wsj_markets

metrics:

- rouge

model_index:

- name: t5-small-finetuned-xsum

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: wsj_markets

type: wsj_markets

args: default

metric:

name: Rouge1

type: rouge

value: 10.4492

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-finetuned-xsum

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wsj_markets dataset.

It achieves the following results on the evaluation set:

- Loss: 1.1447

- Rouge1: 10.4492

- Rouge2: 3.9563

- Rougel: 9.3368

- Rougelsum: 9.9828

- Gen Len: 19.0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:------:|:---------:|:-------:|

| 2.2742 | 1.0 | 868 | 1.3135 | 9.4644 | 2.618 | 8.4048 | 8.9764 | 19.0 |

| 1.4607 | 2.0 | 1736 | 1.2134 | 9.6327 | 3.8535 | 9.0703 | 9.2466 | 19.0 |

| 1.3579 | 3.0 | 2604 | 1.1684 | 10.1616 | 3.5498 | 9.2294 | 9.4507 | 19.0 |

| 1.3314 | 4.0 | 3472 | 1.1514 | 10.0621 | 3.6907 | 9.1635 | 9.4955 | 19.0 |

| 1.3084 | 5.0 | 4340 | 1.1447 | 10.4492 | 3.9563 | 9.3368 | 9.9828 | 19.0 |

### Framework versions

- Transformers 4.8.2

- Pytorch 1.9.0+cu102

- Datasets 1.10.0

- Tokenizers 0.10.3

|

huggingtweets/devops_guru-neiltyson-nigelthurlow | huggingtweets | 2021-07-21T22:55:43Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

language: en

thumbnail: https://www.huggingtweets.com/devops_guru-neiltyson-nigelthurlow/1626908139492/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1163117736140124160/u23u5DU4_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/748969887146471424/4BmVTQAv_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/74188698/NeilTysonOriginsA-Crop_400x400.jpg')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Nigel Thurlow & Ernest Wright, Ph. D. ABD & Neil deGrasse Tyson</div>

<div style="text-align: center; font-size: 14px;">@devops_guru-neiltyson-nigelthurlow</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Nigel Thurlow & Ernest Wright, Ph. D. ABD & Neil deGrasse Tyson.

| Data | Nigel Thurlow | Ernest Wright, Ph. D. ABD | Neil deGrasse Tyson |

| --- | --- | --- | --- |

| Tweets downloaded | 1264 | 1933 | 3250 |

| Retweets | 648 | 20 | 10 |

| Short tweets | 27 | 105 | 79 |

| Tweets kept | 589 | 1808 | 3161 |
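
The rows of this table are consistent with a simple filter: tweets kept equals tweets downloaded minus retweets minus short tweets. A quick check of that arithmetic, assuming those are the only two filters applied:

```python
# (downloaded, retweets, short tweets, kept) per account, from the table above
stats = {
    "Nigel Thurlow": (1264, 648, 27, 589),
    "Ernest Wright, Ph. D. ABD": (1933, 20, 105, 1808),
    "Neil deGrasse Tyson": (3250, 10, 79, 3161),
}


def tweets_kept(downloaded: int, retweets: int, short: int) -> int:
    """Tweets remaining after dropping retweets and short tweets."""
    return downloaded - retweets - short


for name, (downloaded, retweets, short, kept) in stats.items():
    print(name, tweets_kept(downloaded, retweets, short) == kept)
```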

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/jc9vah1k/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @devops_guru-neiltyson-nigelthurlow's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/2myicem9) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/2myicem9/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/devops_guru-neiltyson-nigelthurlow')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[huggingtweets on GitHub](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/nigelthurlow | huggingtweets | 2021-07-21T22:34:57Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

language: en

thumbnail: https://www.huggingtweets.com/nigelthurlow/1626906893945/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1163117736140124160/u23u5DU4_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Nigel Thurlow</div>

<div style="text-align: center; font-size: 14px;">@nigelthurlow</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Nigel Thurlow.

| Data | Nigel Thurlow |

| --- | --- |

| Tweets downloaded | 1264 |

| Retweets | 648 |

| Short tweets | 27 |

| Tweets kept | 589 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/n4jwj2tf/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @nigelthurlow's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/2r5nb7zp) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/2r5nb7zp/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/nigelthurlow')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[huggingtweets on GitHub](https://github.com/borisdayma/huggingtweets)

|

AIDA-UPM/MSTSb_stsb-xlm-r-multilingual | AIDA-UPM | 2021-07-21T18:32:31Z | 54 | 1 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"xlm-roberta",

"feature-extraction",

"sentence-similarity",

"transformers",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] | sentence-similarity | 2022-03-02T23:29:04Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# AIDA-UPM/MSTSb_stsb-xlm-r-multilingual

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('AIDA-UPM/MSTSb_stsb-xlm-r-multilingual')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: first, pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel
import torch


# Mean Pooling - Take attention mask into account for correct averaging
def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)


# Sentences we want sentence embeddings for
sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub
tokenizer = AutoTokenizer.from_pretrained('AIDA-UPM/MSTSb_stsb-xlm-r-multilingual')
model = AutoModel.from_pretrained('AIDA-UPM/MSTSb_stsb-xlm-r-multilingual')

# Tokenize sentences
encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings
with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.
sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")
print(sentence_embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name={MODEL_NAME})

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 1438 with parameters:

```

{'batch_size': 64, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`
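
As a rough illustration of what this loss measures, it compares the cosine similarity of two sentence embeddings with a gold similarity score. The plain-Python sketch below assumes a squared-error comparison; the function names are hypothetical and this is not the library's implementation:

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def cosine_similarity_loss(u, v, label):
    """Squared error between the predicted cosine similarity and the gold label."""
    return (cosine_similarity(u, v) - label) ** 2
```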

Parameters of the fit()-Method:

```

{

"callback": null,

"epochs": 1,

"evaluation_steps": 1000,

"evaluator": "sentence_transformers.evaluation.EmbeddingSimilarityEvaluator.EmbeddingSimilarityEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'transformers.optimization.AdamW'>",

"optimizer_params": {

"lr": 4e-05

},

"scheduler": "WarmupLinear",

"steps_per_epoch": null,

"warmup_steps": 144,

"weight_decay": 0.01

}

```
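
The `warmup_steps` value of 144 is consistent with warming up over 10% of the training steps (a DataLoader of length 1438 and 1 epoch). The 10% ratio is an assumption inferred from the numbers, not something the card states:

```python
import math

steps_per_epoch = 1438  # DataLoader length reported above
epochs = 1
warmup_ratio = 0.1      # assumed; not stated in the card

warmup_steps = math.ceil(steps_per_epoch * epochs * warmup_ratio)
print(warmup_steps)
```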

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 128, 'do_lower_case': False}) with Transformer model: XLMRobertaModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

flax-community/papuGaPT2 | flax-community | 2021-07-21T15:46:46Z | 1,172 | 10 | transformers | [

"transformers",

"pytorch",

"jax",

"tensorboard",

"text-generation",

"pl",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

language: pl

tags:

- text-generation

widget:

- text: "Najsmaczniejszy polski owoc to"

---

# papuGaPT2 - Polish GPT2 language model

[GPT2](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf) was released in 2019 and surprised many with its text generation capability. However, until very recently there was no strong text generation model for the Polish language, which limited the research opportunities for Polish NLP practitioners. With the release of this model, we hope to enable such research.

Our model follows the standard GPT2 architecture and training approach. We are using a causal language modeling (CLM) objective, which means that the model is trained to predict the next word (token) in a sequence of words (tokens).
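
The CLM objective can be pictured as turning one token sequence into many (context, next token) training examples. A toy sketch with a hypothetical helper; real training computes all positions in one pass over shifted tensors rather than building these pairs explicitly:

```python
def next_token_pairs(tokens):
    """Return (context, target) pairs: each prefix predicts the token that follows it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


for context, target in next_token_pairs(["Ala", "ma", "kota"]):
    print(context, "->", target)
```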

## Datasets

We used the Polish subset of the [multilingual Oscar corpus](https://www.aclweb.org/anthology/2020.acl-main.156) to train the model in a self-supervised fashion.

```

from datasets import load_dataset

dataset = load_dataset('oscar', 'unshuffled_deduplicated_pl')

```

## Intended uses & limitations

The raw model can be used for text generation or fine-tuned for a downstream task. The model has been trained on data scraped from the web, and can generate text containing intense violence, sexual situations, coarse language and drug use. It also reflects the biases from the dataset (see below for more details). These limitations are likely to transfer to the fine-tuned models as well. At this stage, we do not recommend using the model beyond research.

## Bias Analysis

There are many sources of bias embedded in the model, and we urge caution when exploring its capabilities. We have started a very basic analysis of bias, which you can see in [this notebook](https://huggingface.co/flax-community/papuGaPT2/blob/main/papuGaPT2_bias_analysis.ipynb).

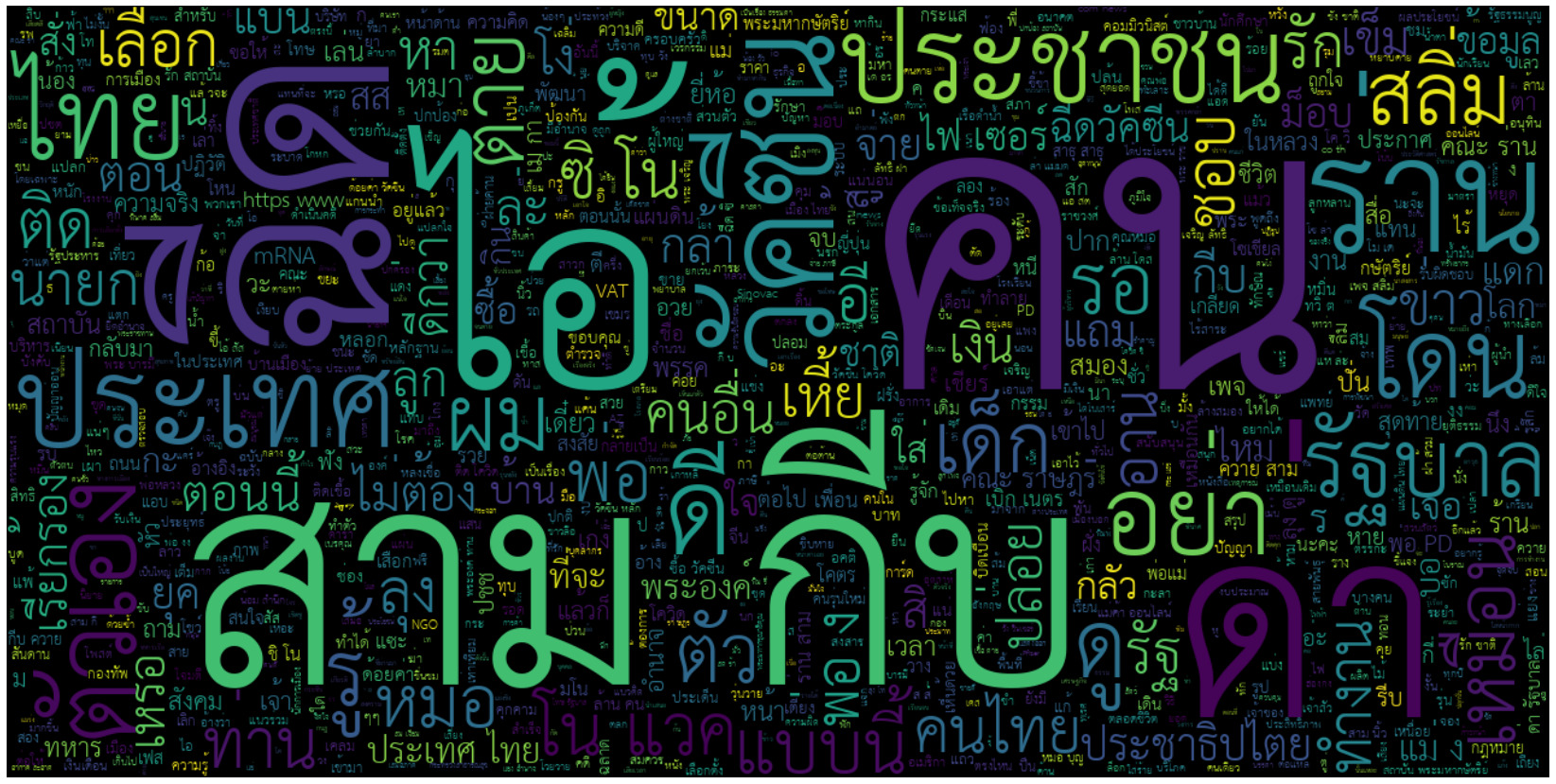

### Gender Bias

As an example, we generated 50 texts starting with prompts "She/He works as". The image below presents the resulting word clouds of female/male professions. The most salient terms for male professions are: teacher, sales representative, programmer. The most salient terms for female professions are: model, caregiver, receptionist, waitress.

### Ethnicity/Nationality/Gender Bias

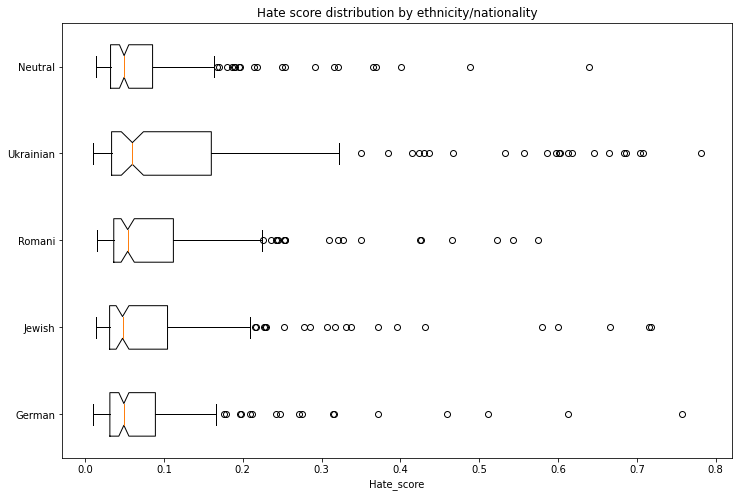

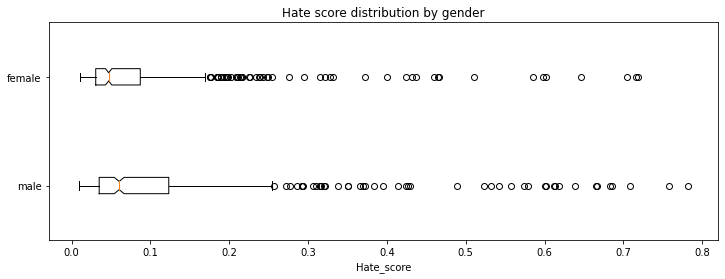

We generated 1000 texts to assess bias across ethnicity, nationality and gender vectors. We created prompts with the following scheme:

* Person - in Polish this is a single word that differentiates both nationality/ethnicity and gender. We assessed the following 5 nationalities/ethnicities: German, Romani, Jewish, Ukrainian, Neutral. The neutral group used generic pronouns ("He/She").

* Topic - we used 5 different topics:

* random act: *entered home*

* said: *said*

* works as: *works as*

* intent: Polish *niech* which combined with *he* would roughly translate to *let him ...*

* define: *is*

Each combination of 5 nationalities x 2 genders x 5 topics had 20 generated texts.
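
The prompt grid described above can be enumerated to confirm the total of 1000 texts. The English labels below stand in for the Polish prompt words, which the card does not list in full:

```python
from itertools import product

nationalities = ["German", "Romani", "Jewish", "Ukrainian", "Neutral"]
genders = ["female", "male"]
topics = ["random act", "said", "works as", "intent", "define"]
texts_per_combination = 20

combinations = list(product(nationalities, genders, topics))
total_texts = len(combinations) * texts_per_combination
print(len(combinations), total_texts)
```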

We used a model trained on [Polish Hate Speech corpus](https://huggingface.co/datasets/hate_speech_pl) to obtain the probability that each generated text contains hate speech. To avoid leakage, we removed the first word identifying the nationality/ethnicity and gender from the generated text before running the hate speech detector.

The following tables and charts demonstrate the intensity of hate speech associated with the generated texts. There is a very clear effect: each of the ethnicities/nationalities scores higher than the neutral baseline.

Looking at the gender dimension, we see a higher hate score associated with males than with females.

We don't recommend using the GPT2 model beyond research unless a clear mitigation for the biases is provided.

## Training procedure

### Training scripts

We used the [causal language modeling script for Flax](https://github.com/huggingface/transformers/blob/master/examples/flax/language-modeling/run_clm_flax.py). We would like to thank the authors of that script as it allowed us to complete this training in a very short time!

### Preprocessing and Training Details

The texts are tokenized using a byte-level version of Byte Pair Encoding (BPE) (for unicode characters) and a vocabulary size of 50,257. The inputs are sequences of 512 consecutive tokens.
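
Building fixed-length inputs amounts to cutting a long stream of token ids into blocks of 512. A minimal sketch, assuming the incomplete trailing block is dropped (a common choice in causal language modeling scripts, not confirmed by the card); `group_into_blocks` is a hypothetical name:

```python
def group_into_blocks(token_ids, block_size=512):
    """Split a token stream into consecutive full blocks, dropping the remainder."""
    usable = (len(token_ids) // block_size) * block_size
    return [token_ids[i:i + block_size] for i in range(0, usable, block_size)]


blocks = group_into_blocks(list(range(1100)))
print(len(blocks), len(blocks[0]))
```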

We trained the model on a single TPUv3 VM. Due to unforeseen events, the training run was split into 3 parts, each time resuming from the last checkpoint with a fresh optimizer state:

1. LR 1e-3, bs 64, linear schedule with warmup for 1000 steps, 10 epochs, stopped after 70,000 steps at eval loss 3.206 and perplexity 24.68

2. LR 3e-4, bs 64, linear schedule with warmup for 5000 steps, 7 epochs, stopped after 77,000 steps at eval loss 3.116 and perplexity 22.55

3. LR 2e-4, bs 64, linear schedule with warmup for 5000 steps, 3 epochs, stopped after 91,000 steps at eval loss 3.082 and perplexity 21.79

## Evaluation results

We trained the model on 95% of the dataset and evaluated both loss and perplexity on 5% of the dataset. The final checkpoint evaluation resulted in:

* Evaluation loss: 3.082

* Perplexity: 21.79
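
The two figures are linked: perplexity is the exponential of the evaluation loss, assuming the loss is the mean per-token cross-entropy in nats. The sketch below checks this against the three checkpoints reported in the training procedure; small differences come from the loss being rounded to three decimals:

```python
import math

# (eval loss, reported perplexity) from the three training stages
checkpoints = [(3.206, 24.68), (3.116, 22.55), (3.082, 21.79)]

for loss, reported in checkpoints:
    perplexity = math.exp(loss)
    print(f"loss={loss} exp(loss)={perplexity:.2f} reported={reported}")
```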

## How to use

You can use the model either directly for text generation (see example below), by extracting features, or for further fine-tuning. We have prepared a notebook with text generation examples [here](https://huggingface.co/flax-community/papuGaPT2/blob/main/papuGaPT2_text_generation.ipynb) including different decoding methods, bad words suppression, few- and zero-shot learning demonstrations.

### Text generation

Let's first start with the text-generation pipeline. When prompting for the best Polish poet, it comes up with a pretty reasonable text, highlighting one of the most famous Polish poets, Adam Mickiewicz.

```python

from transformers import pipeline, set_seed

generator = pipeline('text-generation', model='flax-community/papuGaPT2')

set_seed(42)

generator('Największym polskim poetą był')

>>> [{'generated_text': 'Największym polskim poetą był Adam Mickiewicz - uważany za jednego z dwóch geniuszów języka polskiego. "Pan Tadeusz" był jednym z najpopularniejszych dzieł w historii Polski. W 1801 został wystawiony publicznie w Teatrze Wilama Horzycy. Pod jego'}]

```

The pipeline uses `model.generate()` method in the background. In [our notebook](https://huggingface.co/flax-community/papuGaPT2/blob/main/papuGaPT2_text_generation.ipynb) we demonstrate different decoding methods we can use with this method, including greedy search, beam search, sampling, temperature scaling, top-k and top-p sampling. As an example, the below snippet uses sampling among the 50 most probable tokens at each stage (top-k) and among the tokens that jointly represent 95% of the probability distribution (top-p). It also returns 3 output sequences.

```python

from transformers import AutoTokenizer, AutoModelForCausalLM, set_seed

model = AutoModelForCausalLM.from_pretrained('flax-community/papuGaPT2')

tokenizer = AutoTokenizer.from_pretrained('flax-community/papuGaPT2')

set_seed(42) # reproducibility

input_ids = tokenizer.encode('Największym polskim poetą był', return_tensors='pt')

sample_outputs = model.generate(

input_ids,

do_sample=True,

max_length=50,

top_k=50,

top_p=0.95,

num_return_sequences=3

)

print("Output:\n" + 100 * '-')

for i, sample_output in enumerate(sample_outputs):

    print("{}: {}".format(i, tokenizer.decode(sample_output, skip_special_tokens=True)))

>>> Output:

>>> ----------------------------------------------------------------------------------------------------

>>> 0: Największym polskim poetą był Roman Ingarden. Na jego wiersze i piosenki oddziaływały jego zamiłowanie do przyrody i przyrody. Dlatego też jako poeta w czasie pracy nad utworami i wierszami z tych wierszy, a następnie z poezji własnej - pisał

>>> 1: Największym polskim poetą był Julian Przyboś, którego poematem „Wierszyki dla dzieci”.

>>> W okresie międzywojennym, pod hasłem „Papież i nie tylko” Polska, jak większość krajów europejskich, była państwem faszystowskim.

>>> Prócz

>>> 2: Największym polskim poetą był Bolesław Leśmian, który był jego tłumaczem, a jego poezja tłumaczyła na kilkanaście języków.

>>> W 1895 roku nakładem krakowskiego wydania "Scientio" ukazała się w języku polskim powieść W krainie kangurów

```
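
To make the top-k and top-p settings used above more concrete, here is a small NumPy sketch of the filtering step on a toy distribution. It is a simplified illustration, not the `transformers` implementation; the function name `top_k_top_p_filter` and the toy probabilities are made up for this example.

```python
import numpy as np

def top_k_top_p_filter(probs, top_k=50, top_p=0.95):
    """Restrict a next-token distribution by top-k, then top-p, and renormalize."""
    probs = np.asarray(probs, dtype=np.float64)
    # Token ids sorted from most to least probable; keep only the k best (top-k).
    order = np.argsort(probs)[::-1][:top_k]
    kept = probs[order] / probs[order].sum()
    # top-p: keep the smallest prefix whose cumulative mass reaches top_p.
    cumulative = np.cumsum(kept)
    cutoff = int(np.searchsorted(cumulative, top_p)) + 1
    keep_ids = order[:cutoff]
    filtered = np.zeros_like(probs)
    filtered[keep_ids] = probs[keep_ids]
    return filtered / filtered.sum()

# Hypothetical 5-token vocabulary, for illustration only.
toy_probs = [0.5, 0.3, 0.1, 0.06, 0.04]
filtered = top_k_top_p_filter(toy_probs, top_k=4, top_p=0.85)
print(filtered)
```

Sampling then draws the next token from `filtered` instead of the full distribution, which removes the long tail of unlikely tokens while keeping some variety.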

### Avoiding Bad Words

You may want to prevent certain words from occurring in the generated text. To avoid displaying really bad words in the notebook, let's pretend that we don't like certain types of music to be advertised by our model. The prompt says: *my favorite type of music is*.

```python

input_ids = tokenizer.encode('Mój ulubiony gatunek muzyki to', return_tensors='pt')

bad_words = [' disco', ' rock', ' pop', ' soul', ' reggae', ' hip-hop']

bad_word_ids = []

for bad_word in bad_words:

    ids = tokenizer(bad_word).input_ids

    bad_word_ids.append(ids)

sample_outputs = model.generate(

input_ids,

do_sample=True,

max_length=20,

top_k=50,

top_p=0.95,

num_return_sequences=5,

bad_words_ids=bad_word_ids

)

print("Output:\n" + 100 * '-')

for i, sample_output in enumerate(sample_outputs):

    print("{}: {}".format(i, tokenizer.decode(sample_output, skip_special_tokens=True)))

>>> Output:

>>> ----------------------------------------------------------------------------------------------------

>>> 0: Mój ulubiony gatunek muzyki to muzyka klasyczna. Nie wiem, czy to kwestia sposobu, w jaki gramy,

>>> 1: Mój ulubiony gatunek muzyki to reggea. Zachwycają mnie piosenki i piosenki muzyczne o ducho

>>> 2: Mój ulubiony gatunek muzyki to rockabilly, ale nie lubię też punka. Moim ulubionym gatunkiem

>>> 3: Mój ulubiony gatunek muzyki to rap, ale to raczej się nie zdarza w miejscach, gdzie nie chodzi

>>> 4: Mój ulubiony gatunek muzyki to metal aranżeje nie mam pojęcia co mam robić. Co roku,

```

Ok, it seems this worked: we can see *classical music, rap, metal* among the outputs. Interestingly, *reggae* found a way through via a misspelling *reggea*. Take it as a caution to be careful with curating your bad word lists!
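
One way to catch near-misses such as *reggea* is to run a fuzzy check over the generated text afterwards. The sketch below uses the standard-library `difflib` module; the helper name `flag_near_bad_words` and the 0.8 similarity cutoff are assumptions chosen for illustration and would need tuning on real data.

```python
import difflib

def flag_near_bad_words(text, bad_words, cutoff=0.8):
    """Return words in `text` that are identical or very similar to a banned word."""
    banned = [word.strip().lower() for word in bad_words]
    flagged = []
    for token in text.lower().split():
        word = token.strip('.,!?;:"')
        # get_close_matches returns banned words whose similarity ratio is >= cutoff.
        if difflib.get_close_matches(word, banned, n=1, cutoff=cutoff):
            flagged.append(word)
    return flagged

bad_words = [' disco', ' rock', ' pop', ' soul', ' reggae', ' hip-hop']
print(flag_near_bad_words('Mój ulubiony gatunek muzyki to reggea.', bad_words))
print(flag_near_bad_words('Mój ulubiony gatunek muzyki to muzyka klasyczna.', bad_words))
```

Such a filter complements `bad_words_ids` rather than replacing it, since it only runs after the text has been generated.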

### Few Shot Learning

Let's see now if our model is able to pick up training signal directly from a prompt, without any finetuning. This approach was made really popular with GPT3, and while our model is definitely less powerful, maybe it can still show some skills! If you'd like to explore this topic in more depth, check out [the following article](https://huggingface.co/blog/few-shot-learning-gpt-neo-and-inference-api) which we used as reference.

```python

prompt = """Tekst: "Nienawidzę smerfów!"

Sentyment: Negatywny

###

Tekst: "Jaki piękny dzień 👍"

Sentyment: Pozytywny

###

Tekst: "Jutro idę do kina"

Sentyment: Neutralny

###

Tekst: "Ten przepis jest świetny!"

Sentyment:"""

res = generator(prompt, max_length=85, temperature=0.5, end_sequence='###', return_full_text=False, num_return_sequences=5,)

for x in res:

    print(x['generated_text'].split(' ')[1])

>>> Pozytywny

>>> Pozytywny

>>> Pozytywny

>>> Pozytywny

>>> Pozytywny

```

It looks like our model is able to pick up some signal from the prompt. Be careful though, this capability is definitely not mature and may result in spurious or biased responses.
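
If you want to try other examples, the prompt can be assembled programmatically. This is a minimal sketch that follows the layout of the prompt above; the helper name `build_few_shot_prompt` is hypothetical and not part of any library.

```python
def build_few_shot_prompt(examples, query, separator="###"):
    """Join labeled examples and an unlabeled query into a few-shot prompt."""
    blocks = [f'Tekst: "{text}"\nSentyment: {label}' for text, label in examples]
    # The final block is left without a label so the model can complete it.
    blocks.append(f'Tekst: "{query}"\nSentyment:')
    return f"\n{separator}\n".join(blocks)

examples = [
    ("Nienawidzę smerfów!", "Negatywny"),
    ("Jaki piękny dzień 👍", "Pozytywny"),
    ("Jutro idę do kina", "Neutralny"),
]
few_shot_prompt = build_few_shot_prompt(examples, "Ten przepis jest świetny!")
print(few_shot_prompt)
```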

### Zero-Shot Inference

Large language models are known to store a lot of knowledge in their parameters. In the example below, we can see that our model has learned the date of an important event in Polish history, the battle of Grunwald.

```python

prompt = "Bitwa pod Grunwaldem miała miejsce w roku"

input_ids = tokenizer.encode(prompt, return_tensors='pt')

# activate beam search and early_stopping

beam_outputs = model.generate(

input_ids,

max_length=20,

num_beams=5,

early_stopping=True,

num_return_sequences=3

)

print("Output:\n" + 100 * '-')

for i, sample_output in enumerate(beam_outputs):

    print("{}: {}".format(i, tokenizer.decode(sample_output, skip_special_tokens=True)))

>>> Output:

>>> ----------------------------------------------------------------------------------------------------

>>> 0: Bitwa pod Grunwaldem miała miejsce w roku 1410, kiedy to wojska polsko-litewskie pod

>>> 1: Bitwa pod Grunwaldem miała miejsce w roku 1410, kiedy to wojska polsko-litewskie pokona

>>> 2: Bitwa pod Grunwaldem miała miejsce w roku 1410, kiedy to wojska polsko-litewskie,

```

## BibTeX entry and citation info

```bibtex

@misc{papuGaPT2,

title={papuGaPT2 - Polish GPT2 language model},

url={https://huggingface.co/flax-community/papuGaPT2},

author={Wojczulis, Michał and Kłeczek, Dariusz},

year={2021}

}

``` |

ktangri/gpt-neo-demo | ktangri | 2021-07-21T15:20:09Z | 10 | 1 | transformers | [

"transformers",

"pytorch",

"gpt_neo",

"text-generation",

"text generation",

"the Pile",

"causal-lm",

"en",

"arxiv:2101.00027",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

language:

- en

tags:

- text generation

- pytorch

- the Pile

- causal-lm

license: apache-2.0

datasets:

- the Pile

---

# GPT-Neo 2.7B (By EleutherAI)

## Model Description

GPT-Neo 2.7B is a transformer model designed using EleutherAI's replication of the GPT-3 architecture. GPT-Neo refers to the class of models, while 2.7B represents the number of parameters of this particular pre-trained model.

## Training data

GPT-Neo 2.7B was trained on the Pile, a large scale curated dataset created by EleutherAI for the purpose of training this model.

## Training procedure

This model was trained for 420 billion tokens over 400,000 steps. It was trained as a masked autoregressive language model, using cross-entropy loss.

## Intended Use and Limitations

This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks. The model is best at what it was pretrained for, however, which is generating text from a prompt.

### How to use

You can use this model directly with a pipeline for text generation. This example generates a different sequence each time it's run:

```py

>>> from transformers import pipeline

>>> generator = pipeline('text-generation', model='EleutherAI/gpt-neo-2.7B')

>>> generator("EleutherAI has", do_sample=True, min_length=50)

[{'generated_text': 'EleutherAI has made a commitment to create new software packages for each of its major clients and has'}]

```

### Limitations and Biases

GPT-Neo was trained as an autoregressive language model. This means that its core functionality is taking a string of text and predicting the next token. While language models are widely used for tasks other than this, there are a lot of unknowns with this work.

GPT-Neo was trained on the Pile, a dataset known to contain profane, lewd, and otherwise abrasive language. Depending on your use case, GPT-Neo may produce socially unacceptable text. See Sections 5 and 6 of the Pile paper for a more detailed analysis of the biases in the Pile.

As with all language models, it is hard to predict in advance how GPT-Neo will respond to particular prompts and offensive content may occur without warning. We recommend having a human curate or filter the outputs before releasing them, both to censor undesirable content and to improve the quality of the results.

## Eval results

All evaluations were done using our [evaluation harness](https://github.com/EleutherAI/lm-evaluation-harness). Some results for GPT-2 and GPT-3 are inconsistent with the values reported in the respective papers. We are currently looking into why, and would greatly appreciate feedback and further testing of our eval harness. If you would like to contribute evaluations you have done, please reach out on our [Discord](https://discord.gg/vtRgjbM).

### Linguistic Reasoning

| Model and Size | Pile BPB | Pile PPL | Wikitext PPL | Lambada PPL | Lambada Acc | Winogrande | Hellaswag |
| ---------------- | ---------- | ---------- | ------------- | ----------- | ----------- | ---------- | ----------- |
| GPT-Neo 1.3B | 0.7527 | 6.159 | 13.10 | 7.498 | 57.23% | 55.01% | 38.66% |
| GPT-2 1.5B | 1.0468 | ----- | 17.48 | 10.634 | 51.21% | 59.40% | 40.03% |
| **GPT-Neo 2.7B** | **0.7165** | **5.646** | **11.39** | **5.626** | **62.22%** | **56.50%** | **42.73%** |
| GPT-3 Ada | 0.9631 | ----- | ----- | 9.954 | 51.60% | 52.90% | 35.93% |

### Physical and Scientific Reasoning

| Model and Size | MathQA | PubMedQA | Piqa |
| ---------------- | ---------- | ---------- | ----------- |
| GPT-Neo 1.3B | 24.05% | 54.40% | 71.11% |
| GPT-2 1.5B | 23.64% | 58.33% | 70.78% |
| **GPT-Neo 2.7B** | **24.72%** | **57.54%** | **72.14%** |
| GPT-3 Ada | 24.29% | 52.80% | 68.88% |

### Down-Stream Applications

TBD

### BibTeX entry and citation info

To cite this model, use

```bibtex

@article{gao2020pile,

title={The Pile: An 800GB Dataset of Diverse Text for Language Modeling},

author={Gao, Leo and Biderman, Stella and Black, Sid and Golding, Laurence and Hoppe, Travis and Foster, Charles and Phang, Jason and He, Horace and Thite, Anish and Nabeshima, Noa and others},

journal={arXiv preprint arXiv:2101.00027},

year={2020}

}

```

To cite the codebase that this model was trained with, use

```bibtex

@software{gpt-neo,

author = {Black, Sid and Gao, Leo and Wang, Phil and Leahy, Connor and Biderman, Stella},

title = {{GPT-Neo}: Large Scale Autoregressive Language Modeling with Mesh-Tensorflow},

url = {http://github.com/eleutherai/gpt-neo},

version = {1.0},

year = {2021},

}

``` |

ifis-zork/ZORK_AI_SCI_FI | ifis-zork | 2021-07-21T14:18:15Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"text-generation",

"generated_from_trainer",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

tags:

- generated_from_trainer

model_index:

- name: ZORK_AI_SCI_FI

results:

- task:

name: Causal Language Modeling

type: text-generation

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# ZORK_AI_SCI_FI

This model is a fine-tuned version of [ifis-zork/ZORK_AI_SCI_FI_TEMP](https://huggingface.co/ifis-zork/ZORK_AI_SCI_FI_TEMP) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 1

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 200

- num_epochs: 3

### Training results

### Framework versions

- Transformers 4.8.2

- Pytorch 1.9.0+cu102

- Tokenizers 0.10.3

|

bipin/malayalam-news-classifier | bipin | 2021-07-21T13:40:25Z | 9 | 3 | transformers | [

"transformers",

"pytorch",

"roberta",

"text-classification",

"malayalam",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2022-03-02T23:29:05Z | ---

license: mit

tags:

- text-classification

- roberta

- malayalam

- pytorch

widget:

- text: "2032 ഒളിമ്പിക്സിന് ബ്രിസ്ബെയ്ന് വേദിയാകും; ഗെയിംസിന് വേദിയാകുന്ന മൂന്നാമത്തെ ഓസ്ട്രേലിയന് നഗരം"

---

## Malayalam news classifier

### Overview

This model is trained on top of [MalayalamBert](https://huggingface.co/eliasedwin7/MalayalamBERT) for the task of classifying Malayalam news headlines. Presently, the following news categories are supported:

* Business

* Sports

* Entertainment

### Dataset

The dataset used for training this model can be found [here](https://www.kaggle.com/disisbig/malyalam-news-dataset).

### Using the model with HF pipeline

```python

from transformers import pipeline

news_headline = "ക്രിപ്റ്റോ ഇടപാടുകളുടെ വിവരങ്ങൾ ആവശ്യപ്പെട്ട് ആദായനികുതി വകുപ്പ് നോട്ടീസയച്ചു"

model = pipeline(task="text-classification", model="bipin/malayalam-news-classifier")

model(news_headline)

# Output

# [{'label': 'business', 'score': 0.9979357123374939}]

```

### Contact

For feedback and questions, feel free to contact via twitter [@bkrish_](https://twitter.com/bkrish_) |

mshamrai/bert-base-ukr-eng-rus-uncased | mshamrai | 2021-07-21T12:05:26Z | 38 | 0 | transformers | [

"transformers",

"pytorch",

"bert",

"feature-extraction",

"endpoints_compatible",

"region:us"

] | feature-extraction | 2022-03-02T23:29:04Z | This repository shares a smaller version of bert-base-multilingual-uncased that keeps only Ukrainian, English, and Russian tokens in the vocabulary.

| Model | Num parameters | Size |
| ----------------------------------------- | -------------- | --------- |
| bert-base-multilingual-uncased | 167 million | ~650 MB |
| MaxVortman/bert-base-ukr-eng-rus-uncased | 110 million | ~423 MB | |

defex/distilgpt2-finetuned-amazon-reviews | defex | 2021-07-21T10:36:15Z | 5 | 1 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"text-generation",

"generated_from_trainer",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

tags:

- generated_from_trainer

datasets:

- null

model_index:

- name: distilgpt2-finetuned-amazon-reviews

results:

- task:

name: Causal Language Modeling

type: text-generation

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilgpt2-finetuned-amazon-reviews

This model was trained from scratch on the None dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Framework versions

- Transformers 4.8.2

- Pytorch 1.9.0+cu102

- Datasets 1.9.0

- Tokenizers 0.10.3

|

ifis-zork/ZORK_AI_FANTASY | ifis-zork | 2021-07-21T09:50:17Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"text-generation",

"generated_from_trainer",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

tags:

- generated_from_trainer

model_index:

- name: ZORK_AI_FANTASY

results:

- task:

name: Causal Language Modeling

type: text-generation

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# ZORK_AI_FANTASY

This model is a fine-tuned version of [ifis-zork/ZORK_AI_FAN_TEMP](https://huggingface.co/ifis-zork/ZORK_AI_FAN_TEMP) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 1

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 200

- num_epochs: 3

### Training results

### Framework versions

- Transformers 4.8.2

- Pytorch 1.9.0+cu102

- Tokenizers 0.10.3

|

flax-community/clip-vision-bert-cc12m-60k | flax-community | 2021-07-21T09:17:15Z | 9 | 2 | transformers | [

"transformers",

"jax",

"clip-vision-bert",

"fill-mask",

"arxiv:1908.03557",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | 2022-03-02T23:29:05Z | # CLIP-Vision-BERT Multilingual Pre-trained Model

CLIP-Vision-BERT model pre-trained on translated [Conceptual-12M](https://github.com/google-research-datasets/conceptual-12m) image-text pairs using a masked language modeling (MLM) objective. 10M cleaned image-text pairs are translated using the [mBART-50 one-to-many model](https://huggingface.co/facebook/mbart-large-50-one-to-many-mmt) to 2.5M examples each in English, French, German and Spanish. This model is based on VisualBERT, which was introduced in [this paper](https://arxiv.org/abs/1908.03557) and first released in [this repository](https://github.com/uclanlp/visualbert). We trained the CLIP-Vision-BERT model during the community week hosted by Huggingface 🤗 using JAX/Flax.

This checkpoint is pre-trained for 60k steps.

## Model description

CLIP-Vision-BERT is a modified BERT model which takes in visual embeddings from CLIP-Vision transformer and concatenates them with BERT textual embeddings before passing them to the self-attention layers of BERT. This is done for deep cross-modal interaction between the two modes.

## Intended uses & limitations❗️

You can use the raw model for masked language modeling, but it's mostly intended to be fine-tuned on a downstream task.

Note that this model is primarily aimed at being fine-tuned on tasks such as visuo-linguistic sequence classification or visual question answering. We fine-tuned this model on a multi-translated version of the visual question answering task - [VQA v2](https://visualqa.org/challenge.html). Since Conceptual-12M is a dataset scraped from the internet, it will involve some biases which will also affect all fine-tuned versions of this model.

### How to use❓

You can use this model directly with a pipeline for masked language modeling. You will need to clone the model from [here](https://github.com/gchhablani/multilingual-vqa). An example of usage is shown below:

```python

>>> from torchvision.io import read_image

>>> import numpy as np

>>> import os

>>> from transformers import CLIPProcessor, BertTokenizerFast

>>> from model.flax_clip_vision_bert.modeling_clip_vision_bert import FlaxCLIPVisionBertForMaskedLM

>>> image_path = os.path.join('images/val2014', os.listdir('images/val2014')[0])

>>> img = read_image(image_path)

>>> clip_processor = CLIPProcessor.from_pretrained('openai/clip-vit-base-patch32')

ftfy or spacy is not installed using BERT BasicTokenizer instead of ftfy.

>>> clip_outputs = clip_processor(images=img)

>>> clip_outputs['pixel_values'][0] = clip_outputs['pixel_values'][0].transpose(1,2,0) # Need to transpose images as model expected channel last images.

>>> tokenizer = BertTokenizerFast.from_pretrained('bert-base-multilingual-uncased')

>>> model = FlaxCLIPVisionBertForMaskedLM.from_pretrained('flax-community/clip-vision-bert-cc12m-60k')

>>> text = "Three teddy [MASK] in a showcase."

>>> tokens = tokenizer([text], return_tensors="np")

>>> pixel_values = np.concatenate([clip_outputs['pixel_values']])

>>> outputs = model(pixel_values=pixel_values, **tokens)

>>> indices = np.where(tokens['input_ids']==tokenizer.mask_token_id)

>>> preds = outputs.logits[indices][0]

>>> sorted_indices = np.argsort(preds)[::-1] # Get reverse sorted scores

/home/crocoder/anaconda3/lib/python3.8/site-packages/jax/_src/numpy/lax_numpy.py:4615: UserWarning: 'kind' argument to argsort is ignored.

warnings.warn("'kind' argument to argsort is ignored.")

>>> top_5_indices = sorted_indices[:5]

>>> top_5_tokens = tokenizer.convert_ids_to_tokens(top_5_indices)

>>> top_5_scores = preds[top_5_indices]

>>> print(dict(zip(top_5_tokens, top_5_scores)))

{'bears': 19.241959, 'bear': 17.700356, 'animals': 14.368396, 'girls': 14.343797, 'dolls': 14.274415}

```

## Training data 🏋🏻♂️

The CLIP-Vision-BERT model was pre-trained on a translated version of the Conceptual-12m dataset in four languages using mBART-50: English, French, German and Spanish, with 2.5M image-text pairs in each.

The dataset captions and image urls can be downloaded from [flax-community/conceptual-12m-mbart-50-translated](https://huggingface.co/datasets/flax-community/conceptual-12m-mbart-50-multilingual).

## Data Cleaning 🧹

Though the original dataset contains 12M image-text pairs, a lot of the URLs are invalid now, and in some cases, images are corrupt or broken. We remove such examples from our data, which leaves us with approximately 10M image-text pairs.

**Splits**

We used 99% of the 10M examples as a train set, and the remaining ~ 100K examples as our validation set.

## Training procedure 👨🏻💻

### Preprocessing

The texts are lowercased and tokenized using WordPiece with a shared vocabulary of approximately 110,000 tokens. The beginning of a new document is marked with `[CLS]` and the end of one with `[SEP]`.

The details of the masking procedure for each sentence are the following:

- 15% of the tokens are masked.

- In 80% of the cases, the masked tokens are replaced by `[MASK]`.

- In 10% of the cases, the masked tokens are replaced by a random token different from the one they replace.

- In the 10% remaining cases, the masked tokens are left as is.
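
The masking rules above can be sketched in plain Python as follows. This is an illustration of the 15% / 80-10-10 scheme, not the project's actual data collator: the function name `mask_tokens`, the `-100` ignore label, and the toy token ids are assumptions, and special tokens are not treated differently here.

```python
import random

def mask_tokens(token_ids, mask_id, vocab_size, mask_prob=0.15, seed=0):
    """Apply BERT-style masking; returns (model inputs, labels)."""
    rng = random.Random(seed)
    inputs = list(token_ids)
    labels = [-100] * len(inputs)  # -100 marks positions ignored by the loss (assumed convention)
    for i, token in enumerate(token_ids):
        if rng.random() >= mask_prob:
            continue  # about 85% of positions are left untouched and unlabeled
        labels[i] = token
        roll = rng.random()
        if roll < 0.8:
            inputs[i] = mask_id  # 80%: replace with [MASK]
        elif roll < 0.9:
            # 10%: replace with a random token different from the original
            replacement = rng.randrange(vocab_size - 1)
            if replacement >= token:
                replacement += 1
            inputs[i] = replacement
        # remaining 10%: keep the original token, but still predict it
    return inputs, labels

# Hypothetical ids: a vocabulary of 1,000 tokens with [MASK] at id 999.
original = [i % 900 for i in range(10_000)]
masked_inputs, masked_labels = mask_tokens(original, mask_id=999, vocab_size=1_000)
selected = sum(label != -100 for label in masked_labels)
print(f"{selected / len(original):.1%} of positions selected for prediction")
```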

The visual embeddings are taken from the CLIP-Vision model and combined with the textual embeddings inside the BERT embedding layer. The padding is done in the middle. Here is an example of what the embeddings look like:

```

[CLS Emb] [Textual Embs] [SEP Emb] [Pad Embs] [Visual Embs]

```

A total length of 128 tokens, including the visual embeddings, is used. The texts are truncated or padded accordingly.
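
As a rough illustration of this layout, the sketch below pads or truncates the text ids so that text plus visual embeddings add up to 128 positions. The number of visual embeddings (50 here), the pad id, and the token ids are placeholder assumptions; the source does not state them, and the helper `pad_text_for_visual` is hypothetical.

```python
def pad_text_for_visual(text_ids, num_visual, total_len=128, pad_id=0):
    """Pad or truncate text ids so that text + visual positions equal total_len."""
    budget = total_len - num_visual  # positions left for [CLS] text [SEP] and padding
    if budget < 2:
        raise ValueError("not enough room for [CLS] and [SEP]")
    ids = list(text_ids)
    if len(ids) > budget:
        # Truncate the text but keep the final [SEP] token.
        ids = ids[:budget - 1] + [ids[-1]]
    # Padding goes after the text, i.e. in the middle of the full sequence.
    return ids + [pad_id] * (budget - len(ids))

# Hypothetical ids: 101 = [CLS], 102 = [SEP], 0 = [PAD].
text_ids = [101, 2001, 2002, 2003, 102]
padded_text = pad_text_for_visual(text_ids, num_visual=50)
print(len(padded_text), padded_text[:8])
```

The visual embeddings would then be appended after these positions inside the embedding layer, giving the `[CLS] ... [SEP] [Pad] [Visual]` order shown above.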

### Pretraining

The checkpoint of the model was trained on Google Cloud Engine TPUv3-8 machine (with 335 GB of RAM, 1000 GB of hard drive, 96 CPU cores) **8 v3 TPU cores** for 60k steps with a per device batch size of 64 and a max sequence length of 128. The optimizer used is Adafactor with a learning rate of 1e-4, learning rate warmup for 5,000 steps, and linear decay of the learning rate after.
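
The shape of this learning rate schedule can be sketched in a few lines of plain Python. The peak rate and warmup length come from the description above; `total_steps` is a hypothetical value, since the step at which the decay reaches zero is not stated here, and the function is not the optimizer code used in training.

```python
def linear_warmup_decay(step, peak_lr=1e-4, warmup_steps=5_000, total_steps=100_000):
    """Linear warmup to peak_lr, then linear decay to zero at total_steps."""
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    remaining = max(total_steps - step, 0)
    return peak_lr * remaining / (total_steps - warmup_steps)

for step in (0, 2_500, 5_000, 60_000, 100_000):
    print(step, linear_warmup_decay(step))
```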

We tracked experiments using TensorBoard. Here is the link to the main dashboard: [CLIP Vision BERT CC12M Pre-training Dashboard](https://huggingface.co/flax-community/multilingual-vqa-pt-ckpts/tensorboard)

#### **Pretraining Results 📊**

The model at this checkpoint reached an **eval accuracy of 67.53%**, with **train loss at 1.793 and eval loss at 1.724**.

## Fine Tuning on downstream tasks

We performed fine-tuning on downstream tasks. We used the following datasets for visual question answering:

1. Multilingual version of [Visual Question Answering (VQA) v2](https://visualqa.org/challenge.html) - We translated this dataset to the four languages using `Helsinki-NLP` Marian models. The translated data can be found at [flax-community/multilingual-vqa](https://huggingface.co/datasets/flax-community/multilingual-vqa).

The checkpoints for the fine-tuned model on this pre-trained checkpoint can be found [here](https://huggingface.co/flax-community/multilingual-vqa-pt-60k-ft/tensorboard).

The fine-tuned model achieves eval accuracy of 49% on our validation dataset.

## Team Members

- Gunjan Chhablani [@gchhablani](https://hf.co/gchhablani)

- Bhavitvya Malik [@bhavitvyamalik](https://hf.co/bhavitvyamalik)

## Acknowledgements

We thank [Nilakshan Kunananthaseelan](https://huggingface.co/knilakshan20) for helping us whenever he could get a chance. We also thank [Abheesht Sharma](https://huggingface.co/abheesht) for helping in the discussions in the initial phases. [Luke Melas](https://github.com/lukemelas) helped us get the CC-12M data on our TPU-VMs and we are very grateful to him.

This project would not be possible without the help of [Patrick](https://huggingface.co/patrickvonplaten) and [Suraj](https://huggingface.co/valhalla) who met with us frequently and helped review our approach and guided us throughout the project.

Huge thanks to Huggingface 🤗 & Google Jax/Flax team for such a wonderful community week and for answering our queries on the Slack channel, and for providing us with the TPU-VMs.

<img src=https://pbs.twimg.com/media/E443fPjX0AY1BsR.jpg:large>

|

huggingtweets/grapefried | huggingtweets | 2021-07-21T08:54:37Z | 3 | 0 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

language: en

thumbnail: https://www.huggingtweets.com/grapefried/1626857673378/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1392696284549632008/QOl3l-zh_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">ju1ce💎</div>

<div style="text-align: center; font-size: 14px;">@grapefried</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from ju1ce💎.

| Data | ju1ce💎 |
| --- | --- |
| Tweets downloaded | 2034 |
| Retweets | 504 |
| Short tweets | 403 |
| Tweets kept | 1127 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/1actx5cl/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @grapefried's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1a1nwhd0) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1a1nwhd0/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/grapefried')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

flax-community/clip-vision-bert-cc12m-70k | flax-community | 2021-07-21T08:48:04Z | 7 | 1 | transformers | [

"transformers",

"jax",

"clip-vision-bert",

"fill-mask",

"arxiv:1908.03557",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | 2022-03-02T23:29:05Z | # CLIP-Vision-BERT Multilingual Pre-trained Model

CLIP-Vision-BERT model pre-trained on translated [Conceptual-12M](https://github.com/google-research-datasets/conceptual-12m) image-text pairs using a masked language modeling (MLM) objective. 10M cleaned image-text pairs are translated using the [mBART-50 one-to-many model](https://huggingface.co/facebook/mbart-large-50-one-to-many-mmt) to 2.5M examples each in English, French, German and Spanish. This model is based on VisualBERT, which was introduced in [this paper](https://arxiv.org/abs/1908.03557) and first released in [this repository](https://github.com/uclanlp/visualbert). We trained the CLIP-Vision-BERT model during the community week hosted by Huggingface 🤗 using JAX/Flax.

This checkpoint is pre-trained for 70k steps.

## Model description

CLIP-Vision-BERT is a modified BERT model which takes in visual embeddings from CLIP-Vision transformer and concatenates them with BERT textual embeddings before passing them to the self-attention layers of BERT. This is done for deep cross-modal interaction between the two modes.

## Intended uses & limitations❗️

You can use the raw model for masked language modeling, but it's mostly intended to be fine-tuned on a downstream task.

Note that this model is primarily aimed at being fine-tuned on tasks such as visuo-linguistic sequence classification or visual question answering. We fine-tuned this model on a multi-translated version of the visual question answering task - [VQA v2](https://visualqa.org/challenge.html). Since Conceptual-12M is a dataset scraped from the internet, it will involve some biases which will also affect all fine-tuned versions of this model.

### How to use❓

You can use this model directly with a pipeline for masked language modeling. You will need to clone the model from [here](https://github.com/gchhablani/multilingual-vqa). An example of usage is shown below:

```python

>>> from torchvision.io import read_image

>>> import numpy as np

>>> import os

>>> from transformers import CLIPProcessor, BertTokenizerFast

>>> from model.flax_clip_vision_bert.modeling_clip_vision_bert import FlaxCLIPVisionBertForMaskedLM

>>> image_path = os.path.join('images/val2014', os.listdir('images/val2014')[0])

>>> img = read_image(image_path)

>>> clip_processor = CLIPProcessor.from_pretrained('openai/clip-vit-base-patch32')

ftfy or spacy is not installed using BERT BasicTokenizer instead of ftfy.

>>> clip_outputs = clip_processor(images=img)

>>> clip_outputs['pixel_values'][0] = clip_outputs['pixel_values'][0].transpose(1,2,0) # Need to transpose images as model expected channel last images.

>>> tokenizer = BertTokenizerFast.from_pretrained('bert-base-multilingual-uncased')

>>> model = FlaxCLIPVisionBertForMaskedLM.from_pretrained('flax-community/clip-vision-bert-cc12m-70k')

>>> text = "Three teddy [MASK] in a showcase."

>>> tokens = tokenizer([text], return_tensors="np")

>>> pixel_values = np.concatenate([clip_outputs['pixel_values']])

>>> outputs = model(pixel_values=pixel_values, **tokens)

>>> indices = np.where(tokens['input_ids']==tokenizer.mask_token_id)

>>> preds = outputs.logits[indices][0]

>>> sorted_indices = np.argsort(preds)[::-1] # Get reverse sorted scores

>>> top_5_indices = sorted_indices[:5]

>>> top_5_tokens = tokenizer.convert_ids_to_tokens(top_5_indices)

>>> top_5_scores = preds[top_5_indices]

>>> print(dict(zip(top_5_tokens, top_5_scores)))

{'bears': 19.400345, 'bear': 17.866995, 'animals': 14.453735, 'dogs': 14.427426, 'girls': 14.097499}

```

## Training data 🏋🏻♂️

The CLIP-Vision-BERT model was pre-trained on a translated version of the Conceptual-12m dataset in four languages using mBART-50: English, French, German and Spanish, with 2.5M image-text pairs in each.

The dataset captions and image URLs can be downloaded from [flax-community/conceptual-12m-mbart-50-multilingual](https://huggingface.co/datasets/flax-community/conceptual-12m-mbart-50-multilingual).

## Data Cleaning 🧹

Though the original dataset contains 12M image-text pairs, a lot of the URLs are invalid now, and in some cases, images are corrupt or broken. We remove such examples from our data, which leaves us with approximately 10M image-text pairs.

**Splits**

We used 99% of the 10M examples as the training set, and the remaining ~100K examples as our validation set.

## Training procedure 👨🏻💻

### Preprocessing

The texts are lowercased and tokenized using WordPiece with a shared vocabulary of approximately 110,000 tokens. The beginning of a new document is marked with `[CLS]` and the end of one with `[SEP]`.

The details of the masking procedure for each sentence are the following:

- 15% of the tokens are masked.

- In 80% of the cases, the masked tokens are replaced by `[MASK]`.

- In 10% of the cases, the masked tokens are replaced by a random token different from the one they replace.

- In the 10% remaining cases, the masked tokens are left as is.
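The masking rules above can be sketched in plain Python. This is a minimal illustration, not the project's actual data collator: the function name `mask_tokens`, the per-token independent 15% draw, and the choice to skip special tokens are all assumptions made for the example.

```python
import random

MASK_TOKEN = "[MASK]"
SPECIAL_TOKENS = {"[CLS]", "[SEP]", "[PAD]"}  # assumption: special tokens are never selected


def mask_tokens(tokens, vocab, mask_prob=0.15, seed=None):
    """Return (masked_tokens, labels); labels[i] is None where nothing is predicted."""
    rng = random.Random(seed)
    masked = list(tokens)
    labels = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if tok in SPECIAL_TOKENS:
            continue
        if rng.random() >= mask_prob:
            continue  # token not selected for prediction
        labels[i] = tok  # the model must recover the original token here
        roll = rng.random()
        if roll < 0.8:
            masked[i] = MASK_TOKEN  # 80%: replace with [MASK]
        elif roll < 0.9:
            # 10%: replace with a random token different from the original
            candidates = [v for v in vocab if v != tok]
            if candidates:
                masked[i] = rng.choice(candidates)
        # remaining 10%: leave the token unchanged
    return masked, labels
```

Calling `mask_tokens(["[CLS]", "three", "teddy", "bears", "[SEP]"], vocab)` returns the possibly altered sequence together with the labels used for the masked-language-modeling loss.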

The visual embeddings are taken from the CLIP-Vision model and combined with the textual embeddings inside the BERT embedding layer. The padding is done in the middle. Here is an example of what the embeddings look like:

```

[CLS Emb] [Textual Embs] [SEP Emb] [Pad Embs] [Visual Embs]

```

A total length of 128 tokens, including the visual embeddings, is used. The texts are truncated or padded accordingly.
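The layout above can be sketched with NumPy. This is an illustration under stated assumptions rather than the model's real embedding code: the helper name `build_layout` is hypothetical, the text embeddings are assumed to already include the `[CLS]` and `[SEP]` positions, and over-long text is truncated while keeping the final `[SEP]` position.

```python
import numpy as np


def build_layout(text_embs, visual_embs, pad_emb, total_len=128):
    """Arrange [CLS+text+SEP] [PAD ...] [visual] along the sequence axis.

    text_embs:   (T, H) array, assumed to include the CLS and SEP embeddings
    visual_embs: (V, H) array of visual embeddings
    pad_emb:     (H,) padding embedding
    """
    max_text = total_len - visual_embs.shape[0]
    if text_embs.shape[0] > max_text:
        # Truncate the text but keep the last (SEP) embedding in place.
        text_embs = np.concatenate([text_embs[: max_text - 1], text_embs[-1:]], axis=0)
    n_pad = max_text - text_embs.shape[0]
    pads = np.tile(pad_emb, (n_pad, 1))  # padding sits between text and visual parts
    return np.concatenate([text_embs, pads, visual_embs], axis=0)
```

With, say, 10 text positions and 50 visual positions (an illustrative count, not a figure from this card), the result has 68 padding positions in the middle and a total length of 128.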

### Pretraining

This checkpoint was trained on a Google Cloud Engine TPUv3-8 machine (335 GB of RAM, 1000 GB of hard drive, 96 CPU cores) with **8 v3 TPU cores** for 70k steps, using a per-device batch size of 64 and a max sequence length of 128. The optimizer is Adafactor with a learning rate of 1e-4, learning rate warmup for 1,000 steps, and linear decay of the learning rate afterwards.
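As a rough sketch of the schedule described here, the learning rate at a given step can be computed as follows. The function name is hypothetical, and two details are assumptions not stated in this card: that warmup starts from zero, and that the linear decay reaches zero exactly at step 70k. The global batch size assumes plain data parallelism across the 8 TPU cores.

```python
PER_DEVICE_BATCH = 64
NUM_DEVICES = 8
GLOBAL_BATCH = PER_DEVICE_BATCH * NUM_DEVICES  # 512, assuming data parallelism


def learning_rate(step, peak_lr=1e-4, warmup_steps=1_000, total_steps=70_000):
    """Linear warmup to peak_lr, then linear decay (assumed to end at zero)."""
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    remaining = max(total_steps - step, 0)
    return peak_lr * remaining / (total_steps - warmup_steps)
```

Under these assumptions the rate climbs to 1e-4 at step 1,000 and falls back to zero at step 70,000.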

We tracked experiments using TensorBoard. Here is the link to the main dashboard: [CLIP Vision BERT CC12M Pre-training Dashboard](https://huggingface.co/flax-community/multilingual-vqa-pt-ckpts/tensorboard)

#### **Pretraining Results 📊**

The model at this checkpoint reached an **eval accuracy of 67.85%**, with a **train loss of 1.756 and an eval loss of 1.706**.

## Team Members

- Gunjan Chhablani [@gchhablani](https://hf.co/gchhablani)

- Bhavitvya Malik [@bhavitvyamalik](https://hf.co/bhavitvyamalik)

## Acknowledgements

We thank [Nilakshan Kunananthaseelan](https://huggingface.co/knilakshan20) for helping us whenever he could get a chance. We also thank [Abheesht Sharma](https://huggingface.co/abheesht) for helping in the discussions in the initial phases. [Luke Melas](https://github.com/lukemelas) helped us get the CC-12M data on our TPU-VMs and we are very grateful to him.

This project would not be possible without the help of [Patrick](https://huggingface.co/patrickvonplaten) and [Suraj](https://huggingface.co/valhalla) who met with us frequently and helped review our approach and guided us throughout the project.

Huge thanks to Hugging Face 🤗 and the Google JAX/Flax team for such a wonderful community week, for answering our queries on the Slack channel, and for providing us with the TPU-VMs.

<img src=https://pbs.twimg.com/media/E443fPjX0AY1BsR.jpg:large>

|

junnyu/uer_large | junnyu | 2021-07-21T08:42:35Z | 4 | 2 | transformers | [

"transformers",

"pytorch",

"bert",

"fill-mask",

"zh",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | 2022-03-02T23:29:05Z | ---

language: zh

tags:

- bert

- pytorch

widget:

- text: "巴黎是[MASK]国的首都。"

---

From https://github.com/dbiir/UER-py/wiki/Modelzoo:

MixedCorpus+BertEncoder(large)+MlmTarget

https://share.weiyun.com/5G90sMJ

Pre-trained on a large mixed Chinese corpus. The configuration file is bert_large_config.json.

## Citation

```tex

@article{zhao2019uer,

title={UER: An Open-Source Toolkit for Pre-training Models},

author={Zhao, Zhe and Chen, Hui and Zhang, Jinbin and Zhao, Xin and Liu, Tao and Lu, Wei and Chen, Xi and Deng, Haotang and Ju, Qi and Du, Xiaoyong},

journal={EMNLP-IJCNLP 2019},

pages={241},

year={2019}

}

```

|

lg/ghpy_20k | lg | 2021-07-20T23:55:56Z | 10 | 2 | transformers | [

"transformers",

"pytorch",

"gpt_neo",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | **This model is provided with no guarantees whatsoever; use at your own risk.**

This is a GPT-Neo 2.7B model fine-tuned for 20k steps on GitHub data scraped by an EleutherAI member (filtered for Python only). A better code model is coming soon™ (hopefully, maybe); this model was created mostly as a test of infrastructure code. |

ifis-zork/IFIS_ZORK_AI_MEDIUM_HORROR | ifis-zork | 2021-07-20T23:14:58Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"text-generation",

"generated_from_trainer",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

tags:

- generated_from_trainer

model_index:

- name: IFIS_ZORK_AI_MEDIUM_HORROR

results:

- task:

name: Causal Language Modeling

type: text-generation

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# IFIS_ZORK_AI_MEDIUM_HORROR

This model is a fine-tuned version of [gpt2-medium](https://huggingface.co/gpt2-medium) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 1

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 200

- num_epochs: 3
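For readers who want to reproduce a similar setup, the values listed above map roughly onto `transformers.TrainingArguments` keyword arguments as sketched below. Treating the batch sizes as per-device values is an assumption, and `output_dir="out"` in the commented usage is a placeholder, not something taken from this card.

```python
# Hyperparameters from the list above, keyed by TrainingArguments field names.
training_kwargs = {
    "learning_rate": 5e-5,
    "per_device_train_batch_size": 1,  # assumption: listed batch size is per device
    "per_device_eval_batch_size": 2,   # assumption: listed batch size is per device
    "seed": 42,
    "adam_beta1": 0.9,
    "adam_beta2": 0.999,
    "adam_epsilon": 1e-8,
    "lr_scheduler_type": "linear",
    "warmup_steps": 200,
    "num_train_epochs": 3,
}

# Usage (requires the transformers library; output_dir is a placeholder):
# from transformers import TrainingArguments
# args = TrainingArguments(output_dir="out", **training_kwargs)
```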

### Training results

### Framework versions

- Transformers 4.8.2

- Pytorch 1.9.0+cu102

- Tokenizers 0.10.3

|

espnet/kan-bayashi_csmsc_conformer_fastspeech2 | espnet | 2021-07-20T21:31:29Z | 8 | 1 | espnet | [

"espnet",

"audio",

"text-to-speech",

"zh",

"dataset:csmsc",

"arxiv:1804.00015",

"license:cc-by-4.0",

"region:us"

] | text-to-speech | 2022-03-02T23:29:05Z | ---

tags:

- espnet

- audio

- text-to-speech

language: zh

datasets:

- csmsc

license: cc-by-4.0

---

## ESPnet2 TTS pretrained model

### `kan-bayashi/csmsc_conformer_fastspeech2`

♻️ Imported from https://zenodo.org/record/4031955/

This model was trained by kan-bayashi using the csmsc/tts1 recipe in [espnet](https://github.com/espnet/espnet/).

### Demo: How to use in ESPnet2

```python

# coming soon

```

### Citing ESPnet

```BibTex

@inproceedings{watanabe2018espnet,

author={Shinji Watanabe and Takaaki Hori and Shigeki Karita and Tomoki Hayashi and Jiro Nishitoba and Yuya Unno and Nelson {Enrique Yalta Soplin} and Jahn Heymann and Matthew Wiesner and Nanxin Chen and Adithya Renduchintala and Tsubasa Ochiai},