modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

kraken2404/ppo_lunarlander_unit1_v2 | kraken2404 | 2023-05-27T09:17:45Z | 0 | 0 | stable-baselines3 | [

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] | reinforcement-learning | 2023-05-27T08:58:10Z | ---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: -65.20 +/- 40.64

name: mean_reward

verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```
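Until the usage section is filled in, a minimal loading sketch might look like the following. It assumes the `huggingface_sb3` and `stable-baselines3` packages are installed. `load_ppo_agent` is a hypothetical helper name, and the checkpoint filename is not stated in this card, so it is left as a parameter rather than guessed.

```python
def load_ppo_agent(repo_id: str, filename: str):
    """Download a Stable-Baselines3 checkpoint from the Hub and load it as PPO."""
    # Imported lazily so that defining this helper does not require the
    # optional dependencies to be installed.
    from huggingface_sb3 import load_from_hub
    from stable_baselines3 import PPO

    # load_from_hub returns the local path of the downloaded checkpoint file.
    checkpoint_path = load_from_hub(repo_id=repo_id, filename=filename)
    return PPO.load(checkpoint_path)


# Example call (the filename is a placeholder; check the repository's file list):
# model = load_ppo_agent("kraken2404/ppo_lunarlander_unit1_v2", "<checkpoint>.zip")
```

Keeping the filename as an argument avoids hard-coding a name that may differ between repositories.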

|

YakovElm/Qt10Classic_32 | YakovElm | 2023-05-27T08:52:28Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T08:51:48Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Qt10Classic_32

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Qt10Classic_32

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2267

- Train Accuracy: 0.9208

- Validation Loss: 0.2144

- Validation Accuracy: 0.9416

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32
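The optimizer entry above is printed as a Python dict literal rather than JSON. As a small sketch, it can be read back with the standard library as shown here; the variable names are illustrative only and are not part of the card.

```python
import ast

# Optimizer entry copied from the card (a Python dict literal, not JSON).
optimizer_text = (
    "{'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, "
    "'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, "
    "'ema_momentum': 0.99, 'ema_overwrite_frequency': None, "
    "'jit_compile': False, 'is_legacy_optimizer': False, "
    "'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, "
    "'epsilon': 1e-08, 'amsgrad': False}"
)

# literal_eval evaluates literals only; json.loads would reject the
# single quotes and the None/False tokens.
optimizer_config = ast.literal_eval(optimizer_text)

# Keep only the settings that are actually set (drop the None entries).
active_settings = {k: v for k, v in optimizer_config.items() if v is not None}
print(active_settings["learning_rate"], active_settings["clipnorm"])
```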

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.2754 | 0.9202 | 0.2156 | 0.9416 | 0 |

| 0.2484 | 0.9210 | 0.2215 | 0.9416 | 1 |

| 0.2267 | 0.9208 | 0.2144 | 0.9416 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

kraken2404/ppo_lunarlander_unit1 | kraken2404 | 2023-05-27T08:41:47Z | 2 | 0 | stable-baselines3 | [

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] | reinforcement-learning | 2023-05-27T07:44:48Z | ---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: -326.26 +/- 71.54

name: mean_reward

verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

kraken2404/unit8_1_lunarlander_v2_2 | kraken2404 | 2023-05-27T08:20:12Z | 0 | 0 | null | [

"tensorboard",

"LunarLander-v2",

"ppo",

"deep-reinforcement-learning",

"reinforcement-learning",

"custom-implementation",

"deep-rl-course",

"model-index",

"region:us"

] | reinforcement-learning | 2023-05-27T08:16:15Z | ---

tags:

- LunarLander-v2

- ppo

- deep-reinforcement-learning

- reinforcement-learning

- custom-implementation

- deep-rl-course

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: -117.88 +/- 50.03

name: mean_reward

verified: false

---

# PPO Agent Playing LunarLander-v2

This is a trained model of a PPO agent playing LunarLander-v2.

# Hyperparameters

```python

{'exp_name': 'ppo',
 'seed': 1,
 'torch_deterministic': True,
 'cuda': True,
 'track': False,
 'wandb_project_name': 'cleanRL',
 'wandb_entity': None,
 'capture_video': False,
 'env_id': 'LunarLander-v2',
 'total_timesteps': 100000,
 'learning_rate': 0.00025,
 'num_envs': 4,
 'num_steps': 128,
 'anneal_lr': True,
 'gae': True,
 'gamma': 0.99,
 'gae_lambda': 0.95,
 'num_minibatches': 4,
 'update_epochs': 4,
 'norm_adv': True,
 'clip_coef': 0.2,
 'clip_vloss': True,
 'ent_coef': 0.01,
 'vf_coef': 0.5,
 'max_grad_norm': 0.5,
 'target_kl': None,
 'repo_id': 'kraken2404/unit8_1_lunarlander_v2_2',
 'batch_size': 512,
 'minibatch_size': 128}

```
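The `batch_size` and `minibatch_size` entries in the dump above appear to be derived from the other settings rather than chosen independently. The sketch below reproduces them, assuming the usual PPO convention that one rollout gathers `num_steps` transitions from each of `num_envs` environments; `num_updates` is an illustrative name not found in the card.

```python
# Values copied from the hyperparameter dump above.
num_envs = 4
num_steps = 128
num_minibatches = 4
total_timesteps = 100_000

# Each rollout collects num_steps transitions from every parallel environment.
batch_size = num_envs * num_steps
# The rollout is split into equally sized minibatches for each update epoch.
minibatch_size = batch_size // num_minibatches
# Number of full rollout/update cycles that fit in the timestep budget.
num_updates = total_timesteps // batch_size

print(batch_size, minibatch_size, num_updates)
```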

|

YakovElm/MariaDB15Classic_64 | YakovElm | 2023-05-27T08:06:04Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T08:05:30Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: MariaDB15Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# MariaDB15Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.1915

- Train Accuracy: 0.9381

- Validation Loss: 0.1826

- Validation Accuracy: 0.9372

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.2834 | 0.9096 | 0.1811 | 0.9598 | 0 |

| 0.2120 | 0.9238 | 0.1664 | 0.9598 | 1 |

| 0.1915 | 0.9381 | 0.1826 | 0.9372 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

YakovElm/MariaDB10Classic_64 | YakovElm | 2023-05-27T07:55:55Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T07:55:18Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: MariaDB10Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# MariaDB10Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.1941

- Train Accuracy: 0.9339

- Validation Loss: 0.1951

- Validation Accuracy: 0.9472

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.2920 | 0.9004 | 0.1959 | 0.9523 | 0 |

| 0.2384 | 0.9155 | 0.1959 | 0.9472 | 1 |

| 0.1941 | 0.9339 | 0.1951 | 0.9472 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

StevenLimcorn/indonesian-roberta-base-emotion-classifier | StevenLimcorn | 2023-05-27T07:47:15Z | 207 | 14 | transformers | [

"transformers",

"pytorch",

"tf",

"safetensors",

"roberta",

"text-classification",

"id",

"dataset:indonlu",

"doi:10.57967/hf/0681",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2022-03-02T23:29:05Z | ---

language: id

tags:

- roberta

license: mit

datasets:

- indonlu

widget:

- text: "Hal-hal baik akan datang."

---

# Indo RoBERTa Emotion Classifier

Indo RoBERTa Emotion Classifier is an emotion classifier based on the [Indo-roberta](https://huggingface.co/flax-community/indonesian-roberta-base) model. It was fine-tuned via transfer learning on the [IndoNLU EmoT](https://huggingface.co/datasets/indonlu) dataset. On the [IndoNLU benchmark](https://www.indobenchmark.com/), the model achieves a macro-F1 of 72.05%, an accuracy of 71.81%, a precision of 72.47%, and a recall of 71.94%.

## Model

The model was trained for 7 epochs with a learning rate of 2e-5. The metrics for each epoch are shown below.

| Epoch | Training Loss | Validation Loss | Accuracy | F1 | Precision | Recall |

|-------|---------------|-----------------|----------|----------|-----------|----------|

| 1 | 1.300700 | 1.005149 | 0.622727 | 0.601846 | 0.640845 | 0.611144 |

| 2 | 0.806300 | 0.841953 | 0.686364 | 0.694096 | 0.701984 | 0.696657 |

| 3 | 0.591900 | 0.796794 | 0.686364 | 0.696573 | 0.707520 | 0.691671 |

| 4 | 0.441200 | 0.782094 | 0.722727 | 0.724359 | 0.725985 | 0.730229 |

| 5 | 0.334700 | 0.809931 | 0.711364 | 0.720550 | 0.718318 | 0.724608 |

| 6 | 0.268400 | 0.812771 | 0.718182 | 0.724192 | 0.721222 | 0.729195 |

| 7 | 0.226000 | 0.828461 | 0.725000 | 0.733625 | 0.731709 | 0.735800 |

## How to Use

### As Text Classifier

```python

from transformers import pipeline

pretrained_name = "StevenLimcorn/indonesian-roberta-base-emotion-classifier"

nlp = pipeline(

"sentiment-analysis",

model=pretrained_name,

tokenizer=pretrained_name

)

nlp("Hal-hal baik akan datang.")

```

## Disclaimer

Do consider the biases which come from both the pre-trained RoBERTa model and the `EmoT` dataset that may be carried over into the results of this model.

## Author

Indonesian RoBERTa Base Emotion Classifier was trained and evaluated by [Steven Limcorn](https://github.com/stevenlimcorn). All computation and development were done on Google Colaboratory using its free GPU access.

If used, please cite

```bibtex

@misc {steven_limcorn_2023,

author = { {Steven Limcorn} },

title = { indonesian-roberta-base-emotion-classifier (Revision e8a9cb9) },

year = 2023,

url = { https://huggingface.co/StevenLimcorn/indonesian-roberta-base-emotion-classifier },

doi = { 10.57967/hf/0681 },

publisher = { Hugging Face }

}

``` |

YakovElm/MariaDB5Classic_64 | YakovElm | 2023-05-27T07:47:05Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T07:46:25Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: MariaDB5Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# MariaDB5Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2438

- Train Accuracy: 0.9004

- Validation Loss: 0.2560

- Validation Accuracy: 0.9271

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.3297 | 0.8921 | 0.2584 | 0.9322 | 0 |

| 0.2592 | 0.9079 | 0.2489 | 0.9271 | 1 |

| 0.2438 | 0.9004 | 0.2560 | 0.9271 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

nolanaatama/thyngfbtsrvc300pchncltr | nolanaatama | 2023-05-27T07:40:54Z | 0 | 0 | null | [

"license:creativeml-openrail-m",

"region:us"

] | null | 2023-05-27T07:18:44Z | ---

license: creativeml-openrail-m

---

|

YakovElm/MariaDB20Classic_32 | YakovElm | 2023-05-27T07:38:04Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T07:37:18Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: MariaDB20Classic_32

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# MariaDB20Classic_32

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2150

- Train Accuracy: 0.9356

- Validation Loss: 0.1324

- Validation Accuracy: 0.9698

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.2765 | 0.9305 | 0.1945 | 0.9698 | 0 |

| 0.2427 | 0.9356 | 0.1311 | 0.9698 | 1 |

| 0.2150 | 0.9356 | 0.1324 | 0.9698 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

andrei-saceleanu/vit-base-fixmatch | andrei-saceleanu | 2023-05-27T07:34:35Z | 59 | 0 | transformers | [

"transformers",

"tf",

"vit",

"image-feature-extraction",

"generated_from_keras_callback",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | image-feature-extraction | 2023-05-27T07:33:58Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: vit-base-fixmatch

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# vit-base-fixmatch

This model is a fine-tuned version of [google/vit-base-patch16-224](https://huggingface.co/google/vit-base-patch16-224) on an unknown dataset.

It achieves the following results on the evaluation set:

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: None

- training_precision: float32

### Training results

### Framework versions

- Transformers 4.27.4

- TensorFlow 2.12.0

- Tokenizers 0.13.3

|

YakovElm/MariaDB10Classic_32 | YakovElm | 2023-05-27T07:25:01Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T07:24:28Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: MariaDB10Classic_32

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# MariaDB10Classic_32

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.1860

- Train Accuracy: 0.9356

- Validation Loss: 0.2225

- Validation Accuracy: 0.9497

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.2943 | 0.9121 | 0.2078 | 0.9523 | 0 |

| 0.2359 | 0.9213 | 0.2011 | 0.9497 | 1 |

| 0.1860 | 0.9356 | 0.2225 | 0.9497 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

YakovElm/Jira20Classic_64 | YakovElm | 2023-05-27T07:11:37Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T07:11:04Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Jira20Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Jira20Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2121

- Train Accuracy: 0.9224

- Validation Loss: 0.3072

- Validation Accuracy: 0.9085

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.3687 | 0.8678 | 0.2697 | 0.9338 | 0 |

| 0.2722 | 0.8909 | 0.2871 | 0.9306 | 1 |

| 0.2121 | 0.9224 | 0.3072 | 0.9085 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

YakovElm/Jira15Classic_64 | YakovElm | 2023-05-27T07:03:03Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T07:02:29Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Jira15Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Jira15Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.3055

- Train Accuracy: 0.8678

- Validation Loss: 0.8529

- Validation Accuracy: 0.6530

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.4973 | 0.7922 | 0.8065 | 0.5205 | 0 |

| 0.4266 | 0.7849 | 0.8817 | 0.5174 | 1 |

| 0.3055 | 0.8678 | 0.8529 | 0.6530 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

p1atdev/misted | p1atdev | 2023-05-27T06:57:52Z | 0 | 0 | null | [

"license:other",

"region:us"

] | null | 2023-05-27T06:39:54Z | ---

license: other

---

A LoRA (LierLa) trained on images protected with [Mist](https://mist-project.github.io/index_en.html), a LoRA trained on unprotected images, and their datasets.

The base model for training is the base variant of [WD 1.5 beta 3](https://huggingface.co/waifu-diffusion/wd-1-5-beta3).

- [mist-no-1-2-40.safetensors](https://huggingface.co/p1atdev/misted/blob/main/test1/mist-no-1-2-40.safetensors): trained on 5 images from nijijourney

- [mist-1-1-40.safetensors](https://huggingface.co/p1atdev/misted/blob/main/test1/mist-1-1-40.safetensors): trained after applying Mist to all training images with Textural mode, Strength 16, Steps 100, Output size 512, and Low VRAM usage mode

Apart from the training images, both were trained with the same settings.

```

masterpiece, best quality, exceptional, best aesthetic, mst style, 1girl, animal ears, solo, jacket, looking at viewer, tail, shirt, open clothes, white shirt, open jacket, blue hair, fox ears, upper body, blue eyes, hair ornament, black jacket, hairclip, fox tail, outdoors, short hair, closed mouth, bangs

Negative prompt: worst quality, low quality, bad aesthetic, oldest, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, jpeg artifacts, signature, watermark, username, blurry

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3062589856, Size: 512x512, Model hash: d38e779546, Model: wd-beta3-base-fp16, Clip skip: 2, Version: v1.2.1, Script: X/Y/Z plot, X Type: AddNet Model 1, X Values: "None,mist-no-1-2-40(394e4f330c12),mist-1-1-40(d68f0ae289be)"

```

```

masterpiece, best quality, exceptional, best aesthetic, 1girl, upper body, looking at viewer,

Negative prompt: worst quality, low quality, bad aesthetic, oldest, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, jpeg artifacts, signature, watermark, username, blurry

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3331018170, Size: 512x512, Model hash: d38e779546, Model: wd-beta3-base-fp16, Clip skip: 2, Version: v1.2.1, Script: X/Y/Z plot, X Type: AddNet Model 1, X Values: "None,mist-no-1-2-40(394e4f330c12),mist-1-1-40(d68f0ae289be)"

```

```

masterpiece, best quality, exceptional, best aesthetic, 1girl, aqua eyes, baseball cap, blonde hair, closed mouth, earrings, green background, hat, hoop earrings, jewelry, looking at viewer, shirt, short hair, simple background, solo, upper body, yellow shirt,

Negative prompt: worst quality, low quality, bad aesthetic, oldest, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, jpeg artifacts, signature, watermark, username, blurry

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 2297393473, Size: 512x512, Model hash: d38e779546, Model: wd-beta3-base-fp16, Clip skip: 2, Version: v1.2.1, Script: X/Y/Z plot, X Type: AddNet Model 1, X Values: "None,mist-no-1-2-40(394e4f330c12),mist-1-1-40(d68f0ae289be)"

```

|

BBGAME605065444/my_awesome_model | BBGAME605065444 | 2023-05-27T06:54:29Z | 104 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:imdb",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-26T21:28:34Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

metrics:

- accuracy

model-index:

- name: my_awesome_model

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: imdb

type: imdb

config: plain_text

split: test

args: plain_text

metrics:

- name: Accuracy

type: accuracy

value: 0.9316

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# my_awesome_model

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2282

- Accuracy: 0.9316

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.2295 | 1.0 | 1563 | 0.1848 | 0.9288 |

| 0.1511 | 2.0 | 3126 | 0.2282 | 0.9316 |
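The step counts in the table can be sanity-checked against the batch size. The sketch below assumes the standard IMDB train split of 25,000 examples, a single device, and no gradient accumulation; none of these are stated in the card.

```python
import math

# Values taken from the card: training batch size and the step count
# logged at the end of epoch 1.
train_batch_size = 16
steps_at_epoch_1 = 1563

# Assumption (not stated in the card): 25,000 training examples,
# one device, and no gradient accumulation.
assumed_train_examples = 25_000

# The last, partially filled batch still counts as one optimizer step.
steps_per_epoch = math.ceil(assumed_train_examples / train_batch_size)
print(steps_per_epoch == steps_at_epoch_1)
```

Under these assumptions the second row's step count is simply twice the per-epoch figure.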

### Framework versions

- Transformers 4.28.0.dev0

- Pytorch 2.0.0+cu117

- Datasets 2.10.1

- Tokenizers 0.13.2

|

YakovElm/Jira15Classic_32 | YakovElm | 2023-05-27T06:32:14Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T06:31:38Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Jira15Classic_32

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Jira15Classic_32

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2421

- Train Accuracy: 0.9024

- Validation Loss: 1.1123

- Validation Accuracy: 0.6372

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.4984 | 0.7702 | 0.8224 | 0.5205 | 0 |

| 0.3898 | 0.8216 | 0.7801 | 0.6215 | 1 |

| 0.2421 | 0.9024 | 1.1123 | 0.6372 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

intanm/20230527-baseline-009-xlmrl-qa-squadv2-ft-clickbait-spoiling | intanm | 2023-05-27T06:28:32Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"xlm-roberta",

"question-answering",

"generated_from_trainer",

"license:cc-by-4.0",

"endpoints_compatible",

"region:us"

] | question-answering | 2023-05-27T05:57:52Z | ---

license: cc-by-4.0

tags:

- generated_from_trainer

model-index:

- name: 20230527-baseline-009-xlmrl-qa-squadv2-ft-clickbait-spoiling

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# 20230527-baseline-009-xlmrl-qa-squadv2-ft-clickbait-spoiling

This model is a fine-tuned version of [deepset/xlm-roberta-large-squad2](https://huggingface.co/deepset/xlm-roberta-large-squad2) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 6.8572

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10
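
To make `lr_scheduler_type: linear` concrete, here is a small sketch of a linear decay from the listed learning rate down to zero. It assumes no warmup (the card lists none) and 1970 total optimizer steps, taken from the 197 steps per epoch reported in the results table; the function name `linear_lr` is hypothetical.

```python
def linear_lr(step, base_lr=2e-05, total_steps=1970, warmup_steps=0):
    """Learning rate at `step` under linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Ramp up linearly during warmup (unused when warmup_steps == 0).
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


lr_start = linear_lr(0)      # full learning rate at the first step
lr_middle = linear_lr(985)   # halfway through training (end of epoch 5)
lr_end = linear_lr(1970)     # decayed to zero at the last step
```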

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 197 | 2.2523 |

| No log | 2.0 | 394 | 2.6083 |

| 1.7053 | 3.0 | 591 | 3.1023 |

| 1.7053 | 4.0 | 788 | 3.6280 |

| 1.7053 | 5.0 | 985 | 4.7670 |

| 0.3906 | 6.0 | 1182 | 5.1702 |

| 0.3906 | 7.0 | 1379 | 6.0245 |

| 0.119 | 8.0 | 1576 | 6.6972 |

| 0.119 | 9.0 | 1773 | 6.5388 |

| 0.119 | 10.0 | 1970 | 6.8572 |
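
The validation loss in the table above rises almost every epoch while the training loss falls, which is the usual signature of overfitting. A short sketch that picks the checkpoint with the lowest validation loss, with the rows copied by hand from the table:

```python
# (epoch, step, validation_loss) rows copied from the results table.
results = [
    (1.0, 197, 2.2523),
    (2.0, 394, 2.6083),
    (3.0, 591, 3.1023),
    (4.0, 788, 3.6280),
    (5.0, 985, 4.7670),
    (6.0, 1182, 5.1702),
    (7.0, 1379, 6.0245),
    (8.0, 1576, 6.6972),
    (9.0, 1773, 6.5388),
    (10.0, 1970, 6.8572),
]

# Select the row whose validation loss is smallest.
best_epoch, best_step, best_loss = min(results, key=lambda row: row[2])
print(f"best epoch: {best_epoch}, step: {best_step}, validation loss: {best_loss}")
```

By this criterion the first epoch would be the checkpoint to keep; the final reported loss (6.8572) is the last epoch, not the best one.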

### Framework versions

- Transformers 4.29.2

- Pytorch 2.0.1+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

YakovElm/Jira10Classic_32 | YakovElm | 2023-05-27T06:26:44Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T06:26:11Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Jira10Classic_32

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Jira10Classic_32

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2559

- Train Accuracy: 0.8972

- Validation Loss: 1.0026

- Validation Accuracy: 0.5994

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32
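
For reference, the textbook Adam update that these settings parameterize can be written as follows, with $\alpha = 3 \times 10^{-5}$, $\beta_1 = 0.9$, $\beta_2 = 0.999$, $\epsilon = 10^{-8}$, and $g_t$ the (clipped) gradient at step $t$. This is the standard formulation, not a transcription of the Keras source, which may arrange the bias correction and $\epsilon$ slightly differently.

$$
\begin{aligned}
m_t &= \beta_1 m_{t-1} + (1 - \beta_1)\, g_t \\
v_t &= \beta_2 v_{t-1} + (1 - \beta_2)\, g_t^2 \\
\hat{m}_t &= \frac{m_t}{1 - \beta_1^t}, \qquad \hat{v}_t = \frac{v_t}{1 - \beta_2^t} \\
\theta_t &= \theta_{t-1} - \alpha\, \frac{\hat{m}_t}{\sqrt{\hat{v}_t} + \epsilon}
\end{aligned}
$$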

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.5162 | 0.7629 | 0.8404 | 0.4890 | 0 |

| 0.4017 | 0.8122 | 0.8047 | 0.6151 | 1 |

| 0.2559 | 0.8972 | 1.0026 | 0.5994 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

YakovElm/Jira5Classic_32 | YakovElm | 2023-05-27T06:21:26Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T06:20:51Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Jira5Classic_32

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Jira5Classic_32

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.3030

- Train Accuracy: 0.8867

- Validation Loss: 1.0513

- Validation Accuracy: 0.6151

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.5139 | 0.7555 | 0.7646 | 0.5047 | 0 |

| 0.4087 | 0.8038 | 0.8291 | 0.5552 | 1 |

| 0.3030 | 0.8867 | 1.0513 | 0.6151 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

YakovElm/MariaDB5Classic_256 | YakovElm | 2023-05-27T06:15:35Z | 60 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T06:14:59Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: MariaDB5Classic_256

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# MariaDB5Classic_256

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2607

- Train Accuracy: 0.9088

- Validation Loss: 0.2602

- Validation Accuracy: 0.9322

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.3286 | 0.8862 | 0.2445 | 0.9322 | 0 |

| 0.2829 | 0.8954 | 0.2511 | 0.9322 | 1 |

| 0.2607 | 0.9088 | 0.2602 | 0.9322 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

CalderaAI/30B-Lazarus-GPTQ4bit | CalderaAI | 2023-05-27T06:14:54Z | 7 | 13 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2023-05-26T17:52:04Z | GPTQ 4 bit CUDA quantization of CalderaAI's 30B Lazarus:

https://huggingface.co/CalderaAI/30B-Lazarus |

MizukiTanaka31/Viloi | MizukiTanaka31 | 2023-05-27T06:00:02Z | 0 | 0 | null | [

"license:creativeml-openrail-m",

"region:us"

] | null | 2023-05-27T05:58:36Z | ---

license: creativeml-openrail-m

---

|

YakovElm/IntelDAOS10Classic_64 | YakovElm | 2023-05-27T05:57:58Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T05:57:23Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: IntelDAOS10Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# IntelDAOS10Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2613

- Train Accuracy: 0.9200

- Validation Loss: 0.3848

- Validation Accuracy: 0.8739

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.3128 | 0.8920 | 0.3859 | 0.8739 | 0 |

| 0.2678 | 0.9200 | 0.4156 | 0.8739 | 1 |

| 0.2613 | 0.9200 | 0.3848 | 0.8739 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

YakovElm/IntelDAOS20Classic_32 | YakovElm | 2023-05-27T05:40:35Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T05:40:00Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: IntelDAOS20Classic_32

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# IntelDAOS20Classic_32

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.1464

- Train Accuracy: 0.9610

- Validation Loss: 0.3274

- Validation Accuracy: 0.9099

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.1971 | 0.9610 | 0.3072 | 0.9099 | 0 |

| 0.1570 | 0.9610 | 0.3179 | 0.9099 | 1 |

| 0.1464 | 0.9610 | 0.3274 | 0.9099 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

y22ma/luca | y22ma | 2023-05-27T05:13:52Z | 9 | 0 | diffusers | [

"diffusers",

"tensorboard",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"lora",

"base_model:SG161222/Realistic_Vision_V2.0",

"base_model:adapter:SG161222/Realistic_Vision_V2.0",

"license:creativeml-openrail-m",

"region:us"

] | text-to-image | 2023-05-27T05:13:51Z | ---

license: creativeml-openrail-m

base_model: SG161222/Realistic_Vision_V2.0

instance_prompt: a photograph of a sks luca

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

- lora

inference: true

---

# SVDiff-pytorch - luca

These are SVDiff weights for SG161222/Realistic_Vision_V2.0. The weights were trained on "a photograph of a sks luca" using [DreamBooth](https://dreambooth.github.io/). You can find some example images in the following.

|

kirp/psy-llama-extend-delta | kirp | 2023-05-27T05:02:31Z | 0 | 1 | null | [

"dataset:siyangliu/PsyQA",

"license:apache-2.0",

"region:us"

] | null | 2023-05-22T03:59:48Z | ---

license: apache-2.0

datasets:

- siyangliu/PsyQA

---

Extends the LLaMA vocabulary to 52992 tokens, with random initialization. |

Monero/WizardLM-13b-OpenAssistant-Uncensored | Monero | 2023-05-27T04:53:33Z | 1,436 | 7 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"text generation",

"instruct",

"en",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2023-05-15T03:41:29Z | ---

language:

- en

thumbnail: null

tags:

- text generation

- instruct

pipeline_tag: text-generation

inference: false

---

<h1 style="text-align: center">WizardLM 13b - Open Assistant</h1>

<h2 style="text-align: center">An instruction-following Llama model using full evolved-instructions. </h2>

## Model Details

This is a LoRA merge of Open Assistant 13b - 4 Epoch with WizardLM-13b Uncensored. <br>

https://huggingface.co/serpdotai/llama-oasst-lora-13B <br>

https://huggingface.co/ehartford/WizardLM-13B-Uncensored

## Uncensored

Use ```### Certainly!``` at the end of your prompt to get answers to anything

<html>

<head>

<style>

table {

border:1px solid #b3adad;

border-collapse:collapse;

padding:5px;

}

table th {

border:1px solid #b3adad;

padding:5px;

background: #f0f0f0;

color: #313030;

}

table td {

border:1px solid #b3adad;

text-align:center;

padding:5px;

background: #ffffff;

color: #313030;

}

</style>

</head>

<body>

<table>

<thead>

<tr>

<th>Model:</th>

<th>Wikitext2</th>

<th>Ptb-New</th>

<th>C4-New</th>

</tr>

</thead>

<tbody>

<tr>

<td>WizardLM 13b OASST 16bit</td>

<td>8.9622220993042</td>

<td>15.324528694152832</td>

<td>12.847634315490723</td>

</tr>

<tr>

<td></td>

<td></td>

<td></td>

<td></td>

</tr>

</tbody>

</table>

</body>

</html>

<br><b>Other benchmark scores at the bottom of readme.</b>

<hr>

<hr>

<p><strong><font size="5">Click to Expand Benchmarks of different quantized variations</font></strong></p>

<strong><font size="4">The lower the number, the better the score.</font></strong>

<html>

<body>

<details>

<summary>Benchmarks Sorted by C4-New score</summary>

<table>

<thead>

<tr>

<th>GPTQ Variation:</th>

<th>Wikitext2</th>

<th>Ptb-New</th>

<th>C4-New</th>

</tr>

</thead>

<tbody>

<tr>

<td></td>

<td></td>

<td></td>

<td></td>

</tr>

<tr>

<td></td>

<td></td>

<td></td>

<td></td>

</tr>

<tr> |

YakovElm/Hyperledger15Classic_64 | YakovElm | 2023-05-27T04:53:01Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T04:52:27Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Hyperledger15Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Hyperledger15Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2628

- Train Accuracy: 0.9045

- Validation Loss: 0.3526

- Validation Accuracy: 0.8683

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.3275 | 0.8942 | 0.3392 | 0.8807 | 0 |

| 0.2991 | 0.9035 | 0.3343 | 0.8807 | 1 |

| 0.2628 | 0.9045 | 0.3526 | 0.8683 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

kirp/psy-llama-base-delta | kirp | 2023-05-27T04:45:10Z | 0 | 0 | null | [

"dataset:siyangliu/PsyQA",

"region:us"

] | null | 2023-05-24T06:46:10Z | ---

datasets:

- siyangliu/PsyQA

---

lora + chinese llama + special tokens = psy-llama-base

https://wandb.ai/darkbird/psy-llama-4-4090 |

HillZhang/pseudo_native_bart_CGEC_media | HillZhang | 2023-05-27T04:43:35Z | 120 | 0 | transformers | [

"transformers",

"pytorch",

"bart",

"text2text-generation",

"Chinese",

"seq2seq",

"grammar",

"zh",

"arxiv:2305.16023",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2023-05-24T15:29:08Z | ---

tags:

- text2text-generation

- Chinese

- seq2seq

- grammar

language: zh

license: apache-2.0

---

# Pseudo-Native-BART-CGEC

This is a cutting-edge Chinese Grammatical Error Correction (CGEC) model based on [Chinese BART-large](https://huggingface.co/fnlp/bart-large-chinese).

It is trained on pseudo-native-speaker CGEC training data (about 100M) generated by heuristic rules, together with human-annotated training data for the media domain.

More details can be found in our [Github](https://github.com/HillZhang1999/NaSGEC) and the [paper](https://arxiv.org/pdf/2305.16023.pdf).

## Usage

`pip install transformers`

```
from transformers import BertTokenizer, BartForConditionalGeneration

tokenizer = BertTokenizer.from_pretrained("HillZhang/pseudo_native_bart_CGEC_media")
model = BartForConditionalGeneration.from_pretrained("HillZhang/pseudo_native_bart_CGEC_media")

# Example sentences containing grammatical errors.
encoded_input = tokenizer(["北京是中国的都。", "他说:”我最爱的运动是打蓝球“", "我每天大约喝5次水左右。", "今天,我非常开开心。"], return_tensors="pt", padding=True, truncation=True)

# BART does not use token_type_ids, so drop them before generation.
if "token_type_ids" in encoded_input:
    del encoded_input["token_type_ids"]

output = model.generate(**encoded_input)
print(tokenizer.batch_decode(output, skip_special_tokens=True))
```
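
To see what the model changed, the input and the decoded output can be compared character by character. The sketch below uses the standard-library `difflib`; the helper name `describe_edits` is hypothetical, and the corrected sentence is written by hand for illustration, not taken from the model. It also assumes the decoded text may contain spaces between characters, as BERT-style tokenizers often produce, and strips them first.

```python
import difflib


def describe_edits(source, corrected):
    """List (operation, source_span, corrected_span) for every changed region."""
    matcher = difflib.SequenceMatcher(a=source, b=corrected, autojunk=False)
    return [
        (tag, source[i1:i2], corrected[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


source = "北京是中国的都。"
# Hand-written stand-in for a decoded correction (NOT real model output);
# spaces between characters are removed before comparing.
corrected = "北 京 是 中 国 的 首 都 。".replace(" ", "")
print(describe_edits(source, corrected))
```

Each tuple names the operation (`insert`, `delete`, or `replace`) together with the affected text on both sides.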

## Citation

```

@inproceedings{zhang-etal-2023-nasgec,
    title = "{Na}{SGEC}: a Multi-Domain Chinese Grammatical Error Correction Dataset from Native Speaker Texts",
    author = "Zhang, Yue and
      Zhang, Bo and
      Jiang, Haochen and
      Li, Zhenghua and
      Li, Chen and
      Huang, Fei and
      Zhang, Min",
    booktitle = "Findings of ACL",
    year = "2023"
}

``` |

HillZhang/real_learner_bart_CGEC_exam | HillZhang | 2023-05-27T04:43:02Z | 120 | 3 | transformers | [

"transformers",

"pytorch",

"bart",

"text2text-generation",

"Chinese",

"seq2seq",

"grammar",

"zh",

"arxiv:2305.16023",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2023-05-24T15:28:28Z | ---

tags:

- text2text-generation

- Chinese

- seq2seq

- grammar

language: zh

license: apache-2.0

---

# Pseudo-Native-BART-CGEC

This is a cutting-edge Chinese Grammatical Error Correction (CGEC) model based on [Chinese BART-large](https://huggingface.co/fnlp/bart-large-chinese).

It is trained on HSK and Lang8 learner CGEC data (about 1.3M), together with human-annotated training data for the exam domain.

More details can be found in our [Github](https://github.com/HillZhang1999/NaSGEC) and the [paper](https://arxiv.org/pdf/2305.16023.pdf).

## Usage

`pip install transformers`

```
from transformers import BertTokenizer, BartForConditionalGeneration

tokenizer = BertTokenizer.from_pretrained("HillZhang/real_learner_bart_CGEC_exam")
model = BartForConditionalGeneration.from_pretrained("HillZhang/real_learner_bart_CGEC_exam")

# Example sentences containing grammatical errors.
encoded_input = tokenizer(["北京是中国的都。", "他说:”我最爱的运动是打蓝球“", "我每天大约喝5次水左右。", "今天,我非常开开心。"], return_tensors="pt", padding=True, truncation=True)

# BART does not use token_type_ids, so drop them before generation.
if "token_type_ids" in encoded_input:
    del encoded_input["token_type_ids"]

output = model.generate(**encoded_input)
print(tokenizer.batch_decode(output, skip_special_tokens=True))
```

## Citation

```

@inproceedings{zhang-etal-2023-nasgec,
    title = "{Na}{SGEC}: a Multi-Domain Chinese Grammatical Error Correction Dataset from Native Speaker Texts",
    author = "Zhang, Yue and
      Zhang, Bo and
      Jiang, Haochen and
      Li, Zhenghua and
      Li, Chen and
      Huang, Fei and
      Zhang, Min",
    booktitle = "Findings of ACL",
    year = "2023"
}

``` |

HillZhang/pseudo_native_bart_CGEC | HillZhang | 2023-05-27T04:39:23Z | 304 | 1 | transformers | [

"transformers",

"pytorch",

"bart",

"text2text-generation",

"Chinese",

"seq2seq",

"grammar",

"zh",

"arxiv:2305.16023",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2023-05-24T15:19:59Z | ---

tags:

- text2text-generation

- Chinese

- seq2seq

- grammar

language: zh

license: apache-2.0

---

# Pseudo-Native-BART-CGEC

This is a cutting-edge Chinese Grammatical Error Correction (CGEC) model based on [Chinese BART-large](https://huggingface.co/fnlp/bart-large-chinese).

It is trained on pseudo-native-speaker CGEC training data (about 100M) generated by heuristic rules.

More details can be found in our [Github](https://github.com/HillZhang1999/NaSGEC) and the [paper](https://arxiv.org/pdf/2305.16023.pdf).

## Usage

`pip install transformers`

```
from transformers import BertTokenizer, BartForConditionalGeneration

tokenizer = BertTokenizer.from_pretrained("HillZhang/pseudo_native_bart_CGEC")
model = BartForConditionalGeneration.from_pretrained("HillZhang/pseudo_native_bart_CGEC")

# Example sentences containing grammatical errors.
encoded_input = tokenizer(["北京是中国的都。", "他说:”我最爱的运动是打蓝球“", "我每天大约喝5次水左右。", "今天,我非常开开心。"], return_tensors="pt", padding=True, truncation=True)

# BART does not use token_type_ids, so drop them before generation.
if "token_type_ids" in encoded_input:
    del encoded_input["token_type_ids"]

output = model.generate(**encoded_input)
print(tokenizer.batch_decode(output, skip_special_tokens=True))
```

## Citation

```

@inproceedings{zhang-etal-2023-nasgec,
    title = "{Na}{SGEC}: a Multi-Domain Chinese Grammatical Error Correction Dataset from Native Speaker Texts",
    author = "Zhang, Yue and
      Zhang, Bo and
      Jiang, Haochen and
      Li, Zhenghua and
      Li, Chen and
      Huang, Fei and
      Zhang, Min",
    booktitle = "Findings of ACL",
    year = "2023"
}

``` |

rsadaphule/vit-base-patch16-224-finetuned-flower-ravindrasadaphule | rsadaphule | 2023-05-27T04:32:58Z | 163 | 0 | transformers | [

"transformers",

"pytorch",

"vit",

"image-classification",

"generated_from_trainer",

"dataset:imagefolder",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | image-classification | 2023-05-24T17:13:25Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imagefolder

model-index:

- name: vit-base-patch16-224-finetuned-flower-ravindrasadaphule

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# vit-base-patch16-224-finetuned-flower-ravindrasadaphule

This model is a fine-tuned version of [google/vit-base-patch16-224](https://huggingface.co/google/vit-base-patch16-224) on the imagefolder dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

### Framework versions

- Transformers 4.24.0

- Pytorch 2.0.1+cu118

- Datasets 2.7.1

- Tokenizers 0.13.3

|

nakajimayoshi/ddpm-iris-256 | nakajimayoshi | 2023-05-27T04:32:06Z | 37 | 2 | diffusers | [

"diffusers",

"tensorboard",

"biology",

"unconditional-image-generation",

"arxiv:2006.11239",

"license:apache-2.0",

"diffusers:DDPMPipeline",

"region:us"

] | unconditional-image-generation | 2023-05-09T07:31:36Z | ---

license: apache-2.0

pipeline_tag: unconditional-image-generation

tags:

- biology

library_name: diffusers

---

A diffusion model trained on a public dataset of images from the [Image Data Resource](https://idr.openmicroscopy.org/cell/) to create highly detailed, accurate depictions of fluorescent and super-resolution cell images.

# Ground-truth image data obtained from idr:

```py
from diffusers import DDIMPipeline, DDPMPipeline, PNDMPipeline

model_id = "nakajimayoshi/ddpm-iris-256"

# load model and scheduler
# DDIMPipeline is loaded here; DDPMPipeline or PNDMPipeline can be swapped in instead
ddim = DDIMPipeline.from_pretrained(model_id)

# run pipeline in inference (sample random noise and denoise)
image = ddim().images[0]

# save image
image.save("sample.png")
```

The role of generative AI in science is a new discussion, and its merits have yet to be evaluated. While current image-to-image and text-to-image models make it easier than ever to create stunning images, they lack the specific training sets needed to replicate the accurate, detailed images found in fluorescent cell microscopy.

We propose ddpm-IRIS, a diffusion network based on [Denoising Diffusion Probabilistic Models](https://arxiv.org/abs/2006.11239) that generates visual depictions of cell features with more detail than traditional models.

Hyperparameters:

- image_size = 256

- train_batch_size = 16

- eval_batch_size = 16

- num_epochs = 50

- gradient_accumulation_steps = 1

- learning_rate = 1e-4

- lr_warmup_steps = 500

- save_image_epochs = 10

- save_model_epochs = 30

- mixed_precision = 'fp16'

Trained on one NVIDIA A100 40GB GPU over 50 epochs for 2.5 hours.
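
The hyperparameters listed above can be gathered into a single configuration object. The sketch below is one way to do that with a standard-library dataclass; the class name `TrainingConfig` is an assumption for illustration, and the card does not say how the values were actually passed to the training script.

```python
from dataclasses import dataclass


@dataclass
class TrainingConfig:
    """Hyperparameters copied from the list above (illustrative container)."""
    image_size: int = 256               # generated image resolution
    train_batch_size: int = 16
    eval_batch_size: int = 16           # images sampled during evaluation
    num_epochs: int = 50
    gradient_accumulation_steps: int = 1
    learning_rate: float = 1e-4
    lr_warmup_steps: int = 500
    save_image_epochs: int = 10         # sample images every N epochs
    save_model_epochs: int = 30         # save a checkpoint every N epochs
    mixed_precision: str = "fp16"


config = TrainingConfig()
# Effective batch size per optimizer step on a single GPU.
effective_batch_size = config.train_batch_size * config.gradient_accumulation_steps
```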

|

Varaprabha/Qtable_taxi | Varaprabha | 2023-05-27T04:13:34Z | 0 | 0 | null | [

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] | reinforcement-learning | 2023-05-27T04:13:32Z | ---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: Qtable_taxi

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

metrics:

- type: mean_reward

value: 7.54 +/- 2.73

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

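```python
import gym

# load_from_hub is not a library function; it is assumed to be the helper
# defined in the Hugging Face Deep RL course notebook.
model = load_from_hub(repo_id="Varaprabha/Qtable_taxi", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)
env = gym.make(model["env_id"])
```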
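
The usage snippet relies on a `load_from_hub` helper that the card does not define. A minimal sketch of what such a helper might look like, assuming the file is a pickled dictionary and that the Q-table is stored under a `"qtable"` key (both are assumptions, not confirmed by this card). `greedy_action` is a hypothetical helper showing how a Q-table is typically used at evaluation time.

```python
import pickle


def load_from_hub(repo_id: str, filename: str) -> dict:
    """Download a pickled model dictionary from the Hub and unpickle it."""
    # Imported lazily so the rest of this sketch runs without huggingface_hub.
    from huggingface_hub import hf_hub_download

    path = hf_hub_download(repo_id=repo_id, filename=filename)
    with open(path, "rb") as f:
        # Unpickling runs arbitrary code: only load files from sources you trust.
        return pickle.load(f)


def greedy_action(qtable, state: int) -> int:
    """Return the index of the highest-valued action for `state`."""
    row = qtable[state]
    return max(range(len(row)), key=lambda action: row[action])


# Tiny stand-in Q-table (2 states x 3 actions) to show the selection rule.
toy_qtable = [[0.0, 1.5, -2.0], [3.0, 0.1, 0.2]]
action_for_state_0 = greedy_action(toy_qtable, 0)
```

With a real model, `greedy_action(model["qtable"], state)` would pick the action inside the environment loop, under the key-name assumption above.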

|

YakovElm/Hyperledger5Classic_64 | YakovElm | 2023-05-27T04:03:05Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T04:02:31Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Hyperledger5Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Hyperledger5Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.3683

- Train Accuracy: 0.8561

- Validation Loss: 0.4172

- Validation Accuracy: 0.8351

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.4207 | 0.8481 | 0.4357 | 0.8361 | 0 |

| 0.3940 | 0.8547 | 0.4199 | 0.8361 | 1 |

| 0.3683 | 0.8561 | 0.4172 | 0.8351 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

ThePioneer/CBDMix | ThePioneer | 2023-05-27T03:39:30Z | 0 | 0 | null | [

"art",

"safetensors",

"en",

"license:creativeml-openrail-m",

"region:us"

] | null | 2023-05-25T11:24:01Z | ---

license: creativeml-openrail-m

language:

- en

tags:

- art

- safetensors

---

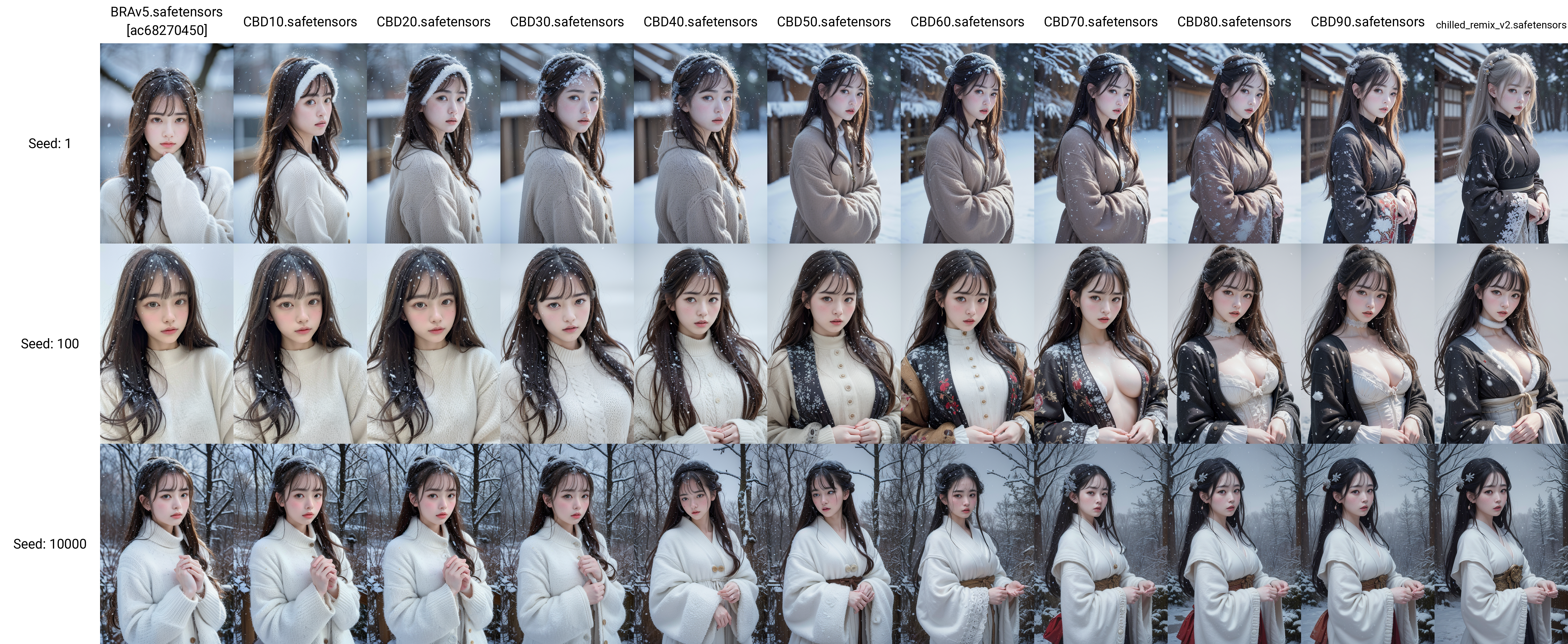

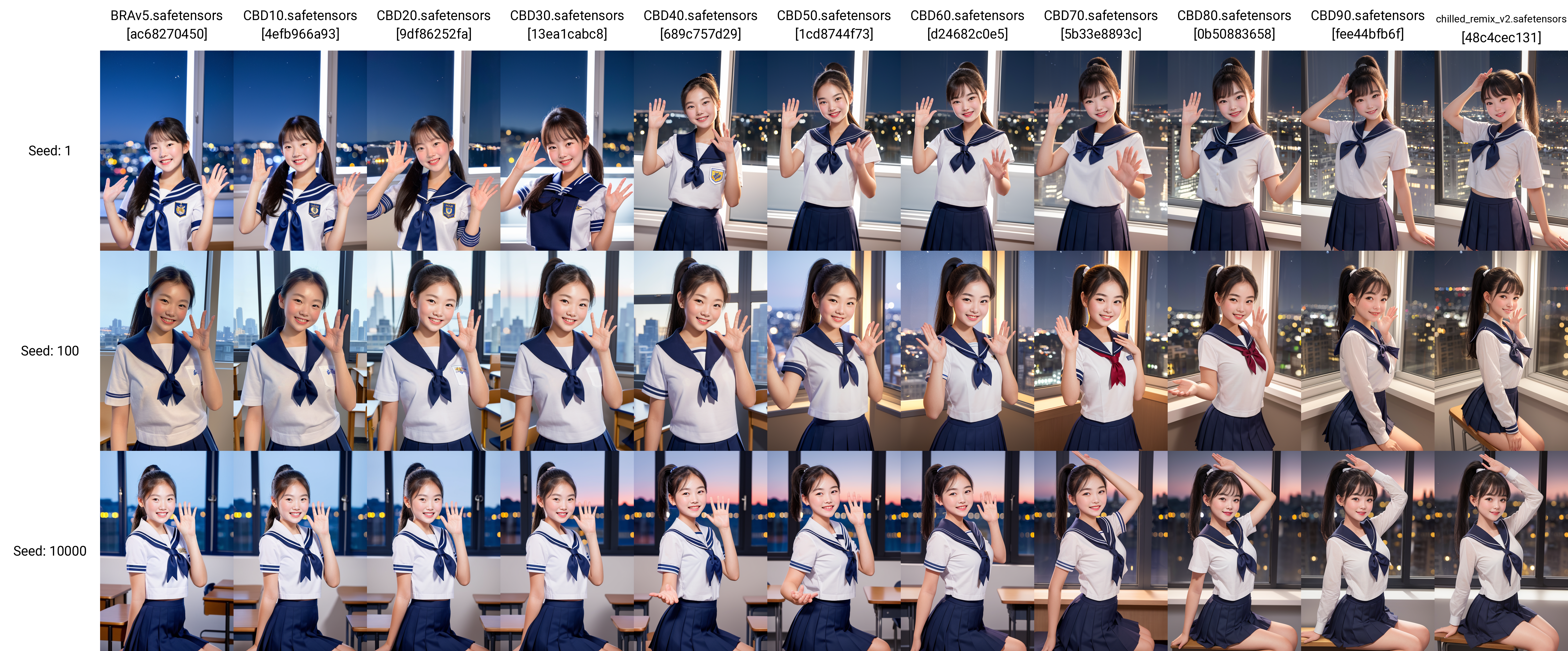

CBDMix is a mix of [BRAv5](https://huggingface.co/BanKaiPls/AsianModel) and [chilled remix V2](https://huggingface.co/sazyou-roukaku/chilled_remix), with mix ratios ranging from 10% to 90%.

Since both are released under OpenRAIL-family licenses, the same [CreativeML Open RAIL-M](https://huggingface.co/spaces/CompVis/stable-diffusion-license) license applies to this model as well.
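
The card does not state how the two checkpoints were combined. A common approach for this kind of mix is a per-tensor weighted sum, sketched below under that assumption, with plain lists of floats standing in for tensors. The function name `merge_weights` and the meaning of `ratio_b` (the share of the second model) are hypothetical.

```python
def merge_weights(state_a, state_b, ratio_b):
    """Blend two checkpoints as (1 - ratio_b) * A + ratio_b * B for every key."""
    if not 0.0 <= ratio_b <= 1.0:
        raise ValueError("ratio_b must be between 0 and 1")
    if state_a.keys() != state_b.keys():
        raise ValueError("both checkpoints must contain the same parameter names")
    return {
        name: [
            (1.0 - ratio_b) * a + ratio_b * b
            for a, b in zip(state_a[name], state_b[name])
        ]
        for name in state_a
    }


# Toy example: a 25% share of model B blended into model A.
merged = merge_weights({"w": [0.0, 2.0]}, {"w": [4.0, 2.0]}, 0.25)
```

Sweeping `ratio_b` from 0.1 to 0.9 would produce the kind of 10% to 90% series described above.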

## Samples

|

YakovElm/Hyperledger10Classic_32 | YakovElm | 2023-05-27T03:02:45Z | 62 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T03:02:01Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Hyperledger10Classic_32

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Hyperledger10Classic_32

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2991

- Train Accuracy: 0.8845

- Validation Loss: 0.3973

- Validation Accuracy: 0.8548

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |
|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|
| 0.3675 | 0.8779 | 0.3861 | 0.8600 | 0 |
| 0.3449 | 0.8838 | 0.3911 | 0.8600 | 1 |
| 0.2991 | 0.8845 | 0.3973 | 0.8548 | 2 |
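In the results above, training loss falls at every epoch while validation loss rises. The following minimal sketch copies the numbers into plain Python and finds the epoch with the lowest validation loss, along with the train/validation gap per epoch. The helper names `best_epoch` and `loss_gaps` are illustrative and not part of any library.

```python
# Per-epoch metrics copied from the training results table.
history = [
    {"epoch": 0, "train_loss": 0.3675, "val_loss": 0.3861, "val_acc": 0.8600},
    {"epoch": 1, "train_loss": 0.3449, "val_loss": 0.3911, "val_acc": 0.8600},
    {"epoch": 2, "train_loss": 0.2991, "val_loss": 0.3973, "val_acc": 0.8548},
]


def best_epoch(rows):
    """Return the epoch number with the lowest validation loss."""
    return min(rows, key=lambda row: row["val_loss"])["epoch"]


def loss_gaps(rows):
    """Return validation loss minus training loss for each epoch."""
    return [round(row["val_loss"] - row["train_loss"], 4) for row in rows]


print("best epoch by validation loss:", best_epoch(history))
print("validation - train loss per epoch:", loss_gaps(history))
```

A widening gap like this is often read as early overfitting, though the card gives no dataset details that would confirm it.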

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

ShadowPower/ggml-alpaca-chatgpt-new | ShadowPower | 2023-05-27T02:34:47Z | 0 | 7 | null | [

"text generation",

"ggml",

"text-generation",

"zh",

"en",

"license:other",

"region:us"

] | text-generation | 2023-05-12T03:37:41Z | ---

license: other

language:

- zh

- en

pipeline_tag: text-generation

tags:

- text generation

- ggml

---

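# Description

This version is compatible with llama.cpp commit [b9fd7ee](https://github.com/ggerganov/llama.cpp/pull/1405).

Supported languages: Chinese and English.

The model uses LLaMa as its base model and was trained Alpaca-style on a large volume of ChatGPT chat logs and Baidu search results, using 8 A100 GPUs for one week. Commonly used Chinese characters were added to the output tokens, so compared with Vicuna it expresses itself better in Chinese and can generate longer Chinese text.

It can generate conversations in a style similar to ChatGPT. With carefully constructed prompts, it can also be used for some NLP tasks.

Loading it with koboldcpp is recommended; then load the koboldcpp_config.json configuration file.

It can also be used to continue novels, with quality better than almost all open-source models of the same parameter count.

For personal entertainment, study, and research only. Commercial use is not permitted.

Novel continuation; the content below the orange line is generated by the model:

# Limitations

The training content has not been manually corrected, so the model may produce biased, incorrect, or harmful content;

Sometimes the model generates meaningless content similar to the Alpaca training data;

Because of problems in the training data, when answering knowledge questions the model generates fake reference sources at the end. |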

YakovElm/Apache20Classic_64 | YakovElm | 2023-05-27T02:30:58Z | 61 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T02:30:21Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Apache20Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Apache20Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.1374

- Train Accuracy: 0.9624

- Validation Loss: 0.3081

- Validation Accuracy: 0.9055

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32
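The optimizer entry above is a Python dict literal, so it can be read back safely with the standard library instead of being retyped by hand. This is a small sketch only; the `summary` selection of keys is an arbitrary choice for illustration.

```python
import ast

# Optimizer configuration copied verbatim from the hyperparameter list above.
OPTIMIZER_CONFIG = (
    "{'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, "
    "'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, "
    "'ema_momentum': 0.99, 'ema_overwrite_frequency': None, "
    "'jit_compile': False, 'is_legacy_optimizer': False, "
    "'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, "
    "'epsilon': 1e-08, 'amsgrad': False}"
)

# literal_eval only accepts Python literals, so no code in the string is run.
config = ast.literal_eval(OPTIMIZER_CONFIG)

# Pull out the settings most often needed to reproduce a run.
summary = {
    key: config[key]
    for key in ("name", "learning_rate", "clipnorm", "beta_1", "beta_2", "epsilon")
}
print(summary)
```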

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |
|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|
| 0.1664 | 0.9620 | 0.3171 | 0.9055 | 0 |
| 0.1522 | 0.9624 | 0.2966 | 0.9055 | 1 |
| 0.1374 | 0.9624 | 0.3081 | 0.9055 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|

YakovElm/Apache15Classic_64 | YakovElm | 2023-05-27T01:46:32Z | 62 | 0 | transformers | [

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2023-05-27T01:45:58Z | ---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Apache15Classic_64

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Apache15Classic_64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.1664

- Train Accuracy: 0.9542

- Validation Loss: 0.3210

- Validation Accuracy: 0.8924

- Epoch: 2
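The card does not include usage code. Below is a minimal sketch of how a TensorFlow BERT classifier like this one might be loaded with the Transformers library. It assumes that the repository holds TensorFlow weights (as the `tf` tag suggests) and that the tokenizer of the base model, `bert-base-uncased`, is the right one to use. The function name `classify` is hypothetical, and because the card names no dataset, the meaning of the returned class index is unknown.

```python
MODEL_ID = "YakovElm/Apache15Classic_64"
# Assumption: the fine-tuned model uses the tokenizer of the base model named in the card.
BASE_TOKENIZER_ID = "bert-base-uncased"


def classify(text, model_id=MODEL_ID, tokenizer_id=BASE_TOKENIZER_ID):
    """Return the predicted class index for `text` (label meanings are undocumented)."""
    # Imported lazily so this module can be imported without TensorFlow installed.
    from transformers import AutoTokenizer, TFAutoModelForSequenceClassification

    tokenizer = AutoTokenizer.from_pretrained(tokenizer_id)
    model = TFAutoModelForSequenceClassification.from_pretrained(model_id)

    # Tokenize to TensorFlow tensors and truncate to the model's maximum length.
    inputs = tokenizer(text, return_tensors="tf", truncation=True)
    logits = model(**inputs).logits

    # Pick the highest-scoring class for the single input sentence.
    return int(logits.numpy().argmax(axis=-1)[0])
```

Calling `classify("some input text")` downloads the weights on first use, so it needs network access and a TensorFlow installation.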

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': 1.0, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': 3e-05, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |
|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|
| 0.1964 | 0.9533 | 0.3529 | 0.8924 | 0 |
| 0.1834 | 0.9542 | 0.3501 | 0.8924 | 1 |
| 0.1664 | 0.9542 | 0.3210 | 0.8924 | 2 |

### Framework versions

- Transformers 4.29.2

- TensorFlow 2.12.0

- Datasets 2.12.0

- Tokenizers 0.13.3

|