modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

fse/glove-twitter-100 | fse | 2021-12-02T16:39:20Z | 0 | 0 | null | [

"glove",

"gensim",

"fse",

"region:us"

] | null | 2022-03-02T23:29:05Z | ---

tags:

- glove

- gensim

- fse

---

# Glove Twitter

Pre-trained GloVe vectors trained on 2B tweets (27B tokens, 1.2M-word vocabulary, uncased).

Read more:

* https://nlp.stanford.edu/projects/glove/

* https://nlp.stanford.edu/pubs/glove.pdf
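As a rough illustration of how such vectors are typically consumed, the sketch below parses the plain-text layout used by the files on the Stanford GloVe page (one token per line followed by its components) and compares words by cosine similarity. The three-dimensional toy vectors and the helper names `parse_glove` and `cosine` are assumptions made for this example; the files in this repository may be packaged differently (for instance as gensim objects).

```python
import math

# Toy stand-in for a GloVe text file. The values are made up for illustration;
# the real Twitter vectors in this repository have 100 dimensions.
SAMPLE = """\
hello 0.1 0.3 -0.2
hi 0.1 0.25 -0.15
tax -0.4 0.0 0.5
"""

def parse_glove(text):
    """Parse 'word v1 v2 ...' lines into a dict mapping word -> list of floats."""
    vectors = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) < 2:
            continue  # skip blank or malformed lines
        vectors[parts[0]] = [float(x) for x in parts[1:]]
    return vectors

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

vectors = parse_glove(SAMPLE)
# In this toy data "hello" is closer to "hi" than to "tax".
print(cosine(vectors["hello"], vectors["hi"]) > cosine(vectors["hello"], vectors["tax"]))
```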

|

emrecan/bert-base-turkish-cased-allnli_tr | emrecan | 2021-12-02T14:58:36Z | 19 | 1 | transformers | [

"transformers",

"pytorch",

"bert",

"text-classification",

"zero-shot-classification",

"nli",

"tr",

"dataset:nli_tr",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | zero-shot-classification | 2022-03-02T23:29:05Z | ---

language:

- tr

tags:

- zero-shot-classification

- nli

- pytorch

pipeline_tag: zero-shot-classification

license: mit

datasets:

- nli_tr

metrics:

- accuracy

widget:

- text: "Dolar yükselmeye devam ediyor."

candidate_labels: "ekonomi, siyaset, spor"

- text: "Senaryo çok saçmaydı, beğendim diyemem."

candidate_labels: "olumlu, olumsuz"

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-turkish-cased_allnli_tr

This model is a fine-tuned version of [dbmdz/bert-base-turkish-cased](https://huggingface.co/dbmdz/bert-base-turkish-cased) on the nli_tr dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5771

- Accuracy: 0.7978

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3
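To make the `lr_scheduler_type: linear` setting concrete, here is a minimal sketch of a linearly decaying learning rate with no warmup, starting from the listed `learning_rate` of 2e-05. The function name `linear_lr` and the default `total_steps=88_000` are assumptions for illustration (88,000 is simply the last step logged in this card's training results; the true total is not stated).

```python
def linear_lr(step, base_lr=2e-5, total_steps=88_000, warmup_steps=0):
    """Learning rate at a given optimizer step under linear warmup and decay.

    total_steps is a hypothetical value chosen for this sketch.
    """
    if step < warmup_steps:
        # Ramp up linearly from 0 to base_lr during warmup.
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr to 0 over the remaining steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)

for step in (0, 22_000, 44_000, 88_000):
    print(step, linear_lr(step))
```

Under these assumptions the rate halves by the midpoint of training and reaches zero at the final step.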

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.8559 | 0.03 | 1000 | 0.7577 | 0.6798 |

| 0.6612 | 0.07 | 2000 | 0.7263 | 0.6958 |

| 0.6115 | 0.1 | 3000 | 0.6431 | 0.7364 |

| 0.5916 | 0.14 | 4000 | 0.6347 | 0.7407 |

| 0.5719 | 0.17 | 5000 | 0.6317 | 0.7483 |

| 0.5575 | 0.2 | 6000 | 0.6034 | 0.7544 |

| 0.5521 | 0.24 | 7000 | 0.6148 | 0.7568 |

| 0.5393 | 0.27 | 8000 | 0.5931 | 0.7610 |

| 0.5382 | 0.31 | 9000 | 0.5866 | 0.7665 |

| 0.5306 | 0.34 | 10000 | 0.5881 | 0.7594 |

| 0.5295 | 0.37 | 11000 | 0.6120 | 0.7632 |

| 0.5225 | 0.41 | 12000 | 0.5620 | 0.7759 |

| 0.5112 | 0.44 | 13000 | 0.5641 | 0.7769 |

| 0.5133 | 0.48 | 14000 | 0.5571 | 0.7798 |

| 0.5023 | 0.51 | 15000 | 0.5719 | 0.7722 |

| 0.5017 | 0.54 | 16000 | 0.5482 | 0.7844 |

| 0.5111 | 0.58 | 17000 | 0.5503 | 0.7800 |

| 0.4929 | 0.61 | 18000 | 0.5502 | 0.7836 |

| 0.4923 | 0.65 | 19000 | 0.5424 | 0.7843 |

| 0.4894 | 0.68 | 20000 | 0.5417 | 0.7851 |

| 0.4877 | 0.71 | 21000 | 0.5514 | 0.7841 |

| 0.4818 | 0.75 | 22000 | 0.5494 | 0.7848 |

| 0.4898 | 0.78 | 23000 | 0.5450 | 0.7859 |

| 0.4823 | 0.82 | 24000 | 0.5417 | 0.7878 |

| 0.4806 | 0.85 | 25000 | 0.5354 | 0.7875 |

| 0.4779 | 0.88 | 26000 | 0.5338 | 0.7848 |

| 0.4744 | 0.92 | 27000 | 0.5277 | 0.7934 |

| 0.4678 | 0.95 | 28000 | 0.5507 | 0.7871 |

| 0.4727 | 0.99 | 29000 | 0.5603 | 0.7789 |

| 0.4243 | 1.02 | 30000 | 0.5626 | 0.7894 |

| 0.3955 | 1.05 | 31000 | 0.5324 | 0.7939 |

| 0.4022 | 1.09 | 32000 | 0.5322 | 0.7925 |

| 0.3976 | 1.12 | 33000 | 0.5450 | 0.7920 |

| 0.3913 | 1.15 | 34000 | 0.5464 | 0.7948 |

| 0.406 | 1.19 | 35000 | 0.5406 | 0.7958 |

| 0.3875 | 1.22 | 36000 | 0.5489 | 0.7878 |

| 0.4024 | 1.26 | 37000 | 0.5427 | 0.7925 |

| 0.3988 | 1.29 | 38000 | 0.5335 | 0.7904 |

| 0.393 | 1.32 | 39000 | 0.5415 | 0.7923 |

| 0.3988 | 1.36 | 40000 | 0.5385 | 0.7962 |

| 0.3912 | 1.39 | 41000 | 0.5383 | 0.7950 |

| 0.3949 | 1.43 | 42000 | 0.5415 | 0.7931 |

| 0.3902 | 1.46 | 43000 | 0.5438 | 0.7893 |

| 0.3948 | 1.49 | 44000 | 0.5348 | 0.7906 |

| 0.3921 | 1.53 | 45000 | 0.5361 | 0.7890 |

| 0.3944 | 1.56 | 46000 | 0.5419 | 0.7953 |

| 0.3959 | 1.6 | 47000 | 0.5402 | 0.7967 |

| 0.3926 | 1.63 | 48000 | 0.5429 | 0.7925 |

| 0.3854 | 1.66 | 49000 | 0.5346 | 0.7959 |

| 0.3864 | 1.7 | 50000 | 0.5241 | 0.7979 |

| 0.385 | 1.73 | 51000 | 0.5149 | 0.8002 |

| 0.3871 | 1.77 | 52000 | 0.5325 | 0.8002 |

| 0.3819 | 1.8 | 53000 | 0.5332 | 0.8022 |

| 0.384 | 1.83 | 54000 | 0.5419 | 0.7873 |

| 0.3899 | 1.87 | 55000 | 0.5225 | 0.7974 |

| 0.3894 | 1.9 | 56000 | 0.5358 | 0.7977 |

| 0.3838 | 1.94 | 57000 | 0.5264 | 0.7988 |

| 0.3881 | 1.97 | 58000 | 0.5280 | 0.7956 |

| 0.3756 | 2.0 | 59000 | 0.5601 | 0.7969 |

| 0.3156 | 2.04 | 60000 | 0.5936 | 0.7925 |

| 0.3125 | 2.07 | 61000 | 0.5898 | 0.7938 |

| 0.3179 | 2.11 | 62000 | 0.5591 | 0.7981 |

| 0.315 | 2.14 | 63000 | 0.5853 | 0.7970 |

| 0.3122 | 2.17 | 64000 | 0.5802 | 0.7979 |

| 0.3105 | 2.21 | 65000 | 0.5758 | 0.7979 |

| 0.3076 | 2.24 | 66000 | 0.5685 | 0.7980 |

| 0.3117 | 2.28 | 67000 | 0.5799 | 0.7944 |

| 0.3108 | 2.31 | 68000 | 0.5742 | 0.7988 |

| 0.3047 | 2.34 | 69000 | 0.5907 | 0.7921 |

| 0.3114 | 2.38 | 70000 | 0.5723 | 0.7937 |

| 0.3035 | 2.41 | 71000 | 0.5944 | 0.7955 |

| 0.3129 | 2.45 | 72000 | 0.5838 | 0.7928 |

| 0.3071 | 2.48 | 73000 | 0.5929 | 0.7949 |

| 0.3061 | 2.51 | 74000 | 0.5794 | 0.7967 |

| 0.3068 | 2.55 | 75000 | 0.5892 | 0.7954 |

| 0.3053 | 2.58 | 76000 | 0.5796 | 0.7962 |

| 0.3117 | 2.62 | 77000 | 0.5763 | 0.7981 |

| 0.3062 | 2.65 | 78000 | 0.5852 | 0.7964 |

| 0.3004 | 2.68 | 79000 | 0.5793 | 0.7966 |

| 0.3146 | 2.72 | 80000 | 0.5693 | 0.7985 |

| 0.3146 | 2.75 | 81000 | 0.5788 | 0.7982 |

| 0.3079 | 2.79 | 82000 | 0.5726 | 0.7978 |

| 0.3058 | 2.82 | 83000 | 0.5677 | 0.7988 |

| 0.3055 | 2.85 | 84000 | 0.5701 | 0.7982 |

| 0.3049 | 2.89 | 85000 | 0.5809 | 0.7970 |

| 0.3044 | 2.92 | 86000 | 0.5741 | 0.7986 |

| 0.3057 | 2.96 | 87000 | 0.5743 | 0.7980 |

| 0.3081 | 2.99 | 88000 | 0.5771 | 0.7978 |

### Framework versions

- Transformers 4.12.3

- Pytorch 1.10.0+cu102

- Datasets 1.15.1

- Tokenizers 0.10.3

|

emrecan/convbert-base-turkish-mc4-cased-allnli_tr | emrecan | 2021-12-02T14:57:01Z | 97 | 2 | transformers | [

"transformers",

"pytorch",

"convbert",

"text-classification",

"zero-shot-classification",

"nli",

"tr",

"dataset:nli_tr",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | zero-shot-classification | 2022-03-02T23:29:05Z | ---

language:

- tr

tags:

- zero-shot-classification

- nli

- pytorch

pipeline_tag: zero-shot-classification

license: apache-2.0

datasets:

- nli_tr

metrics:

- accuracy

widget:

- text: "Dolar yükselmeye devam ediyor."

candidate_labels: "ekonomi, siyaset, spor"

- text: "Senaryo çok saçmaydı, beğendim diyemem."

candidate_labels: "olumlu, olumsuz"

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# convbert-base-turkish-mc4-cased_allnli_tr

This model is a fine-tuned version of [dbmdz/convbert-base-turkish-mc4-cased](https://huggingface.co/dbmdz/convbert-base-turkish-mc4-cased) on the nli_tr dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5541

- Accuracy: 0.8111

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.7338 | 0.03 | 1000 | 0.6722 | 0.7236 |

| 0.603 | 0.07 | 2000 | 0.6465 | 0.7399 |

| 0.5605 | 0.1 | 3000 | 0.5801 | 0.7728 |

| 0.55 | 0.14 | 4000 | 0.5994 | 0.7626 |

| 0.529 | 0.17 | 5000 | 0.5720 | 0.7697 |

| 0.5196 | 0.2 | 6000 | 0.5692 | 0.7769 |

| 0.5117 | 0.24 | 7000 | 0.5725 | 0.7785 |

| 0.5044 | 0.27 | 8000 | 0.5532 | 0.7787 |

| 0.5016 | 0.31 | 9000 | 0.5546 | 0.7812 |

| 0.5031 | 0.34 | 10000 | 0.5461 | 0.7870 |

| 0.4949 | 0.37 | 11000 | 0.5725 | 0.7826 |

| 0.4894 | 0.41 | 12000 | 0.5419 | 0.7933 |

| 0.4796 | 0.44 | 13000 | 0.5278 | 0.7914 |

| 0.4795 | 0.48 | 14000 | 0.5193 | 0.7953 |

| 0.4713 | 0.51 | 15000 | 0.5534 | 0.7771 |

| 0.4738 | 0.54 | 16000 | 0.5098 | 0.8039 |

| 0.481 | 0.58 | 17000 | 0.5244 | 0.7958 |

| 0.4634 | 0.61 | 18000 | 0.5215 | 0.7972 |

| 0.465 | 0.65 | 19000 | 0.5129 | 0.7985 |

| 0.4624 | 0.68 | 20000 | 0.5062 | 0.8047 |

| 0.4597 | 0.71 | 21000 | 0.5114 | 0.8029 |

| 0.4571 | 0.75 | 22000 | 0.5070 | 0.8073 |

| 0.4602 | 0.78 | 23000 | 0.5115 | 0.7993 |

| 0.4552 | 0.82 | 24000 | 0.5085 | 0.8052 |

| 0.4538 | 0.85 | 25000 | 0.5118 | 0.7974 |

| 0.4517 | 0.88 | 26000 | 0.5036 | 0.8044 |

| 0.4517 | 0.92 | 27000 | 0.4930 | 0.8062 |

| 0.4413 | 0.95 | 28000 | 0.5307 | 0.7964 |

| 0.4483 | 0.99 | 29000 | 0.5195 | 0.7938 |

| 0.4036 | 1.02 | 30000 | 0.5238 | 0.8029 |

| 0.3724 | 1.05 | 31000 | 0.5125 | 0.8082 |

| 0.3777 | 1.09 | 32000 | 0.5099 | 0.8075 |

| 0.3753 | 1.12 | 33000 | 0.5172 | 0.8053 |

| 0.367 | 1.15 | 34000 | 0.5188 | 0.8053 |

| 0.3819 | 1.19 | 35000 | 0.5218 | 0.8046 |

| 0.363 | 1.22 | 36000 | 0.5202 | 0.7993 |

| 0.3794 | 1.26 | 37000 | 0.5240 | 0.8048 |

| 0.3749 | 1.29 | 38000 | 0.5026 | 0.8054 |

| 0.367 | 1.32 | 39000 | 0.5198 | 0.8075 |

| 0.3759 | 1.36 | 40000 | 0.5298 | 0.7993 |

| 0.3701 | 1.39 | 41000 | 0.5072 | 0.8091 |

| 0.3742 | 1.43 | 42000 | 0.5071 | 0.8098 |

| 0.3706 | 1.46 | 43000 | 0.5317 | 0.8037 |

| 0.3716 | 1.49 | 44000 | 0.5034 | 0.8052 |

| 0.3717 | 1.53 | 45000 | 0.5258 | 0.8012 |

| 0.3714 | 1.56 | 46000 | 0.5195 | 0.8050 |

| 0.3781 | 1.6 | 47000 | 0.5004 | 0.8104 |

| 0.3725 | 1.63 | 48000 | 0.5124 | 0.8113 |

| 0.3624 | 1.66 | 49000 | 0.5040 | 0.8094 |

| 0.3657 | 1.7 | 50000 | 0.4979 | 0.8111 |

| 0.3669 | 1.73 | 51000 | 0.4968 | 0.8100 |

| 0.3636 | 1.77 | 52000 | 0.5075 | 0.8079 |

| 0.36 | 1.8 | 53000 | 0.4985 | 0.8110 |

| 0.3624 | 1.83 | 54000 | 0.5125 | 0.8070 |

| 0.366 | 1.87 | 55000 | 0.4918 | 0.8117 |

| 0.3655 | 1.9 | 56000 | 0.5051 | 0.8109 |

| 0.3609 | 1.94 | 57000 | 0.5083 | 0.8105 |

| 0.3672 | 1.97 | 58000 | 0.5129 | 0.8085 |

| 0.3545 | 2.0 | 59000 | 0.5467 | 0.8109 |

| 0.2938 | 2.04 | 60000 | 0.5635 | 0.8049 |

| 0.29 | 2.07 | 61000 | 0.5781 | 0.8041 |

| 0.2992 | 2.11 | 62000 | 0.5470 | 0.8077 |

| 0.2957 | 2.14 | 63000 | 0.5765 | 0.8073 |

| 0.292 | 2.17 | 64000 | 0.5472 | 0.8106 |

| 0.2893 | 2.21 | 65000 | 0.5590 | 0.8085 |

| 0.2883 | 2.24 | 66000 | 0.5535 | 0.8064 |

| 0.2923 | 2.28 | 67000 | 0.5508 | 0.8095 |

| 0.2868 | 2.31 | 68000 | 0.5679 | 0.8098 |

| 0.2892 | 2.34 | 69000 | 0.5660 | 0.8057 |

| 0.292 | 2.38 | 70000 | 0.5494 | 0.8088 |

| 0.286 | 2.41 | 71000 | 0.5653 | 0.8085 |

| 0.2939 | 2.45 | 72000 | 0.5673 | 0.8070 |

| 0.286 | 2.48 | 73000 | 0.5600 | 0.8092 |

| 0.2844 | 2.51 | 74000 | 0.5508 | 0.8095 |

| 0.2913 | 2.55 | 75000 | 0.5645 | 0.8088 |

| 0.2859 | 2.58 | 76000 | 0.5677 | 0.8095 |

| 0.2892 | 2.62 | 77000 | 0.5598 | 0.8113 |

| 0.2898 | 2.65 | 78000 | 0.5618 | 0.8096 |

| 0.2814 | 2.68 | 79000 | 0.5664 | 0.8103 |

| 0.2917 | 2.72 | 80000 | 0.5484 | 0.8122 |

| 0.2907 | 2.75 | 81000 | 0.5522 | 0.8116 |

| 0.2896 | 2.79 | 82000 | 0.5540 | 0.8093 |

| 0.2907 | 2.82 | 83000 | 0.5469 | 0.8104 |

| 0.2882 | 2.85 | 84000 | 0.5471 | 0.8122 |

| 0.2878 | 2.89 | 85000 | 0.5532 | 0.8108 |

| 0.2858 | 2.92 | 86000 | 0.5511 | 0.8115 |

| 0.288 | 2.96 | 87000 | 0.5491 | 0.8111 |

| 0.2834 | 2.99 | 88000 | 0.5541 | 0.8111 |

### Framework versions

- Transformers 4.12.3

- Pytorch 1.10.0+cu102

- Datasets 1.15.1

- Tokenizers 0.10.3

|

project2you/wav2vec2-large-xlsr-53-demo-colab | project2you | 2021-12-02T11:58:26Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:common_voice",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | automatic-speech-recognition | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: wav2vec2-large-xlsr-53-demo-colab

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-large-xlsr-53-demo-colab

This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the common_voice dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6901

- Wer: 1.6299

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30

- mixed_precision_training: Native AMP
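The `total_train_batch_size` of 32 follows from `train_batch_size` 16 times `gradient_accumulation_steps` 2. The sketch below, using a hypothetical one-parameter model and made-up data, shows why averaging the gradients of two micro-batches of 16 matches the gradient of a single batch of 32; the helper names are assumptions for this example and are not part of the Trainer API.

```python
def grad_mse(w, xs, ys):
    """Gradient of the mean squared error for the 1-D model y = w * x."""
    n = len(xs)
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n

def accumulated_grad(w, xs, ys, micro_batch_size, accumulation_steps):
    """Average the gradients of consecutive micro-batches before one update."""
    total = 0.0
    for i in range(accumulation_steps):
        lo = i * micro_batch_size
        hi = lo + micro_batch_size
        # Dividing by accumulation_steps turns the running sum into a mean.
        total += grad_mse(w, xs[lo:hi], ys[lo:hi]) / accumulation_steps
    return total

# Made-up data: 32 examples, split into two micro-batches of 16.
xs = [float(i) for i in range(32)]
ys = [3.0 * x + 1.0 for x in xs]

full = grad_mse(0.5, xs, ys)
accum = accumulated_grad(0.5, xs, ys, micro_batch_size=16, accumulation_steps=2)
print(abs(full - accum) < 1e-9)
```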

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 8.5034 | 3.42 | 400 | 3.5852 | 1.0 |

| 1.7853 | 6.83 | 800 | 0.7430 | 1.6774 |

| 0.5675 | 10.26 | 1200 | 0.6513 | 1.6330 |

| 0.3761 | 13.67 | 1600 | 0.6208 | 1.6081 |

| 0.2776 | 17.09 | 2000 | 0.6401 | 1.6081 |

| 0.2266 | 20.51 | 2400 | 0.6410 | 1.6295 |

| 0.1949 | 23.93 | 2800 | 0.6910 | 1.6287 |

| 0.1672 | 27.35 | 3200 | 0.6901 | 1.6299 |
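Word error rate is conventionally the word-level edit distance (substitutions, deletions, insertions) divided by the number of reference words, which is why values above 1.0 such as those in the table are possible when the hypothesis contains many extra words. The following is a minimal sketch of that definition; `word_error_rate` is a name chosen for this example, and the metric implementation actually used for this card is not stated.

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the reference prefix seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            cur.append(min(prev[j] + 1,          # deletion
                           cur[j - 1] + 1,       # insertion
                           prev[j - 1] + cost))  # substitution or match
        prev = cur
    return prev[-1] / len(ref)

print(word_error_rate("the cat sat", "the dog sat"))
# One reference word split into three hypothesis words gives a WER above 1.
print(word_error_rate("hello", "he llo there"))
```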

### Framework versions

- Transformers 4.11.3

- Pytorch 1.10.0+cu111

- Datasets 1.14.0

- Tokenizers 0.10.3

|

tosin/dialogpt_mwoz | tosin | 2021-12-02T09:32:03Z | 52 | 6 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"conversational",

"en",

"dataset:multi_woz_v22",

"arxiv:2110.06273",

"license:cc-by-4.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

thumbnail: https://huggingface.co/front/thumbnails/dialogpt.png

language:

- en

license: cc-by-4.0

tags:

- conversational

- transformers

datasets:

- multi_woz_v22

metrics:

- perplexity

widget:

- text: "I would like to have breakfast."

---

## DialoGPT_MWOZ

This is a fine-tuned model of DialoGPT (medium) on the MultiWOZ v2.2 dataset. It is intended to be used as a conversational system.

The dataset it's trained on is limited in scope, as it covers only certain domains such as restaurants, hotels, taxis, trains, hospitals and police.

The perplexities achieved on the test and validation sets are 10.25 and 10.71, respectively. More information about the original pre-trained model can be found [here](https://huggingface.co/microsoft/DialoGPT-medium).
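For readers unfamiliar with the metric, perplexity is the exponential of the average negative log-likelihood per token. The sketch below uses made-up token probabilities, not values from this model, and the function name `perplexity` is an assumption for this example.

```python
import math

def perplexity(token_log_probs):
    """exp of the mean negative log-likelihood over the given tokens."""
    avg_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(avg_nll)

# Hypothetical example: a model that assigns probability 0.1 to every token
# has a perplexity of about 10, close to the values reported above.
log_probs = [math.log(0.1)] * 4
print(round(perplexity(log_probs), 2))
```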

* Generation example from an interactive environment:

|Role | Response |

|---------|------------|

|User | I would like to have breakfast. |

|Bot | There are several options for Chinese in the city centre. Are you looking for a particular price range? |

|User | I'm looking for free food |

|Bot | There are 4 Chinese restaurants that are in the cheap price range. 3 in centre and one in north. |

|User | Not good enough |

|Bot | How about Charlie Chan? It is a Chinese restaurant in the centre of town. |

Please find information about preprocessing, training and full details of DialoGPT in the [original DialoGPT repository](https://github.com/microsoft/DialoGPT).

The paper for this work was accepted at the Northern Lights Deep Learning (NLDL) conference 2022. arXiv paper: [https://arxiv.org/pdf/2110.06273.pdf](https://arxiv.org/pdf/2110.06273.pdf)

### How to use

Now we are ready to try out how the model works as a chatting partner!

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
import torch

tokenizer = AutoTokenizer.from_pretrained("tosin/dialogpt_mwoz")
model = AutoModelForCausalLM.from_pretrained("tosin/dialogpt_mwoz")

# Let's chat for 5 lines
for step in range(5):
    # encode the new user input, add the eos_token and return a PyTorch tensor
    new_user_input_ids = tokenizer.encode(input(">> User:") + tokenizer.eos_token, return_tensors='pt')

    # append the new user input tokens to the chat history
    bot_input_ids = torch.cat([chat_history_ids, new_user_input_ids], dim=-1) if step > 0 else new_user_input_ids

    # generate a response while limiting the total chat history to 1000 tokens
    chat_history_ids = model.generate(bot_input_ids, max_length=1000, pad_token_id=tokenizer.eos_token_id)

    # pretty print the last output tokens from the bot
    print("DialoGPT_MWOZ_Bot: {}".format(tokenizer.decode(chat_history_ids[:, bot_input_ids.shape[-1]:][0], skip_special_tokens=True)))
```

|

chandank/bart-base-finetuned-kaggglenews-batch8 | chandank | 2021-12-02T09:16:30Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"bart",

"text2text-generation",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: bart-base-finetuned-kaggglenews-batch8

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bart-base-finetuned-kaggglenews-batch8

This model is a fine-tuned version of [facebook/bart-base](https://huggingface.co/facebook/bart-base) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:------:|:---------:|:-------:|

| No log | 1.0 | 495 | 1.6409 | 27.9647 | 15.4352 | 23.611 | 25.107 | 20.0 |
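As a reading aid for the Rouge1 column, the sketch below computes a simplified unigram-overlap F1 score on a 0 to 1 scale (the table reports scores scaled to 0 to 100). It is an assumption-laden approximation: it uses lowercased whitespace tokens only, whereas standard ROUGE implementations apply their own tokenization and optional stemming, and `rouge1_f1` is a name chosen for this example.

```python
from collections import Counter

def rouge1_f1(reference, candidate):
    """Simplified ROUGE-1: F1 over clipped unigram overlap."""
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())
    # Counter intersection keeps the minimum count of each shared token.
    overlap = sum((ref_counts & cand_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)

print(rouge1_f1("the cat sat on the mat", "the cat lay on the mat"))
```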

### Framework versions

- Transformers 4.12.5

- Pytorch 1.10.0+cu102

- Datasets 1.16.1

- Tokenizers 0.10.3

|

Jeska/VaccinChatSentenceClassifierDutch_fromBERTjeDIAL | Jeska | 2021-12-02T08:29:44Z | 5 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | 2022-03-02T23:29:04Z | ---

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: VaccinChatSentenceClassifierDutch_fromBERTjeDIAL

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# VaccinChatSentenceClassifierDutch_fromBERTjeDIAL

This model is a fine-tuned version of [Jeska/BertjeWDialDataQA20k](https://huggingface.co/Jeska/BertjeWDialDataQA20k) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.8355

- Accuracy: 0.6322

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 3.4418 | 1.0 | 1457 | 2.3866 | 0.5406 |

| 1.7742 | 2.0 | 2914 | 1.9365 | 0.6069 |

| 1.1313 | 3.0 | 4371 | 1.8355 | 0.6322 |

### Framework versions

- Transformers 4.13.0.dev0

- Pytorch 1.10.0

- Datasets 1.16.1

- Tokenizers 0.10.3

|

huggingtweets/kaikothesharko | huggingtweets | 2021-12-02T04:58:11Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

language: en

thumbnail: http://www.huggingtweets.com/kaikothesharko/1638421086822/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1463379249578987527/OUX9AGXt_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Kaiko TF (RAFFLE IN PINNED)</div>

<div style="text-align: center; font-size: 14px;">@kaikothesharko</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Kaiko TF (RAFFLE IN PINNED).

| Data | Kaiko TF (RAFFLE IN PINNED) |

| --- | --- |

| Tweets downloaded | 2169 |

| Retweets | 259 |

| Short tweets | 529 |

| Tweets kept | 1381 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/18zt3o3w/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.
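The counts in the table are consistent with a simple filter: 2169 downloaded minus 259 retweets minus 529 short tweets leaves 1381 kept. The sketch below shows one way such a filter could look. The `RT @` prefix check and the `min_words=3` threshold are assumptions for illustration; the exact criteria used by huggingtweets are not given in this card.

```python
def filter_tweets(tweets, min_words=3):
    """Split tweets into kept texts and counts of dropped retweets/short tweets.

    The retweet test and the word threshold are hypothetical choices.
    """
    kept, retweets, short = [], 0, 0
    for text in tweets:
        if text.startswith("RT @"):
            retweets += 1
        elif len(text.split()) < min_words:
            short += 1
        else:
            kept.append(text)
    return kept, retweets, short

sample = [
    "RT @someone: look at this",
    "lol",
    "just finished a new drawing today",
]
kept, retweets, short = filter_tweets(sample)
print(len(kept), retweets, short)

# Sanity check of the table's arithmetic.
print(2169 - 259 - 529)
```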

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @kaikothesharko's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1ajrcjpz) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1ajrcjpz/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/kaikothesharko')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by [Boris Dayma](https://twitter.com/intent/follow?screen_name=borisdayma)*

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

eliotm/t5-small-finetuned-en-to-ro-lr_2e-6 | eliotm | 2021-12-02T03:07:16Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:wmt16",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt16

metrics:

- bleu

model-index:

- name: t5-small-finetuned-en-to-ro-lr_2e-6

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: wmt16

type: wmt16

args: ro-en

metrics:

- name: Bleu

type: bleu

value: 7.2935

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-finetuned-en-to-ro-lr_2e-6

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wmt16 dataset.

It achieves the following results on the evaluation set:

- Loss: 1.4232

- Bleu: 7.2935

- Gen Len: 18.2521

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-06

- train_batch_size: 10

- eval_batch_size: 10

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 0.04375

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|

| 0.6703 | 0.04 | 2671 | 1.4232 | 7.2935 | 18.2521 |
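The fractional `num_epochs` value can be related to the logged step count with a little arithmetic. The sketch below estimates the number of training examples implied by 2671 steps covering 0.04375 of an epoch at batch size 10. It assumes a single device and no gradient accumulation, neither of which is stated in this card, and `estimate_dataset_size` is a name chosen for this example.

```python
def estimate_dataset_size(steps, epochs, batch_size):
    """Rough number of training examples implied by a step/epoch pair.

    Assumes one device and no gradient accumulation (not stated in the card).
    """
    steps_per_epoch = steps / epochs
    return round(steps_per_epoch * batch_size)

# About 610 thousand examples under the stated assumptions.
print(estimate_dataset_size(2671, 0.04375, 10))
```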

### Framework versions

- Transformers 4.12.5

- Pytorch 1.10.0+cu111

- Datasets 1.16.1

- Tokenizers 0.10.3

|

chopey/testmntdv | chopey | 2021-12-02T02:48:18Z | 3 | 0 | transformers | [

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | Test English-Dhivehi/Dhivehi-English NMT

Would need a lot more data to get accurate translations. |

BigSalmon/FormalRobertaa | BigSalmon | 2021-12-02T00:19:24Z | 5 | 0 | transformers | [

"transformers",

"pytorch",

"roberta",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | 2022-03-02T23:29:04Z | https://huggingface.co/spaces/BigSalmon/MASK2 |

BigSalmon/MrLincoln11 | BigSalmon | 2021-12-01T20:17:55Z | 10 | 0 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:04Z | Informal to Formal:

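```python
# AutoModelWithLMHead is deprecated in transformers; AutoModelForCausalLM
# is the replacement for GPT-2 style models.
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("gpt2")
model = AutoModelForCausalLM.from_pretrained("BigSalmon/MrLincoln11")
```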

```

How To Make Prompt:

Original: freedom of the press is a check against political corruption.

Edited: fundamental to the spirit of democracy, freedom of the press is a check against political corruption.

Edited 2: ever at odds with tyranny, freedom of the press is a check against political corruption.

Edited 3: never to be neglected, freedom of the press is a check against political corruption.

Original: solar is a beacon of achievement.

Edited: central to decoupling from the perils of unsustainable energy, solar is a beacon of achievement.

Edited 2: key to a future beyond fossil fuels, solar is a beacon of achievement.

Original: milan is nevertheless ambivalent towards his costly terms.

Edited: keen on contracting him, milan is nevertheless ambivalent towards his costly terms.

Edited 2: intent on securing his services, milan is nevertheless ambivalent towards his costly terms.

Original:

```
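The few-shot prompt above can also be assembled programmatically. The sketch below is one possible helper, not part of the model's tooling: the name `build_prompt` and the choice to end the prompt with an open `Edited:` line for the model to complete are assumptions.

```python
def build_prompt(examples, new_sentence):
    """Build an 'Original: / Edited:' few-shot prompt.

    examples is a list of (original, [edit1, edit2, ...]) pairs.
    """
    lines = []
    for original, edits in examples:
        lines.append(f"Original: {original}")
        for i, edit in enumerate(edits, start=1):
            # The first rewrite is labeled "Edited", later ones "Edited 2", ...
            label = "Edited" if i == 1 else f"Edited {i}"
            lines.append(f"{label}: {edit}")
    lines.append(f"Original: {new_sentence}")
    lines.append("Edited:")  # left open for the model to continue
    return "\n".join(lines)

examples = [
    ("solar is a beacon of achievement.",
     ["central to decoupling from the perils of unsustainable energy, solar is a beacon of achievement.",
      "key to a future beyond fossil fuels, solar is a beacon of achievement."]),
]
prompt = build_prompt(examples, "the library is a quiet place to study.")
print(prompt)
```

The resulting string would then be tokenized and passed to the model's `generate` method.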

```

How To Make Prompt:

informal english: i am very ready to do that just that.

Translated into the Style of Abraham Lincoln: you can assure yourself of my readiness to work toward this end.

Translated into the Style of Abraham Lincoln: please be assured that i am most ready to undertake this laborious task.

informal english: space is huge and needs to be explored.

Translated into the Style of Abraham Lincoln: space awaits traversal, a new world whose boundaries are endless.

Translated into the Style of Abraham Lincoln: space is a ( limitless / boundless ) expanse, a vast virgin domain awaiting exploration.

informal english: meteors are much harder to see, because they are only there for a fraction of a second.

Translated into the Style of Abraham Lincoln: meteors are not ( easily / readily ) detectable, lasting for mere fractions of a second.

informal english:

```

|

emrecan/convbert-base-turkish-mc4-cased-multinli_tr | emrecan | 2021-12-01T19:44:01Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"convbert",

"text-classification",

"zero-shot-classification",

"nli",

"tr",

"dataset:nli_tr",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | zero-shot-classification | 2022-03-02T23:29:05Z | ---

language:

- tr

tags:

- zero-shot-classification

- nli

- pytorch

pipeline_tag: zero-shot-classification

license: apache-2.0

datasets:

- nli_tr

widget:

- text: "Dolar yükselmeye devam ediyor."

candidate_labels: "ekonomi, siyaset, spor"

- text: "Senaryo çok saçmaydı, beğendim diyemem."

candidate_labels: "olumlu, olumsuz"

---
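Since this card contains only metadata, a short sketch of how NLI-based zero-shot classification usually turns model outputs into label scores may help. Each candidate label is paired with the input text as a hypothesis, the NLI model produces an entailment logit per label, and in the single-label setting the logits are normalized with a softmax across labels. The logits below are made up, and `zero_shot_scores` is a name chosen for this example; this is not the library's implementation.

```python
import math

def zero_shot_scores(candidate_labels, entailment_logits):
    """Softmax over per-label entailment logits (single-label setting)."""
    labels = [label.strip() for label in candidate_labels.split(",")]
    if len(labels) != len(entailment_logits):
        raise ValueError("need exactly one entailment logit per label")
    # Subtract the maximum for numerical stability before exponentiating.
    m = max(entailment_logits)
    exps = [math.exp(x - m) for x in entailment_logits]
    total = sum(exps)
    return {label: e / total for label, e in zip(labels, exps)}

# Labels from the widget example; the logits are hypothetical.
scores = zero_shot_scores("ekonomi, siyaset, spor", [2.0, 0.5, -1.0])
print(max(scores, key=scores.get))
```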

|

emrecan/convbert-base-turkish-mc4-cased-snli_tr | emrecan | 2021-12-01T19:43:30Z | 6 | 0 | transformers | [

"transformers",

"pytorch",

"convbert",

"text-classification",

"zero-shot-classification",

"nli",

"tr",

"dataset:nli_tr",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | zero-shot-classification | 2022-03-02T23:29:05Z | ---

language:

- tr

tags:

- zero-shot-classification

- nli

- pytorch

pipeline_tag: zero-shot-classification

license: apache-2.0

datasets:

- nli_tr

widget:

- text: "Dolar yükselmeye devam ediyor."

candidate_labels: "ekonomi, siyaset, spor"

- text: "Senaryo çok saçmaydı, beğendim diyemem."

candidate_labels: "olumlu, olumsuz"

---

|

emrecan/bert-base-multilingual-cased-snli_tr | emrecan | 2021-12-01T19:43:01Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"bert",

"text-classification",

"zero-shot-classification",

"nli",

"tr",

"dataset:nli_tr",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | zero-shot-classification | 2022-03-02T23:29:05Z | ---

language:

- tr

tags:

- zero-shot-classification

- nli

- pytorch

pipeline_tag: zero-shot-classification

license: apache-2.0

datasets:

- nli_tr

widget:

- text: "Dolar yükselmeye devam ediyor."

candidate_labels: "ekonomi, siyaset, spor"

- text: "Senaryo çok saçmaydı, beğendim diyemem."

candidate_labels: "olumlu, olumsuz"

---

|

huggingtweets/binance | huggingtweets | 2021-12-01T14:02:42Z | 7 | 2 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

language: en

thumbnail: http://www.huggingtweets.com/binance/1638367358099/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1466001345324875784/4RrjsTR__400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Binance</div>

<div style="text-align: center; font-size: 14px;">@binance</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Binance.

| Data | Binance |

| --- | --- |

| Tweets downloaded | 3250 |

| Retweets | 268 |

| Short tweets | 353 |

| Tweets kept | 2629 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/m31ml960/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.
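
The counts in the table above are consistent with a simple filter in which retweets and short tweets are dropped from the downloaded set. A minimal sketch of that bookkeeping, assuming the two filtered categories do not overlap (the variable names are illustrative and not taken from the huggingtweets code):

```python
# Counts copied from the training-data table above.
tweets_downloaded = 3250
retweets = 268
short_tweets = 353

# Assumption: retweets and short tweets are disjoint categories,
# so the kept tweets are whatever remains after removing both.
tweets_kept = tweets_downloaded - retweets - short_tweets
kept_fraction = tweets_kept / tweets_downloaded

print(tweets_kept)             # 2629, matching the "Tweets kept" row
print(f"{kept_fraction:.1%}")  # share of downloaded tweets used for fine-tuning
```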

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @binance's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/2vx6m0ip) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/2vx6m0ip/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/binance')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

wandemberg-eld/opus-mt-en-de-finetuned-en-to-de | wandemberg-eld | 2021-12-01T12:49:07Z | 49 | 0 | transformers | [

"transformers",

"pytorch",

"marian",

"text2text-generation",

"generated_from_trainer",

"dataset:wmt16",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt16

metrics:

- bleu

model-index:

- name: opus-mt-en-de-finetuned-en-to-de

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: wmt16

type: wmt16

args: de-en

metrics:

- name: Bleu

type: bleu

value: 29.4312

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# opus-mt-en-de-finetuned-en-to-de

This model is a fine-tuned version of [Helsinki-NLP/opus-mt-en-de](https://huggingface.co/Helsinki-NLP/opus-mt-en-de) on the wmt16 dataset.

It achieves the following results on the evaluation set:

- Loss: 1.4083

- Bleu: 29.4312

- Gen Len: 24.746

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len |

|:-------------:|:-----:|:------:|:---------------:|:-------:|:-------:|

| 1.978 | 1.0 | 568611 | 1.4083 | 29.4312 | 24.746 |
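
With one epoch, a batch size of 8, and 568611 optimizer steps, the table above implies roughly 4.5 million training pairs. A small sketch of that arithmetic, assuming a single device, no gradient accumulation, and a final partial batch that still counts as a step (the card states none of these):

```python
train_batch_size = 8      # from the hyperparameter list
steps_per_epoch = 568611  # "Step" column at epoch 1.0

# If the last batch may be partial, the number of examples N satisfies
# ceil(N / batch_size) == steps_per_epoch, which bounds N on both sides.
max_examples = steps_per_epoch * train_batch_size
min_examples = (steps_per_epoch - 1) * train_batch_size + 1

print(min_examples, max_examples)  # 4548881 4548888
```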

### Framework versions

- Transformers 4.12.5

- Pytorch 1.10.0+cu102

- Datasets 1.16.1

- Tokenizers 0.10.3

|

ying-tina/wav2vec2-base-timit-demo-colab-32 | ying-tina | 2021-12-01T10:54:26Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | automatic-speech-recognition | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

name: wav2vec2-base-timit-demo-colab-32

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-base-timit-demo-colab-32

This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4488

- Wer: 0.3149

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 32

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- num_epochs: 30

- mixed_precision_training: Native AMP
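
To make the `linear` scheduler with 1000 warmup steps concrete, here is a minimal sketch of the learning-rate curve. The function name is hypothetical, and `total_steps=3750` is an assumption inferred from the results table (step 3500 at epoch 28, i.e. 125 steps per epoch over 30 epochs); the rule itself is the usual linear warmup followed by linear decay to zero.

```python
def linear_schedule_lr(step, base_lr=1e-4, warmup_steps=1000, total_steps=3750):
    """Learning rate at an optimizer step: linear warmup, then linear decay to 0."""
    if step < warmup_steps:
        # Ramp up from 0 to base_lr over the warmup phase.
        return base_lr * step / warmup_steps
    # Decay linearly from base_lr at the end of warmup to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / (total_steps - warmup_steps)


for step in (0, 500, 1000, 2375, 3750):
    print(step, linear_schedule_lr(step))
```

Under these assumptions the peak rate of 1e-4 is reached exactly at step 1000, and the rate is back to half of that at step 2375.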

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 3.6155 | 4.0 | 500 | 2.2647 | 0.9992 |

| 0.9037 | 8.0 | 1000 | 0.4701 | 0.4336 |

| 0.3159 | 12.0 | 1500 | 0.4247 | 0.3575 |

| 0.1877 | 16.0 | 2000 | 0.4477 | 0.3442 |

| 0.1368 | 20.0 | 2500 | 0.4932 | 0.3384 |

| 0.1062 | 24.0 | 3000 | 0.4758 | 0.3202 |

| 0.0928 | 28.0 | 3500 | 0.4488 | 0.3149 |
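
The `Wer` column is the word error rate: the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. The following is a self-contained sketch of that metric, not the evaluation code used for this card; the function name and the example sentences are made up for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    # prev[j] holds the edit distance between the processed reference
    # prefix and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    # Note: an empty reference would divide by zero; callers should guard for it.
    return prev[-1] / len(ref)


# One missing word out of five reference words.
print(word_error_rate("she had your dark suit", "she had dark suit"))  # 0.2
```

Read this way, the final value of 0.3149 means roughly 31 word-level errors per 100 reference words.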

### Framework versions

- Transformers 4.12.5

- Pytorch 1.10.0+cu111

- Tokenizers 0.10.3

|

emrecan/distilbert-base-turkish-cased-multinli_tr | emrecan | 2021-12-01T10:50:34Z | 5 | 0 | transformers | [

"transformers",

"pytorch",

"distilbert",

"text-classification",

"zero-shot-classification",

"nli",

"tr",

"dataset:nli_tr",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | zero-shot-classification | 2022-03-02T23:29:05Z | ---

language:

- tr

tags:

- zero-shot-classification

- nli

- pytorch

pipeline_tag: zero-shot-classification

license: apache-2.0

datasets:

- nli_tr

widget:

- text: "Dolar yükselmeye devam ediyor."

candidate_labels: "ekonomi, siyaset, spor"

- text: "Senaryo çok saçmaydı, beğendim diyemem."

candidate_labels: "olumlu, olumsuz"

---

|

BSen/wav2vec2-large-xls-r-300m-turkish-colab | BSen | 2021-12-01T10:18:53Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:common_voice",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | automatic-speech-recognition | 2022-03-02T23:29:04Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: wav2vec2-large-xls-r-300m-turkish-colab

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-large-xls-r-300m-turkish-colab

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30

- mixed_precision_training: Native AMP
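
The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A short sketch of the relationship, assuming a single device (the card does not list a device count):

```python
train_batch_size = 16            # per-device batch size from the list above
gradient_accumulation_steps = 2  # gradients are summed over 2 batches per update
num_devices = 1                  # assumption: not stated in the card

# Number of examples that contribute to each optimizer update.
total_train_batch_size = train_batch_size * gradient_accumulation_steps * num_devices

print(total_train_batch_size)  # 32
```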

### Training results

### Framework versions

- Transformers 4.11.3

- Pytorch 1.10.0+cu111

- Datasets 1.13.3

- Tokenizers 0.10.3

|

glasses/vit_base_patch16_384 | glasses | 2021-12-01T08:26:46Z | 1 | 0 | transformers | [

"transformers",

"pytorch",

"arxiv:2010.11929",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z | # vit_base_patch16_384

Implementation of Vision Transformer (ViT) proposed in [An Image Is

Worth 16x16 Words: Transformers For Image Recognition At

Scale](https://arxiv.org/pdf/2010.11929.pdf)

The following image from the authors shows the architecture.

``` python

ViT.vit_small_patch16_224()

ViT.vit_base_patch16_224()

ViT.vit_base_patch16_384()

ViT.vit_base_patch32_384()

ViT.vit_huge_patch16_224()

ViT.vit_huge_patch32_384()

ViT.vit_large_patch16_224()

ViT.vit_large_patch16_384()

ViT.vit_large_patch32_384()

```

Examples:

``` python

# change activation

ViT.vit_base_patch16_224(activation = nn.SELU)

# change number of classes (default is 1000 )

ViT.vit_base_patch16_224(n_classes=100)

# pass a different block, default is TransformerEncoderBlock

ViT.vit_base_patch16_224(block=MyCoolTransformerBlock)

# get features

model = ViT.vit_base_patch16_224()

# first call .features, this activates the forward hooks and tells the model you'd like to get the features

model.encoder.features

model(torch.randn((1,3,224,224)))

# get the features from the encoder

features = model.encoder.features

print([x.shape for x in features])

# [torch.Size([1, 197, 768]), torch.Size([1, 197, 768]), ...]

# change the tokens, you have to subclass ViTTokens

class MyTokens(ViTTokens):

def __init__(self, emb_size: int):

super().__init__(emb_size)

self.my_new_token = nn.Parameter(torch.randn(1, 1, emb_size))

ViT(tokens=MyTokens)

```
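
The `197` in the feature shape `[1, 197, 768]` printed above comes from the patch grid: a 224x224 image split into 16x16 patches yields 14x14 = 196 patch tokens. A minimal sketch of that count, assuming one extra class token is prepended (the helper name is hypothetical and not part of the library):

```python
def vit_sequence_length(image_size: int, patch_size: int, extra_tokens: int = 1) -> int:
    """Number of tokens seen by the encoder: one per patch plus any extra tokens."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    return patches_per_side ** 2 + extra_tokens


print(vit_sequence_length(224, 16))  # 197
print(vit_sequence_length(384, 16))  # 577
```

Adding tokens through a `ViTTokens` subclass, as in the last example above, would correspond to a larger `extra_tokens` value in this sketch.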

|

glasses/efficientnet_b3 | glasses | 2021-12-01T08:08:37Z | 2 | 0 | transformers | [

"transformers",

"pytorch",

"arxiv:1905.11946",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z | # efficientnet_b3

Implementation of EfficientNet proposed in [EfficientNet: Rethinking

Model Scaling for Convolutional Neural

Networks](https://arxiv.org/abs/1905.11946)

The basic architecture is similar to MobileNetV2, as it was computed

using [Progressive Neural Architecture

Search](https://arxiv.org/abs/1905.11946).

The following table shows the basic architecture

(EfficientNet-efficientnet\_b0):

Then, the architecture is scaled up from

`efficientnet_b0` to `efficientnet_b7`

using compound scaling.
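
Compound scaling grows depth, width, and input resolution together from a single coefficient. The sketch below uses the base constants reported in the EfficientNet paper (1.2, 1.1, 1.15); the function name is hypothetical, and the released b1 to b7 configurations use hand-rounded values, so treat this as the idealized rule rather than the library's exact settings.

```python
# Base multipliers for depth, width and resolution from the EfficientNet paper.
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15


def compound_scale(phi: float):
    """Return (depth, width, resolution, flops) multipliers for coefficient phi."""
    depth = ALPHA ** phi
    width = BETA ** phi
    resolution = GAMMA ** phi
    # FLOPs grow linearly with depth and quadratically with width and resolution.
    flops = (ALPHA * BETA ** 2 * GAMMA ** 2) ** phi
    return depth, width, resolution, flops


for phi in range(4):
    depth, width, resolution, flops = compound_scale(phi)
    print(f"phi={phi}: depth x{depth:.2f}, width x{width:.2f}, "
          f"resolution x{resolution:.2f}, FLOPs x{flops:.2f}")
```

Because the product of the base constants is close to 2, each increment of `phi` roughly doubles the compute budget.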

``` python

EfficientNet.efficientnet_b0()

EfficientNet.efficientnet_b1()

EfficientNet.efficientnet_b2()

EfficientNet.efficientnet_b3()

EfficientNet.efficientnet_b4()

EfficientNet.efficientnet_b5()

EfficientNet.efficientnet_b6()

EfficientNet.efficientnet_b7()

EfficientNet.efficientnet_b8()

EfficientNet.efficientnet_l2()

```

Examples:

``` python

EfficientNet.efficientnet_b0(activation = nn.SELU)

# change number of classes (default is 1000 )

EfficientNet.efficientnet_b0(n_classes=100)

# pass a different block

EfficientNet.efficientnet_b0(block=...)

# store each feature

x = torch.rand((1, 3, 224, 224))

model = EfficientNet.efficientnet_b0()

# first call .features, this will activate the forward hooks and tells the model you'll like to get the features

model.encoder.features

model(torch.randn((1,3,224,224)))

# get the features from the encoder

features = model.encoder.features

print([x.shape for x in features])

# [torch.Size([1, 32, 112, 112]), torch.Size([1, 24, 56, 56]), torch.Size([1, 40, 28, 28]), torch.Size([1, 80, 14, 14])]

```

|

raphaelmerx/distilbert-base-uncased-finetuned-imdb | raphaelmerx | 2021-12-01T07:54:16Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"fill-mask",

"generated_from_trainer",

"dataset:imdb",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

model-index:

- name: distilbert-base-uncased-finetuned-imdb

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-imdb

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 2.4722

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 2.7117 | 1.0 | 157 | 2.4977 |

| 2.5783 | 2.0 | 314 | 2.4241 |

| 2.5375 | 3.0 | 471 | 2.4358 |

### Framework versions

- Transformers 4.12.5

- Pytorch 1.10.0+cu111

- Datasets 1.16.1

- Tokenizers 0.10.3

|

glasses/densenet161 | glasses | 2021-12-01T07:50:20Z | 2 | 0 | transformers | [

"transformers",

"pytorch",

"arxiv:1608.06993",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z | # densenet161

Implementation of DenseNet proposed in [Densely Connected Convolutional

Networks](https://arxiv.org/abs/1608.06993)

Create a default model

``` python

DenseNet.densenet121()

DenseNet.densenet161()

DenseNet.densenet169()

DenseNet.densenet201()

```

Examples:

``` python

# change activation

DenseNet.densenet121(activation = nn.SELU)

# change number of classes (default is 1000 )

DenseNet.densenet121(n_classes=100)

# pass a different block

DenseNet.densenet121(block=...)

# change the initial convolution

model = DenseNet.densenet121()

model.encoder.gate.conv1 = nn.Conv2d(3, 64, kernel_size=3)

# store each feature

x = torch.rand((1, 3, 224, 224))

model = DenseNet.densenet121()

# first call .features, this will activate the forward hooks and tells the model you'll like to get the features

model.encoder.features

model(torch.randn((1,3,224,224)))

# get the features from the encoder

features = model.encoder.features

print([x.shape for x in features])

# [torch.Size([1, 128, 28, 28]), torch.Size([1, 256, 14, 14]), torch.Size([1, 512, 7, 7]), torch.Size([1, 1024, 7, 7])]

```
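
The channel counts in the printed feature shapes above (128, 256, 512, 1024 for `densenet121`) can be reproduced from the architecture's bookkeeping: each layer in a dense block adds `growth_rate` channels, and each transition halves them. A minimal sketch, assuming the standard configurations from the DenseNet paper and that features are recorded after each transition (the helper name is hypothetical):

```python
def densenet_stage_channels(block_config=(6, 12, 24, 16), growth_rate=32,
                            stem_channels=64, compression=0.5):
    """Channel count after each dense block (and its transition, if any)."""
    channels = stem_channels
    outputs = []
    for index, num_layers in enumerate(block_config):
        # Every layer concatenates growth_rate new feature maps.
        channels += num_layers * growth_rate
        # All blocks except the last are followed by a compressing transition.
        if index < len(block_config) - 1:
            channels = int(channels * compression)
        outputs.append(channels)
    return outputs


# densenet121 defaults
print(densenet_stage_channels())  # [128, 256, 512, 1024]
# densenet161: wider stem, larger growth rate, deeper later blocks
print(densenet_stage_channels((6, 12, 36, 24), growth_rate=48, stem_channels=96))
# [192, 384, 1056, 2208]
```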

|

glasses/densenet169 | glasses | 2021-12-01T07:48:55Z | 1 | 0 | transformers | [

"transformers",

"pytorch",

"arxiv:1608.06993",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z | # densenet169

Implementation of DenseNet proposed in [Densely Connected Convolutional

Networks](https://arxiv.org/abs/1608.06993)

Create a default model

``` python

DenseNet.densenet121()

DenseNet.densenet161()

DenseNet.densenet169()

DenseNet.densenet201()

```

Examples:

``` python

# change activation

DenseNet.densenet121(activation = nn.SELU)

# change number of classes (default is 1000 )

DenseNet.densenet121(n_classes=100)

# pass a different block

DenseNet.densenet121(block=...)

# change the initial convolution

model = DenseNet.densenet121()

model.encoder.gate.conv1 = nn.Conv2d(3, 64, kernel_size=3)

# store each feature

x = torch.rand((1, 3, 224, 224))

model = DenseNet.densenet121()

# first call .features, this will activate the forward hooks and tells the model you'll like to get the features

model.encoder.features

model(torch.randn((1,3,224,224)))

# get the features from the encoder

features = model.encoder.features

print([x.shape for x in features])

# [torch.Size([1, 128, 28, 28]), torch.Size([1, 256, 14, 14]), torch.Size([1, 512, 7, 7]), torch.Size([1, 1024, 7, 7])]

```

|

glasses/regnety_008 | glasses | 2021-12-01T07:46:29Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"arxiv:2003.13678",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z | # regnety_008

Implementation of RegNet proposed in [Designing Network Design

Spaces](https://arxiv.org/abs/2003.13678)

The main idea is to start with a high dimensional search space and

iteratively reduce the search space by empirically applying constraints

based on the best performing models sampled by the current search

space.

The resulting models are light, accurate, and faster than

EfficientNets (up to 5x!)

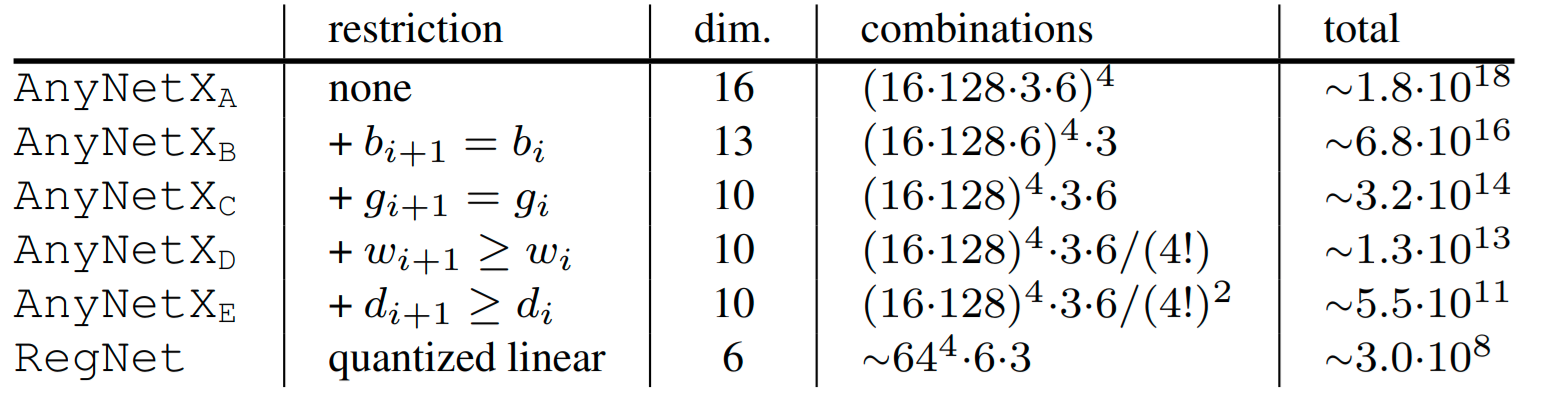

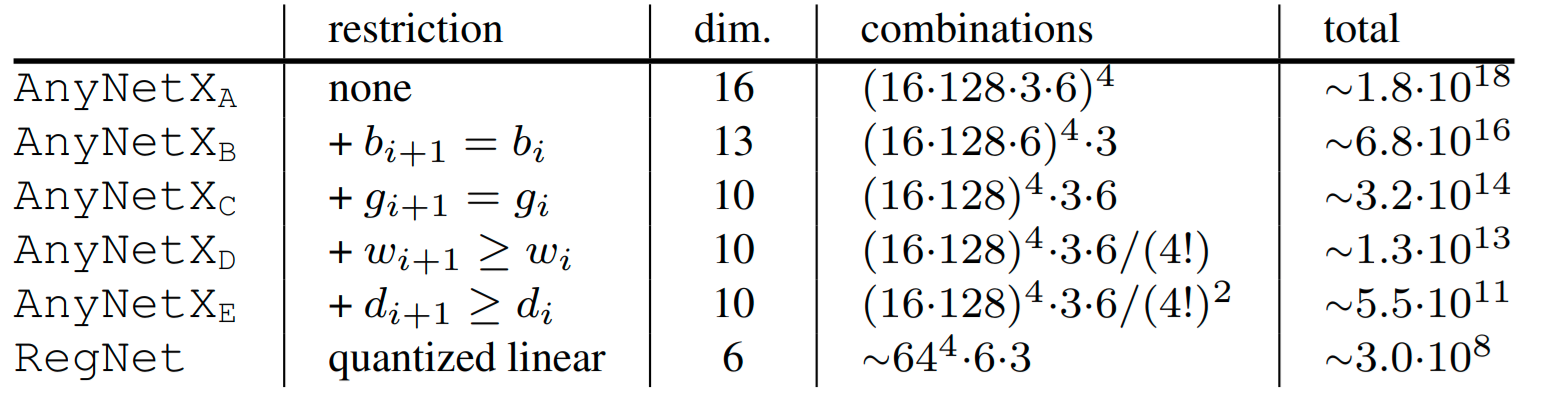

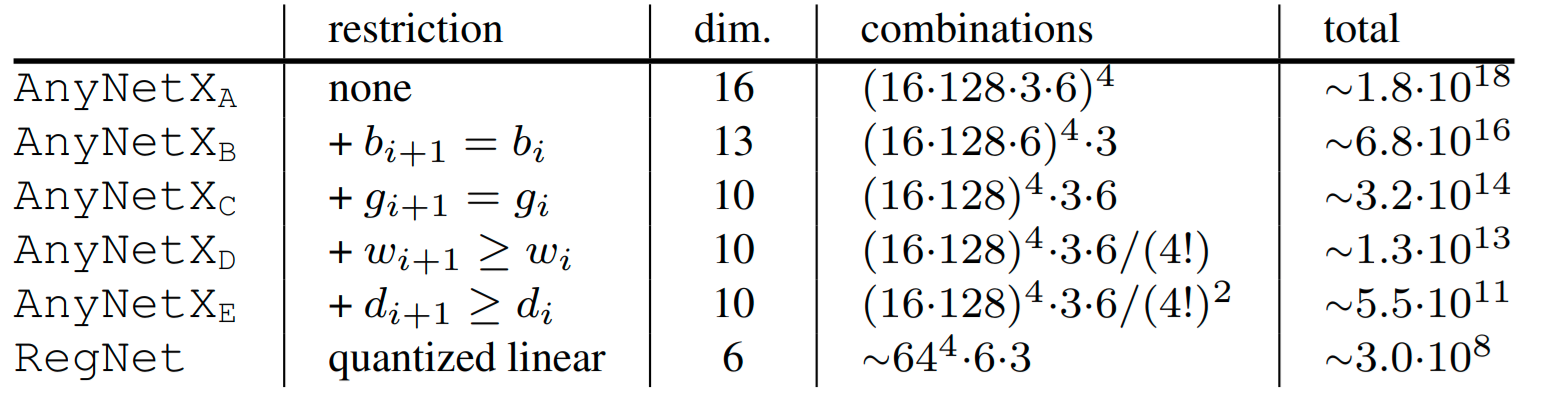

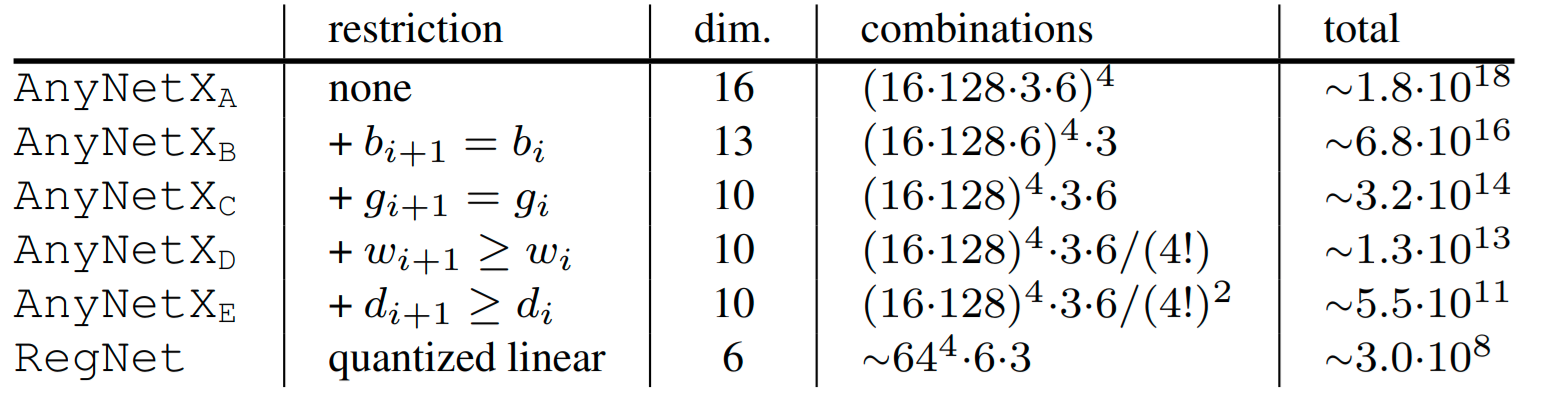

For example, to go from $AnyNet_A$ to $AnyNet_B$ they fixed the

bottleneck ratio $b_i$ for all stages $i$. The following table shows

all the restrictions applied from one search space to the next one.

The paper is really well written and very interesting, I highly

recommend reading it.

``` python

RegNet.regnetx_002()

RegNet.regnetx_004()

RegNet.regnetx_006()

RegNet.regnetx_008()

RegNet.regnetx_016()

RegNet.regnetx_040()

RegNet.regnetx_064()

RegNet.regnetx_080()

RegNet.regnetx_120()

RegNet.regnetx_160()

RegNet.regnetx_320()

# Y variants (with SE)

RegNet.regnety_002()

# ...

RegNet.regnety_320()

```

You can easily customize your model.

Examples:

``` python

# change activation

RegNet.regnetx_004(activation = nn.SELU)

# change number of classes (default is 1000 )

RegNet.regnetx_004(n_classes=100)

# pass a different block

RegNet.regnetx_004(block=RegNetYBotteneckBlock)

# change the stem

model = RegNet.regnetx_004(stem=ResNetStemC)

# change shortcut

model = RegNet.regnetx_004(block=partial(RegNetYBotteneckBlock, shortcut=ResNetShorcutD))

# store each feature

x = torch.rand((1, 3, 224, 224))

# get features

model = RegNet.regnetx_004()

# first call .features, this will activate the forward hooks and tells the model you'll like to get the features

model.encoder.features

model(torch.randn((1,3,224,224)))

# get the features from the encoder

features = model.encoder.features

print([x.shape for x in features])

#[torch.Size([1, 32, 112, 112]), torch.Size([1, 32, 56, 56]), torch.Size([1, 64, 28, 28]), torch.Size([1, 160, 14, 14])]

```

|

glasses/regnety_004 | glasses | 2021-12-01T07:45:42Z | 1 | 0 | transformers | [

"transformers",

"pytorch",

"arxiv:2003.13678",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z | # regnety_004

Implementation of RegNet proposed in [Designing Network Design

Spaces](https://arxiv.org/abs/2003.13678)

The main idea is to start with a high dimensional search space and

iteratively reduce the search space by empirically applying constraints

based on the best performing models sampled by the current search

space.

The resulting models are light, accurate, and faster than

EfficientNets (up to 5x!)

For example, to go from $AnyNet_A$ to $AnyNet_B$ they fixed the

bottleneck ratio $b_i$ for all stages $i$. The following table shows

all the restrictions applied from one search space to the next one.

The paper is really well written and very interesting, I highly

recommend reading it.

``` python

RegNet.regnetx_002()

RegNet.regnetx_004()

RegNet.regnetx_006()

RegNet.regnetx_008()

RegNet.regnetx_016()

RegNet.regnetx_040()

RegNet.regnetx_064()

RegNet.regnetx_080()

RegNet.regnetx_120()

RegNet.regnetx_160()

RegNet.regnetx_320()

# Y variants (with SE)

RegNet.regnety_002()

# ...

RegNet.regnety_320()

```

You can easily customize your model.

Examples:

``` python

# change activation

RegNet.regnetx_004(activation = nn.SELU)

# change number of classes (default is 1000 )

RegNet.regnetx_004(n_classes=100)

# pass a different block

RegNet.regnetx_004(block=RegNetYBotteneckBlock)

# change the stem

model = RegNet.regnetx_004(stem=ResNetStemC)

# change shortcut

model = RegNet.regnetx_004(block=partial(RegNetYBotteneckBlock, shortcut=ResNetShorcutD))

# store each feature

x = torch.rand((1, 3, 224, 224))

# get features

model = RegNet.regnetx_004()

# first call .features, this will activate the forward hooks and tells the model you'll like to get the features

model.encoder.features

model(torch.randn((1,3,224,224)))

# get the features from the encoder

features = model.encoder.features

print([x.shape for x in features])

#[torch.Size([1, 32, 112, 112]), torch.Size([1, 32, 56, 56]), torch.Size([1, 64, 28, 28]), torch.Size([1, 160, 14, 14])]

```

|

glasses/regnety_002 | glasses | 2021-12-01T07:45:22Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"arxiv:2003.13678",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z | # regnety_002

Implementation of RegNet proposed in [Designing Network Design

Spaces](https://arxiv.org/abs/2003.13678)

The main idea is to start with a high dimensional search space and

iteratively reduce the search space by empirically applying constraints

based on the best performing models sampled by the current search

space.

The resulting models are light, accurate, and faster than

EfficientNets (up to 5x!)

For example, to go from $AnyNet_A$ to $AnyNet_B$ they fixed the

bottleneck ratio $b_i$ for all stages $i$. The following table shows

all the restrictions applied from one search space to the next one.

The paper is really well written and very interesting, I highly

recommend reading it.

``` python

RegNet.regnetx_002()

RegNet.regnetx_004()

RegNet.regnetx_006()

RegNet.regnetx_008()

RegNet.regnetx_016()

RegNet.regnetx_040()

RegNet.regnetx_064()

RegNet.regnetx_080()

RegNet.regnetx_120()

RegNet.regnetx_160()

RegNet.regnetx_320()

# Y variants (with SE)

RegNet.regnety_002()

# ...

RegNet.regnety_320()

```

You can easily customize your model.

Examples:

``` python

# change activation

RegNet.regnetx_004(activation = nn.SELU)

# change number of classes (default is 1000 )

RegNet.regnetx_004(n_classes=100)

# pass a different block

RegNet.regnetx_004(block=RegNetYBotteneckBlock)

# change the stem

model = RegNet.regnetx_004(stem=ResNetStemC)

# change shortcut

model = RegNet.regnetx_004(block=partial(RegNetYBotteneckBlock, shortcut=ResNetShorcutD))

# store each feature

x = torch.rand((1, 3, 224, 224))

# get features

model = RegNet.regnetx_004()

# first call .features, this will activate the forward hooks and tells the model you'll like to get the features

model.encoder.features

model(torch.randn((1,3,224,224)))

# get the features from the encoder

features = model.encoder.features

print([x.shape for x in features])

#[torch.Size([1, 32, 112, 112]), torch.Size([1, 32, 56, 56]), torch.Size([1, 64, 28, 28]), torch.Size([1, 160, 14, 14])]

```

|

mofawzy/argpt2-goodreads | mofawzy | 2021-12-01T06:55:41Z | 7 | 1 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"generated_from_trainer",

"ar",

"dataset:LABR",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2022-03-02T23:29:05Z | ---

tags:

- generated_from_trainer

language: ar

datasets:

- LABR

widget:

- text: "كان الكاتب ممكن"

- text: "كتاب ممتاز ولكن"

- text: "رواية درامية جدا والافكار بسيطة"

model-index:

- name: argpt2-goodreads

results: []

---

# argpt2-goodreads

This model is a fine-tuned version of [gpt2-medium](https://huggingface.co/gpt2-medium) on the Goodreads LABR dataset.

It achieves the following results on the evaluation set:

- Loss: 1.4389

## Model description

Generates sentences, either positive or negative examples, based on the Goodreads corpus in Arabic.

## Intended uses & limitations

The model is fine-tuned on Arabic only, with the aim of generating sentences such as reviews. To do the same for other languages, you need to fine-tune it on your own data.

Any harmful content generated by GPT-2 should not be used anywhere.

## Training and evaluation data

Training and validation were done on the Goodreads LABR dataset, with 80% for training and 20% for testing.

## Usage

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("mofawzy/argpt2-goodreads")

model = AutoModelForCausalLM.from_pretrained("mofawzy/argpt2-goodreads")

```

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- distributed_type: tpu

- num_devices: 8

- total_train_batch_size: 128

- total_eval_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 20.0

### Training results

- train_loss = 1.474

### Evaluation results

- eval_loss = 1.4389

### train metrics

- epoch = 20.0

- train_loss = 1.474

- train_runtime = 2:18:14.51

- train_samples = 108110

- train_samples_per_second = 260.678

- train_steps_per_second = 2.037

### eval metrics

- epoch = 20.0

- eval_loss = 1.4389

- eval_runtime = 0:04:37.01

- eval_samples = 27329

- eval_samples_per_second = 98.655

- eval_steps_per_second = 0.773

- perplexity = 4.2162
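
The reported perplexity and training throughput can be cross-checked against the other metrics above. A short sketch, assuming perplexity is the exponential of the evaluation loss and that `train_samples_per_second` counts samples over all 20 epochs (neither is stated explicitly in the card):

```python
import math

# Values copied from the train and eval metrics above.
eval_loss = 1.4389
train_samples = 108110
epochs = 20
train_runtime_seconds = 2 * 3600 + 18 * 60 + 14.51  # 2:18:14.51

# Perplexity is exp(cross-entropy loss) when the loss is measured in nats.
perplexity = math.exp(eval_loss)

# Throughput over the whole run, counting every epoch's pass over the data.
train_samples_per_second = train_samples * epochs / train_runtime_seconds

print(round(perplexity, 3))                # 4.216
print(round(train_samples_per_second, 2))  # 260.68
```

The small gap between this value and the reported perplexity of 4.2162 is consistent with the loss having been rounded to four decimals before printing.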

### Framework versions

- Transformers 4.13.0.dev0

- Pytorch 1.10.0+cu102

- Datasets 1.16.1

- Tokenizers 0.10.3

|

ykliu1892/translation-en-pt-t5-finetuned-Duolingo | ykliu1892 | 2021-12-01T04:58:54Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | ---

tags:

- generated_from_trainer

metrics:

- bleu

model-index:

- name: translation-en-pt-t5-finetuned-Duolingo

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# translation-en-pt-t5-finetuned-Duolingo

This model was trained from scratch on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7362

- Bleu: 39.4725

- Gen Len: 9.002

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len |

|:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|

| 0.5429 | 0.24 | 9000 | 0.7461 | 39.4744 | 9.0 |

| 0.5302 | 0.48 | 18000 | 0.7431 | 39.7559 | 8.97 |

| 0.5309 | 0.72 | 27000 | 0.7388 | 39.6751 | 8.998 |

| 0.5336 | 0.96 | 36000 | 0.7362 | 39.4725 | 9.002 |

### Framework versions

- Transformers 4.12.5

- Pytorch 1.10.0+cu111

- Datasets 1.16.1

- Tokenizers 0.10.3

|

rossanez/t5-small-finetuned-de-en-256-epochs2 | rossanez | 2021-12-01T01:08:03Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:wmt14",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt14

metrics:

- bleu

model-index:

- name: t5-small-finetuned-de-en-256-epochs2

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: wmt14

type: wmt14

args: de-en

metrics:

- name: Bleu

type: bleu

value: 7.8579

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-finetuned-de-en-256-epochs2

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wmt14 dataset.

It achieves the following results on the evaluation set:

- Loss: 2.1073

- Bleu: 7.8579

- Gen Len: 17.3896

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|

| No log | 1.0 | 188 | 2.1179 | 7.8498 | 17.382 |

| No log | 2.0 | 376 | 2.1073 | 7.8579 | 17.3896 |

### Framework versions

- Transformers 4.12.5

- Pytorch 1.10.0+cu111

- Datasets 1.16.1

- Tokenizers 0.10.3

|

rossanez/t5-small-finetuned-de-en-256-nofp16 | rossanez | 2021-12-01T00:54:59Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:wmt14",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt14

model-index:

- name: t5-small-finetuned-de-en-256-nofp16

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-finetuned-de-en-256-nofp16

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wmt14 dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu   | Gen Len |
|:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|
| No log        | 1.0   | 188  | 2.1234          | 7.7305 | 17.4033 |
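
The step count in the table above gives a rough idea of the training subset size, which the card itself does not state. A minimal sketch, assuming a single device, no gradient accumulation, and that the last partial batch is kept (none of which the card confirms); the helper names are hypothetical:

```python
import math
from typing import Tuple


def steps_per_epoch(num_examples: int, batch_size: int = 16) -> int:
    """Optimizer steps needed to see every example once."""
    return math.ceil(num_examples / batch_size)


def example_count_range(steps: int, batch_size: int = 16) -> Tuple[int, int]:
    """Smallest and largest dataset sizes that yield exactly `steps` steps per epoch."""
    return (steps - 1) * batch_size + 1, steps * batch_size


low, high = example_count_range(188)
print(low, high)  # 2993 3008
```

So, under those assumptions, 188 steps at `train_batch_size: 16` corresponds to roughly 3,000 training examples per epoch.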

### Framework versions

- Transformers 4.12.5

- Pytorch 1.10.0+cu111

- Datasets 1.16.1

- Tokenizers 0.10.3

|

rossanez/t5-small-finetuned-de-en-256-wd-01 | rossanez | 2021-12-01T00:48:47Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:wmt14",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2022-03-02T23:29:05Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt14

model-index:

- name: t5-small-finetuned-de-en-256-wd-01

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-finetuned-de-en-256-wd-01

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wmt14 dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

- mixed_precision_training: Native AMP
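
For reference, the optimizer line above can be read as the standard Adam update rule with the listed betas and epsilon. The following is a scalar sketch of that textbook rule only; it omits weight decay and the learning-rate schedule, and the optimizer implementation the Trainer actually used may differ. The function `adam_step` is illustrative.

```python
import math


def adam_step(param, grad, m, v, t,
              lr=2e-5, beta1=0.9, beta2=0.999, eps=1e-8):
    """One textbook Adam update for a single scalar parameter (t starts at 1)."""
    m = beta1 * m + (1 - beta1) * grad          # running mean of gradients
    v = beta2 * v + (1 - beta2) * grad * grad   # running mean of squared gradients
    m_hat = m / (1 - beta1 ** t)                # bias correction for the zero init
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


param, m, v = adam_step(param=1.0, grad=0.5, m=0.0, v=0.0, t=1)
print(param)
```

On the very first step the bias-corrected ratio is close to the sign of the gradient, so the parameter moves by roughly `lr` regardless of the gradient's magnitude.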

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu   | Gen Len |
|:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|
| No log        | 1.0   | 188  | 2.1202          | 7.5964 | 17.3996 |

### Framework versions

- Transformers 4.12.5

- Pytorch 1.10.0+cu111

- Datasets 1.16.1

- Tokenizers 0.10.3

|

glasses/regnetx_016 | glasses | 2021-11-30T20:26:57Z | 3 | 0 | transformers | [

"transformers",

"pytorch",

"arxiv:2003.13678",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z | # regnetx_016

Implementation of RegNet proposed in [Designing Network Design Spaces](https://arxiv.org/abs/2003.13678)

The main idea is to start with a high-dimensional search space and iteratively reduce it by empirically applying constraints based on the best-performing models sampled from the current search space.

The resulting models are light, accurate, and faster than EfficientNets (up to 5x!)

For example, to go from $AnyNet_A$ to $AnyNet_B$ they fixed the bottleneck ratio $b_i$ for all stages $i$. The following table shows all the restrictions applied from one search space to the next one.

The paper is really well written and very interesting; I highly recommend reading it.
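
To make the $AnyNet_A$ to $AnyNet_B$ step concrete, here is a small sketch of how fixing the bottleneck ratio shrinks the search space. It is not code from glasses or from the paper: the `StageConfig` class, the sampler functions, and all value ranges are illustrative assumptions.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class StageConfig:
    depth: int             # number of blocks in the stage
    width: int             # number of channels
    bottleneck_ratio: int  # b_i
    group_width: int       # g_i


# Illustrative ranges only (assumptions, not taken from this card).
DEPTHS = range(1, 17)
WIDTHS = range(8, 1025, 8)
BOTTLENECK_RATIOS = (1, 2, 4)
GROUP_WIDTHS = (1, 2, 4, 8, 16, 32)


def sample_anynet_a(rng: random.Random, num_stages: int = 4) -> List[StageConfig]:
    """Every stage picks all of its parameters independently."""
    return [
        StageConfig(rng.choice(DEPTHS), rng.choice(WIDTHS),
                    rng.choice(BOTTLENECK_RATIOS), rng.choice(GROUP_WIDTHS))
        for _ in range(num_stages)
    ]


def sample_anynet_b(rng: random.Random, num_stages: int = 4) -> List[StageConfig]:
    """Same as A, but one bottleneck ratio is shared by all stages."""
    shared_b = rng.choice(BOTTLENECK_RATIOS)
    stages = sample_anynet_a(rng, num_stages)
    for stage in stages:
        stage.bottleneck_ratio = shared_b
    return stages


print(sample_anynet_b(random.Random(0)))
```

With four stages and three candidate ratios, the restriction cuts the number of bottleneck-ratio combinations from 3^4 = 81 to 3 while leaving the other parameters free.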

``` python
RegNet.regnetx_002()
RegNet.regnetx_004()
RegNet.regnetx_006()
RegNet.regnetx_008()
RegNet.regnetx_016()
RegNet.regnetx_040()
RegNet.regnetx_064()
RegNet.regnetx_080()
RegNet.regnetx_120()
RegNet.regnetx_160()
RegNet.regnetx_320()
# Y variants (with SE)
RegNet.regnety_002()
# ...
RegNet.regnety_320()
```

You can easily customize your model

Examples:

``` python
import torch
from functools import partial
from torch import nn

# RegNet, RegNetYBotteneckBlock, ResNetStemC and ResNetShorcutD are provided by glasses

# change activation
RegNet.regnetx_004(activation = nn.SELU)
# change number of classes (default is 1000)
RegNet.regnetx_004(n_classes=100)
# pass a different block
RegNet.regnetx_004(block=RegNetYBotteneckBlock)
# change the stem
model = RegNet.regnetx_004(stem=ResNetStemC)
# change shortcut
model = RegNet.regnetx_004(block=partial(RegNetYBotteneckBlock, shortcut=ResNetShorcutD))
# store each feature
x = torch.rand((1, 3, 224, 224))
# get features
model = RegNet.regnetx_004()
# first call .features; this activates the forward hooks and tells the model you'd like to get the features
model.encoder.features
model(x)
# get the features from the encoder
features = model.encoder.features
print([f.shape for f in features])
#[torch.Size([1, 32, 112, 112]), torch.Size([1, 32, 56, 56]), torch.Size([1, 64, 28, 28]), torch.Size([1, 160, 14, 14])]
```
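
The printed shapes above halve in spatial size from one feature map to the next (224 input, then 112, 56, 28, 14). As a quick sanity check, the expected sizes can be computed without building a model. This sketch assumes each stored feature is downsampled by a stride of 2 relative to the previous one, which is inferred only from the output shown; `expected_feature_sizes` is a hypothetical helper, not part of glasses.

```python
from typing import List


def expected_feature_sizes(input_size: int = 224, num_features: int = 4) -> List[int]:
    """Spatial side length of each stored feature map, halving at every step."""
    return [input_size // (2 ** k) for k in range(1, num_features + 1)]


print(expected_feature_sizes())  # [112, 56, 28, 14]
```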

|

glasses/wide_resnet101_2 | glasses | 2021-11-30T20:20:06Z | 4 | 0 | transformers | [

"transformers",

"pytorch",

"arxiv:1605.07146",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z | # wide_resnet101_2

Implementation of Wide ResNet proposed in ["Wide Residual Networks"](https://arxiv.org/pdf/1605.07146.pdf)

Create a default model

``` python

WideResNet.wide_resnet50_2()

WideResNet.wide_resnet101_2()

# create a wide_resnet18_4

WideResNet.resnet18(block=WideResNetBottleNeckBlock, width_factor=4)

```

Examples: