| modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

rightspeed/spacehope

|

rightspeed

| 2023-07-13T11:52:09Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"SpaceInvadersNoFrameskip-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-13T11:51:41Z |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: DQN

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: SpaceInvadersNoFrameskip-v4

type: SpaceInvadersNoFrameskip-v4

metrics:

- type: mean_reward

value: 5.00 +/- 7.07

name: mean_reward

verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga rightspeed -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```


## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga rightspeed

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 100000.0),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
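The exploration settings above can be read as a linear epsilon schedule. The following is a minimal sketch, not the library's code: the function name `linear_epsilon` is hypothetical, and the starting value of 1.0 is an assumption (it is not listed in the hyperparameters above).

```python
def linear_epsilon(step, total_timesteps, exploration_fraction,
                   initial_eps=1.0, final_eps=0.01):
    """Linearly anneal epsilon over the first `exploration_fraction` of training.

    `initial_eps=1.0` is an assumption; the card only lists the final value.
    """
    decay_steps = exploration_fraction * total_timesteps
    progress = min(step / decay_steps, 1.0)  # clamp once the decay phase is over
    return initial_eps + progress * (final_eps - initial_eps)


# Values taken from the hyperparameters above.
n_timesteps = 100_000
for step in (0, 5_000, 10_000, 50_000):
    print(step, round(linear_epsilon(step, n_timesteps, 0.1), 3))
```

Under these assumptions epsilon reaches its final value after 10,000 steps. Note also that `learning_starts` equals `n_timesteps` in this run, so most or all of the budget may have gone into filling the replay buffer rather than into gradient updates. That would be consistent with the low mean reward reported above, but it is an inference, not something the card states.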

## Environment Arguments

```python

{'render_mode': 'rgb_array'}

```

|

pigliketoeat/distilroberta-base-finetuned-wikitext2

|

pigliketoeat

| 2023-07-13T11:41:14Z | 161 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"roberta",

"fill-mask",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2023-07-13T11:09:53Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: distilroberta-base-finetuned-wikitext2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilroberta-base-finetuned-wikitext2

This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 1.8349

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 2.0852 | 1.0 | 2406 | 1.9234 |

| 1.992 | 2.0 | 4812 | 1.8828 |

| 1.9603 | 3.0 | 7218 | 1.8223 |

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

IbrahemVX2000/kandiskyai2-1

|

IbrahemVX2000

| 2023-07-13T11:29:14Z | 0 | 0 | null |

[

"text-to-image",

"kandinsky",

"license:apache-2.0",

"region:us"

] |

text-to-image

| 2023-07-13T11:27:16Z |

---

license: apache-2.0

prior: kandinsky-community/kandinsky-2-1-prior

tags:

- text-to-image

- kandinsky

---

# Kandinsky 2.1

Kandinsky 2.1 inherits best practices from Dall-E 2 and Latent diffusion while introducing some new ideas.

It uses the CLIP model as a text and image encoder, and diffusion image prior (mapping) between latent spaces of CLIP modalities. This approach increases the visual performance of the model and unveils new horizons in blending images and text-guided image manipulation.

The Kandinsky model was created by [Arseniy Shakhmatov](https://github.com/cene555), [Anton Razzhigaev](https://github.com/razzant), [Aleksandr Nikolich](https://github.com/AlexWortega), [Igor Pavlov](https://github.com/boomb0om), [Andrey Kuznetsov](https://github.com/kuznetsoffandrey), and [Denis Dimitrov](https://github.com/denndimitrov).

## Usage

Kandinsky 2.1 is available in diffusers!

```bash

pip install diffusers transformers accelerate

```

### Text to image

```python

from diffusers import DiffusionPipeline

import torch

pipe_prior = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16)

pipe_prior.to("cuda")

t2i_pipe = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

t2i_pipe.to("cuda")

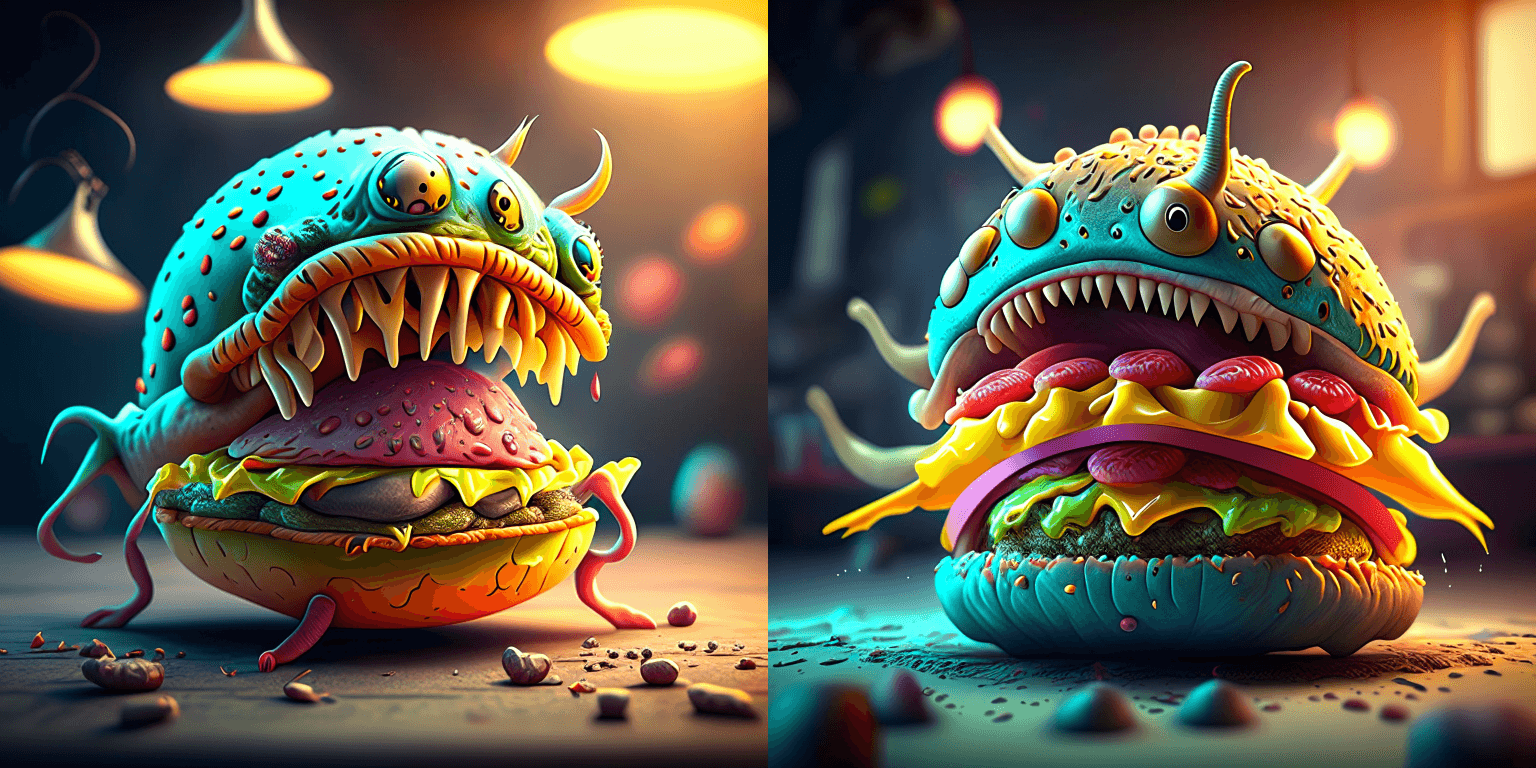

prompt = "A alien cheeseburger creature eating itself, claymation, cinematic, moody lighting"

negative_prompt = "low quality, bad quality"

image_embeds, negative_image_embeds = pipe_prior(prompt, negative_prompt, guidance_scale=1.0).to_tuple()

image = t2i_pipe(prompt, negative_prompt=negative_prompt, image_embeds=image_embeds, negative_image_embeds=negative_image_embeds, height=768, width=768).images[0]

image.save("cheeseburger_monster.png")

```

### Text Guided Image-to-Image Generation

```python

from diffusers import KandinskyImg2ImgPipeline, KandinskyPriorPipeline

import torch

from PIL import Image

import requests

from io import BytesIO

url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

response = requests.get(url)

original_image = Image.open(BytesIO(response.content)).convert("RGB")

original_image = original_image.resize((768, 512))

# create prior

pipe_prior = KandinskyPriorPipeline.from_pretrained(

"kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16

)

pipe_prior.to("cuda")

# create img2img pipeline

pipe = KandinskyImg2ImgPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

pipe.to("cuda")

prompt = "A fantasy landscape, Cinematic lighting"

negative_prompt = "low quality, bad quality"

image_embeds, negative_image_embeds = pipe_prior(prompt, negative_prompt).to_tuple()

out = pipe(

prompt,

image=original_image,

image_embeds=image_embeds,

negative_image_embeds=negative_image_embeds,

height=768,

width=768,

strength=0.3,

)

out.images[0].save("fantasy_land.png")

```

### Interpolate

```python

from diffusers import KandinskyPriorPipeline, KandinskyPipeline

from diffusers.utils import load_image

import PIL

import torch

pipe_prior = KandinskyPriorPipeline.from_pretrained(

"kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16

)

pipe_prior.to("cuda")

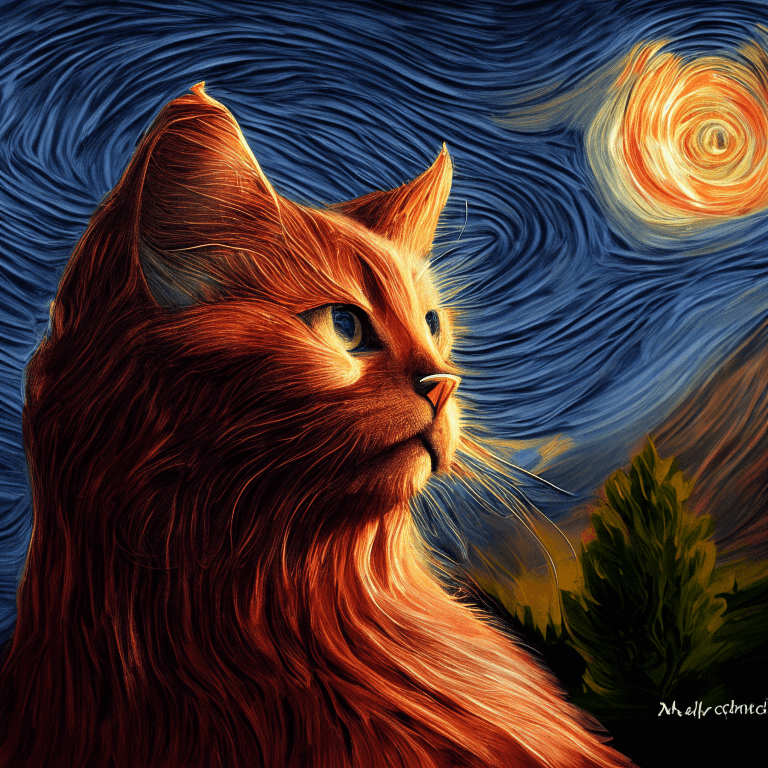

img1 = load_image(

"https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main" "/kandinsky/cat.png"

)

img2 = load_image(

"https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main" "/kandinsky/starry_night.jpeg"

)

# add all the conditions we want to interpolate, can be either text or image

images_texts = ["a cat", img1, img2]

# specify the weights for each condition in images_texts

weights = [0.3, 0.3, 0.4]

# We can leave the prompt empty

prompt = ""

prior_out = pipe_prior.interpolate(images_texts, weights)

pipe = KandinskyPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

pipe.to("cuda")

image = pipe(prompt, **prior_out, height=768, width=768).images[0]

image.save("starry_cat.png")

```
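Conceptually, the weights in the interpolation example say how much each condition contributes to the final image embedding. The sketch below illustrates that idea with plain Python lists. It assumes the conditions are combined as a weighted sum of their embeddings; the helper `weighted_blend` and the toy vectors are hypothetical and are not part of diffusers.

```python
def weighted_blend(embeddings, weights):
    """Return the element-wise weighted sum of equally sized embedding vectors."""
    if len(embeddings) != len(weights):
        raise ValueError("need exactly one weight per condition")
    dim = len(embeddings[0])
    return [
        sum(w * emb[i] for emb, w in zip(embeddings, weights))
        for i in range(dim)
    ]


# Toy 3-dimensional stand-ins for the embeddings of "a cat", img1 and img2.
toy_embeddings = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
]
blended = weighted_blend(toy_embeddings, [0.3, 0.3, 0.4])
print(blended)
```

With weights that sum to 1, as in the example above, the blended vector stays on the same scale as its inputs.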

## Model Architecture

### Overview

Kandinsky 2.1 is a text-conditional diffusion model based on unCLIP and latent diffusion, composed of a transformer-based image prior model, a unet diffusion model, and a decoder.

The model architectures are illustrated in the figure below - the chart on the left describes the process to train the image prior model, the figure in the center is the text-to-image generation process, and the figure on the right is image interpolation.

<p float="left">

<img src="https://raw.githubusercontent.com/ai-forever/Kandinsky-2/main/content/kandinsky21.png"/>

</p>

Specifically, the image prior model was trained on CLIP text and image embeddings generated with a pre-trained [mCLIP model](https://huggingface.co/M-CLIP/XLM-Roberta-Large-Vit-L-14). The trained image prior model is then used to generate mCLIP image embeddings for input text prompts. Both the input text prompts and their mCLIP image embeddings are used in the diffusion process. A [MoVQGAN](https://openreview.net/forum?id=Qb-AoSw4Jnm) model acts as the final block of the model, which decodes the latent representation into an actual image.

### Details

The image prior training of the model was performed on the [LAION Improved Aesthetics dataset](https://huggingface.co/datasets/bhargavsdesai/laion_improved_aesthetics_6.5plus_with_images), and then fine-tuning was performed on the [LAION HighRes data](https://huggingface.co/datasets/laion/laion-high-resolution).

The main Text2Image diffusion model was trained on 170M text-image pairs from the [LAION HighRes dataset](https://huggingface.co/datasets/laion/laion-high-resolution) (an important condition was the presence of images with a resolution of at least 768x768). Using only 170M pairs was possible because we kept the UNet diffusion block from Kandinsky 2.0 and so did not have to train it from scratch. For fine-tuning, we then used a separately collected dataset of 2M very high-quality, high-resolution images with descriptions (COYO, anime, landmarks_russia, and a number of others), gathered from open sources.

### Evaluation

We quantitatively measure the performance of Kandinsky 2.1 on the COCO_30k dataset, in zero-shot mode. The table below presents FID.

FID metric values for generative models on COCO_30k

| | FID (30k)|

|:------|----:|

| eDiff-I (2022) | 6.95 |

| Imagen (2022) | 7.27 |

| Kandinsky 2.1 (2023) | 8.21|

| Stable Diffusion 2.1 (2022) | 8.59 |

| GigaGAN, 512x512 (2023) | 9.09 |

| DALL-E 2 (2022) | 10.39 |

| GLIDE (2022) | 12.24 |

| Kandinsky 1.0 (2022) | 15.40 |

| DALL-E (2021) | 17.89 |

| Kandinsky 2.0 (2022) | 20.00 |

| GLIGEN (2022) | 21.04 |

For more information, please refer to the upcoming technical report.

## BibTex

If you find this repository useful in your research, please cite:

```

@misc{kandinsky21,

title = {kandinsky 2.1},

author = {Arseniy Shakhmatov and Anton Razzhigaev and Aleksandr Nikolich and Vladimir Arkhipkin and Igor Pavlov and Andrey Kuznetsov and Denis Dimitrov},

year = {2023},

howpublished = {},

}

```

|

offlinehq/autotrain-slovenian-swear-words-74310139575

|

offlinehq

| 2023-07-13T11:28:35Z | 111 | 0 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"roberta",

"text-classification",

"autotrain",

"unk",

"dataset:offlinehq/autotrain-data-slovenian-swear-words",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-13T11:22:57Z |

---

tags:

- autotrain

- text-classification

language:

- unk

widget:

- text: "I love AutoTrain"

datasets:

- offlinehq/autotrain-data-slovenian-swear-words

co2_eq_emissions:

emissions: 3.733207533466129

---

# Model Trained Using AutoTrain

- Problem type: Binary Classification

- Model ID: 74310139575

- CO2 Emissions (in grams): 3.7332

## Validation Metrics

- Loss: 0.575

- Accuracy: 0.702

- Precision: 0.682

- Recall: 0.708

- AUC: 0.764

- F1: 0.695
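As a quick consistency check, the F1 score is the harmonic mean of precision and recall, so it can be recomputed from the two values listed above. The function name `f1_score` is just an illustrative helper.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# Precision and recall as reported in the validation metrics above.
f1 = f1_score(0.682, 0.708)
print(round(f1, 3))  # 0.695
```

The recomputed value agrees with the reported F1 to three decimal places.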

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/offlinehq/autotrain-slovenian-swear-words-74310139575

```

Or Python API:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("offlinehq/autotrain-slovenian-swear-words-74310139575", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("offlinehq/autotrain-slovenian-swear-words-74310139575", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```
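The `outputs` object above carries raw logits rather than probabilities. The sketch below shows how such logits are typically turned into class probabilities with a softmax. The logit values are hypothetical placeholders, and plain Python is used here so the example runs without downloading the model.

```python
import math


def softmax(logits):
    """Convert a list of raw scores into probabilities that sum to 1."""
    shift = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for the two classes of a binary classifier.
logits = [0.4, -0.2]
probs = softmax(logits)
predicted_class = max(range(len(probs)), key=probs.__getitem__)
print(probs, predicted_class)
```

With the real model, the same step would usually be applied to `outputs.logits`, for example with `torch.softmax(outputs.logits, dim=-1)`.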

|

CanSukru/YORUvoicemodel

|

CanSukru

| 2023-07-13T11:23:45Z | 0 | 0 | null |

[

"license:creativeml-openrail-m",

"region:us"

] | null | 2023-07-13T11:12:34Z |

---

license: creativeml-openrail-m

---

|

preetham/rmicki

|

preetham

| 2023-07-13T11:13:58Z | 2 | 0 |

diffusers

|

[

"diffusers",

"tensorboard",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"dreambooth",

"base_model:CompVis/stable-diffusion-v1-4",

"base_model:finetune:CompVis/stable-diffusion-v1-4",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-07-13T10:39:15Z |

---

license: creativeml-openrail-m

base_model: CompVis/stable-diffusion-v1-4

instance_prompt: a photo of sks person

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

- dreambooth

inference: true

---

# DreamBooth - preetham/rmicki

This is a dreambooth model derived from CompVis/stable-diffusion-v1-4. The weights were trained on a photo of sks person using [DreamBooth](https://dreambooth.github.io/).

You can find some example images in the following.

DreamBooth for the text encoder was enabled: False.

|

Virch/q-FrozenLake-v1-4x4-noSlippery

|

Virch

| 2023-07-13T10:51:06Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-13T10:43:03Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

model = load_from_hub(repo_id="Virch/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```
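The snippet above calls `load_from_hub` without defining it, and the card does not say where it comes from. One possible definition is sketched below, assuming the model is a pickled dictionary (as the `.pkl` filename suggests) and that the `huggingface_hub` package is installed. The helper `load_pickled_model` is a hypothetical name introduced here.

```python
import pickle


def load_pickled_model(path):
    """Load a pickled model dictionary from a local file."""
    with open(path, "rb") as f:
        return pickle.load(f)


def load_from_hub(repo_id, filename):
    """Download `filename` from a Hub repo and unpickle it.

    Sketch only: imported lazily so the local helper above works
    even when huggingface_hub is not installed.
    """
    from huggingface_hub import hf_hub_download

    local_path = hf_hub_download(repo_id=repo_id, filename=filename)
    return load_pickled_model(local_path)
```

The usage snippet also needs a `gym` import (for example `import gymnasium as gym`, depending on which package is installed). Because unpickling can execute arbitrary code, only load files from repositories you trust.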

|

jackyjuakers/home

|

jackyjuakers

| 2023-07-13T10:50:41Z | 0 | 0 | null |

[

"license:bigcode-openrail-m",

"region:us"

] | null | 2023-07-13T10:50:41Z |

---

license: bigcode-openrail-m

---

|

jpandeinge/DialoGPT-medium-Oshiwambo-Bot

|

jpandeinge

| 2023-07-13T10:48:52Z | 154 | 1 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"gpt2",

"text-generation",

"conversational",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-07-11T06:12:35Z |

---

pipeline_tag: conversational

---

|

imone/LLaMA_13B_with_EOT_token

|

imone

| 2023-07-13T10:40:28Z | 12 | 2 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"en",

"license:other",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-05-26T08:22:58Z |

---

license: other

language:

- en

pipeline_tag: text-generation

---

# LLaMA 13B with End-of-turn (EOT) Token

This is the LLaMA 13B model with `<|end_of_turn|>` token added as id `32000`. The token input/output embedding is initialized as the mean of all existing input/output token embeddings, respectively.
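The mean initialization described above can be illustrated on a toy embedding matrix. This is a minimal sketch in plain Python, not the code used to build the checkpoint; the function name `append_mean_row` and the toy values are hypothetical.

```python
def append_mean_row(embedding_matrix):
    """Return a copy of the matrix with one extra row: the mean of all existing rows."""
    vocab_size = len(embedding_matrix)
    dim = len(embedding_matrix[0])
    mean_row = [
        sum(row[i] for row in embedding_matrix) / vocab_size
        for i in range(dim)
    ]
    return embedding_matrix + [mean_row]


# Toy vocabulary of 3 tokens with 2-dimensional embeddings.
toy_embeddings = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
extended = append_mean_row(toy_embeddings)
new_token_id = len(extended) - 1  # the new token takes the next free id
print(new_token_id, extended[new_token_id])
```

In the real model the same idea would be applied separately to the input embedding matrix and the output projection, with the new row landing at id `32000`.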

|

zlwang19/autotrain-randengq-74291139565

|

zlwang19

| 2023-07-13T10:38:00Z | 112 | 0 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"mt5",

"text2text-generation",

"autotrain",

"summarization",

"zh",

"dataset:zlwang19/autotrain-data-randengq",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

summarization

| 2023-07-13T10:32:56Z |

---

tags:

- autotrain

- summarization

language:

- zh

widget:

- text: "I love AutoTrain"

datasets:

- zlwang19/autotrain-data-randengq

co2_eq_emissions:

emissions: 2.4988443809859002

---

# Model Trained Using AutoTrain

- Problem type: Summarization

- Model ID: 74291139565

- CO2 Emissions (in grams): 2.4988

## Validation Metrics

- Loss: 4.728

- Rouge1: 8.502

- Rouge2: 2.226

- RougeL: 8.053

- RougeLsum: 7.996

- Gen Len: 17.022

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_HUGGINGFACE_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/zlwang19/autotrain-randengq-74291139565

```

|

ivivnov/ppo-Huggy

|

ivivnov

| 2023-07-13T10:36:38Z | 0 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-07-13T10:36:25Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is part of the official ML-Agents environments, go to https://huggingface.co/unity

2. Find your model_id: ivivnov/ppo-Huggy

3. Select your *.nn / *.onnx file

4. Click on Watch the agent play 👀

|

madroid/autotrain-text-chat-74266139562

|

madroid

| 2023-07-13T10:25:06Z | 108 | 1 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"deberta",

"text-classification",

"autotrain",

"en",

"dataset:madroid/autotrain-data-text-chat",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-13T10:24:08Z |

---

tags:

- autotrain

- text-classification

language:

- en

widget:

- text: "I love AutoTrain"

datasets:

- madroid/autotrain-data-text-chat

co2_eq_emissions:

emissions: 0.3508472536259808

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 74266139562

- CO2 Emissions (in grams): 0.3508

## Validation Metrics

- Loss: 0.005

- Accuracy: 1.000

- Macro F1: 1.000

- Micro F1: 1.000

- Weighted F1: 1.000

- Macro Precision: 1.000

- Micro Precision: 1.000

- Weighted Precision: 1.000

- Macro Recall: 1.000

- Micro Recall: 1.000

- Weighted Recall: 1.000

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/madroid/autotrain-text-chat-74266139562

```

Or Python API:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("madroid/autotrain-text-chat-74266139562", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("madroid/autotrain-text-chat-74266139562", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```

|

VK246/IC_ver6a_coco_swin_gpt2_50Apc_1e

|

VK246

| 2023-07-13T10:22:02Z | 45 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vision-encoder-decoder",

"image-text-to-text",

"generated_from_trainer",

"dataset:coco",

"endpoints_compatible",

"region:us"

] |

image-text-to-text

| 2023-07-13T07:09:15Z |

---

tags:

- generated_from_trainer

datasets:

- coco

metrics:

- rouge

- bleu

model-index:

- name: IC_ver6a_coco_swin_gpt2_50Apc_1e

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# IC_ver6a_coco_swin_gpt2_50Apc_1e

This model is a fine-tuned version of [](https://huggingface.co/) on the coco dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8477

- Rouge1: 40.2406

- Rouge2: 15.0629

- Rougel: 36.6294

- Rougelsum: 36.6164

- Bleu: 9.0728

- Gen Len: 11.2806

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 96

- eval_batch_size: 96

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Bleu | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:------:|:-------:|

| 1.1343 | 0.17 | 500 | 0.9708 | 35.1592 | 11.4248 | 32.3362 | 32.3316 | 6.404 | 11.2806 |

| 0.9606 | 0.34 | 1000 | 0.9123 | 37.9656 | 12.9721 | 34.5569 | 34.5606 | 7.489 | 11.2806 |

| 0.9286 | 0.51 | 1500 | 0.8828 | 38.7702 | 13.945 | 35.4661 | 35.4648 | 8.022 | 11.2806 |

| 0.8994 | 0.68 | 2000 | 0.8619 | 39.8572 | 14.6183 | 36.3345 | 36.3262 | 8.7008 | 11.2806 |

| 0.8843 | 0.85 | 2500 | 0.8525 | 39.8151 | 14.7431 | 36.3033 | 36.2918 | 8.8305 | 11.2806 |

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

AlexZigma/timesformer-bert-video-captioning

|

AlexZigma

| 2023-07-13T10:11:04Z | 41 | 3 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vision-encoder-decoder",

"image-text-to-text",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] |

image-text-to-text

| 2023-07-12T18:45:26Z |

---

tags:

- generated_from_trainer

metrics:

- rouge

- bleu

model-index:

- name: timesformer-bert-video-captioning

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# timesformer-bert-video-captioning

This model is a fine-tuned version of [](https://huggingface.co/) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 1.2821

- Rouge1: 30.0468

- Rouge2: 8.4998

- Rougel: 29.0632

- Rougelsum: 29.0231

- Bleu: 4.8298

- Gen Len: 9.5332

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Bleu | Gen Len | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum |

|:-------------:|:-----:|:----:|:------:|:-------:|:---------------:|:-------:|:------:|:-------:|:---------:|

| 2.4961 | 0.12 | 200 | 1.5879 | 9.5332 | 1.6548 | 25.4717 | 5.11 | 24.6679 | 24.6696 |

| 1.6561 | 0.25 | 400 | 2.3515 | 9.5332 | 1.5339 | 26.1748 | 5.9106 | 25.413 | 25.3958 |

| 1.5772 | 0.37 | 600 | 2.266 | 9.5332 | 1.4510 | 28.6891 | 6.0431 | 27.7387 | 27.8043 |

| 1.492 | 0.49 | 800 | 3.6517 | 9.5332 | 1.3760 | 29.0257 | 7.8515 | 28.3142 | 28.3036 |

| 1.4736 | 0.61 | 1000 | 3.4866 | 9.5332 | 1.3425 | 27.9774 | 6.2175 | 26.7783 | 26.7207 |

| 1.3856 | 0.74 | 1200 | 3.1649 | 9.5332 | 1.3118 | 27.3532 | 6.5569 | 26.4964 | 26.5087 |

| 1.3972 | 0.86 | 1400 | 3.5337 | 9.5332 | 1.2868 | 28.233 | 7.6471 | 27.3651 | 27.3354 |

| 1.374 | 0.98 | 1600 | 3.5737 | 9.5332 | 1.2571 | 28.8216 | 7.542 | 27.9166 | 27.9353 |

| 1.2207 | 1.1 | 1800 | 3.7983 | 9.5332 | 1.3362 | 29.9574 | 8.1088 | 28.8866 | 28.855 |

| 1.1861 | 1.23 | 2000 | 3.6521 | 9.5332 | 1.3295 | 30.072 | 7.7799 | 28.8417 | 28.864 |

| 1.1173 | 1.35 | 2200 | 3.9784 | 9.5332 | 1.3335 | 29.736 | 7.9661 | 28.6877 | 28.6974 |

| 1.1255 | 1.47 | 2400 | 4.3021 | 9.5332 | 1.3097 | 29.8176 | 8.4656 | 28.958 | 28.9571 |

| 1.0909 | 1.6 | 2600 | 4.4782 | 9.5332 | 1.3095 | 30.0233 | 8.4896 | 29.2562 | 29.2375 |

| 1.1205 | 1.72 | 2800 | 4.44 | 9.5332 | 1.2992 | 29.7164 | 8.007 | 28.5027 | 28.5018 |

| 1.1069 | 1.84 | 3000 | 4.6065 | 9.5332 | 1.2830 | 29.851 | 8.4312 | 28.8139 | 28.8205 |

| 1.076 | 1.96 | 3200 | 4.8298 | 9.5332 | 1.2821 | 30.0468 | 8.4998 | 29.0632 | 29.0231 |

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

ZoeVN/segformer-scene-parse-150-lora-50-epoch

|

ZoeVN

| 2023-07-13T10:02:46Z | 1 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-13T10:02:45Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.4.0.dev0


|

gaioNL/roberta-base_ag_news

|

gaioNL

| 2023-07-13T09:49:21Z | 104 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"roberta",

"text-classification",

"generated_from_trainer",

"dataset:ag_news",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-10T04:49:51Z |

---

license: mit

tags:

- generated_from_trainer

datasets:

- ag_news

model-index:

- name: roberta-base_ag_news

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# roberta-base_ag_news

This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the ag_news dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7991

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 3
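The learning-rate settings above (a linear scheduler with 500 warmup steps) can be sketched as a simple function of the training step. This is an illustrative reimplementation, assuming the usual linear warmup followed by linear decay to zero. The function name is hypothetical, and the total of 45,000 steps comes from the training results of this card (3 epochs of 15,000 steps).

```python
def linear_warmup_decay(step, base_lr, warmup_steps, total_steps):
    """Learning rate at `step`: linear ramp-up, then linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / (total_steps - warmup_steps)


BASE_LR = 5e-05
WARMUP_STEPS = 500
TOTAL_STEPS = 45_000  # 3 epochs x 15,000 steps, per the training results

for step in (0, 250, 500, 22_750, 45_000):
    lr = linear_warmup_decay(step, BASE_LR, WARMUP_STEPS, TOTAL_STEPS)
    print(step, f"{lr:.2e}")
```

Under these assumptions the rate peaks at `5e-05` at step 500 and falls back to zero by the final step.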

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 1.4306 | 1.0 | 15000 | 1.3696 |

| 1.0725 | 2.0 | 30000 | 0.9407 |

| 0.8715 | 3.0 | 45000 | 0.7991 |

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

pigliketoeat/distilgpt2-finetuned-wikitext2

|

pigliketoeat

| 2023-07-13T09:45:58Z | 200 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"text-generation",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-07-13T08:51:35Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: distilgpt2-finetuned-wikitext2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilgpt2-finetuned-wikitext2

This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 3.6421
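For a causal language model, the evaluation loss is often easier to interpret as a perplexity. The sketch below converts the loss reported above, assuming it is the mean per-token cross-entropy in nats; the card itself does not report a perplexity.

```python
import math

# Evaluation loss reported above, assumed to be mean cross-entropy in nats.
eval_loss = 3.6421
perplexity = math.exp(eval_loss)
print(f"Perplexity: {perplexity:.2f}")
```

Under that assumption the model's perplexity on the evaluation set is roughly 38.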

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 3.7602 | 1.0 | 2334 | 3.6669 |

| 3.653 | 2.0 | 4668 | 3.6472 |

| 3.6006 | 3.0 | 7002 | 3.6421 |

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

thomas2112/dqn-SpaceInvadersNoFrameskip-v4

|

thomas2112

| 2023-07-13T09:39:48Z | 6 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"SpaceInvadersNoFrameskip-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-04-15T12:26:01Z |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: DQN

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: SpaceInvadersNoFrameskip-v4

type: SpaceInvadersNoFrameskip-v4

metrics:

- type: mean_reward

value: 622.50 +/- 94.51

name: mean_reward

verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga thomas2112 -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```


## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga thomas2112

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 1000000.0),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
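To make the exploration settings concrete, here is a minimal sketch of the linear epsilon schedule they imply. It assumes epsilon starts at 1.0 (the card does not list `exploration_initial_eps`, so this starting value is an assumption) and is annealed over the first `exploration_fraction` of `n_timesteps`; `epsilon_at` is a hypothetical helper, not an SB3 function.

```python
def epsilon_at(step: int,
               n_timesteps: int = 1_000_000,
               exploration_fraction: float = 0.1,
               initial_eps: float = 1.0,  # assumed starting value, not listed in the card
               final_eps: float = 0.01) -> float:
    """Linearly anneal epsilon over the first `exploration_fraction` of training."""
    anneal_steps = exploration_fraction * n_timesteps
    progress = min(step / anneal_steps, 1.0)
    return initial_eps + progress * (final_eps - initial_eps)


for step in (0, 50_000, 100_000, 500_000):
    print(step, round(epsilon_at(step), 4))
```

With these values epsilon reaches its floor after 100,000 steps, which is the same point at which `learning_starts` allows gradient updates to begin.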

# Environment Arguments

```python

{'render_mode': 'rgb_array'}

```

|

preetham/rpanda2

|

preetham

| 2023-07-13T09:37:58Z | 1 | 0 |

diffusers

|

[

"diffusers",

"tensorboard",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"dreambooth",

"base_model:CompVis/stable-diffusion-v1-4",

"base_model:finetune:CompVis/stable-diffusion-v1-4",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-07-13T09:02:06Z |

---

license: creativeml-openrail-m

base_model: CompVis/stable-diffusion-v1-4

instance_prompt: a photo of sks panda

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

- dreambooth

inference: true

---

# DreamBooth - preetham/rpanda2

This is a dreambooth model derived from CompVis/stable-diffusion-v1-4. The weights were trained on a photo of sks panda using [DreamBooth](https://dreambooth.github.io/).

You can find some example images in the following.

DreamBooth for the text encoder was enabled: False.

|

dada325/Taxi-v3-qLearning-test

|

dada325

| 2023-07-13T09:34:57Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-13T09:34:46Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: Taxi-v3-qLearning-test

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

metrics:

- type: mean_reward

value: 7.50 +/- 2.73

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

```python

model = load_from_hub(repo_id="dada325/Taxi-v3-qLearning-test", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```
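Once the pickled dictionary is loaded, acting with a tabular Q-Learning agent amounts to picking the highest-valued action for the current state. The sketch below assumes the table is stored as a list of rows indexed by state under a key such as `"qtable"`; that key name and the toy values are assumptions, since the card only shows `env_id`.

```python
from typing import Sequence


def greedy_action(qtable: Sequence[Sequence[float]], state: int) -> int:
    """Return the index of the highest-valued action for `state`."""
    row = qtable[state]
    return max(range(len(row)), key=lambda action: row[action])


# Toy 2-state, 3-action table standing in for model["qtable"] (hypothetical key).
toy_qtable = [[0.1, 0.5, 0.2],
              [0.9, 0.0, 0.3]]
print(greedy_action(toy_qtable, 0), greedy_action(toy_qtable, 1))
```

In an evaluation loop the returned index would be passed to `env.step(...)` at every step until the episode ends.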

|

Fixedbot/ppo-Huggy

|

Fixedbot

| 2023-07-13T09:33:07Z | 23 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-07-13T09:32:52Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is part of the official ML-Agents environments, go to https://huggingface.co/unity
2. Find your model_id: Fixedbot/ppo-Huggy
3. Select your *.nn / *.onnx file
4. Click on Watch the agent play 👀

|

predictia/cerra_tas_vqvae

|

predictia

| 2023-07-13T09:31:45Z | 3 | 0 |

diffusers

|

[

"diffusers",

"tensorboard",

"climate",

"transformers",

"image-to-image",

"es",

"en",

"license:apache-2.0",

"region:us"

] |

image-to-image

| 2023-06-28T11:28:11Z |

---

license: apache-2.0

language:

- es

- en

metrics:

- mse

pipeline_tag: image-to-image

tags:

- climate

- transformers

---

# Europe Reanalysis Super Resolution

The aim of the project is to create a machine learning (ML) model that can generate high-resolution regional reanalysis data (similar to that produced by CERRA) by downscaling global reanalysis data from ERA5.

This will be accomplished using state-of-the-art deep learning (DL) techniques such as U-Net, conditional GANs, and diffusion models, among others. Additionally, an ingestion module will be implemented to assess the possible benefit of using CERRA pseudo-observations as extra predictors. Once the model is designed and trained, a detailed validation framework is applied.

It combines classical deterministic error metrics with in-depth validations, including time series, maps, spatio-temporal correlations, and computer vision metrics, disaggregated by months, seasons, and geographical regions, to evaluate the effectiveness of the model in reducing errors and representing physical processes. This level of granularity allows for a more comprehensive and accurate assessment, which is critical for ensuring that the model is effective in practice.

Moreover, tools for interpretability of DL models can be used to understand the inner workings and decision-making processes of these complex structures by analyzing the activations of different neurons and the importance of different features in the input data.

This work is funded by the [Code for Earth 2023](https://codeforearth.ecmwf.int/) initiative.

|

dada325/q-FrozenLake-v1-4x4-noSlippery

|

dada325

| 2023-07-13T09:26:20Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-13T09:26:17Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

model = load_from_hub(repo_id="dada325/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```
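The `mean_reward` value in the metadata is written as a mean plus or minus a standard deviation over evaluation episodes. A minimal sketch of that summary follows; it assumes a population standard deviation, and the number of episodes and reward values are illustrative because the card does not state them.

```python
import statistics
from typing import Sequence, Tuple


def summarize_rewards(episode_rewards: Sequence[float]) -> Tuple[float, float]:
    """Return (mean, population standard deviation) of per-episode rewards."""
    mean = statistics.fmean(episode_rewards)
    std = statistics.pstdev(episode_rewards)
    return mean, std


# Illustrative data: every evaluation episode reaches the goal (reward 1.0).
rewards = [1.0] * 100
mean, std = summarize_rewards(rewards)
print(f"{mean:.2f} +/- {std:.2f}")
```

A deterministic, non-slippery map with a greedy policy gives the same reward in every episode, which is why a zero spread is plausible here.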

|

Devops-hestabit/Othehalf-350m-onnx

|

Devops-hestabit

| 2023-07-13T09:23:52Z | 3 | 0 |

transformers

|

[

"transformers",

"onnx",

"opt",

"text-generation",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-07-13T09:19:29Z |

---

license: creativeml-openrail-m

---

|

kfkas/LawBot-level1

|

kfkas

| 2023-07-13T09:13:25Z | 8 | 1 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-13T09:13:08Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16

### Framework versions

- PEFT 0.4.0.dev0

|

youlun77/finetuning-sentiment-model-25000-samples-BERT

|

youlun77

| 2023-07-13T09:10:41Z | 116 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:imdb",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-13T07:30:02Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

model-index:

- name: finetuning-sentiment-model-25000-samples-BERT

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# finetuning-sentiment-model-25000-samples-BERT

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- eval_loss: 0.2154

- eval_accuracy: 0.9422

- eval_f1: 0.9427

- eval_runtime: 823.1435

- eval_samples_per_second: 30.371

- eval_steps_per_second: 1.899

- step: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2
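The throughput figures in the evaluation results can be cross-checked against the batch size listed here. The sketch below multiplies the reported runtime by the reported rates and assumes evaluation ran on a single device, so that `eval_batch_size` is the effective batch size; that is an assumption, not something the card states.

```python
import math

# Values copied from the evaluation results and hyperparameters in this card.
eval_runtime_s = 823.1435
samples_per_second = 30.371
steps_per_second = 1.899
eval_batch_size = 16

n_samples = round(eval_runtime_s * samples_per_second)   # evaluated examples
n_steps = round(eval_runtime_s * steps_per_second)       # evaluation batches
expected_steps = math.ceil(n_samples / eval_batch_size)  # batches implied by the batch size

print(n_samples, n_steps, expected_steps)
```

If the two step counts agree, the reported rates are consistent with an evaluation set whose size matches the sample count in the model name.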

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

NasimB/gpt2-concat-cbt-mod-formatting-rarity-no-cut

|

NasimB

| 2023-07-13T09:09:46Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"generated_from_trainer",

"dataset:generator",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-07-13T07:25:10Z |

---

license: mit

tags:

- generated_from_trainer

datasets:

- generator

model-index:

- name: gpt2-concat-cbt-mod-formatting-rarity-no-cut

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# gpt2-concat-cbt-mod-formatting-rarity-no-cut

This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on the generator dataset.

It achieves the following results on the evaluation set:

- Loss: 4.3220

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 1000

- num_epochs: 6

- mixed_precision_training: Native AMP
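The scheduler settings above describe a linear warmup followed by a cosine decay. Here is a minimal sketch of that shape using the listed learning rate and warmup length; the total step count is a hypothetical placeholder because the card does not state it, and `lr_at` is an illustrative helper rather than the Trainer's own implementation.

```python
import math


def lr_at(step: int, total_steps: int,
          base_lr: float = 5e-4, warmup_steps: int = 1000) -> float:
    """Linear warmup to `base_lr`, then a half-cosine decay towards zero."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


TOTAL_STEPS = 10_000  # hypothetical; the exact total is not given in the card
for step in (0, 500, 1000, 5500, 10_000):
    print(step, f"{lr_at(step, TOTAL_STEPS):.6f}")
```

The peak rate is reached exactly at the end of warmup, and half of it remains at the midpoint of the decay phase.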

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 6.6963 | 0.29 | 500 | 5.6460 |

| 5.339 | 0.58 | 1000 | 5.2151 |

| 4.9881 | 0.87 | 1500 | 4.9639 |

| 4.7163 | 1.17 | 2000 | 4.8133 |

| 4.5583 | 1.46 | 2500 | 4.6867 |

| 4.4467 | 1.75 | 3000 | 4.5797 |

| 4.3262 | 2.04 | 3500 | 4.5034 |

| 4.1271 | 2.33 | 4000 | 4.4547 |

| 4.0958 | 2.62 | 4500 | 4.3996 |

| 4.0656 | 2.92 | 5000 | 4.3439 |

| 3.8593 | 3.21 | 5500 | 4.3407 |

| 3.8057 | 3.5 | 6000 | 4.3111 |

| 3.7844 | 3.79 | 6500 | 4.2748 |

| 3.684 | 4.08 | 7000 | 4.2752 |

| 3.5114 | 4.37 | 7500 | 4.2698 |

| 3.5119 | 4.66 | 8000 | 4.2560 |

| 3.498 | 4.96 | 8500 | 4.2415 |

| 3.3431 | 5.25 | 9000 | 4.2555 |

| 3.3208 | 5.54 | 9500 | 4.2541 |

| 3.3169 | 5.83 | 10000 | 4.2527 |

### Framework versions

- Transformers 4.26.1

- Pytorch 1.11.0+cu113

- Datasets 2.13.0

- Tokenizers 0.13.3

|

YanJiangJerry/sentiment-bloom-large-e6

|

YanJiangJerry

| 2023-07-13T08:58:38Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bloom",

"text-classification",

"generated_from_trainer",

"license:bigscience-bloom-rail-1.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-13T07:52:49Z |

---

license: bigscience-bloom-rail-1.0

tags:

- generated_from_trainer

model-index:

- name: sentiment-bloom-large-e6

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# sentiment-bloom-large-e6

This model is a fine-tuned version of [LYTinn/bloom-finetuning-sentiment-model-3000-samples](https://huggingface.co/LYTinn/bloom-finetuning-sentiment-model-3000-samples) on an unspecified dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 6

### Training results

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

soonmo/distilbert-base-uncased-finetuned-clinc

|

soonmo

| 2023-07-13T08:58:26Z | 110 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:clinc_oos",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-12T01:45:07Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- clinc_oos

metrics:

- accuracy

model-index:

- name: distilbert-base-uncased-finetuned-clinc

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: clinc_oos

type: clinc_oos

config: plus

split: validation

args: plus

metrics:

- name: Accuracy

type: accuracy

value: 0.9161290322580645

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-clinc

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7754

- Accuracy: 0.9161

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 48

- eval_batch_size: 48

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 4.2893 | 1.0 | 318 | 3.2831 | 0.7397 |

| 2.6289 | 2.0 | 636 | 1.8731 | 0.8345 |

| 1.5481 | 3.0 | 954 | 1.1580 | 0.89 |

| 1.0137 | 4.0 | 1272 | 0.8584 | 0.9077 |

| 0.7969 | 5.0 | 1590 | 0.7754 | 0.9161 |
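The Step column shows 318 optimizer steps per epoch, which together with the training batch size of 48 bounds the size of the training split. The sketch below assumes single-device training, no gradient accumulation, and that the last partial batch is kept; none of these is stated in the card, and `dataset_size_bounds` is a hypothetical helper.

```python
from typing import Tuple


def dataset_size_bounds(steps_per_epoch: int, batch_size: int) -> Tuple[int, int]:
    """Smallest and largest example counts that yield `steps_per_epoch` batches."""
    smallest = (steps_per_epoch - 1) * batch_size + 1
    largest = steps_per_epoch * batch_size
    return smallest, largest


print(dataset_size_bounds(steps_per_epoch=318, batch_size=48))
```

The same check works for any of the Trainer-generated tables in which the step count grows by a fixed amount per epoch.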

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

digiplay/helloFlatAnime_v1

|

digiplay

| 2023-07-13T08:57:50Z | 1,606 | 2 |

diffusers

|

[

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-07-13T07:42:03Z |

---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

Model info:

https://civitai.com/models/102893/helloflatanime

Original author's demo images: [image]

|

digiplay/hellopure_v2.24Beta

|

digiplay

| 2023-07-13T08:49:07Z | 70 | 4 |

diffusers

|

[

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-07-13T04:21:25Z |

---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

👍👍👍👍👍

https://civitai.com/models/88202/hellopure

Other models from Author: https://civitai.com/user/aji1/models

Sample image I made with AUTOMATIC1111 :

parameters

very close-up ,(best beautiful:1.2), (masterpiece:1.2), (best quality:1.2),masterpiece, best quality, The image features a beautiful young woman with long light golden hair, beach near the ocean, white dress ,The beach is lined with palm trees,

Negative prompt: worst quality ,normal quality ,

Steps: 17, Sampler: Euler, CFG scale: 5, Seed: 1097775045, Size: 480x680, Model hash: 8d4fa7988b, Clip skip: 2, Version: v1.4.1
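To reuse these settings in a script, the comma-separated settings line can be split into key/value pairs. This is a minimal sketch that assumes no value contains a `", "` sequence, which holds for the line above but not for every such line; `parse_generation_settings` is a hypothetical helper.

```python
from typing import Dict


def parse_generation_settings(line: str) -> Dict[str, str]:
    """Split a 'Key: value, Key: value' settings line into a dictionary."""
    settings = {}
    for field in line.split(", "):
        key, _, value = field.partition(": ")
        settings[key.strip()] = value.strip()
    return settings


line = ("Steps: 17, Sampler: Euler, CFG scale: 5, Seed: 1097775045, "
        "Size: 480x680, Model hash: 8d4fa7988b, Clip skip: 2, Version: v1.4.1")
settings = parse_generation_settings(line)
width, height = (int(v) for v in settings["Size"].split("x"))
print(settings["Sampler"], settings["Steps"], width, height)
```

The values are kept as strings so that the caller decides which ones to convert to numbers.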

|

Krelyshy/Heavy

|

Krelyshy

| 2023-07-13T08:48:28Z | 0 | 0 | null |

[

"en",

"region:us"

] | null | 2023-07-12T20:34:24Z |

---

language:

- en

---

# Heavy (Misha) - Team Fortress 2 [RVC V2] [305 Epochs]

Created by @Krelyshy on Discord, use freely.

Download: https://huggingface.co/Krelyshy/Heavy/resolve/main/heavy-krel.zip

Backup: https://drive.google.com/file/d/1osCZrtcx0Gtc-8nthZ6L1Pm5nRMi8kxk/view?usp=drive_link

|

daxiboy/vit-base-patch16-224-finetuned-flower

|

daxiboy

| 2023-07-13T08:47:12Z | 165 | 0 |

transformers

|

[

"transformers",

"pytorch",

"vit",

"image-classification",

"generated_from_trainer",

"dataset:imagefolder",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2023-07-13T08:35:53Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imagefolder

model-index:

- name: vit-base-patch16-224-finetuned-flower

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# vit-base-patch16-224-finetuned-flower

This model is a fine-tuned version of [google/vit-base-patch16-224](https://huggingface.co/google/vit-base-patch16-224) on the imagefolder dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

### Framework versions

- Transformers 4.24.0

- Pytorch 2.0.1+cu118

- Datasets 2.7.1

- Tokenizers 0.13.3

|

gabrielgme/falcon-7b-spider-with-schema

|

gabrielgme

| 2023-07-13T08:44:42Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-12T13:21:52Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float16

### Framework versions

- PEFT 0.4.0.dev0

|

jordiclive/falcon-40b-lora-sft-stage2-1.1k

|

jordiclive

| 2023-07-13T08:35:07Z | 16 | 0 |

transformers

|

[

"transformers",

"pytorch",

"RefinedWeb",

"text-generation",

"sft",

"custom_code",

"en",

"dataset:OpenAssistant/oasst1",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-07-12T17:24:51Z |

---

license: mit

datasets:

- OpenAssistant/oasst1

language:

- en

tags:

- sft

pipeline_tag: text-generation

widget:

- text: >-

<|prompter|>What is a meme, and what's the history behind this

word?<|endoftext|><|assistant|>

- text: <|prompter|>What's the Earth total population<|endoftext|><|assistant|>

- text: <|prompter|>Write a story about future of AI development<|endoftext|><|assistant|>

---

# Load Merged Model (Recommended, identical configuration to a fine-tuned model)

```
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer, GenerationConfig

repo_id = "jordiclive/falcon-40b-lora-sft-stage2-1.1k"
dtype = torch.bfloat16

tokenizer = AutoTokenizer.from_pretrained(repo_id)
model = AutoModelForCausalLM.from_pretrained(
    repo_id,
    torch_dtype=dtype,
    trust_remote_code=True,
)
```

## Model Details

- **Developed** as part of the OpenAssistant Project

- **Model type:** LoRA (PEFT)

- **Language:** English, German, Spanish, French (and limited capabilities in Italian, Portuguese, Polish, Dutch, Romanian, Czech, Swedish)

- **Finetuned from:** [tiiuae/falcon-40b](https://huggingface.co/tiiuae/falcon-40b)

- **Model type:** Causal decoder-only transformer language model

- **Weights & Biases:** [Training log1](https://wandb.ai/open-assistant/public-sft/runs/q0q9lce4)

[Training log2](https://wandb.ai/open-assistant/public-sft/runs/qqok9ru2?workspace=user-jordanclive)

# LoRA Adapter for Falcon 40B trained on oasst-top1

This repo contains a **Falcon 40B** LoRA fine-tuned model and the low-rank adapter fit on datasets that are part of the OpenAssistant project.

This version of the weights was trained with the following hyperparameters:

SFT 1

- Epochs: 2

- Batch size: 128

- Max Length: 2048

- Learning rate: 1e-4

- Lora _r_: 64

- Lora Alpha: 16

- Lora target modules: ["dense_4h_to_h", "dense", "query_key_value", "dense_h_to_4h"]

SFT2

- Epochs: 10

- Batch size: 128
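The rank and alpha values above determine how strongly the low-rank update is weighted and how many parameters each adapted matrix adds. The sketch below uses the standard LoRA formulation, in which the update `B @ A` is scaled by `alpha / r`; the layer dimensions are illustrative placeholders and are not taken from the card.

```python
def lora_scaling(lora_alpha: float, r: int) -> float:
    """Scale applied to the low-rank update B @ A in standard LoRA."""
    return lora_alpha / r


def lora_extra_params(d_in: int, d_out: int, r: int) -> int:
    """Parameters added per adapted weight: A is (r x d_in), B is (d_out x r)."""
    return r * (d_in + d_out)


print(lora_scaling(lora_alpha=16, r=64))
# Illustrative layer size only; substitute the real dimensions of each target module.
print(lora_extra_params(d_in=1024, d_out=1024, r=64))
```

With alpha smaller than the rank, the update is damped rather than amplified, which is a common choice when the rank is large.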

The model was trained with flash attention, gradient checkpointing, and DeepSpeed stage 3 on 8 x A100 80GB GPUs.

Dataset:

SFT1:

```
- oa_leet10k:
    val_split: 0.05
    max_val_set: 250
- cmu_wiki_qa:
    val_split: 0.05
- joke:
    val_split: 0.05
- webgpt:
    val_split: 0.05
    max_val_set: 250
- alpaca_gpt4:
    val_split: 0.025
    max_val_set: 250
- gpteacher_roleplay:
    val_split: 0.05
- wizardlm_70k:
    val_split: 0.05
    max_val_set: 500
- poem_instructions:
    val_split: 0.025
- tell_a_joke:
    val_split: 0.05
    max_val_set: 250
- gpt4all:
    val_split: 0.01
    max_val_set: 1000
- minimath:
    val_split: 0.05
- humaneval_mbpp_codegen_qa:
    val_split: 0.05
- humaneval_mbpp_testgen_qa:
    val_split: 0.05
- dolly15k:
    val_split: 0.05
    max_val_set: 300
- recipes:
    val_split: 0.05
- code_alpaca:
    val_split: 0.05
    max_val_set: 250
- vicuna:
    fraction: 0.5
    val_split: 0.025
    max_val_set: 250
- oa_wiki_qa_bart_10000row:
    val_split: 0.05
    max_val_set: 250
- grade_school_math_instructions:
    val_split: 0.05
```

SFT2:

```
- oasst_export:
    lang: "bg,ca,cs,da,de,en,es,fr,hr,hu,it,nl,pl,pt,ro,ru,sl,sr,sv,uk" # sft-8.0
    input_file_path: 2023-05-06_OASST_labels.jsonl.gz
    val_split: 0.05
    top_k: 1
- lima:
    val_split: 0.05
    max_val_set: 50
```

## Prompting

Two special tokens are used to mark the beginning of user and assistant turns:

`<|prompter|>` and `<|assistant|>`. Each turn ends with a `<|endoftext|>` token.

Input prompt example:

```

<|prompter|>What is a meme, and what's the history behind this word?<|endoftext|><|assistant|>

```

The input ends with the `<|assistant|>` token to signal that the model should

start generating the assistant reply.
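The prompt format described above can be assembled with a small helper. This is a minimal sketch: the multi-turn concatenation is an assumption extrapolated from the single-turn example, `build_prompt` is a hypothetical name, and real code should take the end-of-sequence string from `tokenizer.eos_token` rather than hard-coding it.

```python
from typing import List, Tuple

EOS = "<|endoftext|>"  # assumed to match tokenizer.eos_token


def build_prompt(turns: List[Tuple[str, str]]) -> str:
    """Join (role, text) turns and leave an open assistant turn at the end."""
    parts = []
    for role, text in turns:
        if role not in ("prompter", "assistant"):
            raise ValueError(f"unknown role: {role!r}")
        parts.append(f"<|{role}|>{text}{EOS}")
    parts.append("<|assistant|>")  # signals that the model should reply next
    return "".join(parts)


print(build_prompt([("prompter", "What is a meme, and what's the history behind this word?")]))
```

For a single user turn this reproduces the input prompt example shown above.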

# Example Inference code (Prompt Template)

```
# Continues from the loading snippet above (uses torch, GenerationConfig, tokenizer, model, dtype).
# Assumption: run on a GPU when one is available; the original snippet left `device` undefined.
device = "cuda" if torch.cuda.is_available() else "cpu"

model = model.to(device)
if dtype == torch.float16:
    model = model.half()

# Choose Generation parameters
generation_config = GenerationConfig(
    temperature=0.1,
    top_p=0.75,
    top_k=40,
    num_beams=4,
)


def format_system_prompt(prompt, eos_token=tokenizer.eos_token):
    return "{}{}{}{}".format("<|prompter|>", prompt, eos_token, "<|assistant|>")


def generate(prompt, generation_config=generation_config, max_new_tokens=2048, device=device):
    prompt = format_system_prompt(prompt, eos_token=tokenizer.eos_token)  # OpenAssistant Prompt Format expected
    input_ids = tokenizer(prompt, return_tensors="pt").input_ids.to(device)
    with torch.no_grad():
        generation_output = model.generate(
            input_ids=input_ids,
            generation_config=generation_config,
            return_dict_in_generate=True,
            output_scores=True,
            max_new_tokens=max_new_tokens,
            eos_token_id=tokenizer.eos_token_id,
        )
    s = generation_output.sequences[0]
    output = tokenizer.decode(s)
    print("Text generated:")
    print(output)
    return output
```

## LoRA weights

If you want to use the LoRA weights separately, several special token embeddings also need to be added.

```
base_model_id = "tiiuae/falcon-40b"

import torch
import transformers
from huggingface_hub import hf_hub_download
from peft import PeftModel


def add_embeddings(model, embed_path, tokenizer):
    old_embeddings = model.get_input_embeddings()
    old_num_tokens, old_embedding_dim = old_embeddings.weight.size()
    new_embeddings = torch.nn.Embedding(old_num_tokens, old_embedding_dim)
    new_embeddings.to(old_embeddings.weight.device, dtype=old_embeddings.weight.dtype)
    model._init_weights(new_embeddings)
    embed_weights = torch.load(embed_path, map_location=old_embeddings.weight.device)
    vocab_size = tokenizer.vocab_size
    new_embeddings.weight.data[:vocab_size, :] = old_embeddings.weight.data[:vocab_size, :]
    new_embeddings.weight.data[vocab_size : vocab_size + embed_weights.shape[0], :] = embed_weights.to(
        new_embeddings.weight.dtype
    ).to(new_embeddings.weight.device)
    model.set_input_embeddings(new_embeddings)
    model.tie_weights()


def load_peft_model(model, peft_model_path, tokenizer):
    embed_weights = hf_hub_download(peft_model_path, "extra_embeddings.pt")
    model.resize_token_embeddings(tokenizer.vocab_size + torch.load(embed_weights).shape[0])
    model.config.eos_token_id = tokenizer.eos_token_id
    model.config.bos_token_id = tokenizer.bos_token_id
    model.config.pad_token_id = tokenizer.pad_token_id
    model = PeftModel.from_pretrained(
        model,
        model_id=peft_model_path,
        torch_dtype=model.dtype,
    )
    model.eos_token_id = tokenizer.eos_token_id
    add_embeddings(model, embed_weights, tokenizer)
    return model


def load_lora_model(base_model_id, tokenizer, device, dtype):
    model = transformers.AutoModelForCausalLM.from_pretrained(
        base_model_id,
        torch_dtype=dtype,
        trust_remote_code=True,
    )
    model = load_peft_model(model, repo_id, tokenizer)
    model = model.to(device)
    return model


model = load_lora_model(base_model_id=base_model_id, tokenizer=tokenizer, device=device, dtype=dtype)
```

|

HoaAn2003/ppo-Huggy

|

HoaAn2003

| 2023-07-13T08:13:54Z | 0 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-07-13T08:13:06Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is part of the official ML-Agents environments, go to https://huggingface.co/unity
2. Find your model_id: HoaAn2003/ppo-Huggy
3. Select your *.nn / *.onnx file
4. Click on Watch the agent play 👀

|

Trong-Nghia/bert-large-uncased-detect-dep-v2

|

Trong-Nghia

| 2023-07-13T08:06:51Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-13T06:00:18Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: bert-large-uncased-detect-dep-v2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-large-uncased-detect-dep-v2

This model is a fine-tuned version of [bert-large-uncased](https://huggingface.co/bert-large-uncased) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6741

- Accuracy: 0.727

- F1: 0.7976

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-06

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3
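The `linear` scheduler listed above decays the learning rate from its initial value to zero over the course of training. The following is a minimal sketch of that schedule, assuming no warmup steps (the card lists none) and the 4,506 total optimizer steps reported in the training results. `linear_lr` is a hypothetical helper written for illustration, not a Transformers function.

```python
def linear_lr(step: int, base_lr: float = 5e-06, total_steps: int = 4506,
              warmup_steps: int = 0) -> float:
    """Learning rate at an optimizer step under linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Ramp up linearly from 0 to base_lr during warmup (unused when warmup_steps == 0).
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr to 0 over the remaining steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Learning rate at the start and at the end of each of the three epochs.
for step in (0, 1502, 3004, 4506):
    print(step, linear_lr(step))
```

Under these assumptions the rate has already dropped to two thirds of its initial value by the end of the first epoch.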

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1     |
|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|
| 0.6244        | 1.0   | 1502 | 0.5466          | 0.755    | 0.8228 |
| 0.5956        | 2.0   | 3004 | 0.5683          | 0.735    | 0.7988 |
| 0.523         | 3.0   | 4506 | 0.6741          | 0.727    | 0.7976 |
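The summary metrics at the top of this card match the final epoch, while the validation loss was lowest after epoch 1. The short sketch below re-reads the rows of the table and selects the epoch by validation loss. The dataset-size estimate assumes a single device and no gradient accumulation, neither of which the card states.

```python
# Rows copied from the training results table:
# (training_loss, epoch, step, validation_loss, accuracy, f1)
rows = [
    (0.6244, 1.0, 1502, 0.5466, 0.755, 0.8228),
    (0.5956, 2.0, 3004, 0.5683, 0.735, 0.7988),
    (0.523, 3.0, 4506, 0.6741, 0.727, 0.7976),
]

# Pick the row with the lowest validation loss.
best = min(rows, key=lambda r: r[3])
print(f"best epoch by validation loss: {best[1]:.0f}")

# Assumption: one device, no gradient accumulation, so each step consumes one batch.
train_batch_size = 4
steps_per_epoch = rows[0][2]
approx_train_examples = steps_per_epoch * train_batch_size
print(f"approximate training examples: {approx_train_examples}")
```

The example count is an upper bound, since the last batch of an epoch may be partially filled.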

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

haxett333/RL-Reinforce-100TrainEpisodesInsteadof1000

|

haxett333

| 2023-07-13T08:00:13Z | 0 | 0 | null |

[

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-13T08:00:09Z |

---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: RL-Reinforce-100TrainEpisodesInsteadof1000

  results:

  - task:

      type: reinforcement-learning

      name: reinforcement-learning

    dataset:

      name: CartPole-v1

      type: CartPole-v1

    metrics:

    - type: mean_reward

      value: 98.70 +/- 36.77

      name: mean_reward

      verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1**.

To learn how to use this model and train your own, check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction
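The `mean_reward` metric in the metadata is reported as a mean plus or minus a standard deviation over evaluation episodes. The following is a minimal sketch of that summary, assuming a population standard deviation. The episode returns are made-up placeholders, not this model's evaluation data, and `summarize_rewards` is a hypothetical helper.

```python
from statistics import fmean, pstdev


def summarize_rewards(episode_rewards):
    """Return (mean, population standard deviation) of per-episode total rewards."""
    return fmean(episode_rewards), pstdev(episode_rewards)


# Placeholder episode returns for illustration only.
rewards = [60.0, 100.0, 140.0]
mean_reward, std_reward = summarize_rewards(rewards)
print(f"{mean_reward:.2f} +/- {std_reward:.2f}")
```

A large deviation relative to the mean, as in the metadata above, suggests that episode length varies widely between runs.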

|

saeedehj/led-base-finetune-cnn

|

saeedehj

| 2023-07-13T07:50:12Z | 34 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"led",

"text2text-generation",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-07-12T22:27:22Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- rouge

model-index:

- name: led-base-16384-finetune-cnn

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# led-base-16384-finetune-cnn

This model is a fine-tuned version of [allenai/led-base-16384](https://huggingface.co/allenai/led-base-16384) on the cnn_dailymail dataset.

It achieves the following results on the evaluation set:

- Loss: 3.2020

- Rouge1: 24.2258

- Rouge2: 9.0151

- Rougel: 19.0336

- Rougelsum: 22.2604

- Gen Len: 20.0
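The Rouge1 score above measures unigram overlap between generated and reference summaries. The following is a simplified sketch of a ROUGE-1 F-measure using lowercase whitespace tokenization. It is an illustration only, not the scorer that produced the numbers on this card, and `rouge1_f` is a hypothetical helper. The card's values appear to be scaled by 100, so the sketch prints its score the same way.

```python
from collections import Counter


def rouge1_f(prediction: str, reference: str) -> float:
    """Unigram-overlap F-measure between a prediction and a reference (simplified)."""
    pred = Counter(prediction.lower().split())
    ref = Counter(reference.lower().split())
    # Clipped overlap: each token counts at most as often as it appears in both texts.
    overlap = sum((pred & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(pred.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


score = rouge1_f("the cat sat on the mat", "the cat lay on a mat")
print(f"{100 * score:.2f}")
```

Production scorers typically add their own tokenization and optional stemming, so their values can differ from this sketch.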

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 20

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:|

| 1.8988 | 1.0 | 2000 | 2.0031 | 25.1709 | 10.0426 | 20.1311 | 23.1639 | 20.0 |

| 1.6038 | 2.0 | 4000 | 2.0314 | 25.0213 | 9.8701 | 19.8987 | 23.0129 | 20.0 |

| 1.3352 | 3.0 | 6000 | 2.1124 | 24.99 | 9.905 | 19.9566 | 23.0973 | 20.0 |