| problem_id (string, lengths 18 to 22) | source (string, 1 value) | task_type (string, 1 value) | in_source_id (string, lengths 13 to 58) | prompt (string, lengths 1.1k to 25.4k) | golden_diff (string, lengths 145 to 5.13k) | verification_info (string, lengths 582 to 39.1k) | num_tokens (int64, 271 to 4.1k) | num_tokens_diff (int64, 47 to 1.02k) |
|---|---|---|---|---|---|---|---|---|

gh_patches_debug_11601 | rasdani/github-patches | git_diff | Parsl__parsl-596 |

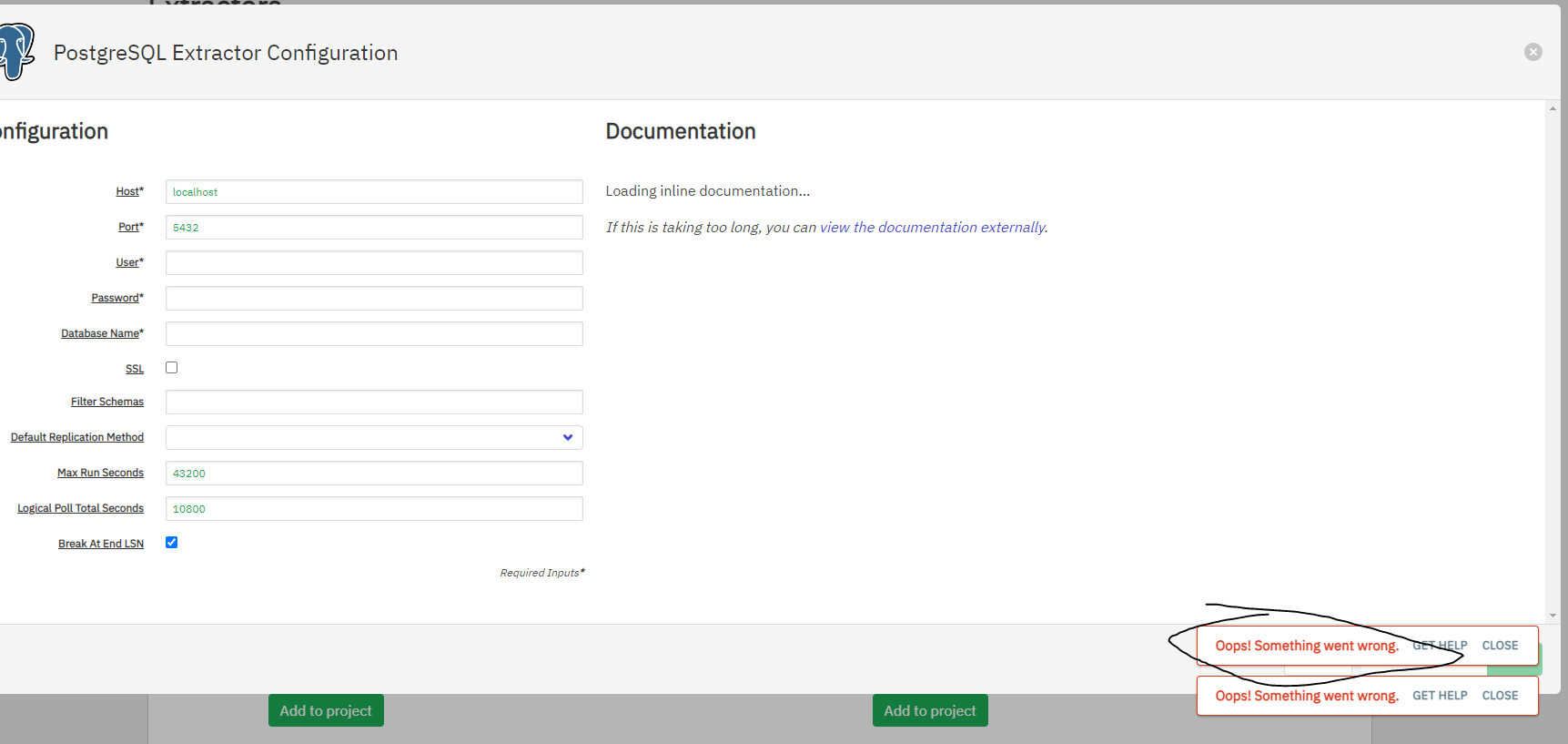

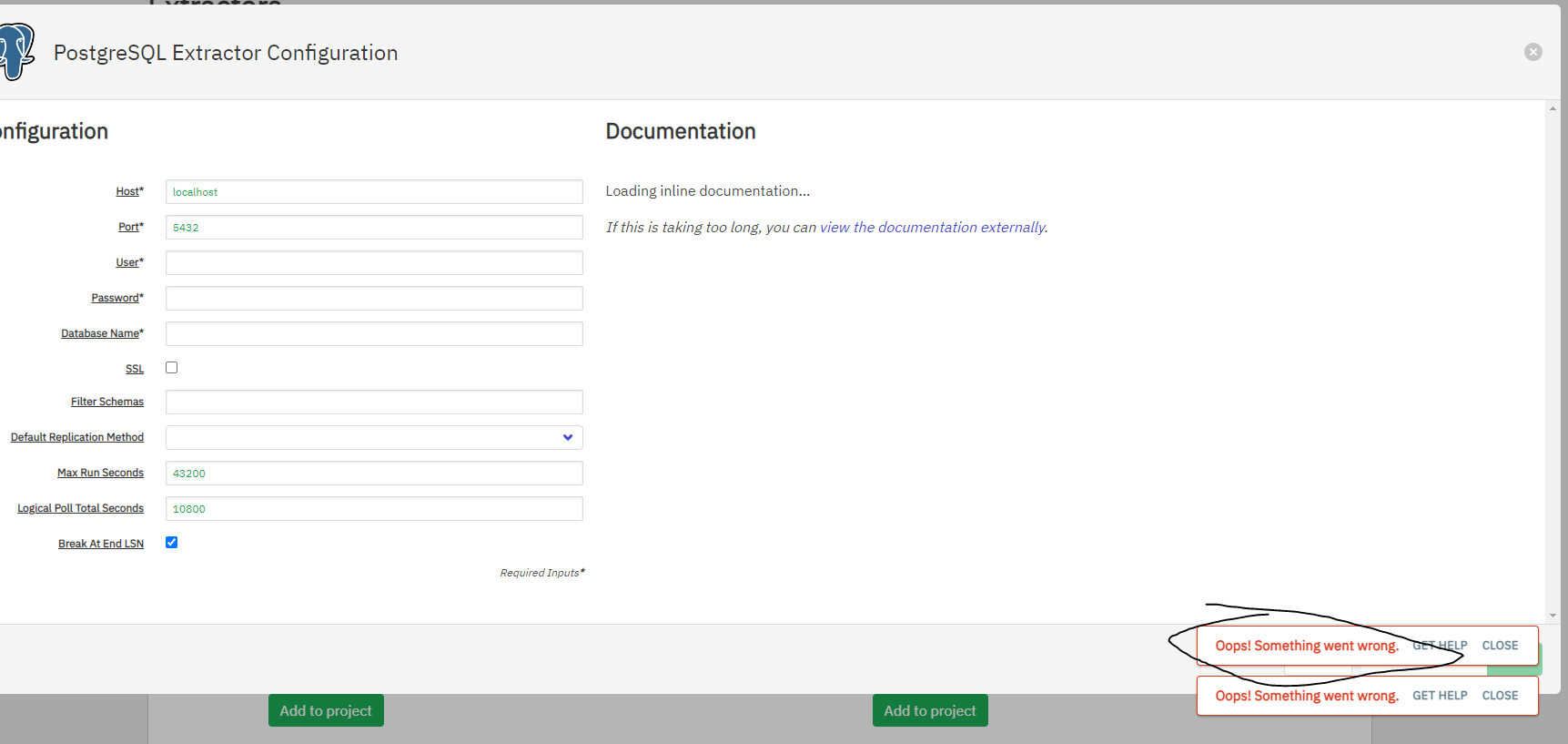

We are currently solving the following issue within our repository. Here is the issue text:

--- BEGIN ISSUE ---

Do not hardcode directory to `rundir` for globus tokens

Currently the token file is hardcoded to `TOKEN_FILE = 'runinfo/.globus.json'`. This will break if the user uses a non-default rundir. I vote not to couple it to the rundir so that it can be re-used between different scripts with different rundirs without requiring re-authentication, for example, `$HOME/.parsl/globus.json`.

--- END ISSUE ---

Below are some code segments, each from a relevant file. One or more of these files may contain bugs.

--- BEGIN FILES ---

Path: `parsl/data_provider/globus.py`

Content:

```

import logging
import json
import globus_sdk


logger = logging.getLogger(__name__)
# Add StreamHandler to print error Globus events to stderr
handler = logging.StreamHandler()
handler.setLevel(logging.WARN)
format_string = "%(asctime)s %(name)s:%(lineno)d [%(levelname)s] %(message)s"
formatter = logging.Formatter(format_string, datefmt='%Y-%m-%d %H:%M:%S')
handler.setFormatter(formatter)
logger.addHandler(handler)


"""
'Parsl Application' OAuth2 client registered with Globus Auth
by [email protected]
"""
CLIENT_ID = '8b8060fd-610e-4a74-885e-1051c71ad473'
REDIRECT_URI = 'https://auth.globus.org/v2/web/auth-code'
SCOPES = ('openid '
          'urn:globus:auth:scope:transfer.api.globus.org:all')

TOKEN_FILE = 'runinfo/.globus.json'


get_input = getattr(__builtins__, 'raw_input', input)


def _load_tokens_from_file(filepath):
    with open(filepath, 'r') as f:
        tokens = json.load(f)
    return tokens


def _save_tokens_to_file(filepath, tokens):
    with open(filepath, 'w') as f:
        json.dump(tokens, f)


def _update_tokens_file_on_refresh(token_response):
    _save_tokens_to_file(TOKEN_FILE, token_response.by_resource_server)


def _do_native_app_authentication(client_id, redirect_uri,
                                  requested_scopes=None):

    client = globus_sdk.NativeAppAuthClient(client_id=client_id)
    client.oauth2_start_flow(
        requested_scopes=requested_scopes,
        redirect_uri=redirect_uri,
        refresh_tokens=True)

    url = client.oauth2_get_authorize_url()
    print('Please visit the following URL to provide authorization: \n{}'.format(url))
    auth_code = get_input('Enter the auth code: ').strip()
    token_response = client.oauth2_exchange_code_for_tokens(auth_code)
    return token_response.by_resource_server


def _get_native_app_authorizer(client_id):
    tokens = None
    try:
        tokens = _load_tokens_from_file(TOKEN_FILE)
    except Exception:
        pass

    if not tokens:
        tokens = _do_native_app_authentication(
            client_id=client_id,
            redirect_uri=REDIRECT_URI,
            requested_scopes=SCOPES)
        try:
            _save_tokens_to_file(TOKEN_FILE, tokens)
        except Exception:
            pass

    transfer_tokens = tokens['transfer.api.globus.org']

    auth_client = globus_sdk.NativeAppAuthClient(client_id=client_id)

    return globus_sdk.RefreshTokenAuthorizer(
        transfer_tokens['refresh_token'],
        auth_client,
        access_token=transfer_tokens['access_token'],
        expires_at=transfer_tokens['expires_at_seconds'],
        on_refresh=_update_tokens_file_on_refresh)


def get_globus():
    Globus.init()
    return Globus()


class Globus(object):
    """
    All communication with the Globus Auth and Globus Transfer services is enclosed
    in the Globus class. In particular, the Globus class is reponsible for:
     - managing an OAuth2 authorizer - getting access and refresh tokens,
       refreshing an access token, storing to and retrieving tokens from
       .globus.json file,
     - submitting file transfers,
     - monitoring transfers.
    """

    authorizer = None

    @classmethod
    def init(cls):
        if cls.authorizer:
            return
        cls.authorizer = _get_native_app_authorizer(CLIENT_ID)

    @classmethod
    def get_authorizer(cls):
        return cls.authorizer

    @classmethod
    def transfer_file(cls, src_ep, dst_ep, src_path, dst_path):
        tc = globus_sdk.TransferClient(authorizer=cls.authorizer)
        td = globus_sdk.TransferData(tc, src_ep, dst_ep)
        td.add_item(src_path, dst_path)
        try:
            task = tc.submit_transfer(td)
        except Exception as e:
            raise Exception('Globus transfer from {}{} to {}{} failed due to error: {}'.format(
                src_ep, src_path, dst_ep, dst_path, e))

        last_event_time = None
        """
        A Globus transfer job (task) can be in one of the three states: ACTIVE, SUCCEEDED, FAILED.
        Parsl every 20 seconds polls a status of the transfer job (task) from the Globus Transfer service,
        with 60 second timeout limit. If the task is ACTIVE after time runs out 'task_wait' returns False,
        and True otherwise.
        """
        while not tc.task_wait(task['task_id'], 60, 15):
            task = tc.get_task(task['task_id'])
            # Get the last error Globus event
            events = tc.task_event_list(task['task_id'], num_results=1, filter='is_error:1')
            event = events.data[0]
            # Print the error event to stderr and Parsl file log if it was not yet printed
            if event['time'] != last_event_time:
                last_event_time = event['time']
                logger.warn('Non-critical Globus Transfer error event for globus://{}{}: "{}" at {}. Retrying...'.format(
                    src_ep, src_path, event['description'], event['time']))
                logger.debug('Globus Transfer error details: {}'.format(event['details']))

        """
        The Globus transfer job (task) has been terminated (is not ACTIVE). Check if the transfer
        SUCCEEDED or FAILED.
        """
        task = tc.get_task(task['task_id'])
        if task['status'] == 'SUCCEEDED':
            logger.debug('Globus transfer {}, from {}{} to {}{} succeeded'.format(
                task['task_id'], src_ep, src_path, dst_ep, dst_path))
        else:
            logger.debug('Globus Transfer task: {}'.format(task))
            events = tc.task_event_list(task['task_id'], num_results=1, filter='is_error:1')
            event = events.data[0]
            raise Exception('Globus transfer {}, from {}{} to {}{} failed due to error: "{}"'.format(
                task['task_id'], src_ep, src_path, dst_ep, dst_path, event['details']))

```

--- END FILES ---
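The polling loop in `transfer_file` above waits in bounded intervals and reports an error event only when its timestamp changes. A minimal sketch of that pattern follows, with the Globus client replaced by plain callables. The names `wait_and_report`, `wait`, `latest_error`, and `report` are hypothetical and not part of Parsl or `globus_sdk`. Unlike the original code, which indexes `events.data[0]` directly, this sketch also tolerates the case where no error event exists yet; that guard is an assumption, not behavior of the file shown. Note also that the docstring mentions a 20 second poll while the call passes `15` as its third argument.

```python
def wait_and_report(wait, latest_error, report):
    """Block until `wait()` returns True, reporting each new error event once.

    All three parameters are hypothetical stand-ins:
      wait         -- callable returning True once the task is no longer active
      latest_error -- callable returning the most recent error event (dict) or None
      report       -- callable invoked with each error event not seen before
    """
    last_event_time = None
    while not wait():
        event = latest_error()
        # Skip events already reported, identified by their timestamp
        if event is not None and event['time'] != last_event_time:
            last_event_time = event['time']
            report(event)


if __name__ == '__main__':
    # Simulated service: two timeouts with the same error event, then completion
    results = iter([False, False, True])
    seen = []
    wait_and_report(
        wait=lambda: next(results),
        latest_error=lambda: {'time': 't0', 'description': 'endpoint busy'},
        report=seen.append,
    )
    print(len(seen))
```

Passing the collaborators as callables keeps the deduplication logic testable without network access.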

Please first localize the bug based on the issue statement, and then generate a patch according to the `git diff` format fenced by three backticks.

Here is an example:

```diff

diff --git a/examples/server_async.py b/examples/server_async.py
--- a/examples/server_async.py
+++ b/examples/server_async.py
@@ -313,4 +313,4 @@
 
 
 if __name__ == "__main__":
-    asyncio.run(run_async_server("."), debug=True)
+    asyncio.run(run_async_server(), debug=True)
diff --git a/examples/server_sync.py b/examples/server_sync.py
--- a/examples/server_sync.py
+++ b/examples/server_sync.py
@@ -313,5 +313,5 @@
 
 
 if __name__ == "__main__":
-    server = run_sync_server(".")
+    server = run_sync_server()
     server.shutdown()

```

|

diff --git a/parsl/data_provider/globus.py b/parsl/data_provider/globus.py

--- a/parsl/data_provider/globus.py

+++ b/parsl/data_provider/globus.py

@@ -1,6 +1,7 @@

import logging

import json

import globus_sdk

+import os

logger = logging.getLogger(__name__)

@@ -22,8 +23,10 @@

SCOPES = ('openid '

'urn:globus:auth:scope:transfer.api.globus.org:all')

-TOKEN_FILE = 'runinfo/.globus.json'

-

+token_path = os.path.join(os.path.expanduser('~'), '.parsl')

+if not os.path.isdir(token_path):

+ os.mkdir(token_path)

+TOKEN_FILE = os.path.join(token_path, '.globus.json')

get_input = getattr(__builtins__, 'raw_input', input)
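The patch above moves the token file under the user's home directory and creates the directory at import time. A minimal standalone sketch of the same idea is shown here, assuming Python 3. The helper name `default_token_file` and its parameters are hypothetical and do not exist in Parsl. It also swaps the patch's `os.path.isdir` plus `os.mkdir` pair for `os.makedirs(..., exist_ok=True)`, which is a different technique from the one in the patch.

```python
import os
import tempfile


def default_token_file(home=None, dirname='.parsl', filename='.globus.json'):
    """Return a token file path under the home directory, creating the directory if needed.

    `default_token_file`, `dirname`, and `filename` are hypothetical names used
    for illustration only.
    """
    base = home if home is not None else os.path.expanduser('~')
    token_dir = os.path.join(base, dirname)
    # exist_ok=True avoids the check-then-create race of isdir() followed by mkdir()
    os.makedirs(token_dir, exist_ok=True)
    return os.path.join(token_dir, filename)


if __name__ == '__main__':
    # Use a temporary directory as a stand-in for $HOME so nothing is written there
    with tempfile.TemporaryDirectory() as fake_home:
        print(default_token_file(home=fake_home))
```

Computing the path in a function rather than at module import would also let callers override the location, though the patch itself keeps a module-level `TOKEN_FILE` constant.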

|

{"golden_diff": "diff --git a/parsl/data_provider/globus.py b/parsl/data_provider/globus.py\n--- a/parsl/data_provider/globus.py\n+++ b/parsl/data_provider/globus.py\n@@ -1,6 +1,7 @@\n import logging\n import json\n import globus_sdk\n+import os\n \n \n logger = logging.getLogger(__name__)\n@@ -22,8 +23,10 @@\n SCOPES = ('openid '\n 'urn:globus:auth:scope:transfer.api.globus.org:all')\n \n-TOKEN_FILE = 'runinfo/.globus.json'\n-\n+token_path = os.path.join(os.path.expanduser('~'), '.parsl')\n+if not os.path.isdir(token_path):\n+ os.mkdir(token_path)\n+TOKEN_FILE = os.path.join(token_path, '.globus.json')\n \n get_input = getattr(__builtins__, 'raw_input', input)\n", "issue": "Do not hardcode directory to `rundir` for globus tokens\nCurrently the token file is hardcoded to `TOKEN_FILE = 'runinfo/.globus.json'`. This will break if the user uses a non-default rundir. I vote not to couple it to the rundir so that it can be re-used between different scripts with different rundirs without requiring re-authentication, for example, `$HOME/.parsl/globus.json`.\nDo not hardcode directory to `rundir` for globus tokens\nCurrently the token file is hardcoded to `TOKEN_FILE = 'runinfo/.globus.json'`. This will break if the user uses a non-default rundir. 
I vote not to couple it to the rundir so that it can be re-used between different scripts with different rundirs without requiring re-authentication, for example, `$HOME/.parsl/globus.json`.\n", "before_files": [{"content": "import logging\nimport json\nimport globus_sdk\n\n\nlogger = logging.getLogger(__name__)\n# Add StreamHandler to print error Globus events to stderr\nhandler = logging.StreamHandler()\nhandler.setLevel(logging.WARN)\nformat_string = \"%(asctime)s %(name)s:%(lineno)d [%(levelname)s] %(message)s\"\nformatter = logging.Formatter(format_string, datefmt='%Y-%m-%d %H:%M:%S')\nhandler.setFormatter(formatter)\nlogger.addHandler(handler)\n\n\n\"\"\"\n'Parsl Application' OAuth2 client registered with Globus Auth\nby [email protected]\n\"\"\"\nCLIENT_ID = '8b8060fd-610e-4a74-885e-1051c71ad473'\nREDIRECT_URI = 'https://auth.globus.org/v2/web/auth-code'\nSCOPES = ('openid '\n 'urn:globus:auth:scope:transfer.api.globus.org:all')\n\nTOKEN_FILE = 'runinfo/.globus.json'\n\n\nget_input = getattr(__builtins__, 'raw_input', input)\n\n\ndef _load_tokens_from_file(filepath):\n with open(filepath, 'r') as f:\n tokens = json.load(f)\n return tokens\n\n\ndef _save_tokens_to_file(filepath, tokens):\n with open(filepath, 'w') as f:\n json.dump(tokens, f)\n\n\ndef _update_tokens_file_on_refresh(token_response):\n _save_tokens_to_file(TOKEN_FILE, token_response.by_resource_server)\n\n\ndef _do_native_app_authentication(client_id, redirect_uri,\n requested_scopes=None):\n\n client = globus_sdk.NativeAppAuthClient(client_id=client_id)\n client.oauth2_start_flow(\n requested_scopes=requested_scopes,\n redirect_uri=redirect_uri,\n refresh_tokens=True)\n\n url = client.oauth2_get_authorize_url()\n print('Please visit the following URL to provide authorization: \\n{}'.format(url))\n auth_code = get_input('Enter the auth code: ').strip()\n token_response = client.oauth2_exchange_code_for_tokens(auth_code)\n return token_response.by_resource_server\n\n\ndef 
_get_native_app_authorizer(client_id):\n tokens = None\n try:\n tokens = _load_tokens_from_file(TOKEN_FILE)\n except Exception:\n pass\n\n if not tokens:\n tokens = _do_native_app_authentication(\n client_id=client_id,\n redirect_uri=REDIRECT_URI,\n requested_scopes=SCOPES)\n try:\n _save_tokens_to_file(TOKEN_FILE, tokens)\n except Exception:\n pass\n\n transfer_tokens = tokens['transfer.api.globus.org']\n\n auth_client = globus_sdk.NativeAppAuthClient(client_id=client_id)\n\n return globus_sdk.RefreshTokenAuthorizer(\n transfer_tokens['refresh_token'],\n auth_client,\n access_token=transfer_tokens['access_token'],\n expires_at=transfer_tokens['expires_at_seconds'],\n on_refresh=_update_tokens_file_on_refresh)\n\n\ndef get_globus():\n Globus.init()\n return Globus()\n\n\nclass Globus(object):\n \"\"\"\n All communication with the Globus Auth and Globus Transfer services is enclosed\n in the Globus class. In particular, the Globus class is reponsible for:\n - managing an OAuth2 authorizer - getting access and refresh tokens,\n refreshing an access token, storing to and retrieving tokens from\n .globus.json file,\n - submitting file transfers,\n - monitoring transfers.\n \"\"\"\n\n authorizer = None\n\n @classmethod\n def init(cls):\n if cls.authorizer:\n return\n cls.authorizer = _get_native_app_authorizer(CLIENT_ID)\n\n @classmethod\n def get_authorizer(cls):\n return cls.authorizer\n\n @classmethod\n def transfer_file(cls, src_ep, dst_ep, src_path, dst_path):\n tc = globus_sdk.TransferClient(authorizer=cls.authorizer)\n td = globus_sdk.TransferData(tc, src_ep, dst_ep)\n td.add_item(src_path, dst_path)\n try:\n task = tc.submit_transfer(td)\n except Exception as e:\n raise Exception('Globus transfer from {}{} to {}{} failed due to error: {}'.format(\n src_ep, src_path, dst_ep, dst_path, e))\n\n last_event_time = None\n \"\"\"\n A Globus transfer job (task) can be in one of the three states: ACTIVE, SUCCEEDED, FAILED.\n Parsl every 20 seconds polls a status of the 
transfer job (task) from the Globus Transfer service,\n with 60 second timeout limit. If the task is ACTIVE after time runs out 'task_wait' returns False,\n and True otherwise.\n \"\"\"\n while not tc.task_wait(task['task_id'], 60, 15):\n task = tc.get_task(task['task_id'])\n # Get the last error Globus event\n events = tc.task_event_list(task['task_id'], num_results=1, filter='is_error:1')\n event = events.data[0]\n # Print the error event to stderr and Parsl file log if it was not yet printed\n if event['time'] != last_event_time:\n last_event_time = event['time']\n logger.warn('Non-critical Globus Transfer error event for globus://{}{}: \"{}\" at {}. Retrying...'.format(\n src_ep, src_path, event['description'], event['time']))\n logger.debug('Globus Transfer error details: {}'.format(event['details']))\n\n \"\"\"\n The Globus transfer job (task) has been terminated (is not ACTIVE). Check if the transfer\n SUCCEEDED or FAILED.\n \"\"\"\n task = tc.get_task(task['task_id'])\n if task['status'] == 'SUCCEEDED':\n logger.debug('Globus transfer {}, from {}{} to {}{} succeeded'.format(\n task['task_id'], src_ep, src_path, dst_ep, dst_path))\n else:\n logger.debug('Globus Transfer task: {}'.format(task))\n events = tc.task_event_list(task['task_id'], num_results=1, filter='is_error:1')\n event = events.data[0]\n raise Exception('Globus transfer {}, from {}{} to {}{} failed due to error: \"{}\"'.format(\n task['task_id'], src_ep, src_path, dst_ep, dst_path, event['details']))\n", "path": "parsl/data_provider/globus.py"}], "after_files": [{"content": "import logging\nimport json\nimport globus_sdk\nimport os\n\n\nlogger = logging.getLogger(__name__)\n# Add StreamHandler to print error Globus events to stderr\nhandler = logging.StreamHandler()\nhandler.setLevel(logging.WARN)\nformat_string = \"%(asctime)s %(name)s:%(lineno)d [%(levelname)s] %(message)s\"\nformatter = logging.Formatter(format_string, datefmt='%Y-%m-%d 
%H:%M:%S')\nhandler.setFormatter(formatter)\nlogger.addHandler(handler)\n\n\n\"\"\"\n'Parsl Application' OAuth2 client registered with Globus Auth\nby [email protected]\n\"\"\"\nCLIENT_ID = '8b8060fd-610e-4a74-885e-1051c71ad473'\nREDIRECT_URI = 'https://auth.globus.org/v2/web/auth-code'\nSCOPES = ('openid '\n 'urn:globus:auth:scope:transfer.api.globus.org:all')\n\ntoken_path = os.path.join(os.path.expanduser('~'), '.parsl')\nif not os.path.isdir(token_path):\n os.mkdir(token_path)\nTOKEN_FILE = os.path.join(token_path, '.globus.json')\n\nget_input = getattr(__builtins__, 'raw_input', input)\n\n\ndef _load_tokens_from_file(filepath):\n with open(filepath, 'r') as f:\n tokens = json.load(f)\n return tokens\n\n\ndef _save_tokens_to_file(filepath, tokens):\n with open(filepath, 'w') as f:\n json.dump(tokens, f)\n\n\ndef _update_tokens_file_on_refresh(token_response):\n _save_tokens_to_file(TOKEN_FILE, token_response.by_resource_server)\n\n\ndef _do_native_app_authentication(client_id, redirect_uri,\n requested_scopes=None):\n\n client = globus_sdk.NativeAppAuthClient(client_id=client_id)\n client.oauth2_start_flow(\n requested_scopes=requested_scopes,\n redirect_uri=redirect_uri,\n refresh_tokens=True)\n\n url = client.oauth2_get_authorize_url()\n print('Please visit the following URL to provide authorization: \\n{}'.format(url))\n auth_code = get_input('Enter the auth code: ').strip()\n token_response = client.oauth2_exchange_code_for_tokens(auth_code)\n return token_response.by_resource_server\n\n\ndef _get_native_app_authorizer(client_id):\n tokens = None\n try:\n tokens = _load_tokens_from_file(TOKEN_FILE)\n except Exception:\n pass\n\n if not tokens:\n tokens = _do_native_app_authentication(\n client_id=client_id,\n redirect_uri=REDIRECT_URI,\n requested_scopes=SCOPES)\n try:\n _save_tokens_to_file(TOKEN_FILE, tokens)\n except Exception:\n pass\n\n transfer_tokens = tokens['transfer.api.globus.org']\n\n auth_client = 
globus_sdk.NativeAppAuthClient(client_id=client_id)\n\n return globus_sdk.RefreshTokenAuthorizer(\n transfer_tokens['refresh_token'],\n auth_client,\n access_token=transfer_tokens['access_token'],\n expires_at=transfer_tokens['expires_at_seconds'],\n on_refresh=_update_tokens_file_on_refresh)\n\n\ndef get_globus():\n Globus.init()\n return Globus()\n\n\nclass Globus(object):\n \"\"\"\n All communication with the Globus Auth and Globus Transfer services is enclosed\n in the Globus class. In particular, the Globus class is reponsible for:\n - managing an OAuth2 authorizer - getting access and refresh tokens,\n refreshing an access token, storing to and retrieving tokens from\n .globus.json file,\n - submitting file transfers,\n - monitoring transfers.\n \"\"\"\n\n authorizer = None\n\n @classmethod\n def init(cls):\n if cls.authorizer:\n return\n cls.authorizer = _get_native_app_authorizer(CLIENT_ID)\n\n @classmethod\n def get_authorizer(cls):\n return cls.authorizer\n\n @classmethod\n def transfer_file(cls, src_ep, dst_ep, src_path, dst_path):\n tc = globus_sdk.TransferClient(authorizer=cls.authorizer)\n td = globus_sdk.TransferData(tc, src_ep, dst_ep)\n td.add_item(src_path, dst_path)\n try:\n task = tc.submit_transfer(td)\n except Exception as e:\n raise Exception('Globus transfer from {}{} to {}{} failed due to error: {}'.format(\n src_ep, src_path, dst_ep, dst_path, e))\n\n last_event_time = None\n \"\"\"\n A Globus transfer job (task) can be in one of the three states: ACTIVE, SUCCEEDED, FAILED.\n Parsl every 20 seconds polls a status of the transfer job (task) from the Globus Transfer service,\n with 60 second timeout limit. 
If the task is ACTIVE after time runs out 'task_wait' returns False,\n and True otherwise.\n \"\"\"\n while not tc.task_wait(task['task_id'], 60, 15):\n task = tc.get_task(task['task_id'])\n # Get the last error Globus event\n events = tc.task_event_list(task['task_id'], num_results=1, filter='is_error:1')\n event = events.data[0]\n # Print the error event to stderr and Parsl file log if it was not yet printed\n if event['time'] != last_event_time:\n last_event_time = event['time']\n logger.warn('Non-critical Globus Transfer error event for globus://{}{}: \"{}\" at {}. Retrying...'.format(\n src_ep, src_path, event['description'], event['time']))\n logger.debug('Globus Transfer error details: {}'.format(event['details']))\n\n \"\"\"\n The Globus transfer job (task) has been terminated (is not ACTIVE). Check if the transfer\n SUCCEEDED or FAILED.\n \"\"\"\n task = tc.get_task(task['task_id'])\n if task['status'] == 'SUCCEEDED':\n logger.debug('Globus transfer {}, from {}{} to {}{} succeeded'.format(\n task['task_id'], src_ep, src_path, dst_ep, dst_path))\n else:\n logger.debug('Globus Transfer task: {}'.format(task))\n events = tc.task_event_list(task['task_id'], num_results=1, filter='is_error:1')\n event = events.data[0]\n raise Exception('Globus transfer {}, from {}{} to {}{} failed due to error: \"{}\"'.format(\n task['task_id'], src_ep, src_path, dst_ep, dst_path, event['details']))\n", "path": "parsl/data_provider/globus.py"}]}

| 2,210 | 197 |

gh_patches_debug_20015 | rasdani/github-patches | git_diff | easybuilders__easybuild-easyblocks-1660 |

We are currently solving the following issue within our repository. Here is the issue text:

--- BEGIN ISSUE ---

Perl-5.28.0-GCCcore-7.3.0.eb not building due to perldoc sanity checks failing

```

$ eb --version

This is EasyBuild 3.8.1 (framework: 3.8.1, easyblocks: 3.8.1)

```

From [my build log](https://www.dropbox.com/s/0rmwuqpju9kfqiy/install_foss_2018b_toolchain_job.sge.o3588049.gz?dl=0) for Perl-5.28.0-GCCcore-7.3.0.eb the following looks like a major problem:

```

/usr/local/community/rse/EasyBuild/software/Perl/5.28.0-GCCcore-7.3.0/lib/perl5/5.28.0/xCouldn't copy cpan/podlators/blib/script/pod2man to /usr/local/scripts/pod2man: No such file or directory

```

I.e., it looks like it is trying to install stuff in the wrong place.

@boegel thinks the problem is that the Perl install process finds a `/usr/local/scripts` directory in my environment and incorrectly assumes that's where I'd like it to install scripts.

[More background info](https://openpkg-dev.openpkg.narkive.com/bGejYSaD/bugdb-perl-possible-build-problem-copying-into-usr-local-scripts-pr-133) (from 16 years ago!)

Suggested fix: add the following to the Perl easyconfig (not tested yet):

```python

configopts = "-Dscriptdirexp=%(installdir)s/bin"

```

NB I've not yet had chance to test this!

--- END ISSUE ---

Below are some code segments, each from a relevant file. One or more of these files may contain bugs.

--- BEGIN FILES ---

Path: `easybuild/easyblocks/p/perl.py`

Content:

```

##
# Copyright 2009-2019 Ghent University
#
# This file is part of EasyBuild,
# originally created by the HPC team of Ghent University (http://ugent.be/hpc/en),
# with support of Ghent University (http://ugent.be/hpc),
# the Flemish Supercomputer Centre (VSC) (https://www.vscentrum.be),
# Flemish Research Foundation (FWO) (http://www.fwo.be/en)
# and the Department of Economy, Science and Innovation (EWI) (http://www.ewi-vlaanderen.be/en).
#
# https://github.com/easybuilders/easybuild
#
# EasyBuild is free software: you can redistribute it and/or modify
# it under the terms of the GNU General Public License as published by
# the Free Software Foundation v2.
#
# EasyBuild is distributed in the hope that it will be useful,
# but WITHOUT ANY WARRANTY; without even the implied warranty of
# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the
# GNU General Public License for more details.
#
# You should have received a copy of the GNU General Public License
# along with EasyBuild. If not, see <http://www.gnu.org/licenses/>.
##
"""
EasyBuild support for Perl, implemented as an easyblock

@author: Jens Timmerman (Ghent University)
@author: Kenneth Hoste (Ghent University)
"""
import os

from easybuild.easyblocks.generic.configuremake import ConfigureMake
from easybuild.framework.easyconfig import CUSTOM
from easybuild.tools.run import run_cmd

# perldoc -lm seems to be the safest way to test if a module is available, based on exit code
EXTS_FILTER_PERL_MODULES = ("perldoc -lm %(ext_name)s ", "")


class EB_Perl(ConfigureMake):
    """Support for building and installing Perl."""

    @staticmethod
    def extra_options():
        """Add extra config options specific to Perl."""
        extra_vars = {
            'use_perl_threads': [True, "Enable use of internal Perl threads via -Dusethreads configure option", CUSTOM],
        }
        return ConfigureMake.extra_options(extra_vars)

    def configure_step(self):
        """
        Configure Perl build: run ./Configure instead of ./configure with some different options
        """
        configopts = [
            self.cfg['configopts'],
            '-Dcc="{0}"'.format(os.getenv('CC')),
            '-Dccflags="{0}"'.format(os.getenv('CFLAGS')),
            '-Dinc_version_list=none',
        ]
        if self.cfg['use_perl_threads']:
            configopts.append('-Dusethreads')

        cmd = './Configure -de %s -Dprefix="%s"' % (' '.join(configopts), self.installdir)
        run_cmd(cmd, log_all=True, simple=True)

    def test_step(self):
        """Test Perl build via 'make test'."""
        # allow escaping with runtest = False
        if self.cfg['runtest'] is None or self.cfg['runtest']:
            if isinstance(self.cfg['runtest'], basestring):
                cmd = "make %s" % self.cfg['runtest']
            else:
                cmd = "make test"

            # specify locale to be used, to avoid that a handful of tests fail
            cmd = "export LC_ALL=C && %s" % cmd

            run_cmd(cmd, log_all=False, log_ok=False, simple=False)

    def prepare_for_extensions(self):
        """
        Set default class and filter for Perl modules
        """
        # build and install additional modules with PerlModule easyblock
        self.cfg['exts_defaultclass'] = "PerlModule"
        self.cfg['exts_filter'] = EXTS_FILTER_PERL_MODULES

    def sanity_check_step(self):
        """Custom sanity check for Perl."""
        majver = self.version.split('.')[0]
        custom_paths = {
            'files': [os.path.join('bin', x) for x in ['perl', 'perldoc']],
            'dirs': ['lib/perl%s/%s' % (majver, self.version), 'man']
        }
        super(EB_Perl, self).sanity_check_step(custom_paths=custom_paths)


def get_major_perl_version():
    """"
    Returns the major verson of the perl binary in the current path
    """
    cmd = "perl -MConfig -e 'print $Config::Config{PERL_API_REVISION}'"
    (perlmajver, _) = run_cmd(cmd, log_all=True, log_output=True, simple=False)
    return perlmajver


def get_site_suffix(tag):
    """
    Returns the suffix for site* (e.g. sitearch, sitelib)
    this will look something like /lib/perl5/site_perl/5.16.3/x86_64-linux-thread-multi
    so, e.g. sitearch without site prefix

    @tag: site tag to use, e.g. 'sitearch', 'sitelib'
    """
    perl_cmd = 'my $a = $Config::Config{"%s"}; $a =~ s/($Config::Config{"siteprefix"})//; print $a' % tag
    cmd = "perl -MConfig -e '%s'" % perl_cmd
    (sitesuffix, _) = run_cmd(cmd, log_all=True, log_output=True, simple=False)
    # obtained value usually contains leading '/', so strip it off
    return sitesuffix.lstrip(os.path.sep)

```

--- END FILES ---

Please first localize the bug based on the issue statement, and then generate a patch according to the `git diff` format fenced by three backticks.

Here is an example:

```diff

diff --git a/examples/server_async.py b/examples/server_async.py
--- a/examples/server_async.py
+++ b/examples/server_async.py
@@ -313,4 +313,4 @@
 
 
 if __name__ == "__main__":
-    asyncio.run(run_async_server("."), debug=True)
+    asyncio.run(run_async_server(), debug=True)
diff --git a/examples/server_sync.py b/examples/server_sync.py
--- a/examples/server_sync.py
+++ b/examples/server_sync.py
@@ -313,5 +313,5 @@
 
 
 if __name__ == "__main__":
-    server = run_sync_server(".")
+    server = run_sync_server()
     server.shutdown()

```

|

diff --git a/easybuild/easyblocks/p/perl.py b/easybuild/easyblocks/p/perl.py

--- a/easybuild/easyblocks/p/perl.py

+++ b/easybuild/easyblocks/p/perl.py

@@ -58,11 +58,19 @@

'-Dcc="{0}"'.format(os.getenv('CC')),

'-Dccflags="{0}"'.format(os.getenv('CFLAGS')),

'-Dinc_version_list=none',

+ '-Dprefix=%(installdir)s',

+ # guarantee that scripts are installed in /bin in the installation directory (and not in a guessed path)

+ # see https://github.com/easybuilders/easybuild-easyblocks/issues/1659

+ '-Dinstallscript=%(installdir)s/bin',

+ '-Dscriptdir=%(installdir)s/bin',

+ '-Dscriptdirexp=%(installdir)s/bin',

]

if self.cfg['use_perl_threads']:

configopts.append('-Dusethreads')

- cmd = './Configure -de %s -Dprefix="%s"' % (' '.join(configopts), self.installdir)

+ configopts = (' '.join(configopts)) % {'installdir': self.installdir}

+

+ cmd = './Configure -de %s' % configopts

run_cmd(cmd, log_all=True, simple=True)

def test_step(self):
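The patch above builds the option list with `%(installdir)s` placeholders and fills them in with a single dictionary substitution after joining. A minimal sketch of that string-building step, detached from EasyBuild, might look as follows. The function `build_configure_cmd` and its parameters are hypothetical; only the `-D` option names are taken from the patch. One caveat, stated as an assumption about this sketch rather than about EasyBuild: because the user-supplied options are joined before substitution, a literal `%` in `extra_opts` would need to be written as `%%`.

```python
def build_configure_cmd(installdir, extra_opts='', use_threads=True):
    """Compose a Perl ./Configure command line that pins script directories.

    Hypothetical helper for illustration; it only builds a string and runs nothing.
    """
    opts = [
        extra_opts,
        '-Dprefix=%(installdir)s',
        # Pin script locations so Configure does not pick up a guessed path
        '-Dinstallscript=%(installdir)s/bin',
        '-Dscriptdir=%(installdir)s/bin',
        '-Dscriptdirexp=%(installdir)s/bin',
    ]
    if use_threads:
        opts.append('-Dusethreads')
    # Drop empty entries, join, then resolve every placeholder in one pass
    joined = ' '.join(opt for opt in opts if opt) % {'installdir': installdir}
    return './Configure -de %s' % joined


if __name__ == '__main__':
    print(build_configure_cmd('/opt/perl/5.28.0'))
```

Resolving the placeholders in one pass keeps each option readable in the list and guarantees that all of them point at the same installation directory.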

|

{"golden_diff": "diff --git a/easybuild/easyblocks/p/perl.py b/easybuild/easyblocks/p/perl.py\n--- a/easybuild/easyblocks/p/perl.py\n+++ b/easybuild/easyblocks/p/perl.py\n@@ -58,11 +58,19 @@\n '-Dcc=\"{0}\"'.format(os.getenv('CC')),\n '-Dccflags=\"{0}\"'.format(os.getenv('CFLAGS')),\n '-Dinc_version_list=none',\n+ '-Dprefix=%(installdir)s',\n+ # guarantee that scripts are installed in /bin in the installation directory (and not in a guessed path)\n+ # see https://github.com/easybuilders/easybuild-easyblocks/issues/1659\n+ '-Dinstallscript=%(installdir)s/bin',\n+ '-Dscriptdir=%(installdir)s/bin',\n+ '-Dscriptdirexp=%(installdir)s/bin',\n ]\n if self.cfg['use_perl_threads']:\n configopts.append('-Dusethreads')\n \n- cmd = './Configure -de %s -Dprefix=\"%s\"' % (' '.join(configopts), self.installdir)\n+ configopts = (' '.join(configopts)) % {'installdir': self.installdir}\n+\n+ cmd = './Configure -de %s' % configopts\n run_cmd(cmd, log_all=True, simple=True)\n \n def test_step(self):\n", "issue": "Perl-5.28.0-GCCcore-7.3.0.eb not building due to perldoc sanity checks failing\n```\r\n$ eb --version\r\nThis is EasyBuild 3.8.1 (framework: 3.8.1, easyblocks: 3.8.1)\r\n```\r\n\r\nFrom [my build log](https://www.dropbox.com/s/0rmwuqpju9kfqiy/install_foss_2018b_toolchain_job.sge.o3588049.gz?dl=0) for Perl-5.28.0-GCCcore-7.3.0.eb the following looks like a major problem:\r\n```\r\n /usr/local/community/rse/EasyBuild/software/Perl/5.28.0-GCCcore-7.3.0/lib/perl5/5.28.0/xCouldn't copy cpan/podlators/blib/script/pod2man to /usr/local/scripts/pod2man: No such file or directory\r\n```\r\n\r\nI.e., it looks like it is trying to install stuff in the wrong place.\r\n\r\n@boegel thinks the problem is that the Perl install process finds a `/usr/local/scripts` directory in my environment and incorrectly assumes that's where I'd like it to install scripts. 
\r\n\r\n[More background info](https://openpkg-dev.openpkg.narkive.com/bGejYSaD/bugdb-perl-possible-build-problem-copying-into-usr-local-scripts-pr-133) (from 16 years ago!)\r\n\r\nSuggested fix: add the following to the Perl easyconfig (not tested yet):\r\n```python\r\nconfigopts = \"-Dscriptdirexp=%(installdir)s/bin\"\r\n```\r\n\r\nNB I've not yet had chance to test this! \n", "before_files": [{"content": "##\n# Copyright 2009-2019 Ghent University\n#\n# This file is part of EasyBuild,\n# originally created by the HPC team of Ghent University (http://ugent.be/hpc/en),\n# with support of Ghent University (http://ugent.be/hpc),\n# the Flemish Supercomputer Centre (VSC) (https://www.vscentrum.be),\n# Flemish Research Foundation (FWO) (http://www.fwo.be/en)\n# and the Department of Economy, Science and Innovation (EWI) (http://www.ewi-vlaanderen.be/en).\n#\n# https://github.com/easybuilders/easybuild\n#\n# EasyBuild is free software: you can redistribute it and/or modify\n# it under the terms of the GNU General Public License as published by\n# the Free Software Foundation v2.\n#\n# EasyBuild is distributed in the hope that it will be useful,\n# but WITHOUT ANY WARRANTY; without even the implied warranty of\n# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the\n# GNU General Public License for more details.\n#\n# You should have received a copy of the GNU General Public License\n# along with EasyBuild. 
If not, see <http://www.gnu.org/licenses/>.\n##\n\"\"\"\nEasyBuild support for Perl, implemented as an easyblock\n\n@author: Jens Timmerman (Ghent University)\n@author: Kenneth Hoste (Ghent University)\n\"\"\"\nimport os\n\nfrom easybuild.easyblocks.generic.configuremake import ConfigureMake\nfrom easybuild.framework.easyconfig import CUSTOM\nfrom easybuild.tools.run import run_cmd\n\n# perldoc -lm seems to be the safest way to test if a module is available, based on exit code\nEXTS_FILTER_PERL_MODULES = (\"perldoc -lm %(ext_name)s \", \"\")\n\n\nclass EB_Perl(ConfigureMake):\n \"\"\"Support for building and installing Perl.\"\"\"\n\n @staticmethod\n def extra_options():\n \"\"\"Add extra config options specific to Perl.\"\"\"\n extra_vars = {\n 'use_perl_threads': [True, \"Enable use of internal Perl threads via -Dusethreads configure option\", CUSTOM],\n }\n return ConfigureMake.extra_options(extra_vars)\n\n def configure_step(self):\n \"\"\"\n Configure Perl build: run ./Configure instead of ./configure with some different options\n \"\"\"\n configopts = [\n self.cfg['configopts'],\n '-Dcc=\"{0}\"'.format(os.getenv('CC')),\n '-Dccflags=\"{0}\"'.format(os.getenv('CFLAGS')),\n '-Dinc_version_list=none',\n ]\n if self.cfg['use_perl_threads']:\n configopts.append('-Dusethreads')\n\n cmd = './Configure -de %s -Dprefix=\"%s\"' % (' '.join(configopts), self.installdir)\n run_cmd(cmd, log_all=True, simple=True)\n\n def test_step(self):\n \"\"\"Test Perl build via 'make test'.\"\"\"\n # allow escaping with runtest = False\n if self.cfg['runtest'] is None or self.cfg['runtest']:\n if isinstance(self.cfg['runtest'], basestring):\n cmd = \"make %s\" % self.cfg['runtest']\n else:\n cmd = \"make test\"\n\n # specify locale to be used, to avoid that a handful of tests fail\n cmd = \"export LC_ALL=C && %s\" % cmd\n\n run_cmd(cmd, log_all=False, log_ok=False, simple=False)\n\n def prepare_for_extensions(self):\n \"\"\"\n Set default class and filter for Perl modules\n \"\"\"\n # 
build and install additional modules with PerlModule easyblock\n self.cfg['exts_defaultclass'] = \"PerlModule\"\n self.cfg['exts_filter'] = EXTS_FILTER_PERL_MODULES\n\n def sanity_check_step(self):\n \"\"\"Custom sanity check for Perl.\"\"\"\n majver = self.version.split('.')[0]\n custom_paths = {\n 'files': [os.path.join('bin', x) for x in ['perl', 'perldoc']],\n 'dirs': ['lib/perl%s/%s' % (majver, self.version), 'man']\n }\n super(EB_Perl, self).sanity_check_step(custom_paths=custom_paths)\n\n\ndef get_major_perl_version():\n \"\"\"\"\n Returns the major verson of the perl binary in the current path\n \"\"\"\n cmd = \"perl -MConfig -e 'print $Config::Config{PERL_API_REVISION}'\"\n (perlmajver, _) = run_cmd(cmd, log_all=True, log_output=True, simple=False)\n return perlmajver\n\n\ndef get_site_suffix(tag):\n \"\"\"\n Returns the suffix for site* (e.g. sitearch, sitelib)\n this will look something like /lib/perl5/site_perl/5.16.3/x86_64-linux-thread-multi\n so, e.g. sitearch without site prefix\n\n @tag: site tag to use, e.g. 
'sitearch', 'sitelib'\n \"\"\"\n perl_cmd = 'my $a = $Config::Config{\"%s\"}; $a =~ s/($Config::Config{\"siteprefix\"})//; print $a' % tag\n cmd = \"perl -MConfig -e '%s'\" % perl_cmd\n (sitesuffix, _) = run_cmd(cmd, log_all=True, log_output=True, simple=False)\n # obtained value usually contains leading '/', so strip it off\n return sitesuffix.lstrip(os.path.sep)\n", "path": "easybuild/easyblocks/p/perl.py"}], "after_files": [{"content": "##\n# Copyright 2009-2019 Ghent University\n#\n# This file is part of EasyBuild,\n# originally created by the HPC team of Ghent University (http://ugent.be/hpc/en),\n# with support of Ghent University (http://ugent.be/hpc),\n# the Flemish Supercomputer Centre (VSC) (https://www.vscentrum.be),\n# Flemish Research Foundation (FWO) (http://www.fwo.be/en)\n# and the Department of Economy, Science and Innovation (EWI) (http://www.ewi-vlaanderen.be/en).\n#\n# https://github.com/easybuilders/easybuild\n#\n# EasyBuild is free software: you can redistribute it and/or modify\n# it under the terms of the GNU General Public License as published by\n# the Free Software Foundation v2.\n#\n# EasyBuild is distributed in the hope that it will be useful,\n# but WITHOUT ANY WARRANTY; without even the implied warranty of\n# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the\n# GNU General Public License for more details.\n#\n# You should have received a copy of the GNU General Public License\n# along with EasyBuild. 
If not, see <http://www.gnu.org/licenses/>.\n##\n\"\"\"\nEasyBuild support for Perl, implemented as an easyblock\n\n@author: Jens Timmerman (Ghent University)\n@author: Kenneth Hoste (Ghent University)\n\"\"\"\nimport os\n\nfrom easybuild.easyblocks.generic.configuremake import ConfigureMake\nfrom easybuild.framework.easyconfig import CUSTOM\nfrom easybuild.tools.run import run_cmd\n\n# perldoc -lm seems to be the safest way to test if a module is available, based on exit code\nEXTS_FILTER_PERL_MODULES = (\"perldoc -lm %(ext_name)s \", \"\")\n\n\nclass EB_Perl(ConfigureMake):\n \"\"\"Support for building and installing Perl.\"\"\"\n\n @staticmethod\n def extra_options():\n \"\"\"Add extra config options specific to Perl.\"\"\"\n extra_vars = {\n 'use_perl_threads': [True, \"Enable use of internal Perl threads via -Dusethreads configure option\", CUSTOM],\n }\n return ConfigureMake.extra_options(extra_vars)\n\n def configure_step(self):\n \"\"\"\n Configure Perl build: run ./Configure instead of ./configure with some different options\n \"\"\"\n configopts = [\n self.cfg['configopts'],\n '-Dcc=\"{0}\"'.format(os.getenv('CC')),\n '-Dccflags=\"{0}\"'.format(os.getenv('CFLAGS')),\n '-Dinc_version_list=none',\n '-Dprefix=%(installdir)s',\n # guarantee that scripts are installed in /bin in the installation directory (and not in a guessed path)\n # see https://github.com/easybuilders/easybuild-easyblocks/issues/1659\n '-Dinstallscript=%(installdir)s/bin',\n '-Dscriptdir=%(installdir)s/bin',\n '-Dscriptdirexp=%(installdir)s/bin',\n ]\n if self.cfg['use_perl_threads']:\n configopts.append('-Dusethreads')\n\n configopts = (' '.join(configopts)) % {'installdir': self.installdir}\n\n cmd = './Configure -de %s' % configopts\n run_cmd(cmd, log_all=True, simple=True)\n\n def test_step(self):\n \"\"\"Test Perl build via 'make test'.\"\"\"\n # allow escaping with runtest = False\n if self.cfg['runtest'] is None or self.cfg['runtest']:\n if isinstance(self.cfg['runtest'], 
basestring):\n cmd = \"make %s\" % self.cfg['runtest']\n else:\n cmd = \"make test\"\n\n # specify locale to be used, to avoid that a handful of tests fail\n cmd = \"export LC_ALL=C && %s\" % cmd\n\n run_cmd(cmd, log_all=False, log_ok=False, simple=False)\n\n def prepare_for_extensions(self):\n \"\"\"\n Set default class and filter for Perl modules\n \"\"\"\n # build and install additional modules with PerlModule easyblock\n self.cfg['exts_defaultclass'] = \"PerlModule\"\n self.cfg['exts_filter'] = EXTS_FILTER_PERL_MODULES\n\n def sanity_check_step(self):\n \"\"\"Custom sanity check for Perl.\"\"\"\n majver = self.version.split('.')[0]\n custom_paths = {\n 'files': [os.path.join('bin', x) for x in ['perl', 'perldoc']],\n 'dirs': ['lib/perl%s/%s' % (majver, self.version), 'man']\n }\n super(EB_Perl, self).sanity_check_step(custom_paths=custom_paths)\n\n\ndef get_major_perl_version():\n \"\"\"\"\n Returns the major verson of the perl binary in the current path\n \"\"\"\n cmd = \"perl -MConfig -e 'print $Config::Config{PERL_API_REVISION}'\"\n (perlmajver, _) = run_cmd(cmd, log_all=True, log_output=True, simple=False)\n return perlmajver\n\n\ndef get_site_suffix(tag):\n \"\"\"\n Returns the suffix for site* (e.g. sitearch, sitelib)\n this will look something like /lib/perl5/site_perl/5.16.3/x86_64-linux-thread-multi\n so, e.g. sitearch without site prefix\n\n @tag: site tag to use, e.g. 'sitearch', 'sitelib'\n \"\"\"\n perl_cmd = 'my $a = $Config::Config{\"%s\"}; $a =~ s/($Config::Config{\"siteprefix\"})//; print $a' % tag\n cmd = \"perl -MConfig -e '%s'\" % perl_cmd\n (sitesuffix, _) = run_cmd(cmd, log_all=True, log_output=True, simple=False)\n # obtained value usually contains leading '/', so strip it off\n return sitesuffix.lstrip(os.path.sep)\n", "path": "easybuild/easyblocks/p/perl.py"}]}

| 2,091 | 318 |
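The `verification_info` cell in the row above is a JSON object with the keys `golden_diff`, `issue`, `before_files`, and `after_files`, where each file entry carries a `content` and a `path`. As a minimal sketch, assuming that layout holds for every row, the files a record actually changes could be listed as follows. The helper name `changed_paths` and the miniature `sample` record are hypothetical and are not taken from the dataset.

```python
import json


def changed_paths(verification_info: str) -> list[str]:
    """Return the paths whose content differs between before_files and after_files."""
    record = json.loads(verification_info)
    before = {f["path"]: f["content"] for f in record["before_files"]}
    after = {f["path"]: f["content"] for f in record["after_files"]}
    # A path counts as changed if it was added, removed, or its content differs.
    return sorted(p for p in before.keys() | after.keys() if before.get(p) != after.get(p))


# Hypothetical miniature record, mirroring only the key layout seen in the row above.
sample = json.dumps(
    {
        "golden_diff": "(omitted in this sketch)",
        "issue": "demo issue text",
        "before_files": [
            {"content": "x = 1\n", "path": "a.py"},
            {"content": "y = 2\n", "path": "b.py"},
        ],
        "after_files": [
            {"content": "x = 1\n", "path": "a.py"},
            {"content": "y = 3\n", "path": "b.py"},
        ],
    }
)

print(changed_paths(sample))
```

A check like this only compares file contents; it does not confirm that the `golden_diff` string itself applies cleanly.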

gh_patches_debug_23010

|

rasdani/github-patches

|

git_diff

|

uccser__cs-unplugged-67

|

We are currently solving the following issue within our repository. Here is the issue text:

--- BEGIN ISSUE ---

Add django-debug-toolbar for debugging

--- END ISSUE ---

Below are some code segments, each from a relevant file. One or more of these files may contain bugs.

--- BEGIN FILES ---

Path: `csunplugged/config/settings.py`

Content:

```
1 """
2 Django settings for csunplugged project.
3
4 Generated by 'django-admin startproject' using Django 1.10.3.
5
6 For more information on this file, see
7 https://docs.djangoproject.com/en/1.10/topics/settings/
8
9 For the full list of settings and their values, see
10 https://docs.djangoproject.com/en/1.10/ref/settings/
11 """
12
13 import os
14 from config.settings_secret import *
15
16 # Build paths inside the project like this: os.path.join(BASE_DIR, ...)
17 BASE_DIR = os.path.dirname(os.path.dirname(os.path.abspath(__file__)))
18
19 # nasty hard coding
20 SETTINGS_PATH = os.path.dirname(os.path.dirname(__file__))
21
22
23 # Quick-start development settings - unsuitable for production
24 # See https://docs.djangoproject.com/en/1.10/howto/deployment/checklist/
25
26 # SECURITY WARNING: keep the secret key used in production secret!
27 SECRET_KEY = 'l@@)w&&%&u37+sjz^lsx^+29y_333oid3ygxzucar^8o(axo*f'
28
29 # SECURITY WARNING: don't run with debug turned on in production!
30 DEBUG = True
31
32 ALLOWED_HOSTS = []
33
34
35 # Application definition
36
37 INSTALLED_APPS = [
38     'general.apps.GeneralConfig',
39     'topics.apps.TopicsConfig',
40     'resources.apps.ResourcesConfig',
41     'django.contrib.admin',
42     'django.contrib.auth',
43     'django.contrib.contenttypes',
44     'django.contrib.sessions',
45     'django.contrib.messages',
46     'django.contrib.staticfiles',
47 ]
48
49 MIDDLEWARE = [
50     'django.middleware.security.SecurityMiddleware',
51     'django.contrib.sessions.middleware.SessionMiddleware',
52     'django.middleware.locale.LocaleMiddleware',
53     'django.middleware.common.CommonMiddleware',
54     'django.middleware.csrf.CsrfViewMiddleware',
55     'django.contrib.auth.middleware.AuthenticationMiddleware',
56     'django.contrib.messages.middleware.MessageMiddleware',
57     'django.middleware.clickjacking.XFrameOptionsMiddleware',
58 ]
59
60 ROOT_URLCONF = 'config.urls'
61
62 TEMPLATES = [
63     {
64         'BACKEND': 'django.template.backends.django.DjangoTemplates',
65         'DIRS': [
66             os.path.join(SETTINGS_PATH, 'templates'),
67             os.path.join(SETTINGS_PATH, 'resources/content/')
68         ],
69         'APP_DIRS': True,
70         'OPTIONS': {
71             'context_processors': [
72                 'django.template.context_processors.debug',
73                 'django.template.context_processors.request',
74                 'django.contrib.auth.context_processors.auth',
75                 'django.contrib.messages.context_processors.messages',
76             ],
77         },
78     },
79 ]
80
81 WSGI_APPLICATION = 'config.wsgi.application'
82
83
84 # Database
85 # https://docs.djangoproject.com/en/1.10/ref/settings/#databases
86 # Database values are stored in `settings_secret.py`
87 # A template of this file is available as `settings_secret_template.py`
88
89
90 # Password validation
91 # https://docs.djangoproject.com/en/1.10/ref/settings/#auth-password-validators
92
93 AUTH_PASSWORD_VALIDATORS = [
94     {
95         'NAME': 'django.contrib.auth.password_validation.UserAttributeSimilarityValidator',
96     },
97     {
98         'NAME': 'django.contrib.auth.password_validation.MinimumLengthValidator',
99     },
100     {
101         'NAME': 'django.contrib.auth.password_validation.CommonPasswordValidator',
102     },
103     {
104         'NAME': 'django.contrib.auth.password_validation.NumericPasswordValidator',
105     },
106 ]
107
108
109 # Internationalization
110 # https://docs.djangoproject.com/en/1.10/topics/i18n/
111
112 LANGUAGE_CODE = 'en-us'
113
114 TIME_ZONE = 'UTC'
115
116 USE_I18N = True
117
118 USE_L10N = True
119
120 USE_TZ = True
121
122 LOCALE_PATHS = ['locale']
123
124 # Static files (CSS, JavaScript, Images)
125 # https://docs.djangoproject.com/en/1.10/howto/static-files/
126
127 STATIC_URL = '/static/'
128 STATICFILES_DIRS = (
129     os.path.join(BASE_DIR, 'build'),
130     )
131
```

Path: `csunplugged/config/urls.py`

Content:

```
1 """csunplugged URL Configuration
2
3 The `urlpatterns` list routes URLs to views. For more information please see:
4     https://docs.djangoproject.com/en/1.10/topics/http/urls/
5 Examples:
6 Function views
7     1. Add an import: from my_app import views
8     2. Add a URL to urlpatterns: url(r'^$', views.home, name='home')
9 Class-based views
10     1. Add an import: from other_app.views import Home
11     2. Add a URL to urlpatterns: url(r'^$', Home.as_view(), name='home')
12 Including another URLconf
13     1. Import the include() function: from django.conf.urls import url, include
14     2. Add a URL to urlpatterns: url(r'^blog/', include('blog.urls'))
15 """
16 from django.conf.urls import include, url
17 from django.conf.urls.i18n import i18n_patterns
18 from django.contrib import admin
19 from django.conf import settings
20 from django.conf.urls.static import static
21
22 urlpatterns = i18n_patterns(
23     url(r'', include('general.urls', namespace='general')),
24     url(r'^topics/', include('topics.urls', namespace='topics')),
25     url(r'^resources/', include('resources.urls', namespace='resources')),
26     url(r'^admin/', include(admin.site.urls)),
27 )
28 # ] + static(settings.STATIC_URL, documnet_root=settings.STATIC_ROOT)
29
```

--- END FILES ---

Please first localize the bug based on the issue statement, and then generate a patch according to the `git diff` format fenced by three backticks.

Here is an example:

```diff
diff --git a/examples/server_async.py b/examples/server_async.py
--- a/examples/server_async.py
+++ b/examples/server_async.py
@@ -313,4 +313,4 @@
 
 
 if __name__ == "__main__":
-    asyncio.run(run_async_server("."), debug=True)
+    asyncio.run(run_async_server(), debug=True)
diff --git a/examples/server_sync.py b/examples/server_sync.py
--- a/examples/server_sync.py
+++ b/examples/server_sync.py
@@ -313,5 +313,5 @@
 
 
 if __name__ == "__main__":
-    server = run_sync_server(".")
+    server = run_sync_server()
     server.shutdown()
```

|

diff --git a/csunplugged/config/settings.py b/csunplugged/config/settings.py
--- a/csunplugged/config/settings.py
+++ b/csunplugged/config/settings.py
@@ -44,9 +44,11 @@
     'django.contrib.sessions',
     'django.contrib.messages',
     'django.contrib.staticfiles',
+    'debug_toolbar',
 ]
 
 MIDDLEWARE = [
+    'debug_toolbar.middleware.DebugToolbarMiddleware',
     'django.middleware.security.SecurityMiddleware',
     'django.contrib.sessions.middleware.SessionMiddleware',
     'django.middleware.locale.LocaleMiddleware',
@@ -128,3 +130,7 @@
 STATICFILES_DIRS = (
     os.path.join(BASE_DIR, 'build'),
     )
+
+# Internal IPs for Django Debug Toolbar
+# https://docs.djangoproject.com/en/1.10/ref/settings/#internal-ips
+INTERNAL_IPS = ['127.0.0.1']
diff --git a/csunplugged/config/urls.py b/csunplugged/config/urls.py
--- a/csunplugged/config/urls.py
+++ b/csunplugged/config/urls.py
@@ -26,3 +26,9 @@
     url(r'^admin/', include(admin.site.urls)),
 )
 # ] + static(settings.STATIC_URL, documnet_root=settings.STATIC_ROOT)
+
+if settings.DEBUG:
+    import debug_toolbar
+    urlpatterns += [
+        url(r'^__debug__/', include(debug_toolbar.urls)),
+    ]

|

{"golden_diff": "diff --git a/csunplugged/config/settings.py b/csunplugged/config/settings.py\n--- a/csunplugged/config/settings.py\n+++ b/csunplugged/config/settings.py\n@@ -44,9 +44,11 @@\n 'django.contrib.sessions',\n 'django.contrib.messages',\n 'django.contrib.staticfiles',\n+ 'debug_toolbar',\n ]\n \n MIDDLEWARE = [\n+ 'debug_toolbar.middleware.DebugToolbarMiddleware',\n 'django.middleware.security.SecurityMiddleware',\n 'django.contrib.sessions.middleware.SessionMiddleware',\n 'django.middleware.locale.LocaleMiddleware',\n@@ -128,3 +130,7 @@\n STATICFILES_DIRS = (\n os.path.join(BASE_DIR, 'build'),\n )\n+\n+# Internal IPs for Django Debug Toolbar\n+# https://docs.djangoproject.com/en/1.10/ref/settings/#internal-ips\n+INTERNAL_IPS = ['127.0.0.1']\ndiff --git a/csunplugged/config/urls.py b/csunplugged/config/urls.py\n--- a/csunplugged/config/urls.py\n+++ b/csunplugged/config/urls.py\n@@ -26,3 +26,9 @@\n url(r'^admin/', include(admin.site.urls)),\n )\n # ] + static(settings.STATIC_URL, documnet_root=settings.STATIC_ROOT)\n+\n+if settings.DEBUG:\n+ import debug_toolbar\n+ urlpatterns += [\n+ url(r'^__debug__/', include(debug_toolbar.urls)),\n+ ]\n", "issue": "Add django-debug-toolbar for debugging\n\n", "before_files": [{"content": "\"\"\"\nDjango settings for csunplugged project.\n\nGenerated by 'django-admin startproject' using Django 1.10.3.\n\nFor more information on this file, see\nhttps://docs.djangoproject.com/en/1.10/topics/settings/\n\nFor the full list of settings and their values, see\nhttps://docs.djangoproject.com/en/1.10/ref/settings/\n\"\"\"\n\nimport os\nfrom config.settings_secret import *\n\n# Build paths inside the project like this: os.path.join(BASE_DIR, ...)\nBASE_DIR = os.path.dirname(os.path.dirname(os.path.abspath(__file__)))\n\n# nasty hard coding\nSETTINGS_PATH = os.path.dirname(os.path.dirname(__file__))\n\n\n# Quick-start development settings - unsuitable for production\n# See 
https://docs.djangoproject.com/en/1.10/howto/deployment/checklist/\n\n# SECURITY WARNING: keep the secret key used in production secret!\nSECRET_KEY = 'l@@)w&&%&u37+sjz^lsx^+29y_333oid3ygxzucar^8o(axo*f'\n\n# SECURITY WARNING: don't run with debug turned on in production!\nDEBUG = True\n\nALLOWED_HOSTS = []\n\n\n# Application definition\n\nINSTALLED_APPS = [\n 'general.apps.GeneralConfig',\n 'topics.apps.TopicsConfig',\n 'resources.apps.ResourcesConfig',\n 'django.contrib.admin',\n 'django.contrib.auth',\n 'django.contrib.contenttypes',\n 'django.contrib.sessions',\n 'django.contrib.messages',\n 'django.contrib.staticfiles',\n]\n\nMIDDLEWARE = [\n 'django.middleware.security.SecurityMiddleware',\n 'django.contrib.sessions.middleware.SessionMiddleware',\n 'django.middleware.locale.LocaleMiddleware',\n 'django.middleware.common.CommonMiddleware',\n 'django.middleware.csrf.CsrfViewMiddleware',\n 'django.contrib.auth.middleware.AuthenticationMiddleware',\n 'django.contrib.messages.middleware.MessageMiddleware',\n 'django.middleware.clickjacking.XFrameOptionsMiddleware',\n]\n\nROOT_URLCONF = 'config.urls'\n\nTEMPLATES = [\n {\n 'BACKEND': 'django.template.backends.django.DjangoTemplates',\n 'DIRS': [\n os.path.join(SETTINGS_PATH, 'templates'),\n os.path.join(SETTINGS_PATH, 'resources/content/')\n ],\n 'APP_DIRS': True,\n 'OPTIONS': {\n 'context_processors': [\n 'django.template.context_processors.debug',\n 'django.template.context_processors.request',\n 'django.contrib.auth.context_processors.auth',\n 'django.contrib.messages.context_processors.messages',\n ],\n },\n },\n]\n\nWSGI_APPLICATION = 'config.wsgi.application'\n\n\n# Database\n# https://docs.djangoproject.com/en/1.10/ref/settings/#databases\n# Database values are stored in `settings_secret.py`\n# A template of this file is available as `settings_secret_template.py`\n\n\n# Password validation\n# https://docs.djangoproject.com/en/1.10/ref/settings/#auth-password-validators\n\nAUTH_PASSWORD_VALIDATORS = [\n {\n 
'NAME': 'django.contrib.auth.password_validation.UserAttributeSimilarityValidator',\n },\n {\n 'NAME': 'django.contrib.auth.password_validation.MinimumLengthValidator',\n },\n {\n 'NAME': 'django.contrib.auth.password_validation.CommonPasswordValidator',\n },\n {\n 'NAME': 'django.contrib.auth.password_validation.NumericPasswordValidator',\n },\n]\n\n\n# Internationalization\n# https://docs.djangoproject.com/en/1.10/topics/i18n/\n\nLANGUAGE_CODE = 'en-us'\n\nTIME_ZONE = 'UTC'\n\nUSE_I18N = True\n\nUSE_L10N = True\n\nUSE_TZ = True\n\nLOCALE_PATHS = ['locale']\n\n# Static files (CSS, JavaScript, Images)\n# https://docs.djangoproject.com/en/1.10/howto/static-files/\n\nSTATIC_URL = '/static/'\nSTATICFILES_DIRS = (\n os.path.join(BASE_DIR, 'build'),\n )\n", "path": "csunplugged/config/settings.py"}, {"content": "\"\"\"csunplugged URL Configuration\n\nThe `urlpatterns` list routes URLs to views. For more information please see:\n https://docs.djangoproject.com/en/1.10/topics/http/urls/\nExamples:\nFunction views\n 1. Add an import: from my_app import views\n 2. Add a URL to urlpatterns: url(r'^$', views.home, name='home')\nClass-based views\n 1. Add an import: from other_app.views import Home\n 2. Add a URL to urlpatterns: url(r'^$', Home.as_view(), name='home')\nIncluding another URLconf\n 1. Import the include() function: from django.conf.urls import url, include\n 2. 
Add a URL to urlpatterns: url(r'^blog/', include('blog.urls'))\n\"\"\"\nfrom django.conf.urls import include, url\nfrom django.conf.urls.i18n import i18n_patterns\nfrom django.contrib import admin\nfrom django.conf import settings\nfrom django.conf.urls.static import static\n\nurlpatterns = i18n_patterns(\n url(r'', include('general.urls', namespace='general')),\n url(r'^topics/', include('topics.urls', namespace='topics')),\n url(r'^resources/', include('resources.urls', namespace='resources')),\n url(r'^admin/', include(admin.site.urls)),\n)\n# ] + static(settings.STATIC_URL, documnet_root=settings.STATIC_ROOT)\n", "path": "csunplugged/config/urls.py"}], "after_files": [{"content": "\"\"\"\nDjango settings for csunplugged project.\n\nGenerated by 'django-admin startproject' using Django 1.10.3.\n\nFor more information on this file, see\nhttps://docs.djangoproject.com/en/1.10/topics/settings/\n\nFor the full list of settings and their values, see\nhttps://docs.djangoproject.com/en/1.10/ref/settings/\n\"\"\"\n\nimport os\nfrom config.settings_secret import *\n\n# Build paths inside the project like this: os.path.join(BASE_DIR, ...)\nBASE_DIR = os.path.dirname(os.path.dirname(os.path.abspath(__file__)))\n\n# nasty hard coding\nSETTINGS_PATH = os.path.dirname(os.path.dirname(__file__))\n\n\n# Quick-start development settings - unsuitable for production\n# See https://docs.djangoproject.com/en/1.10/howto/deployment/checklist/\n\n# SECURITY WARNING: keep the secret key used in production secret!\nSECRET_KEY = 'l@@)w&&%&u37+sjz^lsx^+29y_333oid3ygxzucar^8o(axo*f'\n\n# SECURITY WARNING: don't run with debug turned on in production!\nDEBUG = True\n\nALLOWED_HOSTS = []\n\n\n# Application definition\n\nINSTALLED_APPS = [\n 'general.apps.GeneralConfig',\n 'topics.apps.TopicsConfig',\n 'resources.apps.ResourcesConfig',\n 'django.contrib.admin',\n 'django.contrib.auth',\n 'django.contrib.contenttypes',\n 'django.contrib.sessions',\n 'django.contrib.messages',\n 
'django.contrib.staticfiles',\n 'debug_toolbar',\n]\n\nMIDDLEWARE = [\n 'debug_toolbar.middleware.DebugToolbarMiddleware',\n 'django.middleware.security.SecurityMiddleware',\n 'django.contrib.sessions.middleware.SessionMiddleware',\n 'django.middleware.locale.LocaleMiddleware',\n 'django.middleware.common.CommonMiddleware',\n 'django.middleware.csrf.CsrfViewMiddleware',\n 'django.contrib.auth.middleware.AuthenticationMiddleware',\n 'django.contrib.messages.middleware.MessageMiddleware',\n 'django.middleware.clickjacking.XFrameOptionsMiddleware',\n]\n\nROOT_URLCONF = 'config.urls'\n\nTEMPLATES = [\n {\n 'BACKEND': 'django.template.backends.django.DjangoTemplates',\n 'DIRS': [\n os.path.join(SETTINGS_PATH, 'templates'),\n os.path.join(SETTINGS_PATH, 'resources/content/')\n ],\n 'APP_DIRS': True,\n 'OPTIONS': {\n 'context_processors': [\n 'django.template.context_processors.debug',\n 'django.template.context_processors.request',\n 'django.contrib.auth.context_processors.auth',\n 'django.contrib.messages.context_processors.messages',\n ],\n },\n },\n]\n\nWSGI_APPLICATION = 'config.wsgi.application'\n\n\n# Database\n# https://docs.djangoproject.com/en/1.10/ref/settings/#databases\n# Database values are stored in `settings_secret.py`\n# A template of this file is available as `settings_secret_template.py`\n\n\n# Password validation\n# https://docs.djangoproject.com/en/1.10/ref/settings/#auth-password-validators\n\nAUTH_PASSWORD_VALIDATORS = [\n {\n 'NAME': 'django.contrib.auth.password_validation.UserAttributeSimilarityValidator',\n },\n {\n 'NAME': 'django.contrib.auth.password_validation.MinimumLengthValidator',\n },\n {\n 'NAME': 'django.contrib.auth.password_validation.CommonPasswordValidator',\n },\n {\n 'NAME': 'django.contrib.auth.password_validation.NumericPasswordValidator',\n },\n]\n\n\n# Internationalization\n# https://docs.djangoproject.com/en/1.10/topics/i18n/\n\nLANGUAGE_CODE = 'en-us'\n\nTIME_ZONE = 'UTC'\n\nUSE_I18N = True\n\nUSE_L10N = True\n\nUSE_TZ = 
True\n\nLOCALE_PATHS = ['locale']\n\n# Static files (CSS, JavaScript, Images)\n# https://docs.djangoproject.com/en/1.10/howto/static-files/\n\nSTATIC_URL = '/static/'\nSTATICFILES_DIRS = (\n os.path.join(BASE_DIR, 'build'),\n )\n\n# Internal IPs for Django Debug Toolbar\n# https://docs.djangoproject.com/en/1.10/ref/settings/#internal-ips\nINTERNAL_IPS = ['127.0.0.1']\n", "path": "csunplugged/config/settings.py"}, {"content": "\"\"\"csunplugged URL Configuration\n\nThe `urlpatterns` list routes URLs to views. For more information please see:\n https://docs.djangoproject.com/en/1.10/topics/http/urls/\nExamples:\nFunction views\n 1. Add an import: from my_app import views\n 2. Add a URL to urlpatterns: url(r'^$', views.home, name='home')\nClass-based views\n 1. Add an import: from other_app.views import Home\n 2. Add a URL to urlpatterns: url(r'^$', Home.as_view(), name='home')\nIncluding another URLconf\n 1. Import the include() function: from django.conf.urls import url, include\n 2. Add a URL to urlpatterns: url(r'^blog/', include('blog.urls'))\n\"\"\"\nfrom django.conf.urls import include, url\nfrom django.conf.urls.i18n import i18n_patterns\nfrom django.contrib import admin\nfrom django.conf import settings\nfrom django.conf.urls.static import static\n\nurlpatterns = i18n_patterns(\n url(r'', include('general.urls', namespace='general')),\n url(r'^topics/', include('topics.urls', namespace='topics')),\n url(r'^resources/', include('resources.urls', namespace='resources')),\n url(r'^admin/', include(admin.site.urls)),\n)\n# ] + static(settings.STATIC_URL, documnet_root=settings.STATIC_ROOT)\n\nif settings.DEBUG:\n import debug_toolbar\n urlpatterns += [\n url(r'^__debug__/', include(debug_toolbar.urls)),\n ]\n", "path": "csunplugged/config/urls.py"}]}

| 1,745 | 315 |
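Because each record stores both the pre-patch and post-patch file contents, a diff can be regenerated and compared against the stored `golden_diff`. The following is a minimal sketch using Python's standard `difflib`; the helper names `regenerate_diff` and `changed_lines` and the two-line sample file are hypothetical. Hunk boundaries and the `diff --git` header produced by git will generally differ from `difflib` output, so only the added and removed lines are compared here.

```python
import difflib


def regenerate_diff(path: str, before: str, after: str) -> str:
    """Build a unified diff between two versions of one file."""
    lines = difflib.unified_diff(
        before.splitlines(keepends=True),
        after.splitlines(keepends=True),
        fromfile=f"a/{path}",
        tofile=f"b/{path}",
    )
    return "".join(lines)


def changed_lines(diff_text: str) -> list[str]:
    """Keep only added/removed lines, skipping the ---/+++ file headers.

    Caveat: a removed line whose own text begins with "--" would also be
    skipped by this simple filter.
    """
    return [
        line
        for line in diff_text.splitlines()
        if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
    ]


# Hypothetical two-version sample, loosely modelled on the settings change above.
before = "DEBUG = True\n"
after = "DEBUG = True\nINTERNAL_IPS = ['127.0.0.1']\n"

diff_text = regenerate_diff("settings.py", before, after)
print(diff_text)
print(changed_lines(diff_text))
```

Applying `changed_lines` to both the regenerated diff and a record's `golden_diff` gives two lists that can be compared directly, under the assumption that both diffs order their changes the same way.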

gh_patches_debug_33414

|

rasdani/github-patches

|

git_diff

|

alltheplaces__alltheplaces-6733

|

We are currently solving the following issue within our repository. Here is the issue text:

--- BEGIN ISSUE ---

Caffe Nero GB spider using outdated JSON file

The caffe_nero_gb.py spider gets its data from JSON file that the Store Finder page at https://caffenero.com/uk/stores/ uses to display its map. However, it looks like that URL of that JSON file has changed, and ATP is still referencing the old (and no longer updated one).

The ATP code currently has

`allowed_domains = ["caffenero-webassets-production.s3.eu-west-2.amazonaws.com"]`

`start_urls = ["https://caffenero-webassets-production.s3.eu-west-2.amazonaws.com/stores/stores_gb.json"]`

But the URL referenced by https://caffenero.com/uk/stores/ is now

https://caffenerowebsite.blob.core.windows.net/production/data/stores/stores-gb.json

I think the format of the JSON file has remained the same, so it should just be a matter of swapping the URLs over.

To help issues like this be picked up sooner in the future, I wonder if there's a way of checking that the JSON URL used is still included in the https://caffenero.com/uk/stores/ page, and producing a warning to anyone running ATP if not?

--- END ISSUE ---

Below are some code segments, each from a relevant file. One or more of these files may contain bugs.

--- BEGIN FILES ---

Path: `locations/spiders/caffe_nero_gb.py`

Content:

```
1 from scrapy import Spider
2 from scrapy.http import JsonRequest
3
4 from locations.categories import Categories, Extras, apply_category, apply_yes_no
5 from locations.dict_parser import DictParser
6 from locations.hours import OpeningHours
7
8
9 class CaffeNeroGBSpider(Spider):
10     name = "caffe_nero_gb"
11     item_attributes = {"brand": "Caffe Nero", "brand_wikidata": "Q675808"}
12     allowed_domains = ["caffenero-webassets-production.s3.eu-west-2.amazonaws.com"]
13     start_urls = ["https://caffenero-webassets-production.s3.eu-west-2.amazonaws.com/stores/stores_gb.json"]
14
15     def start_requests(self):
16         for url in self.start_urls:
17             yield JsonRequest(url=url)
18
19     def parse(self, response):
20         for location in response.json()["features"]:
21             if (
22                 not location["properties"]["status"]["open"]
23                 or location["properties"]["status"]["opening_soon"]
24                 or location["properties"]["status"]["temp_closed"]
25             ):
26                 continue
27
28             item = DictParser.parse(location["properties"])
29             item["geometry"] = location["geometry"]
30             if location["properties"]["status"]["express"]:
31                 item["brand"] = "Nero Express"
32
33             item["opening_hours"] = OpeningHours()
34             for day_name, day_hours in location["properties"]["hoursRegular"].items():
35                 if day_hours["open"] == "closed" or day_hours["close"] == "closed":
36                     continue
37                 if day_name == "holiday":
38                     continue
39                 item["opening_hours"].add_range(day_name.title(), day_hours["open"], day_hours["close"])
40
41             apply_yes_no(Extras.TAKEAWAY, item, location["properties"]["status"]["takeaway"], False)
42             apply_yes_no(Extras.DELIVERY, item, location["properties"]["status"]["delivery"], False)
43             apply_yes_no(Extras.WIFI, item, location["properties"]["amenities"]["wifi"], False)
44             apply_yes_no(Extras.TOILETS, item, location["properties"]["amenities"]["toilet"], False)
45             apply_yes_no(Extras.BABY_CHANGING_TABLE, item, location["properties"]["amenities"]["baby_change"], False)
46             apply_yes_no(Extras.SMOKING_AREA, item, location["properties"]["amenities"]["smoking_area"], False)
47             apply_yes_no(Extras.AIR_CONDITIONING, item, location["properties"]["amenities"]["air_conditioned"], False)
48             apply_yes_no(Extras.WHEELCHAIR, item, location["properties"]["amenities"].get("disabled_access"), False)
49             apply_yes_no(Extras.TOILETS_WHEELCHAIR, item, location["properties"]["amenities"]["disabled_toilet"], False)
50             apply_yes_no(Extras.OUTDOOR_SEATING, item, location["properties"]["amenities"]["outside_seating"], False)
51             apply_category(Categories.COFFEE_SHOP, item)
52
53             item["website"] = f'https://caffenero.com/uk/store/{location["properties"]["slug"]}/'
54
55             yield item
56
```

--- END FILES ---

Please first localize the bug based on the issue statement, and then generate a patch according to the `git diff` format fenced by three backticks.

Here is an example:

```diff
diff --git a/examples/server_async.py b/examples/server_async.py
--- a/examples/server_async.py
+++ b/examples/server_async.py
@@ -313,4 +313,4 @@
 
 
 if __name__ == "__main__":
-    asyncio.run(run_async_server("."), debug=True)
+    asyncio.run(run_async_server(), debug=True)
diff --git a/examples/server_sync.py b/examples/server_sync.py
--- a/examples/server_sync.py
+++ b/examples/server_sync.py
@@ -313,5 +313,5 @@
 
 
 if __name__ == "__main__":
-    server = run_sync_server(".")
+    server = run_sync_server()
     server.shutdown()
```

|

diff --git a/locations/spiders/caffe_nero_gb.py b/locations/spiders/caffe_nero_gb.py
--- a/locations/spiders/caffe_nero_gb.py
+++ b/locations/spiders/caffe_nero_gb.py
@@ -1,5 +1,8 @@
+import re
+from typing import Any
+
 from scrapy import Spider
-from scrapy.http import JsonRequest
+from scrapy.http import JsonRequest, Response
 
 from locations.categories import Categories, Extras, apply_category, apply_yes_no
 from locations.dict_parser import DictParser
@@ -9,14 +12,15 @@
 class CaffeNeroGBSpider(Spider):
     name = "caffe_nero_gb"
     item_attributes = {"brand": "Caffe Nero", "brand_wikidata": "Q675808"}
-    allowed_domains = ["caffenero-webassets-production.s3.eu-west-2.amazonaws.com"]
-    start_urls = ["https://caffenero-webassets-production.s3.eu-west-2.amazonaws.com/stores/stores_gb.json"]
+    allowed_domains = ["caffenero.com", "caffenerowebsite.blob.core.windows.net"]
+    start_urls = ["https://caffenero.com/uk/stores/"]
 
-    def start_requests(self):
-        for url in self.start_urls:
-            yield JsonRequest(url=url)
+    def parse(self, response: Response, **kwargs: Any) -> Any:
+        yield JsonRequest(
+            re.search(r"loadGeoJson\(\n\s+'(https://.+)', {", response.text).group(1), callback=self.parse_geojson
+        )
 
-    def parse(self, response):
+    def parse_geojson(self, response: Response, **kwargs: Any) -> Any:
         for location in response.json()["features"]:
             if (
                 not location["properties"]["status"]["open"]
@@ -30,6 +34,8 @@
             if location["properties"]["status"]["express"]:
                 item["brand"] = "Nero Express"
 
+            item["branch"] = item.pop("name")
+
             item["opening_hours"] = OpeningHours()
             for day_name, day_hours in location["properties"]["hoursRegular"].items():
                 if day_hours["open"] == "closed" or day_hours["close"] == "closed":

|

{"golden_diff": "diff --git a/locations/spiders/caffe_nero_gb.py b/locations/spiders/caffe_nero_gb.py\n--- a/locations/spiders/caffe_nero_gb.py\n+++ b/locations/spiders/caffe_nero_gb.py\n@@ -1,5 +1,8 @@\n+import re\n+from typing import Any\n+\n from scrapy import Spider\n-from scrapy.http import JsonRequest\n+from scrapy.http import JsonRequest, Response\n \n from locations.categories import Categories, Extras, apply_category, apply_yes_no\n from locations.dict_parser import DictParser\n@@ -9,14 +12,15 @@\n class CaffeNeroGBSpider(Spider):\n name = \"caffe_nero_gb\"\n item_attributes = {\"brand\": \"Caffe Nero\", \"brand_wikidata\": \"Q675808\"}\n- allowed_domains = [\"caffenero-webassets-production.s3.eu-west-2.amazonaws.com\"]\n- start_urls = [\"https://caffenero-webassets-production.s3.eu-west-2.amazonaws.com/stores/stores_gb.json\"]\n+ allowed_domains = [\"caffenero.com\", \"caffenerowebsite.blob.core.windows.net\"]\n+ start_urls = [\"https://caffenero.com/uk/stores/\"]\n \n- def start_requests(self):\n- for url in self.start_urls:\n- yield JsonRequest(url=url)\n+ def parse(self, response: Response, **kwargs: Any) -> Any:\n+ yield JsonRequest(\n+ re.search(r\"loadGeoJson\\(\\n\\s+'(https://.+)', {\", response.text).group(1), callback=self.parse_geojson\n+ )\n \n- def parse(self, response):\n+ def parse_geojson(self, response: Response, **kwargs: Any) -> Any:\n for location in response.json()[\"features\"]:\n if (\n not location[\"properties\"][\"status\"][\"open\"]\n@@ -30,6 +34,8 @@\n if location[\"properties\"][\"status\"][\"express\"]:\n item[\"brand\"] = \"Nero Express\"\n \n+ item[\"branch\"] = item.pop(\"name\")\n+\n item[\"opening_hours\"] = OpeningHours()\n for day_name, day_hours in location[\"properties\"][\"hoursRegular\"].items():\n if day_hours[\"open\"] == \"closed\" or day_hours[\"close\"] == \"closed\":\n", "issue": "Caffe Nero GB spider using outdated JSON file\nThe caffe_nero_gb.py spider gets its data from JSON file that the Store Finder page 
at https://caffenero.com/uk/stores/ uses to display its map. However, it looks like that URL of that JSON file has changed, and ATP is still referencing the old (and no longer updated one).\r\n\r\nThe ATP code currently has\r\n`allowed_domains = [\"caffenero-webassets-production.s3.eu-west-2.amazonaws.com\"]`\r\n`start_urls = [\"https://caffenero-webassets-production.s3.eu-west-2.amazonaws.com/stores/stores_gb.json\"]`\r\nBut the URL referenced by https://caffenero.com/uk/stores/ is now\r\nhttps://caffenerowebsite.blob.core.windows.net/production/data/stores/stores-gb.json\r\n\r\nI think the format of the JSON file has remained the same, so it should just be a matter of swapping the URLs over.\r\n\r\nTo help issues like this be picked up sooner in the future, I wonder if there's a way of checking that the JSON URL used is still included in the https://caffenero.com/uk/stores/ page, and producing a warning to anyone running ATP if not?\n", "before_files": [{"content": "from scrapy import Spider\nfrom scrapy.http import JsonRequest\n\nfrom locations.categories import Categories, Extras, apply_category, apply_yes_no\nfrom locations.dict_parser import DictParser\nfrom locations.hours import OpeningHours\n\n\nclass CaffeNeroGBSpider(Spider):\n name = \"caffe_nero_gb\"\n item_attributes = {\"brand\": \"Caffe Nero\", \"brand_wikidata\": \"Q675808\"}\n allowed_domains = [\"caffenero-webassets-production.s3.eu-west-2.amazonaws.com\"]\n start_urls = [\"https://caffenero-webassets-production.s3.eu-west-2.amazonaws.com/stores/stores_gb.json\"]\n\n def start_requests(self):\n for url in self.start_urls:\n yield JsonRequest(url=url)\n\n def parse(self, response):\n for location in response.json()[\"features\"]:\n if (\n not location[\"properties\"][\"status\"][\"open\"]\n or location[\"properties\"][\"status\"][\"opening_soon\"]\n or location[\"properties\"][\"status\"][\"temp_closed\"]\n ):\n continue\n\n item = DictParser.parse(location[\"properties\"])\n item[\"geometry\"] = 
location[\"geometry\"]\n if location[\"properties\"][\"status\"][\"express\"]:\n item[\"brand\"] = \"Nero Express\"\n\n item[\"opening_hours\"] = OpeningHours()\n for day_name, day_hours in location[\"properties\"][\"hoursRegular\"].items():\n if day_hours[\"open\"] == \"closed\" or day_hours[\"close\"] == \"closed\":\n continue\n if day_name == \"holiday\":\n continue\n item[\"opening_hours\"].add_range(day_name.title(), day_hours[\"open\"], day_hours[\"close\"])\n\n apply_yes_no(Extras.TAKEAWAY, item, location[\"properties\"][\"status\"][\"takeaway\"], False)\n apply_yes_no(Extras.DELIVERY, item, location[\"properties\"][\"status\"][\"delivery\"], False)\n apply_yes_no(Extras.WIFI, item, location[\"properties\"][\"amenities\"][\"wifi\"], False)\n apply_yes_no(Extras.TOILETS, item, location[\"properties\"][\"amenities\"][\"toilet\"], False)\n apply_yes_no(Extras.BABY_CHANGING_TABLE, item, location[\"properties\"][\"amenities\"][\"baby_change\"], False)\n apply_yes_no(Extras.SMOKING_AREA, item, location[\"properties\"][\"amenities\"][\"smoking_area\"], False)\n apply_yes_no(Extras.AIR_CONDITIONING, item, location[\"properties\"][\"amenities\"][\"air_conditioned\"], False)\n apply_yes_no(Extras.WHEELCHAIR, item, location[\"properties\"][\"amenities\"].get(\"disabled_access\"), False)\n apply_yes_no(Extras.TOILETS_WHEELCHAIR, item, location[\"properties\"][\"amenities\"][\"disabled_toilet\"], False)\n apply_yes_no(Extras.OUTDOOR_SEATING, item, location[\"properties\"][\"amenities\"][\"outside_seating\"], False)\n apply_category(Categories.COFFEE_SHOP, item)\n\n item[\"website\"] = f'https://caffenero.com/uk/store/{location[\"properties\"][\"slug\"]}/'\n\n yield item\n", "path": "locations/spiders/caffe_nero_gb.py"}], "after_files": [{"content": "import re\nfrom typing import Any\n\nfrom scrapy import Spider\nfrom scrapy.http import JsonRequest, Response\n\nfrom locations.categories import Categories, Extras, apply_category, apply_yes_no\nfrom locations.dict_parser 
import DictParser\nfrom locations.hours import OpeningHours\n\n\nclass CaffeNeroGBSpider(Spider):\n name = \"caffe_nero_gb\"\n item_attributes = {\"brand\": \"Caffe Nero\", \"brand_wikidata\": \"Q675808\"}\n allowed_domains = [\"caffenero.com\", \"caffenerowebsite.blob.core.windows.net\"]\n start_urls = [\"https://caffenero.com/uk/stores/\"]\n\n def parse(self, response: Response, **kwargs: Any) -> Any:\n yield JsonRequest(\n re.search(r\"loadGeoJson\\(\\n\\s+'(https://.+)', {\", response.text).group(1), callback=self.parse_geojson\n )\n\n def parse_geojson(self, response: Response, **kwargs: Any) -> Any:\n for location in response.json()[\"features\"]:\n if (\n not location[\"properties\"][\"status\"][\"open\"]\n or location[\"properties\"][\"status\"][\"opening_soon\"]\n or location[\"properties\"][\"status\"][\"temp_closed\"]\n ):\n continue\n\n item = DictParser.parse(location[\"properties\"])\n item[\"geometry\"] = location[\"geometry\"]\n if location[\"properties\"][\"status\"][\"express\"]:\n item[\"brand\"] = \"Nero Express\"\n\n item[\"branch\"] = item.pop(\"name\")\n\n item[\"opening_hours\"] = OpeningHours()\n for day_name, day_hours in location[\"properties\"][\"hoursRegular\"].items():\n if day_hours[\"open\"] == \"closed\" or day_hours[\"close\"] == \"closed\":\n continue\n if day_name == \"holiday\":\n continue\n item[\"opening_hours\"].add_range(day_name.title(), day_hours[\"open\"], day_hours[\"close\"])\n\n apply_yes_no(Extras.TAKEAWAY, item, location[\"properties\"][\"status\"][\"takeaway\"], False)\n apply_yes_no(Extras.DELIVERY, item, location[\"properties\"][\"status\"][\"delivery\"], False)\n apply_yes_no(Extras.WIFI, item, location[\"properties\"][\"amenities\"][\"wifi\"], False)\n apply_yes_no(Extras.TOILETS, item, location[\"properties\"][\"amenities\"][\"toilet\"], False)\n apply_yes_no(Extras.BABY_CHANGING_TABLE, item, location[\"properties\"][\"amenities\"][\"baby_change\"], False)\n apply_yes_no(Extras.SMOKING_AREA, item, 
location[\"properties\"][\"amenities\"][\"smoking_area\"], False)\n apply_yes_no(Extras.AIR_CONDITIONING, item, location[\"properties\"][\"amenities\"][\"air_conditioned\"], False)\n apply_yes_no(Extras.WHEELCHAIR, item, location[\"properties\"][\"amenities\"].get(\"disabled_access\"), False)\n apply_yes_no(Extras.TOILETS_WHEELCHAIR, item, location[\"properties\"][\"amenities\"][\"disabled_toilet\"], False)\n apply_yes_no(Extras.OUTDOOR_SEATING, item, location[\"properties\"][\"amenities\"][\"outside_seating\"], False)\n apply_category(Categories.COFFEE_SHOP, item)\n\n item[\"website\"] = f'https://caffenero.com/uk/store/{location[\"properties\"][\"slug\"]}/'\n\n yield item\n", "path": "locations/spiders/caffe_nero_gb.py"}]}

| 1,264 | 495 |

gh_patches_debug_24105

|

rasdani/github-patches

|

git_diff

|

deepchecks__deepchecks-372

|

We are currently solving the following issue within our repository. Here is the issue text:

--- BEGIN ISSUE ---

The mean value is not shown in the regression systematic error plot

I would expect that near the plot (or when I hover over the mean line in the plot), I would see the mean error value.

To reproduce:

https://www.kaggle.com/itay94/notebookf8c78e84d7

--- END ISSUE ---

Below are some code segments, each from a relevant file. One or more of these files may contain bugs.

--- BEGIN FILES ---

Path: `deepchecks/checks/performance/regression_systematic_error.py`

Content:

```

1 # ----------------------------------------------------------------------------

2 # Copyright (C) 2021 Deepchecks (https://www.deepchecks.com)

3 #

4 # This file is part of Deepchecks.

5 # Deepchecks is distributed under the terms of the GNU Affero General

6 # Public License (version 3 or later).

7 # You should have received a copy of the GNU Affero General Public License

8 # along with Deepchecks. If not, see <http://www.gnu.org/licenses/>.

9 # ----------------------------------------------------------------------------

10 #

11 """The RegressionSystematicError check module."""

12 import plotly.graph_objects as go

13 from sklearn.base import BaseEstimator

14 from sklearn.metrics import mean_squared_error