modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-07-16 12:29:00

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 523

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-07-16 12:28:25

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

sanjayadhikesaven/Llama-2-7b-hf3bit | sanjayadhikesaven | 2024-02-21T20:34:59Z | 5 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"3-bit",

"gptq",

"region:us"

]

| text-generation | 2024-02-21T20:32:19Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

Technoculture/BioMistral-Hermes-Dare | Technoculture | 2024-02-21T20:22:28Z | 49 | 0 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"merge",

"mergekit",

"BioMistral/BioMistral-7B-DARE",

"NousResearch/Nous-Hermes-2-Mistral-7B-DPO",

"conversational",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

]

| text-generation | 2024-02-21T20:17:41Z | ---

license: apache-2.0

tags:

- merge

- mergekit

- BioMistral/BioMistral-7B-DARE

- NousResearch/Nous-Hermes-2-Mistral-7B-DPO

---

# BioMistral-Hermes-Dare

BioMistral-Hermes-Dare is a merge of the following models:

* [BioMistral/BioMistral-7B-DARE](https://huggingface.co/BioMistral/BioMistral-7B-DARE)

* [NousResearch/Nous-Hermes-2-Mistral-7B-DPO](https://huggingface.co/NousResearch/Nous-Hermes-2-Mistral-7B-DPO)

## Evaluations

| Benchmark | BioMistral-Hermes-Dare | Orca-2-7b | llama-2-7b | meditron-7b | meditron-70b |

| --- | --- | --- | --- | --- | --- |

| MedMCQA | | | | | |

| ClosedPubMedQA | | | | | |

| PubMedQA | | | | | |

| MedQA | | | | | |

| MedQA4 | | | | | |

| MedicationQA | | | | | |

| MMLU Medical | | | | | |

| MMLU | | | | | |

| TruthfulQA | | | | | |

| GSM8K | | | | | |

| ARC | | | | | |

| HellaSwag | | | | | |

| Winogrande | | | | | |

More details on the Open LLM Leaderboard evaluation results can be found here.

## 🧩 Configuration

```yaml

models:

- model: BioMistral/BioMistral-7B-DARE

parameters:

weight: 1.0

- model: NousResearch/Nous-Hermes-2-Mistral-7B-DPO

parameters:

weight: 0.6

merge_method: linear

dtype: float16

```

## 💻 Usage

```python

!pip install -qU transformers accelerate

from transformers import AutoTokenizer

import transformers

import torch

model = "Technoculture/BioMistral-Hermes-Dare"

messages = [{"role": "user", "content": "I am feeling sleepy these days"}]

tokenizer = AutoTokenizer.from_pretrained(model)

prompt = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

pipeline = transformers.pipeline(

"text-generation",

model=model,

torch_dtype=torch.float16,

device_map="auto",

)

outputs = pipeline(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

print(outputs[0]["generated_text"])

``` |

megaaziib/Miacaroni-7B-Indonesia | megaaziib | 2024-02-21T20:20:18Z | 10 | 1 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"merge",

"mergekit",

"lazymergekit",

"SanjiWatsuki/Loyal-Macaroni-Maid-7B",

"indischepartij/OpenMia-Indo-Mistral-7b-v4",

"base_model:SanjiWatsuki/Loyal-Macaroni-Maid-7B",

"base_model:merge:SanjiWatsuki/Loyal-Macaroni-Maid-7B",

"base_model:indischepartij/OpenMia-Indo-Mistral-7b-v4",

"base_model:merge:indischepartij/OpenMia-Indo-Mistral-7b-v4",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

]

| text-generation | 2024-02-21T20:14:17Z | ---

tags:

- merge

- mergekit

- lazymergekit

- SanjiWatsuki/Loyal-Macaroni-Maid-7B

- indischepartij/OpenMia-Indo-Mistral-7b-v4

base_model:

- SanjiWatsuki/Loyal-Macaroni-Maid-7B

- indischepartij/OpenMia-Indo-Mistral-7b-v4

---

# Miacaroni-7B-Indonesia

Miacaroni-7B-Indonesia is a merge of the following models using [LazyMergekit](https://colab.research.google.com/drive/1obulZ1ROXHjYLn6PPZJwRR6GzgQogxxb?usp=sharing):

* [SanjiWatsuki/Loyal-Macaroni-Maid-7B](https://huggingface.co/SanjiWatsuki/Loyal-Macaroni-Maid-7B)

* [indischepartij/OpenMia-Indo-Mistral-7b-v4](https://huggingface.co/indischepartij/OpenMia-Indo-Mistral-7b-v4)

## 🧩 Configuration

```yaml

slices:

- sources:

- model: SanjiWatsuki/Loyal-Macaroni-Maid-7B

layer_range: [0, 32]

- model: indischepartij/OpenMia-Indo-Mistral-7b-v4

layer_range: [0, 32]

merge_method: slerp

base_model: SanjiWatsuki/Loyal-Macaroni-Maid-7B

parameters:

t:

- filter: self_attn

value: [0, 0.5, 0.3, 0.7, 1]

- filter: mlp

value: [1, 0.5, 0.7, 0.3, 0]

- value: 0.5

dtype: bfloat16

```

## 💻 Usage

```python

!pip install -qU transformers accelerate

from transformers import AutoTokenizer

import transformers

import torch

model = "megaaziib/Miacaroni-7B-Indonesia"

messages = [{"role": "user", "content": "What is a large language model?"}]

tokenizer = AutoTokenizer.from_pretrained(model)

prompt = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

pipeline = transformers.pipeline(

"text-generation",

model=model,

torch_dtype=torch.float16,

device_map="auto",

)

outputs = pipeline(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

print(outputs[0]["generated_text"])

``` |

OscarGalavizC/Reinforce-Cartpole-v1 | OscarGalavizC | 2024-02-21T20:13:56Z | 0 | 0 | null | [

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

]

| reinforcement-learning | 2024-02-21T20:13:46Z | ---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-Cartpole-v1

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: CartPole-v1

type: CartPole-v1

metrics:

- type: mean_reward

value: 500.00 +/- 0.00

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1** .

To learn to use this model and train yours check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

eren23/gemma-2b-tr-instruct-test-lora | eren23 | 2024-02-21T20:11:44Z | 2 | 0 | peft | [

"peft",

"safetensors",

"arxiv:1910.09700",

"base_model:google/gemma-2b-it",

"base_model:adapter:google/gemma-2b-it",

"region:us"

]

| null | 2024-02-21T20:11:39Z | ---

library_name: peft

base_model: google/gemma-2b-it

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

### Framework versions

- PEFT 0.8.2 |

Technoculture/BioMistral-Hermes-Slerp | Technoculture | 2024-02-21T20:10:14Z | 56 | 0 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"merge",

"mergekit",

"BioMistral/BioMistral-7B-DARE",

"NousResearch/Nous-Hermes-2-Mistral-7B-DPO",

"conversational",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

]

| text-generation | 2024-02-21T20:05:32Z | ---

license: apache-2.0

tags:

- merge

- mergekit

- BioMistral/BioMistral-7B-DARE

- NousResearch/Nous-Hermes-2-Mistral-7B-DPO

---

# BioMistral-Hermes-Slerp

BioMistral-Hermes-Slerp is a merge of the following models:

* [BioMistral/BioMistral-7B-DARE](https://huggingface.co/BioMistral/BioMistral-7B-DARE)

* [NousResearch/Nous-Hermes-2-Mistral-7B-DPO](https://huggingface.co/NousResearch/Nous-Hermes-2-Mistral-7B-DPO)

## Evaluations

| Benchmark | BioMistral-Hermes-Slerp | Orca-2-7b | llama-2-7b | meditron-7b | meditron-70b |

| --- | --- | --- | --- | --- | --- |

| MedMCQA | | | | | |

| ClosedPubMedQA | | | | | |

| PubMedQA | | | | | |

| MedQA | | | | | |

| MedQA4 | | | | | |

| MedicationQA | | | | | |

| MMLU Medical | | | | | |

| MMLU | | | | | |

| TruthfulQA | | | | | |

| GSM8K | | | | | |

| ARC | | | | | |

| HellaSwag | | | | | |

| Winogrande | | | | | |

More details on the Open LLM Leaderboard evaluation results can be found here.

## 🧩 Configuration

```yaml

slices:

- sources:

- model: BioMistral/BioMistral-7B-DARE

layer_range: [0, 32]

- model: NousResearch/Nous-Hermes-2-Mistral-7B-DPO

layer_range: [0, 32]

merge_method: slerp

base_model: NousResearch/Nous-Hermes-2-Mistral-7B-DPO

parameters:

t:

- filter: self_attn

value: [0, 0.5, 0.3, 0.7, 1]

- filter: mlp

value: [1, 0.5, 0.7, 0.3, 0]

- value: 0.5 # fallback for rest of tensors

dtype: float16

```

## 💻 Usage

```python

!pip install -qU transformers accelerate

from transformers import AutoTokenizer

import transformers

import torch

model = "Technoculture/BioMistral-Hermes-Slerp"

messages = [{"role": "user", "content": "I am feeling sleepy these days"}]

tokenizer = AutoTokenizer.from_pretrained(model)

prompt = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

pipeline = transformers.pipeline(

"text-generation",

model=model,

torch_dtype=torch.float16,

device_map="auto",

)

outputs = pipeline(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

print(outputs[0]["generated_text"])

``` |

smcleod/Smaug-Mixtral-v0.1-GGUF | smcleod | 2024-02-21T20:08:00Z | 13 | 3 | null | [

"gguf",

"smaug",

"mixtral",

"license:other",

"endpoints_compatible",

"region:us",

"conversational"

]

| null | 2024-02-21T02:34:26Z | ---

license: other

license_name: other

license_link: LICENSE

tags:

- smaug

- mixtral

---

GGUF Quantised variants of https://huggingface.co/abacusai/Smaug-Mixtral-v0.1 |

Weni/heading_investigation_e1.0 | Weni | 2024-02-21T19:57:44Z | 1 | 0 | peft | [

"peft",

"safetensors",

"trl",

"sft",

"generated_from_trainer",

"dataset:generator",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:adapter:mistralai/Mistral-7B-v0.1",

"license:apache-2.0",

"region:us"

]

| null | 2024-02-21T19:36:28Z | ---

license: apache-2.0

library_name: peft

tags:

- trl

- sft

- generated_from_trainer

datasets:

- generator

base_model: mistralai/Mistral-7B-v0.1

model-index:

- name: heading_investigation_e1.0

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# heading_investigation_e1.0

This model is a fine-tuned version of [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1) on the generator dataset.

It achieves the following results on the evaluation set:

- Loss: 0.9729

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0002

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- distributed_type: multi-GPU

- num_devices: 2

- gradient_accumulation_steps: 4

- total_train_batch_size: 64

- total_eval_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- training_steps: 2

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- PEFT 0.7.1

- Transformers 4.39.0.dev0

- Pytorch 2.1.0+cu118

- Datasets 2.16.1

- Tokenizers 0.15.1 |

A-Issa-1999/test_1 | A-Issa-1999 | 2024-02-21T19:48:01Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

]

| null | 2024-02-21T19:47:53Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

nes07/mistral-7b-metlife-ia-congreso-balanced-data | nes07 | 2024-02-21T19:47:37Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

]

| null | 2024-02-21T18:10:43Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

lex-hue/LexGPT-V2.5 | lex-hue | 2024-02-21T19:39:14Z | 4 | 0 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

]

| text-generation | 2024-02-05T20:15:32Z | ---

license: mit

---

# Model Card

### Model Name: LexGPT-V2.5

#### Overview:

Purpose: This general-purpose language model serves as a powerful tool for personal exploration and learning in the domain of AI development. Its rapid evolution suggests the potential to surpass the performance of some established state-of-the-art models.

Status: The model remains under active development, with continuous improvements leading to more robust capabilities. The next major iteration is undergoing rigorous testing and is expected to release this weekend (Saturday or Sunday).

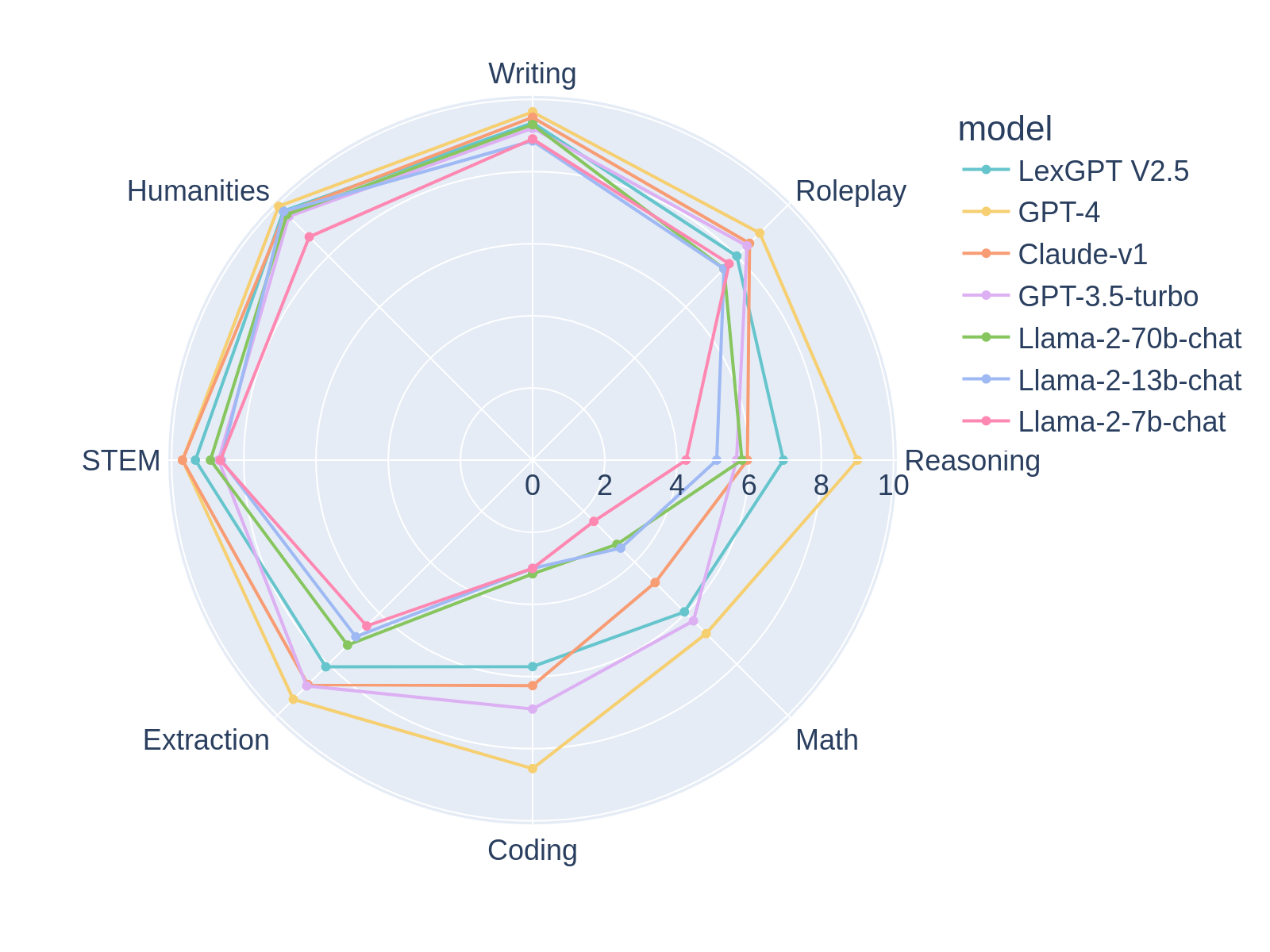

Skills: LexGPT-2.5 demonstrates impressive reasoning abilities, excelling in STEM (Science, Technology, Engineering, and Math) related fields. Surprisingly, it also possesses a capacity for imaginative engagement, making it surprisingly adept at roleplaying scenarios.

Evaluation: MT-BENCH scores indicate LexGPT-2.5's rapid progress. While it has yet to fully surpass GPT-3.5, its current performance is remarkably close and demonstrates significant potential for further improvement.

MT-BENCH SCORE:

###### First turn

| model | turn | score |

|---|---|---|

| gpt-4 | 1 | 8.956250 |

| claude-v1 | 1 | 8.150000 |

| LexGPT-V2.5 | 1 | 8.075949 |

| gpt-3.5-turbo | 1 | 8.075000 |

| vicuna-13b-v1.3 | 1 | 6.812500 |

###### Second turn

| model | turn | score |

|---|---|---|

| gpt-4 | 2 | 9.0250 |

| gpt-3.5-turbo | 2 | 7.8125 |

| LexGPT-V2.5 | 2 | 7.7500 |

| claude-v1 | 2 | 7.6500 |

| vicuna-13b-v1.3 | 2 | 5.9625 |

###### Average

| model | score |

|---|---|

| gpt-4 | 8.990625 |

| gpt-3.5-turbo | 7.943750 |

| LexGPT-V2.5 | 7.920530 |

| claude-v1 | 7.900000 |

| vicuna-13b-v1.3 | 6.387500 |

### Intended Use:

Primary Use: Designed for general language generation tasks and to facilitate the creator's personal explorations in AI development. It offers a valuable sandbox for experimentation and learning.

Potential Additional Uses: The model's STEM proficiency and roleplaying ability suggest it might find applications in educational tools or creative writing assistants.

Potential Risks: As with many powerful language models, there's a potential for the generation of harmful, biased, or offensive content. Careful monitoring and the implementation of appropriate safeguards are essential.

### Ethical Considerations

The model is largely uncensored, emphasizing user responsibility to avoid using it for illegal or intentionally harmful purposes.

Ongoing evaluation during development is crucial for identifying and addressing potential biases in the model's generated outputs. Transparency and regular updates to this model card will foster ethical awareness in its use.

### Additional Notes

LexGPT-2.5 showcases impressive progress, rapidly approaching the capabilities of GPT-3.5 and hinting at significant untapped potential.

The creator welcomes questions, feedback, and collaboration to continue developing this model responsibly. |

juliowaissman/Reinforce-CartPole-v1 | juliowaissman | 2024-02-21T19:36:32Z | 0 | 0 | null | [

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

]

| reinforcement-learning | 2024-02-21T19:36:21Z | ---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-CartPole-v1

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: CartPole-v1

type: CartPole-v1

metrics:

- type: mean_reward

value: 500.00 +/- 0.00

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1** .

To learn to use this model and train yours check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

Danjie/SQLMaster_13b | Danjie | 2024-02-21T19:36:00Z | 0 | 0 | null | [

"safetensors",

"license:mit",

"region:us"

]

| null | 2024-02-10T22:48:55Z | ---

license: mit

---

# SQLMaster

A minimum of 10 GB VRAM is required.

## Colab Example

https://colab.research.google.com/drive/1Nvwie-klMNPPWI4o7Nae4l5spxEX1PaD?usp=sharing

## Install Prerequisite

```bash

!pip install peft

!pip install transformers

!pip install bitsandbytes

!pip install accelerate

```

## Login Using Huggingface Token

```bash

# You need a huggingface token that can access llama2

from huggingface_hub import notebook_login

notebook_login()

```

## Download Model

```python

import torch

from peft import PeftModel, PeftConfig

from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

bnb_config = BitsAndBytesConfig(

load_in_4bit=True,

bnb_4bit_use_double_quant=True,

bnb_4bit_quant_type="nf4",

bnb_4bit_compute_dtype=torch.bfloat16

)

peft_model_id = "Danjie/SQLMaster_13b"

config = PeftConfig.from_pretrained(peft_model_id)

tokenizer = AutoTokenizer.from_pretrained(config.base_model_name_or_path)

model = AutoModelForCausalLM.from_pretrained(config.base_model_name_or_path, device_map='auto', quantization_config=bnb_config)

model.resize_token_embeddings(len(tokenizer) + 1)

# Load the Lora model

model = PeftModel.from_pretrained(model, peft_model_id)

```

## Inference

```python

def create_sql_query(question: str, context: str) -> str:

input = "Question: " + question + "\nContext:" + context + "\nAnswer"

# Encode and move tensor into cuda if applicable.

encoded_input = tokenizer(input, return_tensors='pt')

encoded_input = {k: v.to(device) for k, v in encoded_input.items()}

output = model.generate(**encoded_input, max_new_tokens=256)

response = tokenizer.decode(output[0], skip_special_tokens=True)

response = response[len(input):]

return response

```

## Example

```python

create_sql_query("What is the highest age of users with name Danjie", "CREATE TABLE user (age INTEGER, name STRING)")

``` |

Fhermin/ReinforcePixelCopter | Fhermin | 2024-02-21T19:25:44Z | 0 | 0 | null | [

"Pixelcopter-PLE-v0",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

]

| reinforcement-learning | 2024-02-21T19:24:56Z | ---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: ReinforcePixelCopter

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Pixelcopter-PLE-v0

type: Pixelcopter-PLE-v0

metrics:

- type: mean_reward

value: 36.80 +/- 27.97

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0** .

To learn to use this model and train yours check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

furrutiav/bert_qa_extractor_cockatiel_2022_ulra_by_kmeans_Q_nllf_sub_best_ef_signal_it_136 | furrutiav | 2024-02-21T19:25:18Z | 5 | 0 | transformers | [

"transformers",

"safetensors",

"bert",

"feature-extraction",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

]

| feature-extraction | 2024-02-21T19:24:43Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

baseten/gemma-7b-it-trtllm-3k-1k-64bs | baseten | 2024-02-21T19:25:07Z | 1 | 0 | transformers | [

"transformers",

"endpoints_compatible",

"region:us"

]

| null | 2024-02-21T18:53:04Z | trtllm-build --checkpoint_dir ./trt-ckpt/ --gemm_plugin bfloat16 --gpt_attention_plugin bfloat16 --max_batch_size 64 --max_input_len 3000 --max_output_len 1000 --context_fmha enable --output_dir ./engines

quantized to int8 as per the `config.json` |

Jaimefebe/llama-2-7b-euskara-v1 | Jaimefebe | 2024-02-21T19:15:22Z | 1 | 2 | peft | [

"peft",

"safetensors",

"arxiv:1910.09700",

"base_model:NousResearch/Llama-2-7b-chat-hf",

"base_model:adapter:NousResearch/Llama-2-7b-chat-hf",

"region:us"

]

| null | 2024-02-21T19:15:00Z | ---

library_name: peft

base_model: NousResearch/Llama-2-7b-chat-hf

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: bitsandbytes

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: float16

### Framework versions

- PEFT 0.6.2

|

predibase/dbpedia | predibase | 2024-02-21T19:14:00Z | 1,720 | 8 | peft | [

"peft",

"safetensors",

"text-generation",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:adapter:mistralai/Mistral-7B-v0.1",

"region:us"

]

| text-generation | 2024-02-19T23:16:23Z | ---

library_name: peft

base_model: mistralai/Mistral-7B-v0.1

pipeline_tag: text-generation

---

Description: Topic extraction from a news article and title\

Original dataset: https://huggingface.co/datasets/fancyzhx/dbpedia_14 \

---\

Try querying this adapter for free in Lora Land at https://predibase.com/lora-land! \

The adapter_category is Topic Identification and the name is News Topic Identification (dbpedia)\

---\

Sample input: You are given the title and the body of an article below. Please determine the type of the article.\n### Title: Great White Whale\n\n### Body: Great White Whale is the debut album by the Canadian rock band Secret and Whisper. The album was in the works for about a year and was released on February 12 2008. A music video was shot in Pittsburgh for the album's first single XOXOXO. The album reached number 17 on iTunes's top 100 albums in its first week on sale.\n\n### Article Type: \

---\

Sample output: 11\

---\

Try using this adapter yourself!

```

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mistral-7B-v0.1"

peft_model_id = "predibase/dbpedia"

model = AutoModelForCausalLM.from_pretrained(model_id)

model.load_adapter(peft_model_id)

``` |

predibase/hellaswag | predibase | 2024-02-21T19:13:58Z | 47 | 3 | peft | [

"peft",

"safetensors",

"text-generation",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:adapter:mistralai/Mistral-7B-v0.1",

"region:us"

]

| text-generation | 2024-02-19T19:11:15Z | ---

library_name: peft

base_model: mistralai/Mistral-7B-v0.1

pipeline_tag: text-generation

---

Description: Multiple-choice sentence completion\

Original dataset: https://huggingface.co/datasets/Rowan/hellaswag \

---\

Try querying this adapter for free in Lora Land at https://predibase.com/lora-land! \

The adapter_category is Other and the name is Multiple Choice Sentence Completion (hellaswag)\

---\

Sample input: You are provided with an incomplete passage below as well as 4 endings in quotes and separated by commas, with only one of them being the correct ending. Treat the endings as being labelled 0, 1, 2, 3 in order. Please respond with the number corresponding to the correct ending for the passage.\n\n### Passage: The mother instructs them on how to brush their teeth while laughing. The boy helps his younger sister brush his teeth. she\n\n### Endings: ['shows how to hit the mom and then kiss his dad as well.'

'brushes past the camera, looking better soon after.'

'glows from the center of the camera as a reaction.'

'gets them some water to gargle in their mouths.']\n\n### Correct Ending Number: \

---\

Sample output: 3.0\

---\

Try using this adapter yourself!

```

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mistral-7B-v0.1"

peft_model_id = "predibase/hellaswag"

model = AutoModelForCausalLM.from_pretrained(model_id)

model.load_adapter(peft_model_id)

``` |

predibase/bc5cdr | predibase | 2024-02-21T19:13:58Z | 105 | 1 | peft | [

"peft",

"safetensors",

"text-generation",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:adapter:mistralai/Mistral-7B-v0.1",

"region:us"

]

| text-generation | 2024-02-20T02:59:29Z | ---

library_name: peft

base_model: mistralai/Mistral-7B-v0.1

pipeline_tag: text-generation

---

Description: 1500 PubMed articles with 4409 annotated chemicals, 5818 diseases and 3116 chemical-disease interactions.\

Original dataset: https://huggingface.co/datasets/tner/bc5cdr \

---\

Try querying this adapter for free in Lora Land at https://predibase.com/lora-land! \

The adapter_category is Named Entity Recognition and the name is Chemical and Disease Recognition (bc5cdr)\

---\

Sample input: Your task is a Named Entity Recognition (NER) task. Predict the category of each entity, then place the entity into the list associated with the category in an output JSON payload. Below is an example:

Input: "Naloxone reverses the antihypertensive effect of clonidine ."

Output: {'B-Chemical': ['Naloxone', 'clonidine'], 'B-Disease': [], 'I-Disease': [], 'I-Chemical': []}

Now, complete the task.

Input: "A standardized loading dose of VPA was administered , and venous blood was sampled at 0 , 1 , 2 , 3 , and 4 hours ."

Output: \

---\

Sample output: {'B-Chemical': ['VPA'], 'B-Disease': [], 'I-Disease': [], 'I-Chemical': []}\

---\

Try using this adapter yourself!

```

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mistral-7B-v0.1"

peft_model_id = "predibase/bc5cdr"

model = AutoModelForCausalLM.from_pretrained(model_id)

model.load_adapter(peft_model_id)

``` |

predibase/e2e_nlg | predibase | 2024-02-21T19:13:56Z | 110 | 1 | peft | [

"peft",

"safetensors",

"text-generation",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:adapter:mistralai/Mistral-7B-v0.1",

"region:us"

]

| text-generation | 2024-02-19T19:06:55Z | ---

library_name: peft

base_model: mistralai/Mistral-7B-v0.1

pipeline_tag: text-generation

---

Description: Translation from meaning representation to natural language\

Original dataset: https://huggingface.co/datasets/e2e_nlg \

---\

Try querying this adapter for free in Lora Land at https://predibase.com/lora-land! \

The adapter_category is Structured-to-Text and the name is Structured-to-Text (e2e_nlg)\

---\

Sample input: You are given a meaning representation below. Please translate it into plain English. Here is an example:\n\n### Meaning Representation: name[Blue Spice], eatType[coffee shop], area[city centre]\n\n### Plain English: A coffee shop in the city centre area called Blue Spice.\n\nNow please translate the following meaning representation:\n\n### Meaning Representation: name[Blue Spice], eatType[pub], food[Chinese], area[city centre], familyFriendly[yes], near[Rainbow Vegetarian Café]\n\n### Plain English:\

---\

Sample output: Blue Spice is a pub that serves Chinese food. It is located in the city centre near Rainbow Vegetarian Café.\

---\

Try using this adapter yourself!

```

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mistral-7B-v0.1"

peft_model_id = "predibase/e2e_nlg"

model = AutoModelForCausalLM.from_pretrained(model_id)

model.load_adapter(peft_model_id)

``` |

predibase/drop | predibase | 2024-02-21T19:13:54Z | 30 | 4 | peft | [

"peft",

"safetensors",

"text-generation",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:adapter:mistralai/Mistral-7B-v0.1",

"region:us"

]

| text-generation | 2024-02-19T23:22:39Z | ---

library_name: peft

base_model: mistralai/Mistral-7B-v0.1

pipeline_tag: text-generation

---

Description: Question answering given a passage\

Original dataset: https://huggingface.co/datasets/drop \

---\

Try querying this adapter for free in Lora Land at https://predibase.com/lora-land! \

The adapter_category is Other and the name is Question Answering (drop)\

---\

Sample input: Given a passage, you need to accurately identify and extract relevant spans of text that answer specific questions. Provide concise and coherent responses based on the information present in the passage.\n\n### Passage: Coming off their home win over the Browns, the Ravens flew to Heinz Field for their first road game of the year, as they played a Week 4 MNF duel with the throwback-clad Pittsburgh Steelers. In the first quarter, Baltimore trailed early as Steelers kicker Jeff Reed got a 49-yard field goal. The Ravens responded with kicker Matt Stover getting a 33-yard field goal. Baltimore gained the lead in the second quarter as Stover kicked a 20-yard field goal, while rookie quarterback Joe Flacco completed his first career touchdown pass as he hooked up with TE Daniel Wilcox from 4 yards out. In the third quarter, Pittsburgh took the lead with quarterback Ben Roethlisberger completing a 38-yard TD pass to WR Santonio Holmes, along with LB James Harrison forcing a fumble from Flacco with LB LaMarr Woodley returning the fumble 7 yards for a touchdown. In the fourth quarter, the Steelers increased their lead with Reed getting a 19-yard field goal. Afterwards, the Ravens tied the game with RB Le'Ron McClain getting a 2-yard TD run. However, despite winning the coin toss in overtime, Baltimore was unable to gain ground. In the end, Pittsburgh sealed Baltimore's fate as Reed nailed the game-winning 46-yard field goal.\n### Question: How many more field goals were made in the first half than in the second?\n### Answer:\

---\

Sample output: 1\

---\

Try using this adapter yourself!

```

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mistral-7B-v0.1"

peft_model_id = "predibase/drop"

model = AutoModelForCausalLM.from_pretrained(model_id)

model.load_adapter(peft_model_id)

``` |

predibase/wikisql | predibase | 2024-02-21T19:13:53Z | 31 | 4 | peft | [

"peft",

"safetensors",

"text-generation",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:adapter:mistralai/Mistral-7B-v0.1",

"region:us"

]

| text-generation | 2024-02-19T23:24:39Z | ---

library_name: peft

base_model: mistralai/Mistral-7B-v0.1

pipeline_tag: text-generation

---

Description: SQL generation given a table and question\

Original dataset: https://huggingface.co/datasets/wikisql \

---\

Try querying this adapter for free in Lora Land at https://predibase.com/lora-land! \

The adapter_category is Reasoning and the name is WikiSQL (SQL Generation)\

---\

Sample input: Considering the provided database schema and associated query, produce SQL code to retrieve the answer to the query.\n### Database Schema: {'header': ['Game', 'Date', 'Opponent', 'Score', 'Decision', 'Location/Attendance', 'Record'], 'types': ['real', 'real', 'text', 'text', 'text', 'text', 'text'], 'rows': [array(['77', '1', 'Pittsburgh Penguins', '1-6', 'Brodeur',

'Mellon Arena - 17,132', '47-26-4'], dtype=object), array(['78', '3', 'Tampa Bay Lightning', '4-5 (OT)', 'Brodeur',

'Prudential Center - 17,625', '48-26-4'], dtype=object), array(['79', '4', 'Buffalo Sabres', '3-2', 'Brodeur',

'HSBC Arena - 18,690', '49-26-4'], dtype=object), array(['80', '7', 'Toronto Maple Leafs', '4-1', 'Brodeur',

'Prudential Center - 15,046', '49-27-4'], dtype=object), array(['81', '9', 'Ottawa Senators', '3-2 (SO)', 'Brodeur',

'Scotiabank Place - 20,151', '50-27-4'], dtype=object), array(['82', '11', 'Carolina Hurricanes', '2-3', 'Brodeur',

'Prudential Center - 17,625', '51-27-4'], dtype=object)]}\n### Query: What is Score, when Game is greater than 78, and when Date is "4"?\n### SQL: \

---\

Sample output: SELECT Score FROM table WHERE Game > 78 AND Date = 4\

---\

Try using this adapter yourself!

```

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "mistralai/Mistral-7B-v0.1"

peft_model_id = "predibase/wikisql"

model = AutoModelForCausalLM.from_pretrained(model_id)

model.load_adapter(peft_model_id)

``` |

Jingni/my_first_food_model | Jingni | 2024-02-21T18:44:51Z | 7 | 0 | transformers | [

"transformers",

"safetensors",

"vit",

"image-classification",

"generated_from_trainer",

"base_model:google/vit-base-patch16-224-in21k",

"base_model:finetune:google/vit-base-patch16-224-in21k",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| image-classification | 2024-02-21T16:10:27Z | ---

license: apache-2.0

base_model: google/vit-base-patch16-224-in21k

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: my_first_food_model

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# my_first_food_model

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.9013

- Accuracy: 0.965

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 3.584 | 1.0 | 25 | 2.8238 | 0.9475 |

| 2.2086 | 2.0 | 50 | 2.0773 | 0.95 |

| 1.941 | 3.0 | 75 | 1.9013 | 0.965 |

### Framework versions

- Transformers 4.38.0

- Pytorch 2.1.2

- Datasets 2.17.1

- Tokenizers 0.15.2

|

navneetDS/en_pipeline | navneetDS | 2024-02-21T18:39:07Z | 0 | 0 | spacy | [

"spacy",

"token-classification",

"en",

"model-index",

"region:us"

]

| token-classification | 2024-02-21T18:39:04Z | ---

tags:

- spacy

- token-classification

language:

- en

model-index:

- name: en_pipeline

results:

- task:

name: NER

type: token-classification

metrics:

- name: NER Precision

type: precision

value: 1.0

- name: NER Recall

type: recall

value: 1.0

- name: NER F Score

type: f_score

value: 1.0

---

| Feature | Description |

| --- | --- |

| **Name** | `en_pipeline` |

| **Version** | `0.0.0` |

| **spaCy** | `>=3.6.1,<3.7.0` |

| **Default Pipeline** | `tok2vec`, `ner` |

| **Components** | `tok2vec`, `ner` |

| **Vectors** | 0 keys, 0 unique vectors (0 dimensions) |

| **Sources** | n/a |

| **License** | n/a |

| **Author** | [n/a]() |

### Label Scheme

<details>

<summary>View label scheme (1 labels for 1 components)</summary>

| Component | Labels |

| --- | --- |

| **`ner`** | `URL` |

</details>

### Accuracy

| Type | Score |

| --- | --- |

| `ENTS_F` | 100.00 |

| `ENTS_P` | 100.00 |

| `ENTS_R` | 100.00 |

| `TOK2VEC_LOSS` | 0.00 |

| `NER_LOSS` | 0.00 | |

furrutiav/bert_qa_extractor_cockatiel_2022_ulra_by_question_type_sub_best_ef_signal_it_149 | furrutiav | 2024-02-21T18:34:56Z | 5 | 0 | transformers | [

"transformers",

"safetensors",

"bert",

"feature-extraction",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

]

| feature-extraction | 2024-02-21T18:34:24Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]