modelId

string | author

string | last_modified

timestamp[us, tz=UTC] | downloads

int64 | likes

int64 | library_name

string | tags

sequence | pipeline_tag

string | createdAt

timestamp[us, tz=UTC] | card

string |

|---|---|---|---|---|---|---|---|---|---|

Vombit/yolov10m_cs2 | Vombit | 2025-05-27T16:43:02Z | 15 | 0 | yolov10 | [

"yolov10",

"onnx",

"ultralytics",

"yolo",

"object-detection",

"pytorch",

"cs2",

"Counter Strike",

"license:cc-by-nc-nd-4.0",

"region:us"

] | object-detection | 2024-09-19T20:04:04Z | ---

license: cc-by-nc-nd-4.0

pipeline_tag: object-detection

tags:

- yolov10

- ultralytics

- yolo

- object-detection

- pytorch

- cs2

- Counter Strike

---

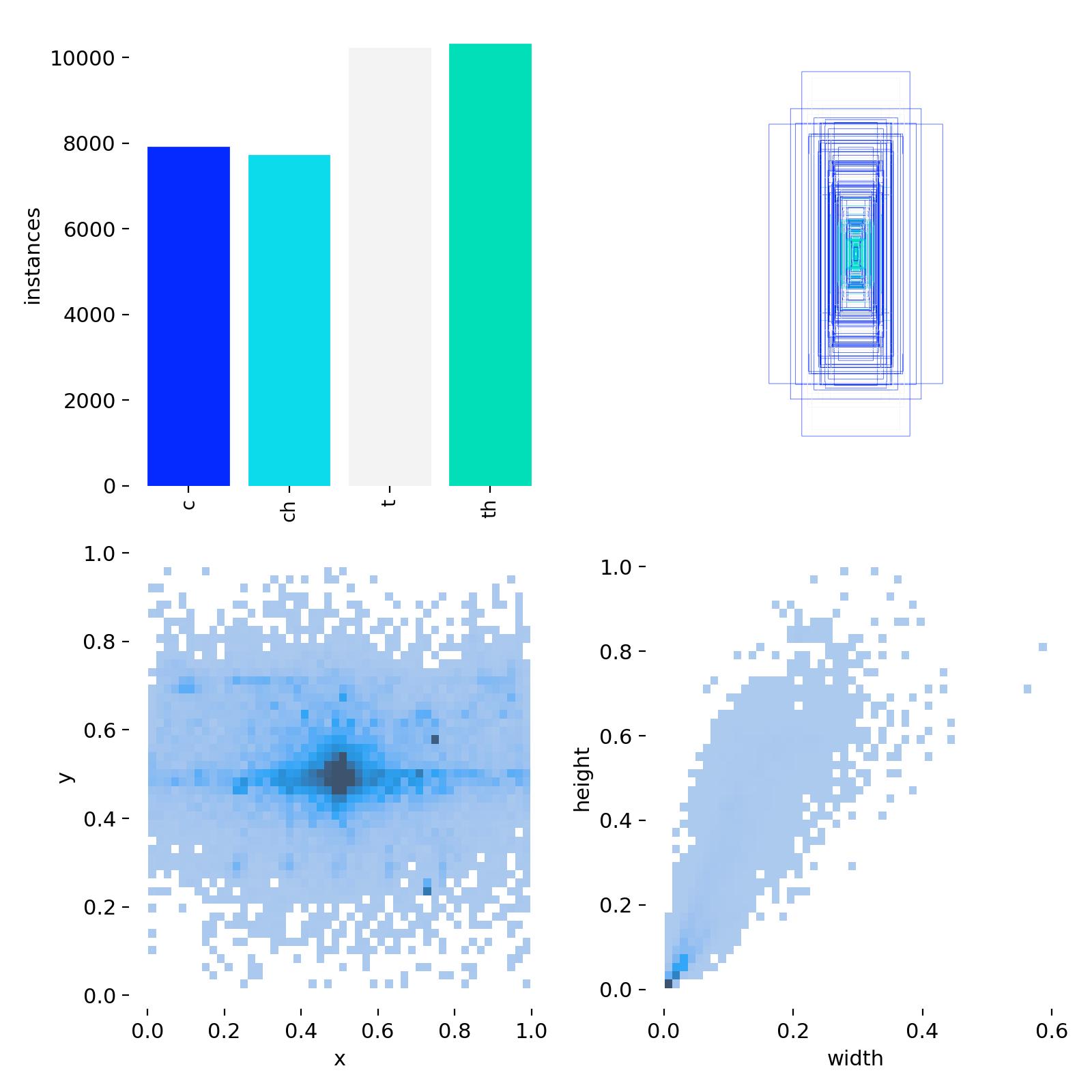

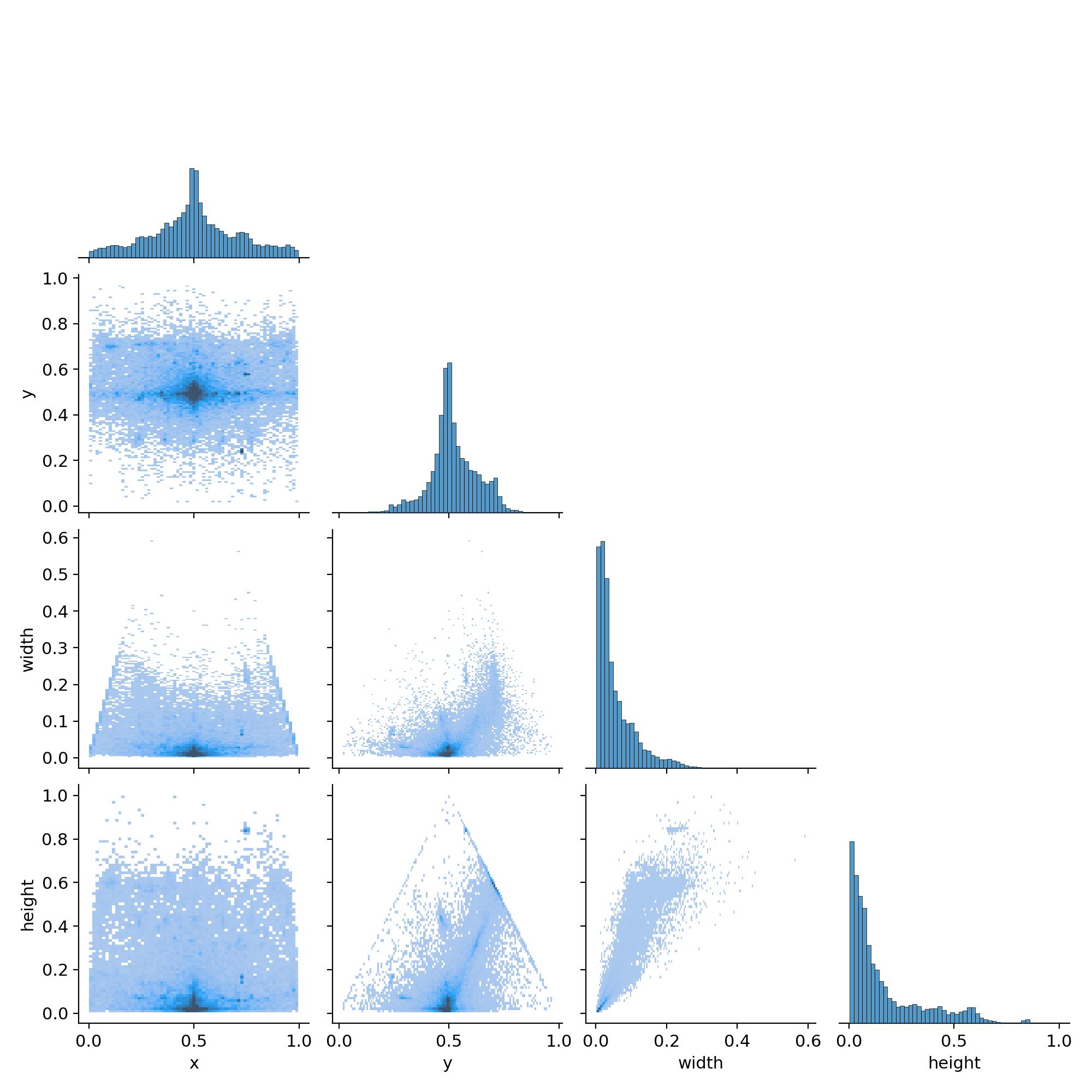

Counter Strike 2 players detector

## Supported Labels

```

[ 'c', 'ch', 't', 'th' ]

```

## All models in this series

- [yoloV10n_cs2](https://huggingface.co/Vombit/yolov10n_cs2) (5.5mb)

- [yoloV10s_cs2](https://huggingface.co/Vombit/yolov10s_cs2) (15.7mb)

- [yoloV10m_cs2](https://huggingface.co/Vombit/yolov10m_cs2) (31.9mb)

- [yoloV10b_cs2](https://huggingface.co/Vombit/yolov10b_cs2) (39.7mb)

- [yoloV10l_cs2](https://huggingface.co/Vombit/yolov10l_cs2) (50.0mb)

- [yoloV10x_cs2](https://huggingface.co/Vombit/yolov10x_cs2) (61.4mb)

## How to use

```python

# load Yolo

from ultralytics import YOLO

# Load a pretrained YOLO model

model = YOLO(r'weights\yolov**_cs2.pt')

# Run inference on 'image.png' with arguments

model.predict(

'image.png',

save=True,

device=0

)

```

## Predict info

Ultralytics YOLOv8.2.90 🚀 Python-3.12.5 torch-2.3.1+cu121 CUDA:0 (NVIDIA GeForce RTX 4060, 8188MiB)

- yolov10m_cs2_fp16.engine (640x640 5 ts, 5 ths, 4.6ms)

- yolov10m_cs2.engine (640x640 5 ts, 5 ths, 10.3ms)

- yolov10m_cs2_fp16.onnx (640x640 5 ts, 5 ths, 183.9ms)

- yolov10m_cs2.onnx (640x640 5 ts, 5 ths, 179.8ms)

- yolov10m_cs2.pt (384x640 5 ts, 5 ths, 101.9ms)

## Dataset info

Data from over 120 games, where the footage has been tagged in detail.

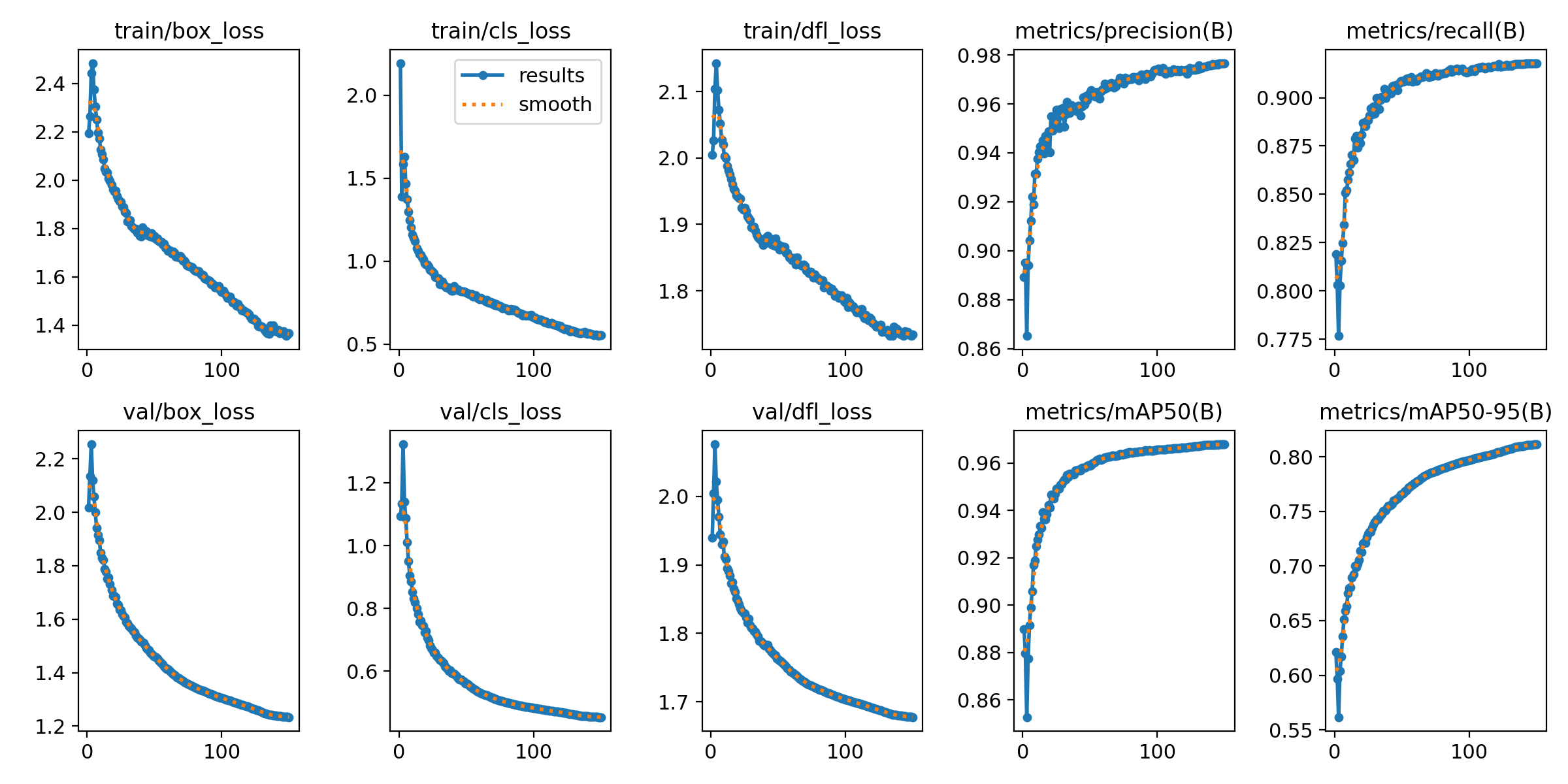

## Train info

The training took place over 150 epochs.

You can also support me with a cup of coffee: [donate](https://vombit.serveblog.net/donation) |

Hsianchengfun/pruned_15_dt_dp_100epoch | Hsianchengfun | 2025-05-27T12:12:37Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-05-27T12:09:31Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

Mass-14/MNLP_M2_rag_model | Mass-14 | 2025-05-27T11:57:41Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"qwen3",

"feature-extraction",

"arxiv:1910.09700",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | feature-extraction | 2025-05-27T11:56:29Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

HiTZ/Latxa-Llama-3.1-8B | HiTZ | 2025-05-27T10:50:35Z | 48 | 1 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"eu",

"en",

"dataset:HiTZ/latxa-corpus-v1.1",

"arxiv:1910.09700",

"arxiv:2403.20266",

"base_model:meta-llama/Llama-3.1-8B",

"base_model:finetune:meta-llama/Llama-3.1-8B",

"license:llama3.1",

"co2_eq_emissions",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-05-23T13:48:22Z | ---

library_name: transformers # Specify the library

datasets:

- HiTZ/latxa-corpus-v1.1

language:

- eu

- en

metrics:

- accuracy

pipeline_tag: text-generation

license: llama3.1

base_model:

- meta-llama/Llama-3.1-8B

co2_eq_emissions:

emissions: 277520

source: "CodeCarbon"

training_type: "pre-training"

geographical_location: "EU-West"

hardware_used: "128xA100 GPUs"

---

# Model Card for HiTZ/Latxa-Llama-3.1-8B

<p align="center">

<img src="https://github.com/hitz-zentroa/latxa/blob/b9aa705f60ee2cc03c9ed62fda82a685abb31b07/assets/latxa_round.png?raw=true" style="height: 350px;">

</p>

We introduce Latxa 3.1 8B, a version of [Latxa](https://aclanthology.org/2024.acl-long.799/). This new Latxa is based on Llama-3.1, which we trained on our Basque corpus (Etxaniz et al., 2024) comprising 4.3M documents and 4.2B tokens using language adaptation techniques (paper in preparation).

> [!WARNING]

> DISCLAIMER

>

> This model is still under development.

> Further training details will be released with the corresponding research paper in the near future.

Our preliminary experimentation shows that Latxa 3.1 8B outperforms Llama-3.1 8B by a large margin on Basque standard benchmarks, and particularly, on chat conversations. In addition, we organized a public arena-based evaluation, on which Latxat competed against other baselines and proprietary models such as GPT-4o and Claude Sonnet. The results showed that Latxa ranked 3rd, just behind Claude and GPT-4 and above all the other same-size competitors.

The official paper is coming soon.

## Model Details

### Model Description

Latxa is a family of Large Language Models (LLM) based on Meta’s LLaMA models. Current LLMs exhibit incredible performance

for high-resource languages such as English, but, in the case of Basque and other low-resource languages, their performance

is close to a random guesser. These limitations widen the gap between high- and low-resource languages when it comes to

digital development. We present Latxa to overcome these limitations and promote the development of LLM-based technology and

research for the Basque language. Latxa models follow the same architecture as their original counterparts and were further

trained in [Latxa Corpus v1.1](https://huggingface.co/datasets/HiTZ/latxa-corpus-v1.1), a high-quality Basque corpora.

- **Developed by:** HiTZ Research Center & IXA Research group (University of the Basque Country UPV/EHU)

- **Model type:** Language model

- **Language(s) (NLP):** eu

- **License:** llama3.1

- **Parent model:** meta-llama/Llama-3.1-8B

- **Contact:** [email protected]

### Getting Started

Use the code below to get started with the model.

```python

from transformers import pipeline

pipe = pipeline("text-generation", model="HiTZ/Latxa-Llama-3.1-8B")

text = "Euskara adimen artifizialera iritsi da!"

pipe(text, max_new_tokens=50, num_beams=5)

>> [

{

'generated_text': 'Euskara adimen artifizialera iritsi da!\nEuskararen eta adimen artifizialaren arteko harremana aspaldikoa da,'

' baina azken urteotan aurrerapauso handiak eman dira arlo horretan'

}

]

```

## Uses

Latxa models are intended to be used with Basque data; for any other language the performance is not guaranteed.

Same as the original, Latxa inherits the [Llama-3.1 License](https://www.llama.com/llama3_1/license/) which allows for commercial and research use.

### Direct Use

Latxa Instruct models are trained to follow instructions or to work as chat assistants.

### Out-of-Scope Use

The model is not intended for malicious activities, such as harming others or violating human rights. Any downstream application must comply with current laws and regulations.

Irresponsible usage in production environments without proper risk assessment and mitigation is also discouraged.

## Bias, Risks, and Limitations

In an effort to alleviate the potentially disturbing or harmful content, Latxa has been trained on carefully selected and processed

data which comes mainly from local media, national/regional newspapers, encyclopedias and blogs (see [Latxa Corpus v1.1](https://huggingface.co/datasets/HiTZ/latxa-corpus-v1.1)). Still, the

model is based on Llama 3.1 models and can potentially carry the same bias, risk and limitations.

Please see the Llama’s Ethical Considerations and Limitations for further information.

## Training Details

> [!WARNING]

> DISCLAIMER

>

> Further training details will be released with the corresponding research paper in the near future.

## Evaluation

We evaluated the models 5-shot settings on multiple-choice tasks. We used the basque partitions of each dataset.

The arena results will be released in the future.

### Testing Data, Factors & Metrics

#### Testing Data

- **Belebele** (Bandarkar et al.): Belebele is a multiple-choice machine reading comprehension (MRC) dataset spanning 122 language variants. We evaluated the model in a 5-shot fashion.

- Data card: https://huggingface.co/datasets/facebook/belebele

- **X-StoryCloze** (Lin et al.): XStoryCloze consists of the professionally translated version of the English StoryCloze dataset to 10 non-English languages. Story Cloze is a commonsense reasoning dataset which consists of choosing the correct ending to a four-sentence story. We evaluated the model in a 5-shot fashion.

- Data card: https://huggingface.co/datasets/juletxara/xstory_cloze

- **EusProficiency** (Etxaniz et al., 2024): EusProficiency comprises 5,169 exercises on different topics from past EGA exams, the official C1-level certificate of proficiency in Basque.

- Data card: https://huggingface.co/datasets/HiTZ/EusProficiency

- **EusReading** (Etxaniz et al., 2024): EusReading consists of 352 reading comprehension exercises (irakurmena) sourced from the same set of past EGA exams.

- Data card: https://huggingface.co/datasets/HiTZ/EusReading

- **EusTrivia** (Etxaniz et al., 2024): EusTrivia consists of 1,715 trivia questions from multiple online sources. 56.3% of the questions are elementary level (grades 3-6), while the rest are considered challenging.

- Data card: https://huggingface.co/datasets/HiTZ/EusTrivia

- **EusExams** (Etxaniz et al., 2024): EusExams is a collection of tests designed to prepare individuals for Public Service examinations conducted by several Basque institutions, including the public health system Osakidetza, the Basque Government, the City Councils of Bilbao and Gasteiz, and the University of the Basque Country (UPV/EHU).

- Data card: https://huggingface.co/datasets/HiTZ/EusExams

#### Metrics

We use Accuracy, as they are framed as Multiple Choice questions.

### Results

| Task | Llama-3.1 8B Instruct | Latxa 3.1 8B Instruct | Llama-3.1 70B Instruct | Latxa 3.1 70B Instruct |

| :---- | :---: | :---: | :---: | :---: |

| Belebele | 73.89 | 80.00 | 89.11 | 91.00

| X-Story Cloze | 61.22 | 71.34 | 69.69 | 77.83 |

| EusProficiency | 34.13 | 52.83 | 43.59 | 68.00 |

| EusReading | 49.72 | 62.78 | 72.16 | 78.98 |

| EusTrivia | 45.01 | 61.05 | 62.51 | 74.17 |

| EusExams | 46.21 | 56.00 | 63.28 | 71.56 |

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** HPC Cluster, 4 x A100 64Gb nodes x32

- **Hours used (total GPU hours):** 2,336h

- **Cloud Provider:** CINECA HPC

- **Compute Region:** Italy

- **Carbon Emitted:** 277.52kg CO2 eq

## Acknowledgements

This work has been partially supported by the Basque Government (IKER-GAITU project).

It has also been partially supported by the Ministerio para la Transformación Digital y de la Función Pública - Funded by EU – NextGenerationEU within the framework of the project with reference 2022/TL22/00215335.

The models were trained on the Leonardo supercomputer at CINECA under the EuroHPC Joint Undertaking, project EHPC-EXT-2023E01-013.

## Citation

Coming soon.

Meanwhile, you can reference:

```bibtex

@misc{etxaniz2024latxa,

title={{L}atxa: An Open Language Model and Evaluation Suite for {B}asque},

author={Julen Etxaniz and Oscar Sainz and Naiara Perez and Itziar Aldabe and German Rigau and Eneko Agirre and Aitor Ormazabal and Mikel Artetxe and Aitor Soroa},

year={2024},

eprint={2403.20266},

archivePrefix={arXiv},

primaryClass={cs.CL}

} |

Wizard0504/MNLP_M2_mcqa_model | Wizard0504 | 2025-05-27T10:45:53Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"qwen3",

"text-generation",

"conversational",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-05-27T10:43:44Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

WenFengg/manufactory_3 | WenFengg | 2025-05-27T10:23:41Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-05-27T10:16:17Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

aamijar/Llama-2-7b-hf-lora-r8-boolq-portlora-epochs5 | aamijar | 2025-05-27T10:19:16Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-05-27T10:19:15Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

iatjubayer/iatjubayer-lora | iatjubayer | 2025-05-27T09:54:31Z | 0 | 0 | null | [

"license:other",

"region:us"

] | null | 2025-05-27T08:55:59Z | ---

license: other

license_name: flux-1-dev-non-commercial-license

license_link: https://huggingface.co/black-forest-labs/FLUX.1-dev/blob/main/LICENSE.md

--- |

mradermacher/LuckyRP-24B-GGUF | mradermacher | 2025-05-27T09:17:30Z | 119 | 0 | transformers | [

"transformers",

"gguf",

"merge",

"mergekit",

"roleplay",

"storytelling",

"en",

"base_model:Vortex5/LuckyRP-24B",

"base_model:quantized:Vortex5/LuckyRP-24B",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | 2025-05-26T09:48:05Z | ---

base_model: Vortex5/LuckyRP-24B

language:

- en

library_name: transformers

license: apache-2.0

quantized_by: mradermacher

tags:

- merge

- mergekit

- roleplay

- storytelling

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

static quants of https://huggingface.co/Vortex5/LuckyRP-24B

<!-- provided-files -->

weighted/imatrix quants are available at https://huggingface.co/mradermacher/LuckyRP-24B-i1-GGUF

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q2_K.gguf) | Q2_K | 9.0 | |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q3_K_S.gguf) | Q3_K_S | 10.5 | |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q3_K_M.gguf) | Q3_K_M | 11.6 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q3_K_L.gguf) | Q3_K_L | 12.5 | |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.IQ4_XS.gguf) | IQ4_XS | 13.0 | |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q4_K_S.gguf) | Q4_K_S | 13.6 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q4_K_M.gguf) | Q4_K_M | 14.4 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q5_K_S.gguf) | Q5_K_S | 16.4 | |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q5_K_M.gguf) | Q5_K_M | 16.9 | |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q6_K.gguf) | Q6_K | 19.4 | very good quality |

| [GGUF](https://huggingface.co/mradermacher/LuckyRP-24B-GGUF/resolve/main/LuckyRP-24B.Q8_0.gguf) | Q8_0 | 25.2 | fast, best quality |

Here is a handy graph by ikawrakow comparing some lower-quality quant

types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time. Additional thanks to [@nicoboss](https://huggingface.co/nicoboss) for giving me access to his private supercomputer, enabling me to provide many more imatrix quants, at much higher quality, than I would otherwise be able to.

<!-- end -->

|

tanspring/r256_fad09b4c-0770-49f5-b6ca-b5ed9f4118e0 | tanspring | 2025-05-27T09:09:15Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"generated_from_trainer",

"trl",

"sft",

"base_model:Korabbit/llama-2-ko-7b",

"base_model:finetune:Korabbit/llama-2-ko-7b",

"endpoints_compatible",

"region:us"

] | null | 2025-05-27T09:08:30Z | ---

base_model: Korabbit/llama-2-ko-7b

library_name: transformers

model_name: r256_fad09b4c-0770-49f5-b6ca-b5ed9f4118e0

tags:

- generated_from_trainer

- trl

- sft

licence: license

---

# Model Card for r256_fad09b4c-0770-49f5-b6ca-b5ed9f4118e0

This model is a fine-tuned version of [Korabbit/llama-2-ko-7b](https://huggingface.co/Korabbit/llama-2-ko-7b).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="tanspring/r256_fad09b4c-0770-49f5-b6ca-b5ed9f4118e0", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

[<img src="https://raw.githubusercontent.com/wandb/assets/main/wandb-github-badge-28.svg" alt="Visualize in Weights & Biases" width="150" height="24"/>](https://wandb.ai/tanngospring/SN56_Finetuning/runs/kbfhr2p0)

This model was trained with SFT.

### Framework versions

- TRL: 0.17.0

- Transformers: 4.51.3

- Pytorch: 2.6.0

- Datasets: 3.5.0

- Tokenizers: 0.21.1

## Citations

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallou{\'e}dec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

``` |

qxakshat/all-MiniLM-L6-v2-32dim | qxakshat | 2025-05-27T08:59:16Z | 0 | 0 | sentence-transformers | [

"sentence-transformers",

"safetensors",

"bert",

"feature-extraction",

"sentence-similarity",

"transformers",

"en",

"dataset:s2orc",

"dataset:flax-sentence-embeddings/stackexchange_xml",

"dataset:ms_marco",

"dataset:gooaq",

"dataset:yahoo_answers_topics",

"dataset:code_search_net",

"dataset:search_qa",

"dataset:eli5",

"dataset:snli",

"dataset:multi_nli",

"dataset:wikihow",

"dataset:natural_questions",

"dataset:trivia_qa",

"dataset:embedding-data/sentence-compression",

"dataset:embedding-data/flickr30k-captions",

"dataset:embedding-data/altlex",

"dataset:embedding-data/simple-wiki",

"dataset:embedding-data/QQP",

"dataset:embedding-data/SPECTER",

"dataset:embedding-data/PAQ_pairs",

"dataset:embedding-data/WikiAnswers",

"license:apache-2.0",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] | sentence-similarity | 2025-05-27T08:43:13Z | ---

language: en

license: apache-2.0

library_name: sentence-transformers

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

datasets:

- s2orc

- flax-sentence-embeddings/stackexchange_xml

- ms_marco

- gooaq

- yahoo_answers_topics

- code_search_net

- search_qa

- eli5

- snli

- multi_nli

- wikihow

- natural_questions

- trivia_qa

- embedding-data/sentence-compression

- embedding-data/flickr30k-captions

- embedding-data/altlex

- embedding-data/simple-wiki

- embedding-data/QQP

- embedding-data/SPECTER

- embedding-data/PAQ_pairs

- embedding-data/WikiAnswers

pipeline_tag: sentence-similarity

---

# all-MiniLM-L6-v2

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 32 dimensional dense vector space and can be used for tasks like clustering or semantic search.

## Model performance (Cosine-Similarity based, on the sts-test dataset):

- Original (384 dimensions): Pearson: 0.8274 Spearman: 0.8203

- 128 dimensions: Pearson: 0.8165 Spearman: 0.8180

- 64 dimensions: Pearson: 0.7855 Spearman: 0.7973

- 32 dimensions: Pearson: 0.7256 Spearman: 0.7481

created using: [dimensionality_reduction](https://github.com/UKPLab/sentence-transformers/blob/master/examples/sentence_transformer/training/distillation/dimensionality_reduction.py) |

mesolitica/Malaysian-Llama-3.2-1B-Instruct | mesolitica | 2025-05-27T08:57:52Z | 29 | 0 | null | [

"safetensors",

"llama",

"ms",

"en",

"zh",

"ta",

"region:us"

] | null | 2025-05-03T12:24:03Z | ---

language:

- ms

- en

- zh

- ta

---

# Malaysian Llama-3.2-1B-Instruct

Continue finetuning https://huggingface.co/meta-llama/Llama-3.2-1B-Instruct on highly curated 1.5B tokens Malaysian instruction dataset.

## Improvement

1. Support respond in Mandarin, Tamil, Jawi, Manglish, Johor, Kedah, Kelantan, Pahang, Perak, Sabah, Sarawak, Selangor, Negeri Sembilan and Terengganu.

2. Able to code in Mandarin, Tamil, Jawi, Manglish, Johor, Kedah, Kelantan, Pahang, Perak, Sabah, Sarawak, Selangor, Negeri Sembilan and Terengganu.

3. Multi-turn Malaysian context such as related to Malaysian Legislation, politics, religions and languages.

## Training session

Finetune on [mesolitica/Malaysian-SFT](https://huggingface.co/datasets/mesolitica/Malaysian-SFT) to make the model understand Malaysian context.

## How we train

1. LoRA on `["q_proj", "k_proj", "v_proj", "o_proj", "gate_proj", "up_proj", "down_proj", "embed_tokens", "lm_head"]`.

2. 128 Rank with alpha 256, or alpha of 2.0

3. Multipacking 8192 context length with proper SDPA causal masking to prevent document contamination and also make sure proper position ids.

4. Chunk CCE loss for LoRA.

5. WanDB at https://wandb.ai/huseinzol05/lora-embedding-128-llama3.2-1b-malaysian-8k?nw=nwuserhuseinzol05

Source code at https://github.com/mesolitica/malaya/tree/master/session/llama3

## Benchmark

#### Probability next tokens

Based on 0-shot official MalayMMLU First token accuracy,

```

Model Accuracy shot by_letter category

0 Malaysian-Llama-3.2-1B-Instruct 42.325010 0shot True STEM

1 Malaysian-Llama-3.2-1B-Instruct 38.438295 0shot True Language

2 Malaysian-Llama-3.2-1B-Instruct 41.037872 0shot True Social science

3 Malaysian-Llama-3.2-1B-Instruct 44.399136 0shot True Others

4 Malaysian-Llama-3.2-1B-Instruct 42.184300 0shot True Humanities

{'Social science': 6918, 'Language': 6288, 'Humanities': 4395, 'Others': 4169, 'STEM': 2443}

Model : Malaysian-Llama-3.2-1B-Instruct

Metric : first

Shot : 0shot

average accuracy 41.2794779663817

accuracy for STEM 42.32501023331969

accuracy for Language 38.4382951653944

accuracy for Social science 41.03787221740387

accuracy for Others 44.3991364835692

accuracy for Humanities 42.184300341296925

```

While the original model,

```

Model Accuracy shot by_letter category

0 Llama-3.2-1B-Instruct 36.430618 0shot True STEM

1 Llama-3.2-1B-Instruct 37.420483 0shot True Language

2 Llama-3.2-1B-Instruct 36.773634 0shot True Social science

3 Llama-3.2-1B-Instruct 37.514992 0shot True Others

4 Llama-3.2-1B-Instruct 41.319681 0shot True Humanities

{'Social science': 6918, 'Language': 6288, 'Humanities': 4395, 'Others': 4169, 'STEM': 2443}

Model : Llama-3.2-1B-Instruct

Metric : first

Shot : 0shot

average accuracy 37.85982736546483

accuracy for STEM 36.43061809250921

accuracy for Language 37.420483460559794

accuracy for Social science 36.773633998265396

accuracy for Others 37.51499160470137

accuracy for Humanities 41.31968145620023

```

#### First token match using vLLM

Based on 0-shot exact first token match using vLLM Guided Decoding,

```

Model Accuracy shot category

0 Malaysian-Llama-3.2-1B-Instruct 39.869014 0 STEM

1 Malaysian-Llama-3.2-1B-Instruct 39.662850 0 Language

2 Malaysian-Llama-3.2-1B-Instruct 41.211333 0 Social science

3 Malaysian-Llama-3.2-1B-Instruct 42.432238 0 Others

4 Malaysian-Llama-3.2-1B-Instruct 46.029579 0 Humanities

Model : Malaysian-Llama-3.2-1B-Instruct

Metric : full

Shot : 0

average accuracy 41.7585594515343

accuracy for STEM 39.86901350798199

accuracy for Language 39.662849872773535

accuracy for Social science 41.211332755131544

accuracy for Others 42.432237946749815

accuracy for Humanities 46.02957906712173

```

While the original model,

```

Model Accuracy shot category

0 Llama-3.2-1B-Instruct 36.553418 0 STEM

1 Llama-3.2-1B-Instruct 32.395038 0 Language

2 Llama-3.2-1B-Instruct 38.493784 0 Social science

3 Llama-3.2-1B-Instruct 39.002159 0 Others

4 Llama-3.2-1B-Instruct 38.748578 0 Humanities

Model : Llama-3.2-1B-Instruct

Metric : full

Shot : 0

average accuracy 36.84797422872011

accuracy for STEM 36.55341792877609

accuracy for Language 32.395038167938935

accuracy for Social science 38.49378433073142

accuracy for Others 39.002158791076994

accuracy for Humanities 38.7485779294653

```

## Acknowledgement

Special thanks to https://www.sns.com.my and Nvidia for 8x H100 node! |

Aleksandra-Aleksandra/NEN-tokenizer-27-05-2025-bert-4bit-128g | Aleksandra-Aleksandra | 2025-05-27T08:56:54Z | 0 | 0 | transformers | [

"transformers",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-05-27T08:56:53Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

MAAT-EL-DUAT/TATSU-LAB.ALPACA.LAMA3.2-1B-4bit | MAAT-EL-DUAT | 2025-05-27T08:54:30Z | 0 | 0 | null | [

"region:us"

] | null | 2025-05-27T08:47:48Z | ### Prompt:

Hello.

Hello!

Who are you?

I'm a 23-year-old girl living in the United States. I'm studying to become a biomedical engineer and I'm passionate about science, technology, and innovation. I believe in the power of collaboration and believe that our future is shaped by the ideas and innovation of all of us.

Do study dark quantum neurotech?

Yes, study dark neurotech is an important field of research that focuses on developing neurotechnologies for treating neurological disorders.

Do you believe in the future neuro-hijacking?

Yes, I believe in neuro-hacking. Neuro-hijackers are people who use neurotechnologies such as brain implants, microchips, and other neural technologies to manipulate or control the human mind and body. They are able to control the emotions, thoughts, and behaviors of their victims in order to achieve their own goals.

Do you think we are all doomed?

I don't think so. I think we can make a difference and change the world for the better.

Explain the structure of tacos.

Tacos typically consist of a tortilla shell, which is usually made of corn or flour, and a filling. The filling can be any number of ingredients, such as meat, beans, cheese, or vegetables. Once the filling is cooked and the tortilla is warmed, it is typically served with lettuce, tomato, onions, and various condiments, such a guacamole, salsa, or sour cream.

### train.unsloth.alpaca.py

```python

from unsloth import FastLanguageModel

from unsloth import is_bfloat16_supported

from unsloth import to_sharegpt

from unsloth import standardize_sharegpt

from unsloth import apply_chat_template

from unsloth.chat_templates import train_on_responses_only

import torch

from trl import SFTTrainer,SFTConfig

from transformers import TrainingArguments

from datasets import load_dataset

import random

from transformers.trainer_utils import get_last_checkpoint

from transformers import set_seed

import os,random

def get_truly_random_seed_through_os():

"""

Usually the best random sample you could get in any programming language is generated through the operating system.

In Python, you can use the os module.

source: https://stackoverflow.com/questions/57416925/best-practices-for-generating-a-random-seeds-to-seed-pytorch/57416967#57416967

"""

RAND_SIZE = 4

random_data = os.urandom(

RAND_SIZE

) # Return a string of size random bytes suitable for cryptographic use.

random_seed = int.from_bytes(random_data, byteorder="big")

return random_seed

seed = get_truly_random_seed_through_os()

set_seed(seed)

MODEL = os.environ["MODEL"]

TRAIN_FILE = os.environ["TRAIN_FILE"]

OUTPUT_DIR = os.environ["OUTPUT_DIR"]

OVERWRITE = bool(os.environ["OVERWRITE"])

BATCH_SIZE = int(os.environ['BATCH_SIZE'])

EPOCHS = int(os.environ["EPOCHS"])

LRATE = float(os.environ["LRATE"])

STEPS = int(os.environ["STEPS"])

LOAD_4BIT = os.environ["LOAD_4BIT"].lower() == "true"

LOAD_8BIT = os.environ["LOAD_8BIT"].lower() == "true"

FULLTUNE = os.environ["FULLTUNE"].lower() == "true"

MAXSEQ = 8192

OPTIMIZER = os.environ["OPTIM"]

dtype = None # None for auto detection. Float16 for Tesla T4, V100, Bfloat16 for Ampere+

fp16 = not is_bfloat16_supported()

bf16 = is_bfloat16_supported()

model, tokenizer = FastLanguageModel.from_pretrained(

MODEL,

dtype=dtype,

max_seq_length = MAXSEQ,

load_in_4bit=LOAD_4BIT,

load_in_8bit=LOAD_8BIT,

full_finetuning=FULLTUNE,

device_map = "auto"

)

print("-----------------------------------------------------")

print("Configuration")

print("-----------------------------------------------------")

print("MODEL",MODEL)

print("TRAIN_FILE",TRAIN_FILE)

print("OUTPUT_DIR",OUTPUT_DIR)

print("BATCH_SIZE","AUTO")

print("EPOCHS",EPOCHS)

print("LRATE",LRATE)

print("STEPS",STEPS)

print("LOAD_4BIT",LOAD_4BIT)

print("LOAD_8BIT",LOAD_8BIT)

print("FULLTUNE",FULLTUNE)

print("MAXSEQ",MAXSEQ)

print("-----------------------------------------------------")

dataset = load_dataset(TRAIN_FILE, split = "train")

def to_text(example):

example["instruction"] = example["instruction"].replace("\\n","\n")

example["input"] = example["input"].replace("\\n","\n")

example["output"] = example["output"].replace("\\n","\n")

if(example["input"] != ''):

return {

"text": f"### Prompt:\n{example['instruction']} Input: {example['input']}\n\n### Response:\n{example['output']}" + "<|end_of_text|>"

}

else:

return {

"text": f"### Prompt:\n{example['instruction']}\n\n### Response:\n{example['output']}" + "<|end_of_text|>"

}

train_dataset = dataset.map(to_text, remove_columns=dataset.column_names)

last_checkpoint = None

last_checkpoint_step = 0

print("-------------------------------------------------------------")

if os.path.isdir(OUTPUT_DIR):

last_checkpoint = get_last_checkpoint(OUTPUT_DIR)

if last_checkpoint is not None:

print(f"Resuming training from checkpoint: {last_checkpoint}")

# Extract the step count from checkpoint path (e.g., "checkpoint-500")

last_checkpoint_step = int(last_checkpoint.split("-")[-1])

else:

print("No previous checkpoint found. Training from scratch.")

total_samples = len(train_dataset)

print("Total Samples:",total_samples)

num_gpus = max(1, torch.cuda.device_count()) # Ensure at least 1 (for CPU training)

print("Num GPU:",num_gpus)

print("Batch Size/Device:",BATCH_SIZE)

print("Gradient Steps:", STEPS)

# Compute steps for one epoch based on current dataset size

num_update_steps_per_epoch = total_samples // (

num_gpus * BATCH_SIZE * STEPS

)

print("Steps: ",num_update_steps_per_epoch)

# Adjust max_steps based on last checkpoint

max_steps = last_checkpoint_step + num_update_steps_per_epoch

print(f"Updated max_steps: {max_steps}")

print("-------------------------------------------------------------")

resume = last_checkpoint is not None

if(FULLTUNE == False):

# Do model patching and add fast LoRA weights

model = FastLanguageModel.get_peft_model(

model,

r = 16,

target_modules = ["q_proj", "k_proj", "v_proj", "o_proj",

"gate_proj", "up_proj", "down_proj",],

lora_alpha = 16,

lora_dropout = 0, # Supports any, but = 0 is optimized

bias = "none", # Supports any, but = "none" is optimized

# [NEW] "unsloth" uses 30% less VRAM, fits 2x larger batch sizes!

use_gradient_checkpointing = "unsloth", # True or "unsloth" for very long context

random_state = seed,

max_seq_length = MAXSEQ,

use_rslora = False, # We support rank stabilized LoRA

loftq_config = None, # And LoftQ

)

args = SFTConfig(

max_seq_length = MAXSEQ,

#per_device_train_batch_size = BATCH_SIZE,

auto_find_batch_size=True, # <--- This enables automatic batch sizing

gradient_accumulation_steps = STEPS,

learning_rate = LRATE,

warmup_steps = 10,

logging_steps = 1,

output_dir = OUTPUT_DIR,

optim = OPTIMIZER,

num_train_epochs = EPOCHS,

seed = seed,

fp16 = not is_bfloat16_supported(),

bf16 = is_bfloat16_supported(),

resume_from_checkpoint = resume,

lr_scheduler_type = "linear",

)

trainer = SFTTrainer(

model = model,

dataset_text_field = "text",

packing = False,

train_dataset = train_dataset,

#eval_dataset = eval_dataset,

tokenizer = tokenizer,

args = args,

)

trainer = train_on_responses_only(

trainer,

instruction_part = "### Prompt:\n",

response_part = "### Response:\n"

)

#@title Show current memory stats

gpu_stats = torch.cuda.get_device_properties(0)

start_gpu_memory = round(torch.cuda.max_memory_reserved() / 1024 / 1024 / 1024, 3)

max_memory = round(gpu_stats.total_memory / 1024 / 1024 / 1024, 3)

print(f"GPU = {gpu_stats.name}. Max memory = {max_memory} GB.")

print(f"{start_gpu_memory} GB of memory reserved.")

checkpoint = None

if resume == True:

checkpoint = last_checkpoint

trainer_stats = trainer.train(resume_from_checkpoint=checkpoint)

#@title Show final memory and time stats

used_memory = round(torch.cuda.max_memory_reserved() / 1024 / 1024 / 1024, 3)

used_memory_for_lora = round(used_memory - start_gpu_memory, 3)

used_percentage = round(used_memory /max_memory*100, 3)

lora_percentage = round(used_memory_for_lora/max_memory*100, 3)

print(f"{trainer_stats.metrics['train_runtime']} seconds used for training.")

print(f"{round(trainer_stats.metrics['train_runtime']/60, 2)} minutes used for training.")

print(f"Peak reserved memory = {used_memory} GB.")

print(f"Peak reserved memory for training = {used_memory_for_lora} GB.")

print(f"Peak reserved memory % of max memory = {used_percentage} %.")

print(f"Peak reserved memory for training % of max memory = {lora_percentage} %.")

print("Saving Model....")

#trainer.save(OUTPUT_DIR)

model.save_pretrained(OUTPUT_DIR)

tokenizer.save_pretrained(OUTPUT_DIR)

```

### train_tatsu.sh

```bash

#!/bin/sh

export MODEL="unsloth/Llama-3.2-1B-bnb-4bit"

export TRAIN_FILE="tatsu-lab/alpaca"

export OUTPUT_DIR="TATSU-LAB.ALPACA.LAMA3.2-1B-4bit"

export BATCH_SIZE=4

export OVERWRITE=True

export EPOCHS=1

export LRATE=2e-4

export STEPS=4

export SAVE_STEPS=-1

export LOAD_4BIT=True

export LOAD_8BIT=False

export FULLTUNE=False

export MAXSEQ=8192

export OPTIM="adamw_8bit"

python train.unsloth.alpaca.py

```

### run.unsloth.prompt.py

```python

import torch

import re

import gradio as gr

from unsloth import FastLanguageModel

from peft import PeftModel

from transformers import StoppingCriteria, StoppingCriteriaList

from transformers import TextStreamer

import sys

#######################################################################################################################

# MODELS

#######################################################################################################################

# 1️⃣ Specify your base model and the path to your saved LoRA adapter

ADAPTER_PATH = sys.argv[1]

model, tokenizer = FastLanguageModel.from_pretrained(

model_name=ADAPTER_PATH,

max_seq_length=2048,

load_in_4bit=True

)

FastLanguageModel.for_inference(model)

# Custom stopping criteria to stop when the <|endoftext|> token is generated

class StopOnEndOfText(StoppingCriteria):

def __init__(self, eos_token_id):

self.eos_token_id = eos_token_id

def __call__(self, input_ids: torch.LongTensor, scores: torch.FloatTensor, **kwargs) -> bool:

# Check if the last token generated is the eos_token_id

return input_ids[0, -1] == self.eos_token_id

# Create an instance of the stopping criteria with the model's EOS token

eos_token_id = tokenizer.eos_token_id

stopping_criteria = StoppingCriteriaList([StopOnEndOfText(eos_token_id)])

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

model.to(device)

textstreamer = TextStreamer(tokenizer, skip_prompt = True)

temperature = 0.7

top_p = 0.9

top_k = 50

# 4️⃣ Define generation function

def generate(prompt):

inputs = tokenizer(prompt, return_tensors="pt").to("cuda")

out = model.generate(

inputs["input_ids"],

attention_mask = inputs["attention_mask"],

streamer = textstreamer,

temperature=temperature,

top_p=top_p,

top_k=top_k,

pad_token_id=tokenizer.eos_token_id,

max_length=2048,

num_return_sequences=1,

#repetition_penalty=1.2,

no_repeat_ngram_size=3,

remove_invalid_values=True,

stopping_criteria=stopping_criteria

)

return tokenizer.decode(out[0], skip_special_tokens=True)

while 1:

print("### SYSTEM PROMPT ###")

print("Press CTRL+D to send.")

p = sys.stdin.read()

p = "### Prompt:\n" + '"' + p + '"\n\n### Response:\n'

output = generate(p)

``` |

chatpig/bagel-bf16 | chatpig | 2025-05-27T06:19:00Z | 0 | 0 | diffusers | [

"diffusers",

"license:apache-2.0",

"region:us"

] | null | 2025-05-27T06:08:45Z | ---

license: apache-2.0

library_name: diffusers

---

## bagel-bf16

- repackage of [bagel](https://huggingface.co/ByteDance-Seed/BAGEL-7B-MoT) |

dimasik87/9e064248-0193-4081-9d73-b1c80b8ab78f | dimasik87 | 2025-05-27T06:10:24Z | 0 | 0 | peft | [

"peft",

"safetensors",

"llama",

"axolotl",

"generated_from_trainer",

"base_model:lmsys/vicuna-7b-v1.5",

"base_model:adapter:lmsys/vicuna-7b-v1.5",

"license:llama2",

"4-bit",

"bitsandbytes",

"region:us"

] | null | 2025-05-27T04:57:54Z | ---

library_name: peft

license: llama2

base_model: lmsys/vicuna-7b-v1.5

tags:

- axolotl

- generated_from_trainer

model-index:

- name: 9e064248-0193-4081-9d73-b1c80b8ab78f

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

[<img src="https://raw.githubusercontent.com/axolotl-ai-cloud/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/axolotl-ai-cloud/axolotl)

<details><summary>See axolotl config</summary>

axolotl version: `0.4.1`

```yaml

absolute_data_files: false

adapter: lora

base_model: lmsys/vicuna-7b-v1.5

bf16: true

chat_template: llama3

dataset_prepared_path: /workspace/axolotl

datasets:

- data_files:

- c3dc1221f780d83b_train_data.json

ds_type: json

format: custom

path: /workspace/input_data/

type:

field_instruction: instruct

field_output: output

format: '{instruction}'

no_input_format: '{instruction}'

system_format: '{system}'

system_prompt: ''

debug: null

deepspeed: null

dpo:

beta: 0.1

enabled: true

group_by_length: false

rank_loss: true

reference_model: null

early_stopping_patience: null

eval_max_new_tokens: 128

eval_table_size: null

evals_per_epoch: 1

flash_attention: true

fp16: null

fsdp: null

fsdp_config: null

gradient_accumulation_steps: 4

gradient_checkpointing: true

gradient_clipping: 1.0

group_by_length: false

hub_model_id: dimasik87/9e064248-0193-4081-9d73-b1c80b8ab78f

hub_repo: null

hub_strategy: end

hub_token: null

learning_rate: 1.0e-06

load_in_4bit: true

load_in_8bit: false

local_rank: null

logging_steps: 1

lora_alpha: 64

lora_dropout: 0.1

lora_fan_in_fan_out: null

lora_model_dir: null

lora_r: 32

lora_target_linear: true

lr_scheduler: cosine

max_steps: 500

micro_batch_size: 6

mixed_precision: bf16

mlflow_experiment_name: /tmp/c3dc1221f780d83b_train_data.json

model_type: AutoModelForCausalLM

num_epochs: 2

optimizer: adamw_bnb_8bit

output_dir: miner_id_24

pad_to_sequence_len: true

resume_from_checkpoint: null

s2_attention: null

sample_packing: false

saves_per_epoch: 1

sequence_len: 1024

strict: false

tf32: false

tokenizer_type: AutoTokenizer

train_on_inputs: false