url

stringlengths 58

61

| repository_url

stringclasses 1

value | labels_url

stringlengths 72

75

| comments_url

stringlengths 67

70

| events_url

stringlengths 65

68

| html_url

stringlengths 46

51

| id

int64 599M

2.12B

| node_id

stringlengths 18

32

| number

int64 1

6.65k

| title

stringlengths 1

290

| user

dict | labels

listlengths 0

4

| state

stringclasses 2

values | locked

bool 1

class | assignee

dict | assignees

listlengths 0

4

| milestone

dict | comments

int64 0

70

| created_at

timestamp[ns, tz=UTC] | updated_at

timestamp[ns, tz=UTC] | closed_at

timestamp[ns, tz=UTC] | author_association

stringclasses 3

values | active_lock_reason

float64 | draft

float64 0

1

⌀ | pull_request

dict | body

stringlengths 0

228k

⌀ | reactions

dict | timeline_url

stringlengths 67

70

| performed_via_github_app

float64 | state_reason

stringclasses 3

values | is_pull_request

bool 2

classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/5099

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5099/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5099/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5099/events

|

https://github.com/huggingface/datasets/issues/5099

| 1,404,370,191

|

I_kwDODunzps5TtP0P

| 5,099

|

datasets doesn't support # in data paths

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/44069155?v=4",

"events_url": "https://api.github.com/users/loubnabnl/events{/privacy}",

"followers_url": "https://api.github.com/users/loubnabnl/followers",

"following_url": "https://api.github.com/users/loubnabnl/following{/other_user}",

"gists_url": "https://api.github.com/users/loubnabnl/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/loubnabnl",

"id": 44069155,

"login": "loubnabnl",

"node_id": "MDQ6VXNlcjQ0MDY5MTU1",

"organizations_url": "https://api.github.com/users/loubnabnl/orgs",

"received_events_url": "https://api.github.com/users/loubnabnl/received_events",

"repos_url": "https://api.github.com/users/loubnabnl/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/loubnabnl/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/loubnabnl/subscriptions",

"type": "User",

"url": "https://api.github.com/users/loubnabnl"

}

|

[

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

},

{

"color": "7057ff",

"default": true,

"description": "Good for newcomers",

"id": 1935892877,

"name": "good first issue",

"node_id": "MDU6TGFiZWwxOTM1ODkyODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/good%20first%20issue"

},

{

"color": "DF8D62",

"default": false,

"description": "",

"id": 4614514401,

"name": "hacktoberfest",

"node_id": "LA_kwDODunzps8AAAABEwvm4Q",

"url": "https://api.github.com/repos/huggingface/datasets/labels/hacktoberfest"

}

] |

closed

| false

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_user}",

"gists_url": "https://api.github.com/users/riccardobucco/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/riccardobucco",

"id": 9295277,

"login": "riccardobucco",

"node_id": "MDQ6VXNlcjkyOTUyNzc=",

"organizations_url": "https://api.github.com/users/riccardobucco/orgs",

"received_events_url": "https://api.github.com/users/riccardobucco/received_events",

"repos_url": "https://api.github.com/users/riccardobucco/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/riccardobucco/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/riccardobucco/subscriptions",

"type": "User",

"url": "https://api.github.com/users/riccardobucco"

}

|

[

{

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_user}",

"gists_url": "https://api.github.com/users/riccardobucco/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/riccardobucco",

"id": 9295277,

"login": "riccardobucco",

"node_id": "MDQ6VXNlcjkyOTUyNzc=",

"organizations_url": "https://api.github.com/users/riccardobucco/orgs",

"received_events_url": "https://api.github.com/users/riccardobucco/received_events",

"repos_url": "https://api.github.com/users/riccardobucco/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/riccardobucco/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/riccardobucco/subscriptions",

"type": "User",

"url": "https://api.github.com/users/riccardobucco"

}

] | null | 9

| 2022-10-11T10:05:32Z

| 2022-10-13T13:14:20Z

| 2022-10-13T13:14:20Z

|

NONE

| null | null | null |

## Describe the bug

dataset files with `#` symbol their paths aren't read correctly.

## Steps to reproduce the bug

The data in folder `c#`of this [dataset](https://huggingface.co/datasets/loubnabnl/bigcode_csharp) can't be loaded. While the folder `c_sharp` with the same data is loaded properly

```python

ds = load_dataset('loubnabnl/bigcode_csharp', split="train", data_files=["data/c#/*"])

```

```

FileNotFoundError: Couldn't find file at https://huggingface.co/datasets/loubnabnl/bigcode_csharp/resolve/27a3166cff4bb18e11919cafa6f169c0f57483de/data/c#/data_0003.jsonl

```

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 2.5.2

- Platform: macOS-12.2.1-arm64-arm-64bit

- Python version: 3.9.13

- PyArrow version: 9.0.0

- Pandas version: 1.4.3

cc @lhoestq

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5099/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5099/timeline

| null |

completed

| false

|

https://api.github.com/repos/huggingface/datasets/issues/5098

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5098/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5098/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5098/events

|

https://github.com/huggingface/datasets/issues/5098

| 1,404,058,518

|

I_kwDODunzps5TsDuW

| 5,098

|

Classes label error when loading symbolic links using imagefolder

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/49552732?v=4",

"events_url": "https://api.github.com/users/horizon86/events{/privacy}",

"followers_url": "https://api.github.com/users/horizon86/followers",

"following_url": "https://api.github.com/users/horizon86/following{/other_user}",

"gists_url": "https://api.github.com/users/horizon86/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/horizon86",

"id": 49552732,

"login": "horizon86",

"node_id": "MDQ6VXNlcjQ5NTUyNzMy",

"organizations_url": "https://api.github.com/users/horizon86/orgs",

"received_events_url": "https://api.github.com/users/horizon86/received_events",

"repos_url": "https://api.github.com/users/horizon86/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/horizon86/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/horizon86/subscriptions",

"type": "User",

"url": "https://api.github.com/users/horizon86"

}

|

[

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

},

{

"color": "7057ff",

"default": true,

"description": "Good for newcomers",

"id": 1935892877,

"name": "good first issue",

"node_id": "MDU6TGFiZWwxOTM1ODkyODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/good%20first%20issue"

},

{

"color": "DF8D62",

"default": false,

"description": "",

"id": 4614514401,

"name": "hacktoberfest",

"node_id": "LA_kwDODunzps8AAAABEwvm4Q",

"url": "https://api.github.com/repos/huggingface/datasets/labels/hacktoberfest"

}

] |

closed

| false

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_user}",

"gists_url": "https://api.github.com/users/riccardobucco/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/riccardobucco",

"id": 9295277,

"login": "riccardobucco",

"node_id": "MDQ6VXNlcjkyOTUyNzc=",

"organizations_url": "https://api.github.com/users/riccardobucco/orgs",

"received_events_url": "https://api.github.com/users/riccardobucco/received_events",

"repos_url": "https://api.github.com/users/riccardobucco/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/riccardobucco/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/riccardobucco/subscriptions",

"type": "User",

"url": "https://api.github.com/users/riccardobucco"

}

|

[

{

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_user}",

"gists_url": "https://api.github.com/users/riccardobucco/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/riccardobucco",

"id": 9295277,

"login": "riccardobucco",

"node_id": "MDQ6VXNlcjkyOTUyNzc=",

"organizations_url": "https://api.github.com/users/riccardobucco/orgs",

"received_events_url": "https://api.github.com/users/riccardobucco/received_events",

"repos_url": "https://api.github.com/users/riccardobucco/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/riccardobucco/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/riccardobucco/subscriptions",

"type": "User",

"url": "https://api.github.com/users/riccardobucco"

}

] | null | 3

| 2022-10-11T06:10:58Z

| 2022-11-14T14:40:20Z

| 2022-11-14T14:40:20Z

|

NONE

| null | null | null |

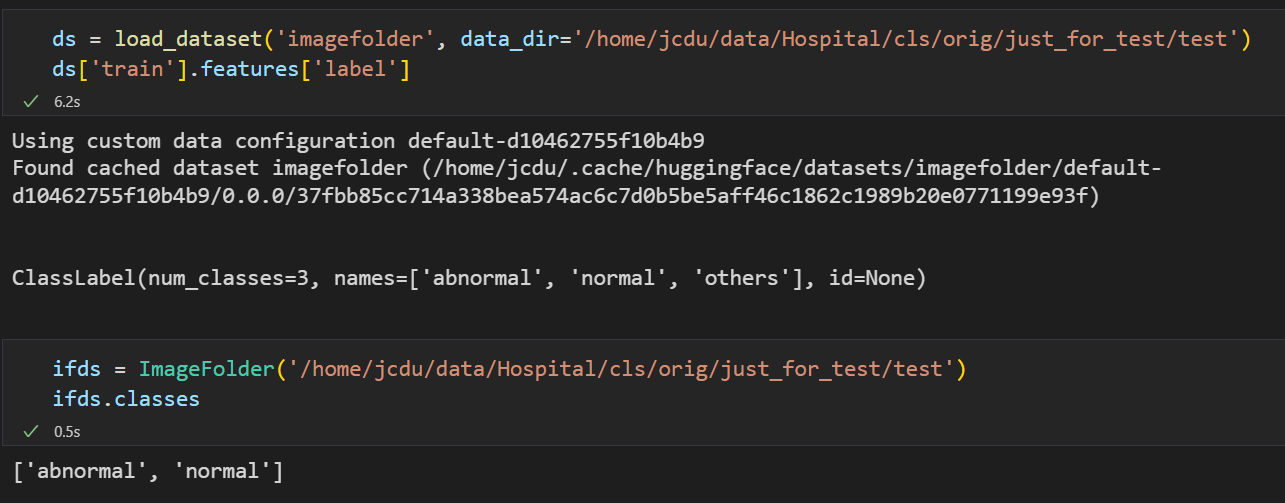

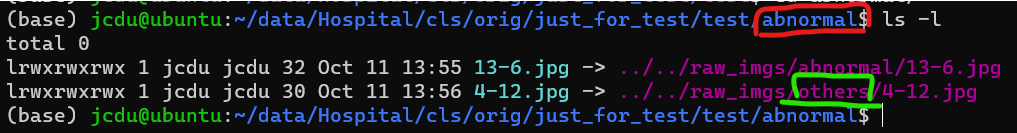

**Is your feature request related to a problem? Please describe.**

Like this: #4015

When there are **symbolic links** to pictures in the data folder, the parent folder name of the **real file** will be used as the class name instead of the parent folder of the symbolic link itself. Can you give an option to decide whether to enable symbolic link tracking?

This is inconsistent with the `torchvision.datasets.ImageFolder` behavior.

For example:

It use `others` in green circle as class label but not `abnormal`, I wish `load_dataset` not use the real file parent as label.

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context about the feature request here.

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5098/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5098/timeline

| null |

completed

| false

|

https://api.github.com/repos/huggingface/datasets/issues/5097

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5097/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5097/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5097/events

|

https://github.com/huggingface/datasets/issues/5097

| 1,403,679,353

|

I_kwDODunzps5TqnJ5

| 5,097

|

Fatal error with pyarrow/libarrow.so

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/11340846?v=4",

"events_url": "https://api.github.com/users/catalys1/events{/privacy}",

"followers_url": "https://api.github.com/users/catalys1/followers",

"following_url": "https://api.github.com/users/catalys1/following{/other_user}",

"gists_url": "https://api.github.com/users/catalys1/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/catalys1",

"id": 11340846,

"login": "catalys1",

"node_id": "MDQ6VXNlcjExMzQwODQ2",

"organizations_url": "https://api.github.com/users/catalys1/orgs",

"received_events_url": "https://api.github.com/users/catalys1/received_events",

"repos_url": "https://api.github.com/users/catalys1/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/catalys1/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/catalys1/subscriptions",

"type": "User",

"url": "https://api.github.com/users/catalys1"

}

|

[

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] |

closed

| false

| null |

[] | null | 1

| 2022-10-10T20:29:04Z

| 2022-10-11T06:56:01Z

| 2022-10-11T06:56:00Z

|

NONE

| null | null | null |

## Describe the bug

When using datasets, at the very end of my jobs the program crashes (see trace below).

It doesn't seem to affect anything, as it appears to happen as the program is closing down. Just importing `datasets` is enough to cause the error.

## Steps to reproduce the bug

This is sufficient to reproduce the problem:

```bash

python -c "import datasets"

```

## Expected results

Program should run to completion without an error.

## Actual results

```bash

Fatal error condition occurred in /opt/vcpkg/buildtrees/aws-c-io/src/9e6648842a-364b708815.clean/source/event_loop.c:72: aws_thread_launch(&cleanup_thread, s_event_loop_destroy_async_thread_fn, el_group, &thread_options) == AWS_OP_SUCCESS

Exiting Application

################################################################################

Stack trace:

################################################################################

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x200af06) [0x150dff547f06]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x20028e5) [0x150dff53f8e5]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x1f27e09) [0x150dff464e09]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x200ba3d) [0x150dff548a3d]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x1f25948) [0x150dff462948]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x200ba3d) [0x150dff548a3d]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x1ee0b46) [0x150dff41db46]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x194546a) [0x150dfee8246a]

/lib64/libc.so.6(+0x39b0c) [0x150e15eadb0c]

/lib64/libc.so.6(on_exit+0) [0x150e15eadc40]

/u/user/miniconda3/envs/env/bin/python(+0x28db18) [0x560ae370eb18]

/u/user/miniconda3/envs/env/bin/python(+0x28db4b) [0x560ae370eb4b]

/u/user/miniconda3/envs/env/bin/python(+0x28db90) [0x560ae370eb90]

/u/user/miniconda3/envs/env/bin/python(_PyRun_SimpleFileObject+0x1e6) [0x560ae37123e6]

/u/user/miniconda3/envs/env/bin/python(_PyRun_AnyFileObject+0x44) [0x560ae37124c4]

/u/user/miniconda3/envs/env/bin/python(Py_RunMain+0x35d) [0x560ae37135bd]

/u/user/miniconda3/envs/env/bin/python(Py_BytesMain+0x39) [0x560ae37137d9]

/lib64/libc.so.6(__libc_start_main+0xf3) [0x150e15e97493]

/u/user/miniconda3/envs/env/bin/python(+0x2125d4) [0x560ae36935d4]

Aborted (core dumped)

```

## Environment info

- `datasets` version: 2.5.1

- Platform: Linux-4.18.0-348.23.1.el8_5.x86_64-x86_64-with-glibc2.28

- Python version: 3.10.4

- PyArrow version: 9.0.0

- Pandas version: 1.4.3

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5097/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5097/timeline

| null |

completed

| false

|

https://api.github.com/repos/huggingface/datasets/issues/5096

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5096/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5096/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5096/events

|

https://github.com/huggingface/datasets/issues/5096

| 1,403,379,816

|

I_kwDODunzps5TpeBo

| 5,096

|

Transfer some canonical datasets under an organization namespace

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

}

|

[

{

"color": "0e8a16",

"default": false,

"description": "Contribution to a dataset script",

"id": 4564477500,

"name": "dataset contribution",

"node_id": "LA_kwDODunzps8AAAABEBBmPA",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20contribution"

}

] |

open

| false

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

}

|

[

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

}

] | null | 10

| 2022-10-10T15:44:31Z

| 2024-01-11T10:03:35Z

| null |

MEMBER

| null | null | null |

As discussed during our @huggingface/datasets meeting, we are planning to move some "canonical" dataset scripts under their corresponding organization namespace (if this does not exist).

On the contrary, if the dataset already exists under the organization namespace, we are deprecating the canonical one (and eventually delete it).

First, we should test it using a dummy dataset/organization.

TODO:

- [x] Test with a dummy dataset

- [x] Create dummy canonical dataset: https://huggingface.co/datasets/dummy_canonical_dataset

- [x] Create dummy organization: https://huggingface.co/dummy-canonical-org

- [x] Transfer dummy canonical dataset to dummy organization

- [ ] Transfer datasets

- [x] babi_qa => facebook

- [x] blbooks => TheBritishLibrary/blbooks

- [x] blbooksgenre => TheBritishLibrary/blbooksgenre

- [x] common_gen => allenai

- [x] commonsense_qa => tau

- [x] competition_math => hendrycks/competition_math

- [x] cord19 => allenai

- [x] emotion => dair-ai

- [ ] gem => GEM

- [x] hendrycks_test => cais/mmlu

- [x] indonlu => indonlp

- [ ] multilingual_librispeech => facebook

- It already exists "facebook/multilingual_librispeech"

- [ ] oscar => oscar-corpus

- [x] peer_read => allenai

- [x] qasper => allenai

- [x] reddit => webis/tldr-17

- [x] russian_super_glue => russiannlp

- [x] rvl_cdip => aharley

- [x] s2orc => allenai

- [x] scicite => allenai

- [x] scifact => allenai

- [x] scitldr => allenai

- [x] swiss_judgment_prediction => rcds

- [x] the_pile => EleutherAI

- [ ] wmt14, wmt15, wmt16, wmt17, wmt18, wmt19,... => wmt

- [ ] Deprecate (and eventually remove) datasets that cannot be transferred because they already exist

- [x] banking77 => PolyAI

- [x] common_voice => mozilla-foundation

- [x] german_legal_entity_recognition => elenanereiss

- ...

EDIT: the list above is continuously being updated

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 2,

"total_count": 2,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5096/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5096/timeline

| null | null | false

|

https://api.github.com/repos/huggingface/datasets/issues/5095

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5095/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5095/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5095/events

|

https://github.com/huggingface/datasets/pull/5095

| 1,403,221,408

|

PR_kwDODunzps5Afzsq

| 5,095

|

Fix tutorial (#5093)

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_user}",

"gists_url": "https://api.github.com/users/riccardobucco/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/riccardobucco",

"id": 9295277,

"login": "riccardobucco",

"node_id": "MDQ6VXNlcjkyOTUyNzc=",

"organizations_url": "https://api.github.com/users/riccardobucco/orgs",

"received_events_url": "https://api.github.com/users/riccardobucco/received_events",

"repos_url": "https://api.github.com/users/riccardobucco/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/riccardobucco/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/riccardobucco/subscriptions",

"type": "User",

"url": "https://api.github.com/users/riccardobucco"

}

|

[] |

closed

| false

| null |

[] | null | 2

| 2022-10-10T13:55:15Z

| 2022-10-10T17:50:52Z

| 2022-10-10T15:32:20Z

|

CONTRIBUTOR

| null | 0

|

{

"diff_url": "https://github.com/huggingface/datasets/pull/5095.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5095",

"merged_at": "2022-10-10T15:32:20Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5095.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5095"

}

|

Close #5093

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5095/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5095/timeline

| null | null | true

|

https://api.github.com/repos/huggingface/datasets/issues/5094

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5094/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5094/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5094/events

|

https://github.com/huggingface/datasets/issues/5094

| 1,403,214,950

|

I_kwDODunzps5To1xm

| 5,094

|

Multiprocessing with `Dataset.map` and `PyTorch` results in deadlock

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/36822895?v=4",

"events_url": "https://api.github.com/users/RR-28023/events{/privacy}",

"followers_url": "https://api.github.com/users/RR-28023/followers",

"following_url": "https://api.github.com/users/RR-28023/following{/other_user}",

"gists_url": "https://api.github.com/users/RR-28023/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/RR-28023",

"id": 36822895,

"login": "RR-28023",

"node_id": "MDQ6VXNlcjM2ODIyODk1",

"organizations_url": "https://api.github.com/users/RR-28023/orgs",

"received_events_url": "https://api.github.com/users/RR-28023/received_events",

"repos_url": "https://api.github.com/users/RR-28023/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/RR-28023/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/RR-28023/subscriptions",

"type": "User",

"url": "https://api.github.com/users/RR-28023"

}

|

[

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] |

closed

| false

| null |

[] | null | 11

| 2022-10-10T13:50:56Z

| 2023-07-24T15:29:13Z

| 2023-07-24T15:29:13Z

|

NONE

| null | null | null |

## Describe the bug

There seems to be an issue with using multiprocessing with `datasets.Dataset.map` (i.e. setting `num_proc` to a value greater than one) combined with a function that uses `torch` under the hood. The subprocesses that `datasets.Dataset.map` spawns [a this step](https://github.com/huggingface/datasets/blob/1b935dab9d2f171a8c6294269421fe967eb55e34/src/datasets/arrow_dataset.py#L2663) go into wait mode forever.

## Steps to reproduce the bug

The below code goes into deadlock when `NUMBER_OF_PROCESSES` is greater than one.

```python

NUMBER_OF_PROCESSES = 2

from transformers import AutoTokenizer, AutoModel

from datasets import load_dataset

dataset = load_dataset("glue", "mrpc", split="train")

tokenizer = AutoTokenizer.from_pretrained("sentence-transformers/all-MiniLM-L6-v2")

model = AutoModel.from_pretrained("sentence-transformers/all-MiniLM-L6-v2")

model.to("cpu")

def cls_pooling(model_output):

return model_output.last_hidden_state[:, 0]

def generate_embeddings_batched(examples):

sentences_batch = list(examples['sentence1'])

encoded_input = tokenizer(

sentences_batch, padding=True, truncation=True, return_tensors="pt"

)

encoded_input = {k: v.to("cpu") for k, v in encoded_input.items()}

model_output = model(**encoded_input)

embeddings = cls_pooling(model_output)

examples['embeddings'] = embeddings.detach().cpu().numpy() # 64, 384

return examples

embeddings_dataset = dataset.map(

generate_embeddings_batched,

batched=True,

batch_size=10,

num_proc=NUMBER_OF_PROCESSES

)

```

While debugging it I've seen that it gets "stuck" when calling `torch.nn.Embedding.forward` but some testing shows that the same happens with other functions from `torch.nn`.

## Environment info

- Platform: Linux-5.14.0-1052-oem-x86_64-with-glibc2.31

- Python version: 3.9.14

- PyArrow version: 9.0.0

- Pandas version: 1.5.0

Not sure if this is a HF problem, a PyTorch problem or something I'm doing wrong..

Thanks!

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5094/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5094/timeline

| null |

completed

| false

|

https://api.github.com/repos/huggingface/datasets/issues/5093

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5093/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5093/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5093/events

|

https://github.com/huggingface/datasets/issues/5093

| 1,402,939,660

|

I_kwDODunzps5TnykM

| 5,093

|

Mismatch between tutoriel and doc

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/22726840?v=4",

"events_url": "https://api.github.com/users/clefourrier/events{/privacy}",

"followers_url": "https://api.github.com/users/clefourrier/followers",

"following_url": "https://api.github.com/users/clefourrier/following{/other_user}",

"gists_url": "https://api.github.com/users/clefourrier/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/clefourrier",

"id": 22726840,

"login": "clefourrier",

"node_id": "MDQ6VXNlcjIyNzI2ODQw",

"organizations_url": "https://api.github.com/users/clefourrier/orgs",

"received_events_url": "https://api.github.com/users/clefourrier/received_events",

"repos_url": "https://api.github.com/users/clefourrier/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/clefourrier/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/clefourrier/subscriptions",

"type": "User",

"url": "https://api.github.com/users/clefourrier"

}

|

[

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

},

{

"color": "7057ff",

"default": true,

"description": "Good for newcomers",

"id": 1935892877,

"name": "good first issue",

"node_id": "MDU6TGFiZWwxOTM1ODkyODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/good%20first%20issue"

},

{

"color": "DF8D62",

"default": false,

"description": "",

"id": 4614514401,

"name": "hacktoberfest",

"node_id": "LA_kwDODunzps8AAAABEwvm4Q",

"url": "https://api.github.com/repos/huggingface/datasets/labels/hacktoberfest"

}

] |

closed

| false

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_user}",

"gists_url": "https://api.github.com/users/riccardobucco/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/riccardobucco",

"id": 9295277,

"login": "riccardobucco",

"node_id": "MDQ6VXNlcjkyOTUyNzc=",

"organizations_url": "https://api.github.com/users/riccardobucco/orgs",

"received_events_url": "https://api.github.com/users/riccardobucco/received_events",

"repos_url": "https://api.github.com/users/riccardobucco/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/riccardobucco/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/riccardobucco/subscriptions",

"type": "User",

"url": "https://api.github.com/users/riccardobucco"

}

|

[

{

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_user}",

"gists_url": "https://api.github.com/users/riccardobucco/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/riccardobucco",

"id": 9295277,

"login": "riccardobucco",

"node_id": "MDQ6VXNlcjkyOTUyNzc=",

"organizations_url": "https://api.github.com/users/riccardobucco/orgs",

"received_events_url": "https://api.github.com/users/riccardobucco/received_events",

"repos_url": "https://api.github.com/users/riccardobucco/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/riccardobucco/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/riccardobucco/subscriptions",

"type": "User",

"url": "https://api.github.com/users/riccardobucco"

}

] | null | 3

| 2022-10-10T10:23:53Z

| 2022-10-10T17:51:15Z

| 2022-10-10T17:51:14Z

|

MEMBER

| null | null | null |

## Describe the bug

In the "Process text data" tutorial, [`map` has `return_tensors` as kwarg](https://huggingface.co/docs/datasets/main/en/nlp_process#map). It does not seem to appear in the [function documentation](https://huggingface.co/docs/datasets/main/en/package_reference/main_classes#datasets.Dataset.map), nor to work.

## Steps to reproduce the bug

MWE:

```python

from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("bert-base-cased")

from datasets import load_dataset

dataset = load_dataset("lhoestq/demo1", split="train")

dataset = dataset.map(lambda examples: tokenizer(examples["review"]), batched=True, return_tensors="pt")

```

## Expected results

return_tensors to be a valid kwarg :smiley:

## Actual results

```python

>> TypeError: map() got an unexpected keyword argument 'return_tensors'

```

## Environment info

- `datasets` version: 2.3.2

- Platform: Linux-5.14.0-1052-oem-x86_64-with-glibc2.29

- Python version: 3.8.10

- PyArrow version: 8.0.0

- Pandas version: 1.4.3

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5093/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5093/timeline

| null |

completed

| false

|

https://api.github.com/repos/huggingface/datasets/issues/5092

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5092/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5092/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5092/events

|

https://github.com/huggingface/datasets/pull/5092

| 1,402,713,517

|

PR_kwDODunzps5AeIsS

| 5,092

|

Use HTML relative paths for tiles in the docs

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/26859204?v=4",

"events_url": "https://api.github.com/users/lewtun/events{/privacy}",

"followers_url": "https://api.github.com/users/lewtun/followers",

"following_url": "https://api.github.com/users/lewtun/following{/other_user}",

"gists_url": "https://api.github.com/users/lewtun/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lewtun",

"id": 26859204,

"login": "lewtun",

"node_id": "MDQ6VXNlcjI2ODU5MjA0",

"organizations_url": "https://api.github.com/users/lewtun/orgs",

"received_events_url": "https://api.github.com/users/lewtun/received_events",

"repos_url": "https://api.github.com/users/lewtun/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lewtun/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lewtun/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lewtun"

}

|

[] |

closed

| false

| null |

[] | null | 3

| 2022-10-10T07:24:27Z

| 2022-10-11T13:25:45Z

| 2022-10-11T13:23:23Z

|

MEMBER

| null | 0

|

{

"diff_url": "https://github.com/huggingface/datasets/pull/5092.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5092",

"merged_at": "2022-10-11T13:23:23Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5092.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5092"

}

|

This PR replaces the absolute paths in the landing page tiles with relative ones so that one can test navigation both locally in and in future PRs (see [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_5084/en/index) for an example PR where the links don't work).

I encountered this while working on the `optimum` docs and figured I'd fix it elsewhere too :)

Internal Slack thread: https://huggingface.slack.com/archives/C02GLJ5S0E9/p1665129710176619

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5092/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5092/timeline

| null | null | true

|

https://api.github.com/repos/huggingface/datasets/issues/5091

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5091/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5091/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5091/events

|

https://github.com/huggingface/datasets/pull/5091

| 1,401,112,552

|

PR_kwDODunzps5AZCm9

| 5,091

|

Allow connection objects in `from_sql` + small doc improvement

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko"

}

|

[] |

closed

| false

| null |

[] | null | 1

| 2022-10-07T12:39:44Z

| 2022-10-09T13:19:15Z

| 2022-10-09T13:16:57Z

|

CONTRIBUTOR

| null | 0

|

{

"diff_url": "https://github.com/huggingface/datasets/pull/5091.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5091",

"merged_at": "2022-10-09T13:16:57Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5091.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5091"

}

|

Allow connection objects in `from_sql` (emit a warning that they are cachable) and add a tip that explains the format of the con parameter when provided as a URI string.

PS: ~~This PR contains a parameter link, so https://github.com/huggingface/doc-builder/pull/311 needs to be merged before it's "ready for review".~~ Done!

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5091/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5091/timeline

| null | null | true

|

https://api.github.com/repos/huggingface/datasets/issues/5090

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5090/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5090/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5090/events

|

https://github.com/huggingface/datasets/issues/5090

| 1,401,102,407

|

I_kwDODunzps5TgyBH

| 5,090

|

Review sync issues from GitHub to Hub

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

}

|

[

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] |

closed

| false

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

}

|

[

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

}

] | null | 1

| 2022-10-07T12:31:56Z

| 2022-10-08T07:07:36Z

| 2022-10-08T07:07:36Z

|

MEMBER

| null | null | null |

## Describe the bug

We have discovered that sometimes there were sync issues between GitHub and Hub datasets, after a merge commit to main branch.

For example:

- this merge commit: https://github.com/huggingface/datasets/commit/d74a9e8e4bfff1fed03a4cab99180a841d7caf4b

- was not properly synced with the Hub: https://github.com/huggingface/datasets/actions/runs/3002495269/jobs/4819769684

```

[main 9e641de] Add Papers with Code ID to scifact dataset (#4941)

Author: Albert Villanova del Moral <[email protected]>

1 file changed, 42 insertions(+), 14 deletions(-)

push failed !

GitCommandError(['git', 'push'], 1, b'remote: ---------------------------------------------------------- \nremote: Sorry, your push was rejected during YAML metadata verification: \nremote: - Error: "license" does not match any of the allowed types \nremote: ---------------------------------------------------------- \nremote: Please find the documentation at: \nremote: https://huggingface.co/docs/hub/models-cards#model-card-metadata \nremote: ---------------------------------------------------------- \nTo [https://huggingface.co/datasets/scifact.git\n](https://huggingface.co/datasets/scifact.git/n) ! [remote rejected] main -> main (pre-receive hook declined)\nerror: failed to push some refs to \'[https://huggingface.co/datasets/scifact.git\](https://huggingface.co/datasets/scifact.git/)'', b'')

```

We are reviewing sync issues in previous commits to recover them and repushing to the Hub.

TODO: Review

- [x] #4941

- scifact

- [x] #4931

- scifact

- [x] #4753

- wikipedia

- [x] #4554

- wmt17, wmt19, wmt_t2t

- Fixed with "Release 2.4.0" commit: https://github.com/huggingface/datasets/commit/401d4c4f9b9594cb6527c599c0e7a72ce1a0ea49

- https://huggingface.co/datasets/wmt17/commit/5c0afa83fbbd3508ff7627c07f1b27756d1379ea

- https://huggingface.co/datasets/wmt19/commit/b8ad5bf1960208a376a0ab20bc8eac9638f7b400

- https://huggingface.co/datasets/wmt_t2t/commit/b6d67191804dd0933476fede36754a436b48d1fc

- [x] #4607

- [x] #4416

- lccc

- Fixed with "Release 2.3.0" commit: https://huggingface.co/datasets/lccc/commit/8b1f8cf425b5653a0a4357a53205aac82ce038d1

- [x] #4367

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5090/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5090/timeline

| null |

completed

| false

|

https://api.github.com/repos/huggingface/datasets/issues/5089

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5089/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5089/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5089/events

|

https://github.com/huggingface/datasets/issues/5089

| 1,400,788,486

|

I_kwDODunzps5TflYG

| 5,089

|

Resume failed process

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/208336?v=4",

"events_url": "https://api.github.com/users/felix-schneider/events{/privacy}",

"followers_url": "https://api.github.com/users/felix-schneider/followers",

"following_url": "https://api.github.com/users/felix-schneider/following{/other_user}",

"gists_url": "https://api.github.com/users/felix-schneider/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/felix-schneider",

"id": 208336,

"login": "felix-schneider",

"node_id": "MDQ6VXNlcjIwODMzNg==",

"organizations_url": "https://api.github.com/users/felix-schneider/orgs",

"received_events_url": "https://api.github.com/users/felix-schneider/received_events",

"repos_url": "https://api.github.com/users/felix-schneider/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/felix-schneider/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/felix-schneider/subscriptions",

"type": "User",

"url": "https://api.github.com/users/felix-schneider"

}

|

[

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] |

open

| false

| null |

[] | null | 0

| 2022-10-07T08:07:03Z

| 2022-10-07T08:07:03Z

| null |

NONE

| null | null | null |

**Is your feature request related to a problem? Please describe.**

When a process (`map`, `filter`, etc.) crashes part-way through, you lose all progress.

**Describe the solution you'd like**

It would be good if the cache reflected the partial progress, so that after we restart the script, the process can restart where it left off.

**Describe alternatives you've considered**

Doing processing outside of `datasets`, by writing the dataset to json files and building a restart mechanism myself.

**Additional context**

N/A

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5089/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5089/timeline

| null | null | false

|

https://api.github.com/repos/huggingface/datasets/issues/5088

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5088/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5088/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5088/events

|

https://github.com/huggingface/datasets/issues/5088

| 1,400,530,412

|

I_kwDODunzps5TemXs

| 5,088

|

load_datasets("json", ...) don't read local .json.gz properly

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/112650299?v=4",

"events_url": "https://api.github.com/users/junwang-wish/events{/privacy}",

"followers_url": "https://api.github.com/users/junwang-wish/followers",

"following_url": "https://api.github.com/users/junwang-wish/following{/other_user}",

"gists_url": "https://api.github.com/users/junwang-wish/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/junwang-wish",

"id": 112650299,

"login": "junwang-wish",

"node_id": "U_kgDOBrboOw",

"organizations_url": "https://api.github.com/users/junwang-wish/orgs",

"received_events_url": "https://api.github.com/users/junwang-wish/received_events",

"repos_url": "https://api.github.com/users/junwang-wish/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/junwang-wish/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/junwang-wish/subscriptions",

"type": "User",

"url": "https://api.github.com/users/junwang-wish"

}

|

[

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] |

open

| false

| null |

[] | null | 2

| 2022-10-07T02:16:58Z

| 2022-10-07T14:43:16Z

| null |

NONE

| null | null | null |

## Describe the bug

I have a local file `*.json.gz` and it can be read by `pandas.read_json(lines=True)`, but cannot be read by `load_datasets("json")` (resulting in 0 lines)

## Steps to reproduce the bug

```python

fpath = '/data/junwang/.cache/general/57b6f2314cbe0bc45dda5b78f0871df2/test.json.gz'

ds_panda = DatasetDict(

test=Dataset.from_pandas(

pd.read_json(fpath, lines=True)

)

)

ds_direct = load_dataset(

'json', data_files={

'test': fpath

}, features=Features(

text_input=Value(dtype="string", id=None),

text_output=Value(dtype="string", id=None)

)

)

len(ds_panda['test']), len(ds_direct['test'])

```

## Expected results

Lines of `ds_panda['test']` and `ds_direct['test']` should match.

## Actual results

```

Using custom data configuration default-c0ef2598760968aa

Downloading and preparing dataset json/default to /data/junwang/.cache/huggingface/datasets/json/default-c0ef2598760968aa/0.0.0/e6070c77f18f01a5ad4551a8b7edfba20b8438b7cad4d94e6ad9378022ce4aab...

Dataset json downloaded and prepared to /data/junwang/.cache/huggingface/datasets/json/default-c0ef2598760968aa/0.0.0/e6070c77f18f01a5ad4551a8b7edfba20b8438b7cad4d94e6ad9378022ce4aab. Subsequent calls will reuse this data.

(62087, 0)

```

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version:

- Platform: Ubuntu 18.04.4 LTS

- Python version: 3.8.13

- PyArrow version: 9.0.0

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5088/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5088/timeline

| null | null | false

|

https://api.github.com/repos/huggingface/datasets/issues/5087

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5087/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5087/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5087/events

|

https://github.com/huggingface/datasets/pull/5087

| 1,400,487,967

|

PR_kwDODunzps5AW-N9

| 5,087

|

Fix filter with empty indices

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/23029765?v=4",

"events_url": "https://api.github.com/users/Mouhanedg56/events{/privacy}",

"followers_url": "https://api.github.com/users/Mouhanedg56/followers",

"following_url": "https://api.github.com/users/Mouhanedg56/following{/other_user}",

"gists_url": "https://api.github.com/users/Mouhanedg56/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/Mouhanedg56",

"id": 23029765,

"login": "Mouhanedg56",

"node_id": "MDQ6VXNlcjIzMDI5NzY1",

"organizations_url": "https://api.github.com/users/Mouhanedg56/orgs",

"received_events_url": "https://api.github.com/users/Mouhanedg56/received_events",

"repos_url": "https://api.github.com/users/Mouhanedg56/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/Mouhanedg56/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Mouhanedg56/subscriptions",

"type": "User",

"url": "https://api.github.com/users/Mouhanedg56"

}

|

[] |

closed

| false

| null |

[] | null | 1

| 2022-10-07T01:07:00Z

| 2022-10-07T18:43:03Z

| 2022-10-07T18:40:26Z

|

CONTRIBUTOR

| null | 0

|

{

"diff_url": "https://github.com/huggingface/datasets/pull/5087.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5087",

"merged_at": "2022-10-07T18:40:26Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5087.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5087"

}

|

Fix #5085

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5087/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5087/timeline

| null | null | true

|

https://api.github.com/repos/huggingface/datasets/issues/5086

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5086/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5086/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5086/events

|

https://github.com/huggingface/datasets/issues/5086

| 1,400,216,975

|

I_kwDODunzps5TdZ2P

| 5,086

|

HTTPError: 404 Client Error: Not Found for url

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/54015474?v=4",

"events_url": "https://api.github.com/users/km5ar/events{/privacy}",

"followers_url": "https://api.github.com/users/km5ar/followers",

"following_url": "https://api.github.com/users/km5ar/following{/other_user}",

"gists_url": "https://api.github.com/users/km5ar/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/km5ar",

"id": 54015474,

"login": "km5ar",

"node_id": "MDQ6VXNlcjU0MDE1NDc0",

"organizations_url": "https://api.github.com/users/km5ar/orgs",

"received_events_url": "https://api.github.com/users/km5ar/received_events",

"repos_url": "https://api.github.com/users/km5ar/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/km5ar/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/km5ar/subscriptions",

"type": "User",

"url": "https://api.github.com/users/km5ar"

}

|

[

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] |

closed

| false

| null |

[] | null | 3

| 2022-10-06T19:48:58Z

| 2022-10-07T15:12:01Z

| 2022-10-07T15:12:01Z

|

NONE

| null | null | null |

## Describe the bug

I was following chap 5 from huggingface course: https://huggingface.co/course/chapter5/6?fw=tf

However, I'm not able to download the datasets, with a 404 erros

<img width="1160" alt="iShot2022-10-06_15 54 50" src="https://user-images.githubusercontent.com/54015474/194406327-ae62c2f3-1da5-4686-8631-13d879a0edee.png">

## Steps to reproduce the bug

```python

from huggingface_hub import hf_hub_url

data_files = hf_hub_url(

repo_id="lewtun/github-issues",

filename="datasets-issues-with-hf-doc-builder.jsonl",

repo_type="dataset",

)

from datasets import load_dataset

issues_dataset = load_dataset("json", data_files=data_files, split="train")

issues_dataset

```

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 2.5.2

- Platform: macOS-10.16-x86_64-i386-64bit

- Python version: 3.9.12

- PyArrow version: 9.0.0

- Pandas version: 1.4.4

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5086/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5086/timeline

| null |

completed

| false

|

https://api.github.com/repos/huggingface/datasets/issues/5085

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5085/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5085/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5085/events

|

https://github.com/huggingface/datasets/issues/5085

| 1,400,113,569

|

I_kwDODunzps5TdAmh

| 5,085

|

Filtering on an empty dataset returns a corrupted dataset.

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/36087158?v=4",

"events_url": "https://api.github.com/users/gabegma/events{/privacy}",

"followers_url": "https://api.github.com/users/gabegma/followers",

"following_url": "https://api.github.com/users/gabegma/following{/other_user}",

"gists_url": "https://api.github.com/users/gabegma/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/gabegma",

"id": 36087158,

"login": "gabegma",

"node_id": "MDQ6VXNlcjM2MDg3MTU4",

"organizations_url": "https://api.github.com/users/gabegma/orgs",

"received_events_url": "https://api.github.com/users/gabegma/received_events",

"repos_url": "https://api.github.com/users/gabegma/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/gabegma/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/gabegma/subscriptions",

"type": "User",

"url": "https://api.github.com/users/gabegma"

}

|

[

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

},

{

"color": "DF8D62",

"default": false,

"description": "",

"id": 4614514401,

"name": "hacktoberfest",

"node_id": "LA_kwDODunzps8AAAABEwvm4Q",

"url": "https://api.github.com/repos/huggingface/datasets/labels/hacktoberfest"

}

] |

closed

| false

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/23029765?v=4",

"events_url": "https://api.github.com/users/Mouhanedg56/events{/privacy}",

"followers_url": "https://api.github.com/users/Mouhanedg56/followers",

"following_url": "https://api.github.com/users/Mouhanedg56/following{/other_user}",

"gists_url": "https://api.github.com/users/Mouhanedg56/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/Mouhanedg56",

"id": 23029765,

"login": "Mouhanedg56",

"node_id": "MDQ6VXNlcjIzMDI5NzY1",

"organizations_url": "https://api.github.com/users/Mouhanedg56/orgs",

"received_events_url": "https://api.github.com/users/Mouhanedg56/received_events",

"repos_url": "https://api.github.com/users/Mouhanedg56/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/Mouhanedg56/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Mouhanedg56/subscriptions",

"type": "User",

"url": "https://api.github.com/users/Mouhanedg56"

}

|

[

{

"avatar_url": "https://avatars.githubusercontent.com/u/23029765?v=4",

"events_url": "https://api.github.com/users/Mouhanedg56/events{/privacy}",

"followers_url": "https://api.github.com/users/Mouhanedg56/followers",

"following_url": "https://api.github.com/users/Mouhanedg56/following{/other_user}",

"gists_url": "https://api.github.com/users/Mouhanedg56/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/Mouhanedg56",

"id": 23029765,

"login": "Mouhanedg56",

"node_id": "MDQ6VXNlcjIzMDI5NzY1",

"organizations_url": "https://api.github.com/users/Mouhanedg56/orgs",

"received_events_url": "https://api.github.com/users/Mouhanedg56/received_events",

"repos_url": "https://api.github.com/users/Mouhanedg56/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/Mouhanedg56/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Mouhanedg56/subscriptions",

"type": "User",

"url": "https://api.github.com/users/Mouhanedg56"

}

] | null | 3

| 2022-10-06T18:18:49Z

| 2022-10-07T19:06:02Z

| 2022-10-07T18:40:26Z

|

NONE

| null | null | null |

## Describe the bug

When filtering a dataset twice, where the first result is an empty dataset, the second dataset seems corrupted.

## Steps to reproduce the bug

```python

datasets = load_dataset("glue", "sst2")

dataset_split = datasets['validation']

ds_filter_1 = dataset_split.filter(lambda x: False) # Some filtering condition that leads to an empty dataset

assert ds_filter_1.num_rows == 0

sentences = ds_filter_1['sentence']

assert len(sentences) == 0

ds_filter_2 = ds_filter_1.filter(lambda x: False) # Some other filtering condition

assert ds_filter_2.num_rows == 0

assert 'sentence' in ds_filter_2.column_names

sentences = ds_filter_2['sentence']

```

## Expected results

The last line should be returning an empty list, same as 4 lines above.

## Actual results

The last line currently raises `IndexError: index out of bounds`.

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 2.5.2

- Platform: macOS-11.6.6-x86_64-i386-64bit

- Python version: 3.9.11

- PyArrow version: 7.0.0

- Pandas version: 1.4.1

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 3,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 3,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5085/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5085/timeline

| null |

completed

| false

|

https://api.github.com/repos/huggingface/datasets/issues/5084

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5084/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5084/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5084/events

|

https://github.com/huggingface/datasets/pull/5084

| 1,400,016,229

|

PR_kwDODunzps5AVXwm

| 5,084

|

IterableDataset formatting in numpy/torch/tf/jax

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

}

|

[] |

closed

| false

| null |

[] | null | 3

| 2022-10-06T16:53:38Z

| 2023-09-24T10:06:51Z

| 2022-12-20T17:19:52Z

|

MEMBER

| null | 1

|

{

"diff_url": "https://github.com/huggingface/datasets/pull/5084.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5084",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/5084.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5084"

}

|

This code now returns a numpy array:

```python

from datasets import load_dataset

ds = load_dataset("imagenet-1k", split="train", streaming=True).with_format("np")

print(next(iter(ds))["image"])

```

It also works with "arrow", "pandas", "torch", "tf" and "jax"

### Implementation details:

I'm using the existing code to format an Arrow Table to the right output format for simplicity.

Therefore it's probbaly not the most optimized approach.

For example to output PyTorch tensors it does this for every example:

python data -> arrow table -> numpy extracted data -> pytorch formatted data

### Releasing this feature

Even though I consider this as a bug/inconsistency, this change is a breaking change.

And I'm sure some users were relying on the torch iterable dataset to return PIL Image and used data collators to convert to pytorch.

So I guess this is `datasets` 3.0 ?

### TODO

- [x] merge https://github.com/huggingface/datasets/pull/5072

- [ ] docs

- [ ] tests

Close https://github.com/huggingface/datasets/issues/5083

|

{

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5084/reactions"

}

|

https://api.github.com/repos/huggingface/datasets/issues/5084/timeline

| null | null | true

|

https://api.github.com/repos/huggingface/datasets/issues/5083

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5083/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5083/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5083/events

|

https://github.com/huggingface/datasets/issues/5083

| 1,399,842,514

|

I_kwDODunzps5Tb-bS

| 5,083

|

Support numpy/torch/tf/jax formatting for IterableDataset

|

{

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",