---

pipeline_tag: text-generation
base_model: bigcode/starcoder2-15b-instruct-v0.1
datasets:
- bigcode/self-oss-instruct-sc2-exec-filter-50k
license: bigcode-openrail-m
library_name: transformers
tags:
- code
model-index:
- name: starcoder2-15b-instruct-v0.1
  results:
  - task:
      type: text-generation
    dataset:
      name: LiveCodeBench (code generation)
      type: livecodebench-codegeneration
    metrics:
    - type: pass@1
      value: 20.4
  - task:
      type: text-generation
    dataset:
      name: LiveCodeBench (self repair)
      type: livecodebench-selfrepair
    metrics:
    - type: pass@1
      value: 20.9
  - task:
      type: text-generation
    dataset:
      name: LiveCodeBench (test output prediction)
      type: livecodebench-testoutputprediction
    metrics:
    - type: pass@1
      value: 29.8
  - task:
      type: text-generation
    dataset:
      name: LiveCodeBench (code execution)
      type: livecodebench-codeexecution
    metrics:
    - type: pass@1
      value: 28.1
  - task:
      type: text-generation
    dataset:
      name: HumanEval
      type: humaneval
    metrics:
    - type: pass@1
      value: 72.6
  - task:
      type: text-generation
    dataset:
      name: HumanEval+
      type: humanevalplus
    metrics:
    - type: pass@1
      value: 63.4
  - task:
      type: text-generation
    dataset:
      name: MBPP
      type: mbpp
    metrics:
    - type: pass@1
      value: 75.2
  - task:
      type: text-generation
    dataset:
      name: MBPP+
      type: mbppplus
    metrics:
    - type: pass@1
      value: 61.2
  - task:
      type: text-generation
    dataset:
      name: DS-1000
      type: ds-1000
    metrics:
    - type: pass@1
      value: 40.6

---

# StarCoder2-Instruct-GGUF

- This is a quantized version of [bigcode/starcoder2-15b-instruct-v0.1](https://huggingface.co/bigcode/starcoder2-15b-instruct-v0.1), created using llama.cpp.

## Model Summary

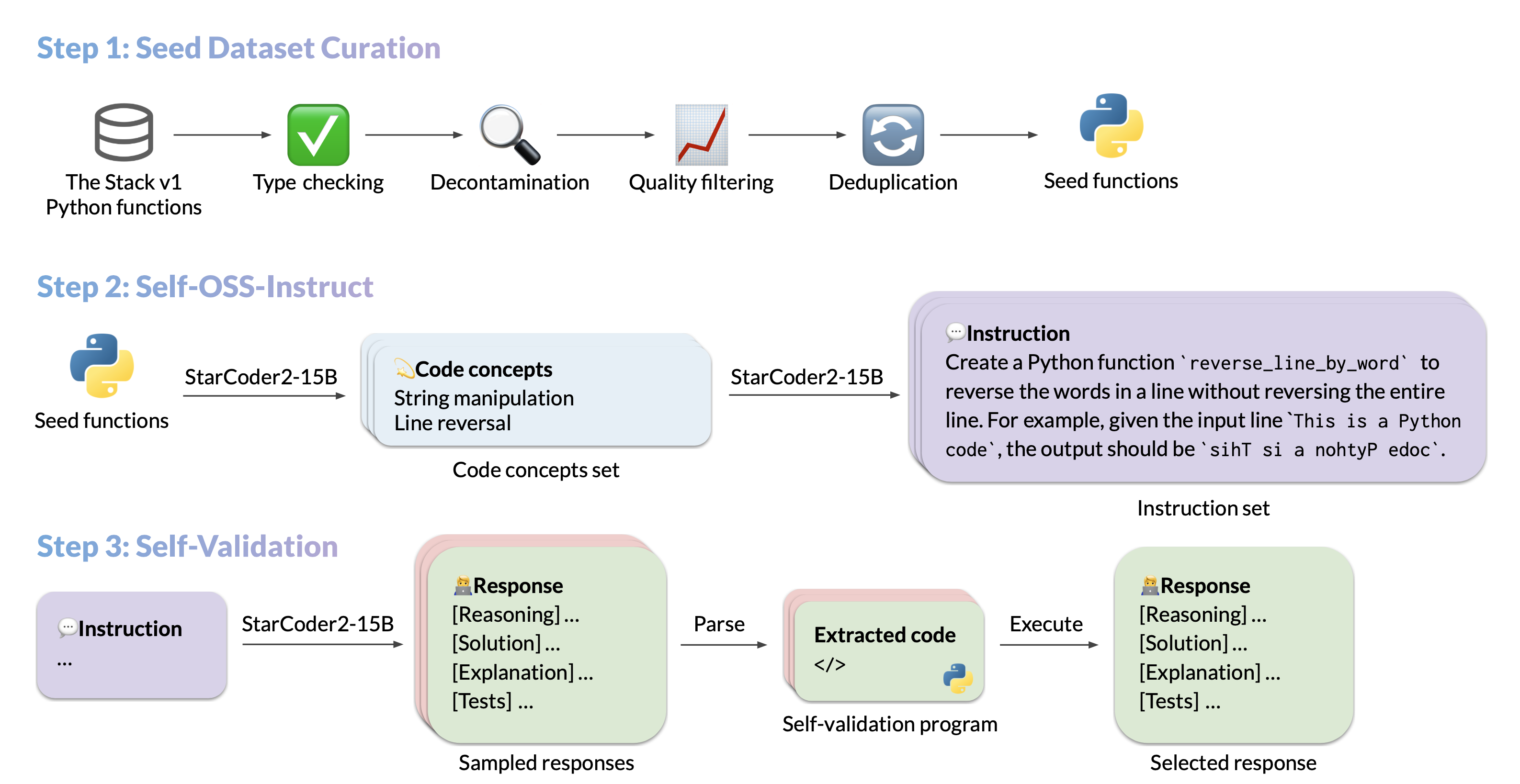

We introduce StarCoder2-15B-Instruct-v0.1, the very first entirely self-aligned code Large Language Model (LLM) trained with a fully permissive and transparent pipeline. Our open-source pipeline uses StarCoder2-15B to generate thousands of instruction-response pairs, which are then used to fine-tune StarCoder2-15B itself, without any human annotations or data distilled from large proprietary LLMs.

- **Model:** [bigcode/starcoder2-15b-instruct-v0.1](https://huggingface.co/bigcode/starcoder2-15b-instruct-v0.1)

- **Code:** [bigcode-project/starcoder2-self-align](https://github.com/bigcode-project/starcoder2-self-align)

- **Dataset:** [bigcode/self-oss-instruct-sc2-exec-filter-50k](https://huggingface.co/datasets/bigcode/self-oss-instruct-sc2-exec-filter-50k/)

- **Authors:**

[Yuxiang Wei](https://yuxiang.cs.illinois.edu),

[Federico Cassano](https://federico.codes/),

[Jiawei Liu](https://jw-liu.xyz),

[Yifeng Ding](https://yifeng-ding.com),

[Naman Jain](https://naman-ntc.github.io),

[Harm de Vries](https://www.harmdevries.com),

[Leandro von Werra](https://twitter.com/lvwerra),

[Arjun Guha](https://www.khoury.northeastern.edu/home/arjunguha/main/home/),

[Lingming Zhang](https://lingming.cs.illinois.edu).

## Use

### Intended use

The model is designed to respond to **coding-related instructions in a single turn**. Instructions in other styles may result in less accurate responses.
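As a rough illustration of single-turn use with this GGUF build, the sketch below formats one instruction and runs it through `llama-cpp-python`. The prompt layout (system sentence, `### Instruction`, `### Response`) is not stated in this card; it is an assumption based on the upstream model's chat template and should be checked against the template embedded in the GGUF file. The model path is hypothetical, and special tokens are left to the runtime.

```python
# Assumed prompt layout; verify against the chat template shipped with the model.
SYSTEM_PROMPT = (
    "You are an exceptionally intelligent coding assistant that consistently "
    "delivers accurate and reliable responses to user instructions."
)


def build_prompt(instruction: str) -> str:
    """Format one instruction as a single-turn prompt (no chat history)."""
    return (
        f"{SYSTEM_PROMPT}\n\n"
        f"### Instruction\n{instruction.strip()}\n\n"
        "### Response\n"
    )


def generate(model_path: str, instruction: str, max_tokens: int = 256) -> str:
    """Run one greedy completion with llama-cpp-python (imported lazily)."""
    from llama_cpp import Llama  # pip install llama-cpp-python

    llm = Llama(model_path=model_path, n_ctx=2048, verbose=False)
    out = llm(
        build_prompt(instruction),
        max_tokens=max_tokens,
        temperature=0.0,   # deterministic decoding
        stop=["###"],      # assumed section marker; stops before a new section starts
    )
    return out["choices"][0]["text"].rstrip()


if __name__ == "__main__":
    # Only the prompt is printed here; call generate() with a real .gguf path,
    # e.g. generate("path/to/model.gguf", "Write a function that ...").
    print(build_prompt("Write a Python function that checks whether a number is prime."))
```

Each call sends exactly one instruction with no prior conversation, which matches the single-turn setting described above.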

### Bias, Risks, and Limitations

StarCoder2-15B-Instruct-v0.1 is primarily finetuned for Python code generation tasks that can be verified through execution, which may lead to certain biases and limitations. For example, the model might not adhere strictly to instructions that dictate the output format. In these situations, it's beneficial to provide a **response prefix** or a **one-shot example** to steer the model’s output. Additionally, the model may have limitations with other programming languages and out-of-domain coding tasks.
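The two steering techniques mentioned above can be sketched as plain string manipulation. The template and helper names here are hypothetical and only illustrate the idea: a response prefix is text appended after the response marker so the model continues from it, and a one-shot example is folded into the instruction so the request stays single-turn.

```python
# Hypothetical single-turn template; the real one may differ.
TEMPLATE = "### Instruction\n{instruction}\n\n### Response\n"


def format_single_turn(instruction: str) -> str:
    """Wrap an instruction in the assumed single-turn template."""
    return TEMPLATE.format(instruction=instruction)


def with_response_prefix(prompt: str, prefix: str) -> str:
    """Append text the model must continue from, pinning the output format."""
    return prompt + prefix


def with_one_shot(instruction: str, example_instruction: str, example_response: str) -> str:
    """Embed one worked example inside the instruction (still a single turn)."""
    return (
        "Follow the format of the example.\n\n"
        f"Example instruction:\n{example_instruction}\n\n"
        f"Example response:\n{example_response}\n\n"
        f"Now do the same for:\n{instruction}"
    )


if __name__ == "__main__":
    # Response prefix: force the answer to start with a given function signature.
    prompt = with_response_prefix(
        format_single_turn("Reverse the order of words in a sentence."),
        "def reverse_words(text: str) -> str:\n",
    )
    print(prompt)

    # One-shot: show the desired output style once, then ask the real question.
    instruction = with_one_shot(
        "Write a function that sums a list.",
        "Write a function that adds two numbers.",
        "def add(a, b):\n    return a + b",
    )
    print(format_single_turn(instruction))
```

When a prefix is used, the generated text continues from it, so the prefix has to be prepended to the model output to obtain the full response.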

The model also inherits the bias, risks, and limitations from its base StarCoder2-15B model. For more information, please refer to the [StarCoder2-15B model card](https://huggingface.co/bigcode/starcoder2-15b).

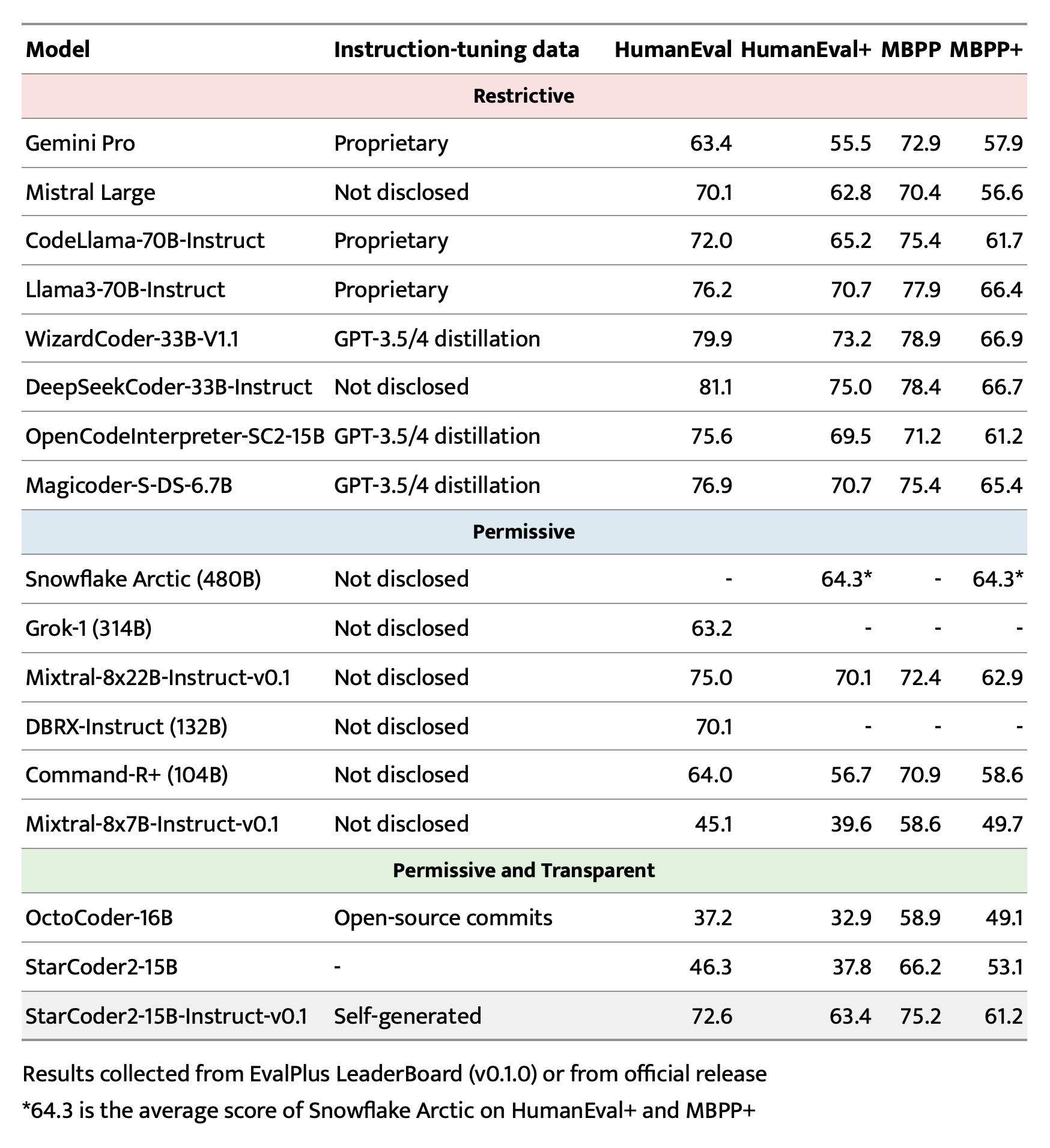

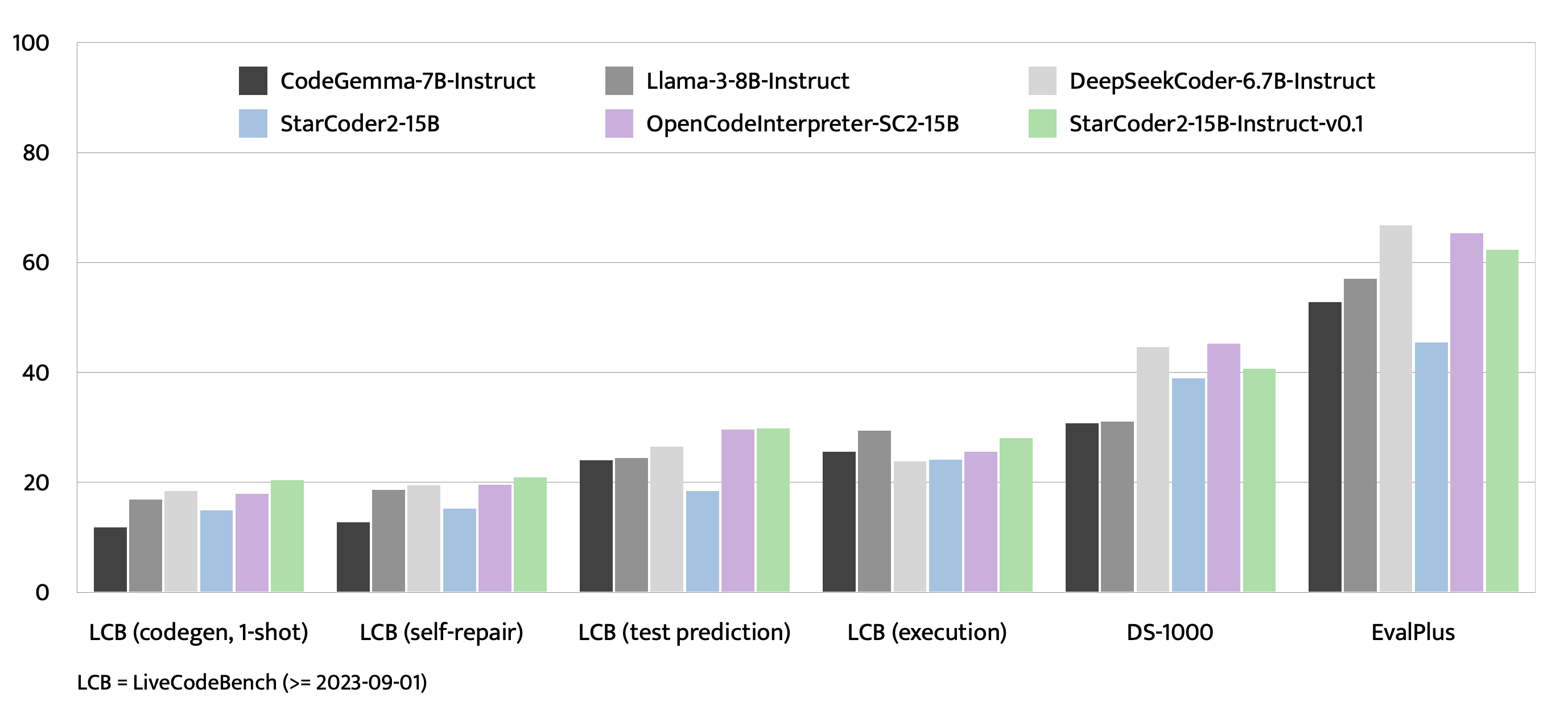

## Evaluation on EvalPlus, LiveCodeBench, and DS-1000
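All scores reported for this model are pass@1. This card does not describe the sampling setup behind them, so the following is only a generic sketch of the commonly used unbiased pass@k estimator, where `n` samples are drawn per problem and `c` of them pass the tests. The function names are illustrative.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Probability that at least one of k samples (out of n, c correct) passes."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: list[tuple[int, int]], k: int = 1) -> float:
    """Average pass@k over problems, as a percentage. results holds (n, c) pairs."""
    return 100.0 * sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # With one sample per problem, pass@1 is simply the fraction of problems solved.
    print(benchmark_pass_at_k([(1, 1), (1, 0), (1, 1), (1, 0)], k=1))
```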

## Training Details

### Hyperparameters

- **Optimizer:** Adafactor

- **Learning rate:** 1e-5

- **Epochs:** 4

- **Batch size:** 64

- **Warmup ratio:** 0.05

- **Scheduler:** Linear

- **Sequence length:** 1280

- **Dropout:** Not applied
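To make the learning-rate settings concrete, here is a minimal sketch of a linear warmup followed by linear decay using the values listed above. It follows the usual shape of such schedules and is not taken from the training code. The step count assumes roughly 50,000 training examples, a figure inferred from the dataset name rather than stated in this card.

```python
import math


def linear_warmup_decay_lr(
    step: int,
    total_steps: int,
    peak_lr: float = 1e-5,
    warmup_ratio: float = 0.05,
) -> float:
    """Learning rate at a given optimizer step: ramp up linearly, then decay to 0."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    remaining = max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))
    return peak_lr * remaining


# Assumption: about 50,000 examples (from the dataset name), batch size 64, 4 epochs.
steps_per_epoch = math.ceil(50_000 / 64)
total_steps = steps_per_epoch * 4

if __name__ == "__main__":
    for s in (0, 50, 157, total_steps // 2, total_steps):
        print(s, linear_warmup_decay_lr(s, total_steps))
```

Under these assumptions the warmup covers about the first 5% of optimizer steps, after which the rate falls linearly to zero by the end of the fourth epoch.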

### Hardware

1 x NVIDIA A100 80GB

## Resources

- **Model:** [bigcode/starcoder2-15b-instruct-v0.1](https://huggingface.co/bigcode/starcoder2-15b-instruct-v0.1)

- **Code:** [bigcode-project/starcoder2-self-align](https://github.com/bigcode-project/starcoder2-self-align)

- **Dataset:** [bigcode/self-oss-instruct-sc2-exec-filter-50k](https://huggingface.co/datasets/bigcode/self-oss-instruct-sc2-exec-filter-50k/)
