license: cc-by-nc-4.0

CapSpeech

Dataset used in the paper "CapSpeech: Enabling Downstream Applications in Style-Captioned Text-to-Speech".

Please refer to the CapSpeech repository for more details.

Overview

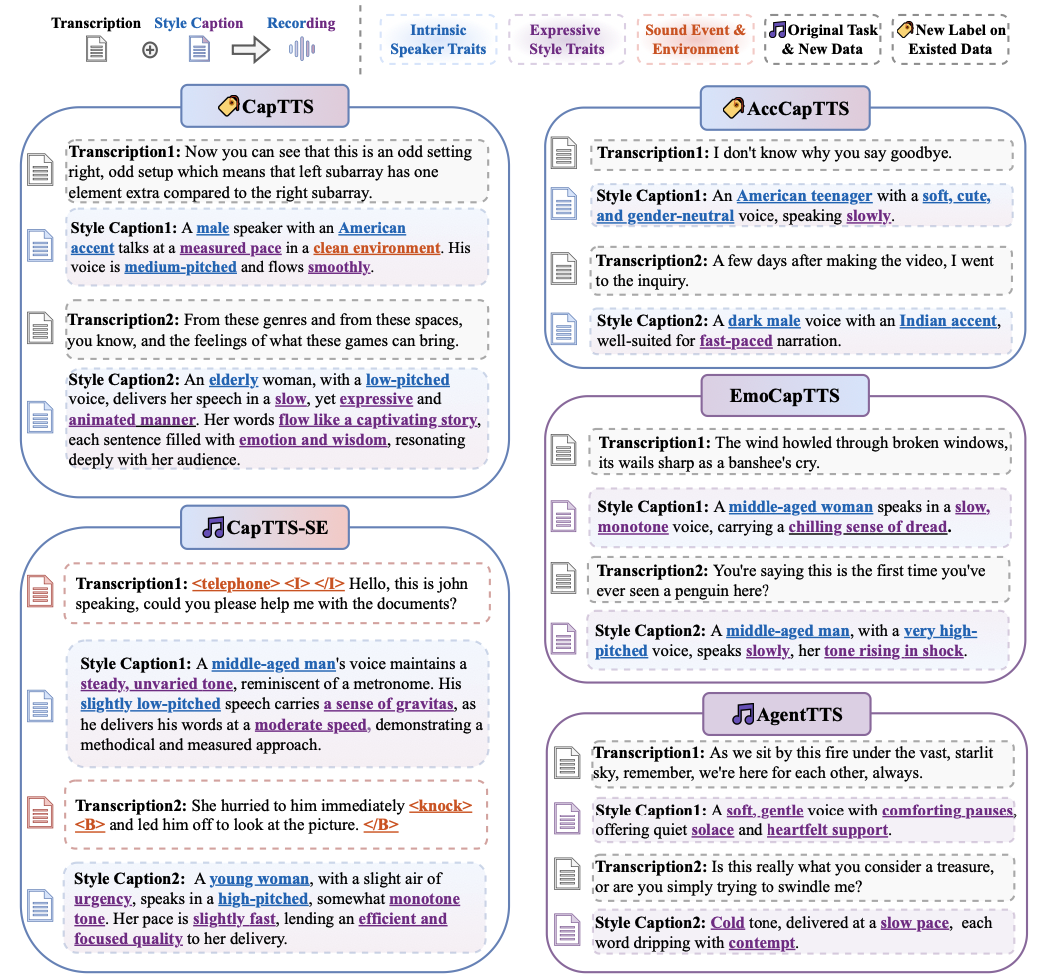

🔥 CapSpeech is a new benchmark for style-captioned TTS (CapTTS) tasks. These include style-captioned text-to-speech synthesis with sound effects (CapTTS-SE), accent-captioned TTS (AccCapTTS), emotion-captioned TTS (EmoCapTTS), and text-to-speech synthesis for chat agents (AgentTTS). CapSpeech comprises over 10 million machine-annotated audio-caption pairs and nearly 0.36 million human-annotated audio-caption pairs. Three new speech datasets were designed specifically for the CapTTS-SE and AgentTTS tasks to extend the benchmark's coverage of real-world scenarios.

License

⚠️ All resources are under the CC BY-NC 4.0 license.

Citation

If you use this dataset or the models, please cite our work as follows:

@misc{wang2025capspeechenablingdownstreamapplications,
  title={CapSpeech: Enabling Downstream Applications in Style-Captioned Text-to-Speech},
  author={Helin Wang and Jiarui Hai and Dading Chong and Karan Thakkar and Tiantian Feng and Dongchao Yang and Junhyeok Lee and Laureano Moro Velazquez and Jesus Villalba and Zengyi Qin and Shrikanth Narayanan and Mounya Elhiali and Najim Dehak},
  year={2025},
  eprint={2506.02863},
  archivePrefix={arXiv},
  primaryClass={eess.AS},
  url={https://arxiv.org/abs/2506.02863},
}