Upload 33 files

- README.md +61 -3

- adapter_config.json +26 -0

- adapter_model.safetensors +3 -0

- added_tokens.json +16 -0

- all_results.json +12 -0

- checkpoint-208/README.md +202 -0

- checkpoint-208/adapter_config.json +26 -0

- checkpoint-208/adapter_model.safetensors +3 -0

- checkpoint-208/added_tokens.json +16 -0

- checkpoint-208/merges.txt +0 -0

- checkpoint-208/optimizer.pt +3 -0

- checkpoint-208/rng_state.pth +3 -0

- checkpoint-208/scheduler.pt +3 -0

- checkpoint-208/special_tokens_map.json +31 -0

- checkpoint-208/tokenizer.json +0 -0

- checkpoint-208/tokenizer_config.json +143 -0

- checkpoint-208/trainer_state.json +173 -0

- checkpoint-208/training_args.bin +3 -0

- checkpoint-208/vocab.json +0 -0

- eval_results.json +7 -0

- merges.txt +0 -0

- preprocessor_config.json +29 -0

- runs/Sep03_06-03-57_peter-rog/events.out.tfevents.1725336255.peter-rog.178069.0 +3 -0

- runs/Sep03_06-03-57_peter-rog/events.out.tfevents.1725340350.peter-rog.178069.1 +3 -0

- special_tokens_map.json +31 -0

- tokenizer.json +0 -0

- tokenizer_config.json +143 -0

- train_results.json +8 -0

- trainer_log.jsonl +21 -0

- trainer_state.json +182 -0

- training_args.bin +3 -0

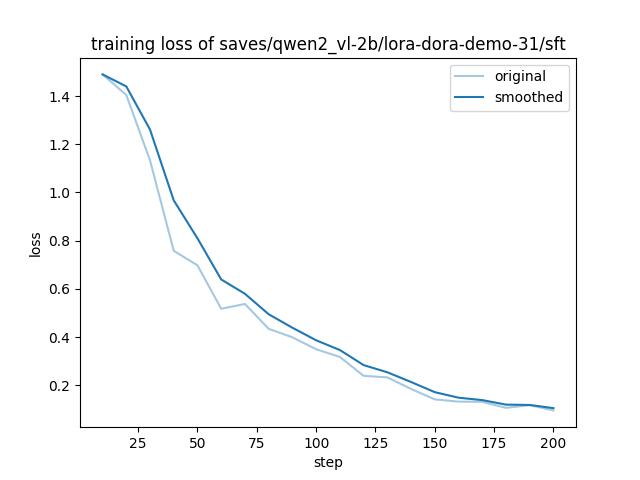

- training_loss.png +0 -0

- vocab.json +0 -0

README.md

CHANGED

@@ -1,3 +1,61 @@
----
-
-
+---
+base_model: Qwen/Qwen2-VL-2B-Instruct
+library_name: peft
+license: other
+tags:
+- llama-factory
+- lora
+- generated_from_trainer
+model-index:
+- name: sft
+  results: []
+---
+
+<!-- This model card has been generated automatically according to the information the Trainer had access to. You
+should probably proofread and complete it, then remove this comment. -->
+
+# sft
+
+This model is a fine-tuned version of [Qwen/Qwen2-VL-2B-Instruct](https://huggingface.co/Qwen/Qwen2-VL-2B-Instruct) on the dora_demo_31 and the identity datasets.
+It achieves the following results on the evaluation set:
+- Loss: 0.5046
+
+## Model description
+
+More information needed
+
+## Intended uses & limitations
+
+More information needed
+
+## Training and evaluation data
+
+More information needed
+
+## Training procedure
+
+### Training hyperparameters
+
+The following hyperparameters were used during training:
+- learning_rate: 0.0001
+- train_batch_size: 4
+- eval_batch_size: 1
+- seed: 42
+- gradient_accumulation_steps: 8
+- total_train_batch_size: 32
+- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08
+- lr_scheduler_type: cosine
+- lr_scheduler_warmup_ratio: 0.1
+- num_epochs: 16
+
+### Training results
+
+
+
+### Framework versions
+
+- PEFT 0.12.0
+- Transformers 4.45.0.dev0
+- Pytorch 2.4.0+cu121
+- Datasets 2.21.0
+- Tokenizers 0.19.1
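The hyperparameters in the README diff can be cross-checked against the learning rates logged in checkpoint-208/trainer_state.json. The sketch below is a plain-Python reconstruction, not the Trainer's own code: it assumes a single device (4 x 8 = 32 matches total_train_batch_size), a warmup length of ceil(0.1 x 208) = 21 steps, and a half-cosine decay to zero after warmup. The names `effective_batch_size` and `lr_at` are illustrative only.

```python
import math

# Values copied from the README hyperparameters and trainer_state.json.
LEARNING_RATE = 1e-4
PER_DEVICE_BATCH = 4
GRAD_ACCUM_STEPS = 8
WARMUP_RATIO = 0.1
TOTAL_STEPS = 208  # global_step recorded for checkpoint-208


def effective_batch_size(num_devices: int = 1) -> int:
    """Examples consumed per optimizer step (single device is an assumption)."""
    return PER_DEVICE_BATCH * GRAD_ACCUM_STEPS * num_devices


def lr_at(step: int) -> float:
    """Linear warmup followed by a half-cosine decay to zero."""
    warmup_steps = math.ceil(TOTAL_STEPS * WARMUP_RATIO)  # 21 for this run
    if step < warmup_steps:
        return LEARNING_RATE * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, TOTAL_STEPS - warmup_steps)
    return LEARNING_RATE * 0.5 * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    print("effective batch size:", effective_batch_size())
    for step in (10, 20, 30, 130):
        print(step, lr_at(step))
```

Under these assumptions the values at steps 10, 20, 30 and 130 agree with the logged `learning_rate` entries to within floating-point noise, which suggests the run used 21 warmup steps out of 208.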

adapter_config.json

ADDED

@@ -0,0 +1,26 @@
+{
+  "alpha_pattern": {},
+  "auto_mapping": null,
+  "base_model_name_or_path": "Qwen/Qwen2-VL-2B-Instruct",
+  "bias": "none",
+  "fan_in_fan_out": false,
+  "inference_mode": true,
+  "init_lora_weights": true,
+  "layer_replication": null,
+  "layers_pattern": null,
+  "layers_to_transform": null,
+  "loftq_config": {},
+  "lora_alpha": 16,
+  "lora_dropout": 0.0,
+  "megatron_config": null,
+  "megatron_core": "megatron.core",
+  "modules_to_save": null,
+  "peft_type": "LORA",
+  "r": 8,
+  "rank_pattern": {},
+  "revision": null,
+  "target_modules": "^(?!.*visual).*(?:down_proj|k_proj|o_proj|up_proj|gate_proj|v_proj|q_proj).*",
+  "task_type": "CAUSAL_LM",
+  "use_dora": false,
+  "use_rslora": false
+}
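The `target_modules` value in adapter_config.json is a regular expression, and its negative lookahead is easy to misread. The sketch below uses only the standard `re` module to show which module names the pattern would select, assuming the string is applied as a full match against each dotted module name. The module names in the usage example are hypothetical and are not read from the actual model; `is_targeted` and `lora_scaling` are illustrative helpers. The scaling factor is computed as `lora_alpha / r`, which is the usual LoRA convention when `use_rslora` is false.

```python
import re

# Copied from adapter_config.json.
TARGET_MODULES = (
    r"^(?!.*visual).*"
    r"(?:down_proj|k_proj|o_proj|up_proj|gate_proj|v_proj|q_proj).*"
)
LORA_ALPHA = 16
LORA_RANK = 8


def is_targeted(module_name: str) -> bool:
    """True if the whole module name matches the target_modules regex."""
    return re.fullmatch(TARGET_MODULES, module_name) is not None


def lora_scaling() -> float:
    """Standard LoRA scaling factor (use_rslora is false in this config)."""
    return LORA_ALPHA / LORA_RANK


if __name__ == "__main__":
    # Hypothetical module names, for illustration only.
    for name in (
        "model.layers.0.self_attn.q_proj",
        "model.layers.0.mlp.gate_proj",
        "visual.blocks.0.attn.q_proj",
        "lm_head",
    ):
        print(f"{name}: {is_targeted(name)}")
    print("scaling:", lora_scaling())
```

The lookahead `(?!.*visual)` rejects any name containing `visual`, so under this reading the adapter touches only the language-model projection layers and leaves the vision tower untouched.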

adapter_model.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:003d7fea1b63ba5ccc728d83cbc41b2ffd675f9d850f3932206e753ad980e55c
+size 36981072
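The three added lines are a Git LFS pointer, not the weights themselves: the repository stores only the spec version, the SHA-256 of the real file, and its size in bytes. A minimal sketch of reading such a pointer follows; `parse_lfs_pointer` is a hypothetical helper written for this illustration and is not part of any Git LFS tooling.

```python
# Pointer text copied from the adapter_model.safetensors diff.
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:003d7fea1b63ba5ccc728d83cbc41b2ffd675f9d850f3932206e753ad980e55c
size 36981072
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split each 'key value' line and break the oid into algorithm and digest."""
    fields = dict(
        line.split(" ", 1) for line in text.splitlines() if line.strip()
    )
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


if __name__ == "__main__":
    pointer = parse_lfs_pointer(POINTER_TEXT)
    size_mib = pointer["size_bytes"] / 2**20
    print(pointer["hash_algo"], pointer["digest"][:12], f"{size_mib:.1f} MiB")
```

The size field puts the LoRA adapter at roughly 35 MiB, which is consistent with a rank-8 adapter on a 2B-parameter base model rather than a full set of weights.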

added_tokens.json

ADDED

@@ -0,0 +1,16 @@
+{
+  "<|box_end|>": 151649,
+  "<|box_start|>": 151648,
+  "<|endoftext|>": 151643,
+  "<|im_end|>": 151645,
+  "<|im_start|>": 151644,
+  "<|image_pad|>": 151655,
+  "<|object_ref_end|>": 151647,
+  "<|object_ref_start|>": 151646,
+  "<|quad_end|>": 151651,
+  "<|quad_start|>": 151650,
+  "<|video_pad|>": 151656,
+  "<|vision_end|>": 151653,
+  "<|vision_pad|>": 151654,
+  "<|vision_start|>": 151652
+}
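Because added_tokens.json is sorted by token string rather than by id, the layout of the id range is not obvious at a glance. The sketch below inverts the mapping and checks that the ids form one contiguous block; the helper names `id_to_token` and `is_contiguous` are illustrative and not taken from any library.

```python
# Mapping copied from added_tokens.json.
ADDED_TOKENS = {
    "<|box_end|>": 151649,
    "<|box_start|>": 151648,
    "<|endoftext|>": 151643,
    "<|im_end|>": 151645,
    "<|im_start|>": 151644,
    "<|image_pad|>": 151655,
    "<|object_ref_end|>": 151647,
    "<|object_ref_start|>": 151646,
    "<|quad_end|>": 151651,
    "<|quad_start|>": 151650,
    "<|video_pad|>": 151656,
    "<|vision_end|>": 151653,
    "<|vision_pad|>": 151654,
    "<|vision_start|>": 151652,
}


def id_to_token(added: dict) -> dict:
    """Invert the token -> id mapping."""
    return {token_id: token for token, token_id in added.items()}


def is_contiguous(added: dict) -> bool:
    """True if the ids cover every integer between the smallest and largest."""
    ids = sorted(added.values())
    return ids == list(range(ids[0], ids[-1] + 1))


if __name__ == "__main__":
    for token_id, token in sorted(id_to_token(ADDED_TOKENS).items()):
        print(token_id, token)
    print("contiguous:", is_contiguous(ADDED_TOKENS))
```

Sorted by id, the fourteen tokens occupy 151643 through 151656 with no gaps, starting with `<|endoftext|>` and ending with `<|video_pad|>`.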

all_results.json

ADDED

@@ -0,0 +1,12 @@
+{
+  "epoch": 16.0,
+  "eval_loss": 0.5046026110649109,
+  "eval_runtime": 20.0388,
+  "eval_samples_per_second": 2.345,
+  "eval_steps_per_second": 2.345,
+  "total_flos": 3.485945501879501e+16,
+  "train_loss": 0.457215073876656,
+  "train_runtime": 4064.4346,
+  "train_samples_per_second": 1.638,
+  "train_steps_per_second": 0.051
+}
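A few derived quantities make all_results.json easier to interpret. The sketch below treats `eval_loss` as a mean per-token cross-entropy in nats (an assumption about how the loss was computed) and recovers approximate dataset sizes from the rounded throughput figures, so the counts are estimates rather than logged values. All function names are illustrative.

```python
import math

# Values copied from all_results.json.
RESULTS = {
    "epoch": 16.0,
    "eval_loss": 0.5046026110649109,
    "eval_runtime": 20.0388,
    "eval_samples_per_second": 2.345,
    "train_runtime": 4064.4346,
    "train_samples_per_second": 1.638,
}


def perplexity(loss: float) -> float:
    """exp(loss), assuming the loss is a mean cross-entropy in nats."""
    return math.exp(loss)


def approx_eval_examples(results: dict) -> int:
    """Estimate the evaluation set size from runtime and throughput."""
    return round(results["eval_runtime"] * results["eval_samples_per_second"])


def approx_train_examples_per_epoch(results: dict) -> int:
    """Estimate examples per epoch from total throughput over all epochs."""
    total = results["train_runtime"] * results["train_samples_per_second"]
    return round(total / results["epoch"])


if __name__ == "__main__":
    print("perplexity:", round(perplexity(RESULTS["eval_loss"]), 3))
    print("eval examples (approx):", approx_eval_examples(RESULTS))
    print("train examples per epoch (approx):",
          approx_train_examples_per_epoch(RESULTS))
    print("train minutes:", round(RESULTS["train_runtime"] / 60, 1))
```

The per-epoch estimate of about 416 examples is consistent with the rest of the commit: at a total batch size of 32 that is 13 optimizer steps per epoch, and 13 x 16 epochs gives the 208 steps in the checkpoint name.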

checkpoint-208/README.md

ADDED

@@ -0,0 +1,202 @@
+---
+base_model: Qwen/Qwen2-VL-2B-Instruct
+library_name: peft
+---
+
+# Model Card for Model ID
+
+<!-- Provide a quick summary of what the model is/does. -->
+
+
+
+## Model Details
+
+### Model Description
+
+<!-- Provide a longer summary of what this model is. -->
+
+
+
+- **Developed by:** [More Information Needed]
+- **Funded by [optional]:** [More Information Needed]
+- **Shared by [optional]:** [More Information Needed]
+- **Model type:** [More Information Needed]
+- **Language(s) (NLP):** [More Information Needed]
+- **License:** [More Information Needed]
+- **Finetuned from model [optional]:** [More Information Needed]
+
+### Model Sources [optional]
+
+<!-- Provide the basic links for the model. -->
+
+- **Repository:** [More Information Needed]
+- **Paper [optional]:** [More Information Needed]
+- **Demo [optional]:** [More Information Needed]
+
+## Uses
+
+<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->
+
+### Direct Use
+
+<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->
+
+[More Information Needed]
+
+### Downstream Use [optional]
+
+<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->
+
+[More Information Needed]
+
+### Out-of-Scope Use
+
+<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->
+
+[More Information Needed]
+
+## Bias, Risks, and Limitations
+
+<!-- This section is meant to convey both technical and sociotechnical limitations. -->
+
+[More Information Needed]
+
+### Recommendations
+
+<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->
+
+Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.
+
+## How to Get Started with the Model
+
+Use the code below to get started with the model.
+
+[More Information Needed]
+
+## Training Details
+
+### Training Data
+
+<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->
+
+[More Information Needed]
+
+### Training Procedure
+
+<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->
+
+#### Preprocessing [optional]
+
+[More Information Needed]
+
+
+#### Training Hyperparameters
+
+- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->
+
+#### Speeds, Sizes, Times [optional]
+
+<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->
+
+[More Information Needed]
+
+## Evaluation
+
+<!-- This section describes the evaluation protocols and provides the results. -->
+
+### Testing Data, Factors & Metrics
+
+#### Testing Data
+
+<!-- This should link to a Dataset Card if possible. -->
+
+[More Information Needed]
+
+#### Factors
+
+<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->
+
+[More Information Needed]
+
+#### Metrics
+
+<!-- These are the evaluation metrics being used, ideally with a description of why. -->
+
+[More Information Needed]
+
+### Results
+
+[More Information Needed]
+
+#### Summary
+
+
+
+## Model Examination [optional]
+
+<!-- Relevant interpretability work for the model goes here -->
+
+[More Information Needed]
+
+## Environmental Impact
+
+<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->
+
+Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).
+
+- **Hardware Type:** [More Information Needed]
+- **Hours used:** [More Information Needed]
+- **Cloud Provider:** [More Information Needed]
+- **Compute Region:** [More Information Needed]
+- **Carbon Emitted:** [More Information Needed]
+
+## Technical Specifications [optional]
+
+### Model Architecture and Objective
+
+[More Information Needed]
+
+### Compute Infrastructure
+
+[More Information Needed]
+
+#### Hardware
+
+[More Information Needed]
+
+#### Software
+
+[More Information Needed]
+
+## Citation [optional]
+
+<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->
+
+**BibTeX:**
+
+[More Information Needed]
+
+**APA:**
+
+[More Information Needed]
+
+## Glossary [optional]
+
+<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->
+
+[More Information Needed]
+
+## More Information [optional]
+
+[More Information Needed]
+
+## Model Card Authors [optional]
+
+[More Information Needed]
+
+## Model Card Contact
+
+[More Information Needed]
+### Framework versions
+
+- PEFT 0.12.0

checkpoint-208/adapter_config.json

ADDED

@@ -0,0 +1,26 @@
+{
+  "alpha_pattern": {},
+  "auto_mapping": null,
+  "base_model_name_or_path": "Qwen/Qwen2-VL-2B-Instruct",
+  "bias": "none",
+  "fan_in_fan_out": false,
+  "inference_mode": true,
+  "init_lora_weights": true,
+  "layer_replication": null,
+  "layers_pattern": null,
+  "layers_to_transform": null,
+  "loftq_config": {},
+  "lora_alpha": 16,
+  "lora_dropout": 0.0,
+  "megatron_config": null,
+  "megatron_core": "megatron.core",
+  "modules_to_save": null,
+  "peft_type": "LORA",
+  "r": 8,
+  "rank_pattern": {},
+  "revision": null,
+  "target_modules": "^(?!.*visual).*(?:down_proj|k_proj|o_proj|up_proj|gate_proj|v_proj|q_proj).*",
+  "task_type": "CAUSAL_LM",
+  "use_dora": false,
+  "use_rslora": false
+}

checkpoint-208/adapter_model.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:003d7fea1b63ba5ccc728d83cbc41b2ffd675f9d850f3932206e753ad980e55c
+size 36981072

checkpoint-208/added_tokens.json

ADDED

@@ -0,0 +1,16 @@
+{
+  "<|box_end|>": 151649,
+  "<|box_start|>": 151648,
+  "<|endoftext|>": 151643,
+  "<|im_end|>": 151645,
+  "<|im_start|>": 151644,
+  "<|image_pad|>": 151655,
+  "<|object_ref_end|>": 151647,
+  "<|object_ref_start|>": 151646,
+  "<|quad_end|>": 151651,
+  "<|quad_start|>": 151650,
+  "<|video_pad|>": 151656,
+  "<|vision_end|>": 151653,
+  "<|vision_pad|>": 151654,
+  "<|vision_start|>": 151652
+}

checkpoint-208/merges.txt

ADDED

The diff for this file is too large to render.

checkpoint-208/optimizer.pt

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:b2a729ca72aa79ef785f7ab3a36b46f357c6449e6db061230aa8b9f096a9e1a7
+size 74188650

checkpoint-208/rng_state.pth

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:52cca5856c568bc52c683b690919168fa27bfbdfefc6e0a62355afa6011157c3
+size 14244

checkpoint-208/scheduler.pt

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:9e84653c32772b6ebe38bc1fa88233f076b0acccd83473b0e19c56f7f06105d5
+size 1064

checkpoint-208/special_tokens_map.json

ADDED

@@ -0,0 +1,31 @@
+{
+  "additional_special_tokens": [
+    "<|im_start|>",
+    "<|im_end|>",
+    "<|object_ref_start|>",
+    "<|object_ref_end|>",
+    "<|box_start|>",
+    "<|box_end|>",
+    "<|quad_start|>",
+    "<|quad_end|>",
+    "<|vision_start|>",
+    "<|vision_end|>",
+    "<|vision_pad|>",
+    "<|image_pad|>",
+    "<|video_pad|>"
+  ],
+  "eos_token": {
+    "content": "<|im_end|>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": {
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  }
+}

checkpoint-208/tokenizer.json

ADDED

The diff for this file is too large to render.

checkpoint-208/tokenizer_config.json

ADDED

@@ -0,0 +1,143 @@
+{
+  "add_prefix_space": false,
+  "added_tokens_decoder": {
+    "151643": {
+      "content": "<|endoftext|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151644": {
+      "content": "<|im_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151645": {
+      "content": "<|im_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151646": {
+      "content": "<|object_ref_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151647": {
+      "content": "<|object_ref_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151648": {
+      "content": "<|box_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151649": {
+      "content": "<|box_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151650": {
+      "content": "<|quad_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151651": {
+      "content": "<|quad_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151652": {
+      "content": "<|vision_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151653": {
+      "content": "<|vision_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151654": {
+      "content": "<|vision_pad|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151655": {
+      "content": "<|image_pad|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151656": {
+      "content": "<|video_pad|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    }
+  },
+  "additional_special_tokens": [
+    "<|im_start|>",
+    "<|im_end|>",
+    "<|object_ref_start|>",
+    "<|object_ref_end|>",
+    "<|box_start|>",
+    "<|box_end|>",
+    "<|quad_start|>",
+    "<|quad_end|>",
+    "<|vision_start|>",
+    "<|vision_end|>",
+    "<|vision_pad|>",
+    "<|image_pad|>",
+    "<|video_pad|>"
+  ],
+  "bos_token": null,
+  "chat_template": "{% set system_message = 'You are a helpful assistant.' %}{% if messages[0]['role'] == 'system' %}{% set loop_messages = messages[1:] %}{% set system_message = messages[0]['content'] %}{% else %}{% set loop_messages = messages %}{% endif %}{% if system_message is defined %}{{ '<|im_start|>system\n' + system_message + '<|im_end|>\n' }}{% endif %}{% for message in loop_messages %}{% set content = message['content'] %}{% if message['role'] == 'user' %}{{ '<|im_start|>user\n' + content + '<|im_end|>\n<|im_start|>assistant\n' }}{% elif message['role'] == 'assistant' %}{{ content + '<|im_end|>' + '\n' }}{% endif %}{% endfor %}",
+  "clean_up_tokenization_spaces": false,
+  "eos_token": "<|im_end|>",
+  "errors": "replace",
+  "model_max_length": 32768,
+  "pad_token": "<|endoftext|>",
+  "padding_side": "right",
+  "split_special_tokens": false,
+  "tokenizer_class": "Qwen2Tokenizer",
+  "unk_token": null
+}
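The `chat_template` entry in tokenizer_config.json is a single-line Jinja template, which makes the prompt layout hard to read. The sketch below is a hand-written Python equivalent, not the tokenizer's own rendering path: `render_chat` is a hypothetical helper that follows the same branches (default system message, user turns that open an assistant turn, assistant turns closed with `<|im_end|>`). Unlike the template, it also tolerates an empty message list.

```python
DEFAULT_SYSTEM = "You are a helpful assistant."


def render_chat(messages: list) -> str:
    """Render messages the way the chat_template string lays them out."""
    system_message = DEFAULT_SYSTEM
    loop_messages = messages
    # A leading system message replaces the default and is not repeated.
    if messages and messages[0]["role"] == "system":
        system_message = messages[0]["content"]
        loop_messages = messages[1:]

    parts = [f"<|im_start|>system\n{system_message}<|im_end|>\n"]
    for message in loop_messages:
        content = message["content"]
        if message["role"] == "user":
            # Each user turn also opens the assistant turn that follows it.
            parts.append(
                f"<|im_start|>user\n{content}<|im_end|>\n"
                "<|im_start|>assistant\n"
            )
        elif message["role"] == "assistant":
            parts.append(f"{content}<|im_end|>\n")
    return "".join(parts)


if __name__ == "__main__":
    print(render_chat([{"role": "user", "content": "Who are you?"}]))
```

One consequence of this layout is that the generation prompt is always appended after a user turn, so a conversation ending on a user message is ready for generation without any extra flag.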

checkpoint-208/trainer_state.json

ADDED

|

@@ -0,0 +1,173 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

+ {
+   "best_metric": null,
+   "best_model_checkpoint": null,
+   "epoch": 16.0,
+   "eval_steps": 500,
+   "global_step": 208,
+   "is_hyper_param_search": false,
+   "is_local_process_zero": true,
+   "is_world_process_zero": true,
+   "log_history": [
+     {
+       "epoch": 0.7692307692307693,
+       "grad_norm": 1.4849790334701538,
+       "learning_rate": 4.761904761904762e-05,
+       "loss": 1.4899,
+       "step": 10
+     },
+     {
+       "epoch": 1.5384615384615383,
+       "grad_norm": 1.4494893550872803,
+       "learning_rate": 9.523809523809524e-05,
+       "loss": 1.4047,
+       "step": 20
+     },
+     {
+       "epoch": 2.3076923076923075,
+       "grad_norm": 1.1887791156768799,
+       "learning_rate": 9.94295546780682e-05,
+       "loss": 1.1335,
+       "step": 30
+     },
+     {
+       "epoch": 3.076923076923077,
+       "grad_norm": 2.6606452465057373,
+       "learning_rate": 9.747435008912438e-05,
+       "loss": 0.758,
+       "step": 40
+     },
+     {
+       "epoch": 3.8461538461538463,
+       "grad_norm": 2.238654136657715,
+       "learning_rate": 9.418238419956484e-05,
+       "loss": 0.6976,
+       "step": 50
+     },
+     {
+       "epoch": 4.615384615384615,
+       "grad_norm": 2.202763080596924,
+       "learning_rate": 8.964635069757802e-05,
+       "loss": 0.5176,
+       "step": 60
+     },
+     {
+       "epoch": 5.384615384615385,
+       "grad_norm": 1.5672999620437622,
+       "learning_rate": 8.399397316510596e-05,
+       "loss": 0.5374,
+       "step": 70
+     },
+     {
+       "epoch": 6.153846153846154,
+       "grad_norm": 1.7563928365707397,
+       "learning_rate": 7.738440869493018e-05,
+       "loss": 0.4344,
+       "step": 80
+     },
+     {
+       "epoch": 6.923076923076923,
+       "grad_norm": 1.8594719171524048,
+       "learning_rate": 7.000376641716133e-05,
+       "loss": 0.3991,
+       "step": 90
+     },
+     {
+       "epoch": 7.6923076923076925,
+       "grad_norm": 1.8146908283233643,
+       "learning_rate": 6.205986712243875e-05,
+       "loss": 0.3497,
+       "step": 100
+     },
+     {
+       "epoch": 8.461538461538462,
+       "grad_norm": 1.8267848491668701,
+       "learning_rate": 5.377639153800229e-05,
+       "loss": 0.3176,
+       "step": 110
+     },
+     {
+       "epoch": 9.23076923076923,
+       "grad_norm": 1.6012214422225952,
+       "learning_rate": 4.5386582026834906e-05,
+       "loss": 0.2394,
+       "step": 120
+     },
+     {
+       "epoch": 10.0,
+       "grad_norm": 2.043828010559082,
+       "learning_rate": 3.712667505458622e-05,
+       "loss": 0.2328,
+       "step": 130
+     },
+     {
+       "epoch": 10.76923076923077,
+       "grad_norm": 2.1970419883728027,
+       "learning_rate": 2.9229249349905684e-05,
+       "loss": 0.1849,
+       "step": 140
+     },
+     {
+       "epoch": 11.538461538461538,
+       "grad_norm": 1.2817729711532593,
+       "learning_rate": 2.1916677057681785e-05,
+       "loss": 0.1416,
+       "step": 150
+     },
+     {
+       "epoch": 12.307692307692308,
+       "grad_norm": 1.8149962425231934,
+       "learning_rate": 1.5394862284655264e-05,
+       "loss": 0.1323,
+       "step": 160
+     },
+     {
+       "epoch": 13.076923076923077,
+       "grad_norm": 2.4009556770324707,
+       "learning_rate": 9.847443344610297e-06,
+       "loss": 0.1315,
+       "step": 170
+     },
+     {
+       "epoch": 13.846153846153847,
+       "grad_norm": 1.9544780254364014,
+       "learning_rate": 5.430621953703785e-06,
+       "loss": 0.1065,
+       "step": 180
+     },
+     {
+       "epoch": 14.615384615384615,
+       "grad_norm": 1.0407898426055908,
+       "learning_rate": 2.268764973114684e-06,
+       "loss": 0.1174,
+       "step": 190
+     },
+     {
+       "epoch": 15.384615384615385,
+       "grad_norm": 3.4905996322631836,
+       "learning_rate": 4.5090254315662826e-07,
+       "loss": 0.0959,
+       "step": 200
+     }
+   ],
+   "logging_steps": 10,
+   "max_steps": 208,
+   "num_input_tokens_seen": 0,
+   "num_train_epochs": 16,
+   "save_steps": 500,
+   "stateful_callbacks": {
+     "TrainerControl": {
+       "args": {
+         "should_epoch_stop": false,
+         "should_evaluate": false,
+         "should_log": false,
+         "should_save": true,
+         "should_training_stop": true
+       },
+       "attributes": {}
+     }
+   },
+   "total_flos": 3.485945501879501e+16,
+   "train_batch_size": 4,
+   "trial_name": null,
+   "trial_params": null
+ }

|
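The logged `learning_rate` values in the trainer state above look consistent with a linear warmup followed by cosine decay. The sketch below checks that reading against a few of the logged entries. The peak rate of 1e-4 and the 21 warmup steps are assumptions inferred from the numbers, not values stated anywhere in this diff, and `scheduled_lr` is a hypothetical helper rather than the code that produced the run.

```python
import math

PEAK_LR = 1e-4      # assumption: inferred from the log, not stated in the diff
WARMUP_STEPS = 21   # assumption: inferred from the first two log entries
MAX_STEPS = 208     # "max_steps" in trainer_state.json


def scheduled_lr(step):
    """Linear warmup to PEAK_LR, then cosine decay to zero at MAX_STEPS."""
    if step < WARMUP_STEPS:
        return PEAK_LR * step / WARMUP_STEPS
    progress = (step - WARMUP_STEPS) / (MAX_STEPS - WARMUP_STEPS)
    return PEAK_LR * 0.5 * (1.0 + math.cos(math.pi * progress))


# A few (step, learning_rate) pairs copied from "log_history".
logged = {
    10: 4.761904761904762e-05,
    20: 9.523809523809524e-05,
    30: 9.94295546780682e-05,
    200: 4.5090254315662826e-07,
}

for step, lr in logged.items():
    print(step, lr, scheduled_lr(step))

# Step 10 is logged at epoch 0.769..., which implies optimizer steps per epoch.
steps_per_epoch = round(10 / 0.7692307692307693)
print(steps_per_epoch, steps_per_epoch * 16)
```

If the assumed schedule is right, the computed and logged rates agree closely, and the steps-per-epoch figure multiplied by `num_train_epochs` reproduces `max_steps`.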

checkpoint-208/training_args.bin

ADDED

|

@@ -0,0 +1,3 @@


+ version https://git-lfs.github.com/spec/v1
+ oid sha256:d984b13dc94c2c552d631f900dbb389ec8e262db2b9e4dbd2e77e110776602dc
+ size 5368

|

checkpoint-208/vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|


eval_results.json

ADDED

|

@@ -0,0 +1,7 @@


+ {
+   "epoch": 16.0,
+   "eval_loss": 0.5046026110649109,
+   "eval_runtime": 20.0388,
+   "eval_samples_per_second": 2.345,
+   "eval_steps_per_second": 2.345
+ }

|
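Two quantities can be derived from the evaluation results above: the approximate number of evaluation samples, and a perplexity figure. This is a minimal sketch that assumes `eval_loss` is a mean per-token cross-entropy in nats, which the diff does not state.

```python
import math

# Values copied from eval_results.json.
eval_loss = 0.5046026110649109
eval_runtime = 20.0388            # seconds
eval_samples_per_second = 2.345

# Throughput times runtime gives the approximate evaluation set size.
approx_samples = eval_runtime * eval_samples_per_second

# Perplexity is exp(loss) only under the cross-entropy-in-nats assumption.
perplexity = math.exp(eval_loss)

print(round(approx_samples), perplexity)
```

Since `eval_samples_per_second` equals `eval_steps_per_second`, each evaluation step appears to have processed one sample.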

merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|


preprocessor_config.json

ADDED

|

@@ -0,0 +1,29 @@


+ {
+   "do_convert_rgb": true,
+   "do_normalize": true,
+   "do_rescale": true,
+   "do_resize": true,
+   "image_mean": [
+     0.48145466,
+     0.4578275,
+     0.40821073
+   ],
+   "image_processor_type": "Qwen2VLImageProcessor",
+   "image_std": [
+     0.26862954,
+     0.26130258,
+     0.27577711
+   ],
+   "max_pixels": 12845056,
+   "merge_size": 2,
+   "min_pixels": 3136,
+   "patch_size": 14,
+   "processor_class": "Qwen2VLProcessor",
+   "resample": 3,
+   "rescale_factor": 0.00392156862745098,
+   "size": {
+     "max_pixels": 12845056,
+     "min_pixels": 3136
+   },
+   "temporal_patch_size": 2
+ }

|
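The preprocessor settings above can be read as a rescale-then-normalize step plus a pixel budget per image. The sketch below applies those numbers in plain Python. It assumes rescaling happens before normalization and that one merged visual token covers a (`patch_size` × `merge_size`)-pixel square. `normalize_pixel` is a hypothetical helper for illustration, not the `Qwen2VLImageProcessor` implementation.

```python
# Values copied from preprocessor_config.json.
IMAGE_MEAN = (0.48145466, 0.4578275, 0.40821073)
IMAGE_STD = (0.26862954, 0.26130258, 0.27577711)
RESCALE_FACTOR = 0.00392156862745098   # equals 1 / 255
PATCH_SIZE = 14
MERGE_SIZE = 2
MIN_PIXELS = 3136
MAX_PIXELS = 12845056


def normalize_pixel(rgb):
    """Rescale an 8-bit RGB triple to [0, 1], then standardize per channel."""
    return tuple(
        (value * RESCALE_FACTOR - mean) / std
        for value, mean, std in zip(rgb, IMAGE_MEAN, IMAGE_STD)
    )


# Assumption: a merged token spans (patch_size * merge_size)^2 pixels.
pixels_per_token = (PATCH_SIZE * MERGE_SIZE) ** 2
min_tokens = MIN_PIXELS // pixels_per_token
max_tokens = MAX_PIXELS // pixels_per_token

print(normalize_pixel((0, 0, 0)))
print(pixels_per_token, min_tokens, max_tokens)
```

Under that reading, `min_pixels` and `max_pixels` are both whole multiples of the per-token pixel area, so they bound the number of visual tokens an image can produce.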

runs/Sep03_06-03-57_peter-rog/events.out.tfevents.1725336255.peter-rog.178069.0

ADDED

|

@@ -0,0 +1,3 @@


+ version https://git-lfs.github.com/spec/v1
+ oid sha256:f0cbaf214aceff41f8b37d89f1cb46e41fd8be13470fe95f0ff6665625b43896
+ size 10153

|

runs/Sep03_06-03-57_peter-rog/events.out.tfevents.1725340350.peter-rog.178069.1

ADDED

|

@@ -0,0 +1,3 @@


+ version https://git-lfs.github.com/spec/v1
+ oid sha256:daf6bb8783e88a65400bae27d2e74806018f2aa63beed359bf17c69c5534fcb5
+ size 359

|

special_tokens_map.json

ADDED

|

@@ -0,0 +1,31 @@


+ {
+   "additional_special_tokens": [
+     "<|im_start|>",
+     "<|im_end|>",
+     "<|object_ref_start|>",
+     "<|object_ref_end|>",
+     "<|box_start|>",
+     "<|box_end|>",
+     "<|quad_start|>",
+     "<|quad_end|>",
+     "<|vision_start|>",
+     "<|vision_end|>",
+     "<|vision_pad|>",
+     "<|image_pad|>",
+     "<|video_pad|>"
+   ],
+   "eos_token": {
+     "content": "<|im_end|>",
+     "lstrip": false,
+     "normalized": false,
+     "rstrip": false,
+     "single_word": false
+   },
+   "pad_token": {
+     "content": "<|endoftext|>",
+     "lstrip": false,
+     "normalized": false,
+     "rstrip": false,
+     "single_word": false
+   }
+ }

|

tokenizer.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|


tokenizer_config.json

ADDED

|

@@ -0,0 +1,143 @@


+ {
+   "add_prefix_space": false,
+   "added_tokens_decoder": {
+     "151643": {
+       "content": "<|endoftext|>",
+       "lstrip": false,
+       "normalized": false,
+       "rstrip": false,
+       "single_word": false,
+       "special": true
+     },
+     "151644": {
+       "content": "<|im_start|>",
+       "lstrip": false,
+       "normalized": false,
+       "rstrip": false,
+       "single_word": false,
+       "special": true
+     },
+     "151645": {
+       "content": "<|im_end|>",
+       "lstrip": false,
+       "normalized": false,
+       "rstrip": false,
+       "single_word": false,
+       "special": true
+     },
+     "151646": {
+       "content": "<|object_ref_start|>",
+       "lstrip": false,
+       "normalized": false,
+       "rstrip": false,
+       "single_word": false,
+       "special": true
+     },
+     "151647": {
+       "content": "<|object_ref_end|>",
+       "lstrip": false,
+       "normalized": false,
+       "rstrip": false,
+       "single_word": false,
+       "special": true
+     },
+     "151648": {
+       "content": "<|box_start|>",
+       "lstrip": false,
+       "normalized": false,

|

| 48 |