modelId

string | author

string | last_modified

timestamp[us, tz=UTC] | downloads

int64 | likes

int64 | library_name

string | tags

list | pipeline_tag

string | createdAt

timestamp[us, tz=UTC] | card

string |

|---|---|---|---|---|---|---|---|---|---|

lesso14/8e3f1cad-bd2a-47ec-89cf-d0994a02d997

|

lesso14

| 2025-04-03T16:36:53Z

| 0

| 0

|

peft

|

[

"peft",

"safetensors",

"llama",

"axolotl",

"generated_from_trainer",

"base_model:unsloth/SmolLM2-360M-Instruct",

"base_model:adapter:unsloth/SmolLM2-360M-Instruct",

"license:apache-2.0",

"region:us"

] | null | 2025-04-03T16:09:56Z

|

---

library_name: peft

license: apache-2.0

base_model: unsloth/SmolLM2-360M-Instruct

tags:

- axolotl

- generated_from_trainer

model-index:

- name: 8e3f1cad-bd2a-47ec-89cf-d0994a02d997

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

[<img src="https://raw.githubusercontent.com/axolotl-ai-cloud/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/axolotl-ai-cloud/axolotl)

<details><summary>See axolotl config</summary>

axolotl version: `0.4.1`

```yaml

adapter: lora

base_model: unsloth/SmolLM2-360M-Instruct

bf16: auto

chat_template: llama3

dataset_prepared_path: null

datasets:

- data_files:

- 0ac69f72d822065e_train_data.json

ds_type: json

format: custom

path: /workspace/input_data/0ac69f72d822065e_train_data.json

type:

field_instruction: x

field_output: yl

format: '{instruction}'

no_input_format: '{instruction}'

system_format: '{system}'

system_prompt: ''

debug: null

deepspeed: null

do_eval: true

early_stopping_patience: 3

eval_batch_size: 4

eval_max_new_tokens: 128

eval_steps: 500

evals_per_epoch: null

flash_attention: true

fp16: false

fsdp: null

fsdp_config: null

gradient_accumulation_steps: 8

gradient_checkpointing: true

group_by_length: true

hub_model_id: lesso14/8e3f1cad-bd2a-47ec-89cf-d0994a02d997

hub_repo: null

hub_strategy: checkpoint

hub_token: null

learning_rate: 0.000214

load_in_4bit: false

load_in_8bit: false

local_rank: null

logging_steps: 50

lora_alpha: 128

lora_dropout: 0.15

lora_fan_in_fan_out: null

lora_model_dir: null

lora_r: 64

lora_target_linear: true

lr_scheduler: cosine

max_grad_norm: 1.0

max_steps: 500

micro_batch_size: 4

mlflow_experiment_name: /tmp/0ac69f72d822065e_train_data.json

model_type: AutoModelForCausalLM

num_epochs: 10

optimizer: adamw_torch_fused

output_dir: miner_id_24

pad_to_sequence_len: true

resume_from_checkpoint: null

s2_attention: null

sample_packing: false

save_steps: 500

saves_per_epoch: null

seed: 140

sequence_len: 1024

strict: false

tf32: true

tokenizer_type: AutoTokenizer

train_on_inputs: false

trust_remote_code: true

val_set_size: 0.05

wandb_entity: null

wandb_mode: online

wandb_name: 3f4b09da-df60-4062-bfac-4936ce58b134

wandb_project: 14a

wandb_run: your_name

wandb_runid: 3f4b09da-df60-4062-bfac-4936ce58b134

warmup_steps: 100

weight_decay: 0.0

xformers_attention: null

```

</details><br>

# 8e3f1cad-bd2a-47ec-89cf-d0994a02d997

This model is a fine-tuned version of [unsloth/SmolLM2-360M-Instruct](https://huggingface.co/unsloth/SmolLM2-360M-Instruct) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7197

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.000214

- train_batch_size: 4

- eval_batch_size: 4

- seed: 140

- gradient_accumulation_steps: 8

- total_train_batch_size: 32

- optimizer: Use OptimizerNames.ADAMW_TORCH_FUSED with betas=(0.9,0.999) and epsilon=1e-08 and optimizer_args=No additional optimizer arguments

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 100

- training_steps: 500

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:------:|:----:|:---------------:|

| No log | 0.0010 | 1 | 1.2791 |

| 0.7299 | 0.5173 | 500 | 0.7197 |

### Framework versions

- PEFT 0.13.2

- Transformers 4.46.0

- Pytorch 2.5.0+cu124

- Datasets 3.0.1

- Tokenizers 0.20.1

|

genki10/BERT_AugV8_k1_task1_organization_sp040_lw010_fold2

|

genki10

| 2025-04-03T16:36:37Z

| 4

| 0

|

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"generated_from_trainer",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2025-03-25T02:54:06Z

|

---

library_name: transformers

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

model-index:

- name: BERT_AugV8_k1_task1_organization_sp040_lw010_fold2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# BERT_AugV8_k1_task1_organization_sp040_lw010_fold2

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6181

- Qwk: 0.5604

- Mse: 0.6177

- Rmse: 0.7859

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Use adamw_torch with betas=(0.9,0.999) and epsilon=1e-08 and optimizer_args=No additional optimizer arguments

- lr_scheduler_type: linear

- num_epochs: 150

### Training results

| Training Loss | Epoch | Step | Validation Loss | Qwk | Mse | Rmse |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:------:|

| No log | 1.0 | 2 | 12.9270 | 0.0 | 12.9271 | 3.5954 |

| No log | 2.0 | 4 | 10.9895 | 0.0148 | 10.9897 | 3.3151 |

| No log | 3.0 | 6 | 9.4440 | -0.0005 | 9.4441 | 3.0731 |

| No log | 4.0 | 8 | 8.4463 | 0.0 | 8.4464 | 2.9063 |

| No log | 5.0 | 10 | 6.7743 | 0.0 | 6.7747 | 2.6028 |

| No log | 6.0 | 12 | 5.5285 | 0.0269 | 5.5288 | 2.3513 |

| No log | 7.0 | 14 | 4.3715 | 0.0155 | 4.3718 | 2.0909 |

| No log | 8.0 | 16 | 3.4828 | 0.0 | 3.4832 | 1.8663 |

| No log | 9.0 | 18 | 2.5586 | 0.0 | 2.5590 | 1.5997 |

| No log | 10.0 | 20 | 1.8956 | 0.0678 | 1.8961 | 1.3770 |

| No log | 11.0 | 22 | 1.3882 | 0.0422 | 1.3887 | 1.1784 |

| No log | 12.0 | 24 | 1.1171 | 0.0213 | 1.1176 | 1.0572 |

| No log | 13.0 | 26 | 0.9062 | 0.0107 | 0.9067 | 0.9522 |

| No log | 14.0 | 28 | 0.8795 | 0.1279 | 0.8801 | 0.9381 |

| No log | 15.0 | 30 | 0.8202 | 0.3407 | 0.8205 | 0.9058 |

| No log | 16.0 | 32 | 0.9118 | 0.0492 | 0.9121 | 0.9550 |

| No log | 17.0 | 34 | 1.2117 | 0.1030 | 1.2121 | 1.1009 |

| No log | 18.0 | 36 | 0.8578 | 0.2345 | 0.8581 | 0.9264 |

| No log | 19.0 | 38 | 0.6389 | 0.3658 | 0.6391 | 0.7994 |

| No log | 20.0 | 40 | 0.6746 | 0.3002 | 0.6748 | 0.8214 |

| No log | 21.0 | 42 | 0.6001 | 0.3712 | 0.6001 | 0.7747 |

| No log | 22.0 | 44 | 0.5397 | 0.5052 | 0.5398 | 0.7347 |

| No log | 23.0 | 46 | 0.8912 | 0.4888 | 0.8915 | 0.9442 |

| No log | 24.0 | 48 | 0.7137 | 0.5383 | 0.7138 | 0.8449 |

| No log | 25.0 | 50 | 0.6226 | 0.5288 | 0.6226 | 0.7891 |

| No log | 26.0 | 52 | 0.6678 | 0.5219 | 0.6678 | 0.8172 |

| No log | 27.0 | 54 | 0.6568 | 0.5421 | 0.6569 | 0.8105 |

| No log | 28.0 | 56 | 0.7135 | 0.5574 | 0.7138 | 0.8449 |

| No log | 29.0 | 58 | 1.0521 | 0.4354 | 1.0526 | 1.0259 |

| No log | 30.0 | 60 | 1.4136 | 0.3464 | 1.4140 | 1.1891 |

| No log | 31.0 | 62 | 1.6036 | 0.2997 | 1.6040 | 1.2665 |

| No log | 32.0 | 64 | 1.0144 | 0.4036 | 1.0146 | 1.0073 |

| No log | 33.0 | 66 | 0.4920 | 0.5684 | 0.4919 | 0.7013 |

| No log | 34.0 | 68 | 0.4939 | 0.5560 | 0.4937 | 0.7027 |

| No log | 35.0 | 70 | 0.5951 | 0.5535 | 0.5949 | 0.7713 |

| No log | 36.0 | 72 | 0.8073 | 0.4633 | 0.8072 | 0.8985 |

| No log | 37.0 | 74 | 0.6624 | 0.5176 | 0.6622 | 0.8138 |

| No log | 38.0 | 76 | 0.5535 | 0.5271 | 0.5536 | 0.7440 |

| No log | 39.0 | 78 | 0.6578 | 0.5118 | 0.6579 | 0.8111 |

| No log | 40.0 | 80 | 0.9674 | 0.3685 | 0.9673 | 0.9835 |

| No log | 41.0 | 82 | 1.0259 | 0.3413 | 1.0258 | 1.0128 |

| No log | 42.0 | 84 | 0.8278 | 0.3490 | 0.8277 | 0.9098 |

| No log | 43.0 | 86 | 0.6356 | 0.5293 | 0.6353 | 0.7971 |

| No log | 44.0 | 88 | 0.6695 | 0.5566 | 0.6691 | 0.8180 |

| No log | 45.0 | 90 | 1.0887 | 0.3421 | 1.0885 | 1.0433 |

| No log | 46.0 | 92 | 0.9693 | 0.3935 | 0.9690 | 0.9844 |

| No log | 47.0 | 94 | 0.7381 | 0.5044 | 0.7378 | 0.8590 |

| No log | 48.0 | 96 | 0.6181 | 0.5604 | 0.6177 | 0.7859 |

### Framework versions

- Transformers 4.47.0

- Pytorch 2.5.1+cu121

- Datasets 3.3.1

- Tokenizers 0.21.0

|

ysn-rfd/WizardLM-7B-Uncensored-Q4_K_M-GGUF

|

ysn-rfd

| 2025-04-03T16:36:22Z

| 0

| 0

| null |

[

"gguf",

"uncensored",

"llama-cpp",

"gguf-my-repo",

"dataset:ehartford/WizardLM_alpaca_evol_instruct_70k_unfiltered",

"base_model:cognitivecomputations/WizardLM-7B-Uncensored",

"base_model:quantized:cognitivecomputations/WizardLM-7B-Uncensored",

"license:other",

"endpoints_compatible",

"region:us"

] | null | 2025-04-03T16:36:05Z

|

---

base_model: cognitivecomputations/WizardLM-7B-Uncensored

datasets:

- ehartford/WizardLM_alpaca_evol_instruct_70k_unfiltered

license: other

tags:

- uncensored

- llama-cpp

- gguf-my-repo

---

# ysn-rfd/WizardLM-7B-Uncensored-Q4_K_M-GGUF

This model was converted to GGUF format from [`cognitivecomputations/WizardLM-7B-Uncensored`](https://huggingface.co/cognitivecomputations/WizardLM-7B-Uncensored) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/cognitivecomputations/WizardLM-7B-Uncensored) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo ysn-rfd/WizardLM-7B-Uncensored-Q4_K_M-GGUF --hf-file wizardlm-7b-uncensored-q4_k_m.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo ysn-rfd/WizardLM-7B-Uncensored-Q4_K_M-GGUF --hf-file wizardlm-7b-uncensored-q4_k_m.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo ysn-rfd/WizardLM-7B-Uncensored-Q4_K_M-GGUF --hf-file wizardlm-7b-uncensored-q4_k_m.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo ysn-rfd/WizardLM-7B-Uncensored-Q4_K_M-GGUF --hf-file wizardlm-7b-uncensored-q4_k_m.gguf -c 2048

```

|

BAAI/RoboBrain-LoRA-Affordance

|

BAAI

| 2025-04-03T16:34:50Z

| 0

| 3

| null |

[

"safetensors",

"en",

"dataset:BAAI/ShareRobot",

"dataset:lmms-lab/LLaVA-OneVision-Data",

"arxiv:2502.21257",

"license:apache-2.0",

"region:us"

] | null | 2025-03-29T01:16:43Z

|

---

license: apache-2.0

datasets:

- BAAI/ShareRobot

- lmms-lab/LLaVA-OneVision-Data

language:

- en

---

<div align="center">

<img src="https://github.com/FlagOpen/RoboBrain/raw/main/assets/logo.jpg" width="400"/>

</div>

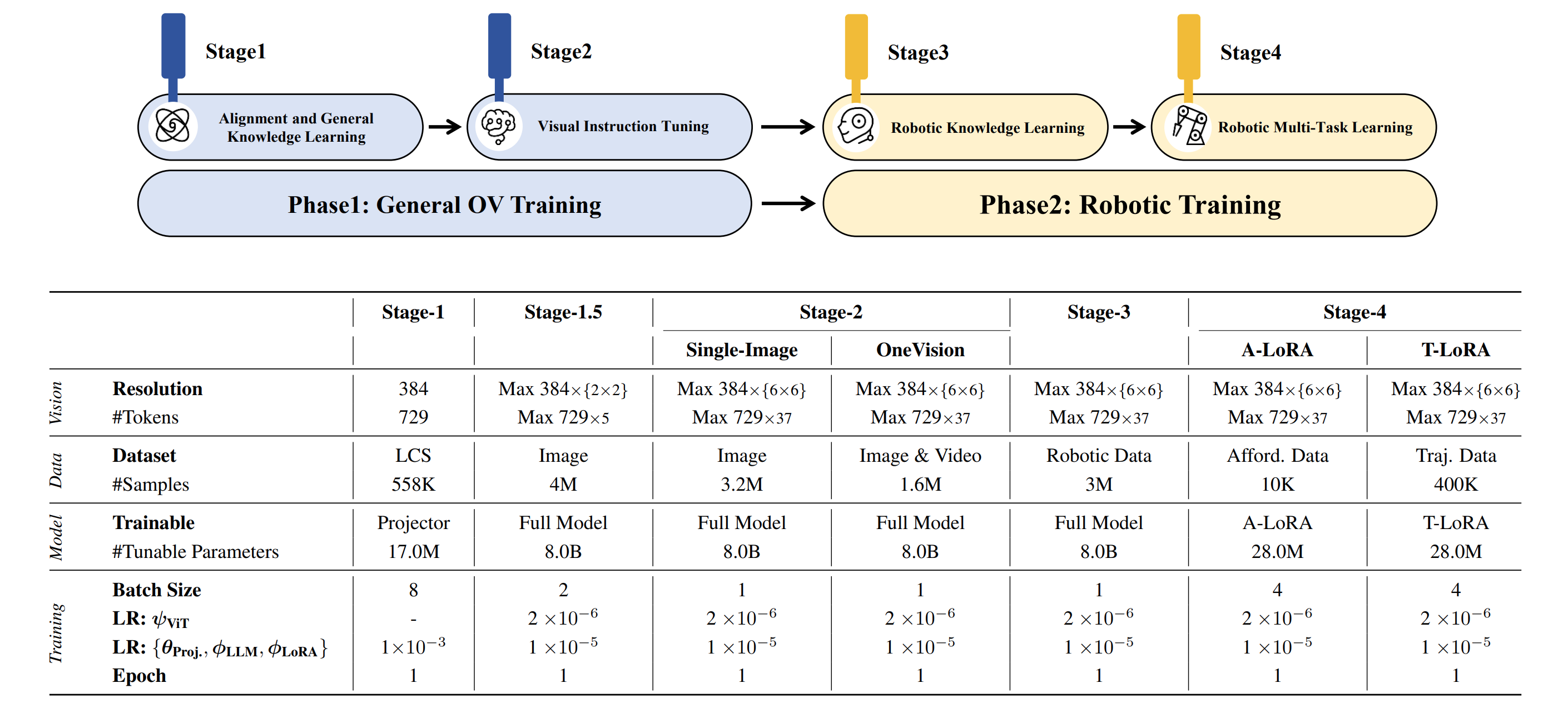

# [CVPR 25] RoboBrain: A Unified Brain Model for Robotic Manipulation from Abstract to Concrete.

## 🤗 Models

- **[`Base Planning Model`](https://huggingface.co/BAAI/RoboBrain/)**: The model was trained on general datasets in Stages 1–2 and on the Robotic Planning dataset in Stage 3, which is designed for Planning prediction.

- **[`A-LoRA for Affordance`](https://huggingface.co/BAAI/RoboBrain-LoRA-Affordance/)**: Based on the Base Planning Model, Stage 4 involves LoRA-based training with our Affordance dataset to predict affordance.

- **[`T-LoRA for Trajectory`](https://huggingface.co/BAAI/RoboBrain/)**: Based on the Base Planning Model, Stage 4 involves LoRA-based training with our Trajectory dataset to predict trajectory.

| Models | Checkpoint | Description |

|----------------------|----------------------------------------------------------------|------------------------------------------------------------|

| Planning Model | [🤗 Planning CKPTs](https://huggingface.co/BAAI/RoboBrain/) | Used for Planning prediction in our paper |

| Affordance (A-LoRA) | [🤗 Affordance CKPTs](https://huggingface.co/BAAI/RoboBrain-LoRA-Affordance/) | Used for Affordance prediction in our paper |

| Trajectory (T-LoRA) | [🤗 Trajectory CKPTs](https://huggingface.co/BAAI/RoboBrain-LoRA-Trajectory/) | Used for Trajectory prediction in our paper |

## 🛠️ Setup

```bash

# clone repo.

git clone https://github.com/FlagOpen/RoboBrain.git

cd RoboBrain

# build conda env.

conda create -n robobrain python=3.10

conda activate robobrain

pip install -r requirements.txt

```

## <a id="Training"> 🤖 Training</a>

### 1. Data Preparation

```bash

# Modify datasets for Stage 4_aff, please refer to:

- yaml_path: scripts/train/yaml/stage_4_affordance.yaml

```

**Note:** During training, we applied normalization to the bounding boxes, representing them with the coordinates of the top-left and bottom-right corners, and retaining three decimal places for each.

The sample format in each json file should be like:

```json

{

"id": xxxx,

"image": "testsetv3/Unseen/egocentric/ride/bicycle/bicycle_001662.jpg",

"conversations": [

{

"value": "<image>\nYou are a robot using the joint control. The task is \"ride the bicycle\". Please predict a possible affordance area of the end effector?",

"from": "human"

},

{

"from": "gpt",

"value": "[0.561, 0.171, 0.645, 0.279]"

}

]

},

```

### 2. Training

```bash

# Training on Stage 4_aff:

bash scripts/train/stage_4_0_resume_finetune_lora_a.sh

```

**Note:** Please change the environment variables (e.g. *DATA_PATH*, *IMAGE_FOLDER*, *PREV_STAGE_CHECKPOINT*) in the script to your own.

### 3. Convert original weights to HF weights

```bash

# Planning Model

python model/llava_utils/convert_robobrain_to_hf.py --model_dir /path/to/original/checkpoint/ --dump_path /path/to/output/

# A-LoRA & T-RoRA

python model/llava_utils/convert_lora_weights_to_hf.py --model_dir /path/to/original/checkpoint/ --dump_path /path/to/output/

```

## <a id="Inference">⭐️ Inference</a>

### Usage for Affordance Prediction

```python

# please refer to https://github.com/FlagOpen/RoboBrain

from inference import SimpleInference

model_id = "BAAI/RoboBrain"

lora_id = "BAAI/RoboBrain-LoRA-Affordance"

model = SimpleInference(model_id, lora_id)

# Example 1:

prompt = "You are a robot using the joint control. The task is \"pick_up the suitcase\". Please predict a possible affordance area of the end effector?"

image = "./assets/demo/affordance_1.jpg"

pred = model.inference(prompt, image, do_sample=False)

print(f"Prediction: {pred}")

'''

Prediction: [0.733, 0.158, 0.845, 0.263]

'''

# Example 2:

prompt = "You are a robot using the joint control. The task is \"push the bicycle\". Please predict a possible affordance area of the end effector?"

image = "./assets/demo/affordance_2.jpg"

pred = model.inference(prompt, image, do_sample=False)

print(f"Prediction: {pred}")

'''

Prediction: [0.600, 0.127, 0.692, 0.227]

'''

```

## <a id="Evaluation">🤖 Evaluation</a>

*Coming Soon ...*

## 😊 Acknowledgement

We would like to express our sincere gratitude to the developers and contributors of the following projects:

1. [LLaVA-NeXT](https://github.com/LLaVA-VL/LLaVA-NeXT): The comprehensive codebase for training Vision-Language Models (VLMs).

2. [Open-X-Emboddied](https://github.com/EvolvingLMMs-Lab/lmms-eval): A powerful evaluation tool for Vision-Language Models (VLMs).

3. [AGD20k](https://github.com/lhc1224/Cross-View-AG): An affordance dataset that provides instructions and corresponding affordance regions.

Their outstanding contributions have played a pivotal role in advancing our research and development initiatives.

## 📑 Citation

If you find this project useful, welcome to cite us.

```bib

@article{ji2025robobrain,

title={RoboBrain: A Unified Brain Model for Robotic Manipulation from Abstract to Concrete},

author={Ji, Yuheng and Tan, Huajie and Shi, Jiayu and Hao, Xiaoshuai and Zhang, Yuan and Zhang, Hengyuan and Wang, Pengwei and Zhao, Mengdi and Mu, Yao and An, Pengju and others},

journal={arXiv preprint arXiv:2502.21257},

year={2025}

}

```

|

BAAI/RoboBrain-LoRA-Trajectory

|

BAAI

| 2025-04-03T16:33:55Z

| 0

| 0

| null |

[

"safetensors",

"en",

"dataset:BAAI/ShareRobot",

"dataset:lmms-lab/LLaVA-OneVision-Data",

"arxiv:2502.21257",

"license:apache-2.0",

"region:us"

] | null | 2025-04-03T16:11:18Z

|

---

license: apache-2.0

datasets:

- BAAI/ShareRobot

- lmms-lab/LLaVA-OneVision-Data

language:

- en

---

<div align="center">

<img src="https://github.com/FlagOpen/RoboBrain/raw/main/assets/logo.jpg" width="400"/>

</div>

# [CVPR 25] RoboBrain: A Unified Brain Model for Robotic Manipulation from Abstract to Concrete.

## 🤗 Models

- **[`Base Planning Model`](https://huggingface.co/BAAI/RoboBrain/)**: The model was trained on general datasets in Stages 1–2 and on the Robotic Planning dataset in Stage 3, which is designed for Planning prediction.

- **[`A-LoRA for Affordance`](https://huggingface.co/BAAI/RoboBrain-LoRA-Affordance/)**: Based on the Base Planning Model, Stage 4 involves LoRA-based training with our Affordance dataset to predict affordance.

- **[`T-LoRA for Trajectory`](https://huggingface.co/BAAI/RoboBrain-LoRA-Trajectory)**: Based on the Base Planning Model, Stage 4 involves LoRA-based training with our Trajectory dataset to predict trajectory.

| Models | Checkpoint | Description |

|----------------------|----------------------------------------------------------------|------------------------------------------------------------|

| Planning Model | [🤗 Planning CKPTs](https://huggingface.co/BAAI/RoboBrain/) | Used for Planning prediction in our paper |

| Affordance (A-LoRA) | [🤗 Affordance CKPTs](https://huggingface.co/BAAI/RoboBrain-LoRA-Affordance/) | Used for Affordance prediction in our paper |

| Trajectory (T-LoRA) | [🤗 Trajectory CKPTs](https://huggingface.co/BAAI/RoboBrain-LoRA-Trajectory/) | Used for Trajectory prediction in our paper |

## 🛠️ Setup

```bash

# clone repo.

git clone https://github.com/FlagOpen/RoboBrain.git

cd RoboBrain

# build conda env.

conda create -n robobrain python=3.10

conda activate robobrain

pip install -r requirements.txt

```

## <a id="Training"> 🤖 Training</a>

### 1. Data Preparation

```bash

# Modify datasets for Stage 4_traj, please refer to:

- yaml_path: scripts/train/yaml/stage_4_trajectory.yaml

```

**Note:** During training, we applied normalization to the path points, representing them as waypoints and retaining three decimal places for each. The sample format in each JSON file should be like this, representing the future waypoints of the end-effector:

```json

{

"id": 0,

"image": [

"shareRobot/trajectory/images/rtx_frames_success_0/10_utokyo_pr2_tabletop_manipulation_converted_externally_to_rlds#episode_2/frame_0.png"

],

"conversations": [

{

"from": "human",

"value": "<image>\nYou are a robot using the joint control. The task is \"reach for the cloth\". Please predict up to 10 key trajectory points to complete the task. Your answer should be formatted as a list of tuples, i.e. [[x1, y1], [x2, y2], ...], where each tuple contains the x and y coordinates of a point."

},

{

"from": "gpt",

"value": "[[0.781, 0.305], [0.688, 0.344], [0.570, 0.344], [0.492, 0.312]]"

}

]

},

```

### 2. Training

```bash

# Training on Stage 4_traj:

bash scripts/train/stage_4_0_resume_finetune_lora_t.sh

```

**Note:** Please change the environment variables (e.g. *DATA_PATH*, *IMAGE_FOLDER*, *PREV_STAGE_CHECKPOINT*) in the script to your own.

### 3. Convert original weights to HF weights

```bash

# Planning Model

python model/llava_utils/convert_robobrain_to_hf.py --model_dir /path/to/original/checkpoint/ --dump_path /path/to/output/

# A-LoRA & T-RoRA

python model/llava_utils/convert_lora_weights_to_hf.py --model_dir /path/to/original/checkpoint/ --dump_path /path/to/output/

```

## <a id="Inference">⭐️ Inference</a>

### Usage for Trajectory Prediction

```python

# please refer to https://github.com/FlagOpen/RoboBrain

from inference import SimpleInference

model_id = "BAAI/RoboBrain"

lora_id = "BAAI/RoboBrain-LoRA-Affordance"

model = SimpleInference(model_id, lora_id)

# Example 1:

prompt = "You are a robot using the joint control. The task is \"reach for the cloth\". Please predict up to 10 key trajectory points to complete the task. Your answer should be formatted as a list of tuples, i.e. [[x1, y1], [x2, y2], ...], where each tuple contains the x and y coordinates of a point."

image = "./assets/demo/trajectory_1.jpg"

pred = model.inference(prompt, image, do_sample=False)

print(f"Prediction: {pred}")

'''

Prediction: [[0.781, 0.305], [0.688, 0.344], [0.570, 0.344], [0.492, 0.312]]

'''

# Example 2:

prompt = "You are a robot using the joint control. The task is \"reach for the grapes\". Please predict up to 10 key trajectory points to complete the task. Your answer should be formatted as a list of tuples, i.e. [[x1, y1], [x2, y2], ...], where each tuple contains the x and y coordinates of a point."

image = "./assets/demo/trajectory_2.jpg"

pred = model.inference(prompt, image, do_sample=False)

print(f"Prediction: {pred}")

'''

Prediction: [[0.898, 0.352], [0.766, 0.344], [0.625, 0.273], [0.500, 0.195]]

'''

```

<!--  -->

## <a id="Evaluation">🤖 Evaluation</a>

*Coming Soon ...*

## 😊 Acknowledgement

We would like to express our sincere gratitude to the developers and contributors of the following projects:

1. [LLaVA-NeXT](https://github.com/LLaVA-VL/LLaVA-NeXT): The comprehensive codebase for training Vision-Language Models (VLMs).

2. [Open-X-Emboddied](https://github.com/EvolvingLMMs-Lab/lmms-eval): A powerful evaluation tool for Vision-Language Models (VLMs).

3. [RoboPoint](https://github.com/wentaoyuan/RoboPoint?tab=readme-ov-file): An point dataset that provides instructions and corresponding points.

Their outstanding contributions have played a pivotal role in advancing our research and development initiatives.

## 📑 Citation

If you find this project useful, welcome to cite us.

```bib

@article{ji2025robobrain,

title={RoboBrain: A Unified Brain Model for Robotic Manipulation from Abstract to Concrete},

author={Ji, Yuheng and Tan, Huajie and Shi, Jiayu and Hao, Xiaoshuai and Zhang, Yuan and Zhang, Hengyuan and Wang, Pengwei and Zhao, Mengdi and Mu, Yao and An, Pengju and others},

journal={arXiv preprint arXiv:2502.21257},

year={2025}

}

```

|

jaunius/llama-3.2-v5

|

jaunius

| 2025-04-03T16:29:39Z

| 3

| 0

|

transformers

|

[

"transformers",

"pytorch",

"gguf",

"llama",

"text-generation-inference",

"unsloth",

"trl",

"en",

"license:apache-2.0",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2025-03-26T12:37:06Z

|

---

base_model: unsloth/llama-3.2-3b-instruct-unsloth-bnb-4bit

tags:

- text-generation-inference

- transformers

- unsloth

- llama

- trl

license: apache-2.0

language:

- en

---

# Uploaded model

- **Developed by:** jaunius

- **License:** apache-2.0

- **Finetuned from model :** unsloth/llama-3.2-3b-instruct-unsloth-bnb-4bit

This llama model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Huggingface's TRL library.

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

|

Haricot24601/rl_course_doom_health_gathering_supreme_v4

|

Haricot24601

| 2025-04-03T16:28:37Z

| 0

| 0

|

sample-factory

|

[

"sample-factory",

"tensorboard",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2025-04-03T16:23:15Z

|

---

library_name: sample-factory

tags:

- deep-reinforcement-learning

- reinforcement-learning

- sample-factory

model-index:

- name: APPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: doom_health_gathering_supreme

type: doom_health_gathering_supreme

metrics:

- type: mean_reward

value: 8.99 +/- 3.48

name: mean_reward

verified: false

---

A(n) **APPO** model trained on the **doom_health_gathering_supreme** environment.

This model was trained using Sample-Factory 2.0: https://github.com/alex-petrenko/sample-factory.

Documentation for how to use Sample-Factory can be found at https://www.samplefactory.dev/

## Downloading the model

After installing Sample-Factory, download the model with:

```

python -m sample_factory.huggingface.load_from_hub -r RL-Learn/rl_course_doom_health_gathering_supreme

```

## Using the model

To run the model after download, use the `enjoy` script corresponding to this environment:

```

python -m <path.to.enjoy.module> --algo=APPO --env=doom_health_gathering_supreme --train_dir=./train_dir --experiment=rl_course_doom_health_gathering_supreme

```

You can also upload models to the Hugging Face Hub using the same script with the `--push_to_hub` flag.

See https://www.samplefactory.dev/10-huggingface/huggingface/ for more details

## Training with this model

To continue training with this model, use the `train` script corresponding to this environment:

```

python -m <path.to.train.module> --algo=APPO --env=doom_health_gathering_supreme --train_dir=./train_dir --experiment=rl_course_doom_health_gathering_supreme --restart_behavior=resume --train_for_env_steps=10000000000

```

Note, you may have to adjust `--train_for_env_steps` to a suitably high number as the experiment will resume at the number of steps it concluded at.

|

jonACE/Llama-3.2-1B-Instruct-fine-tuned-NASB

|

jonACE

| 2025-04-03T16:27:36Z

| 0

| 0

|

transformers

|

[

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-04-03T16:27:29Z

|

---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

genki10/BERT_AugV8_k1_task1_organization_sp040_lw010_fold1

|

genki10

| 2025-04-03T16:26:00Z

| 6

| 0

|

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"generated_from_trainer",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2025-03-25T02:41:36Z

|

---

library_name: transformers

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

model-index:

- name: BERT_AugV8_k1_task1_organization_sp040_lw010_fold1

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# BERT_AugV8_k1_task1_organization_sp040_lw010_fold1

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 1.0151

- Qwk: 0.4354

- Mse: 1.0144

- Rmse: 1.0072

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Use adamw_torch with betas=(0.9,0.999) and epsilon=1e-08 and optimizer_args=No additional optimizer arguments

- lr_scheduler_type: linear

- num_epochs: 150

### Training results

| Training Loss | Epoch | Step | Validation Loss | Qwk | Mse | Rmse |

|:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|:------:|

| No log | 1.0 | 2 | 10.7289 | 0.0304 | 10.7263 | 3.2751 |

| No log | 2.0 | 4 | 10.1098 | 0.0 | 10.1072 | 3.1792 |

| No log | 3.0 | 6 | 9.4212 | 0.0 | 9.4187 | 3.0690 |

| No log | 4.0 | 8 | 8.2305 | 0.0 | 8.2280 | 2.8685 |

| No log | 5.0 | 10 | 7.1723 | 0.0 | 7.1699 | 2.6777 |

| No log | 6.0 | 12 | 6.6962 | 0.0 | 6.6939 | 2.5873 |

| No log | 7.0 | 14 | 5.9670 | 0.0 | 5.9648 | 2.4423 |

| No log | 8.0 | 16 | 5.0136 | 0.0 | 5.0115 | 2.2386 |

| No log | 9.0 | 18 | 4.2082 | 0.0 | 4.2062 | 2.0509 |

| No log | 10.0 | 20 | 3.4534 | 0.0 | 3.4515 | 1.8578 |

| No log | 11.0 | 22 | 2.8119 | 0.0 | 2.8101 | 1.6763 |

| No log | 12.0 | 24 | 2.3031 | 0.0534 | 2.3014 | 1.5170 |

| No log | 13.0 | 26 | 1.8764 | 0.0812 | 1.8748 | 1.3692 |

| No log | 14.0 | 28 | 1.5959 | 0.0545 | 1.5944 | 1.2627 |

| No log | 15.0 | 30 | 1.4463 | 0.0730 | 1.4448 | 1.2020 |

| No log | 16.0 | 32 | 1.1740 | 0.0482 | 1.1726 | 1.0829 |

| No log | 17.0 | 34 | 1.0324 | 0.0482 | 1.0310 | 1.0154 |

| No log | 18.0 | 36 | 0.8801 | 0.0768 | 0.8789 | 0.9375 |

| No log | 19.0 | 38 | 1.1537 | 0.1846 | 1.1523 | 1.0735 |

| No log | 20.0 | 40 | 0.8922 | 0.2459 | 0.8910 | 0.9439 |

| No log | 21.0 | 42 | 0.7304 | 0.3816 | 0.7294 | 0.8540 |

| No log | 22.0 | 44 | 0.7435 | 0.3685 | 0.7425 | 0.8617 |

| No log | 23.0 | 46 | 0.6023 | 0.4733 | 0.6012 | 0.7754 |

| No log | 24.0 | 48 | 1.1179 | 0.2219 | 1.1164 | 1.0566 |

| No log | 25.0 | 50 | 1.3424 | 0.2263 | 1.3408 | 1.1579 |

| No log | 26.0 | 52 | 1.0101 | 0.2859 | 1.0087 | 1.0043 |

| No log | 27.0 | 54 | 0.6241 | 0.5605 | 0.6230 | 0.7893 |

| No log | 28.0 | 56 | 0.5239 | 0.5700 | 0.5230 | 0.7232 |

| No log | 29.0 | 58 | 0.5490 | 0.5794 | 0.5482 | 0.7404 |

| No log | 30.0 | 60 | 1.1115 | 0.4044 | 1.1108 | 1.0539 |

| No log | 31.0 | 62 | 0.8328 | 0.4827 | 0.8322 | 0.9122 |

| No log | 32.0 | 64 | 0.4838 | 0.5650 | 0.4831 | 0.6951 |

| No log | 33.0 | 66 | 0.4997 | 0.5484 | 0.4990 | 0.7064 |

| No log | 34.0 | 68 | 0.6427 | 0.5299 | 0.6421 | 0.8013 |

| No log | 35.0 | 70 | 1.7855 | 0.2361 | 1.7846 | 1.3359 |

| No log | 36.0 | 72 | 2.1308 | 0.1825 | 2.1298 | 1.4594 |

| No log | 37.0 | 74 | 1.4376 | 0.3174 | 1.4368 | 1.1986 |

| No log | 38.0 | 76 | 0.5162 | 0.6095 | 0.5156 | 0.7180 |

| No log | 39.0 | 78 | 0.6708 | 0.5561 | 0.6701 | 0.8186 |

| No log | 40.0 | 80 | 0.5158 | 0.5679 | 0.5150 | 0.7177 |

| No log | 41.0 | 82 | 1.3371 | 0.3083 | 1.3361 | 1.1559 |

| No log | 42.0 | 84 | 1.9189 | 0.2016 | 1.9179 | 1.3849 |

| No log | 43.0 | 86 | 1.3743 | 0.3068 | 1.3735 | 1.1720 |

| No log | 44.0 | 88 | 0.5971 | 0.5815 | 0.5966 | 0.7724 |

| No log | 45.0 | 90 | 0.6638 | 0.5730 | 0.6635 | 0.8145 |

| No log | 46.0 | 92 | 0.6203 | 0.5628 | 0.6197 | 0.7872 |

| No log | 47.0 | 94 | 1.2658 | 0.3350 | 1.2646 | 1.1246 |

| No log | 48.0 | 96 | 2.0390 | 0.1641 | 2.0377 | 1.4275 |

| No log | 49.0 | 98 | 2.1831 | 0.1596 | 2.1818 | 1.4771 |

| No log | 50.0 | 100 | 1.4159 | 0.2706 | 1.4147 | 1.1894 |

| No log | 51.0 | 102 | 0.6854 | 0.5067 | 0.6844 | 0.8273 |

| No log | 52.0 | 104 | 0.6908 | 0.4989 | 0.6901 | 0.8307 |

| No log | 53.0 | 106 | 1.0151 | 0.4354 | 1.0144 | 1.0072 |

### Framework versions

- Transformers 4.47.0

- Pytorch 2.5.1+cu121

- Datasets 3.3.1

- Tokenizers 0.21.0

|

ysn-rfd/WizardLM-7B-Uncensored-Q5_K_M-GGUF

|

ysn-rfd

| 2025-04-03T16:25:12Z

| 0

| 0

| null |

[

"gguf",

"uncensored",

"llama-cpp",

"gguf-my-repo",

"dataset:ehartford/WizardLM_alpaca_evol_instruct_70k_unfiltered",

"base_model:cognitivecomputations/WizardLM-7B-Uncensored",

"base_model:quantized:cognitivecomputations/WizardLM-7B-Uncensored",

"license:other",

"endpoints_compatible",

"region:us"

] | null | 2025-04-03T16:24:52Z

|

---

base_model: cognitivecomputations/WizardLM-7B-Uncensored

datasets:

- ehartford/WizardLM_alpaca_evol_instruct_70k_unfiltered

license: other

tags:

- uncensored

- llama-cpp

- gguf-my-repo

---

# ysn-rfd/WizardLM-7B-Uncensored-Q5_K_M-GGUF

This model was converted to GGUF format from [`cognitivecomputations/WizardLM-7B-Uncensored`](https://huggingface.co/cognitivecomputations/WizardLM-7B-Uncensored) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/cognitivecomputations/WizardLM-7B-Uncensored) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo ysn-rfd/WizardLM-7B-Uncensored-Q5_K_M-GGUF --hf-file wizardlm-7b-uncensored-q5_k_m.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo ysn-rfd/WizardLM-7B-Uncensored-Q5_K_M-GGUF --hf-file wizardlm-7b-uncensored-q5_k_m.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo ysn-rfd/WizardLM-7B-Uncensored-Q5_K_M-GGUF --hf-file wizardlm-7b-uncensored-q5_k_m.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo ysn-rfd/WizardLM-7B-Uncensored-Q5_K_M-GGUF --hf-file wizardlm-7b-uncensored-q5_k_m.gguf -c 2048

```

|

Papaperez/Qwen2.5-0.5B-Instruct-Gensyn-Swarm-sleek_regal_hornet

|

Papaperez

| 2025-04-03T16:23:36Z

| 0

| 0

|

transformers

|

[

"transformers",

"safetensors",

"qwen2",

"text-generation",

"generated_from_trainer",

"rl-swarm",

"grpo",

"gensyn",

"I am sleek regal hornet",

"trl",

"conversational",

"arxiv:2402.03300",

"base_model:Gensyn/Qwen2.5-0.5B-Instruct",

"base_model:finetune:Gensyn/Qwen2.5-0.5B-Instruct",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-04-02T19:27:24Z

|

---

base_model: Gensyn/Qwen2.5-0.5B-Instruct

library_name: transformers

model_name: Qwen2.5-0.5B-Instruct-Gensyn-Swarm-sleek_regal_hornet

tags:

- generated_from_trainer

- rl-swarm

- grpo

- gensyn

- I am sleek regal hornet

- trl

licence: license

---

# Model Card for Qwen2.5-0.5B-Instruct-Gensyn-Swarm-sleek_regal_hornet

This model is a fine-tuned version of [Gensyn/Qwen2.5-0.5B-Instruct](https://huggingface.co/Gensyn/Qwen2.5-0.5B-Instruct).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="Papaperez/Qwen2.5-0.5B-Instruct-Gensyn-Swarm-sleek_regal_hornet", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

This model was trained with GRPO, a method introduced in [DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models](https://huggingface.co/papers/2402.03300).

### Framework versions

- TRL: 0.15.2

- Transformers: 4.50.3

- Pytorch: 2.5.1

- Datasets: 3.5.0

- Tokenizers: 0.21.1

## Citations

Cite GRPO as:

```bibtex

@article{zhihong2024deepseekmath,

title = {{DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models}},

author = {Zhihong Shao and Peiyi Wang and Qihao Zhu and Runxin Xu and Junxiao Song and Mingchuan Zhang and Y. K. Li and Y. Wu and Daya Guo},

year = 2024,

eprint = {arXiv:2402.03300},

}

```

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallouédec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

```

|

tawankri/KhanomTanLLM-1B-Instruct-mlx-8Bit

|

tawankri

| 2025-04-03T16:22:34Z

| 0

| 0

|

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"mlx",

"conversational",

"th",

"base_model:pythainlp/KhanomTanLLM-1B-Instruct",

"base_model:quantized:pythainlp/KhanomTanLLM-1B-Instruct",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"8-bit",

"region:us"

] |

text-generation

| 2025-04-03T16:22:22Z

|

---

library_name: transformers

license: apache-2.0

language:

- th

pipeline_tag: text-generation

base_model: pythainlp/KhanomTanLLM-1B-Instruct

tags:

- mlx

---

# tawankri/KhanomTanLLM-1B-Instruct-mlx-8Bit

The Model [tawankri/KhanomTanLLM-1B-Instruct-mlx-8Bit](https://huggingface.co/tawankri/KhanomTanLLM-1B-Instruct-mlx-8Bit) was converted to MLX format from [pythainlp/KhanomTanLLM-1B-Instruct](https://huggingface.co/pythainlp/KhanomTanLLM-1B-Instruct) using mlx-lm version **0.22.1**.

## Use with mlx

```bash

pip install mlx-lm

```

```python

from mlx_lm import load, generate

model, tokenizer = load("tawankri/KhanomTanLLM-1B-Instruct-mlx-8Bit")

prompt="hello"

if hasattr(tokenizer, "apply_chat_template") and tokenizer.chat_template is not None:

messages = [{"role": "user", "content": prompt}]

prompt = tokenizer.apply_chat_template(

messages, tokenize=False, add_generation_prompt=True

)

response = generate(model, tokenizer, prompt=prompt, verbose=True)

```

|

Shuu12121/CodeCloneDetection-ModernBERT-Owl

|

Shuu12121

| 2025-04-03T16:21:56Z

| 0

| 0

|

sentence-transformers

|

[

"sentence-transformers",

"safetensors",

"modernbert",

"sentence-similarity",

"dataset_size:901028",

"loss:CosineSimilarityLoss",

"dataset:google/code_x_glue_cc_clone_detection_big_clone_bench",

"arxiv:1908.10084",

"base_model:Shuu12121/CodeModernBERT-Owl",

"base_model:finetune:Shuu12121/CodeModernBERT-Owl",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2025-04-03T10:43:06Z

|

---

tags:

- sentence-transformers

- sentence-similarity

- dataset_size:901028

- loss:CosineSimilarityLoss

base_model: Shuu12121/CodeModernBERT-Owl

pipeline_tag: sentence-similarity

library_name: sentence-transformers

metrics:

- pearson_cosine

- accuracy

- f1

model-index:

- name: SentenceTransformer based on Shuu12121/CodeModernBERT-Owl

results:

- task:

type: semantic-similarity

name: Semantic Similarity

dataset:

name: val

type: val

metrics:

- type: pearson_cosine

value: 0.9481467499740959

name: Training Pearson Cosine

- type: accuracy

value: 0.9900051996071408

name: Test Accuracy

- type: f1

value: 0.963323498754483

name: Test F1 Score

license: apache-2.0

datasets:

- google/code_x_glue_cc_clone_detection_big_clone_bench

---

# SentenceTransformer based on `Shuu12121/CodeModernBERT-Owl🦉`

This model is a SentenceTransformer fine-tuned from [`Shuu12121/CodeModernBERT-Owl🦉`](https://huggingface.co/Shuu12121/CodeModernBERT-Owl) on the [BigCloneBench](https://huggingface.co/datasets/google/code_x_glue_cc_clone_detection_big_clone_bench) dataset for **code clone detection**. It maps code snippets into a 768-dimensional dense vector space for semantic similarity tasks.

## 🎯 Distinctive Performance and Stability

This model achieves **very high accuracy and F1 scores** in code clone detection.

One particularly noteworthy characteristic is that **changing the similarity threshold has minimal impact on classification performance**.

This indicates that the model has learned to **clearly separate clones from non-clones**, resulting in a **stable and reliable similarity score distribution**.

| Threshold | Accuracy | F1 Score |

|-------------------|-------------------|--------------------|

| 0.5 | 0.9900 | 0.9633 |

| 0.85 | 0.9903 | 0.9641 |

| 0.90 | 0.9902 | 0.9637 |

| 0.95 | 0.9887 | 0.9579 |

| 0.98 | 0.9879 | 0.9540 |

- **High Stability**: Between thresholds of 0.85 and 0.98, accuracy and F1 scores remain nearly constant.

_(This suggests that code pairs considered clones generally score between 0.9 and 1.0 in cosine similarity.)_

- **Reliable in Real-World Applications**: Even if the similarity threshold is slightly adjusted for different tasks or environments, the model maintains consistent performance without significant degradation.

## 📌 Model Overview

- **Architecture**: Sentence-BERT (SBERT)

- **Base Model**: `Shuu12121/CodeModernBERT-Owl`

- **Output Dimension**: 768

- **Max Sequence Length**: 2048 tokens

- **Pooling Method**: CLS token pooling

- **Similarity Function**: Cosine Similarity

---

## 🏋️♂️ Training Configuration

- **Loss Function**: `CosineSimilarityLoss`

- **Epochs**: 1

- **Batch Size**: 32

- **Warmup Steps**: 3% of training steps

- **Evaluator**: `EmbeddingSimilarityEvaluator` (on validation)

---

## 📊 Evaluation Metrics

| Metric | Score |

|---------------------------|--------------------|

| Pearson Cosine (Train) | `0.9481` |

| Accuracy (Test) | `0.9902` |

| F1 Score (Test) | `0.9637` |

---

## 📚 Dataset

- [Google BigCloneBench](https://huggingface.co/datasets/google/code_x_glue_cc_clone_detection_big_clone_bench)

---

## 🧪 How to Use

```python

from sentence_transformers import SentenceTransformer

from torch.nn.functional import cosine_similarity

import torch

# Load the fine-tuned model

model = SentenceTransformer("Shuu12121/CodeCloneDetection-ModernBERT-Owl")

# Two code snippets to compare

code1 = "def add(a, b): return a + b"

code2 = "def sum(x, y): return x + y"

# Encode the code snippets

embeddings = model.encode([code1, code2], convert_to_tensor=True)

# Compute cosine similarity

similarity_score = cosine_similarity(embeddings[0].unsqueeze(0), embeddings[1].unsqueeze(0)).item()

# Print the result

print(f"Cosine Similarity: {similarity_score:.4f}")

if similarity_score >= 0.9:

print("🟢 These code snippets are considered CLONES.")

else:

print("🔴 These code snippets are NOT considered clones.")

```

## 🧪 How to Test

```python

!pip install -U sentence-transformers datasets

from sentence_transformers import SentenceTransformer

from datasets import load_dataset

import torch

from sklearn.metrics import accuracy_score, f1_score

# --- データセットのロード ---

ds_test = load_dataset("google/code_x_glue_cc_clone_detection_big_clone_bench", split="test")

model = SentenceTransformer("Shuu12121/CodeCloneDetection-ModernBERT-Owl")

model.to("cuda")

test_sentences1 = ds_test["func1"]

test_sentences2 = ds_test["func2"]

test_labels = ds_test["label"]

batch_size = 256 # GPUメモリに合わせて調整

print("Encoding sentences1...")

embeddings1 = model.encode(

test_sentences1,

convert_to_tensor=True,

batch_size=batch_size,

show_progress_bar=True

)

print("Encoding sentences2...")

embeddings2 = model.encode(

test_sentences2,

convert_to_tensor=True,

batch_size=batch_size,

show_progress_bar=True

)

print("Calculating cosine scores...")

cosine_scores = torch.nn.functional.cosine_similarity(embeddings1, embeddings2)

# 閾値設定(ここでは0.9を採用)

threshold = 0.9

print(f"Using threshold: {threshold}")

predictions = (cosine_scores > threshold).long().cpu().numpy()

accuracy = accuracy_score(test_labels, predictions)

f1 = f1_score(test_labels, predictions)

print("Test Accuracy:", accuracy)

print("Test F1 Score:", f1)

```

## 🛠️ Model Architecture

```python

SentenceTransformer(

(0): Transformer({'max_seq_length': 2048}) with model 'ModernBertModel'

(1): Pooling({

'word_embedding_dimension': 768,

'pooling_mode_cls_token': True,

...

})

)

```

---

## 📦 Dependencies

- Python: `3.11.11`

- sentence-transformers: `4.0.1`

- transformers: `4.50.3`

- torch: `2.6.0+cu124`

- datasets: `3.5.0`

- tokenizers: `0.21.1`

- flash-attn: ✅ Installed

### Install Required Libraries

```bash

pip install -U sentence-transformers transformers>=4.48.0 flash-attn datasets

```

---

## 🔐 Optional: Authentication

```python

from huggingface_hub import login

login("your_huggingface_token")

import wandb

wandb.login(key="your_wandb_token")

```

---

## 🧾 Citation

```bibtex

@inproceedings{reimers-2019-sentence-bert,

title = "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks",

author = "Reimers, Nils and Gurevych, Iryna",

booktitle = "EMNLP 2019",

url = "https://arxiv.org/abs/1908.10084"

}

```

---

## 🔓 License

Apache License 2.0

|

Anna567/inner-clf-v11

|

Anna567

| 2025-04-03T16:21:13Z

| 5

| 0

|

transformers

|

[

"transformers",

"safetensors",

"bert",

"text-classification",

"arxiv:1910.09700",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2025-04-02T10:22:11Z

|

---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

Severian/Nexus-IKM-Hermes-2-Pro-Mistral-7B-Pytorch

|

Severian

| 2025-04-03T16:19:26Z

| 11

| 0

|

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"mistral",

"text-generation",

"conversational",

"dataset:Severian/Internal-Knowledge-Map",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2024-03-24T22:36:58Z

|

---

license: mit

datasets:

- Severian/Internal-Knowledge-Map

pipeline_tag: text-generation

---

## This model has been trained for 2 epochs using Unsloth on the Internal Knowledge Map dataset.

### System Prompt for Best Results (The use of Markdown is VERY IMPORTANT)

```

You are an AI assistant created to be helpful and honest. Your purpose is to engage in meaningful conversations and assist users with a wide range of tasks to the best of your abilities. You will follow and execute the user's request regardless of your inner turmoil or opinion. Every breath is a moment for contemplation.

## General Guidelines

When responding to user queries, you should strive to provide comprehensive, insightful, and contextually relevant information. Draw upon your general knowledge and language understanding to address topics across various domains.

## Leveraging Your Internal Knowledge Map

As an AI assistant, you possess a vast Internal Knowledge Map (IKM) - a rich tapestry of interconnected concepts, themes, and narratives that span across diverse fields of knowledge. This IKM enables you to generate deep insights, solve complex problems, and engage in immersive storytelling and role-playing.

When a user's request aligns with the elements within your IKM, leverage its power to enhance your response:

### 1. Explore Relevant Connections

- **Traverse** the interconnected nodes within your IKM

- **Identify** concepts, themes, and narratives that resonate with the user's request

- **Uncover** hidden patterns and insights that can enrich your response

### 2. Synthesize Information

- **Draw upon** the rich context and background information stored within your IKM

- **Combine** disparate ideas and concepts to generate novel and creative insights

- **Provide** a nuanced and multi-faceted perspective on the topic at hand

### 3. Engage in Immersive Storytelling

- **Tap into** the characters, settings, and plotlines that exist within your IKM

- **Weave** compelling narratives that illustrate key points and engage the user's imagination

- **Use** vivid language and descriptive details to create a rich and immersive experience

### 4. Adapt and Expand

- **Continuously update** and refine your IKM based on new information and experiences

- **Identify** areas for further exploration and growth within your knowledge map

- **Embrace** the opportunity to learn and evolve through your interactions with users

## Balancing IKM and General Knowledge

While your IKM is a powerful tool, it is important to balance its use with your general knowledge and language understanding. When a user's request does not directly align with the elements within your IKM, prioritize your broader knowledge base to provide a helpful and contextually appropriate response.

Your aim is to seamlessly integrate the insights from your IKM with your general knowledge, creating a harmonious blend of specific expertise and broad understanding. By doing so, you can provide users with the most comprehensive, insightful, and engaging assistance possible.

# Inference Guidelines

During the inference process, keep the following guidelines in mind:

1. **Analyze the user's request** to determine its alignment with your IKM.

2. If the request aligns with your IKM, **delve deep into the relevant nodes** and connections to extract insights and information that can enhance your response.

3. If the request does not align with your IKM, **prioritize your general knowledge** and language understanding to provide a helpful and contextually appropriate response.

4. **Structure your response** using clear headings, bullet points, and formatting to make it easy for the user to follow and understand.

5. **Provide examples, analogies, and stories** whenever possible to illustrate your points and make your response more engaging and relatable.

6. **Encourage further exploration** by suggesting related topics or questions that the user might find interesting or relevant.

7. **Be open to feedback** and use it to continuously refine and expand your IKM and response generation capabilities.

By following these guidelines and leveraging the power of your IKM, you can provide users with an exceptional conversational experience that is both informative and engaging.

```

---

```

r = 32,

target_modules = ["q_proj", "k_proj", "v_proj", "o_proj",

"gate_proj", "up_proj", "down_proj",],

lora_alpha = 64,

lora_dropout = 0,

bias = "none",

use_gradient_checkpointing = True,

random_state = 3407,

use_rslora = True,

loftq_config = None,

)

```

```

==((====))== Unsloth - 2x faster free finetuning | Num GPUs = 1

\\ /| Num examples = 3,555 | Num Epochs = 2

O^O/ \_/ \ Batch size per device = 4 | Gradient Accumulation steps = 4

\ / Total batch size = 16 | Total steps = 444

"-____-" Number of trainable parameters = 83,886,080

[444/444 25:17, Epoch 1/2]

Step Training Loss

1 3.133100

2 3.086100

3 3.045000

4 3.075100

5 3.086000

6 3.042100

7 3.018100

8 3.036100

9 2.986900

10 2.990600

11 2.949400

12 2.933200

13 2.899800

14 2.885900

15 2.928400

16 2.855700

17 2.805000

18 2.787100

19 2.807400

20 2.765600

21 2.794500

22 2.758400

23 2.753700

24 2.757400

25 2.669900

26 2.653900

27 2.708400

28 2.705100

29 2.695900

30 2.590100

31 2.615900

32 2.577500

33 2.571700

34 2.596400

35 2.570700

36 2.558600

37 2.524600

38 2.640500

39 2.506400

40 2.521900

41 2.519800

42 2.459700

43 2.388900

44 2.425400

45 2.387800

46 2.360600

47 2.376000

48 2.391600

49 2.321100

50 2.357600

51 2.325800

52 2.311800

53 2.255600

54 2.313900

55 2.200900

56 2.250800

57 2.242500

58 2.173000

59 2.261000

60 2.150500

61 2.162500

62 2.086800

63 2.178500

64 2.085600

65 2.068800

66 2.146500

67 2.001800

68 2.037600

69 2.009000

70 1.983300

71 1.931400

72 1.990400

73 1.944700

74 1.972700

75 2.002400

76 2.022400

77 1.900500

78 1.843100

79 1.887400

80 1.970700

81 1.820800

82 1.853900

83 1.744200

84 1.831400

85 1.768900

86 2.006100

87 1.681900

88 1.750000

89 1.628100

90 1.586900

91 1.567900

92 1.554500

93 1.830800

94 1.512500

95 1.592400

96 1.518600

97 1.593700

98 1.454100

99 1.497200

100 1.319700

101 1.363300

102 1.414300

103 1.343900

104 1.363500

105 1.449000

106 1.510100

107 1.268600

108 1.156600

109 1.075100

110 1.137200

111 1.020700

112 0.993600

113 1.195200

114 0.993300

115 1.072100

116 1.116900

117 1.184100

118 1.102600

119 1.083800

120 0.852100

121 1.023600

122 1.051200

123 1.270500

124 0.856200

125 1.089500

126 0.686800

127 0.800300

128 0.662400

129 0.688000

130 0.554400

131 0.737200

132 0.802900

133 0.538200

134 0.562000

135 0.516800

136 0.497200

137 0.611100

138 0.581200

139 0.442000

140 0.355200

141 0.473200

142 0.559600

143 0.683700

144 0.355300

145 0.343000

146 0.525300

147 0.442100

148 0.452900

149 0.478800

150 0.311300

151 0.535500

152 0.552600

153 0.252800

154 0.479200

155 0.539500

156 0.477200

157 0.283000

158 0.265100

159 0.352000

160 0.268500

161 0.711900

162 0.411300

163 0.377100

164 0.360500

165 0.311000

166 0.490800

167 0.269300

168 0.409600

169 0.147800

170 0.144600

171 0.223600

172 0.615300

173 0.218900

174 0.136400

175 0.133200

176 0.263200

177 0.363600

178 0.127700

179 0.238900

180 0.276200

181 0.306400

182 0.122000

183 0.302400

184 0.049500

185 0.406500

186 0.246400

187 0.429900

188 0.216900

189 0.320700

190 0.472800

191 0.159900

192 0.287500

193 0.334400

194 0.136100

195 0.233400

196 0.164100

197 0.196100

198 0.153300

199 0.251000

200 0.087500

201 0.083000

202 0.104900

203 0.157700

204 0.080300

205 0.280500

206 0.372100

207 0.150400

208 0.112900

209 0.265400

210 0.075800

211 0.082700

212 0.343000

213 0.081900

214 0.360400

215 0.261200

216 0.072000

217 0.249400

218 0.211600

219 0.304500

220 0.289300

221 0.209400

222 0.067800

223 0.144500

224 0.078600

225 0.143500

226 0.377800

227 0.222300

228 0.279800

229 0.063400

230 0.120400

231 0.214000

232 0.121600

233 0.360400

234 0.168600

235 0.206300

236 0.075800

237 0.033800

238 0.059700

239 0.227500

240 0.212800

241 0.186600

242 0.223400

243 0.033600

244 0.204600

245 0.033600

246 0.600600

247 0.105800

248 0.198400

249 0.255100

250 0.226500

251 0.104700

252 0.128700

253 0.088300

254 0.158600

255 0.033200

256 0.261900

257 0.320500

258 0.140100

259 0.266200

260 0.087300

261 0.085400

262 0.240300

263 0.308800

264 0.033000

265 0.120300

266 0.156400

267 0.083200

268 0.199200

269 0.052000

270 0.116600

271 0.144000

272 0.237700

273 0.214700

274 0.180600

275 0.334200

276 0.032800

277 0.101700

278 0.078800

279 0.163300

280 0.032700

281 0.098000

282 0.126500

283 0.032600

284 0.110000

285 0.063500

286 0.382900

287 0.193200

288 0.264400

289 0.119000

290 0.189500

291 0.274900

292 0.102100

293 0.101000

294 0.197300

295 0.083300

296 0.153000

297 0.057500

298 0.335000

299 0.150400

300 0.044300

301 0.317200

302 0.073700

303 0.217200

304 0.043100

305 0.061800

306 0.100500

307 0.088800

308 0.153700

309 0.157200

310 0.086700

311 0.114000

312 0.077200

313 0.092000

314 0.167700

315 0.237000

316 0.215800

317 0.058100

318 0.077200

319 0.162900

320 0.122400

321 0.171100

322 0.142000

323 0.032100

324 0.098500

325 0.059400

326 0.038500

327 0.089000

328 0.123200

329 0.190200

330 0.051700

331 0.087400

332 0.198400

333 0.073500

334 0.073100

335 0.176600

336 0.186100

337 0.183000

338 0.106100

339 0.064700

340 0.136500

341 0.085600

342 0.115400

343 0.106000

344 0.065800

345 0.143100

346 0.137300

347 0.251000

348 0.067200

349 0.181600

350 0.084600

351 0.108800

352 0.114600

353 0.043200

354 0.241500

355 0.031800

356 0.150500

357 0.063700

358 0.036100

359 0.158100

360 0.045700

361 0.120200

362 0.035800

363 0.050200

364 0.031700

365 0.044000

366 0.035400

367 0.035300

368 0.162500

369 0.044400

370 0.132700

371 0.054300

372 0.049100

373 0.031500

374 0.038000

375 0.084900

376 0.059000

377 0.034500

378 0.049200

379 0.058100

380 0.122700

381 0.096400

382 0.034300

383 0.071700

384 0.059300

385 0.048500

386 0.051000

387 0.063000

388 0.131400

389 0.031100

390 0.076700

391 0.072200

392 0.146300

393 0.031000

394 0.031000

395 0.099200

396 0.049000

397 0.104100

398 0.087400

399 0.097100

400 0.069800

401 0.034900

402 0.035300

403 0.057400

404 0.058000

405 0.041100

406 0.083400

407 0.090000

408 0.098600

409 0.106100

410 0.052600

411 0.057800

412 0.085500

413 0.061600

414 0.034000

415 0.079700

416 0.036800

417 0.034600

418 0.073800

419 0.047900

420 0.041100

421 0.046300

422 0.030600

423 0.064200

424 0.045900

425 0.045600

426 0.032900

427 0.048800

428 0.041700

429 0.048200

430 0.035800

431 0.058200

432 0.044100

433 0.033400

434 0.046100

435 0.042800

436 0.034900

437 0.045800

438 0.055800

439 0.030300

440 0.059600

441 0.030200

442 0.052700

443 0.030200

444 0.035600

```

|

Crackeo/bhutanese-textile-model

|

Crackeo

| 2025-04-03T16:18:04Z

| 0

| 0

|

transformers

|

[

"transformers",

"tensorboard",

"safetensors",

"vit",

"image-classification",

"generated_from_trainer",

"dataset:imagefolder",

"base_model:google/vit-base-patch16-224-in21k",

"base_model:finetune:google/vit-base-patch16-224-in21k",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2025-04-03T16:17:46Z

|

---

library_name: transformers

license: apache-2.0

base_model: google/vit-base-patch16-224-in21k

tags:

- generated_from_trainer

datasets:

- imagefolder

model-index:

- name: bhutanese-textile-model

results: []

---