# Model Overview

This repository contains the code for Swin UNETR [1,2]. Swin UNETR was reported to achieve state-of-the-art results on the Medical Segmentation Decathlon (MSD) and the Beyond the Cranial Vault (BTCV) Segmentation Challenge datasets. In [1], a novel methodology is devised for pre-training the Swin UNETR backbone in a self-supervised manner. Swin UNETR can be trained either by fine-tuning from the self-supervised pre-trained weights or from scratch.

The source repository for training these models can be found [here](https://github.com/Project-MONAI/research-contributions/tree/main/SwinUNETR/BTCV).

# Installing Dependencies

Dependencies for training and inference can be installed from the model's requirements file:

```bash
pip install -r requirements.txt
```

# Intended uses & limitations

The raw model can be used directly to segment the 13 abdominal organs in CT volumes similar to the BTCV data, but it is mostly intended to be fine-tuned on a downstream task.

In particular, this model is aimed at being fine-tuned on tasks that segment CT or MRI scans, such as images stored in DICOM format.

# How to use

To be filled.

# Limitations and bias

The training data used for this model is specific to CT scans from certain health facilities and scanners. Data from other facilities may differ in image distribution and may require fine-tuning of the models for best performance.

# Evaluation results

The following pre-trained models are provided for the BTCV dataset. Dice scores are reported for two overlap settings of sliding-window inference.

<table>
<tr>
<th>Name</th>
<th>Dice (%), overlap=0.7</th>
<th>Dice (%), overlap=0.5</th>
<th>Feature Size</th>
<th># Params (M)</th>
<th>Self-Supervised Pre-trained</th>
</tr>
<tr>
<td>Swin UNETR/Base</td>
<td>82.25</td>
<td>81.86</td>
<td>48</td>
<td>62.1</td>
<td>Yes</td>
</tr>
<tr>
<td>Swin UNETR/Small</td>
<td>79.79</td>
<td>79.34</td>
<td>24</td>
<td>15.7</td>
<td>No</td>
</tr>
<tr>
<td>Swin UNETR/Tiny</td>
<td>72.05</td>
<td>70.35</td>
<td>12</td>
<td>4.0</td>
<td>No</td>
</tr>
</table>

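For reference, the Dice score of a predicted mask P against a reference mask G is 2|P ∩ G| / (|P| + |G|), so the values in the table are percentages of overlap. The sketch below is a minimal pure-Python illustration on flattened label maps, not the evaluation code that produced the table. Averaging per-organ scores over the foreground classes is one common convention; check the source repository for the aggregation behind the reported numbers. `dice_per_class` and `mean_dice` are hypothetical helper names.

```python
from typing import Dict, Sequence


def dice_per_class(pred: Sequence[int], target: Sequence[int], num_classes: int) -> Dict[int, float]:
    """Dice score for each foreground label 1..num_classes on flattened label maps.

    Dice = 2 * |P & G| / (|P| + |G|). A class absent from both the prediction
    and the reference is skipped, because its Dice score is undefined.
    """
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same number of voxels")
    scores: Dict[int, float] = {}
    for c in range(1, num_classes + 1):  # label 0 is treated as background
        intersection = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        denom = sum(1 for p in pred if p == c) + sum(1 for t in target if t == c)
        if denom > 0:
            scores[c] = 2.0 * intersection / denom
    return scores


def mean_dice(pred: Sequence[int], target: Sequence[int], num_classes: int) -> float:
    """Unweighted mean of the per-class Dice scores, expressed as a percentage."""
    scores = dice_per_class(pred, target, num_classes)
    if not scores:
        raise ValueError("no foreground labels in pred or target")
    return 100.0 * sum(scores.values()) / len(scores)


# Toy example: 8 "voxels" and 2 foreground classes.
target = [0, 1, 1, 1, 2, 2, 0, 0]
pred = [0, 1, 0, 0, 2, 2, 2, 0]
print(dice_per_class(pred, target, num_classes=2))  # {1: 0.5, 2: 0.8}
print(mean_dice(pred, target, num_classes=2))
```

On real data the same computation runs per volume over 3D label arrays; a vectorized library implementation is preferable for speed.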

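The two Dice columns correspond to different overlap settings for sliding-window inference, in which a full CT volume is covered by overlapping patches the size of the training ROI and the patch predictions are blended. The sketch below counts the patches needed per volume, assuming a stride of `int(roi_size * (1 - overlap))` along each axis with the last window aligned to the border. This follows our understanding of MONAI's `sliding_window_inference`, but it is a simplified illustration, not its implementation. The 96-voxel ROI (believed to be the source repository's default) and the volume shape are assumptions.

```python
import math
from typing import List, Sequence


def window_starts(image_size: int, roi_size: int, overlap: float) -> List[int]:
    """Start indices of sliding windows along one axis.

    Assumes a stride of int(roi_size * (1 - overlap)) (at least 1) and moves the
    last window back so that it ends exactly at the image border.
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    if image_size <= roi_size:
        return [0]  # one window covers the whole axis (the image would be padded)
    stride = max(int(roi_size * (1 - overlap)), 1)
    num_windows = math.ceil((image_size - roi_size) / stride) + 1
    return [min(i * stride, image_size - roi_size) for i in range(num_windows)]


def total_windows(shape: Sequence[int], roi_size: int, overlap: float) -> int:
    """Number of 3D patches (one forward pass each) needed to cover a volume."""
    return math.prod(len(window_starts(s, roi_size, overlap)) for s in shape)


shape = (256, 256, 128)  # hypothetical CT volume size in voxels
for overlap in (0.5, 0.7):
    print(overlap, total_windows(shape, roi_size=96, overlap=overlap))
# 0.5 50
# 0.7 147
```

Under these assumptions, raising the overlap from 0.5 to 0.7 roughly triples the number of patches for this volume, which is the inference cost paid for the slightly higher Dice scores in the overlap=0.7 column.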

# Data Preparation

The training data is from the [BTCV challenge dataset](https://www.synapse.org/#!Synapse:syn3193805/wiki/217752).

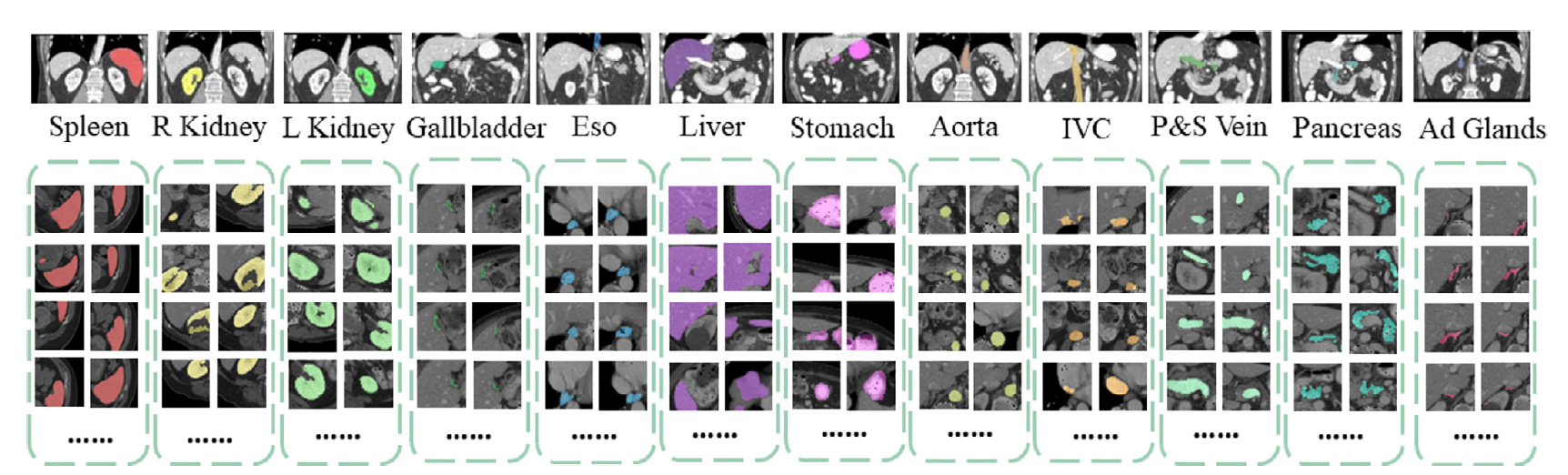

- Target: 13 abdominal organs: 1. Spleen, 2. Right Kidney, 3. Left Kidney, 4. Gallbladder, 5. Esophagus, 6. Liver, 7. Stomach, 8. Aorta, 9. Inferior Vena Cava (IVC), 10. Portal and Splenic Veins, 11. Pancreas, 12. Right Adrenal Gland, 13. Left Adrenal Gland.
- Task: Segmentation
- Modality: CT
- Size: 30 3D volumes (24 training + 6 testing)

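The target organs above can be kept in a label lookup table, assuming the label values in the BTCV annotations follow the numbering of the list with 0 as background. The sketch also builds a 24/6 training/validation datalist in the JSON layout read by MONAI's `load_decathlon_datalist` (lists of `{"image": ..., "label": ...}` records under named keys). The `imagesTr`/`labelsTr` directories, the `imgXXXX`/`labelXXXX` file names, the case IDs, and the hold-out rule are all hypothetical; to reproduce the reported results, use the split from the source repository.

```python
import json
from typing import Dict, List

# Assumed label values, following the order of the target list (0 = background).
BTCV_LABELS: Dict[int, str] = {
    0: "background",
    1: "spleen",
    2: "right kidney",
    3: "left kidney",
    4: "gallbladder",
    5: "esophagus",
    6: "liver",
    7: "stomach",
    8: "aorta",
    9: "inferior vena cava",
    10: "portal and splenic veins",
    11: "pancreas",
    12: "right adrenal gland",
    13: "left adrenal gland",
}


def make_record(case_id: int) -> Dict[str, str]:
    """Image/label paths for one case (hypothetical directory and file names)."""
    return {
        "image": f"imagesTr/img{case_id:04d}.nii.gz",
        "label": f"labelsTr/label{case_id:04d}.nii.gz",
    }


def build_datalist(case_ids: List[int], num_val: int = 6) -> dict:
    """Hold out the last `num_val` cases for validation (hypothetical split rule)."""
    if not 0 < num_val < len(case_ids):
        raise ValueError("num_val must be positive and leave at least one training case")
    train_ids, val_ids = case_ids[:-num_val], case_ids[-num_val:]
    return {
        "labels": {str(k): v for k, v in BTCV_LABELS.items()},
        "training": [make_record(c) for c in train_ids],
        "validation": [make_record(c) for c in val_ids],
    }


# 30 hypothetical case IDs; the actual BTCV file numbering may differ.
datalist = build_datalist(list(range(1, 31)))
print(len(datalist["training"]), len(datalist["validation"]))  # 24 6
print(json.dumps(datalist["validation"][0]))
# {"image": "imagesTr/img0025.nii.gz", "label": "labelsTr/label0025.nii.gz"}
```

Saving `json.dumps(datalist, indent=2)` to a file should yield a datalist that `monai.data.load_decathlon_datalist(path, data_list_key="validation")` can read.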

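Since the modality is CT, voxel intensities are in Hounsfield units (HU), and a typical preprocessing step is to clip them to a soft-tissue window and rescale linearly to [0, 1]. To our understanding, the BTCV scripts in the source repository default to a window of -175 to 250 HU applied with MONAI's `ScaleIntensityRanged`; treat those bounds as an assumption and check them against your training configuration. The pure-Python function below is a hypothetical stand-in, not MONAI's code, and only shows the arithmetic.

```python
from typing import Iterable, List


def scale_intensity_range(
    values: Iterable[float],
    a_min: float = -175.0,  # assumed lower HU bound of the window
    a_max: float = 250.0,  # assumed upper HU bound of the window
    b_min: float = 0.0,
    b_max: float = 1.0,
    clip: bool = True,
) -> List[float]:
    """Linearly map [a_min, a_max] to [b_min, b_max]; clip assumes b_min < b_max."""
    if a_max <= a_min:
        raise ValueError("a_max must be greater than a_min")
    scale = (b_max - b_min) / (a_max - a_min)
    out = []
    for v in values:
        y = (v - a_min) * scale + b_min
        if clip:
            y = min(max(y, b_min), b_max)  # saturate values outside the window
        out.append(y)
    return out


# Air (-1000 HU), the window bounds, water (0 HU) and dense bone (about +1000 HU).
print(scale_intensity_range([-1000.0, -175.0, 0.0, 250.0, 1000.0]))
```

Values below the window, such as air, map to 0 and values above it, such as dense bone, map to 1, so only the soft-tissue range keeps its contrast after scaling.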

# Training

See the source repository [here](https://github.com/Project-MONAI/research-contributions/tree/main/SwinUNETR/BTCV) for information on training.

# BibTeX entry and citation info

If you find this repository useful, please consider citing the following papers:

```bibtex
@inproceedings{tang2022self,
  title={Self-supervised pre-training of swin transformers for 3d medical image analysis},
  author={Tang, Yucheng and Yang, Dong and Li, Wenqi and Roth, Holger R and Landman, Bennett and Xu, Daguang and Nath, Vishwesh and Hatamizadeh, Ali},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  pages={20730--20740},
  year={2022}
}

@article{hatamizadeh2022swin,
  title={Swin UNETR: Swin Transformers for Semantic Segmentation of Brain Tumors in MRI Images},
  author={Hatamizadeh, Ali and Nath, Vishwesh and Tang, Yucheng and Yang, Dong and Roth, Holger and Xu, Daguang},
  journal={arXiv preprint arXiv:2201.01266},
  year={2022}
}
```

# References

[1]: Tang, Y., Yang, D., Li, W., Roth, H.R., Landman, B., Xu, D., Nath, V. and Hatamizadeh, A., 2022. Self-Supervised Pre-Training of Swin Transformers for 3D Medical Image Analysis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 20730-20740).

[2]: Hatamizadeh, A., Nath, V., Tang, Y., Yang, D., Roth, H. and Xu, D., 2022. Swin UNETR: Swin Transformers for Semantic Segmentation of Brain Tumors in MRI Images. arXiv preprint arXiv:2201.01266.