Update README.md

README.md

CHANGED

@@ -1,117 +1,15 @@

---
base_model:
- trashpanda-org/
- Qwen/QwQ-32B
- Qwen/Qwen2.5-32B
- trashpanda-org/Qwen2.5-32B-Marigold-v0-exp
library_name: transformers
tags:
- mergekit
- mergekitty
- merge

---

<p><b>Has's notes</b>: it's actually pretty damn good?!</p>

<p><b>Severian's notes</b>: R1 at home for RP, literally. Able to handle my cards with gimmicks and subtle tricks in them. With a good reasoning starter + prompt, I'm getting consistently structured responses that still vary a good amount across rerolls. Char/scenario portrayal is good despite my focus on writing style, and lorebooks are properly referenced at times. Slop doesn't seem to be too much of an issue with thinking enabled. User impersonation happens, but rarely. Prose is refreshing if you take advantage of what I did (writing style fixation). I know I said Marigold would be my daily driver, but this one is now; it's that good.</p>

## Recommended settings

<p><b>Context/instruct template</b>: ChatML. <s>Was definitely not tested with ChatML instruct and Mistral v7 template, nuh-uh.</s></p>

<p><b>Samplers</b>: temperature at 0.9, min_p at 0.05, top_a at 0.3, TFS at 0.75, repetition_penalty at 1.03, DRY if you have access to it.</p>
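
To illustrate what two of these samplers do, here is a minimal Python sketch of temperature scaling followed by min_p filtering, using the recommended values (0.9 and 0.05). It assumes temperature is applied before min_p. Backends let you reorder samplers. The sketch leaves out top_a, TFS, repetition penalty and DRY. The function name `temperature_min_p` is hypothetical and is not any backend's API.

```python
import math


def temperature_min_p(logits, temperature=0.9, min_p=0.05):
    """Return renormalized token probabilities after temperature + min_p.

    Hypothetical helper for illustration; real backends implement this internally.
    """
    # Temperature: divide logits before the softmax (subtract the max for numerical stability).
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    # min_p: drop tokens whose probability is below min_p * (top token's probability).
    threshold = min_p * max(probs)
    kept = [p if p >= threshold else 0.0 for p in probs]

    # Renormalize the surviving tokens so they sum to 1.
    kept_total = sum(kept)
    return [p / kept_total for p in kept]


probs = temperature_min_p([2.0, 1.0, 0.0, -5.0])
print([round(p, 4) for p in probs])
```

The cutoff scales with the top token's probability, so min_p prunes harder when the model is confident and keeps more candidates when it is not.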

A virt-io derivative prompt worked best during our testing, but feel free to use what you like.

Master import for SillyTavern (ST): [https://files.catbox.moe/b6nwbc.json](https://files.catbox.moe/b6nwbc.json)

## Reasoning

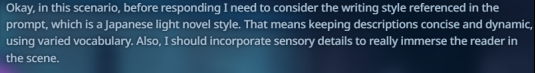

Feel free to test whichever reasoning setup you're most comfortable with, but here's a recommendation from me. My prompt has a line that says:

```
Style Preference: Encourage the usage of a Japanese light novel writing style.
```

To fixate on that, I use this reasoning starter:

```
<think>Okay, in this scenario, before responding I need to consider the writing style referenced in the prompt, which is
```

What this did for me, at least during testing, was give the reasoning a structure to follow across rerolls, with it consistently seeking out that part of the prompt.

See below:

But the responses still varied, because the next few paragraphs after these delved into character details and so on. For best results, you might want to experiment and write your own thinking/reasoning starter that focuses on what you hope to get out of the responses.

-- Severian
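
Because the context/instruct template is ChatML, the reasoning starter works as a prefill. It is placed right after the opening of the assistant turn, so the model continues from inside the `<think>` block. The Python sketch below assembles such a raw prompt string. The helper `build_chatml_prompt` and the example messages are hypothetical; chat frontends normally build this string for you.

```python
# The reasoning starter from above, used verbatim as the assistant prefill.
STARTER = (
    "<think>Okay, in this scenario, before responding I need to consider "
    "the writing style referenced in the prompt, which is"
)


def build_chatml_prompt(system, turns, prefill=""):
    """Assemble a ChatML prompt string that ends in an open assistant turn.

    `turns` is a list of (role, content) pairs, e.g. ("user", "...").
    `prefill` is text the assistant reply is forced to start with.
    """
    parts = [f"<|im_start|>system\n{system}<|im_end|>\n"]
    for role, content in turns:
        parts.append(f"<|im_start|>{role}\n{content}<|im_end|>\n")
    # Leave the assistant turn open (no <|im_end|>) so generation continues from the prefill.
    parts.append(f"<|im_start|>assistant\n{prefill}")
    return "".join(parts)


prompt = build_chatml_prompt(
    "Style Preference: Encourage the usage of a Japanese light novel writing style.",
    [("user", "Hello!")],
    prefill=STARTER,
)
print(prompt)
```

The starter deliberately stops mid-sentence ("which is"). The model's first generated tokens therefore have to name the writing style from the prompt, which is what anchors the reasoning across rerolls.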

## Thank you!

Big thanks to the folks in the trashpanda-org Discord for testing and sending over some logs!

## Reviews

To follow.

## Just us having fun, don't mind it

## Some logs

To follow.

## Merge Details

### Merge Method

This model was merged with the [TIES](https://arxiv.org/abs/2306.01708) merge method, using [Qwen/Qwen2.5-32B](https://huggingface.co/Qwen/Qwen2.5-32B) as the base.
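
The sketch below walks through the three TIES steps from the paper on plain Python lists. First it trims each task vector to its largest-magnitude entries. Then it elects a sign per parameter. Finally it averages only the entries that agree with that sign. This is a simplified toy under stated assumptions: per-model `weight` scales each task vector and `density` is the fraction of entries kept, and the sign election uses the weighted sum. It is not mergekit's implementation, which works on tensors and handles details such as `normalize` and `int8_mask` in its own way. The helper names are hypothetical.

```python
def trim(delta, density):
    """TIES step 1: keep the top `density` fraction of entries by magnitude, zero the rest."""
    k = max(1, round(density * len(delta)))
    ranked = sorted(range(len(delta)), key=lambda i: abs(delta[i]), reverse=True)
    keep = set(ranked[:k])
    return [d if i in keep else 0.0 for i, d in enumerate(delta)]


def ties_merge(base, finetuned, weights, densities, normalize=True):
    """Toy TIES merge of flat parameter lists (hypothetical, not mergekit's code)."""
    # Task vectors: each fine-tuned model minus the base, then trimmed.
    deltas = [
        trim([f - b for f, b in zip(model, base)], density)
        for model, density in zip(finetuned, densities)
    ]
    merged = []
    for j, base_value in enumerate(base):
        # TIES step 2: elect the sign with the larger total (weighted) mass.
        total = sum(w * d[j] for w, d in zip(weights, deltas))
        if total == 0:
            merged.append(base_value)  # no consensus direction; keep the base value
            continue
        sign = 1.0 if total > 0 else -1.0
        # TIES step 3: disjoint merge over nonzero entries that agree with the elected sign.
        num = sum(w * d[j] for w, d in zip(weights, deltas) if d[j] * sign > 0)
        den = sum(w for w, d in zip(weights, deltas) if d[j] * sign > 0)
        update = num / den if normalize and den > 0 else num
        merged.append(base_value + update)
    return merged


base = [0.0, 0.0, 0.0]
model_a = [1.0, -2.0, 0.5]
model_b = [3.0, 3.0, -0.1]
result = ties_merge(base, [model_a, model_b], weights=[1.0, 1.0], densities=[1.0, 1.0])
print(result)
```

In the second position, model_a's negative entry disagrees with the elected positive sign and is ignored rather than averaged in. This is how TIES reduces interference between merged models. With `density: 0.9`, as set for Qwen/QwQ-32B in the configuration, roughly the smallest 10% of that model's task-vector entries by magnitude would be zeroed before the sign election.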

### Models Merged

The following models were included in the merge:

* [trashpanda-org/Qwen2.5-32B-Marigold-v0](https://huggingface.co/trashpanda-org/Qwen2.5-32B-Marigold-v0)
* [Qwen/QwQ-32B](https://huggingface.co/Qwen/QwQ-32B)
* [trashpanda-org/Qwen2.5-32B-Marigold-v0-exp](https://huggingface.co/trashpanda-org/Qwen2.5-32B-Marigold-v0-exp)

### Configuration

The following YAML configuration was used to produce this model:

```yaml
models:
  - model: trashpanda-org/Qwen2.5-32B-Marigold-v0-exp
    parameters:
      weight: 1
      density: 1
  - model: trashpanda-org/Qwen2.5-32B-Marigold-v0
    parameters:
      weight: 1
      density: 1
  - model: Qwen/QwQ-32B
    parameters:
      weight: 0.9
      density: 0.9
merge_method: ties
base_model: Qwen/Qwen2.5-32B
parameters:
  weight: 0.9
  density: 0.9
  normalize: true
  int8_mask: true
tokenizer_source: Qwen/Qwen2.5-32B-Instruct
dtype: bfloat16
```

---
base_model:
- trashpanda-org/QwQ-32B-Snowdrop-v0
library_name: transformers
base_model_relation: quantized
tags:
- mergekit
- mergekitty
- merge

---

EXL2 Quants by FrenzyBiscuit.

This model is 8.0 BPW EXL2.
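
Here is a back-of-the-envelope check on what 8.0 BPW means for disk and VRAM. Weight storage is roughly parameter count × bits per weight ÷ 8 bytes. The sketch assumes about 32.5 billion parameters, the figure Qwen lists for QwQ-32B. It ignores the KV cache, activations, and any layers EXL2 stores at a different bit width, so treat the result as an estimate only. The helper name is hypothetical.

```python
def weight_size_bytes(num_params, bits_per_weight):
    """Approximate storage for quantized weights: params * bpw / 8 bytes."""
    return num_params * bits_per_weight / 8


params = 32.5e9  # assumption: ~32.5B parameters for a Qwen2.5-32B-based model
size = weight_size_bytes(params, 8.0)
print(f"{size / 1e9:.1f} GB ({size / 2**30:.1f} GiB)")
```

Halving the bit rate halves the estimate. That is the main trade-off when choosing a lower-BPW quant of the same model.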
